- The paper surveys graph-based memory for LLM agents, arguing that graph structures unify traditional memory representations and enable dynamic multi-hop reasoning.

- It outlines a detailed taxonomy and a lifecycle framework covering how memory is extracted, stored, retrieved, and evolved to improve agent performance over time.

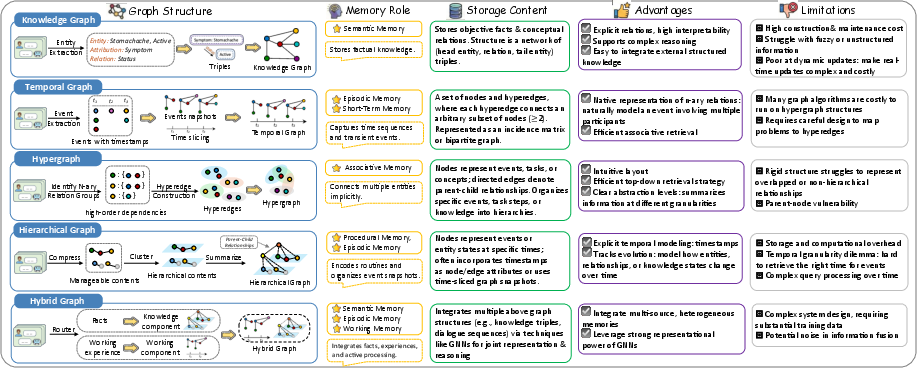

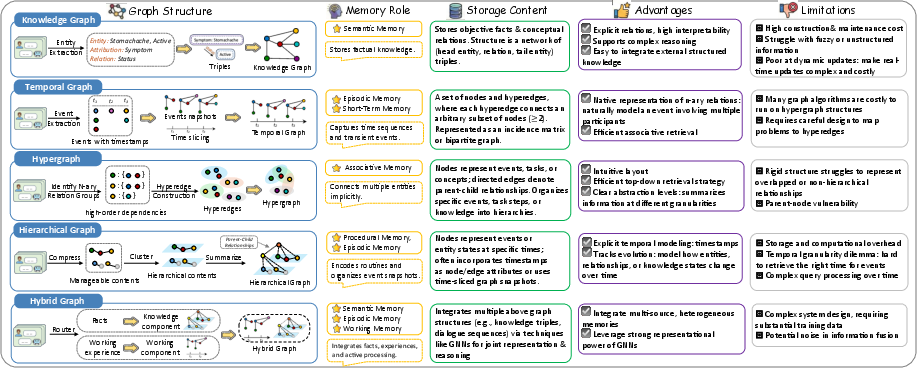

- The survey reviews diverse graph paradigms, including knowledge graphs, hierarchical trees, temporal graphs, and hypergraphs, highlighting their distinct use cases and challenges.

Graph-based Agent Memory: Taxonomy, Techniques, and Applications

Introduction and Motivation

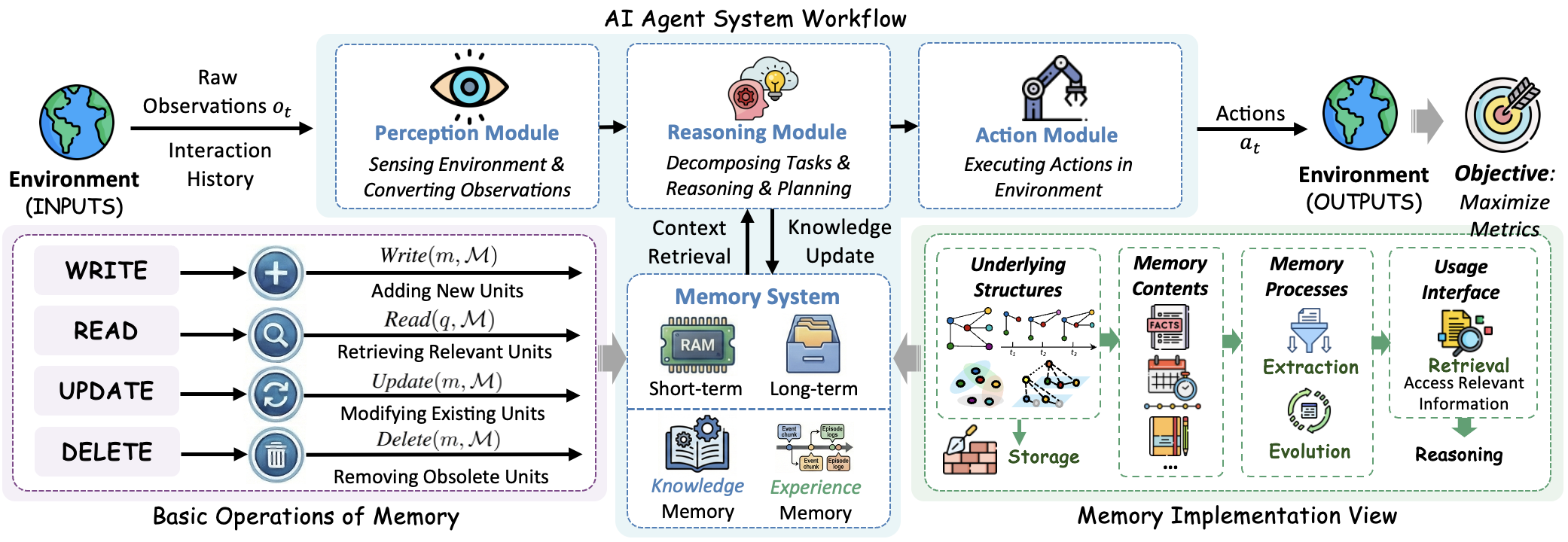

The proliferation of LLM-based agents for long-horizon, multi-step tasks has exposed severe limitations: agents are largely stateless and cannot accumulate, use, and continually refine knowledge and experience. The survey systematically formalizes memory as a central module of LLM agents. It focuses on graph-based representations, which explicitly model relational, hierarchical, and temporal dependencies, and proposes a comprehensive taxonomy, a lifecycle framework, and an architectural foundation for such memory systems.

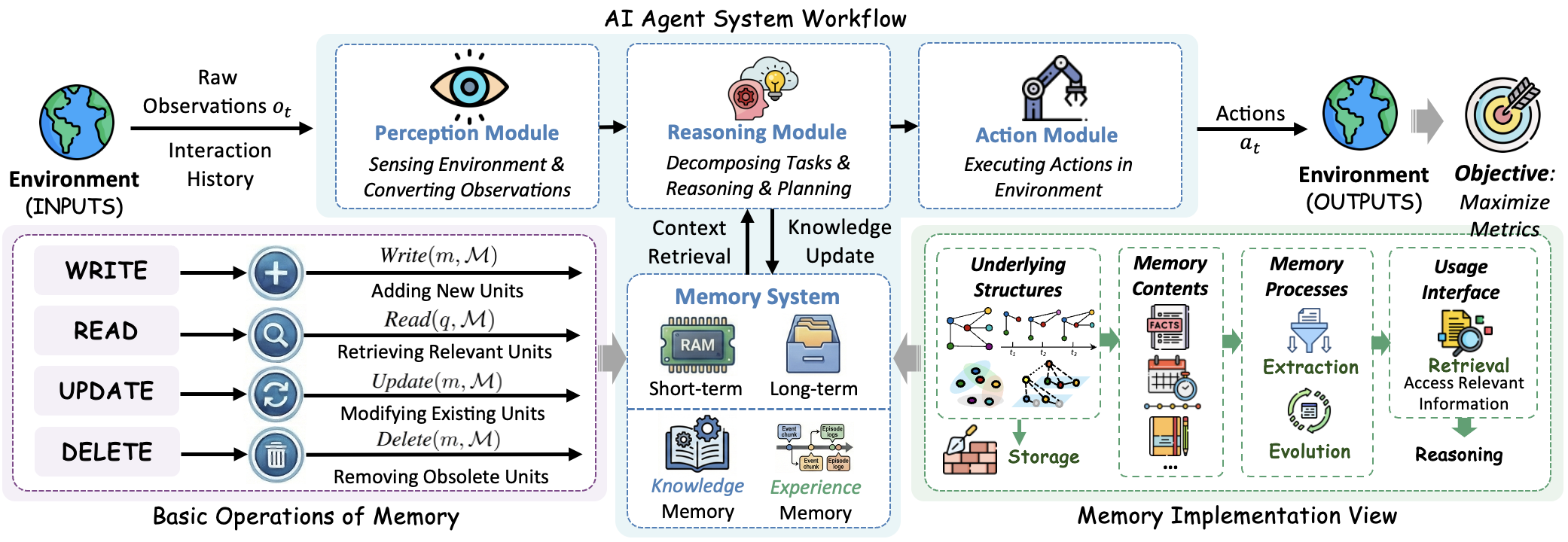

Figure 1: Agent system workflow highlighting memory as a modular component and its detailed implementation in LLM-based agent architectures.

The paper contends that traditional unstructured and sequence-based implementations of agent memory are insufficient for complex, adaptive decision-making. Structuring agent memory as a dynamic graph instead provides the expressivity to retain both knowledge and experience. It also supports multi-hop and temporal reasoning, efficient evidence retrieval, and self-evolution, all of which are prerequisites for robust autonomy and continual learning.

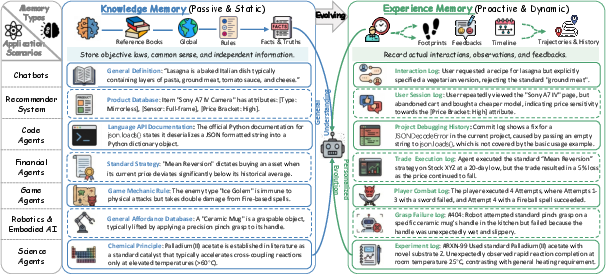

Taxonomy and Categorization of Agent Memory

The proposed taxonomy distinguishes agent memory along three major axes: temporal scope (short-term vs. long-term), cognitive function (knowledge vs. experience), and representational structure (non-structural vs. graph-based).
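The three axes can be read as a small data model. The sketch below encodes each axis as an enum and a memory category as one position on every axis. It assumes the axes are independent of one another, so the taxonomy spans 2 × 2 × 2 = 8 cells. The class names are illustrative and are not taken from the paper.

```python
from dataclasses import dataclass
from enum import Enum
from itertools import product


class TemporalScope(Enum):
    SHORT_TERM = "short-term"
    LONG_TERM = "long-term"


class CognitiveFunction(Enum):
    KNOWLEDGE = "knowledge"
    EXPERIENCE = "experience"


class Representation(Enum):
    NON_STRUCTURAL = "non-structural"
    GRAPH_BASED = "graph-based"


@dataclass(frozen=True)
class MemoryCategory:
    """One cell of the taxonomy: a position on each of the three axes."""
    scope: TemporalScope
    function: CognitiveFunction
    representation: Representation


# Assuming the axes are independent, the taxonomy spans every combination (2 x 2 x 2 = 8 cells).
ALL_CATEGORIES = [MemoryCategory(*cell) for cell in product(TemporalScope, CognitiveFunction, Representation)]

# Hypothetical example: a persistent knowledge graph of world facts.
fact_kg = MemoryCategory(TemporalScope.LONG_TERM, CognitiveFunction.KNOWLEDGE, Representation.GRAPH_BASED)
```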

A salient claim is that graph-based memory subsumes traditional representations (linear buffers, key-value logs, vector DBs) as degenerate or restricted graph instances. Thus, graph-structured memory provides a unified formalism for highly adaptive agents.
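To make the subsumption claim concrete, here is a minimal sketch showing how each traditional store can be encoded as a restricted graph:

- A linear buffer becomes a path graph.
- A key-value log becomes a set of disjoint key-to-value edges.
- A vector store becomes an edgeless graph, because similarity is computed at query time rather than stored as edges.

These encodings are one possible reading of "degenerate or restricted graph instances", not the paper's formal definitions. The `MemoryGraph` class is hypothetical.

```python
from collections import defaultdict


class MemoryGraph:
    """Minimal directed, labeled multigraph: nodes carry payloads, edges carry labels."""

    def __init__(self):
        self.nodes = {}                 # node id -> payload
        self.edges = defaultdict(list)  # source id -> [(label, target id)]

    def add_node(self, node_id, payload=None):
        self.nodes[node_id] = payload

    def add_edge(self, src, label, dst):
        self.edges[src].append((label, dst))

    def edge_count(self):
        return sum(len(out) for out in self.edges.values())


def buffer_as_graph(turns):
    """A linear buffer is a path graph: each turn points to the next one."""
    g = MemoryGraph()
    for i, turn in enumerate(turns):
        g.add_node(i, turn)
        if i > 0:
            g.add_edge(i - 1, "next", i)
    return g


def kv_log_as_graph(log):
    """A key-value log is a set of disjoint key -> value edges (a bipartite graph)."""
    g = MemoryGraph()
    for key, value in log.items():
        g.add_node(("key", key), key)
        g.add_node(("value", key), value)
        g.add_edge(("key", key), "has_value", ("value", key))
    return g


def vector_db_as_graph(vectors):
    """A vector store as an edgeless graph: nodes hold embeddings, no relations are stored."""
    g = MemoryGraph()
    for doc_id, vec in vectors.items():
        g.add_node(doc_id, vec)
    return g
```

Read this way, moving to graph-based memory amounts to relaxing the restriction on which edges may exist.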

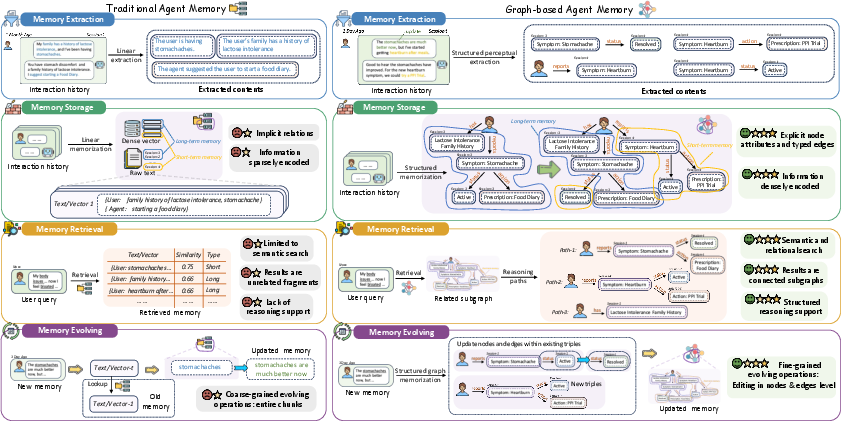

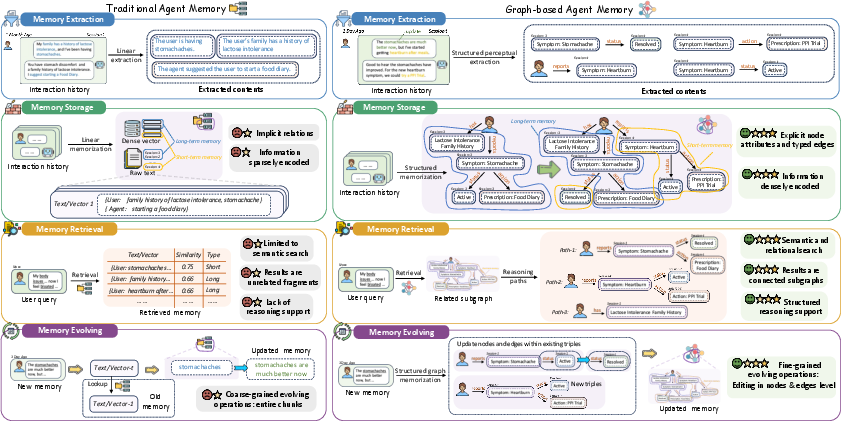

Figure 3: Structural and capability contrast between traditional agent memory (flat or buffer-based stores) and graph-based agent memory (explicit relational, hierarchical, and temporal modeling).

Agent Memory Lifecycle

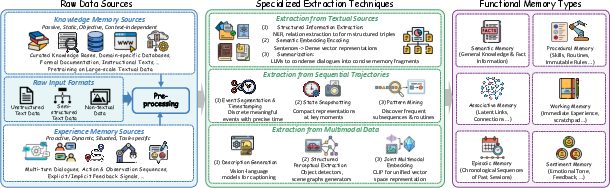

Agent memory is formalized as a four-stage lifecycle:

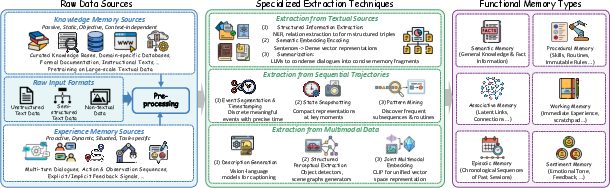

- Extraction: Transformation of raw, heterogeneous signals (text, state, vision, etc.) into structured memory units (entity-relational triples, event episodes, multimodal embeddings).

- Storage: Selection of graph paradigms (KGs, temporal graphs, hierarchical trees, hypergraphs, hybrids) to organize and index memory units efficiently for subsequent access and update.

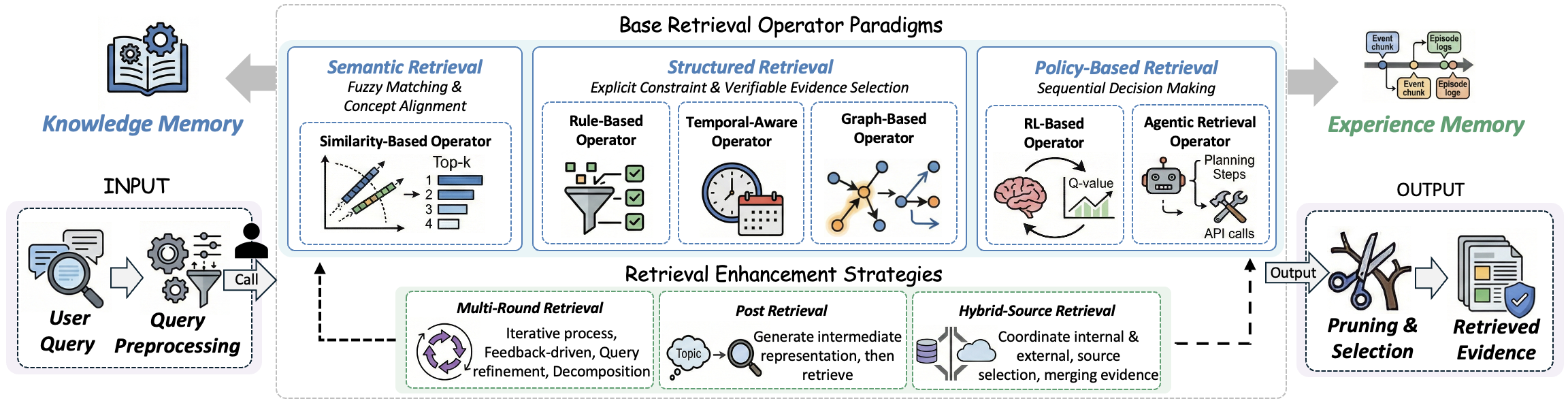

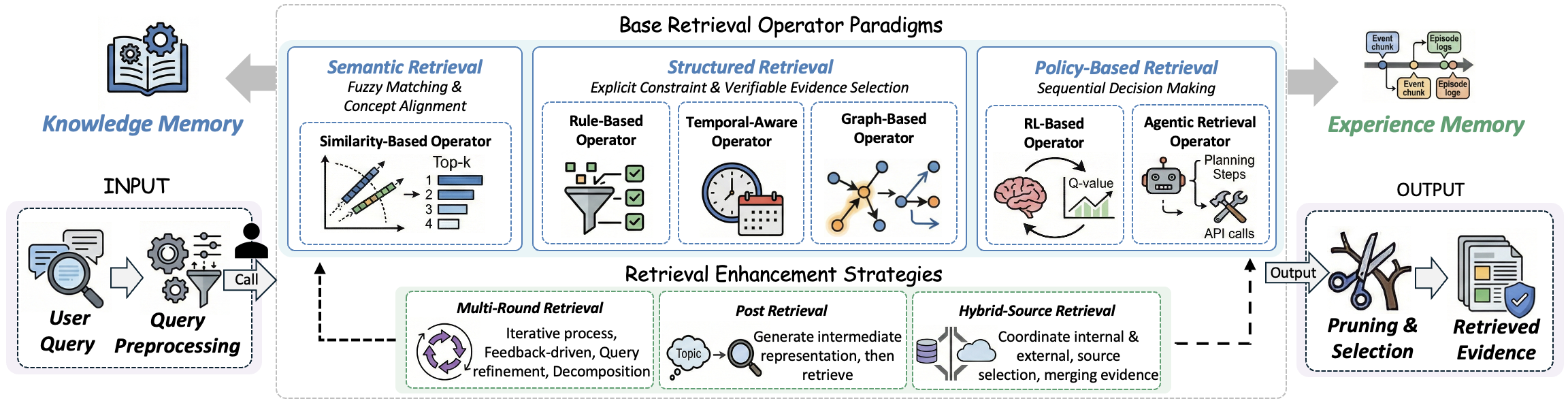

- Retrieval: Integration of semantic (similarity-based), structured (rule-based, temporal, and graph-traversal), and policy-based (RL, agentic control loops) operators to find relevant memory in response to dynamic queries.

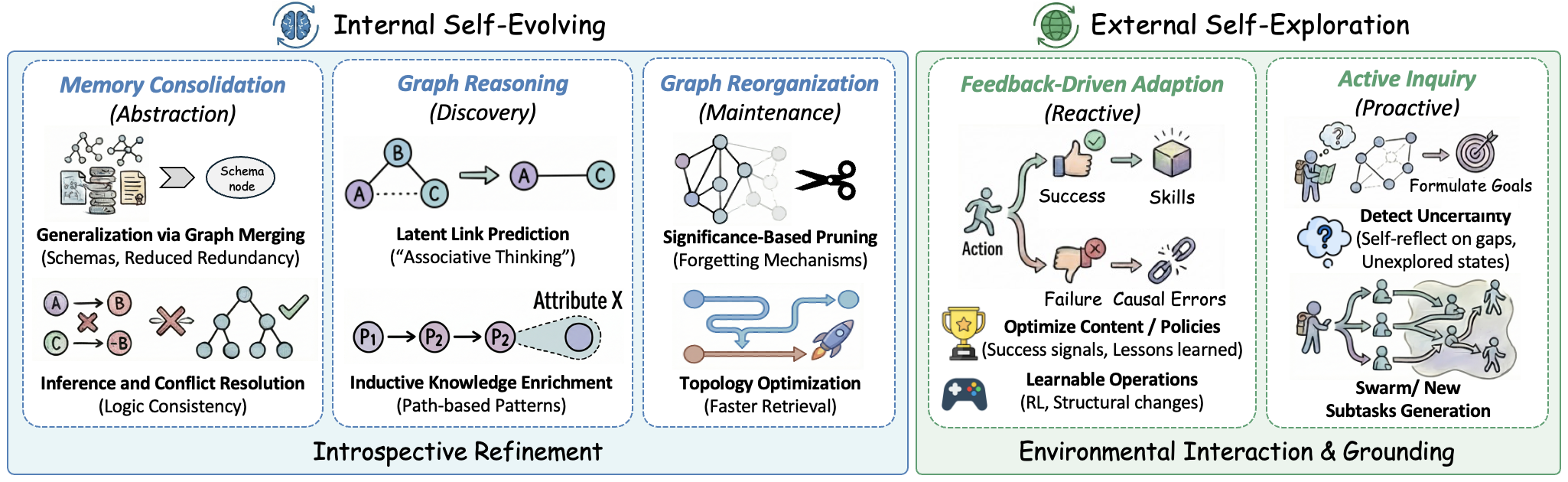

- Evolution: Internal mechanisms (introspective graph consolidation, abstraction, and pruning) and external mechanisms (active exploration of, and adaptation to, the environment).

Figure 4: Unified pipeline for agent memory extraction, from modality-diverse raw data to structured, compact representations partitioned by function and memory type.

Figure 5: Taxonomy of graph paradigms for memory construction: knowledge graphs, temporal graphs, hierarchical and hypergraph structures, with explicit mapping to memory function tradeoffs.
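The four lifecycle stages can be wired together in a toy pipeline. In this sketch, extraction treats any three-word sentence as a (head, relation, tail) triple; this is a hypothetical stand-in for LLM-based extraction. Storage is an adjacency map, retrieval is a neighbor lookup that counts accesses, and evolution prunes entities that were never retrieved. All names are illustrative.

```python
from collections import defaultdict


def extract(text):
    """Extraction (toy): treat every three-word sentence as a (head, relation, tail) triple.
    A real system would call an LLM or an IE model here; this rule is a hypothetical stand-in."""
    triples = []
    for sentence in text.split("."):
        words = sentence.split()
        if len(words) == 3:
            triples.append(tuple(w.lower() for w in words))
    return triples


class LifecycleMemory:
    def __init__(self):
        self.adj = defaultdict(set)   # storage: head -> {(relation, tail)}
        self.hits = defaultdict(int)  # usage statistics that drive evolution

    def store(self, triples):
        for head, rel, tail in triples:
            self.adj[head].add((rel, tail))

    def retrieve(self, entity):
        self.hits[entity] += 1
        return sorted(self.adj.get(entity, set()))

    def evolve(self, min_hits=1):
        """Evolution (toy): prune entities retrieved fewer than min_hits times."""
        for entity in [e for e in self.adj if self.hits[e] < min_hits]:
            del self.adj[entity]
```

Usage: storing `extract("Alice likes tea. Bob owns cats.")`, then calling `retrieve("alice")` and `evolve()`, leaves only `alice` in memory.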

Graph-Based Memory Structures and Techniques

Knowledge Graphs (KG)

KGs are built through open-vocabulary, LLM-based entity and relation extraction. The extracted triples are continuously merged into a persistent triple store with active conflict resolution and schema evolution. This supports efficient multi-hop retrieval and explicit modeling of both factual and episodic memory, especially in high-assurance, auditable planning tasks [anokhin2024arigraph].
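A minimal sketch of a triple store with conflict resolution and multi-hop retrieval follows. It assumes a simple policy: certain relations are declared single-valued, and a newer triple for the same head supersedes the older one. Superseded triples are kept in an audit trail rather than silently lost. Multi-hop retrieval is a breadth-first search for the shortest relation path. The policy and the `TripleStore` API are hypothetical, not AriGraph's implementation.

```python
from collections import deque


class TripleStore:
    def __init__(self, functional_relations=()):
        # Assumption: these relations allow only one tail per head (e.g. "lives_in").
        self.functional = set(functional_relations)
        self.triples = set()
        self.superseded = []  # audit trail of (old_triple, new_triple)

    def add(self, head, rel, tail):
        new = (head, rel, tail)
        if rel in self.functional:
            conflicts = [t for t in self.triples if t[0] == head and t[1] == rel and t != new]
            for old in conflicts:
                self.triples.remove(old)
                self.superseded.append((old, new))
        self.triples.add(new)

    def neighbors(self, node):
        return sorted((r, t) for h, r, t in self.triples if h == node)

    def multi_hop(self, start, goal, max_hops=3):
        """Breadth-first search for the shortest relation path from start to goal."""
        queue = deque([(start, [])])
        visited = {start}
        while queue:
            node, path = queue.popleft()
            if node == goal:
                return path
            if len(path) == max_hops:
                continue
            for rel, nxt in self.neighbors(node):
                if nxt not in visited:
                    visited.add(nxt)
                    queue.append((nxt, path + [(node, rel, nxt)]))
        return None
```

Returning the path of triples, rather than just the answer node, is what makes the retrieval auditable.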

Hierarchical Trees

Hierarchical (DAG-structured) and tree-structured memory uses semantic clustering and recursive abstraction to compress extensive agent experience into manageable conceptual hierarchies. This structure is essential for both session-based conversational memory and general conceptual reasoning over user–agent history [wu2025sgmem].
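Recursive abstraction can be sketched as repeated clustering. Memory items are grouped by embedding similarity, and each group is replaced by a parent node. The process repeats, relaxing the similarity threshold whenever nothing merges, until a single root remains. The greedy clustering rule, the threshold schedule, and the label-joining "summary" are all hypothetical stand-ins. A real system would typically summarize each group with an LLM.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mean(vectors):
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def make_parent(children):
    # Hypothetical stand-in for LLM summarization: the abstract node's label joins its children's labels.
    return {"label": "+".join(c["label"] for c in children),
            "vec": mean([c["vec"] for c in children]),
            "children": children}


def cluster(nodes, threshold):
    """Greedy single-pass clustering: a node joins the first group whose centroid is similar enough."""
    groups = []
    for node in nodes:
        for group in groups:
            if cosine(mean([n["vec"] for n in group]), node["vec"]) >= threshold:
                group.append(node)
                break
        else:
            groups.append([node])
    return groups


def build_tree(leaves, threshold=0.9, step=0.2):
    """Recursively abstract nodes into parent summaries until a single root remains."""
    level = list(leaves)
    while len(level) > 1:
        groups = cluster(level, threshold)
        if len(groups) == len(level):
            # Nothing merged at this threshold: relax it, or merge everything once it is exhausted.
            if threshold > -1.0:
                threshold -= step
                continue
            groups = [level]
        # Singleton groups pass through unchanged; larger groups get an abstract parent.
        level = [g[0] if len(g) == 1 else make_parent(g) for g in groups]
    return level[0]
```

For leaves labeled `tea`, `coffee`, and `hiking`, where the first two have similar embeddings, the tree first abstracts `tea+coffee` and only later joins it with `hiking` under the root.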

Temporal Graphs

Temporal knowledge graphs track two timelines to manage distributional drift, resolve contradictions, and support time-scoped reasoning. Event time records when a fact held in the world; transaction time records when the agent recorded it. Advanced frameworks enforce temporal monotonicity along retrieval paths and organize multi-session event memory as timeline-indexed trees [rasmussen2025zep, ge2025tremu, tan2025memotime].
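The dual-timeline idea resembles classic bitemporal modeling. In this sketch, a contradicting fact never deletes the old one. Instead, the old version is expired in transaction time and re-recorded with a closed event-time interval. This lets the agent answer both "what was true then?" and "what did I believe then?". The field names and the contradiction rule are assumptions for illustration, not Zep's schema.

```python
from dataclasses import dataclass, replace

INF = float("inf")


@dataclass(frozen=True)
class Edge:
    subject: str
    relation: str
    obj: str
    valid_from: float         # event time: when the fact started to hold
    valid_to: float = INF     # event time: when it stopped holding
    recorded_at: float = 0.0  # transaction time: when the agent recorded this version
    expired_at: float = INF   # transaction time: when this version was superseded


class BitemporalMemory:
    def __init__(self):
        self.edges = []

    def assert_fact(self, subject, relation, obj, valid_from, tx):
        """Record a fact at transaction time tx; close any live, contradicting fact."""
        clashes = [i for i, e in enumerate(self.edges)
                   if e.expired_at == INF and e.subject == subject and e.relation == relation
                   and e.obj != obj and e.valid_from < valid_from < e.valid_to]
        for i in clashes:
            old = self.edges[i]
            # Never delete: expire the old version and re-record it with a closed validity interval.
            self.edges[i] = replace(old, expired_at=tx)
            self.edges.append(replace(old, valid_to=valid_from, recorded_at=tx))
        self.edges.append(Edge(subject, relation, obj, valid_from, recorded_at=tx))

    def as_of(self, subject, relation, event_time, tx_time):
        """What held at event_time, according to what the agent had recorded by tx_time."""
        return [e.obj for e in self.edges
                if e.subject == subject and e.relation == relation
                and e.recorded_at <= tx_time < e.expired_at
                and e.valid_from <= event_time < e.valid_to]
```

Suppose a move that happened at time 2023 is only learned at time 2024. The agent then correctly reports that, as of 2023.9, it still believed the old value.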

Hypergraphs

Hypergraph-structured memory can natively represent n-ary relations and groupwise dependencies, which are critical for high-fidelity modeling of multimodal, tabular, or complex relational data (e.g., triple-drug interactions, entire document sections).
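A hypergraph can be stored as a map from hyperedge ids to node sets, plus an incidence index from each node to its hyperedges. The sketch below keeps a three-way interaction as a single hyperedge, so it is not decomposed into pairwise edges that would lose the fact that all three members are jointly involved. The drug names and labels are hypothetical placeholders, not real pharmacological data.

```python
from collections import defaultdict


class Hypergraph:
    """Hyperedges connect any number of nodes; an incidence index maps node -> hyperedge ids."""

    def __init__(self):
        self.hyperedges = {}               # edge id -> (label, frozenset of member nodes)
        self.incidence = defaultdict(set)  # node -> ids of hyperedges containing it

    def add(self, edge_id, label, nodes):
        members = frozenset(nodes)
        self.hyperedges[edge_id] = (label, members)
        for n in members:
            self.incidence[n].add(edge_id)

    def containing(self, *nodes):
        """Return ids of hyperedges that include every queried node."""
        if not nodes:
            return set()
        ids = set(self.incidence.get(nodes[0], set()))
        for n in nodes[1:]:
            ids &= self.incidence.get(n, set())
        return ids
```

Example: after `h.add("e1", "adverse_interaction", ["drug_a", "drug_b", "drug_c"])`, the query `h.containing("drug_a", "drug_c")` finds the n-ary relation directly.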

Hybrid Architectures

Hybrid designs decouple static world knowledge (structured KGs) from dynamic, personalized experience (multimodal memory pools, episodic vector DBs), enabling the agent to exploit both fixed rules and adaptive experience in planning and execution [li2024optimus, jiang2025kg].
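A minimal sketch of the hybrid split follows. A static KG of crafting prerequisites yields a valid build order, while a separate episodic pool returns the most similar past experience as advice. The recipe table, the episode notes, and the `plan` interface are hypothetical and only loosely inspired by the game-agent setting. They are not the cited systems' designs.

```python
import math

# Hypothetical static world knowledge: item -> items it is crafted from.
RECIPES = {"wooden_pickaxe": ["planks", "sticks"], "sticks": ["planks"], "planks": ["log"]}


def build_order(goal, kg=RECIPES):
    """Static KG lookup: depth-first walk returning prerequisites before the items that need them."""
    order, seen = [], set()

    def visit(item):
        if item in seen:
            return
        seen.add(item)
        for dep in kg.get(item, []):
            visit(dep)
        order.append(item)

    visit(goal)
    return order


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class EpisodicPool:
    """Dynamic experience memory: (embedding, note) pairs searched by similarity."""

    def __init__(self):
        self.episodes = []

    def add(self, embedding, note):
        self.episodes.append((embedding, note))

    def nearest(self, query):
        if not self.episodes:
            return None
        return max(self.episodes, key=lambda ep: cosine(ep[0], query))[1]


def plan(goal, goal_embedding, pool):
    # Fixed rules determine what must be done; past experience suggests how to do it better.
    return {"steps": build_order(goal), "advice": pool.nearest(goal_embedding)}
```

Keeping the two stores separate means the rule graph can stay fixed and verifiable while the episodic pool grows with every attempt.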

Memory Retrieval Mechanisms

Figure 6: Retrieval architecture integrating three core retrieval paradigms (semantic, structured, policy-based) and advanced retrieval enhancements for memory usage.

- Semantic retrieval: Fuzzy matching via embedding similarity, typically suitable for coarse candidate selection.

- Structured retrieval: Rule-based, graph traversal, and temporal operators enable precise, verifiable selection and compositional (multi-hop) reasoning over graphs.

- Policy-based retrieval: RL and agent-centric, feedback-driven policies decide not just what to retrieve but also how to retrieve and when to stop, which is critical for turning experience memory into adaptive actions.

- Enhancement strategies: Multi-round, post-retrieval, and hybrid-source retrieval augment single-shot look-ups, improving the recall, specificity, and reliability of retrieved evidence, especially for reasoning and continual learning [yan2025general, zhou2025mem1].
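The retrieval paradigms above can be chained in a simple way. Semantic similarity picks coarse seed nodes, and structured traversal then expands them hop by hop along explicit edges. In this sketch, the "policy" is a fixed budget-and-exhaustion stopping rule that stands in for a learned (e.g. RL) policy. The function name and parameters are hypothetical.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, embeddings, adjacency, k=1, max_hops=3, budget=4):
    # Semantic retrieval: coarse candidate selection by embedding similarity (top-k seeds).
    selected = sorted(embeddings, key=lambda n: cosine(embeddings[n], query_vec), reverse=True)[:k]
    frontier = list(selected)
    for _ in range(max_hops):
        # Stopping rule: a fixed heuristic standing in for a learned retrieval policy.
        if len(selected) >= budget or not frontier:
            break
        # Structured retrieval: expand one hop along explicit graph edges, skipping seen nodes.
        new = [m for n in frontier for m in adjacency.get(n, []) if m not in selected]
        frontier = list(dict.fromkeys(new))[: budget - len(selected)]
        selected.extend(frontier)
    return selected
```

Each loop iteration is also a small instance of multi-round retrieval. A learned policy would replace the stopping rule with a decision conditioned on the evidence gathered so far.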

Memory Evolution Paradigms

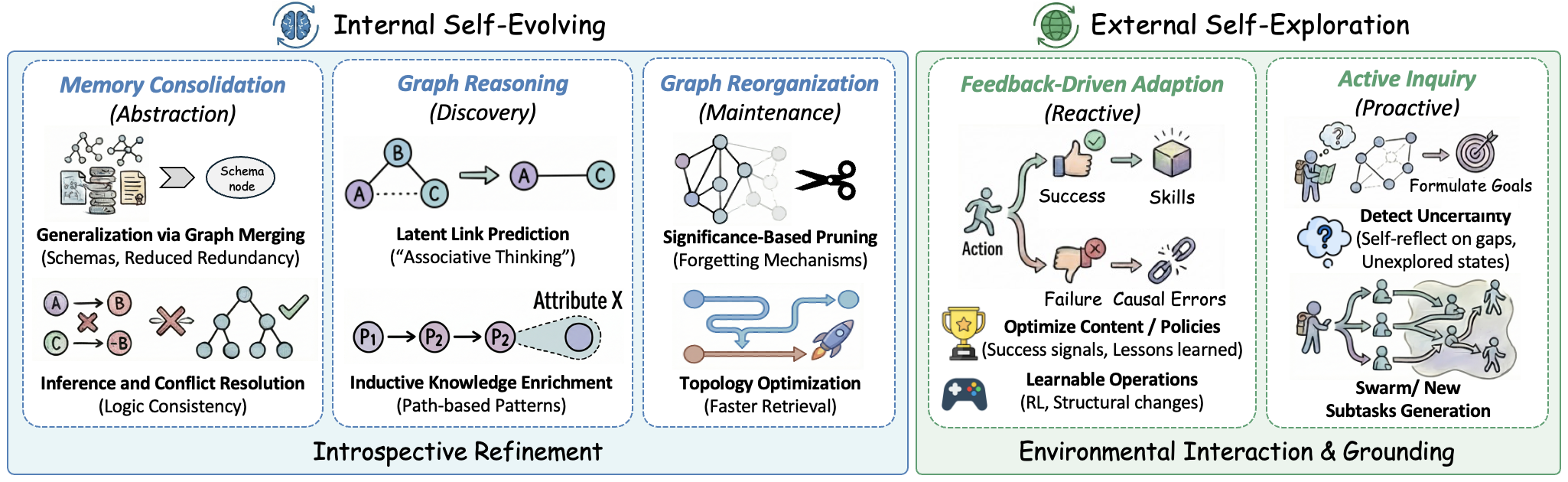

Figure 7: Dual taxonomy of memory evolution. Left: internal self-evolving (consolidation, graph reasoning, and pruning); right: external self-exploration (feedback-driven adaptation, proactive inquiry).

- Internal self-evolving: Graph consolidation, abstraction, generalization, latent link prediction, pruning, and topological optimization for increased retrieval efficacy, logical consistency, and memory compression [rasmussen2025zep, chhikara2025mem0, nan2025nemori].

- External self-exploration: Agents utilize environmental feedback (reinforcement, success/failure signals) and initiate active exploration to identify knowledge gaps, formulate and pursue new goals, and autonomously organize collective or hierarchical memory [xu2024matrix, yan2025memory, zhai2025agentevolver].
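Three internal self-evolving operations can be sketched on a plain edge list:

- Consolidation merges nodes whose normalized labels match.
- Rule-based link inference adds implied edges for a relation assumed to be transitive.
- Pruning forgets edges that touch rarely accessed nodes.

Label normalization, the transitivity assumption, and the access-count rule are hypothetical simplifications. Real systems typically decide merges with embeddings or an LLM and predict links with learned models.

```python
def normalize(label):
    return " ".join(label.lower().split())


def consolidate(edges):
    """Merge entity nodes whose normalized labels match, collapsing duplicate edges."""
    return sorted({(normalize(h), r, normalize(t)) for h, r, t in edges})


def infer_transitive(edges, relation):
    """Rule-based link inference: add implied edges for a relation assumed to be transitive."""
    found = set(edges)
    changed = True
    while changed:
        changed = False
        pairs = [(h, t) for h, r, t in found if r == relation]
        for a, b in pairs:
            for c, d in pairs:
                if b == c and a != d and (a, relation, d) not in found:
                    found.add((a, relation, d))
                    changed = True
    return sorted(found)


def prune(edges, access_counts, min_count=1):
    """Forgetting rule: keep only edges whose endpoints were both accessed at least min_count times."""
    return [(h, r, t) for h, r, t in edges
            if access_counts.get(h, 0) >= min_count and access_counts.get(t, 0) >= min_count]
```

Applied in sequence, these steps turn `"Paris"` and `"paris "` into one node, infer `("paris", "part_of", "eu")`, and drop an edge to a never-accessed node.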

Evaluation, Benchmarks, and Open Source Resources

The work curates numerous open-source libraries and benchmarks, grouping memory evaluation by interaction scenario (multi-turn, cross-session, web-based, continual, tool-centric, and multimodal environments). Benchmarks are contextualized by their coverage of factual and experiential memory, environmental realism, and lifecycle management. The authors emphasize that robust evaluation must go beyond static information recall. It should also test memory update, overwrite, and efficient dynamic adaptation, especially under context-window, compute, and privacy constraints [tan2025membench, hu2025evaluating, wu2025longmemeval].
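The gap between static recall and update handling is easy to see with a scripted probe. In the sketch below, a naive append-only store passes a static-recall check but returns a stale value after the user's fact changes; an overwriting store passes both checks. The `write`/`read` interface and both store classes are hypothetical and are not taken from any cited benchmark.

```python
class OverwritingMemory:
    def __init__(self):
        self.facts = {}

    def write(self, key, value):
        self.facts[key] = value

    def read(self, key):
        return self.facts.get(key)


class AppendOnlyMemory:
    """Naive store that returns the earliest matching entry, so it goes stale after updates."""

    def __init__(self):
        self.log = []

    def write(self, key, value):
        self.log.append((key, value))

    def read(self, key):
        return next((v for k, v in self.log if k == key), None)


def update_probe(memory_cls):
    """Score static recall and update handling on a tiny scripted dialogue."""
    mem = memory_cls()
    mem.write("user.city", "Paris")
    mem.write("user.pet", "cat")
    mem.write("user.city", "Berlin")  # the user moves: the old fact must be overwritten
    return {"static_recall": mem.read("user.pet") == "cat",
            "update": mem.read("user.city") == "Berlin"}
```

A benchmark that scored only `static_recall` would rate both stores as equally good.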

Application Domains

Graph-based agent memory underpins a broad spectrum of domains:

- Conversational and Personal Assistant Agents: Sustained context, personalization, and hallucination reduction via structural memory [wu2025sgmem].

- Code Generation and Debugging Agents: Management of complex dependency trees and feedback loops [wang2026memgovern, krishnamoorthy2025multi].

- Recommender Systems: Synergistic handling of persistent user profiles, preference drift, and scenario-grounded reasoning [cai2025agentic, zhang2024agentcf].

- Financial Agents: Temporal recency, hierarchical asset models, risk tracking, collective intelligence [li2025investorbench].

- Game, Robotics, and Embodied Agents: Trajectory storage, hierarchical skill abstraction, multi-session planning [wang2024voyager, tan2025cradle].

- Healthcare and Scientific Discovery Agents: Longitudinal patient histories, medical ontologies, experiment-log integration, and evidence-driven hypothesis refinement [schmidgall2024agentclinic, qiu2024llm, hu2025agentmental].

Limitations and Future Directions

The survey details foundational challenges for graph-centric memory systems:

- Quality Metrics: Memory graph quality (structural, temporal, semantic), standard metrics for measuring it, and its connection to agent performance remain underdefined.

- Scalability: Graph operations add computational overhead; research into graph compression, incremental updates, and approximate retrieval is urgently needed.

- Privacy and Security: Graph structure increases attack surface, necessitating advances in privacy-preserving mechanisms, federated memory, and robust auditing.

- Schema Learning and Transfer: There is a pressing need for domain-agnostic, dynamically-adaptive schemas and robust methods for inter-domain transfer and meta-knowledge.

- Interpretability and Theoretical Analysis: Memory provenance, visualization, and formal completeness/complexity guarantees are essential for high-assurance deployment.

- Multi-Agent Coordination: The memory module's role moves from an isolated store to a synchronizable, distributed resource in collaborative environments.

Conclusion

This survey positions graph-based agent memory as a foundational architectural shift for next-generation LLM agents, advocating explicit, relational, and evolvable structures. By comprehensively mapping the taxonomy, technical paradigms, retrieval and evolution mechanics, benchmarks, and application domains, the paper provides a rigorous foundation for research on memory-augmented, autonomous, and generalist agents. The surveyed methodology is poised to influence future directions in robust continual learning, explainable AI, privacy-assured personalization, and the emergence of collaborative agent collectives.

(2602.05665)