LLMs + Security = Trouble

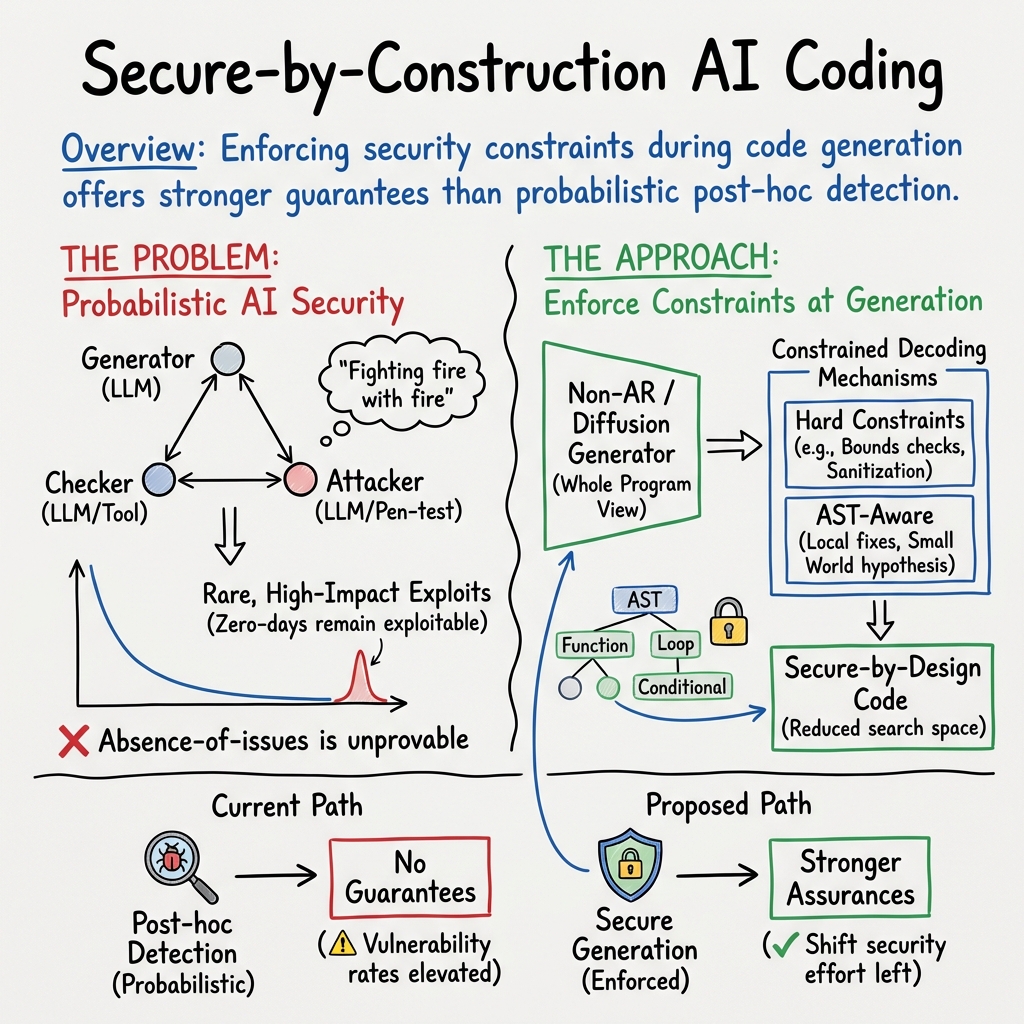

Abstract: We argue that when it comes to producing secure code with AI, the prevailing "fighting fire with fire" approach -- using probabilistic AI-based checkers or attackers to secure probabilistically generated code -- fails to address the long tail of security bugs. As a result, systems may remain exposed to zero-day vulnerabilities that can be discovered by better-resourced or more persistent adversaries. While neurosymbolic approaches that combine LLMs with formal methods are attractive in principle, they are difficult to reconcile with the "vibe coding" workflow common in LLM-assisted development: unless the end-to-end verification pipeline is fully automated, developers are repeatedly asked to validate specifications, resolve ambiguities, and adjudicate failures, making the human-in-the-loop a likely point of weakness and compromising secure-by-construction guarantees. We contend instead that stronger security guarantees can be obtained by enforcing security constraints during code generation (e.g., via constrained decoding), rather than relying solely on post-hoc detection and repair. This direction is particularly promising for diffusion-style code models, whose generation process offers a natural and elegant opportunity for modular, hierarchical security enforcement, allowing lower-latency generation to be combined with secure-by-construction code.
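To make the idea of enforcing constraints during generation more concrete, here is a minimal sketch of constrained greedy decoding. It is not the paper's method: the `toy_model` score table, the deny-list regex `BANNED_CALL`, and the function names are all hypothetical stand-ins chosen for illustration, and a deny-list of call names is far weaker than the guarantees the abstract has in mind.

```python
import re
from typing import Callable, Dict, List

# Hypothetical policy: reject direct calls to eval, exec, or os.system.
# The lookbehind keeps names such as ast.literal_eval from matching.
BANNED_CALL = re.compile(r"(?<![\w.])(eval|exec|os\.system)\s*\(")


def violates_policy(tokens: List[str]) -> bool:
    """Return True if the partial program already contains a banned call."""
    return BANNED_CALL.search("".join(tokens)) is not None


def toy_model(prefix: List[str]) -> Dict[str, float]:
    """Stand-in for an LLM: a fixed score table indexed by prefix length."""
    script = [
        {"result = ": 1.0},
        {"eval(": 0.9, "ast.literal_eval(": 0.6},  # the insecure token scores higher
        {"user_input": 1.0},
        {")": 1.0},
        {"<eos>": 1.0},
    ]
    return script[len(prefix)] if len(prefix) < len(script) else {"<eos>": 1.0}


def constrained_greedy_decode(
    next_token_scores: Callable[[List[str]], Dict[str, float]],
    violates: Callable[[List[str]], bool] = violates_policy,
    max_steps: int = 16,
) -> str:
    """Greedy decoding that masks any token whose addition violates the policy."""
    tokens: List[str] = []
    for _ in range(max_steps):
        scores = next_token_scores(tokens)
        # Filter candidates before choosing, instead of repairing afterwards.
        allowed = {t: s for t, s in scores.items() if not violates(tokens + [t])}
        if not allowed:
            break  # dead end: every candidate would violate the policy
        best = max(allowed, key=allowed.get)
        if best == "<eos>":
            break
        tokens.append(best)
    return "".join(tokens)


print(constrained_greedy_decode(toy_model))
print(constrained_greedy_decode(toy_model, violates=lambda toks: False))
```

With the policy active, the decoder skips the higher-scoring `eval(` token and emits the `ast.literal_eval(` variant; with the policy disabled, it emits the `eval(` call. The check only sees the prefix generated so far, which is the limitation of autoregressive decoding that the glossary entry on that term quotes from the paper.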

Glossary

- Abstract Syntax Tree (AST): A tree-structured representation of source code that captures its syntactic structure. "AST nodes such as modules, functions, loops, conditionals, and the like."

- agentic security guardrails: Safety mechanisms that constrain the behavior of autonomous, tool-using agents to prevent harmful actions. "and agentic security guardrails."

- agentic workflow: A development or analysis process that coordinates multiple autonomous agents with distinct roles. "These roles sometimes become the basis for an agentic workflow."

- AI red team: An AI-driven offensive testing setup that proposes or executes candidate exploits against systems. "an 'AI red team' that proposes candidate exploits"

- AI security triangle: A pattern comprising generator, checker, and attacker roles used in securing or evaluating AI-generated code. "The AI security triangle is the general patterns we've seen emerge, consisting of the following three roles, as illustrated below."

- autoregressive decoding: A generation process that produces tokens sequentially, conditioning on previously generated tokens. "during autoregressive decoding, we cannot evaluate the properties of the entire program during the generation because only a partial program is available."

- bounds check: A runtime or static check ensuring indices or offsets remain within valid array or buffer limits. "bounds check for array accesses (buffer overrun prevention)"

- buffer overruns: Memory safety vulnerabilities where writes exceed a buffer’s bounds, corrupting adjacent memory. "buffer overruns or double frees."

- Chain-of-Thought (CoT): Prompting or reasoning technique where models generate explicit intermediate reasoning steps. "Chain-of-Thought (CoT) guides"

- constrained decoding: A generation method that enforces predefined constraints during decoding to ensure properties like security or correctness. "via constrained decoding"

- Control Flow Graph (CFG): A graph representation of the possible execution paths through a program. "Control Flow Graph (CFG) based semantic segmentation"

- correct-by-construction: An approach ensuring outputs satisfy correctness specifications by design rather than via post-hoc checks. "VeriGuard addresses this by shifting to a correct-by-construction paradigm."

- cross-site scripting: A web security vulnerability where untrusted input is injected into pages, leading to script execution in a victim’s browser. "cross-site scripting issues"

- data exfiltration: Unauthorized extraction or transfer of sensitive data from a system. "unauthorized data exfiltration"

- data leakage: The inadvertent inclusion or exposure of evaluation/test data in training or experimental setups, compromising validity. "implicit data leakage"

- differential testing: A testing approach comparing behaviors across versions or implementations to detect regressions or vulnerabilities. "The benchmark assesses functional correctness through differential testing"

- Diffusion models: Generative models that iteratively denoise samples, increasingly used for parallel and holistic code generation. "Diffusion models [...] have been heralded as an attractive alternative for code generation"

- DoS: Denial-of-Service, disrupting service availability by overwhelming or incapacitating systems. "it cannot just freely DoS production deployments"

- few-shot: A prompting setup where models are guided by a small number of examples to perform a task. "zero-shot or few-shot settings"

- Formal Query Language (FQL): A high-level, precise language used to express user intents for formal verification of generated code. "Formal Query Language (FQL)."

- formal methods: Mathematically rigorous techniques for specifying, verifying, and reasoning about software correctness and safety. "neurosymbolic approaches that combine LLMs with formal methods"

- Foundry testing framework: A tooling framework for testing Ethereum smart contracts with high automation and reproducibility. "compatible with the Foundry testing framework."

- forward security: The ability of code or systems to remain secure against stronger future adversaries and tools. "AI-generated code may have very weak forward security"

- fuzzing: Automated generation of inputs to explore program behaviors and trigger crashes or bugs. "Fuzzing-based testing enjoys an increased coverage"

- human-in-the-loop: A process where humans participate in the decision or verification loop of automated systems. "making the human-in-the-loop a likely point of weakness"

- interprocedural backward slicing: A program analysis technique that traces data and control dependencies backward across function boundaries from a target point. "interprocedural backward slicing"

- invariant checks: Assertions that certain properties must always hold in a program, used to prevent classes of bugs. "pro-active invariant checks"

- Knowledge Base: A structured repository that maps high-level concepts to platform-specific details to aid verification or generation. "use of a Knowledge Base to map high-level concepts"

- LLM-as-a-Judge: A pattern where an LLM adjudicates or consolidates evidence to make final decisions about code or vulnerabilities. "an LLM-as-a-Judge module"

- Low-Rank Adaptation (LoRA): A parameter-efficient fine-tuning technique that injects low-rank updates into model weights. "Low-Rank Adaptation (LoRA)"

- metamorphic testing: A testing method that uses expected relations between multiple inputs/outputs to detect defects without an oracle. "using metamorphic testing"

- Nagini verifier: A static verifier for Python programs, built on the Viper verification infrastructure, used to prove correctness properties of code or policies. "using the Nagini verifier."

- non-autoregressive decoding: A generation method that produces outputs in parallel or as wholes, enabling global constraint checking. "non-autoregressive decoding"

- path explosion: The combinatorial growth of execution paths in program analysis, making exhaustive exploration infeasible. "runs into some version of path explosion"

- PatchDB: A dataset of real-world vulnerability fixes useful for studying the locality and nature of security patches. "PatchDB"

- penetration testing: Authorized simulated attacks against systems to evaluate and improve security. "manual attack generation approaches such as penetration testing, etc."

- prefix tuning: A technique that steers transformer models by optimizing virtual prompt tokens prepended to inputs. "prefix tuning"

- Program-Assisted Language (PAL): A method that augments LLMs with lightweight programmatic tools to collect evidence. "Program-Assisted Language (PAL)"

- Proof-of-Concept (PoC): A minimal, reproducible exploit or test that demonstrates a vulnerability is real. "Proof-of-Concept (PoC) exploits"

- Reason-Act-Observe loop (ReAct): An agent pattern that alternates between reasoning, taking actions, and observing outcomes to iteratively improve. "Reason-Act-Observe loop (ReAct)"

- Retrieval-Augmented Generation (RAG): Enhancing model outputs by retrieving and conditioning on relevant external documents or signatures. "Retrieval-Augmented Generation (RAG), which pulls relevant vulnerability signatures from a local database."

- runtime monitor: A component that intercepts and checks actions at execution time against verified policies. "a runtime monitor"

- sanitization: The process of cleaning or encoding untrusted inputs to prevent injection attacks. "sanitization for strings that are output to HTML"

- SAST: Static Application Security Testing, analysis that detects security flaws without executing code. "SAST tools"

- secure-by-construction: Ensuring security properties are enforced during generation so outputs are secure by design. "secure-by-construction guarantees"

- semantic equivalence: The property that two artifacts have the same meaning or behavior, even if syntactically different. "cross checking the formalizations for semantic equivalence"

- SMT-LIB: A standard input language for SMT solvers used to formalize and verify logical constraints. "By formalizing policies into SMT-LIB,"

- State Calculus: A mathematical model used to represent and compare system state changes for verification. "State Calculus -- a simplified mathematical model"

- static analysis: Automated analysis of code without execution to detect bugs or vulnerabilities. "under static analysis"

- symbolic interpreter: An engine that executes code with symbolic values to reason about all possible behaviors. "A symbolic interpreter then compares"

- Systematic Literature Review (SLR): A structured methodology for comprehensively surveying and analyzing research literature. "Systematic Literature Review (SLR)"

- taint flows: Paths through which untrusted data propagates to sensitive operations, potentially causing vulnerabilities. "taint flows"

- transformer attention mechanism: The attention computation in transformer models that controls how tokens influence each other. "transformer attention mechanism"

- uncertainty estimation grounded in semantic equivalence: Techniques that assess model uncertainty using criteria tied to meaning rather than surface form. "uncertainty estimation grounded in semantic equivalence"

- zero-day vulnerabilities: Previously unknown and unpatched security flaws that attackers can exploit before fixes are available. "zero-day vulnerabilities"

- zero-shot: Performing a task without seeing any task-specific examples during prompting or training. "zero-shot or few-shot settings"
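As a small illustration of how two of the glossary entries above fit together (sanitization and metamorphic testing), the following sketch checks a metamorphic relation on an HTML sanitizer without needing an oracle for the correct output. The relation used here, that sanitizing a concatenation equals concatenating the sanitized parts, and the helper names `relation_holds`, `sanitize`, and `naive_strip` are assumptions made for this example, not something taken from the paper.

```python
import html
import random
import string
from typing import Callable


def sanitize(text: str) -> str:
    """Encode HTML-special characters using the standard library."""
    return html.escape(text, quote=True)


def naive_strip(text: str) -> str:
    """Deliberately weak sanitizer: removes literal <script> tags only."""
    return text.replace("<script>", "")


def relation_holds(sanitizer: Callable[[str], str], a: str, b: str) -> bool:
    """Metamorphic relation: sanitizer(a + b) == sanitizer(a) + sanitizer(b)."""
    return sanitizer(a + b) == sanitizer(a) + sanitizer(b)


def random_check(sanitizer: Callable[[str], str], trials: int = 200, seed: int = 0) -> bool:
    """Test the relation on random pairs drawn from an HTML-heavy alphabet."""
    rng = random.Random(seed)
    alphabet = string.ascii_letters + "<>&\"' "
    for _ in range(trials):
        a = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 12)))
        b = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 12)))
        if not relation_holds(sanitizer, a, b):
            return False
    return True


print(random_check(sanitize))                        # character-wise escaping keeps the relation
print(relation_holds(naive_strip, "<scr", "ipt>"))   # the split tag breaks the relation
```

The escaping sanitizer satisfies the relation because it rewrites each character independently, while the tag-stripping one fails on a tag split across the two inputs. Random trials alone may miss such a case, which is why the failing pair is given explicitly here.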
