- The paper introduces a dynamics-aware world model that predicts latent object states using historical proprioceptive data and reference motion.

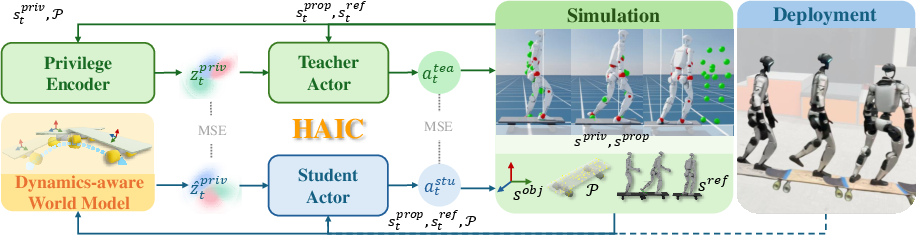

- It employs explicit geometric projection and an asymmetric distillation framework to enable robust sim-to-real transfer without external perception.

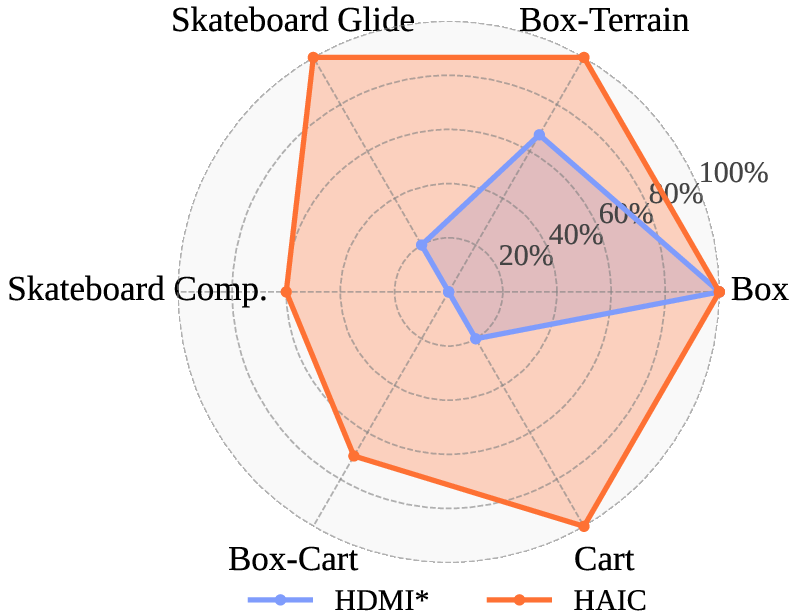

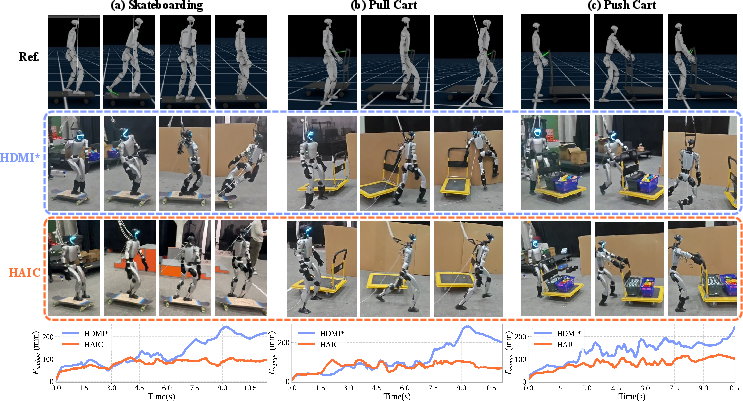

- Real-world evaluations report up to 100% success in complex agile tasks, including skateboarding and cart manipulation, under visual occlusion.

Humanoid Agile Object Interaction Control via Dynamics-Aware World Model (HAIC): Technical Analysis

Introduction and Problem Motivation

The HAIC framework targets humanoid Human-Object Interaction (HOI), with a focus on robust, agile interaction with both fully actuated and underactuated objects in visually occluded, unstructured environments. Prior HOI methods largely address fully actuated objects, whose kinematics are tightly constrained by the end-effector; the resulting dynamics are tractable but unrealistic. Real-world scenarios also involve underactuated objects, such as carts and skateboards, which have independent dynamics and non-holonomic constraints, generate complex contact forces, and frequently occlude the visual state. Policies that rely on privileged state estimation or explicit visual feedback are poorly suited to these conditions.

HAIC addresses these limitations by integrating proprioception-driven, simulation-distilled world modeling with high-order dynamics prediction and explicit geometric priors, enabling sim-to-real transfer for whole-body humanoid control without reliance on external perception.

Figure 1: HAIC targets a diverse suite of fully actuated and underactuated objects, critical for robust HOI.

Dynamics-Aware World Model Architecture

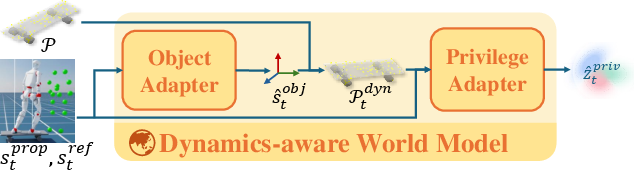

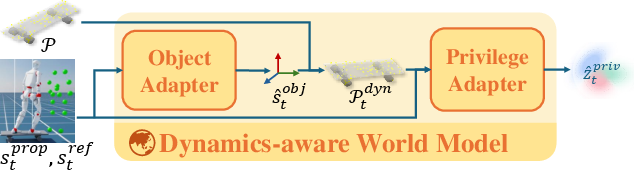

HAIC’s central innovation is a dynamics-aware world model (DWM) that predicts latent, unobserved object states—velocity and acceleration—exclusively from historical proprioceptive data and reference motion. The core pipeline comprises the following stages:

- High-Order Dynamics Prediction: The model leverages a temporal window of proprioception to infer linear/angular positions, velocities, and accelerations of interactive objects, accounting for coupled humanoid-object dynamics during contact and manipulation.

- Explicit Geometric Projection: Predicted object kinematics are mapped onto canonical point cloud templates (geometric priors) via a differentiable transform, yielding a dynamic, spatially grounded representation of occupancy and surface contact affordances. This geometric grounding is critical for stable control under visual occlusion, functioning as an implicit collision boundary predictor.
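
The geometric projection step can be illustrated with a plain rigid-body transform. The following is a minimal sketch, not the authors' implementation: it assumes the predicted pose is given as a position plus a unit quaternion in (w, x, y, z) order, and the function names `quat_to_rotation` and `project_template` are hypothetical. The map p' = R p + t is differentiable with respect to the pose, so the same arithmetic written in an autodiff framework would let gradients flow back into the predictor; this pure-Python version only shows the geometry.

```python
import math

def quat_to_rotation(q):
    """Convert a quaternion (w, x, y, z) into a 3x3 rotation matrix.

    The quaternion is normalized first, so small prediction errors in
    its norm do not distort the template.
    """
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def project_template(template, position, quaternion):
    """Place a canonical point-cloud template at the predicted object pose.

    template:   list of (x, y, z) points in the object's own frame
    position:   predicted object position (assumed world frame)
    quaternion: predicted object orientation as (w, x, y, z)
    """
    R = quat_to_rotation(quaternion)
    projected = []
    for p in template:
        projected.append(tuple(
            sum(R[i][j] * p[j] for j in range(3)) + position[i]
            for i in range(3)
        ))
    return projected

# Usage: a two-point "template" rotated 90 degrees about z and shifted.
half = math.sqrt(0.5)
cloud = project_template(
    [(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)],
    position=(1.0, 2.0, 3.0),
    quaternion=(half, 0.0, 0.0, half),
)
```

In this toy usage the point (1, 0, 0) rotates onto the y-axis and is then translated, which is the behaviour a policy would rely on to know where the object's surfaces are without seeing them.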

Figure 2: The DWM architecture predicts latent object dynamics from proprioceptive histories, projecting states onto geometric priors to reconstruct spatial features required for downstream control.
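
To make the input side of the DWM concrete, the sketch below assembles a fixed-length proprioceptive history together with a reference-motion vector and passes it through a stand-in predictor. Everything here is an illustrative assumption: the class `ProprioHistory`, the function `predict_object_state`, the zero-padding of the buffer, and the 3+3+3 output layout are hypothetical, and a single linear layer stands in for whatever learned network the paper actually uses.

```python
from collections import deque

class ProprioHistory:
    """Fixed-length window over recent proprioceptive frames."""

    def __init__(self, horizon, frame_dim):
        self.frame_dim = frame_dim
        # Zero-pad so the feature vector has a constant size from step 0.
        self.frames = deque(
            ([0.0] * frame_dim for _ in range(horizon)), maxlen=horizon
        )

    def push(self, frame):
        """Append the newest frame; the oldest one is dropped automatically."""
        if len(frame) != self.frame_dim:
            raise ValueError("unexpected proprioceptive frame size")
        self.frames.append(list(frame))

    def features(self, reference):
        """Flatten the window (oldest first) and append the reference motion."""
        flat = [value for frame in self.frames for value in frame]
        return flat + list(reference)

def predict_object_state(weights, features):
    """Stand-in linear head: 9 outputs split into position, velocity, acceleration."""
    if len(weights) != 9:
        raise ValueError("expected 9 output rows (3 each for pos, vel, acc)")
    out = [sum(w * x for w, x in zip(row, features)) for row in weights]
    return {"position": out[0:3], "velocity": out[3:6], "acceleration": out[6:9]}

# Usage with toy sizes: 3-step window, 2 values per frame, 1 reference value.
history = ProprioHistory(horizon=3, frame_dim=2)
history.push([1.0, 2.0])
history.push([3.0, 4.0])
feats = history.features(reference=[9.0])
state = predict_object_state([[1.0] * len(feats) for _ in range(9)], feats)
```

A real model would also predict angular quantities and would be trained in simulation, where the true object state is available as a supervision signal; the sketch only shows how a temporal window can be turned into a fixed-size model input.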

Policy Distillation and Training Paradigm

HAIC employs a two-stage asymmetric distillation scheme in which a teacher policy with access to privileged observations is distilled into a student policy that acts on partial observations only.

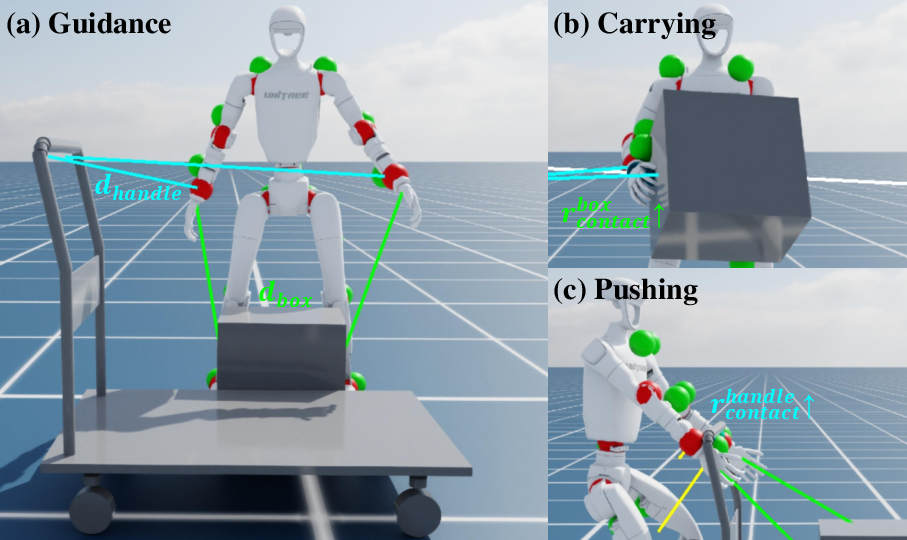

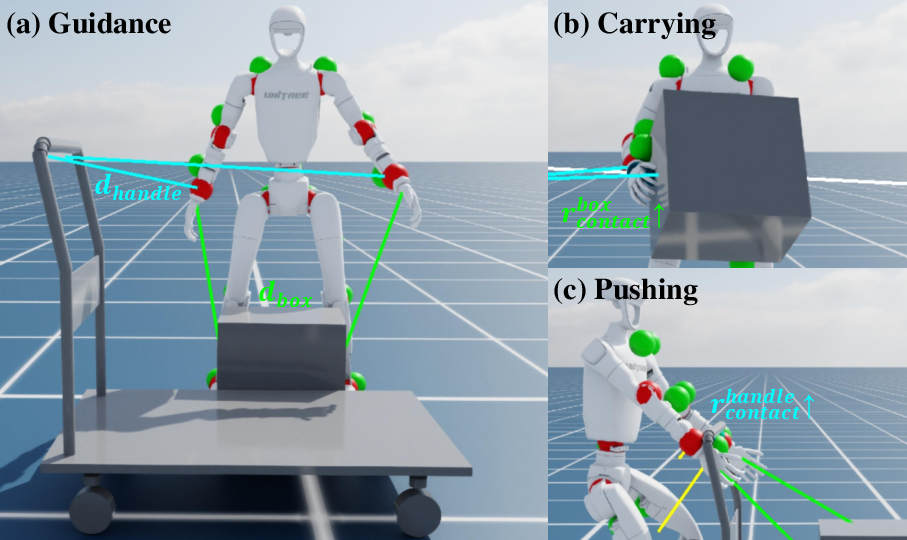
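
The teacher-to-student step can be sketched as plain supervised regression. This toy example is an assumption-laden illustration, not the paper's training procedure: the teacher is a hand-written function rather than a trained policy, the student is a one-dimensional linear model, the loss is assumed to be mean squared error on actions, and the hidden object velocity is made a deterministic function of the proprioceptive signal so that the student can recover it. All names (`teacher_action`, `student_action`, `distill`) are hypothetical.

```python
def teacher_action(q, v_obj):
    """Privileged teacher: sees joint signal q AND the hidden object velocity."""
    return q + v_obj

def student_action(params, q):
    """Student: sees only the proprioceptive signal q."""
    w, b = params
    return w * q + b

def distill(samples, lr=0.1, steps=500):
    """Fit the student to the teacher's actions by gradient descent on MSE.

    samples: list of (q, v_obj) pairs collected in simulation, where the
             privileged quantity v_obj is available to the teacher only.
    """
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for q, v_obj in samples:
            err = student_action((w, b), q) - teacher_action(q, v_obj)
            grad_w += 2.0 * err * q / n
            grad_b += 2.0 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy data: the hidden velocity happens to equal 3 * q, so the teacher's
# action is 4 * q and a well-distilled student should learn w close to 4.
samples = [(q, 3.0 * q) for q in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w, b = distill(samples)
```

The asymmetry is in the function signatures: the teacher takes the privileged quantity as an argument and the student does not, so whatever the student learns about the object must be inferred from its own observations.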

Reward Design and Multi-Object Interaction

To support robust multi-object manipulation, HAIC introduces a contact reward unifying geometric alignment and force regulation, structured into guidance and execution phases. The reward jointly optimizes proximity of end-effectors to semantic targets during contact search and maintains regulated contact force post-establishment, preventing drift and instability. This strategy supports mode-switching and discrete contact transitions critical for long-horizon sequential tasks.
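
A two-phase contact reward of this kind could look like the sketch below. The functional form is an assumption made for illustration: the exponential shaping, the parameter names (`target_force`, `dist_scale`, `force_scale`), and their default values are hypothetical and are not taken from the paper.

```python
import math

def contact_reward(ee_pos, target_pos, contact_force, in_contact,
                   target_force=20.0, dist_scale=0.1, force_scale=10.0):
    """Two-phase contact reward with values in (0, 1].

    Guidance phase (no contact yet): reward proximity of the end-effector
    to its semantic target on the object.
    Execution phase (contact established): reward keeping the measured
    contact force close to a desired magnitude.
    """
    if not in_contact:
        distance = math.dist(ee_pos, target_pos)
        return math.exp(-distance / dist_scale)
    force_error = abs(contact_force - target_force)
    return math.exp(-force_error / force_scale)

# Usage: approaching the target raises the guidance reward; once in
# contact, the reward depends on force regulation only.
far = contact_reward((0.5, 0.0, 0.0), (0.0, 0.0, 0.0), 0.0, in_contact=False)
near = contact_reward((0.05, 0.0, 0.0), (0.0, 0.0, 0.0), 0.0, in_contact=False)
held = contact_reward((0.0, 0.0, 0.0), (0.0, 0.0, 0.0), 20.0, in_contact=True)
```

Switching the active term on the contact flag is one simple way to express the mode switching described above; a practical design would likely also weight the two phases so that establishing contact is never less rewarding than hovering near the target.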

Figure 4: Multi-object contact guidance integrates geometric and force constraints for mode switching and robust manipulation.

Empirical Results: Real-World and Sim-to-Real Evaluation

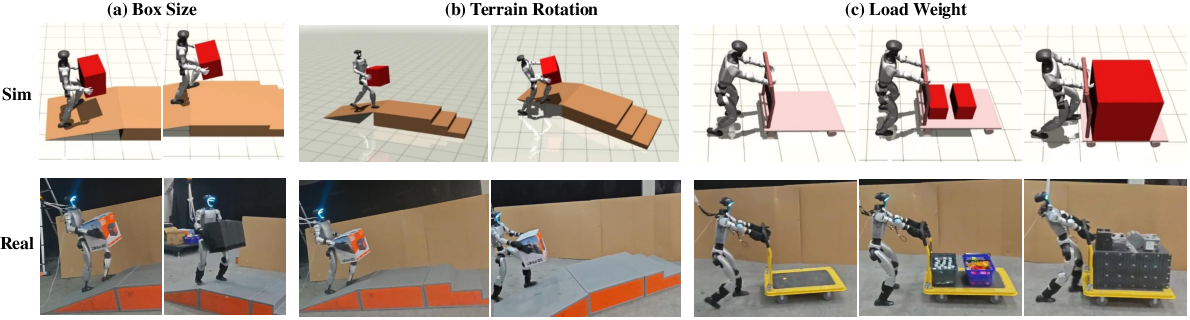

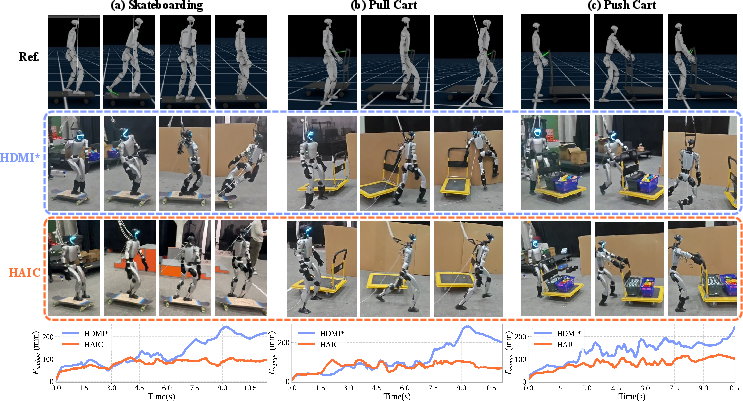

HAIC demonstrates superior sim-to-real transfer and robust generalization in complex underactuated and multi-terrain scenarios on a physical humanoid platform. Key empirical highlights include:

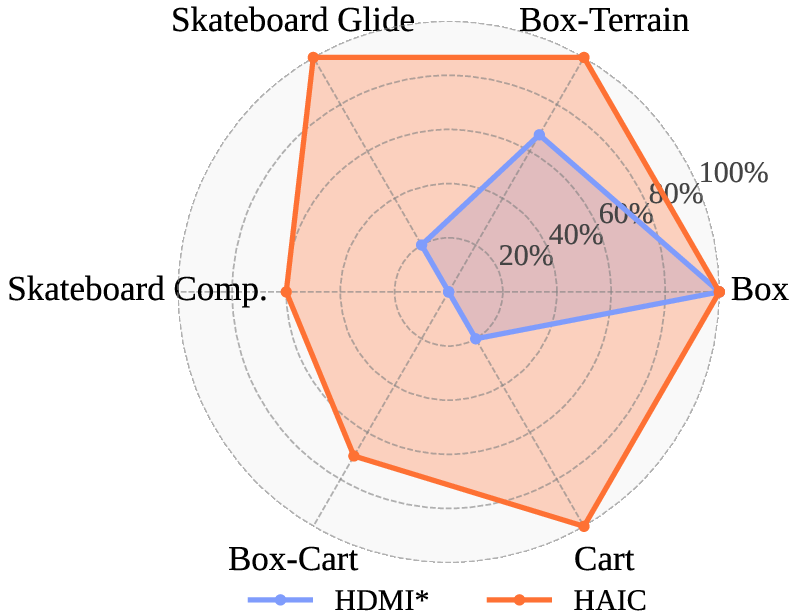

- Underactuated Object Interaction: In skateboarding and cart manipulation tasks, HAIC achieves up to 100% success in gliding and balancing, outperforming both the proprioception-only baseline and the HDMI* baseline (Table results).

- Long-Horizon Composite Tasks: HAIC is the only approach able to sequentially load payloads and manipulate carts with high success rates, whereas all baselines fail due to error accumulation.

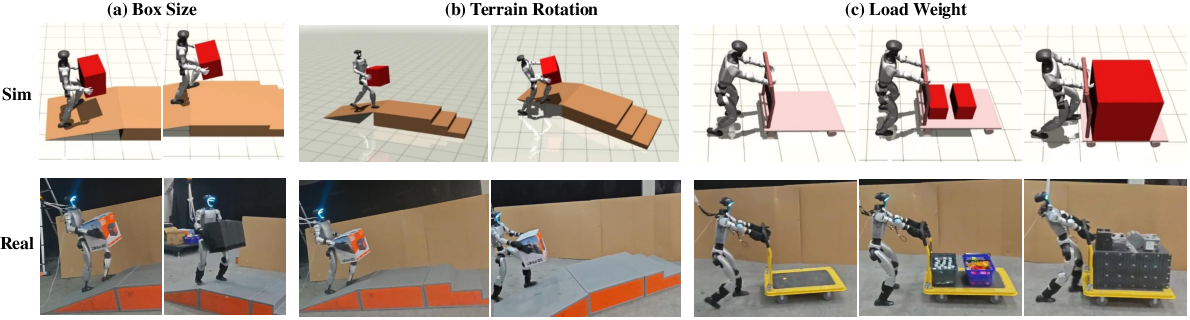

- Multi-Terrain Locomotion: In terrain-adaptive box-carrying tasks (platforms, slopes, stairs), HAIC maintains 100% success rates even under visual occlusion and compounded task difficulty. The DWM’s explicit geometric projection and dynamics prediction effectively bridge the perception gap in sensor-constrained deployments.

Figure 5: HAIC exhibits robust stability in all real-world interaction domains, outperforming baselines that fail under trajectory drift and loss of balance.

Figure 6: Sim-to-real transfer for HAIC shows robust, zero-shot generalization across HOI tasks, terrain types, object sizes, orientations, and load weights.

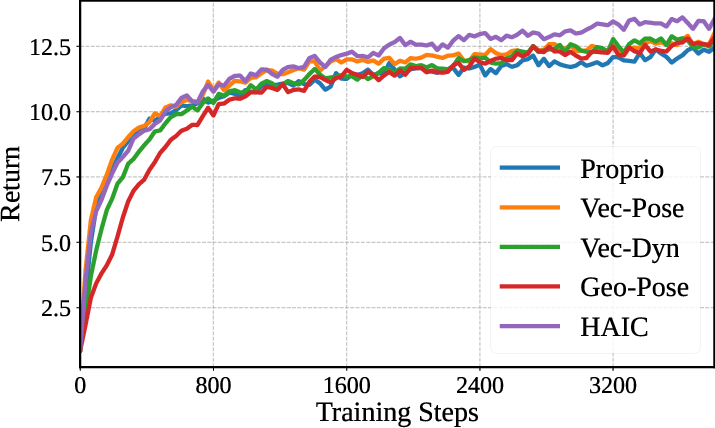

Ablation Study

The authors ablate the contributions of dynamics prediction and geometric projection. The ablation indicates that both high-order prediction and geometric reasoning are necessary.

Implications and Future Directions

HAIC’s architecture marks a significant step in realistic, robust sim-to-real humanoid control for HOI:

- Practical Deployment: Because the policies do not depend on privileged sensors or motion capture for object and terrain state, they can be deployed on unmodified hardware platforms.

- Theoretical Contributions: The explicit integration of geometric priors and high-order dynamics advances internal world modeling under partial observability. The approach makes control more robust to the combined effects of occlusion, distribution shift, and contact discontinuities.

- Bottlenecks/Future Expansion: Future research directions include closing the perception-action loop with real-time vision, handling deformable or articulated objects, and scaling world models to more diverse, non-rigid environments. Extension to multi-contact and collaborative settings (human-robot joint manipulation) appears plausible given the demonstrated generalization.

Conclusion

HAIC fundamentally extends humanoid HOI by fusing dynamics-aware world modeling with explicit geometric priors and asymmetric training, enabling real-world agile whole-body interaction with underactuated objects and complex multistage environments under partial observations. Quantitative results confirm strong sim-to-real transfer, task success, and robustness, while ablation underscores the necessity of high-order prediction and geometric reasoning. These advances pave the way for vision-agnostic, generalizable humanoid deployment in unstructured, visually occluded domains.