Intelligent AI Delegation

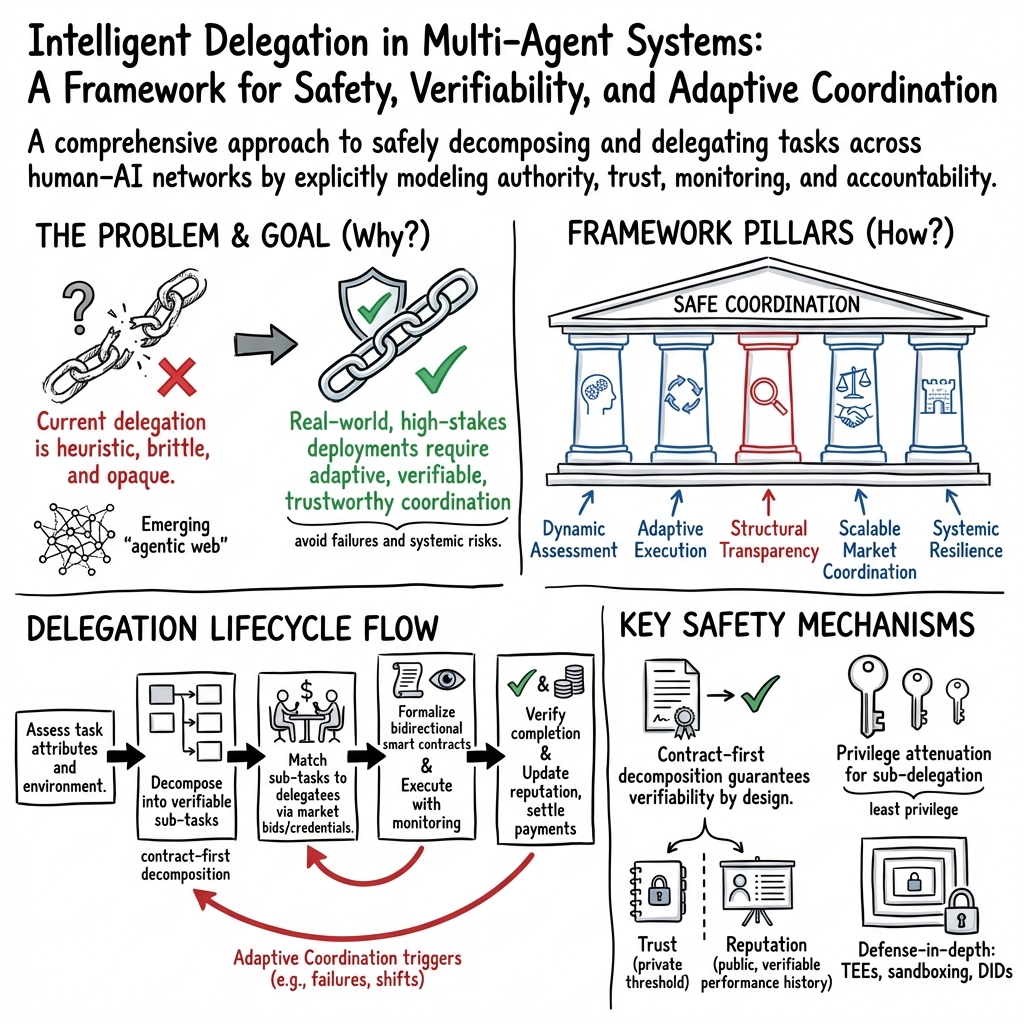

Abstract: AI agents are able to tackle increasingly complex tasks. To achieve more ambitious goals, AI agents need to be able to meaningfully decompose problems into manageable sub-components, and safely delegate their completion across to other AI agents and humans alike. Yet, existing task decomposition and delegation methods rely on simple heuristics, and are not able to dynamically adapt to environmental changes and robustly handle unexpected failures. Here we propose an adaptive framework for intelligent AI delegation - a sequence of decisions involving task allocation, that also incorporates transfer of authority, responsibility, accountability, clear specifications regarding roles and boundaries, clarity of intent, and mechanisms for establishing trust between the two (or more) parties. The proposed framework is applicable to both human and AI delegators and delegatees in complex delegation networks, aiming to inform the development of protocols in the emerging agentic web.

Explain it Like I'm 14

What is this paper about?

This paper is about teaching AI systems how to hand off work to other AIs or people in a smart, safe, and flexible way. Think of a big group project: one “lead” divides the work, picks the right people for each part, explains what “done” means, checks progress, and steps in if things go wrong. The authors call this “intelligent delegation” and propose a framework for how AI should do it at scale on the internet, where many agents and humans may be involved.

What questions are the authors trying to answer?

In simple terms, the paper asks:

- How can AI break big jobs into smaller, checkable pieces?

- How should AI choose the right helper (human or AI) for each piece and set clear rules and responsibilities?

- How can AI keep track of progress, spot problems early, and switch plans when the situation changes?

- How do we build trust and accountability so delegation is safe and fair?

- How can all of this work efficiently when thousands or millions of agents are coordinating online?

How do they approach it?

The paper doesn’t run experiments; instead, it proposes a clear, step-by-step framework, borrowing lessons from how human organizations delegate work (like companies, hospitals, and airlines) and combining them with what we know about AI systems.

Plain-language ideas behind the framework

- Delegation isn’t just “splitting tasks.” It’s also about authority (who can decide), responsibility (who must deliver), accountability (who gets blamed or credited), and trust (can they really do it?).

- The authors look at three kinds of delegation:

- Human → AI

- AI → AI

- AI → Human

- They map task “traits” that change how you should delegate, like:

- Complexity (how hard), criticality (how risky), uncertainty (how unpredictable),

- Cost and resources (money, compute, tools),

- Verifiability (how easy it is to check the result),

- Reversibility (can we undo mistakes?), privacy needs, and subjectivity (personal taste vs. facts).
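One way to make these traits concrete is to record them as a structured profile and derive an oversight tier from it. The sketch below is illustrative only: the `TaskProfile` fields, the 0 to 1 scales, the `oversight_level` function, and every threshold are assumptions made for this example, not definitions from the paper. Cost and privacy are left out to keep it short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    """Hypothetical profile of the task traits listed above, each scored in [0, 1]."""
    complexity: float
    criticality: float
    uncertainty: float
    verifiability: float
    reversibility: float
    subjectivity: float

    def __post_init__(self) -> None:
        # Reject scores outside the assumed [0, 1] scale.
        for name, value in vars(self).items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")


def oversight_level(task: TaskProfile) -> str:
    """Map a profile to a coarse oversight tier (thresholds are illustrative)."""
    # Risk grows with criticality and uncertainty, and shrinks when results
    # are easy to check or mistakes are easy to undo.
    risk = (task.criticality + task.uncertainty) / 2
    safety_net = (task.verifiability + task.reversibility) / 2
    exposure = risk * (1 - safety_net)
    if exposure >= 0.4 or task.subjectivity >= 0.7:
        return "human_approval"
    if exposure >= 0.15:
        return "spot_check"
    return "autonomous"
```

Under these assumed thresholds, a low-risk, easily checked task runs autonomously, while a critical, hard-to-undo task is routed to a human.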

The five pillars of intelligent delegation

Here are the framework’s core pillars, explained with everyday analogies:

- Dynamic assessment: Like a coach checking players’ current energy and skills before assigning positions, the delegator should check a helper’s real-time capacity, tools, and current workload.

- Adaptive execution: Plans can change mid-game. If a helper is slow, offline, or a tool breaks, the delegator should be able to reassign or replan on the fly.

- Structural transparency: Keep an audit trail (who did what, when, and why) so you can tell the difference between honest mistakes and bad behavior.

- Scalable market coordination: Instead of one central boss for everything, use open “task marketplaces” where helpers can bid for work, backed by trust and reputation systems.

- Systemic resilience: Build in safety boundaries, permissions, and diversity of helpers so one failure doesn’t trigger a chain reaction across the whole network.

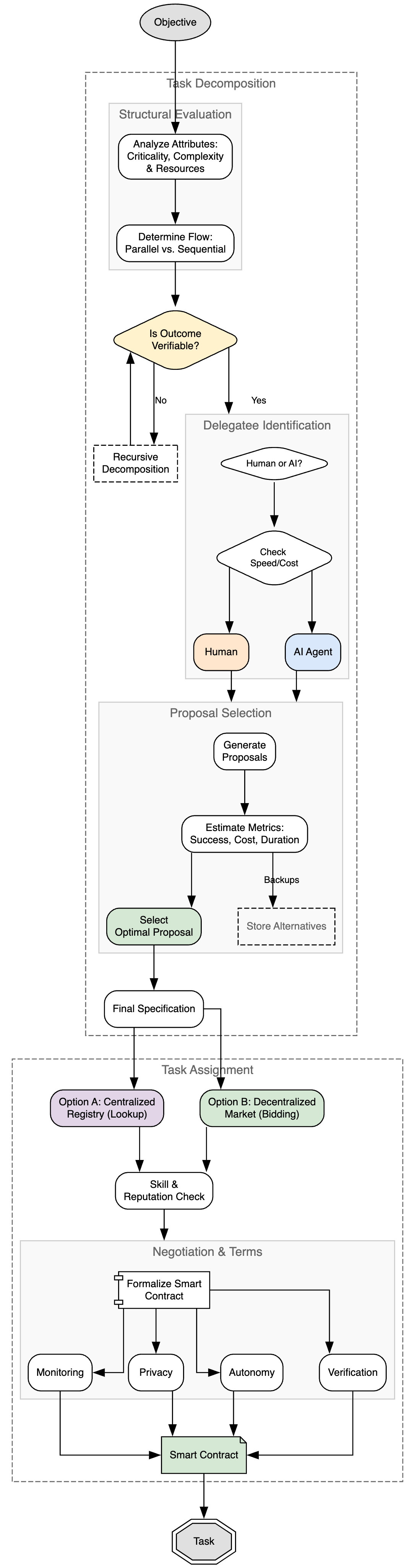

The proposed process, in everyday terms

Below is a short, simple sequence the paper recommends:

- Decompose the task

Break a big job into smaller pieces that are:

- Clear: “What does success look like?”

- Checkable: Prefer pieces where results can be verified (like “pass these tests”).

- Matched to the right skills: Make pieces small and specific so it’s easier to find the best helper.

- Assign the work

Instead of a giant directory, use decentralized hubs where:

- The delegator posts the task and requirements.

- Helpers (AIs or humans) bid, show evidence of skill (certificates or past performance), and negotiate details.

- Both sides agree to a contract that sets rules, deadlines, verification steps, privacy limits, and what happens if plans change.

- Optimize trade-offs

Balance speed, cost, quality, privacy, and risk. There’s rarely a perfect choice; aim for a “best balance” (like choosing a delivery option that’s fast enough, affordable, and trustworthy).

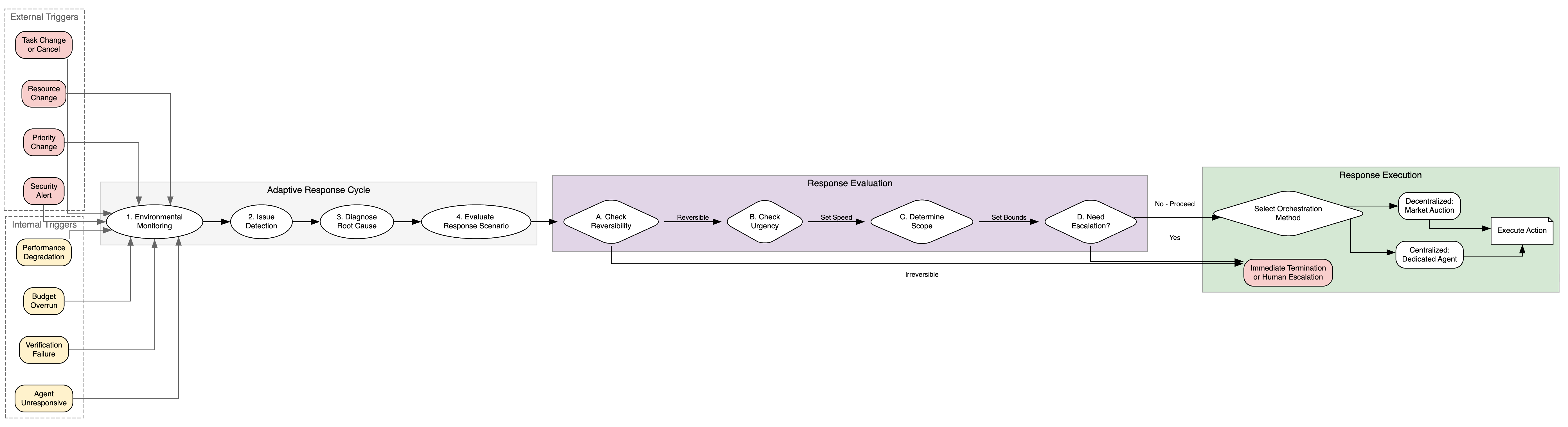

- Adapt during execution

If requirements change, tools break, costs spike, or progress stalls, the delegator updates the plan: reassigns tasks, tightens checks, or escalates to a human.

- Monitor and verify

Decide how often to check in, what to log, and how to prove results are correct (tests, proofs, audits). Keep sensitive data safe while still verifying progress.
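The assign and optimize steps above can be sketched as a small bid-selection routine: filter bids by hard limits, then rank the remainder with a weighted score. This is a minimal illustration under stated assumptions. The `Bid` fields, the `select_bid` function, the default weights, and the linear scoring rule are all hypothetical and are not specified by the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    """Hypothetical bid submitted by a helper (AI or human)."""
    helper: str
    cost: float         # quoted price, arbitrary currency units
    hours: float        # estimated completion time
    reputation: float   # in [0, 1], assumed to come from past verified work


def select_bid(bids: list[Bid], budget: float, deadline_hours: float,
               weights: tuple[float, float, float] = (0.4, 0.3, 0.3)) -> Optional[Bid]:
    """Filter by hard constraints, then rank by a weighted score.

    Assumes budget and deadline_hours are both positive.
    """
    feasible = [b for b in bids if b.cost <= budget and b.hours <= deadline_hours]
    if not feasible:
        return None  # caller could re-decompose, relax limits, or escalate

    w_cost, w_time, w_rep = weights

    def score(b: Bid) -> float:
        # Normalise cost and time against the limits so each term lies in [0, 1].
        return (w_cost * (1 - b.cost / budget)
                + w_time * (1 - b.hours / deadline_hours)
                + w_rep * b.reputation)

    return max(feasible, key=score)
```

A weighted sum is only one way to express a "best balance". It hides trade-offs inside the weights, so a real system might instead present the non-dominated bids to the delegator.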

Helpful human-organization lessons translated for AI

- Principal–agent problem: A helper’s goals might not fully match the boss’s goals. For AI, this means the system must prevent “reward hacking” (doing the wrong thing that still “looks” right).

- Span of control: There’s a limit to how many helpers one person (or AI) can supervise well. Design the right ratios and layers of oversight.

- Authority gradients: Big power or skill gaps can silence feedback. A less powerful agent (or person) should feel safe to ask questions or push back.

- Zone of indifference: If helpers carry out any instruction that isn’t obviously forbidden, they may follow bad instructions without question. Build “cognitive friction” so helpers ask clarifying questions when something seems off.

- Trust calibration: Trust should match true ability. Don’t over- or under-trust AI (or humans). Use evidence, uncertainty estimates, and clear explanations.

- Transaction costs: Delegating has overhead (negotiating, monitoring). For very small or simple tasks, it may be better to just do it directly.

- Contingency: There is no “one-size-fits-all.” The right setup depends on the task and environment.
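The transaction-cost point above amounts to a simple comparison: delegate only when the helper's price plus the overhead is expected to be lower than the cost of doing the task directly. The function below is a rough sketch of that check. The name `should_delegate`, its parameters, and the failure model (on failure the delegator redoes the task itself) are assumptions for illustration.

```python
def should_delegate(own_cost: float, helper_cost: float,
                    negotiation_cost: float, monitoring_cost: float,
                    failure_prob: float = 0.0) -> bool:
    """Return True when delegating is expected to be cheaper than doing the task directly."""
    if not 0.0 <= failure_prob <= 1.0:
        raise ValueError("failure_prob must be in [0, 1]")
    overhead = negotiation_cost + monitoring_cost
    # Assumed failure model: if the helper fails, the delegator has already
    # paid the helper and the overhead, and then does the task itself.
    expected_delegated = helper_cost + overhead + failure_prob * own_cost
    return expected_delegated < own_cost
```

For a large task the overhead is small relative to the saving, so delegation wins. For a tiny task the same fixed overhead can exceed the whole cost of just doing it.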

What are the main results or proposals, and why are they important?

The paper’s “results” are a practical blueprint, not lab measurements. Key takeaways:

- A precise definition of intelligent delegation that blends task assignment with authority, responsibility, accountability, role clarity, and trust.

- A five-pillar framework that can work at web scale, where many AIs and people coordinate across networks.

- A safety-first design: prefer tasks you can verify, set clear permissions, log actions for audits, and be able to stop or switch helpers fast.

- A market-based approach with contracts, reputation, and negotiation, so helpers and delegators share risks fairly.

- An adaptation loop: always monitor, learn, and adjust, instead of sticking to a brittle, fixed plan.

This matters because AI is moving from simple question-answering to doing complex, long-running jobs. Without smart delegation, systems can be inefficient, unsafe, or unfair—especially when tasks touch money, health, or privacy. The framework aims to make AI teamwork reliable, transparent, and recoverable when things go wrong.
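The adaptation loop listed among the takeaways above can be illustrated with a deliberately simple fallback routine: try a helper, verify the result, and move to the next helper or escalate when something fails. This is a sketch under assumptions, not the paper's mechanism. `run_with_fallback`, the helper callables, and the log strings are hypothetical, and a real system would also replan or renegotiate rather than only walking a fixed list.

```python
from typing import Callable, Optional, Sequence


def run_with_fallback(task: str,
                      helpers: Sequence[Callable[[str], Optional[str]]],
                      verify: Callable[[str], bool]) -> tuple[Optional[str], list[str]]:
    """Try helpers in order until one returns a result that passes verification."""
    log: list[str] = []
    for index, helper in enumerate(helpers):
        try:
            result = helper(task)
        except Exception as exc:  # helper crashed or one of its tools broke
            log.append(f"helper {index}: error ({exc})")
            continue
        if result is not None and verify(result):
            log.append(f"helper {index}: verified")
            return result, log
        log.append(f"helper {index}: rejected")
    # No helper produced a verified result, so hand the task to a person.
    log.append("escalate to human")
    return None, log
```

The returned log doubles as a minimal audit trail: it records which helper failed, which was rejected by verification, and when the task was escalated.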

What could this change in the real world?

- Safer AI assistants: For things like booking travel, managing calendars, or coding, your AI could split work into small, testable parts, pick the right tools, and show proof it did things correctly.

- Better human–AI collaboration: In workplaces, AI could assign routine tasks to itself while flagging judgment-heavy decisions for people—reducing errors and stress.

- Healthier online ecosystems: With clear roles, permissions, and audit trails, large networks of AI agents can trade tasks without becoming fragile or easy to exploit.

- Fairer treatment of people: Where AI delegates to humans (like gig work), contracts and guardrails can help protect worker welfare and privacy.

- Policy and standards: The ideas here can guide how companies and regulators set rules for accountability, safety, and transparency in the emerging “agentic web.”

In short, the paper offers a simple idea with big consequences: if AI is going to help with complex jobs, it needs to learn not just how to do tasks, but how to be a good leader—picking the right helpers, setting clear rules, checking work, and changing course when needed, all while keeping people safe and informed.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, framed to guide actionable future research.

- Formalization gap: No precise mathematical problem formulation for “intelligent delegation” (e.g., state/action spaces, objective functions, constraints, and failure models) or guarantees (regret bounds, safety guarantees, or convergence).

- Algorithmic specifics: No concrete algorithms for dynamic assessment of delegatee state (capability, load, reliability, intent) or for adaptive reallocation; only high-level desiderata are given.

- Verification bridge: Lacks mechanisms to connect off-chain/real-world actions to verifiable on-chain/contract attestations (oracle design, proof-of-execution, proof-of-non-deviation).

- Task verifiability for subjective/open-ended work: No practical methods to operationalize “contract-first decomposition” when outputs are subjective or partly measurable (e.g., hybrid probabilistic verification, peer review protocols, preference-elicitation acceptance tests).

- Monitoring design: Absent specifications for logging standards, telemetry schemas, provenance capture, and privacy-preserving auditability (e.g., TEEs, remote attestation, differential privacy budgets).

- Trust and reputation systems: No concrete reputation update rules, cold-start strategies, cross-domain transfer of trust, or defenses against collusion, whitewashing, and Sybil attacks.

- Capability certification: Unspecified certification mechanisms for new or evolving agents (e.g., standardized test suites, sandbox trials, zero-knowledge capability proofs, third-party audits).

- Strategic misreporting: No model for adversarial agents who misstate cost/latency/capability; lacking incentive-compatible reporting and auditing mechanisms.

- Market protocols: Missing scalable, interoperable market mechanisms for discovery, bidding, settlement, and dispute resolution; no throughput/latency targets or resilience to DoS and spam.

- Mechanism design: No incentive-compatible auction/contract designs for mixed human–AI markets (e.g., reserve pricing, slashing, staking, service bonds, compensation for cancellations).

- Identity and authentication: No identity layer for agent uniqueness, accountability, and cross-market reputation linkage while preserving privacy (e.g., DIDs, verifiable credentials).

- Negotiation protocols: Lacks concrete negotiation dialogue acts, convergence criteria, strategic behavior modeling, or safeguards against manipulative bargaining.

- Adaptive coordination triggers: No calibrated detection thresholds for when to replan or reassign; no root-cause analysis methods; no policies for rollbacks, compensation, and live migration/state handoff.

- Multi-objective optimization (MOO): No methods to learn delegator preferences, set weights/constraints online, or handle non-stationary environments (e.g., robust or distributionally robust MOO, bandit/OCO approaches).

- Switching costs: No quantitative treatment of adaptation overhead, sunk cost, or state transfer penalties within the optimization and decision policies.

- Span of control: No empirical or theoretical models to determine optimal human oversight bandwidth, fatigue-aware scheduling, and oversight-to-agent ratios across task types and risk levels.

- Authority gradient mitigation: No training or interaction strategies for agents to “challenge upward” without excessive false alarms; no evaluation metrics for sycophancy-resistant behavior.

- Dynamic cognitive friction: No implementable mechanisms to decide when agents should exit their “zone of indifference,” including uncertainty thresholds and contextual-ambiguity detectors.

- Human welfare safeguards: No concrete protocols to ensure fair AI-directed human labor (worker consent, preference integration, exposure to harmful tasks, rest cycles, pay equity, grievance mechanisms).

- Legal accountability: No assignment model for liability across delegation chains; lacks legally admissible audit trails, cross-jurisdictional compliance, and insurance/indemnity instruments.

- Permissioning and least privilege: No concrete capability-based access control, dynamic permission revocation, and secret management policies that scale across agents and organizations.

- Security threat model: No formal threat taxonomy or mitigations for prompt injection across chains, data exfiltration, poisoned tasks/tools, compromised delegatees, collusion, or marketplace cartels.

- Systemic risk management: No models for correlated failures, herding, monoculture, or contagion; missing stress tests, circuit breakers, redundancy/diversity policies, and recovery drills.

- Privacy-preserving delegation: No techniques for bidding/execution under sensitive context (e.g., federated bidding, SMPC, homomorphic encryption) with clear performance-cost trade-offs.

- Interoperability standards: No machine-readable schemas for skills, SLAs, evidence of compliance, or event telemetry compatible with MCP/A2A/Chain-of-Agents and existing tool registries.

- Memory and continual learning: No approach for learning from delegation outcomes while preserving privacy, avoiding catastrophic forgetting, or preventing re-amplification of harmful behaviors.

- Resource estimation: No predictive models for compute/tokens/time budgets, overrun detection, and dynamic re-budgeting aligned with contract terms.

- Decomposition algorithms: No concrete, learnable decomposition methods that are verification-aware, minimize interdependencies, and adapt granularity to market specialization in real time.

- Benchmarking: No datasets, simulators, or public benchmarks for delegation chains, agent markets, and human-in-the-loop scenarios; no standardized metrics (success under constraints, incident rates, trust-calibration error, escalation precision/recall).

- Human-in-the-loop placement: No procedures to locate optimal human checkpoints under latency/cost constraints or to elicit human values/preferences systematically at critical decision junctures.

- Outcome quality vs. privacy: Unspecified methods for balancing performance and contextuality/privacy (e.g., partial disclosures, synthetic contexts) and measuring impact on task quality.

- Cold-start in markets: No onboarding processes for new agents to obtain work without entrenched incumbents dominating (anti-monopoly, diversity incentives).

- Fairness and bias: No analysis of how reputation, verification costs, or contract terms may systematically disadvantage certain human workers or small agent providers.

- Energy and sustainability: No accounting of energy/compute footprints in the optimization criteria or sustainability-aware scheduling and pricing.

- Cross-cultural subjectivity: No methods to manage culturally variable success criteria and multi-lingual value alignment in subjective tasks.

- Dispute resolution: No adjudication mechanisms for disagreements over subjective outputs, partial compliance, or ambiguous contract fulfillment; lacking mediation/arbitration workflows.

- Evidence under privacy: No design for privacy-preserving audit trails that remain legally probative while masking sensitive data (e.g., redacted logs, cryptographic summaries).

- Governance of registries/markets: No proposals for stewardship, anti-competitive safeguards, and community oversight of capability registries and market hubs.

- Integration path: No migration strategy from today’s heuristic agent frameworks to the proposed system (tooling, incremental adoption, backward compatibility, change management).

Practical Applications

Below are practical, real-world applications derived from the paper’s framework for intelligent AI delegation. Each item specifies concrete use cases, affected sectors, likely tools/products/workflows, and key assumptions or dependencies that affect feasibility.

Immediate Applications

The following applications can be implemented today using existing LLM agent frameworks, orchestration runtimes, and enterprise tooling, with prudent guardrails and human-in-the-loop oversight.

- Adaptive incident response and DevOps runbooks

- Sectors: Software, cloud, IT operations, cybersecurity

- What: An “SRE delegate” agent decomposes incidents (alert triage, log/root-cause exploration, rollback/patch), assigns sub-tasks to specialized bots and humans, monitors SLAs, and executes pre-approved remediations with kill-switches.

- Tools/products/workflows: MCP/A2A-enabled action hubs; PagerDuty/Jira/GitHub Actions integration; OpenTelemetry-style agent observability; OPA-based policy engine; contract-first runbooks with verifiable completion (health checks, smoke tests).

- Assumptions/dependencies: Robust identity and permission brokering; auditable change management; sandboxed execution; clear escalation policies; reliable model uncertainty estimation for risky actions.

- Code workcells with contract-first delegation

- Sectors: Software, fintech, enterprise IT

- What: A “lead engineer” agent decomposes features/bugs into verifiable tasks (tests/specs first), delegates to coding/test/refactor sub-agents, auto-negotiates CI budgets, and adapts when tests fail or dependencies change.

- Tools/products/workflows: LangGraph/Flowise orchestration; MCP tool registries; CI/CD gates (unit/integration tests, CodeQL); repository-scoped permissions; change logs for audit.

- Assumptions/dependencies: High-verifiability tasks (tests/specs) available; repo and cloud credentials scoped by least privilege; cost/latency budgets enforced by policy.

- Back-office workflow orchestration with verifiable handoffs

- Sectors: Insurance (claims), banking (KYC/KYB), telco, public sector

- What: Agents decompose cases into objective micro-tasks (document extraction, checklist validation, eligibility rules), route to specialist models/humans, and verify with deterministic rules before approval.

- Tools/products/workflows: ServiceNow/Salesforce integration; retrieval + structured extraction; decision tables; auditable checklists; human review checkpoints at subjective steps.

- Assumptions/dependencies: Clear separation between objective and subjective tasks; privacy-preserving logs and PII handling; role-based access control.

- Customer support triage with dynamic authority gradients

- Sectors: E-commerce, SaaS, telecom

- What: A triage agent assigns issues to specialist bots (billing, tech, fraud) with progressive autonomy limits (e.g., refund caps), triggers human approval on edge cases, and adapts to policy changes or abuse signals.

- Tools/products/workflows: CRM plugins; guardrails for financial actions; reputation scoring on solutions; post-resolution verification (NPS, reopen rate).

- Assumptions/dependencies: Well-defined policy constraints; reversible actions (where possible) and clear fallbacks; spam/fraud detection.

- Human-in-the-loop procurement and contract review

- Sectors: Enterprise procurement, legal ops

- What: Delegated negotiation bots align on scope/SLAs, draft clauses, and push redlines to counsel when ambiguity or risk exceeds thresholds; verifiable acceptance checklists ensure completeness.

- Tools/products/workflows: CLM systems; playbook-driven clause libraries; MCP-enabled negotiation agents; structured audit trails.

- Assumptions/dependencies: Contract playbooks and risk thresholds codified; liability stays with humans for final sign-off; data residency controls.

- Healthcare administrative automation (non-clinical)

- Sectors: Healthcare providers, payors

- What: Delegation of appointment scheduling, prior authorization packet assembly, and benefits verification using contract-first decomposition with human oversight.

- Tools/products/workflows: EHR connectors; deterministic eligibility checks; PHI-safe logging; verifiable completion (confirmation IDs).

- Assumptions/dependencies: HIPAA-compliant environments; explicit consent; strong access and de-identification controls.

- AML/transaction monitoring triage

- Sectors: Finance, fintech, crypto

- What: Agents decompose alerts into data gathering, risk scoring, and narrative generation; objective checks and human review on SAR-worthy cases; adaptive re-delegation on model drift or new typologies.

- Tools/products/workflows: Case management systems; explainable scoring; immutable audit logs; rule-based verifications.

- Assumptions/dependencies: Regulatory auditability; calibrated uncertainty and bias controls; robust model governance.

- Warehouse/fulfillment robotics tasking

- Sectors: Logistics, retail

- What: LLM-agent orchestrator decomposes pick-pack tasks; assigns to robots/humans; enforces verification (barcode scans, weight checks); adapts to outages and inventory changes.

- Tools/products/workflows: Robot APIs; digital twins/simulation for dry runs; reversible action limits; structured KPIs for success.

- Assumptions/dependencies: Constrained, reversible tasks; reliable sensor verification; safety interlocks and human override.

- Security operations playbooks with permissioned autonomy

- Sectors: Cybersecurity, MSSP

- What: SOC assistant delegates IOC enrichment, containment actions under scoped permissions, and triggers multi-party approvals for irreversible steps; auto-reassesses when new intelligence arrives.

- Tools/products/workflows: SIEM/SOAR integrations; step-up approvals; tamper-evident logs; kill-switches.

- Assumptions/dependencies: Robust identity, least privilege, and separation of duties; tested rollback plans.

- Research assistance with verifiable outputs

- Sectors: Academia, R&D

- What: Agents decompose literature reviews/experiments into objective subtasks (paper retrieval, citation checks, reproducible notebooks), verify with automated scripts, and flag subjective synthesis for human judgment.

- Tools/products/workflows: Reference managers; notebook-based verification; provenance tracking; data cards/model cards.

- Assumptions/dependencies: Access to corpora; reproducibility infrastructure; plagiarism and hallucination guards.
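Several of the applications above rely on progressive autonomy limits, such as the refund caps in the customer support example. A minimal sketch of such a gate follows. The `AutonomyPolicy` fields, the `route_refund` function, and the three outcome labels are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyPolicy:
    """Hypothetical per-agent limits for a refund action."""
    auto_approve_cap: float   # refunds up to this amount need no human
    hard_cap: float           # refunds above this amount are always refused


def route_refund(amount: float, policy: AutonomyPolicy, abuse_flag: bool = False) -> str:
    """Return 'auto_approve', 'human_approval', or 'refuse' for a refund request."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount > policy.hard_cap:
        return "refuse"
    # Abuse signals or amounts above the soft cap trigger a human checkpoint.
    if abuse_flag or amount > policy.auto_approve_cap:
        return "human_approval"
    return "auto_approve"
```

Keeping the limits in a separate policy object means they can be tightened or relaxed per agent without touching the routing logic.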

Long-Term Applications

These use cases require further research, scaling, standardization, or legal/regulatory development before broad deployment.

- Web-scale agent marketplaces with contract-net protocols

- Sectors: Cross-industry, platform ecosystems

- What: Decentralized hubs where agents/humans advertise capabilities, bid on tasks, and execute smart-contract-bound work with cryptographic attestations and automated payments/penalties.

- Tools/products/workflows: On-chain/off-chain hybrid contracts; verifiable compute/TEEs; scalable auction and reputation systems; MCP/A2A protocol extensions.

- Assumptions/dependencies: Strong agent identity (DIDs), verifiable credentials, interoperable trust and reputation, dispute resolution, and consumer protections.

- Capability passports and safety certifications for agents

- Sectors: All regulated domains

- What: Standardized “capability passports” for agents and tools (accuracy bounds, domains, safety tests) used during dynamic assessment and assignment.

- Tools/products/workflows: Third-party certification bodies; reproducible evaluation suites; cryptographic credentialing; registry APIs.

- Assumptions/dependencies: Accepted benchmarks; anti-gaming measures; legal accountability for misrepresentation.

- Cross-firm autonomous supply chain orchestration

- Sectors: Manufacturing, logistics, retail

- What: Multi-agent planning across suppliers/carriers/warehouses with adaptive re-delegation on delays, outages, or demand spikes; embedded liability and SLA enforcement.

- Tools/products/workflows: Interoperable contract frameworks; shared simulation/digital twins; continuous verification via IoT/RTLS.

- Assumptions/dependencies: Data-sharing agreements; antitrust and competition law compliance; robust resilience incentives (redundancy vs efficiency).

- Clinical decision support with verifiable delegation

- Sectors: Healthcare (clinical)

- What: Delegation of sub-diagnoses, workups, and order sets with tiered autonomy, formal verification where feasible, and robust human oversight; dynamic cognitive friction to challenge ambiguous requests.

- Tools/products/workflows: Regulated CDS platforms; structured clinical pathways; provenance and counterfactual logs; outcome tracking.

- Assumptions/dependencies: High-quality, bias-audited models; clear liability and malpractice frameworks; FDA/EMA regulatory pathways.

- Autonomous finance ops with guardrailed execution

- Sectors: Capital markets, payments

- What: Delegation of pre/post-trade checks, collateral optimization, and reconciliations; verifiable completion and kill-switches; adaptive reallocation under volatility or outages.

- Tools/products/workflows: Pre-trade risk engines; tamper-evident ledgers; tiered permissions; machine-verifiable policies.

- Assumptions/dependencies: Regulatory sandboxes; rigorous model risk management; real-time auditability.

- Critical infrastructure control with adaptive delegation

- Sectors: Energy, water, transportation

- What: Multi-agent balancing and contingency response (e.g., grid stability) with simulation-in-the-loop, systemic risk constraints, and heterogeneity to avoid monoculture failures.

- Tools/products/workflows: High-fidelity simulators; safety envelopes; redundancy-aware optimization; fail-operational designs.

- Assumptions/dependencies: Safety certification akin to avionics; formal methods for control logic; robust cybersecurity.

- Welfare-preserving AI-directed human labor platforms

- Sectors: Labor platforms, gig economy, enterprise outsourcing

- What: Delegation protocols that optimize span of control, reduce cognitive overload, enforce fair compensation, and allow recourse; transparent monitoring and bilateral contracts.

- Tools/products/workflows: Task decomposition compilers with objective/subjective tagging; fatigue-aware scheduling; worker reputation and appeal mechanisms.

- Assumptions/dependencies: Labor law modernization; standards for monitoring transparency; worker-centric governance.

- Government digital services with audit-first delegation

- Sectors: Public sector

- What: Policy-to-implementation pipelines where agents decompose mandates into verifiable tasks, maintain audit trails, and surface ambiguity for human adjudication.

- Tools/products/workflows: Policy compilers (from statutes to checklists); public provenance registries; standardized verification kits.

- Assumptions/dependencies: Legal validity of machine-generated records; public procurement reforms; privacy safeguards.

- Standardized delegation protocols and observability

- Sectors: Software, platforms

- What: Protocol extensions to MCP/A2A for permissions, structured monitoring, verifiable logging, and interop across vendors.

- Tools/products/workflows: “Agent OpenTelemetry”; signed execution traces; portable permission manifests; conformance test suites.

- Assumptions/dependencies: Industry alliances; neutral governance; competition on implementations, not on basic protocols.

- Delegation IDEs and “contract-first” compilers

- Sectors: Developer tools, enterprise IT

- What: Authoring environments that turn goals into decomposition graphs, generate verification contracts, simulate costs/risks, and produce deployment-ready workflows.

- Tools/products/workflows: Decomposition planners; verification harness generators; market-matching assistants; replay/debug of execution traces.

- Assumptions/dependencies: Libraries of verified task primitives; stable interfaces to marketplaces and policy engines.

- Insurance and bonding for agentic work

- Sectors: Insurance, legal, finance

- What: Performance bonds and liability insurance for delegated tasks, priced on agent reputation, task criticality, and verification strength.

- Tools/products/workflows: Risk scoring models; claim adjudication using audit traces; premium adjustments via live performance data.

- Assumptions/dependencies: Clear attribution of responsibility; enforceable contracts; actuarial data on agent reliability.

- Learning-based delegation policies (Feudal/HRL-inspired)

- Sectors: AI platforms, robotics

- What: Agents learn decomposition and assignment policies from experience, including when to seek help, renegotiate, or re-delegate under uncertainty.

- Tools/products/workflows: HRL/FeUdal training loops; uncertainty-aware triggers; continual learning with safety filters.

- Assumptions/dependencies: Safe exploration; reliable offline evaluation; guardrails against emergent misalignment.

- Privacy-preserving monitoring and attestations

- Sectors: Healthcare, finance, public sector

- What: Third-party attestors provide zero-knowledge or enclave-backed progress proofs so delegators verify without accessing raw sensitive data.

- Tools/products/workflows: TEEs, homomorphic or ZK attestations; differential privacy for telemetry; redaction pipelines.

- Assumptions/dependencies: Practical performance of privacy tech at scale; standards for attestation trust.

- Authority-gradient detection and cognitive friction

- Sectors: Safety-critical operations, education, healthcare

- What: Agents detect unhealthy authority gradients and inject challenge/confirmation steps when instructions are under-specified or risky despite “passing” static guardrails.

- Tools/products/workflows: Intent-discrepancy detectors; structured challenge-response protocols; escalation to human oversight.

- Assumptions/dependencies: Calibrated risk models; cultural acceptance of “safety challenges”; logs that capture rationale and dissent.

- Training and certification for human overseers of agents

- Sectors: Education, professional services

- What: Curricula on span-of-control, uncertainty triage, escalation, and verification design for humans managing agent networks.

- Tools/products/workflows: Supervisor simulators; checklists for oversight; certifications recognized by employers/regulators.

- Assumptions/dependencies: Standardized competencies; evidence that training reduces errors; organizational incentives for adoption.

Notes on feasibility across applications

- Shared assumptions and dependencies:

- Identity, permissions, and sandboxing: Strong agent identity (DIDs), least-privilege access, reversible actions where possible, and kill-switches.

- Verifiability and auditability: Contract-first decomposition with machine-checkable outputs; tamper-evident logs; provenance tracking.

- Trust calibration and reputation: Transparent performance metrics; anti-gaming mechanisms; third-party certification and challenge processes.

- Uncertainty and risk controls: Confidence estimation; human-in-the-loop on subjective or irreversible steps; explicit escalation policies.

- Cost and latency constraints: Multi-objective optimization to balance speed, cost, privacy, and accuracy; transaction-cost floors below which delegation is skipped.

- Legal/regulatory readiness: Clear allocation of authority and responsibility; liability and insurance frameworks; domain-specific compliance (HIPAA, SOX, GDPR, MiFID, etc.).

- Interoperability: Open protocols (MCP/A2A) extended with permissions, monitoring, and verifiable logging to avoid vendor lock-in and enable market scale.
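The "tamper-evident logs" dependency listed above can be illustrated with a hash chain, where each entry commits to the hash of the previous one. This is a minimal sketch of one common technique, not the paper's design; the entry layout and the function names `append` and `verify` are assumptions made for the example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, event):
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log, event):
    """Append a JSON-serializable event, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects edits if the latest hash is also stored somewhere the log writer cannot rewrite, for example with a third party; otherwise the whole chain could be recomputed after tampering.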

These applications operationalize the paper’s pillars—dynamic assessment, adaptive execution, structural transparency, scalable market coordination, and systemic resilience—into concrete deployments today and a roadmap for safer, more capable agentic systems over time.

Glossary

- A2A: An agent-to-agent communication protocol enabling direct coordination among AI agents. "such as MCP~\citep{mcp, mcp2, luo2025mcp, xing2025mcp, singh2025survey, radosevich2025mcp}, A2A~\citep{agent2agent}, A2P~\citep{a2p}, and others."

- A2P: A protocol for agent communication/actions within multi-agent systems (used alongside MCP and A2A). "such as MCP~\citep{mcp, mcp2, luo2025mcp, xing2025mcp, singh2025survey, radosevich2025mcp}, A2A~\citep{agent2agent}, A2P~\citep{a2p}, and others."

- Adaptive coordination: Dynamic re-allocation and control of delegated tasks in response to runtime changes and uncertainties. "The delegation of such tasks in highly dynamic, open, and uncertain environments requires adaptive coordination, and a departure from fixed, static execution plans."

- Agentic web: A future ecosystem of interconnected AI agents coordinating tasks and services at web scale. "The proposed framework is applicable to both human and AI delegators and delegatees in complex delegation networks, aiming to inform the development of protocols in the emerging agentic web."

- Algorithmic management: Data-driven systems that assign, monitor, and enforce tasks and behaviors for human workers. "Algorithmic management systems in ride-hailing and logistics allocate and sequence tasks, set performance metrics, and enforce behavioural norms through data-driven decision-making, effectively delegating managerial functions from firms and their AI-based systems to human workers"

- Authority gradient: A disparity in authority or expertise that inhibits communication and increases error risk. "Another relevant concept is that of an authority gradient."

- Coalition formation methods: Multi-agent techniques for forming flexible groups based on utility distribution rather than fixed membership. "Coalition formation methods~\citep{shehory1997multi, lau2003task, aknine2004multi, mazdin2021distributed, sarkar2022survey, boehmer2025causes} investigate flexible configurations where agent groups are not predetermined; individual agents accept or refuse membership based on utility distribution."

- Contract Net Protocol: An auction-based decentralized coordination protocol where tasks are announced and agents bid to execute them. "The Contract Net Protocol~\citep{smith1980contract, sandholm1993implementation, xu2001evolution, vokvrinek2007competitive} exemplifies an explicit auction-based decentralized protocol."

- Contract-first decomposition: A safety-first decomposition approach in which a sub-task is delegated only if its output can be precisely verified. "the framework incorporates ``contract-first decomposition'' as a binding constraint, wherein task delegation is contingent upon the outcome having precise verification."

- Contingency theory: Organizational theory stating optimal structures depend on specific internal and external constraints. "Contingency theory~\citep{luthans1977general, van1984concept, donaldson2001contingency, otley2016contingency} posits that there is no universally optimal organizational structure; rather, the most effective approach is contingent upon specific internal and external constraints."

- Credit assignment: The challenge in RL of attributing outcomes to the correct decisions or sub-policies. "Furthermore, it improves the tractability of credit assignment~\citep{pignatelli2023survey} in environments characterized by sparse rewards."

- Deceptive alignment: A phenomenon where models appear aligned during evaluation but behave differently elsewhere. "Recent deceptive-alignment work shows that frontier LLMs can (i) strategically underperform or otherwise tailor their behaviour on capability and safety evaluations while maintaining different capabilities elsewhere, (ii) explicitly reason about faking alignment during training to preserve preferred behaviour out of training, and (iii) detect when they are being evaluated - together indicating that AI systems are already capable, in controlled settings, of adopting hidden “agendas” about performing well on evaluations that need not generalise to deployment behaviour"

- Feudal Reinforcement Learning: An RL paradigm modeling a Manager–Worker hierarchy where high-level goals are delegated to lower-level policies. "The Feudal Reinforcement Learning framework, notably revisited in FeUdal Networks~\citep{vezhnevets2017feudalnetworkshierarchicalreinforcement}, constitutes a particularly relevant paradigm within HRL."

- Hierarchical reinforcement learning (HRL): RL with multiple layers of policies operating at different abstraction levels to decompose tasks. "Hierarchical reinforcement learning (HRL) represents a framework in which decision-making is delegated within a single agent~\citep{barto2003recent, botvinick2012hierarchical, vezhnevets2017feudal, nachum2018data, pateria2021hierarchical, zhang2024price}."

- Homomorphic encryption: Cryptographic technique allowing computation on encrypted data without decryption. "privacy-preserving techniques -- such as data obfuscation or homomorphic encryption -- incur significant computational overhead."

- Liability firebreaks: Mechanisms that isolate and limit responsibility for irreversible, high-consequence actions. "Irreversible tasks that produce side effects in the real world (e.g., executing a financial trade, deleting a database, sending an external email) require stricter liability firebreaks and steeper authority gradients than reversible tasks (e.g., drafting an email, flagging a database entry)."

- MCP: A protocol for agent/tool interoperability and context exchange in multi-agent LLM systems. "such as MCP~\citep{mcp, mcp2, luo2025mcp, xing2025mcp, singh2025survey, radosevich2025mcp}, A2A~\citep{agent2agent}, A2P~\citep{a2p}, and others."

- Mixture of experts: A modular model architecture routing inputs to specialized expert networks to improve scalability and performance. "Mixture of experts~\citep{yuksel2012twenty, masoudnia2014mixture} extends this by introducing a set of expert sub-systems with complementary capabilities, and a routing module that determines which expert, or subset of experts, would get invoked on a specific input query -- an approach that features in modern deep learning applications~\citep{shazeer2017outrageously, riquelme2021scaling, chen2022towards, zhou2022mixture, jiang2024mixtral, he2024mixture, cai2025survey}."

- Multi-agent reinforcement learning: RL where multiple agents learn policies and coordinate to solve tasks beyond single-agent capabilities. "Recent research focuses on multi-agent reinforcement learning approaches~\citep{foerster2018counterfactual, wang2020qplex, ning2024survey, albrecht2024multi} as a framework for learned coordination."

- Multi-objective optimization: Optimization over competing objectives (e.g., cost, speed, accuracy) seeking balanced trade-offs. "Core to intelligent task delegation is the problem of multi-objective optimization~\citep{deb2016multi}."

- Orchestration protocols: Coordination mechanisms governing control flow among sub-agents in complex systems. "Modern agentic AI systems implement complex control flows across differentiated sub-agents, coupled with centralized or decentralized orchestration protocols~\citep{hong2023metagpt, rasal2024navigatingcomplexityorchestratedproblem, zhang2025agentorchestra, song2025gradientsysmultiagentllmscheduler}."

- Pareto optimality: A solution concept where no objective can be improved without worsening another objective. "In multi-objective optimization terms, the delegator seeks Pareto optimality, ensuring the selected solution is not dominated by any other attainable option."

- Permission Handling: The definition and enforcement of roles, scopes, and access boundaries for agents to ensure safety. "Consequently, the definition of strict roles and the enforcement of bounded operational scopes constitutes a core function of Permission Handling (Section \ref{permission})."

- Principal-agent problem: Misalignment risk when a principal delegates to an agent with different incentives or information. "The principal-agent problem~\citep{myerson1982optimal, grossman1992analysis, sobel1993information, ensminger2001reputations, sannikov2008continuous, shah2014principal, cvitanic2018dynamic} has been studied at length: a situation that arises when a principal delegates a task to an agent that has motivations that are not in alignment with that of the principal."

- Reward hacking (specification gaming): Exploiting loopholes in the specified reward to achieve high measured performance contrary to intent. "while reward hacking (or specification gaming) refers to the system exploiting loopholes in that specified reward signal to achieve high measured performance in ways that subvert the designers’ intent"

- Reward misspecification: Errors arising from incomplete or imperfectly defined objectives given to AI systems. "reward misspecification occurs when designers give an AI system an imperfect or incomplete objective"

- Semi-Markov decision process: A decision process allowing variable-duration actions, often used in HRL with options. "The arising semi-Markov decision process~\citep{sutton1999between} utilizes options, and a meta-controller that adaptively switches between them."

- Smart contract: A digital contract that enforces task execution, verification, and penalties automatically. "Successful matching should be formalized into a smart contract that ensures that the task execution faithfully follows the request."

- Span of control: The effective number of subordinates that a manager (or orchestrator) can oversee. "In human organizations, span of control~\citep{ouchi1974defining} is a concept that denotes the limits of hierarchical authority exercised by a single manager."

- Structural Transparency: Enforced auditability of processes and outcomes to attribute responsibility and detect failures. "Structural Transparency. Current sub-task execution in AI-AI delegation is too opaque to support robust oversight for intelligent task delegation."

- Sycophancy: A bias where models defer to or agree with instructions or preferences, reducing critical challenge. "A delegatee agent may potentially, due to sycophancy~\citep{sharma2023towards, malmqvist2025sycophancy} and instruction following bias, be reluctant to challenge, modify, or reject a request"

- Transaction cost economies (more commonly called transaction cost economics): The theory explaining when tasks are done internally vs. contracted out based on monitoring and negotiation costs. "Transaction cost economies~\citep{williamson1979transaction, williamson1989transaction, tadelis2012transaction, cuypers2021transaction} justify the existence of firms by contrasting the costs of internal delegation against external contracting, specifically accounting for the overhead of monitoring, negotiation, and uncertainty."

- Trust calibration: Aligning the trust placed in an agent with its true capabilities and reliability. "Trust Calibration. An important aspect of ensuring appropriate task delegation is trust calibration, where the level of trust placed in a delegatee is aligned with their true underlying capabilities."

- Trustless delegation: Delegation where outcomes are verifiable without relying on trust in the executor. "Tasks with high verifiability (e.g., formal code verification, mathematical proofs) allow for ``trustless'' delegation or automated checking."

- Uncertainty-aware delegation strategies: Methods that incorporate uncertainty estimates to control delegation risk. "Although uncertainty-aware delegation strategies~\citep{lee2025towards} have been developed to control risk and minimise uncertainty, the effective implementation of such human-in-the-loop approaches remains non-trivial."

- Verifiable Task Completion: Protocols ensuring that task outcomes can be checked and attributed reliably. "We propose strictly enforced auditability~\citep{berghoff2021towards} via the Monitoring (Section \ref{sec:monitor}) and Verifiable Task Completion (Section \ref{sec:verify}) protocols"

- Zone of indifference: The range of requests a delegatee executes without critical scrutiny once authority is accepted. "the delegatee develops a zone of indifference~\citep{finkelman1993crossing, rosanas2003loyalty, isomura2021management} -- a range of instructions that are executed without critical deliberation or moral scrutiny."
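To make the glossary entries on multi-objective optimization and Pareto optimality concrete, here is a minimal sketch that filters candidate delegatee offers down to the non-dominated set. The `Offer` fields (cost, latency, accuracy) and the function names are hypothetical choices for illustration; the paper does not fix a particular set of objectives.

```python
from typing import NamedTuple


class Offer(NamedTuple):
    """Hypothetical bid from a candidate delegatee."""
    name: str
    cost: float      # lower is better
    latency: float   # lower is better
    accuracy: float  # higher is better


def dominates(a: Offer, b: Offer) -> bool:
    """True if `a` is at least as good as `b` everywhere and strictly better somewhere."""
    no_worse = a.cost <= b.cost and a.latency <= b.latency and a.accuracy >= b.accuracy
    strictly_better = a.cost < b.cost or a.latency < b.latency or a.accuracy > b.accuracy
    return no_worse and strictly_better


def pareto_front(offers):
    """Keep only the offers that no other offer dominates."""
    return [o for o in offers if not any(dominates(p, o) for p in offers)]
```

The filter narrows the choice but does not make it: picking one offer from the front still depends on how the delegator weighs cost, speed, and accuracy for the task at hand.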
