Pedagogically-Inspired Data Synthesis for Language Model Knowledge Distillation

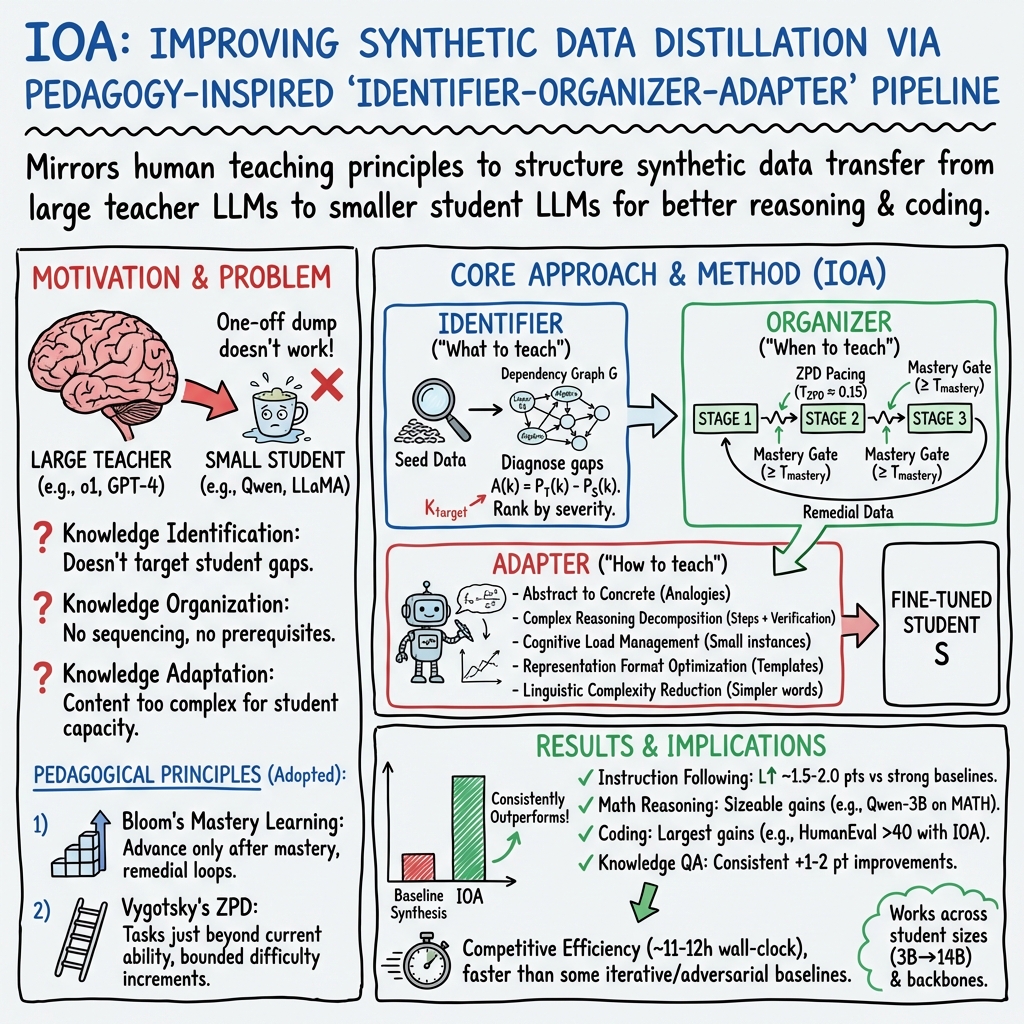

Abstract: Knowledge distillation from LLMs to smaller models has emerged as a critical technique for deploying efficient AI systems. However, current methods for distillation via synthetic data lack pedagogical awareness, treating knowledge transfer as a one-off data synthesis and training task rather than a systematic learning process. In this paper, we propose a novel pedagogically-inspired framework for LLM knowledge distillation that draws from fundamental educational principles. Our approach introduces a three-stage pipeline -- Knowledge Identifier, Organizer, and Adapter (IOA) -- that systematically identifies knowledge deficiencies in student models, organizes knowledge delivery through progressive curricula, and adapts representations to match the cognitive capacity of student models. We integrate Bloom's Mastery Learning Principles and Vygotsky's Zone of Proximal Development to create a dynamic distillation process where student models approach the teacher model's performance on prerequisite knowledge before advancing, and new knowledge is introduced with controlled, gradual difficulty increments. Extensive experiments using LLaMA-3.1/3.2 and Qwen2.5 as student models demonstrate that IOA achieves significant improvements over baseline distillation methods, with student models retaining 94.7% of teacher performance on DollyEval while using less than 1/10th of the parameters. Our framework particularly excels in complex reasoning tasks, showing 19.2% improvement on MATH and 22.3% on HumanEval compared with state-of-the-art baselines.

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging the IOA framework’s findings (deficiency diagnosis, dependency-aware curricula, mastery gating, and cognitively aligned data synthesis), which demonstrably transfer teacher LLM capabilities to smaller models with strong efficiency and performance (e.g., ~94.7% of teacher performance on DollyEval with <1/10th parameters; notable gains in math and code):

- Smaller, high-reasoning on-device assistants

- Sector: software, consumer devices, IoT

- Use case: Distill powerful LLMs into 3–8B SLMs for offline assistants on laptops/phones, preserving instruction-following and code/math reasoning for everyday tasks (summarization, scheduling, debugging small scripts).

- Tools/workflows: Integrate Identifier-Organizer-Adapter into MLOps; probe-based capability maps; DAG construction; mastery-gated fine-tuning; adapter prompt library.

- Assumptions/dependencies: Access to teacher outputs (API or open model); compact seed data with validation probes; licensing compliance; modest GPU training time (~11–12 hours as reported); quality seed data is more impactful than sheer quantity.

- Enterprise domain upskilling of SLMs

- Sector: healthcare, finance, legal, customer support

- Use case: Targeted distillation of domain-specific knowledge (terminology, compliance rules, workflows) to smaller models for secure in-house deployment; reduces hallucinations via prerequisite-aware curricula and progression controls.

- Tools/workflows: Knowledge module decomposition per domain; dependency graphs; mastery thresholds for deployment gating; synthetic data with representation templates and linguistic simplification.

- Assumptions/dependencies: Domain experts help structure knowledge modules; validated probes for critical capabilities; privacy-preserving synthetic generation; legal vetting of teacher outputs.

- Developer productivity assistants with improved code reasoning

- Sector: software engineering

- Use case: Distill code teacher models into small IDE copilots that perform better on tasks like HumanEval/MBPP; adapter-controlled step templates (plan, implement, test) for robust synthesis and fewer errors.

- Tools/workflows: Adapter’s standardized solution scaffolds; multi-step reasoning decomposition; intermediate verification filters; integration into CI for automated checks.

- Assumptions/dependencies: Access to code-specific teacher traces (black-box allowed); curated seed tasks; coverage of language/toolchains; careful mastery thresholds to avoid overfitting.

- Educational content generation aligned with pedagogy

- Sector: education

- Use case: Generate math/science exercises and explanations that concretize abstract concepts, decompose multi-step reasoning, and scaffold with consistent formats; usable for human learners and LLM tutors.

- Tools/workflows: Adapter’s analogies (e.g., derivative as speed), standardized step-by-step templates, difficulty pacing via ZPD threshold, mastery gating for curriculum progression.

- Assumptions/dependencies: Well-structured knowledge hierarchies; teacher outputs are pedagogically sound; alignment checks to ensure age/curriculum appropriateness.

- Privacy-preserving model customization

- Sector: policy/compliance, healthcare, finance

- Use case: Replace sensitive corpora with synthetic distillation data to reduce PII exposure while transferring capabilities; applies to internal QA and report-generation models.

- Tools/workflows: Synthetic-only pipelines; audit trails of data synthesis; DP-aware synthetic generation extensions.

- Assumptions/dependencies: Reliability of synthetic data quality; policy acceptance of synthetic data use; awareness of “model collapse” risks in recursive pretraining (the paper focuses on post-training distillation).

- Capability mapping and evaluation budgeting

- Sector: academia, ML operations

- Use case: Use the Identifier’s probe tasks and severity scoring to produce fine-grained capability maps of SLMs, allocate training/evaluation resources to the most critical gaps, and prioritize release gates.

- Tools/workflows: Probe design per module; automated severity scoring; dashboards tracking mastery progression.

- Assumptions/dependencies: Valid probes per knowledge unit; thoughtful severity weighting; maintenance of dependency graphs over time.

- Energy- and cost-efficient AI deployment

- Sector: energy, IT operations

- Use case: Replace large inference clusters with smaller mastered students, cutting inference costs and footprint while maintaining performance on targeted tasks.

- Tools/workflows: Distill-to-deploy pipeline; token-efficient representation adaptation; wall-clock monitoring and reporting.

- Assumptions/dependencies: Task targeting to avoid performance regressions; monitoring for drift; governance around energy reporting.
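
The capability-mapping use case above (probe tasks plus severity scoring) can be illustrated with a minimal sketch. This is not the paper's scoring formula: it assumes severity is a weighted, non-negative gap between teacher and student probe accuracy, and the `ProbeResult` fields, the `weight` parameter, and the example scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProbeResult:
    """Probe outcome for one knowledge module (all fields are illustrative)."""
    module: str
    teacher_score: float  # teacher accuracy on the probe set, in [0, 1]
    student_score: float  # student accuracy on the same probes, in [0, 1]
    weight: float = 1.0   # hypothetical importance weight for the module


def severity(result: ProbeResult) -> float:
    """Assumed severity: weighted teacher-student gap, floored at zero."""
    gap = max(0.0, result.teacher_score - result.student_score)
    return result.weight * gap


def rank_gaps(results: list[ProbeResult]) -> list[ProbeResult]:
    """Order modules from most to least severe deficiency."""
    return sorted(results, key=severity, reverse=True)


# Made-up probe scores, used only to exercise the functions.
results = [
    ProbeResult("arithmetic", teacher_score=0.95, student_score=0.90),
    ProbeResult("algebra", teacher_score=0.90, student_score=0.60, weight=1.5),
    ProbeResult("code_debugging", teacher_score=0.85, student_score=0.70),
]
ranked = [r.module for r in rank_gaps(results)]
```

With these made-up numbers, `algebra` ranks first because both its gap and its weight are largest. A ranking like this is what would feed a dashboard or decide where training and evaluation budget goes.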

Long-Term Applications

The following applications require further research, scaling, standardization, or cross-domain integration before broad deployment:

- Sector-scale knowledge DAGs and standardized curricula

- Sector: healthcare, law, engineering, public policy

- Use case: Build comprehensive, shared dependency graphs for entire professional domains to enable systematic, mastery-gated distillation and evaluation across organizations.

- Potential tools/products: “Knowledge DAG Builder,” standardized curriculum repositories, domain probe libraries.

- Assumptions/dependencies: Community consensus on knowledge decomposition; ongoing curation; governance over updates and versioning.

- Multi-teacher and adaptive teacher selection

- Sector: software, education, robotics

- Use case: Dynamically route synthesis to different teacher models (e.g., math, code, compliance) based on the student’s evolving gaps; ensemble distillation for broader capability coverage.

- Potential tools/products: “Teacher Router” services; ensemble distill orchestrators; policy for teacher licensing and provenance.

- Assumptions/dependencies: Inter-teacher consistency; API availability and cost; conflict resolution when teachers disagree.

- Human-in-the-loop mastery gating for safety-critical domains

- Sector: healthcare, aviation, finance, legal

- Use case: Augment automated mastery checks with expert review before student models advance to sensitive capabilities (diagnosis, trading decisions, legal drafting).

- Potential tools/products: Mastery Review Workbenches, audit trails, escalation protocols.

- Assumptions/dependencies: Expert availability; standardized safety criteria; regulatory approval.

- Personalized cognitive alignment for users and teams

- Sector: education, enterprise training, consumer apps

- Use case: Tailor knowledge representation and difficulty pacing to specific learner profiles or organizational skill distributions, enabling personalized LLM tutors and team upskilling.

- Potential tools/products: Cognitive profiles, adaptive adapter prompt libraries, progression analytics.

- Assumptions/dependencies: Ethical handling of user data; validated personalization strategies; mechanisms to avoid bias or inequity.

- Multimodal pedagogical distillation

- Sector: robotics, autonomous systems, healthcare imaging, media

- Use case: Extend IOA to vision/audio/action spaces (e.g., robotic task decomposition, medical image reasoning), combining multimodal dependencies and mastery criteria.

- Potential tools/products: Multimodal IOA SDKs, robotic task curricula, medical imaging probes.

- Assumptions/dependencies: Robust multimodal teachers; cross-modal dependency modeling; safety validation in real environments.

- Regulatory and standards adoption for efficient AI

- Sector: public policy, sustainability

- Use case: Codify mastery-gated distillation and synthetic data practices in guidelines (energy reporting, procurement standards, privacy-preserving customization).

- Potential tools/products: Compliance checklists; certification programs for pedagogical distillation pipelines.

- Assumptions/dependencies: Stakeholder alignment; evidence linking IOA-like methods to measurable energy/privacy benefits.

- Pretraining with pedagogically structured synthetic corpora

- Sector: foundational model research

- Use case: Use curriculum-aware synthetic data to mitigate model collapse risks and improve scaling efficiency beyond post-training; integrate reinforcement signals for staged mastery.

- Potential tools/products: Pedagogical pretraining datasets; curriculum schedulers; anti-collapse validators.

- Assumptions/dependencies: Further empirical validation across scales; careful balance of real vs synthetic data; safety and diversity safeguards.

- Safety alignment via prerequisite enforcement

- Sector: AI safety, compliance

- Use case: Enforce safety prerequisites (policy compliance, red-team resistance) as dependencies in curricula; gate model advancement until safety modules reach mastery.

- Potential tools/products: Safety DAGs; adversarial probe suites; progression gates tied to safety metrics.

- Assumptions/dependencies: High-quality safety probes; robust measurement; resilience against distribution shift.

These applications derive directly from IOA’s core innovations: knowledge deficiency identification, dependency-aware curriculum sequencing, bounded difficulty increments (ZPD), mastery-based progression, and representation adaptation (concretization, decomposition, cognitive load management, templating, linguistic simplification). Their feasibility hinges on access to teacher outputs, high-quality seed probes and domain decomposition, legal and privacy constraints, and careful hyperparameter tuning (e.g., T_ZPD ≈ 0.15, T_mastery ≈ 0.90 as effective starting points).
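
To make the interaction of dependency-aware sequencing, mastery gating, and the ZPD bound concrete, here is a minimal sketch of selecting which modules a student may train on next. It rests on assumptions not confirmed by the text above: mastery is taken to be the student-to-teacher performance ratio on a module, the ZPD threshold is taken to be the maximum allowed jump in a normalized difficulty score, and `next_modules`, `current_level`, and all example values are hypothetical. Only the two starting thresholds (about 0.15 and 0.90) come from the summary.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

T_MASTERY = 0.90  # assumed meaning: required student/teacher performance ratio
T_ZPD = 0.15      # assumed meaning: max allowed jump in normalized difficulty


def next_modules(deps, difficulty, mastery_ratio, current_level):
    """Return unmastered modules whose prerequisites are mastered and whose
    difficulty exceeds the student's current level by at most T_ZPD.

    deps maps each module to the set of its prerequisite modules.
    """
    eligible = []
    # static_order() yields prerequisites before the modules that need them.
    for module in TopologicalSorter(deps).static_order():
        if mastery_ratio.get(module, 0.0) >= T_MASTERY:
            continue  # already mastered, nothing to train
        prereqs_ok = all(
            mastery_ratio.get(p, 0.0) >= T_MASTERY for p in deps.get(module, ())
        )
        within_zpd = difficulty[module] - current_level <= T_ZPD
        if prereqs_ok and within_zpd:
            eligible.append(module)
    return eligible


# Hypothetical curriculum: module -> prerequisites, plus made-up difficulties.
deps = {
    "arithmetic": set(),
    "algebra": {"arithmetic"},
    "word_problems": {"arithmetic"},
    "calculus": {"algebra"},
}
difficulty = {"arithmetic": 0.2, "algebra": 0.4, "word_problems": 0.6, "calculus": 0.7}

# Arithmetic is mastered; algebra is in progress.
step1 = next_modules(deps, difficulty, {"arithmetic": 0.95, "algebra": 0.70}, 0.3)
# Later: algebra is mastered and the student's working level has risen.
step2 = next_modules(deps, difficulty, {"arithmetic": 0.95, "algebra": 0.92}, 0.5)
```

In the first call only `algebra` qualifies: `calculus` is blocked by an unmastered prerequisite, and `word_problems` is too large a difficulty jump. In the second call `word_problems` falls inside the difficulty bound, while `calculus` has its prerequisite met but is still too hard.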
