BEACONS: Bounded-Error, Algebraically-Composable Neural Solvers for Partial Differential Equations

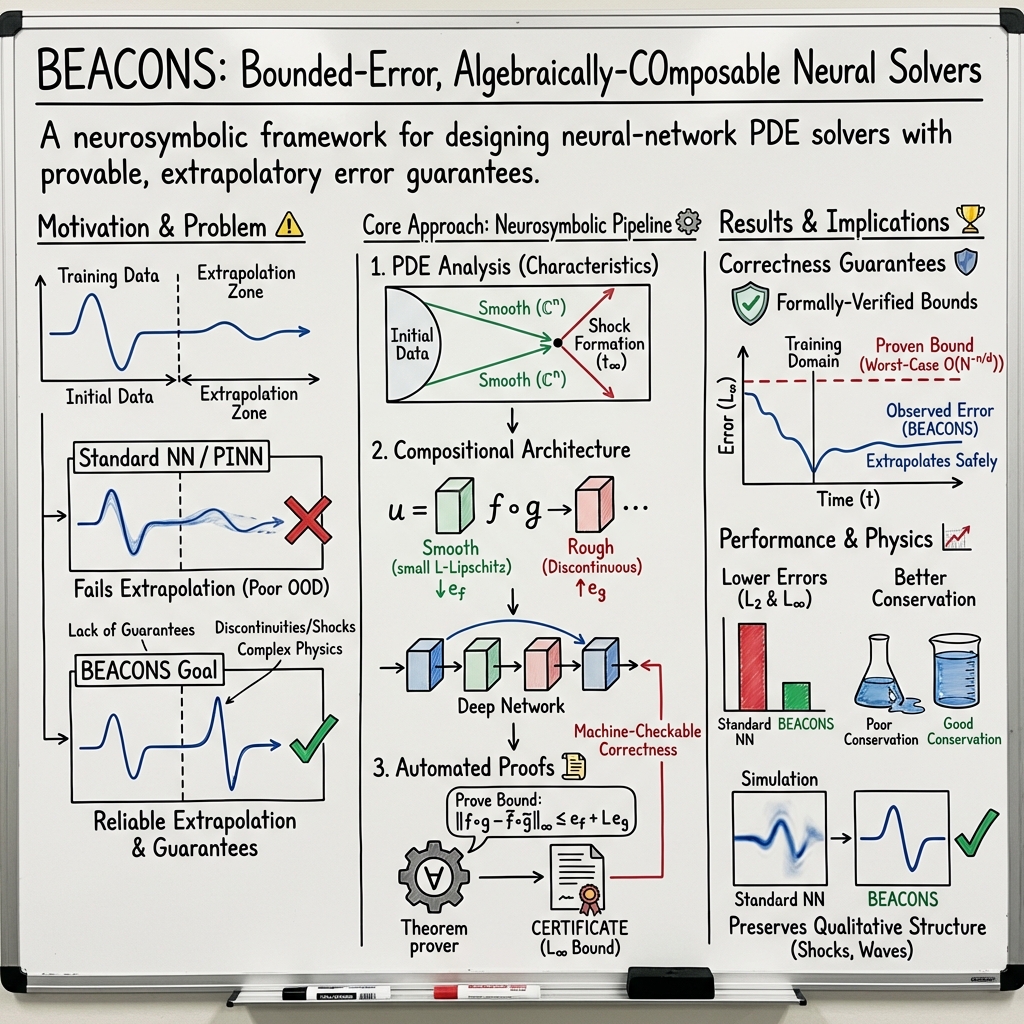

Abstract: The traditional limitations of neural networks in reliably generalizing beyond the convex hulls of their training data present a significant problem for computational physics, in which one often wishes to solve PDEs in regimes far beyond anything which can be experimentally or analytically validated. In this paper, we show how it is possible to circumvent these limitations by constructing formally-verified neural network solvers for PDEs, with rigorous convergence, stability, and conservation properties, whose correctness can therefore be guaranteed even in extrapolatory regimes. By using the method of characteristics to predict the analytical properties of PDE solutions a priori (even in regions arbitrarily far from the training domain), we show how it is possible to construct rigorous extrapolatory bounds on the worst-case L∞ errors of shallow neural network approximations. Then, by decomposing PDE solutions into compositions of simpler functions, we show how it is possible to compose these shallow neural networks together to form deep architectures, based on ideas from compositional deep learning, in which the large L∞ errors in the approximations have been suppressed. The resulting framework, called BEACONS (Bounded-Error, Algebraically-COmposable Neural Solvers), comprises both an automatic code-generator for the neural solvers themselves, as well as a bespoke automated theorem-proving system for producing machine-checkable certificates of correctness. We apply the framework to a variety of linear and non-linear PDEs, including the linear advection and inviscid Burgers' equations, as well as the full compressible Euler equations, in both 1D and 2D, and illustrate how BEACONS architectures are able to extrapolate solutions far beyond the training data in a reliable and bounded way. Various advantages of the approach over the classical PINN approach are discussed.

Explain it Like I'm 14

Overview

This paper introduces BEACONS, a new way to build neural networks that solve complex math problems called partial differential equations (PDEs). The big idea is to design neural networks that come with guaranteed limits on how wrong they can be, even when asked to predict things far beyond the data they were trained on. BEACONS also provides machine-checked proofs that these guarantees are actually true.

Key Objectives and Questions

Here are the simple questions the paper tries to answer:

- Can we make neural networks that solve PDEs reliably, even in situations they haven’t seen during training?

- Can we mathematically prove how accurate these neural networks will be, and keep the errors within known bounds?

- Can we build bigger, better networks by combining smaller ones in a way that keeps those error bounds under control?

- How does this approach compare to popular methods like Physics-Informed Neural Networks (PINNs)?

Methods and Approach

To understand the approach, it helps to break down a few ideas in everyday terms.

What are PDEs?

- PDEs are equations that describe how things change over space and time. Examples include modeling waves, fluid flow, weather, or traffic.

- Solving PDEs is hard because they can be very complex, and solutions can develop sharp changes like “shock waves” (think of a sudden traffic jam on a highway).

The usual problem with neural networks

- Neural networks are great at predicting things similar to what they’ve seen (interpolation), but often fail when asked to predict outside that range (extrapolation).

- Imagine you’ve only seen weather in spring and try to predict winter weather—the network might guess wildly wrong.

How BEACONS sets guaranteed error bounds

- BEACONS uses a mathematical technique called the “method of characteristics.” In simple terms, it tracks how information moves through space and time along paths, like following cars along lanes to see where traffic forms.

- This method can predict how smooth or bumpy the solution will be, even far away from the training data. That helps estimate the “worst-case error”, meaning the maximum difference between the neural network’s prediction and the true answer anywhere in the region. This kind of maximum difference is called the L-infinity (L∞) error.

- For simple, smooth parts of the solution, BEACONS uses known theorems to show that a shallow network (one hidden layer) can approximate the solution with a small worst-case error, as long as it has enough neurons.
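
As a rough illustration of the characteristics idea described above, the sketch below uses two textbook facts: the linear advection equation u_t + a u_x = 0 simply carries the starting profile along lines x - a t = constant, and for the inviscid Burgers' equation u_t + u u_x = 0 the characteristic lines first cross (a shock forms) at time -1 / min u0'(x). The function names are hypothetical and chosen for this example; this is not the BEACONS code.

```python
import math

def advect_exact(u0, a, x, t):
    """Exact solution of u_t + a u_x = 0: the initial profile u0 shifted by a*t."""
    return u0(x - a * t)

def burgers_breaking_time(u0_prime, xs):
    """Estimate when characteristics of u_t + u u_x = 0 first cross.

    Characteristics are the lines x = x0 + u0(x0) * t. They first cross at
    t_b = -1 / min u0'(x0) if the slope u0' is negative somewhere.
    The minimum is only estimated on the sample points xs.
    """
    steepest = min(u0_prime(x) for x in xs)
    return math.inf if steepest >= 0.0 else -1.0 / steepest

def linf_error(approx, exact, xs):
    """Worst-case (L-infinity) error over the sample points xs."""
    return max(abs(approx(x) - exact(x)) for x in xs)

# Sine-wave starting profile: its steepest downward slope is -1,
# so the solution stays smooth until roughly t = 1.
xs = [2.0 * math.pi * i / 200 for i in range(201)]
t_break = burgers_breaking_time(math.cos, xs)
```

Before the breaking time the solution is as smooth as the starting profile, which is the kind of a priori information a worst-case error bound can build on, even at points far from any training sample.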

Building bigger networks from smaller ones (like Lego blocks)

- Some parts of PDE solutions are smooth and easy to approximate; others can be sharp or discontinuous (like shock waves), and are much harder.

- BEACONS composes networks: it builds small networks for the smooth parts and combines them with networks for the tricky parts. The smooth parts help “limit” the errors from the discontinuous parts—much like adding shock absorbers to a bike to smooth out the bumps.

- This is inspired by a classic trick in numerical methods called “flux limiters,” but now done with neural networks.
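
The error-limiting effect of composition can be sketched with a standard triangle-inequality estimate (not necessarily the exact theorem proved in the paper): if the outer function f has Lipschitz constant L, its approximation has worst-case error eps_f, and the inner approximation has worst-case error eps_g, then the composed error is at most eps_f + L * eps_g. All functions and constants below are hypothetical, picked so that each quantity is known exactly.

```python
import math

def composed_error_bound(eps_outer, lipschitz_outer, eps_inner):
    """Upper bound on |f_approx(g_approx(x)) - f(g(x))| for every x."""
    return eps_outer + lipschitz_outer * eps_inner

def g(x):
    """Discontinuous inner function (a step, standing in for a shock)."""
    return 1.0 if x >= 0.5 else 0.0

def g_approx(x):
    """Deliberately poor approximation of g: worst-case error 0.2."""
    return g(x) + 0.2 * math.sin(40.0 * x)

def f(y):
    """Smooth outer function with Lipschitz constant 0.1."""
    return 0.1 * math.tanh(y)

def f_approx(y):
    """Good approximation of f: worst-case error 0.001."""
    return f(y) + 0.001 * math.cos(y)

xs = [i / 1000.0 for i in range(1001)]
observed = max(abs(f_approx(g_approx(x)) - f(g(x))) for x in xs)
bound = composed_error_bound(0.001, 0.1, 0.2)
```

Here the inner error of 0.2 can contribute at most 0.02 to the final answer, because the outer function has Lipschitz constant 0.1; the smoother the outer piece, the more the inner error is damped.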

Software and formal proofs

- BEACONS isn’t just theory; it’s a complete software framework:

  - A small language (DSL) written in Racket to describe PDEs and neural network architectures.

  - An automatic code generator that produces efficient C code to build, train, and test the networks.

  - An automated theorem prover that creates machine-checkable proofs showing the error bounds are correct and that the network follows important physical rules (like conservation of mass or energy). These proofs respect the rules of computer arithmetic (IEEE-754), so they match what the computer actually does.
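
To see why a proof has to account for computer arithmetic, note that floating-point sums are rounded, so a bound that holds for real numbers may be off by a tiny amount on a machine. The sketch below shows one common way to stay safe, outward rounding, and is only an illustration of the idea; it is not the BEACONS theorem prover, and the helper names are hypothetical. It relies on `math.nextafter`, available in Python 3.9 and later.

```python
import math
from fractions import Fraction

def add_outward(a, b):
    """Return floats (lo, hi) that bracket the exact real sum a + b.

    IEEE-754 addition rounds to the nearest float, so the exact sum lies
    between the floats just below and just above the rounded result.
    """
    s = a + b
    return math.nextafter(s, -math.inf), math.nextafter(s, math.inf)

def brackets_exact_sum(a, b):
    """Check the bracket using exact rational arithmetic."""
    lo, hi = add_outward(a, b)
    exact = Fraction(a) + Fraction(b)
    return Fraction(lo) <= exact <= Fraction(hi)

rounded_sum_differs = (0.1 + 0.2 != 0.3)  # the classic rounding surprise
```

Carrying such brackets through every operation gives bounds that remain true for the numbers the computer actually produces, at the cost of slightly wider intervals.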

Main Findings and Why They Matter

The authors tested BEACONS on several well-known PDEs:

- Linear advection (describes quantities flowing at a constant speed).

- Inviscid Burgers’ equation (often used to model shock waves).

- Compressible Euler equations (a system describing fluid dynamics with density, momentum, and energy), in both 1D and 2D.

They compared BEACONS to ordinary neural networks of similar size and found:

- Lower errors overall (both average error, called L², and worst-case error, called L∞).

- Better conservation of physical quantities over time.

- More stable and reliable behavior, especially in higher dimensions.

- Crucially, the actual errors stayed within the mathematically proven bounds.
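
The quantities compared above can be measured on a uniform grid in a few lines. This is a minimal sketch with hypothetical function names and a simple Riemann-sum discretization; the paper's own evaluation code may define these metrics differently.

```python
import math

def l2_error(approx, exact, dx):
    """Discrete L2 error: square root of the summed squared differences times dx."""
    return math.sqrt(sum((a - e) ** 2 for a, e in zip(approx, exact)) * dx)

def max_abs_error(approx, exact):
    """Discrete L-infinity error: the single largest pointwise difference."""
    return max(abs(a - e) for a, e in zip(approx, exact))

def conservation_drift(snapshots, dx):
    """Largest change in the total amount (sum of u times dx) relative to the first snapshot."""
    totals = [sum(u) * dx for u in snapshots]
    return max(abs(total - totals[0]) for total in totals)

# Tiny made-up example: one bad point dominates the worst-case error.
exact = [0.0, 0.0, 0.0, 0.0]
approx = [0.5, 0.0, -1.0, 0.0]
avg_like = l2_error(approx, exact, dx=0.25)
worst = max_abs_error(approx, exact)
```

A certified bound is respected when `worst` stays below the proven limit, and a conserved quantity such as mass is preserved when the drift stays near zero across all saved time steps.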

This shows BEACONS can reliably handle situations far beyond the training data. That’s a big deal for scientific simulations that often explore new, extreme conditions where we don’t have experiments to check against.

Implications and Impact

- Trustworthy AI for science: BEACONS helps make neural networks more reliable for physics and engineering problems by giving them built-in error guarantees and formal proofs.

- Better extrapolation: Instead of hoping a network will generalize, BEACONS provides mathematical reasons why and how far it can.

- Practical integration: The framework can plug into existing simulation tools (like Gkeyll), and its training data can come from fully verified solvers, enabling end-to-end verification.

- Beyond PINNs: Unlike traditional PINNs—which restrict the training to keep solutions “physically valid”—BEACONS allows the network to explore freely during training but ensures it ends up near a valid solution, thanks to its compositional design and proven error bounds.

- Future directions: Extending BEACONS to more complex PDEs, bigger systems, and faster training could make neural networks a standard, trustworthy tool in scientific computing.

In short, BEACONS shows how to build neural networks for PDEs that are not just accurate, but provably reliable—even when pushed far beyond their training data.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of concrete gaps and open questions left unresolved by the paper; each item is phrased to suggest actionable directions for future work.

- Activation/architecture scope: The theory and certificates rely on smooth (C∞) activations and shallow MLPs; it is unclear how to extend the guarantees to common non-smooth activations (e.g., ReLU), convolutional/attention layers, spectral layers, or operator-learning architectures (e.g., FNO/DeepONet).

- Curse of dimensionality in bounds: The worst-case L∞ approximation rate O(N^{-n/d}) inherits the curse of dimensionality; strategies to mitigate this for high-dimensional spatiotemporal settings (3D space + time, parametric inputs) are not provided.

- Extrapolatory smoothness in complex settings: The method-of-characteristics-based smoothness predictions are developed primarily for 1D conservation laws without source terms; extensions to multi-D characteristics, variable coefficients, stiff source terms, dispersive terms, and complex geometries are not shown.

- Discontinuity handling constructiveness: The algebraic-composability result assumes a decomposition into a smooth, low-Lipschitz function composed with a discontinuous function; there is no general, constructive algorithm to discover such decompositions from data or PDE structure, especially in multiple dimensions or for vector systems.

- Lipschitz control and certification: Error suppression depends on having a small Lipschitz constant for the “smooth” subnet; methods to train for, certify, and tightly bound the Lipschitz constant of learned subcomponents (e.g., via spectral norm control) are not specified.

- Optimization vs approximation gap: Guarantees are asymptotic and approximation-theoretic (infinite data/epochs); there are no finite-sample, finite-epoch optimization guarantees that gradient descent will reach the regimes required by the certificates.

- Weighting and loss design: The selection and adaptation of λ_PDE, λ_BC, and the residual weight matrix W are heuristic; principled, certified procedures for choosing/tuning these weights and analyzing sensitivity are missing.

- Domain generality: Theory and normalization are set on I^d; handling irregular/moving/curved domains, mixed boundary types (e.g., inflow–outflow, partially periodic), and the impact of domain mappings on certified constants are not addressed.

- Conservation/entropy guarantees post-shock: While conservation and stability are emphasized, a precise, general mechanism for certifying entropy stability and admissibility across shocks (especially for multi-D Euler) is not fully formalized.

- Conditional verification reliance: For Euler experiments, certificates are conditional on unverified training data; there is no method to remove this dependency or to bound the propagation of training-data errors into the neural certificates.

- Scalability of formal verification: The computational complexity and scaling of the automated theorem prover and bound-generation (with network size, depth, and dimension) are not quantified; practical limits and performance trade-offs remain unknown.

- Composition calculus breadth: Error-propagation rules are given for serial compositions; a general calculus covering residual/skip connections, branching/parallel composition, and attention-style mixing is not provided.

- Sampling strategy and adaptivity: Interior/boundary sampling is fixed; there is no adaptive, goal-oriented, or certified sampling policy to tighten bounds or efficiently capture rare events and discontinuities.

- Numerical precision and nondeterminism: Although proofs respect IEEE-754, the impact of mixed-precision training, GPU nondeterminism, parallel reductions, and compiler optimizations on the validity of per-network certificates is not analyzed.

- Beyond hyperbolic PDEs: Applicability to parabolic/elliptic equations, nonlocal operators, and multi-physics systems with stiff source terms (reaction–diffusion–advection) is left open.

- Operator-level generalization: The framework targets solution learning for fixed setups; certified operator learning across families of initial/boundary conditions and parameters (with quantified generalization) is not developed.

- Uncertainty quantification: Only worst-case deterministic bounds are offered; probabilistic UQ, Bayesian certification, and handling of stochastic PDEs or noisy training data are not considered.

- Baseline breadth: Empirical comparisons are to standard feed-forward NNs; benchmarking against strong PDE ML baselines (advanced PINN variants, DeepONet, FNO, neural Galerkin) is missing, leaving relative performance unquantified.

- Multi-D shock topology: Theoretical treatment of interacting shocks, contacts, and vortical structures in 2D/3D (including topology changes) under the compositional framework is not provided.

- Automatic discovery of structure: There is no automated procedure (e.g., program synthesis/structure learning) to infer effective compositional architectures from a PDE specification or data.

- Long-time extrapolation theory: Rigorous bounds on long-time error growth and stability (especially for chaotic or turbulent regimes) are not established.

- Physical scaling/normalization: The effect of physical units, scaling, and normalization on bound constants and training stability is not discussed; guidelines for unit-consistent preprocessing are absent.

- Certificate granularity: It is unclear whether certificates bind the full trained network over the entire domain or only per-layer worst-case bounds; methods for localized, tighter regional certificates are not provided.

- Sample complexity: There is no analysis of how many residual/boundary samples are required to achieve a target certified error, nor active-learning strategies to minimize data for a desired certificate.

- Hybridization with classical solvers: How BEACONS architectures can be embedded in traditional schemes (e.g., as flux limiters/closures/preconditioners) with end-to-end certificates and measurable runtime gains remains unexplored.
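
The curse-of-dimensionality item in the list above can be made concrete by inverting the rate. Assuming the worst-case error behaves like C * N^(-n/d), with N the number of neurons, d the input dimension, and n read as the smoothness order (an interpretation borrowed from classical approximation results, not confirmed by this summary), the width needed for a target error eps is about (C / eps)^(d/n). The constant C is unknown in practice and is set to 1 here purely for illustration.

```python
import math

def neurons_for_target(eps, smoothness_n, dim_d, constant=1.0):
    """Smallest N with constant * N**(-n/d) <= eps, under the assumed rate."""
    return math.ceil((constant / eps) ** (dim_d / smoothness_n))

# Same target error and smoothness, growing input dimension.
widths = [neurons_for_target(0.5, 1, d) for d in (1, 3, 6)]
```

The required width grows exponentially with the dimension d for fixed smoothness, which is why higher-dimensional problems are listed as an open challenge.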

Glossary

- algebraic composability: Designing deep models by composing simpler modules so that their individual error bounds combine controllably. "the idea behind algebraic composability is to assemble deeper, more expressive neural network architectures by composing together shallower, more tractable ones"

- applied category theory: The use of category-theoretic abstractions to reason about compositional structures in applied settings (e.g., machine learning). "applied category theory"

- automated theorem-proving: Software-supported generation and checking of formal mathematical proofs. "an automated theorem-proving framework"

- boundary operator: An operator specifying how a solution behaves on the boundary of the domain (encodes boundary conditions). "boundary operator"

- characteristic lines: Curves (often straight lines for simple hyperbolic PDEs) along which solution values are transported and remain constant. "characteristic lines intersect"

- compressible Euler equations: A system of hyperbolic PDEs modeling inviscid, compressible fluid flow (mass, momentum, and energy conservation). "compressible Euler equations"

- conservation law form: A PDE formulation expressing time evolution of a conserved quantity via divergence of a flux. "conservation law form"

- conservation laws: Fundamental invariants (e.g., mass, momentum, energy) that must be preserved by physical and numerical systems. "enforcement of conservation laws to machine precision"

- convex entropy functionals: Convex scalar functionals of state variables ensuring thermodynamic admissibility and stability of solutions. "convex entropy functionals"

- convex hull: The smallest convex set containing a given set of points (e.g., training samples). "convex hull of their training sets"

- Dirichlet boundary conditions: Boundary conditions that prescribe the value of the solution on the boundary. "Dirichlet initial or boundary conditions"

- discontinuous Galerkin method: A finite element technique using piecewise-polynomial basis functions that may be discontinuous across element boundaries. "discontinuous Galerkin method"

- eigenstructure: The collection of eigenvalues and eigenvectors (here, of flux Jacobians) governing wave speeds and characteristic directions. "eigenstructure of their flux Jacobians"

- finite element (method): A discretization approach approximating solutions with basis functions on a mesh of elements. "finite element"

- finite volume method: A discretization that enforces conservation by integrating fluxes over control volumes (cells). "finite volume method"

- flux function: A mapping from state variables to fluxes in conservation-law PDEs. "flux function"

- flux Jacobians: Jacobian matrices of flux functions with respect to the state; their eigenstructure determines wave propagation. "flux Jacobians"

- flux limiters: Nonlinear limiters used to prevent spurious oscillations near discontinuities in numerical schemes. "flux limiters"

- foundation model (FM): A large-scale pretrained model whose broad training data enables strong generalization. "foundation model (FM) architectures"

- Gibbs and Runge phenomena: Oscillatory artifacts in Fourier and high-degree polynomial interpolation near discontinuities or interval endpoints. "the Gibbs and Runge phenomena"

- hyperbolic PDEs: Partial differential equations characterized by wave-like propagation and finite-speed characteristics. "hyperbolic PDEs"

- IEEE-754 standard: The widely adopted standard defining formats and semantics for floating-point arithmetic. "IEEE-754 standard for floating-point arithmetic"

- inviscid Burgers' equations: Nonlinear conservation-law PDEs without viscosity, often used to study shock formation. "inviscid Burgers' equations"

- Kolmogorov complexity: The length of the shortest program that outputs a given object; a formal measure of algorithmic simplicity. "algorithmic/Kolmogorov complexity"

- Lipschitz constant: A bound L such that a function’s change is at most L times the change in its input; it limits how rapidly the function can vary. "Lipschitz constant"

- L² stability: A property ensuring the squared-integral (energy) norm of numerical solutions does not grow without bound. "guaranteed stability"

- L∞-norm: The supremum (maximum absolute value) norm over a domain; measures worst-case error. "standard L∞-norm:"

- machine-checkable certificates of correctness: Formal proof artifacts that can be verified by software to certify properties of models or solvers. "machine-checkable certificates of correctness"

- method of characteristics: A technique for solving hyperbolic PDEs by reducing them to ODEs along characteristic curves. "method of characteristics"

- multi-layer perceptron: A feed-forward neural network consisting of stacked linear layers and nonlinear activations. "multi-layer perceptron"

- Occam's razor hypothesis: The conjecture that learning systems prefer simpler functions among those that fit the data. "the Occam's razor hypothesis"

- Out-of-Distribution (OOD): Data or inputs that do not follow the training distribution, often leading to degraded performance. "Out-of-Domain or Out-of-Distribution (OOD) cases"

- PDE residual: The discrepancy obtained by applying the PDE operator to a candidate solution and subtracting sources; used in loss functions. "PDE residual"

- Physics-Informed Neural Network (PINN): A neural approach incorporating PDE residuals and boundary conditions into the loss to enforce physical laws. "physics-informed neural network (PINN)"

- positive semi-definite: A matrix property implying all quadratic forms are nonnegative, ensuring well-defined weighted norms. "symmetric and positive semi-definite"

- shock wave: A propagating discontinuity in a solution to a hyperbolic conservation law. "shock wave"

- Sobolev space: A function space that includes functions with square-integrable derivatives up to a given order. "Sobolev space"

- space-time boundary conditions: A unified view of initial and spatial boundary conditions as constraints on the space-time domain boundary. "space-time boundary conditions"

- strict hyperbolicity: A property of systems whose characteristic speeds are real and distinct, ensuring well-posed wave propagation. "strict hyperbolicity preservation"

- total variation diminishing: A property of numerical schemes that do not increase the total variation of the solution, helping prevent oscillations. "total variation diminishing"

- vector of differential operators: A tuple of operators acting on vector-valued fields in coupled PDE systems. "vector of differential operators"

- weight matrix: A matrix defining weighted norms in residual losses to balance and couple different equation components. "weight matrix W ∈ ℝ^{m × m}"
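
Two of the glossary entries above, flux limiters and total variation, are easy to show in code. The sketch below implements the classical minmod limiter, one well-known limiter from numerical methods; the paper's neural generalization of limiters is not reproduced here, and the function names are chosen for this example.

```python
def total_variation(u):
    """Sum of absolute jumps between neighbouring values."""
    return sum(abs(b - a) for a, b in zip(u, u[1:]))

def minmod(a, b):
    """Pick the smaller-magnitude slope, or 0 if the slopes disagree in sign."""
    if a * b <= 0.0:
        return 0.0
    return a if abs(a) < abs(b) else b

def limited_slopes(u):
    """Minmod-limited slope in each interior cell (end cells are left at 0)."""
    slopes = [0.0] * len(u)
    for i in range(1, len(u) - 1):
        slopes[i] = minmod(u[i] - u[i - 1], u[i + 1] - u[i])
    return slopes

step = [0.0, 0.0, 1.0, 1.0]   # a sharp jump, like a shock
ramp = [0.0, 1.0, 3.0, 4.0]   # a smooth rise
```

Near the jump in `step` the limiter returns zero slopes, so no overshoot is introduced, while on `ramp` it keeps the gentler of the two neighbouring slopes.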

Practical Applications

Overview

Below are practical, real-world applications that follow directly from the paper’s findings and software artifacts (bounded extrapolatory error guarantees for NN PDE solvers, algebraic composability, automatic code generation, and machine-checkable certificates). Each item notes sector relevance, plausible tools/workflows, and key assumptions/dependencies.

Immediate Applications

- Certified NN surrogates for hyperbolic PDE solvers (sector: aerospace, automotive, energy, plasma physics, CFD)

  - Use BEACONS to replace or augment costly finite-volume/finite-element solvers with fast, bounded-error neural surrogates for 1D/2D linear advection, inviscid Burgers, and compressible Euler equations.

  - Workflow: generate formally verified training data via gkylcas; synthesize C code with BEACONS; attach machine-checkable L∞ error certificates; deploy inside Gkeyll or as standalone libraries.

  - Assumptions/dependencies: underlying PDE is hyperbolic; flux functions are sufficiently smooth; training data is trustworthy (ideally formally verified); IEEE-754 compliance in proof pipeline.

- Extrapolation-safe simulation components (sector: aerospace flight dynamics, propulsion, atmospheric entry)

  - Replace PINN-style modules with BEACONS components that maintain bounded L∞ error when extrapolating beyond trained time ranges or operating conditions (e.g., off-design Mach numbers, shock formation).

  - Tools/products: “BEACONS-certified” shock-capturing modules; compositional architectures that generalize flux limiters.

  - Assumptions: valid characteristic analysis; compositional layer bounds calibrated; source terms either absent or explicitly modeled.

- Bounded-error digital twins for operational monitoring (sector: energy, process industries, HVAC)

  - Embed BEACONS surrogates that can run in real time with certificates, enabling robust predictions in OOD regimes (transients, startup/shutdown, fault conditions).

  - Workflows: plant OPS dashboards display both predictions and certified bounds; CI/CD pipelines generate new certificates upon model updates.

  - Assumptions: target subsystems governed (primarily) by hyperbolic PDEs; training coverage for typical operating regimes; acceptable bound sizes for decision-making horizons.

- Risk-aware simulation for policy and compliance (sector: nuclear safety, aerospace certification, environmental modeling)

  - Provide auditors/regulators with machine-checkable error certificates attached to simulation results used in safety cases or environmental impact assessments.

  - Products: “certificate-as-artifact” packages accompanying reports; verifiable proof-of-correctness bundles in procurement.

  - Assumptions: regulator acceptance of formal methods artifacts; traceable provenance (training data, code versioning).

- Robust parameter sweeps and design-space exploration (sector: R&D across academia and industry)

  - Use BEACONS surrogates to accelerate large parameter sweeps (geometry, boundary conditions) with guaranteed maximum error over ranges, reducing need for full-scale reruns.

  - Workflow: automatic layer-level error budgeting; architecture search guided by algebraic composability rules.

  - Assumptions: bound tightness adequate for screening; PDE residual weighting is appropriately tuned.

- Education and reproducible pedagogy (sector: education)

  - Teach PDEs, characteristics, numerical methods, and formal verification by running the open-source BEACONS pipeline end-to-end; students inspect generated proofs and test extrapolation behavior.

  - Tools: Racket DSL exercises; theorem prover insights; side-by-side comparisons vs PINNs.

  - Assumptions: curricular time for formal methods; availability of compute for training and proof generation.

- Continuous integration for scientific software (sector: software/simulation tooling)

  - Integrate BEACONS code generation and certificates in CI pipelines to fail builds when layer bounds or conservation checks regress.

  - Products: CI plugins for bound regression detection; dashboarding of L∞ and conservation metrics across commits.

  - Assumptions: stable HPC/dev environment; standardized metrics (e.g., weighted norms, conservation errors).

- Real-time controllers needing PDE-based estimates (sector: robotics, UAVs with flow interactions; environmental sensing)

  - Deploy bounded-error flow/transport estimators for guidance in environments with wave/advection phenomena (e.g., plume tracking, wind-aware pathing).

  - Workflow: edge-deploy small C artifacts; monitor runtime bound to switch strategies under high uncertainty.

  - Assumptions: system dynamics reducible to hyperbolic components; bounds small enough for control stability.

- Model audit and comparison against classical solvers (sector: CFD verification/validation)

  - Use BEACONS to benchmark and certify NN approximations against formally verified solvers; quantify stability and conservation adherence over time.

  - Tools: automated conservation analysis; pointwise and time-integrated L²/L∞ comparisons.

  - Assumptions: availability of verified baseline; compatible boundary conditions.

- Open-source toolchains and standardization (sector: software tooling, academic labs)

  - Immediate reuse of the Racket DSL, C code generator, and theorem prover to stand up new PDE solver NNs with certificates.

  - Assumptions: team familiarity with Racket/C; adoption of gkylcas/Gkeyll integration.
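
The continuous-integration idea in the list above (failing a build when bounds or conservation checks regress) could look roughly like the following. This is a hypothetical sketch: the metric names, the dictionary format, and the tolerance parameter are assumptions made for illustration, not part of any BEACONS tooling.

```python
def find_regressions(baseline, current, rel_tol=0.0):
    """Names of metrics that are worse (larger) than the stored baseline.

    Both arguments map a metric name to a non-negative value where smaller
    is better. A metric missing from `current` counts as a regression.
    """
    worse = []
    for name, old in sorted(baseline.items()):
        new = current.get(name)
        if new is None or new > old * (1.0 + rel_tol):
            worse.append(name)
    return worse

# Hypothetical metrics recorded for the last accepted commit and the new one.
baseline = {"linf_error": 0.02, "mass_drift": 1e-12}
current = {"linf_error": 0.03, "mass_drift": 1e-12}
failed = find_regressions(baseline, current)
```

A CI job would exit with a non-zero status whenever `failed` is non-empty, so a looser bound can never be merged unnoticed.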

Long-Term Applications

- Certified digital twins at plant scale (sector: energy, chemical processing, aerospace systems)

  - Extend BEACONS to multi-physics and higher-dimensional systems (e.g., coupled compressible flow + combustion + structures), enabling plant-wide digital twins with end-to-end certificates for control and safety.

  - Products: “BEACONS Studio” for model assembly, compositional error budgeting, and deployment.

  - Assumptions/dependencies: extension to PDEs with source terms, stiffness, and complex coupling; scalable theorem proving; parallel training/inference.

- Physics foundation models with formal guarantees (sector: cross-domain scientific computing)

  - Pretrain compositional architectures across families of PDEs, offering reusable certified building blocks; shift from data diversity to provable structure-driven generalization.

  - Workflows: library of certified layers/operators; task-specific compositions with bound tracking.

  - Assumptions: scalable certification across many operators; efficient bound transfer during fine-tuning.

- Safety-critical control under uncertainty (sector: aerospace, autonomous vehicles, fusion/plasma)

  - Use BEACONS bounds in model predictive control (MPC) to ensure robust constraints when the solver extrapolates (e.g., during off-nominal aerothermal events or plasma transients).

  - Products: controllers that incorporate bound-aware decision logic; runtime certification monitors.

  - Assumptions: bound magnitudes compatible with control tolerances; tight real-time performance.

- Climate and weather modeling modules (sector: climate science, meteorology)

  - Integrate certified advection components and shock-aware transport schemes into larger models for more reliable OOD behavior in extreme events (e.g., tropical cyclone intensification).

  - Workflows: hybrid classical–BEACONS modules; uncertainty-aware assimilation pipelines.

  - Assumptions: coupling with parabolic/elliptic components handled; acceptance in community codes.

- Healthcare and biomedical flow simulation (sector: healthcare)

  - Long-term application to certified solvers for cardiovascular flows (compressible/incompressible regimes, wave propagation in arteries) and ultrasound wave modeling.

  - Products: clinical decision-support with bounded simulation error; regulatory-ready documentation.

  - Assumptions: adaptation to relevant PDE classes (often mixed types); validation against experimental data; regulatory pathways.

- Finance and risk modeling via PDEs (sector: finance)

  - Extend to parabolic PDEs (e.g., Black–Scholes variants) with composability and certification; provide bounded-error pricing/hedging tools.

  - Workflows: certified quantitative libraries; auditing trails for compliance.

  - Assumptions: method generalized beyond hyperbolic PDEs; acceptable runtime overhead for trading systems.

- Robotics path planning via Hamilton–Jacobi/Eikonal PDEs (sector: robotics)

  - Certified solvers for wavefront propagation and value functions in navigation (hyperbolic/HJ PDEs), yielding safer OOD performance in novel terrains.

  - Products: navigation stacks with certified PDE modules; safety case integration.

  - Assumptions: formalization of HJ/PDE residuals; Lipschitz-aware composition calibrated.

- Standards and policy frameworks for verified ML in simulation (sector: policy/regulation)

  - Establish standards for “formally certified ML solvers,” including documentation, proof artifacts, and conservation audits; influence procurement and validation criteria.

  - Products: certification guidelines; benchmarking suites; regulator toolkits.

  - Assumptions: multi-stakeholder buy-in; education on formal verification.

- Edge deployment on constrained hardware (sector: embedded/IoT)

  - Optimize BEACONS-generated C artifacts for microcontrollers in safety systems (pipeline monitoring, industrial valves), leveraging small, compositional architectures with tracked bounds.

  - Workflows: quantization with bound preservation; code-level proofs embedded in firmware.

  - Assumptions: activation functions and arithmetic compatible with quantization; proof stability under fixed-point arithmetic.

- Automated architecture search guided by compositional bounds (sector: software/ML tooling)

  - Use the algebraic composability theorem to drive neural architecture search (NAS) that optimizes error budgets and stability, not just empirical metrics, for complex PDE targets.

  - Products: NAS engines with bound-aware objectives; design space explorers for PDE solvers.

  - Assumptions: efficient estimation of Lipschitz constants; robust bound aggregation across layers.
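
The bound-aware decision logic mentioned in the list above can be illustrated with a very small check: a prediction is only trusted if every value consistent with its certified error bound stays inside the allowed range. The function names and the fallback label are hypothetical; a real controller would apply the same tightening to each constraint in its optimization problem.

```python
def within_limits(prediction, error_bound, lower, upper):
    """True if the whole interval prediction +/- error_bound lies in [lower, upper]."""
    return lower <= prediction - error_bound and prediction + error_bound <= upper

def select_action(prediction, error_bound, lower, upper):
    """Use the fast surrogate only when the certificate keeps it inside the limits."""
    if within_limits(prediction, error_bound, lower, upper):
        return "use_surrogate"
    return "fallback"

# Same prediction, two different certified bounds.
tight = select_action(1.0, 0.25, 0.0, 1.5)
loose = select_action(1.0, 0.75, 0.0, 1.5)
```

With the tight bound the worst case is 1.25 and the surrogate is accepted; with the loose bound the worst case is 1.75, which exceeds the limit, so the fallback is chosen.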

Cross-cutting assumptions/dependencies

- Applicability is strongest for hyperbolic PDEs; extensions to parabolic/elliptic/mixed systems will require new analysis and certification rules.

- Error guarantees depend on smooth activation functions and on characteristic-based smoothness predictions (initial data and flux properties must be known).

- Bound tightness scales with network width/depth and the dimensionality of the PDE; practical feasibility relies on compositional designs that suppress large errors via smoother components.

- Certified training is most robust when training data comes from formally verified solvers; otherwise bounds are conditional on solver correctness.

- Real-world adoption may require integration into existing HPC stacks, acceptance of formal proofs by stakeholders, and performance engineering for large-scale or real-time use.
