- The paper introduces a deep learning-based markerless detection and 6D pose estimation approach that overcomes the limitations of traditional fiducial marker methods.

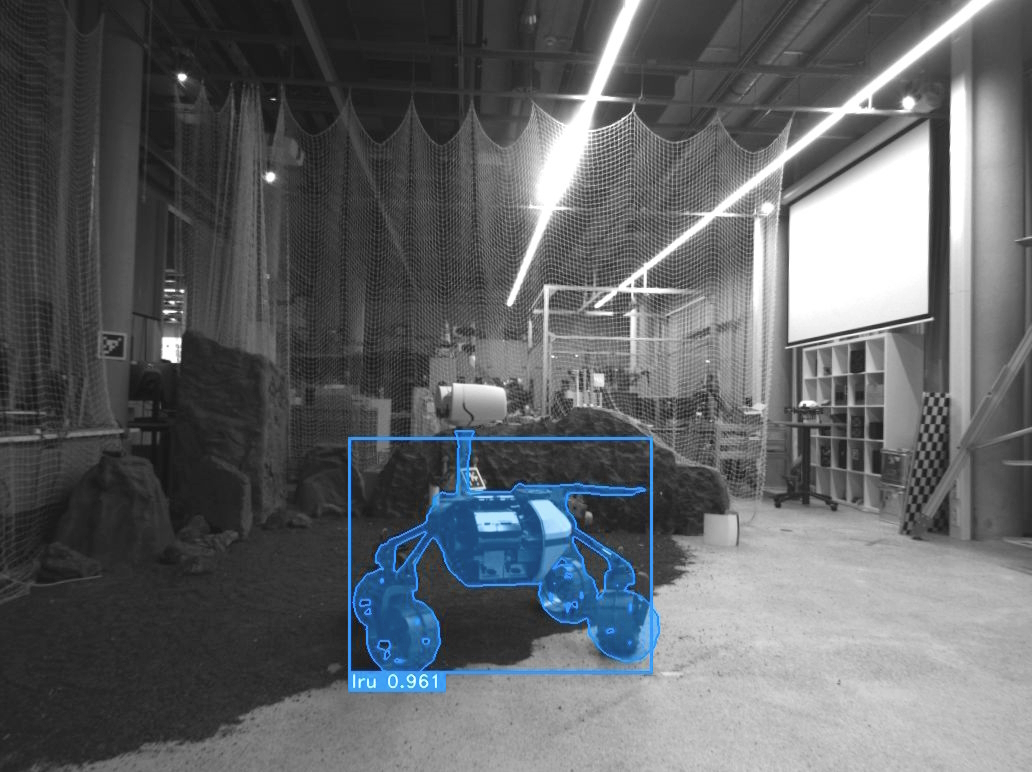

- It combines the YOLO v7 object detector, a transformer-based pose estimation network, and stereo visual inputs to improve data association and localization in decentralized multi-robot SLAM systems.

- Experimental validations in synthetic and real-world planetary-analog environments demonstrate significant improvements in detection range, accuracy, and overall localization performance.

Markerless Robot Detection and 6D Pose Estimation for Multi-Agent SLAM

Introduction

The paper "Markerless Robot Detection and 6D Pose Estimation for Multi-Agent SLAM" (2602.16308) addresses the data association problem in multi-robot SLAM systems. Conventional multi-robot SLAM implementations rely heavily on fiducial markers such as AprilTags for robots to detect one another, which limits the observation range and renders the system ineffective under challenging lighting conditions. The paper instead uses deep learning for markerless detection and pose estimation, so that the SLAM system can localize the robots of a team relative to each other without markers. Experiments in planetary-analog environments evaluate how much the approach improves relative localization accuracy among agents.

Decentralized SLAM System

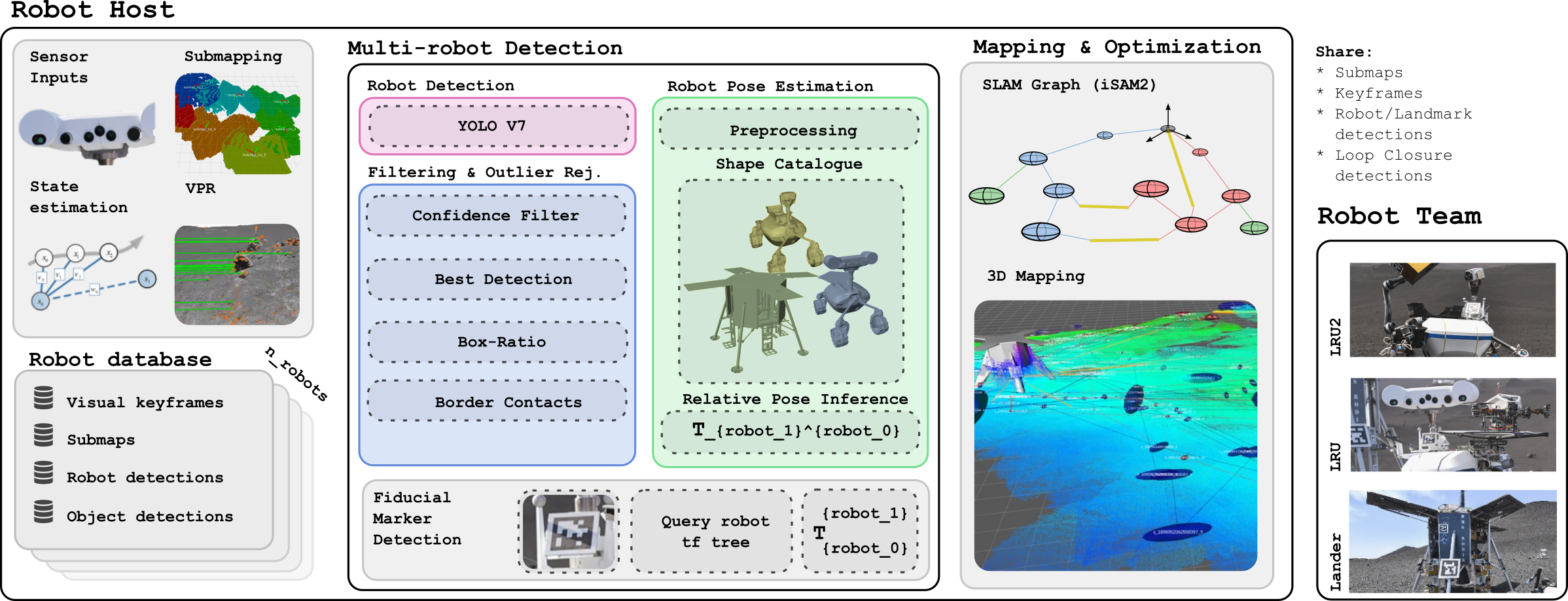

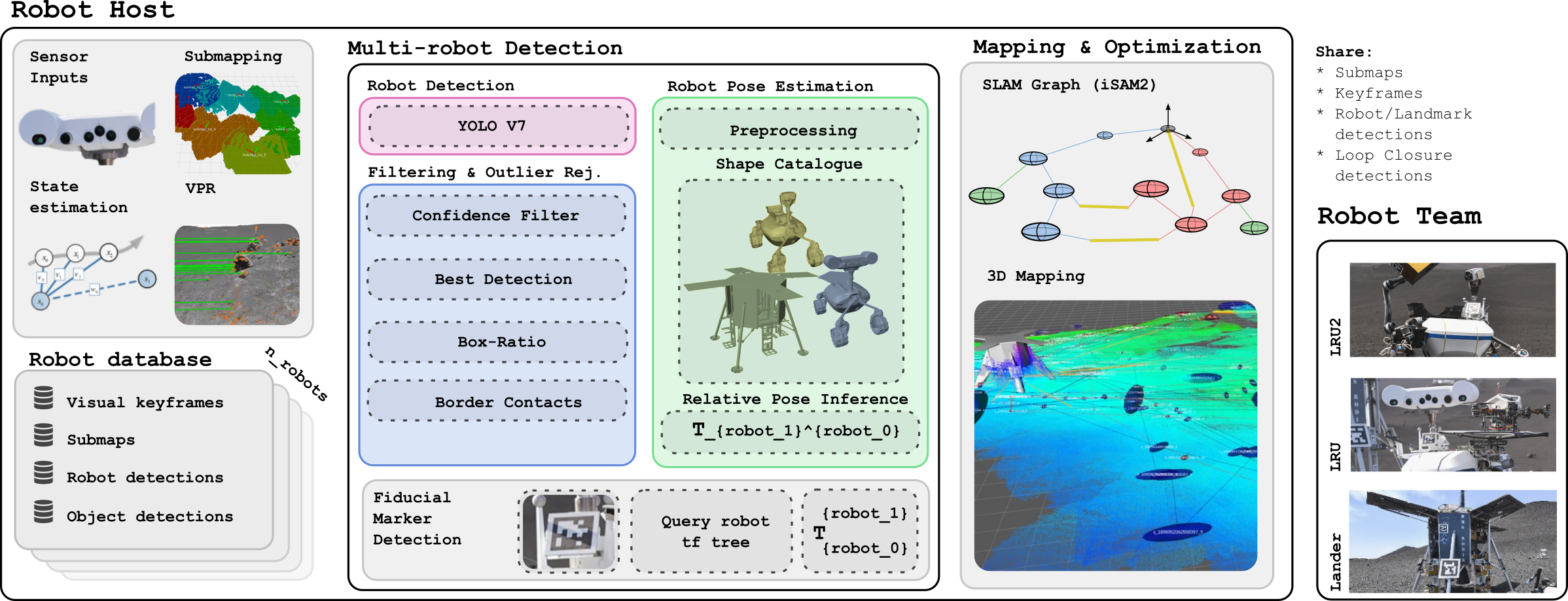

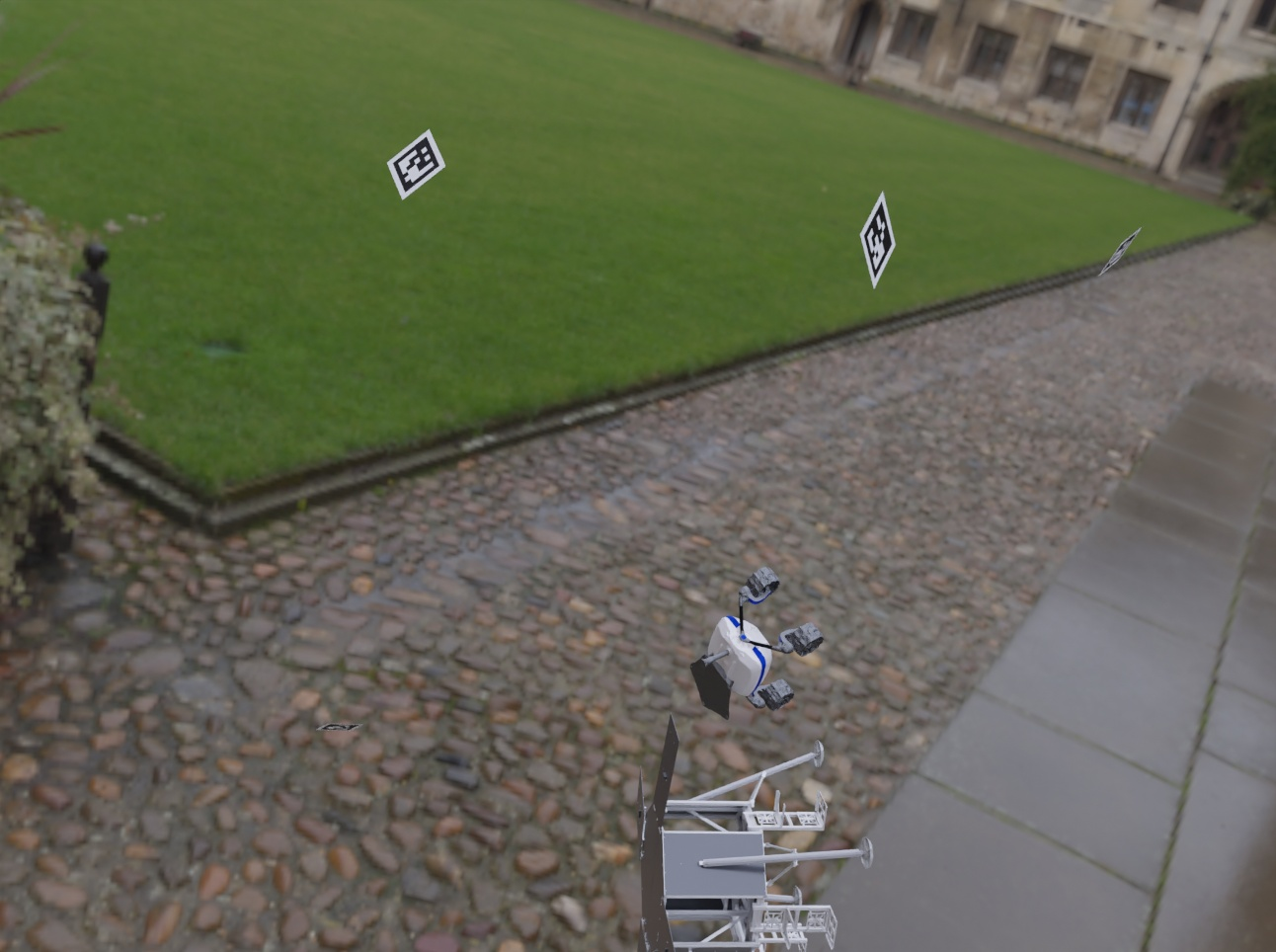

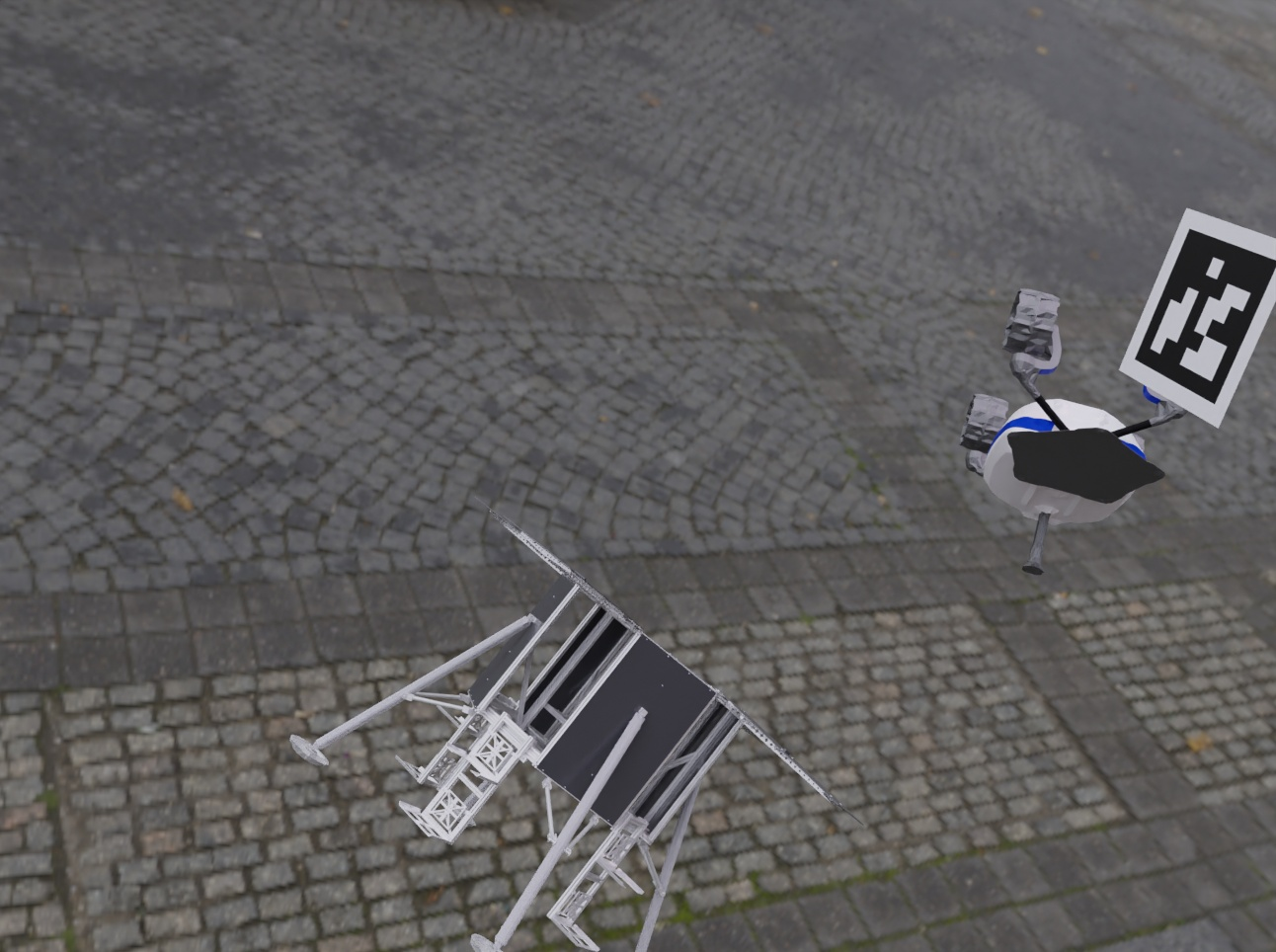

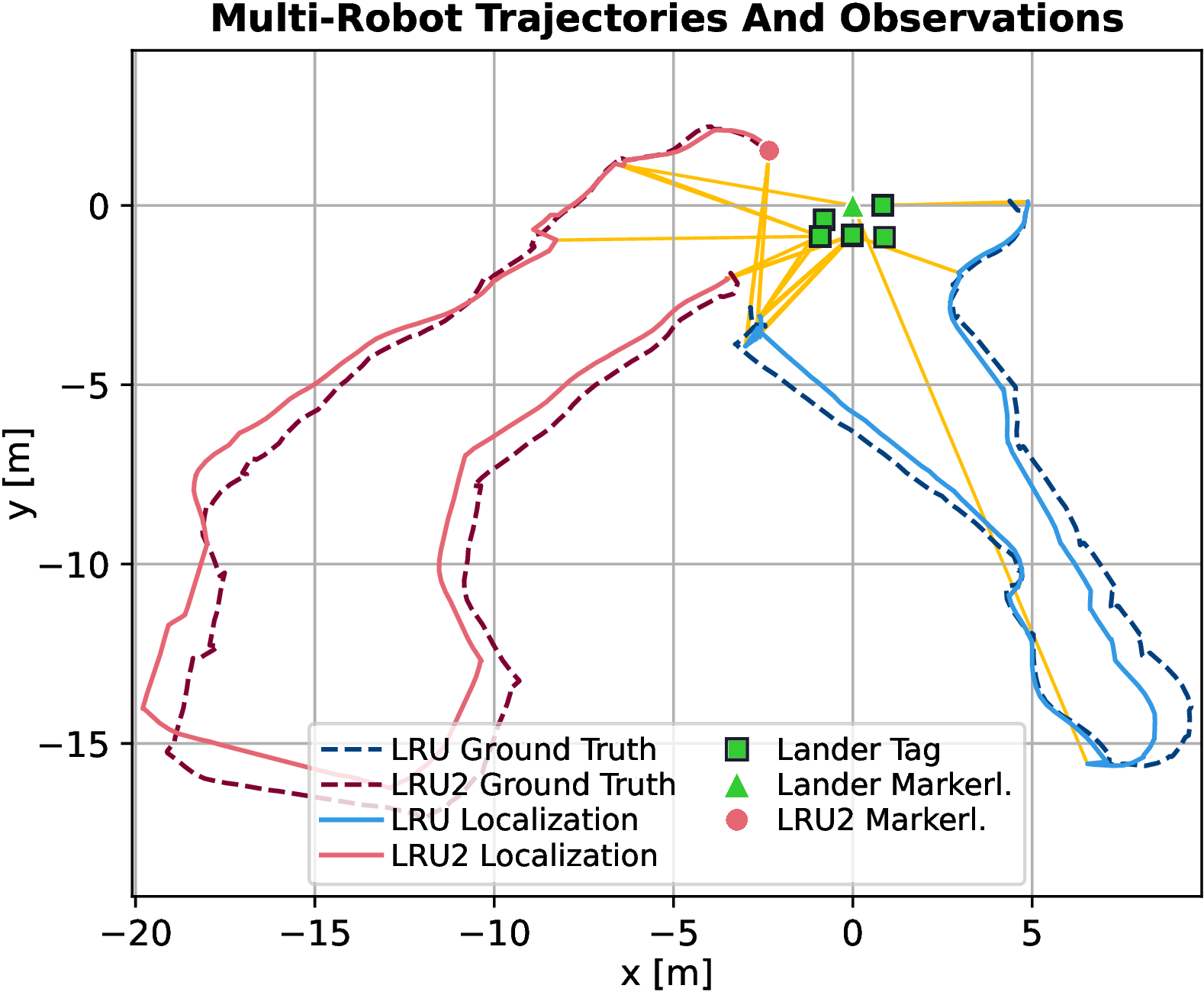

The study builds upon a decentralized multi-robot SLAM system designed for stereo vision-equipped robots such as the Lightweight Rover Unit (LRU) and UAVs such as ARDEA. Visual odometry (VO), IMU measurements, and other odometry sources are fused in a Local Reference Filter to compute a local state estimate, and the environment is partitioned into submaps. Submap matching creates visual loop closures by registering overlapping submaps. The decentralized architecture also supports inter-robot pose measurements derived from visual detections, as well as robust estimation of SLAM graph constraints across agents.

Figure 1: Schematic overview of the employed decentralized SLAM system, focusing on multi-robot detection capabilities.
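To illustrate how an inter-robot pose measurement relates two agents, the following minimal sketch chains rigid-body transforms: the observer's pose in its own map is composed with the detected relative pose to place the observed robot in that map. The frame names (`T_map_A`, `T_A_B`) and all numeric values are hypothetical, and the sketch does not reproduce the paper's graph optimization.

```python
import math

def rot_z(yaw):
    """3x3 rotation about the z-axis (yaw in radians)."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def make_pose(R, t):
    """Build a 4x4 homogeneous transform from rotation R and translation t."""
    return [list(R[i]) + [t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def compose(A, B):
    """Matrix product of two 4x4 transforms (apply B first, then A)."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def invert(T):
    """Inverse of a rigid transform: rotation R^T, translation -R^T t."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [-sum(Rt[i][k] * T[k][3] for k in range(3)) for i in range(3)]
    return make_pose(Rt, t)

# Hypothetical values: observer A's pose in its map, and robot B as detected by A.
T_map_A = make_pose(rot_z(math.pi / 2), [2.0, 0.0, 0.0])
T_A_B = make_pose(rot_z(0.0), [5.0, 0.0, 0.0])

# Pose of B expressed in A's map frame.
T_map_B = compose(T_map_A, T_A_B)

# Given both absolute poses, the relative measurement can be recovered again.
T_A_B_recovered = compose(invert(T_map_A), T_map_B)

print([round(T_map_B[i][3], 3) for i in range(3)])
```

In a pose graph, a relative transform of this kind would act as a constraint between a node of robot A and a node of robot B.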

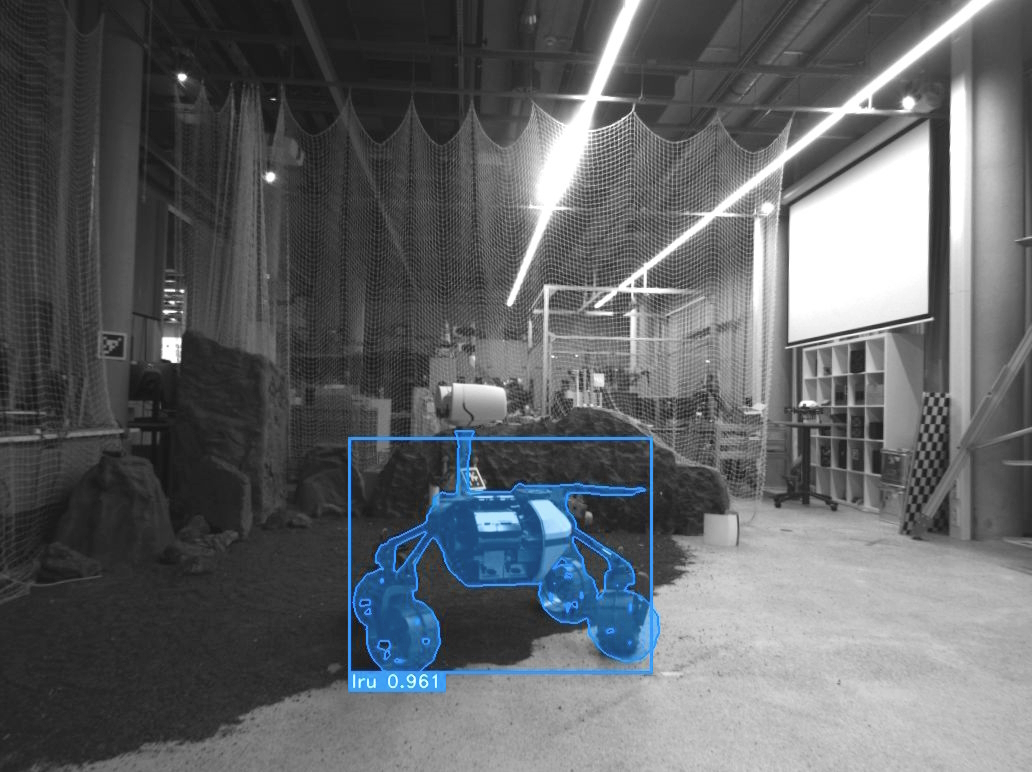

Markerless Detection and Pose Estimation Methods

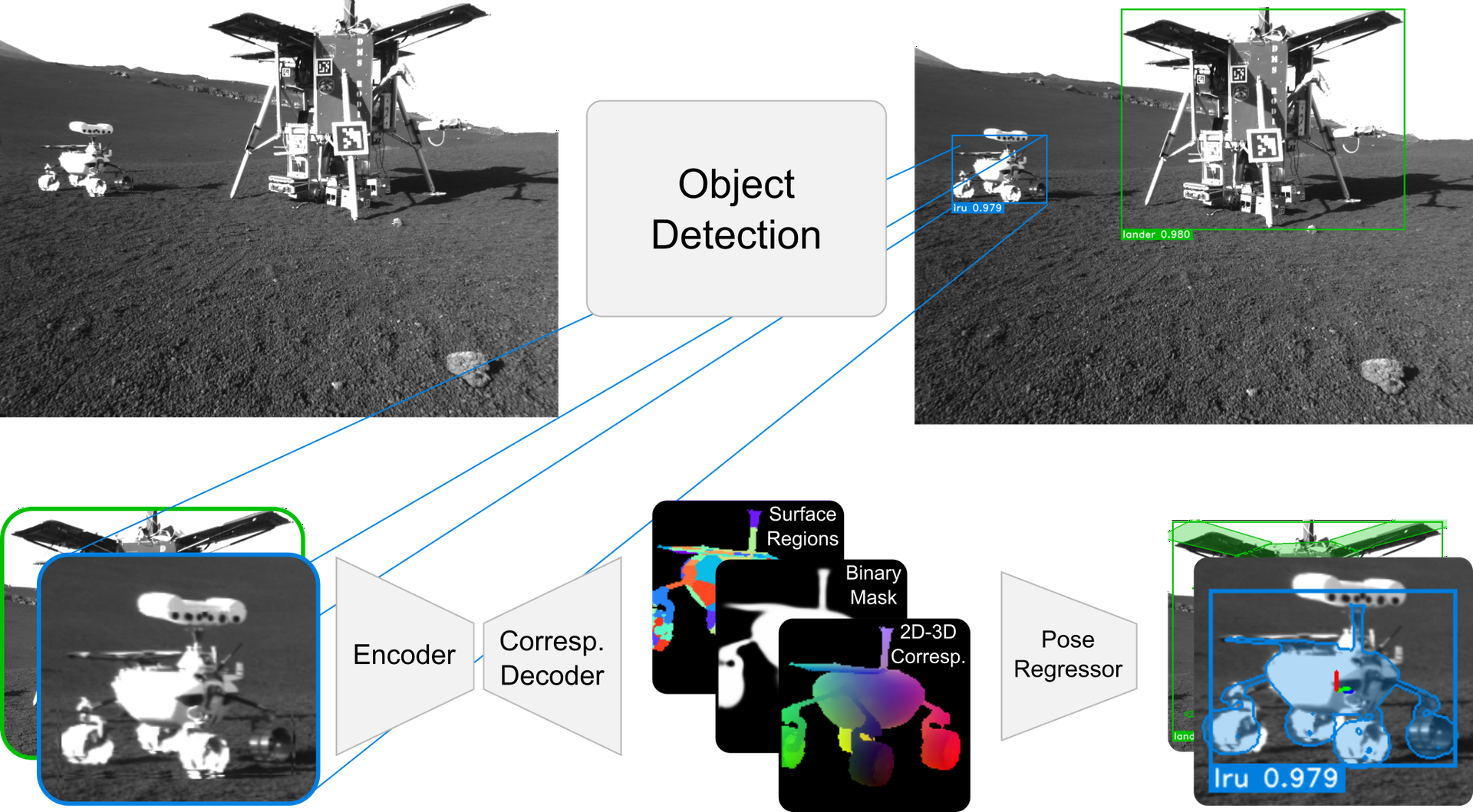

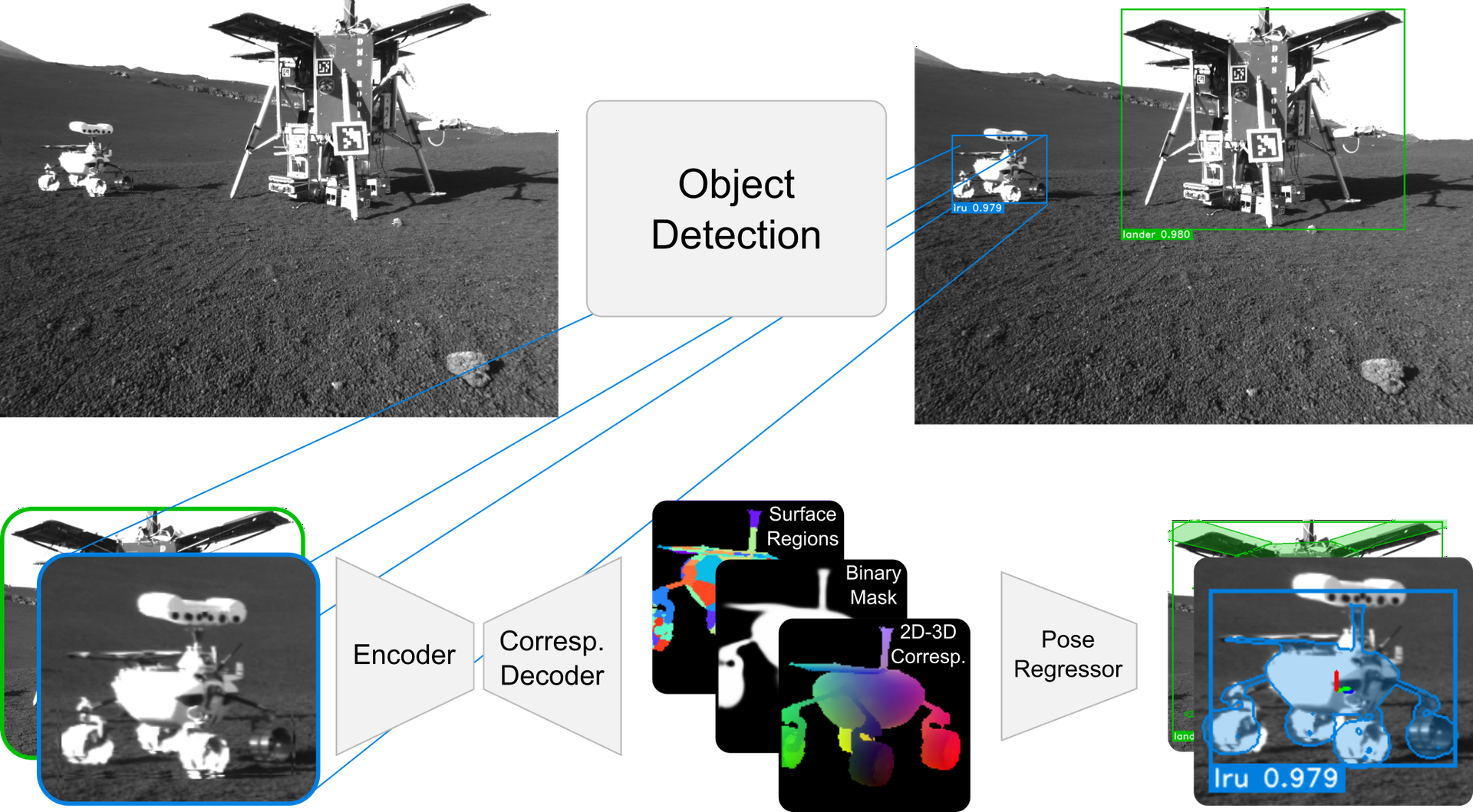

The markerless pipeline first detects robots with the YOLO v7 object detector and then estimates their 6D pose with a method inspired by Ulmer et al., which predicts dense 2D-to-3D correspondences. Modifications to that method include a transformer-based architecture and neural network-based 6D pose regression, which improve robustness to occlusions. For SLAM integration, the stereo visual inputs are combined with the markerless detection outputs so that pose estimates remain accurate even when observations are aliased or ambiguous.

Figure 2: Illustration of the markerless detection pipeline, including 2D-3D correspondences and pose regression network.
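The geometric meaning of a 2D-to-3D correspondence can be sketched with a pinhole camera model: a candidate 6D pose maps 3D model points into the image, and the pixel distance to the matched 2D locations measures how well the pose explains the correspondences. This is only an illustration under assumed intrinsics and made-up points. The paper regresses the pose with a neural network, and the function names below are hypothetical.

```python
import math

def transform(R, t, p):
    """Apply rotation R (3x3) and translation t to a 3D model point p."""
    return tuple(sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))

def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame 3D point to pixel coordinates."""
    x, y, z = point_cam
    return (fx * x / z + cx, fy * y / z + cy)

def mean_reprojection_error(R, t, model_pts, pixels, intrinsics):
    """Mean pixel distance between projected model points and matched pixels."""
    errors = []
    for p, (u, v) in zip(model_pts, pixels):
        u_hat, v_hat = project(transform(R, t, p), *intrinsics)
        errors.append(math.hypot(u_hat - u, v_hat - v))
    return sum(errors) / len(errors)

# Assumed intrinsics (fx, fy, cx, cy) and four hypothetical model points in metres.
intrinsics = (500.0, 500.0, 320.0, 240.0)
model_pts = [(0.5, 0.5, 0.0), (-0.5, 0.5, 0.0), (-0.5, -0.5, 0.0), (0.5, -0.5, 0.0)]
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Synthesize the matched pixels from a known pose 10 m in front of the camera.
t_true = (0.0, 0.0, 10.0)
pixels = [project(transform(identity, t_true, p), *intrinsics) for p in model_pts]

err_true = mean_reprojection_error(identity, t_true, model_pts, pixels, intrinsics)
err_shifted = mean_reprojection_error(identity, (0.1, 0.0, 10.0), model_pts, pixels, intrinsics)
print(err_true, err_shifted)
```

With these numbers, a 0.1 m lateral offset at 10 m range shifts every projected point by about 5 pixels, which shows how small image-space errors translate into pose errors at distance.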

Experimental Validation

Evaluation on Synthetic Data

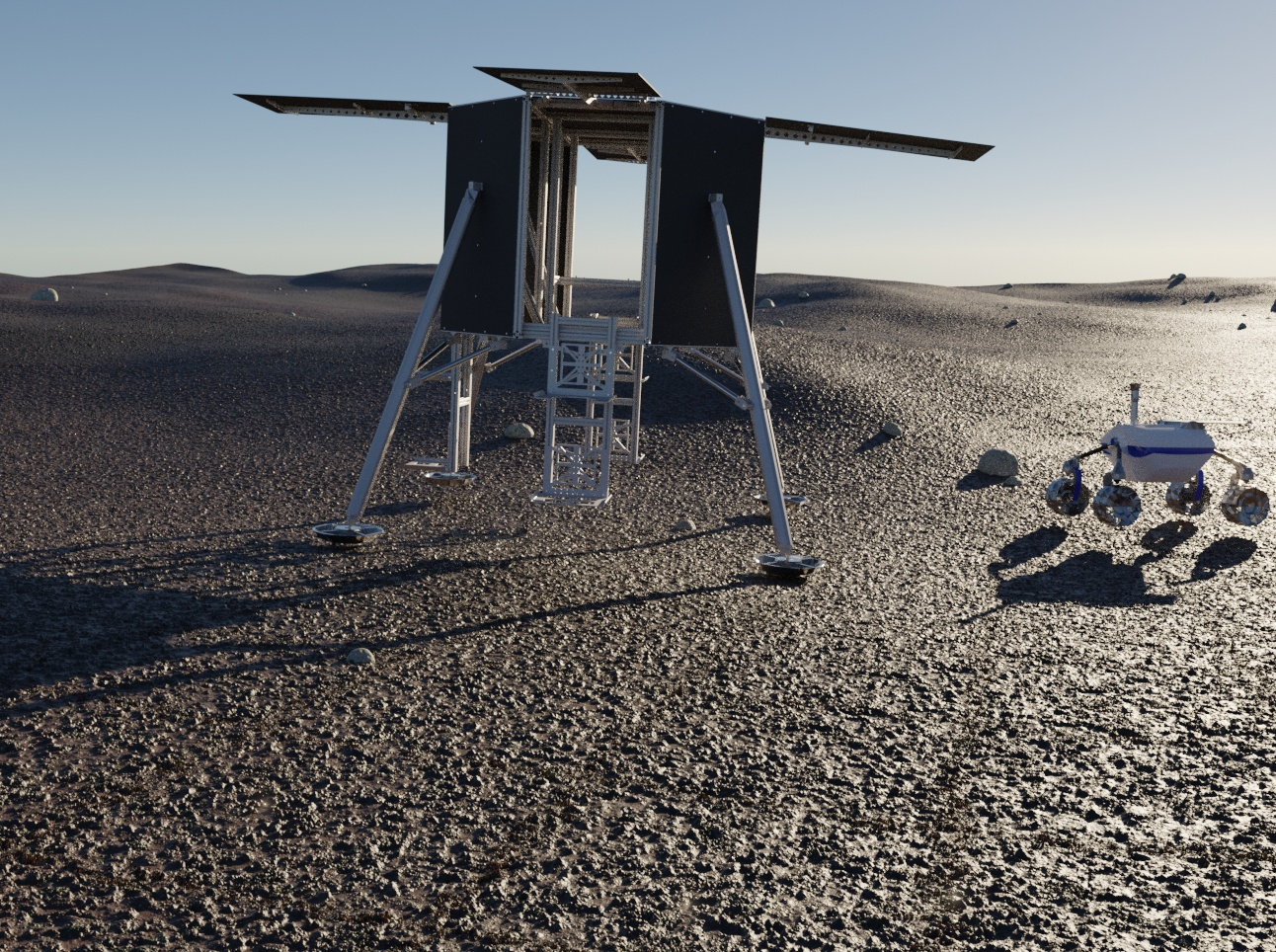

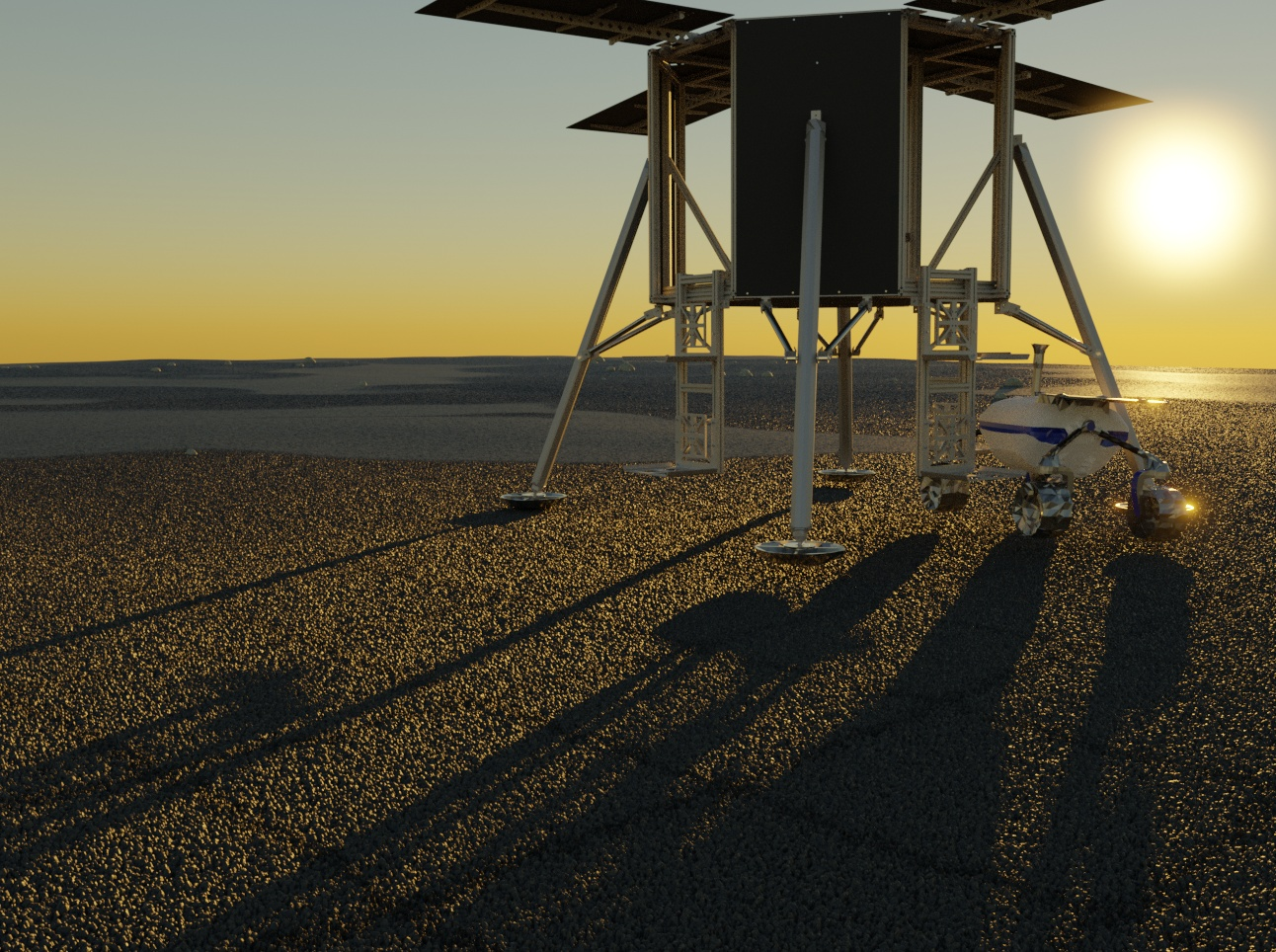

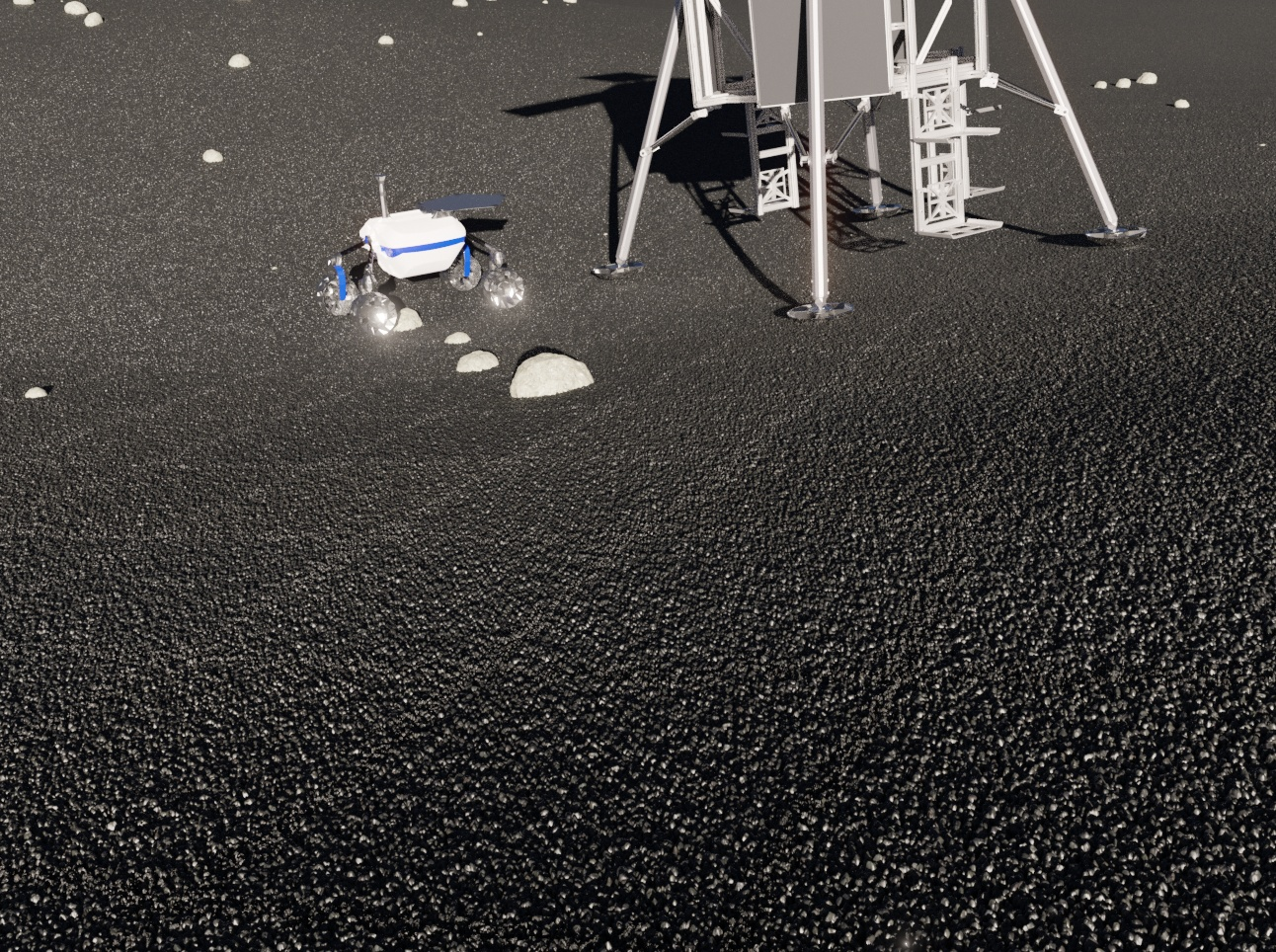

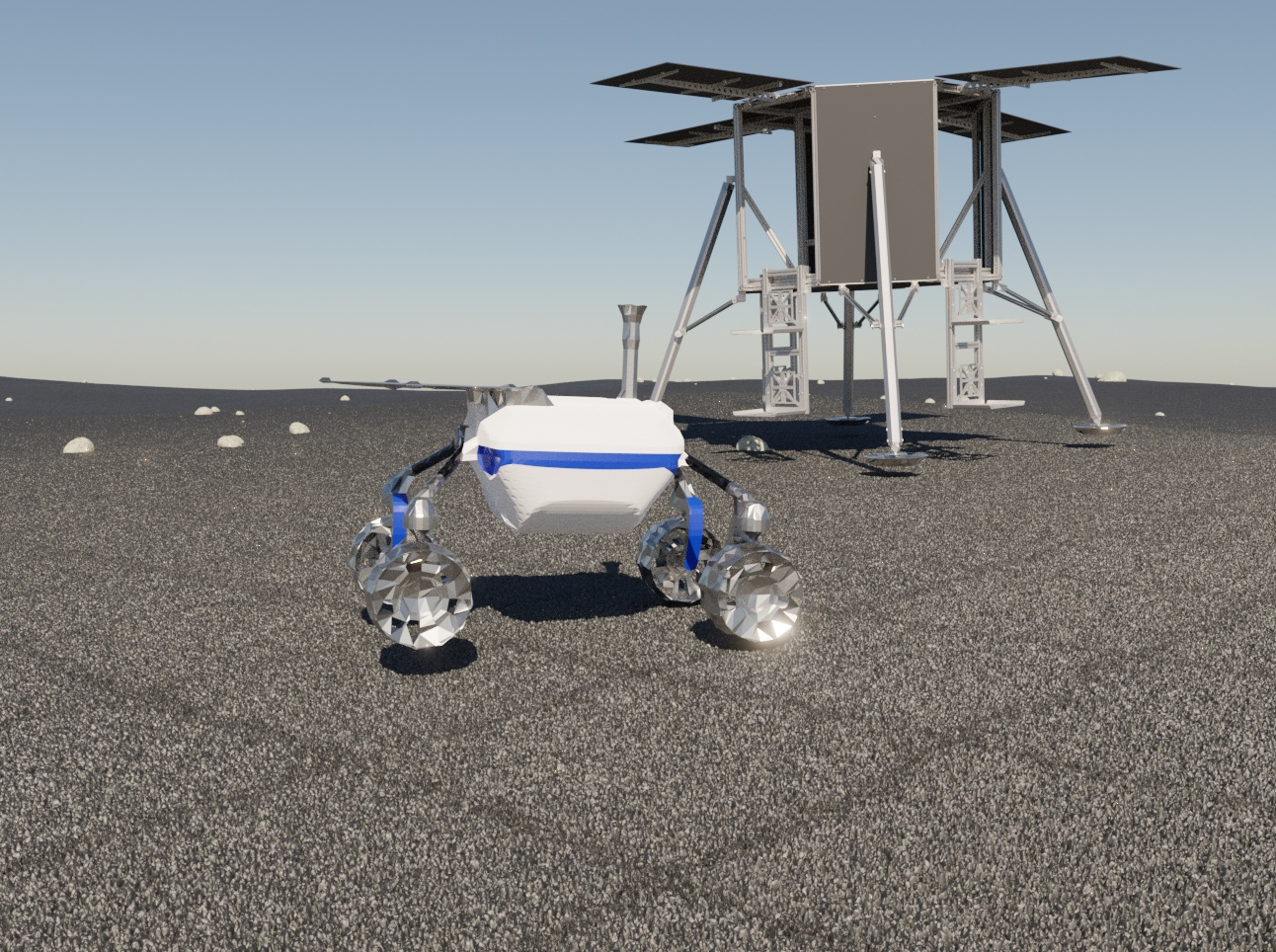

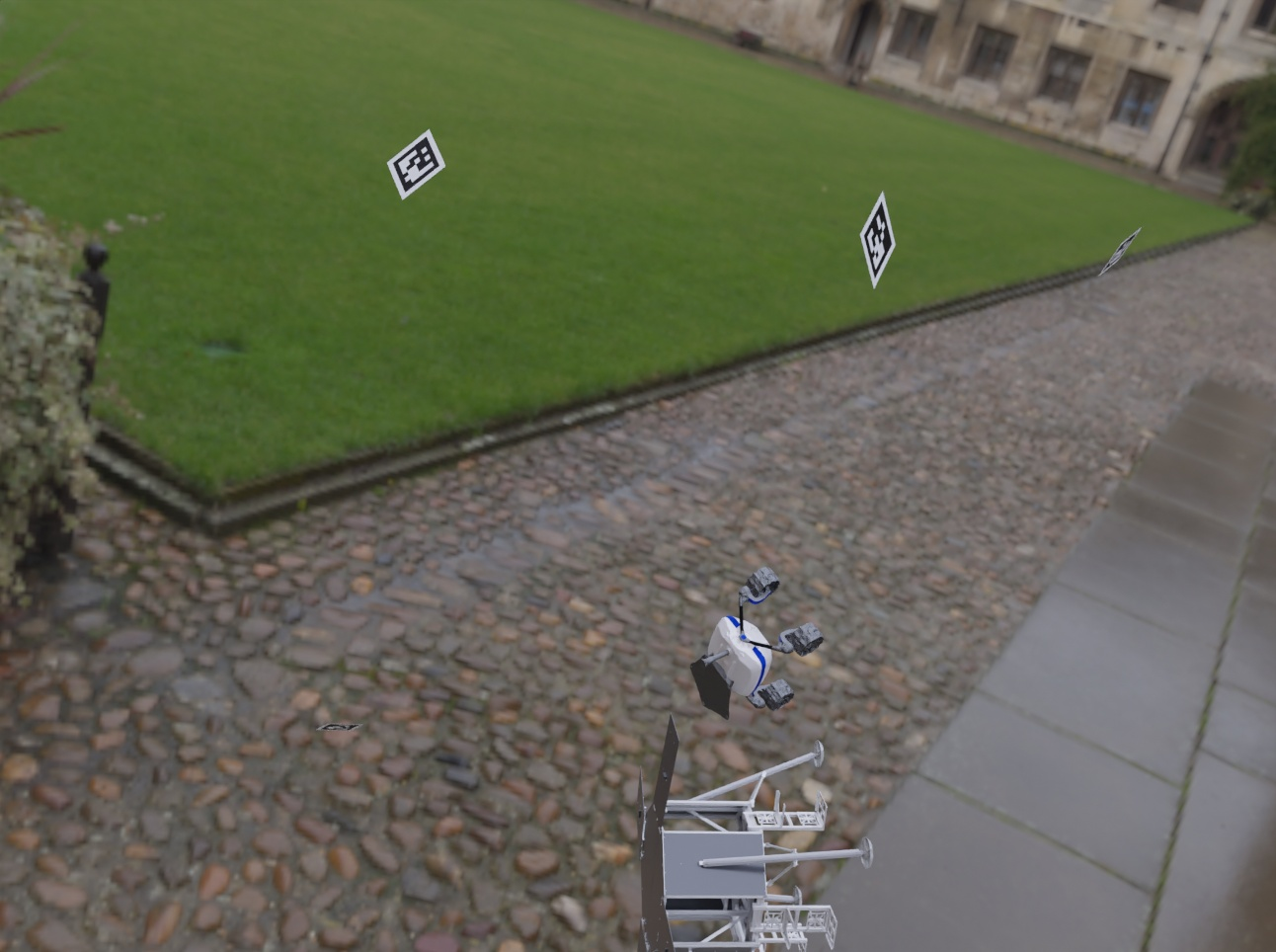

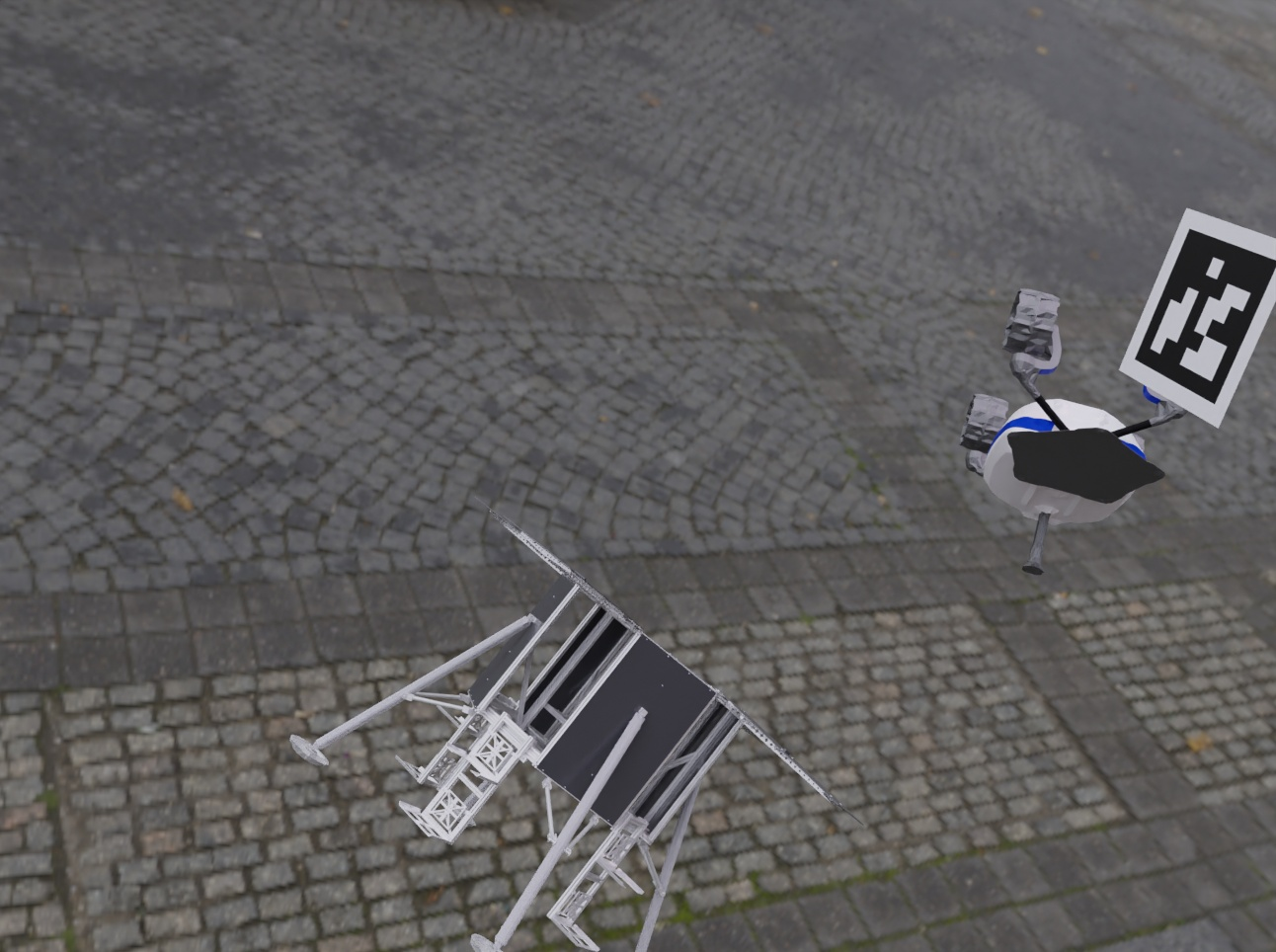

Synthetic data generated from simplified CAD models with the BlenderProc and OAISYS frameworks was used to train both the object detection and the pose estimation models. The diversity of the synthetic data improved model robustness, as validated by detection rates and pose accuracy on unseen data samples.

Figure 3: Training samples from OAISYS and BlenderProc featuring LRU and Lander models.

Real-World Evaluation

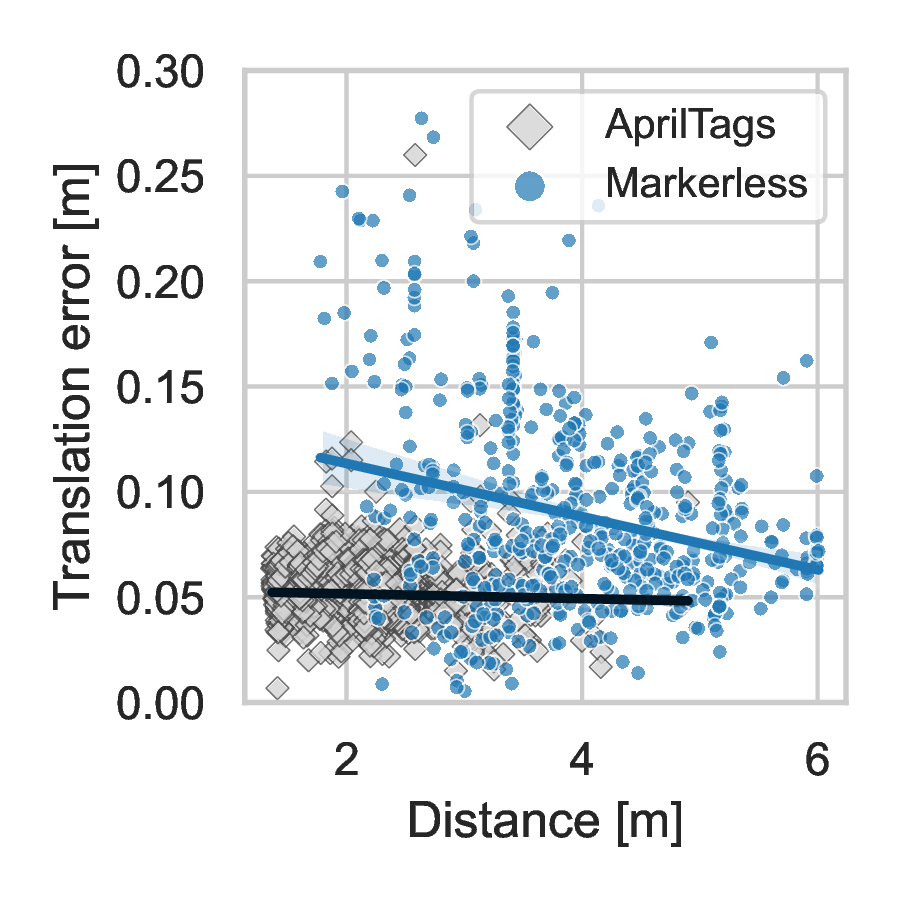

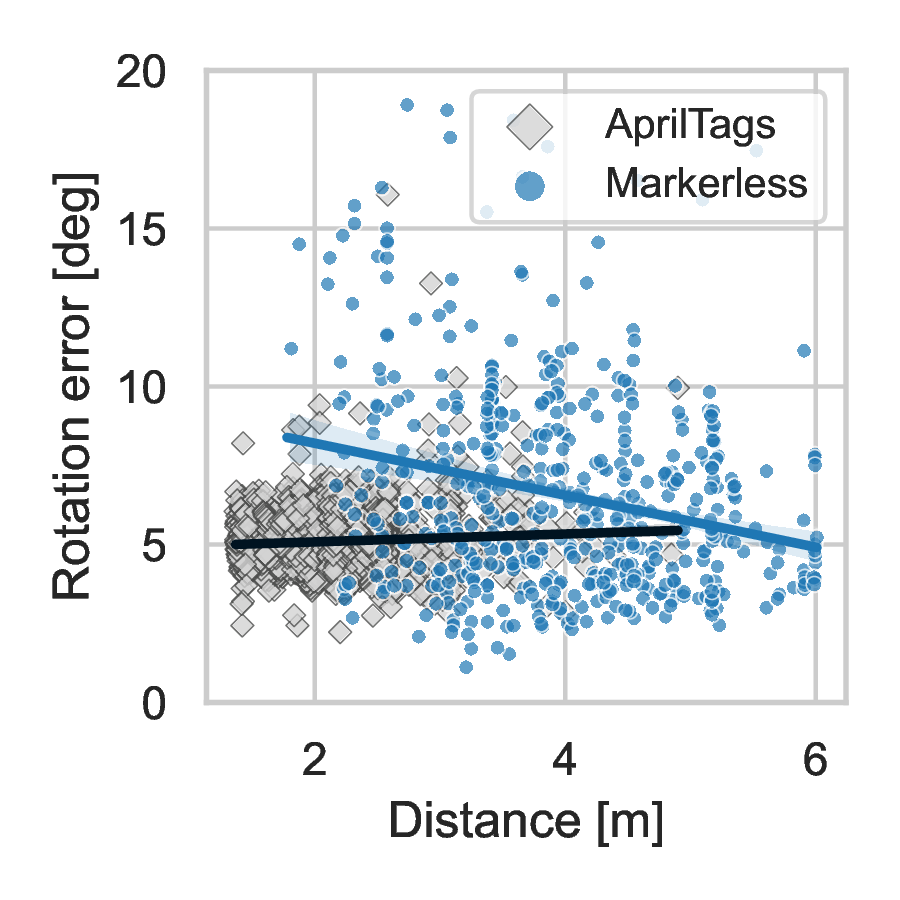

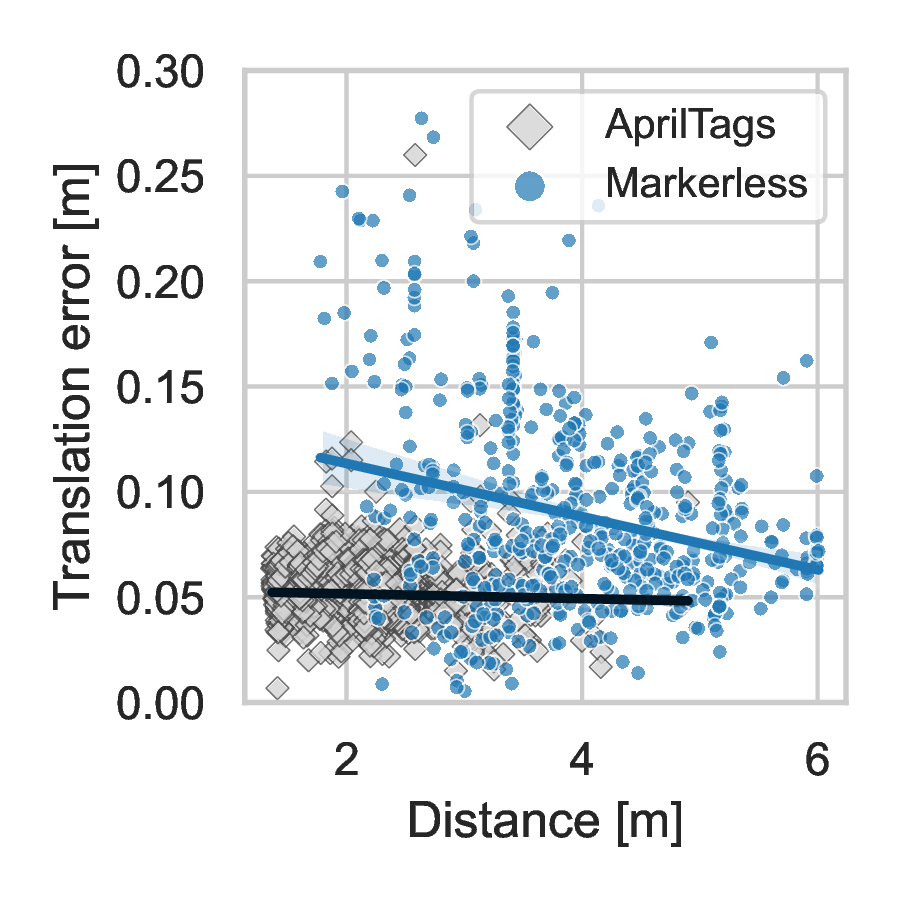

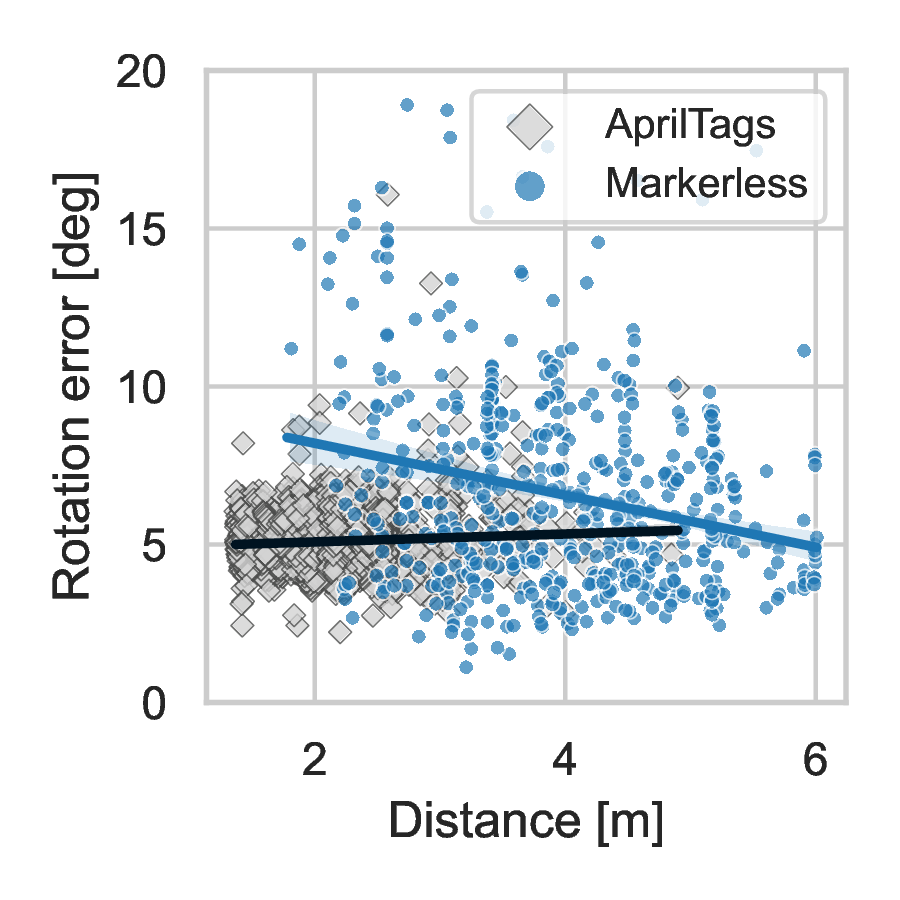

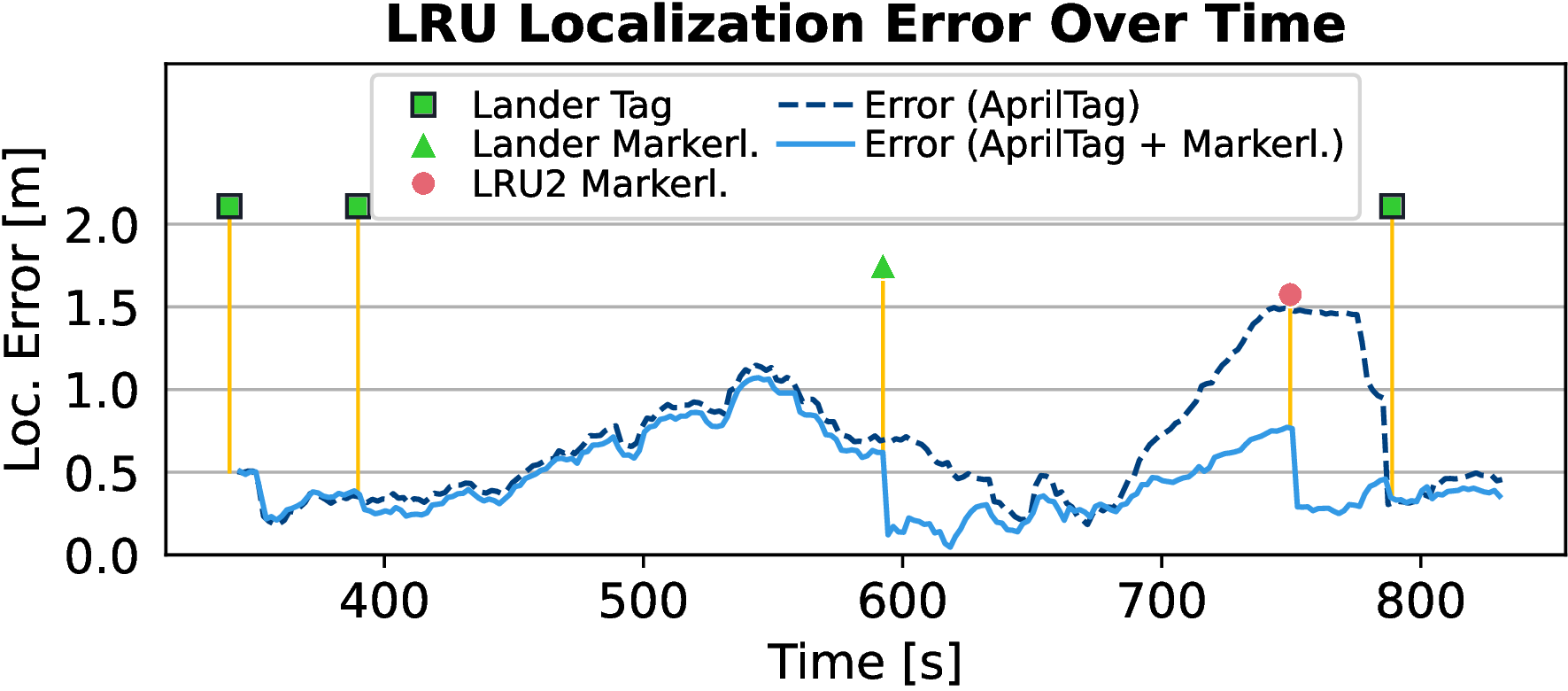

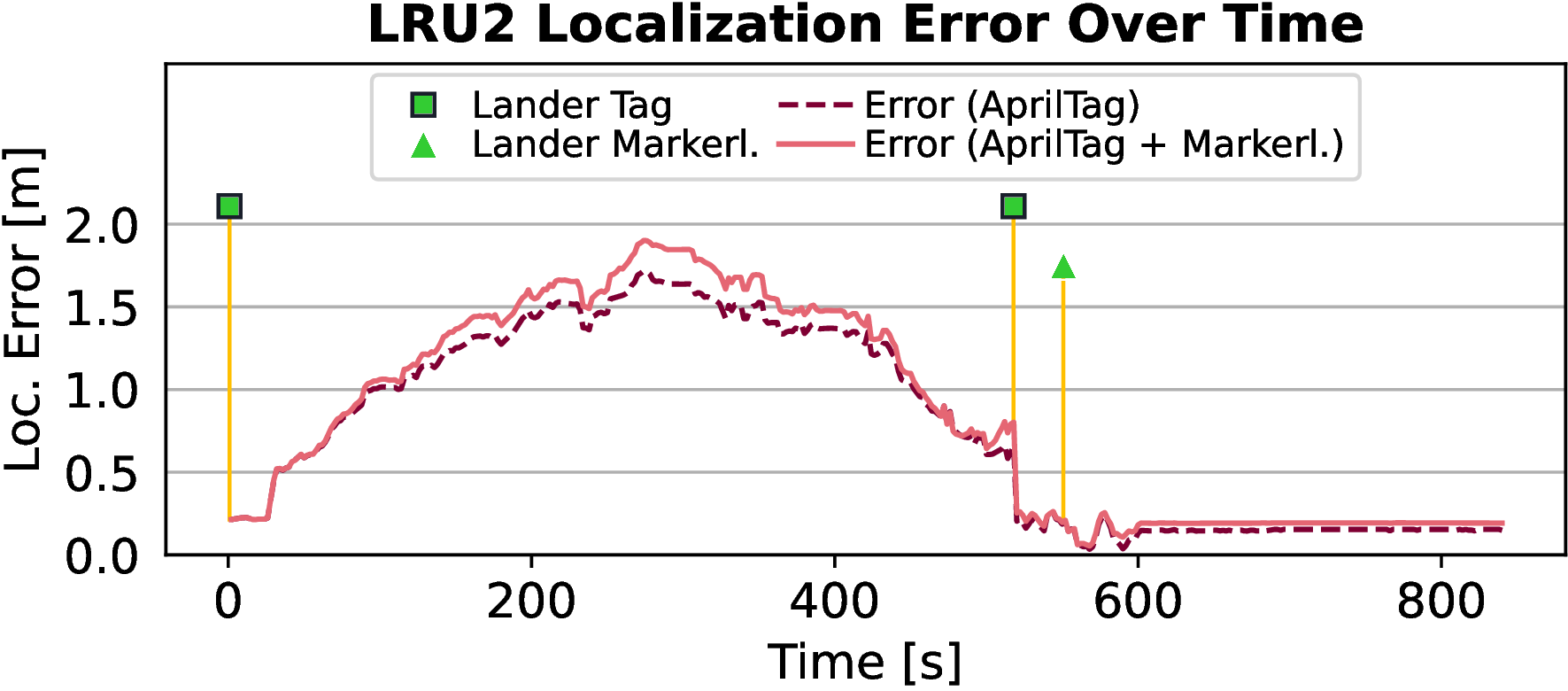

Real-world tests, with VICON measurements as reference, showed that the markerless approach improves detection range and accuracy compared with fiducial markers. The tests covered scenarios in which conventional markers failed beyond certain range thresholds, and reported increased accuracy at larger distances, attributed to reduced perspective distortion.

Figure 4: Comparison of markerless pose estimation errors against conventional AprilTag detection under VICON measurements.
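Pose estimation errors of this kind are commonly split into a translation error and a rotation error. The sketch below computes both for one estimated pose against a reference pose, assuming both are expressed in the same frame. The exact metrics used in the paper are not stated here, and the values are hypothetical.

```python
import math

def translation_error(t_est, t_ref):
    """Euclidean distance between estimated and reference positions."""
    return math.dist(t_est, t_ref)

def rotation_error_deg(R_est, R_ref):
    """Angle of the relative rotation R_ref^T R_est, in degrees."""
    # trace(R_ref^T R_est) equals the sum of element-wise products.
    trace = sum(R_ref[i][j] * R_est[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp for numerical safety
    return math.degrees(math.acos(cos_angle))

def rot_z(angle_deg):
    """3x3 rotation about the z-axis (angle in degrees)."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

# Hypothetical reference pose (e.g. from motion capture) and estimated pose.
t_ref, R_ref = (10.0, 0.0, 0.0), rot_z(0.0)
t_est, R_est = (10.3, 0.0, 0.4), rot_z(10.0)

t_err = translation_error(t_est, t_ref)
r_err = rotation_error_deg(R_est, R_ref)
print(round(t_err, 3), round(r_err, 3))
```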

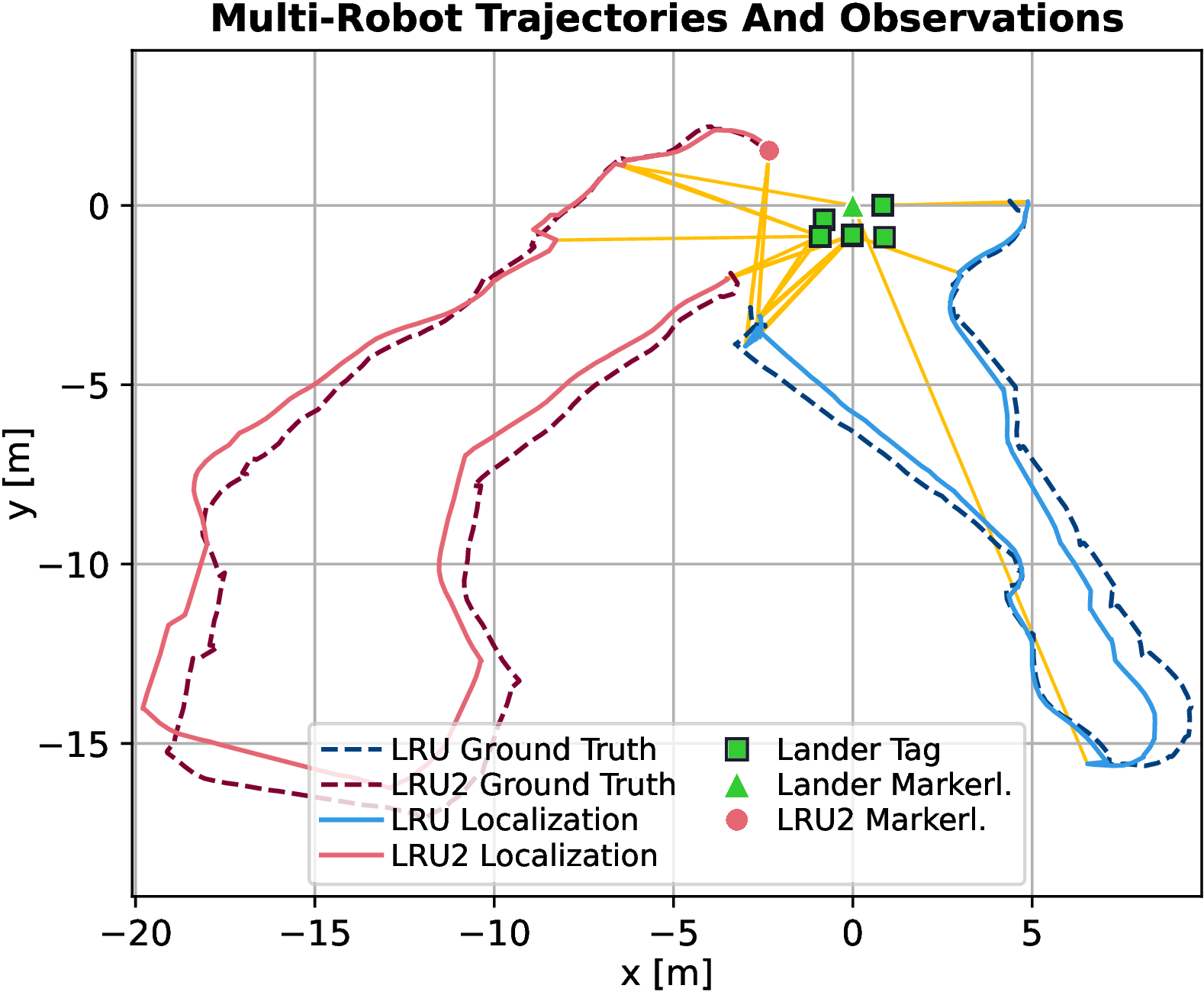

Multi-Robot SLAM Integration

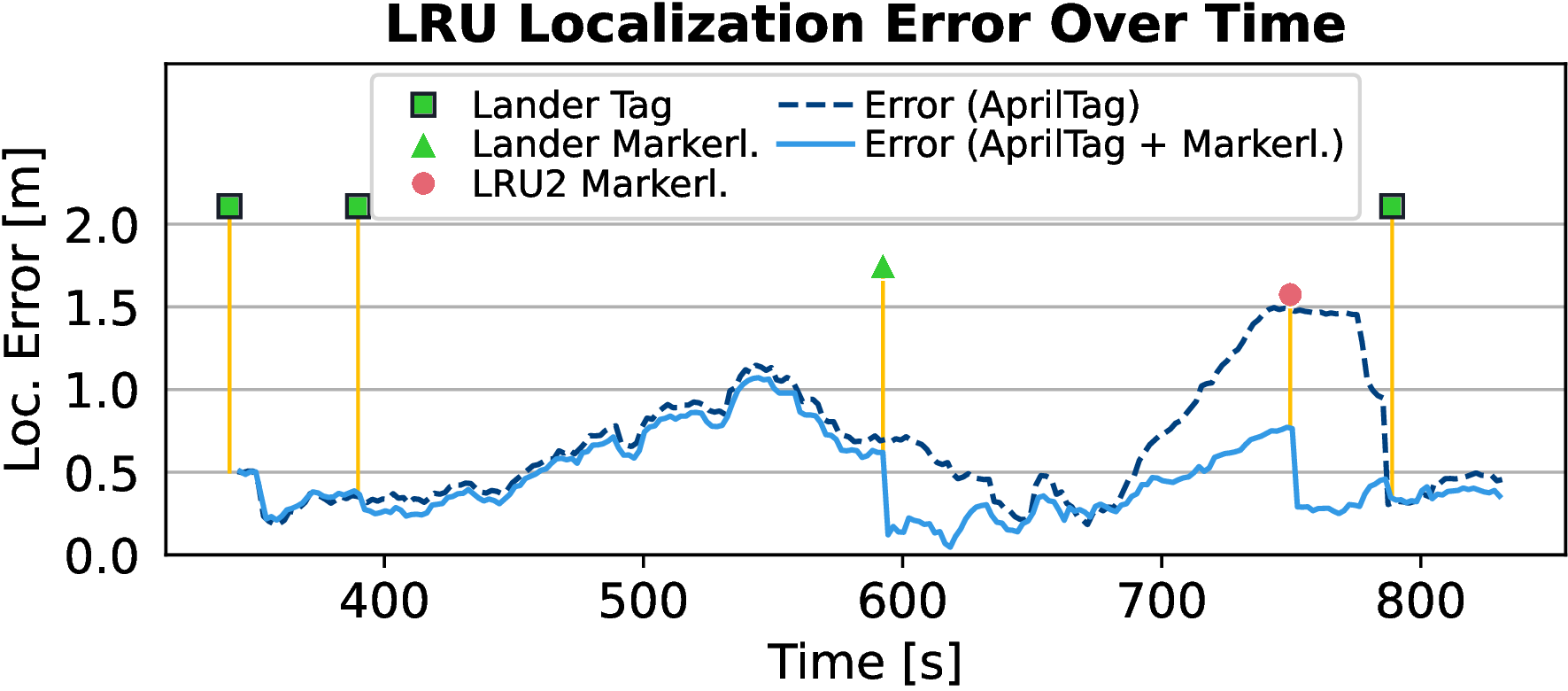

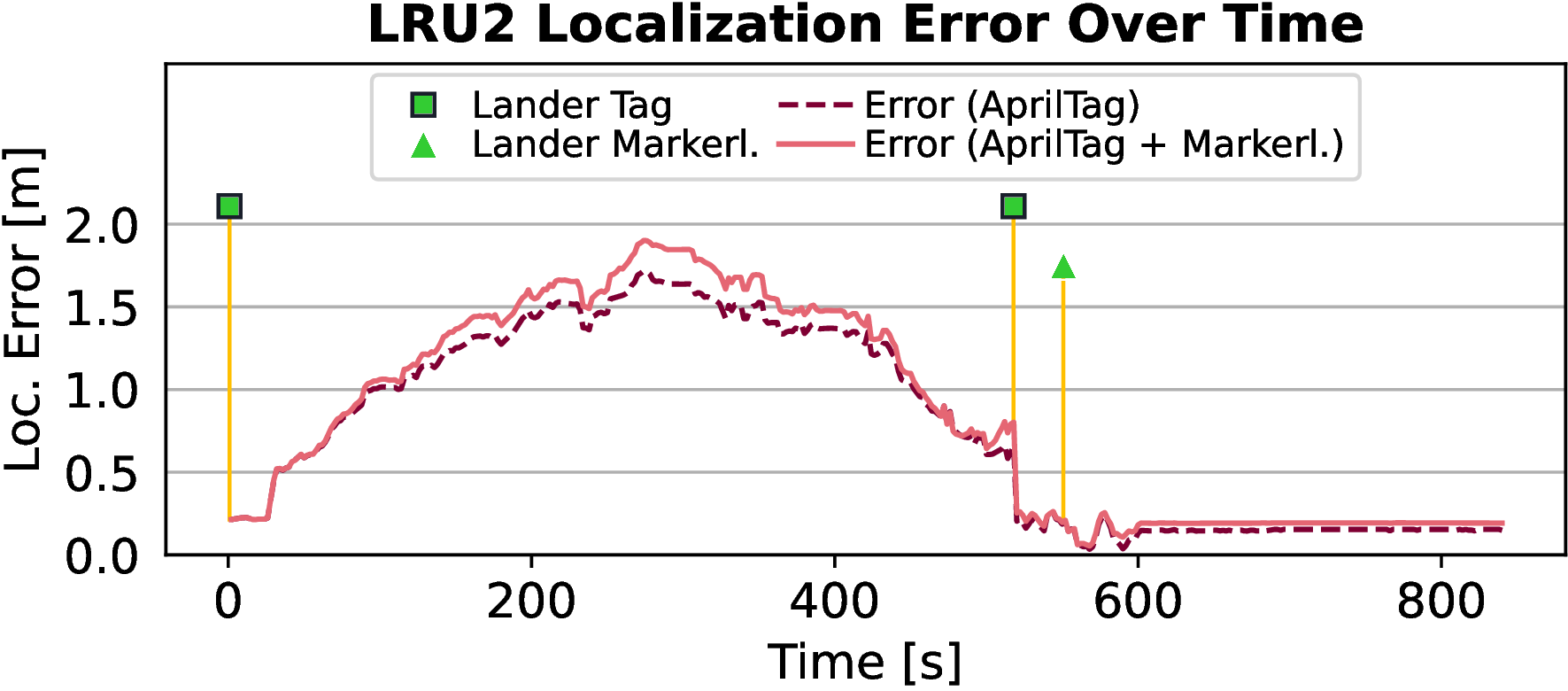

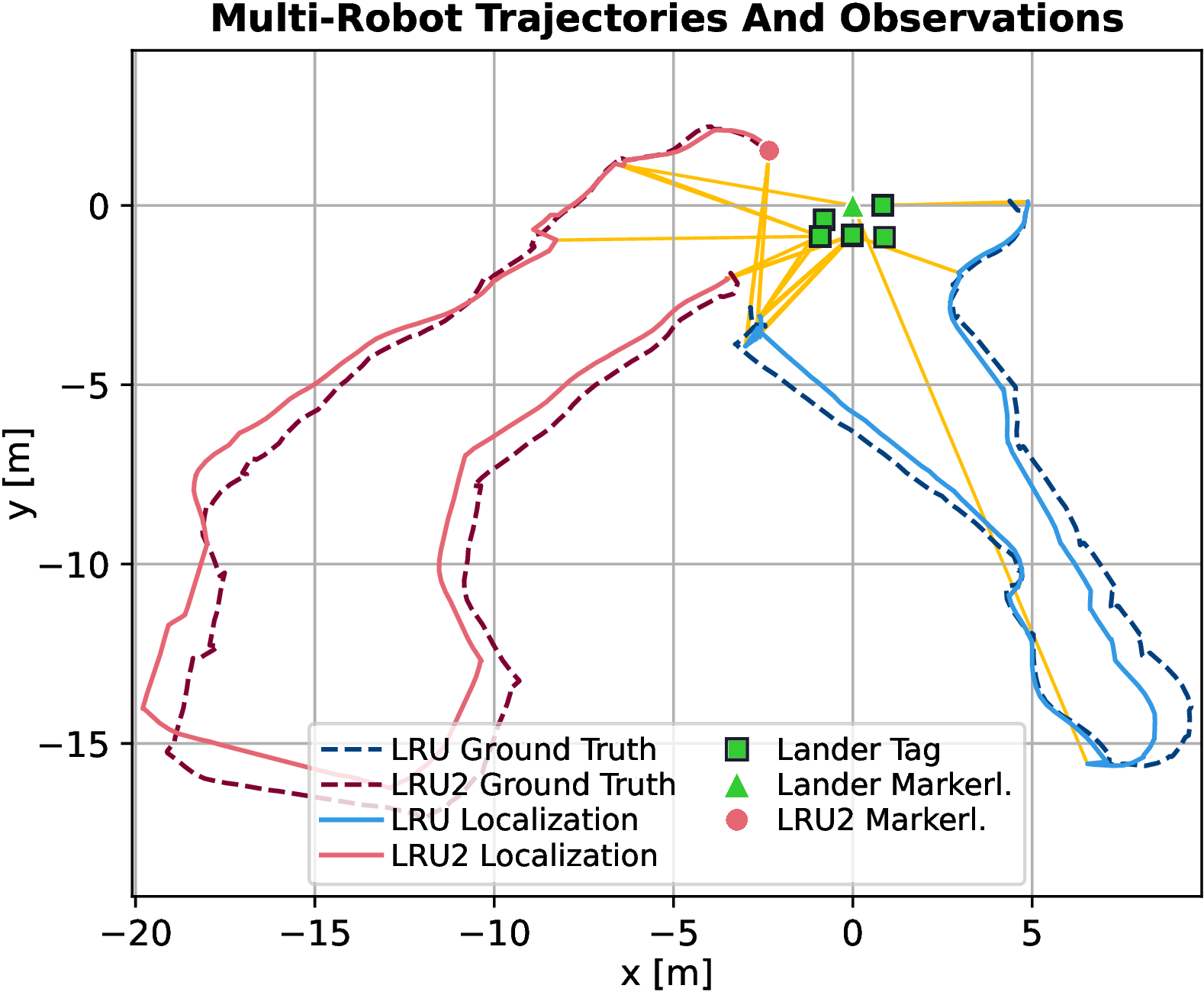

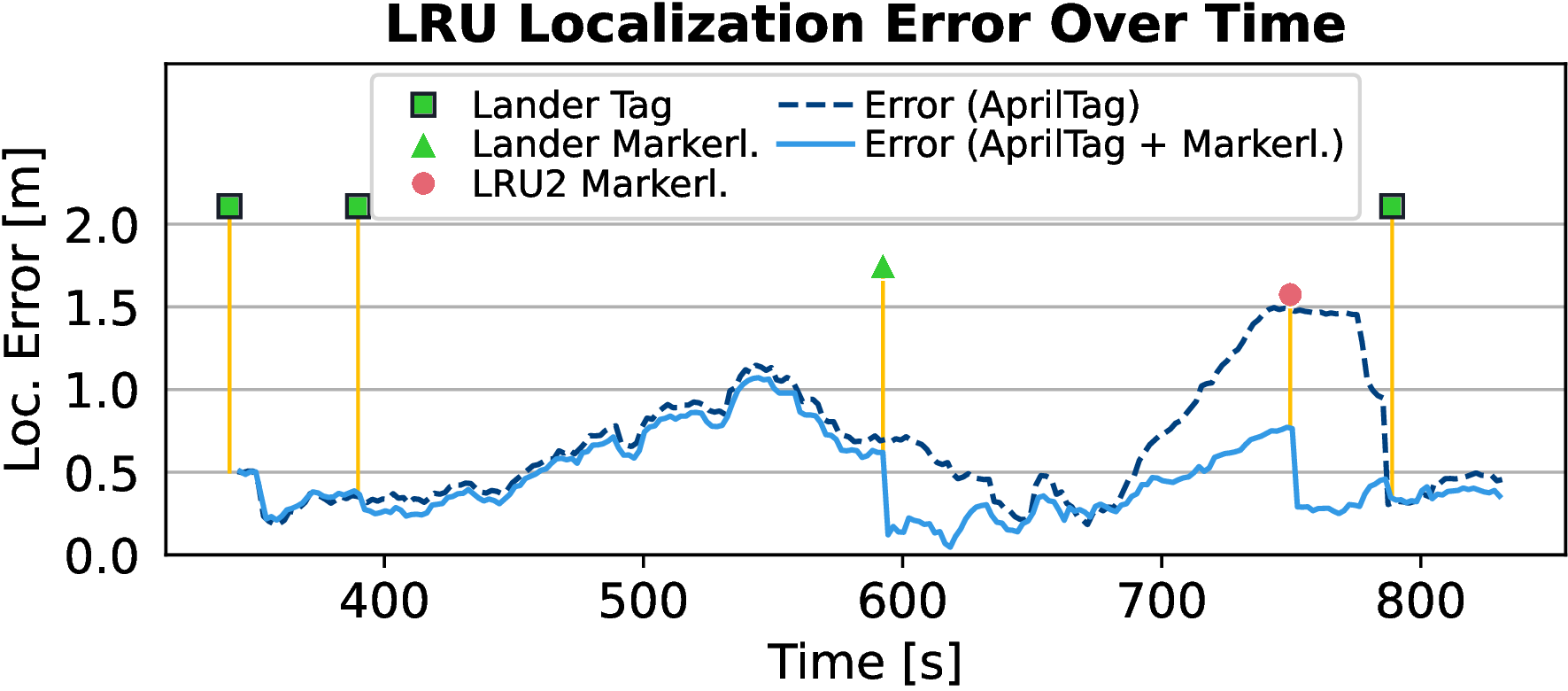

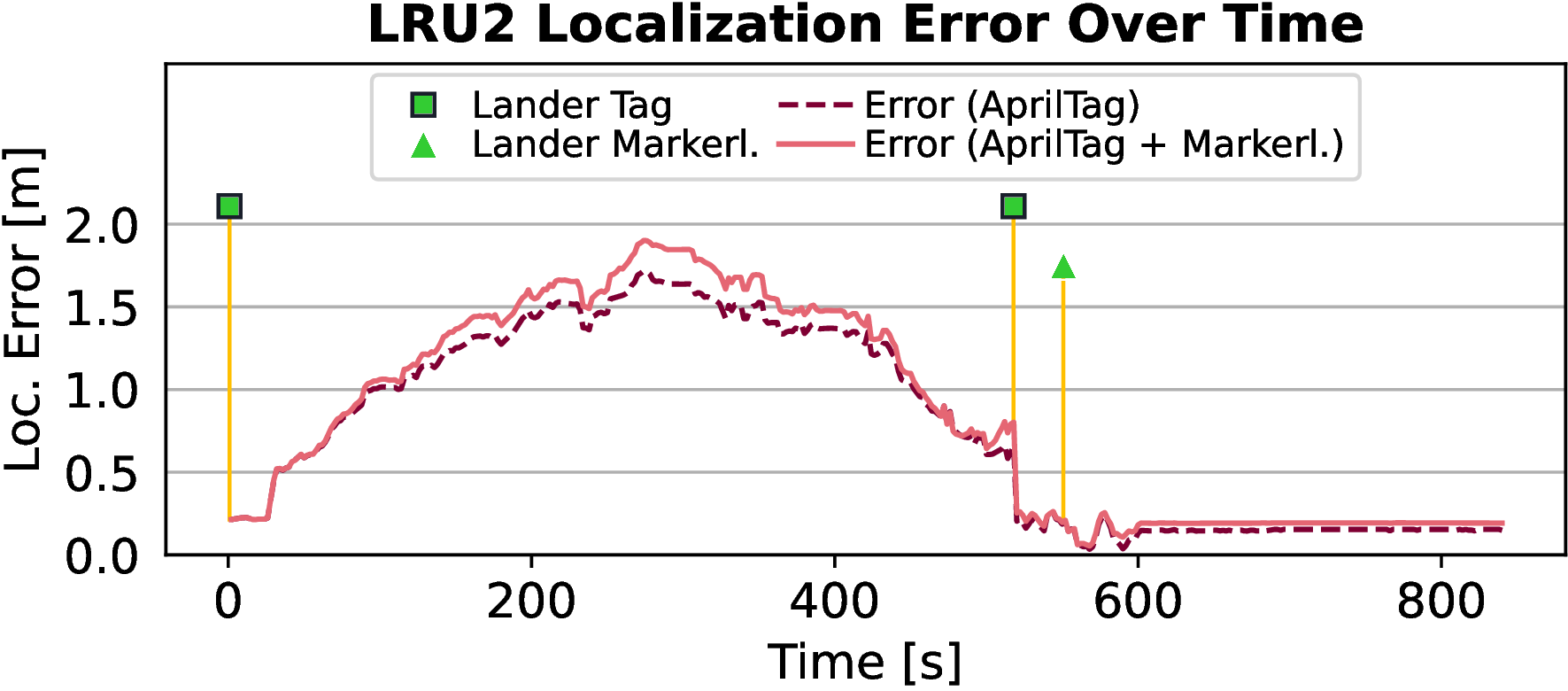

The markerless SLAM system was evaluated in field tests comprising navigation experiments on Mount Etna. Results showed significant improvements in detection rates and maximum detection distances across missions, which shortened the open-loop navigation sequences and improved localization accuracy against D-GNSS ground truth.

Figure 5: Multi-robot SLAM results from Mission 1, illustrating trajectory and localization error corrections following markerless detection events.

Figure 6: Multi-robot SLAM results from Mission 2, showcasing error corrections after markerless observations.
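Localization accuracy against a ground-truth trajectory is often summarized as a root-mean-square position error. The following minimal sketch assumes the estimated and ground-truth positions are already time-synchronized and expressed in a common frame. The trajectories are made up, and the paper's exact evaluation procedure may differ.

```python
import math

def position_rmse(estimated, ground_truth):
    """Root-mean-square Euclidean error between paired trajectory positions."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared = [math.dist(e, g) ** 2 for e, g in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical 2D positions in metres: the estimate drifts away from ground truth.
ground_truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
estimated = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]

rmse = position_rmse(estimated, ground_truth)
print(round(rmse, 3))
```

Comparing this value for trajectory segments before and after an inter-robot detection gives one simple way to quantify the error corrections shown in the figures.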

Conclusion

The presented approach advances markerless robot detection and pose estimation for multi-agent SLAM systems. The extended detection ranges and improved localization accuracy suggest that the method could be extended to articulated robot configurations. Future directions include GPU deployment for real-time operation, which would make the approach practical in dynamic and perceptually challenging environments.