- The paper proposes a novel approach that leverages LLM-generated constraints to improve causal graph inference.

- It integrates structured semantic priors with data-derived conditional independence tests within the Causal ABA framework.

- Evaluation on synthetic datasets shows superior performance in normalized SHD and F1-score compared to traditional methods.

Leveraging LLMs for Causal Discovery: A Constraint-Based, Argumentation-Driven Approach

This paper proposes a causal discovery method that integrates large language models (LLMs) into Causal Assumption-based Argumentation (Causal ABA). Treating LLMs as imperfect experts, it feeds structured semantic priors into the discovery process while retaining the rigor of symbolic reasoning. The approach targets the challenge of combining semantic knowledge with data-derived evidence in causal graph inference.

Integrating LLMs with Causal ABA

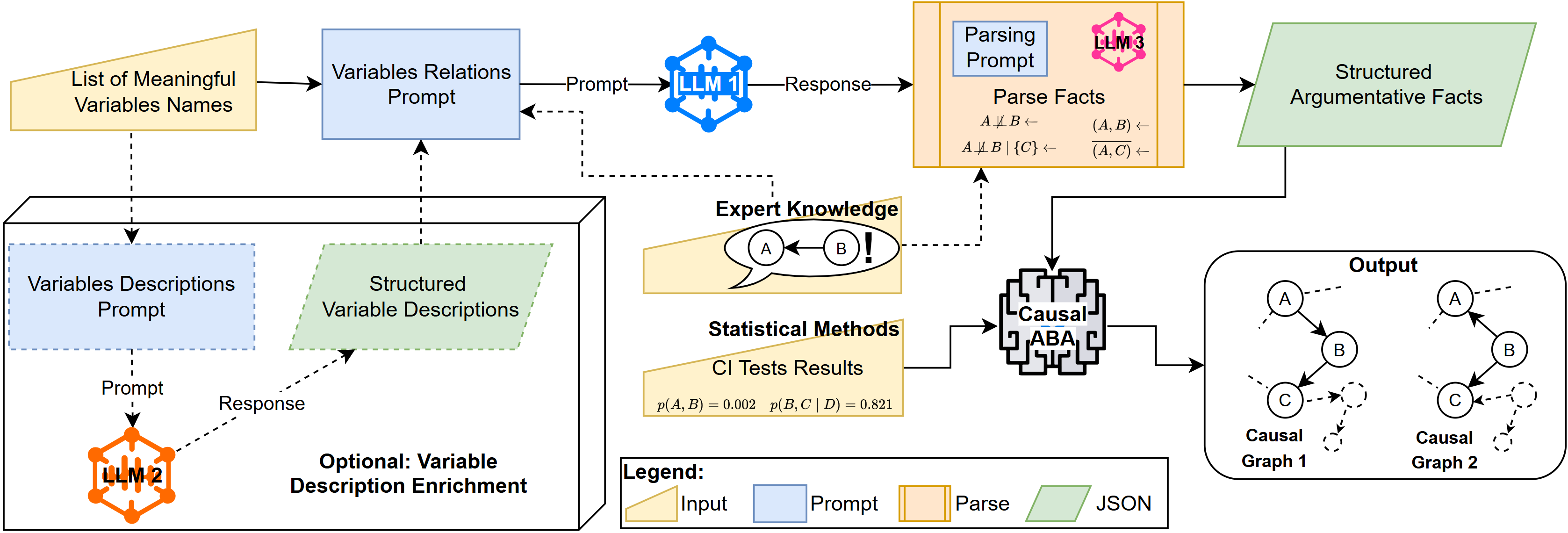

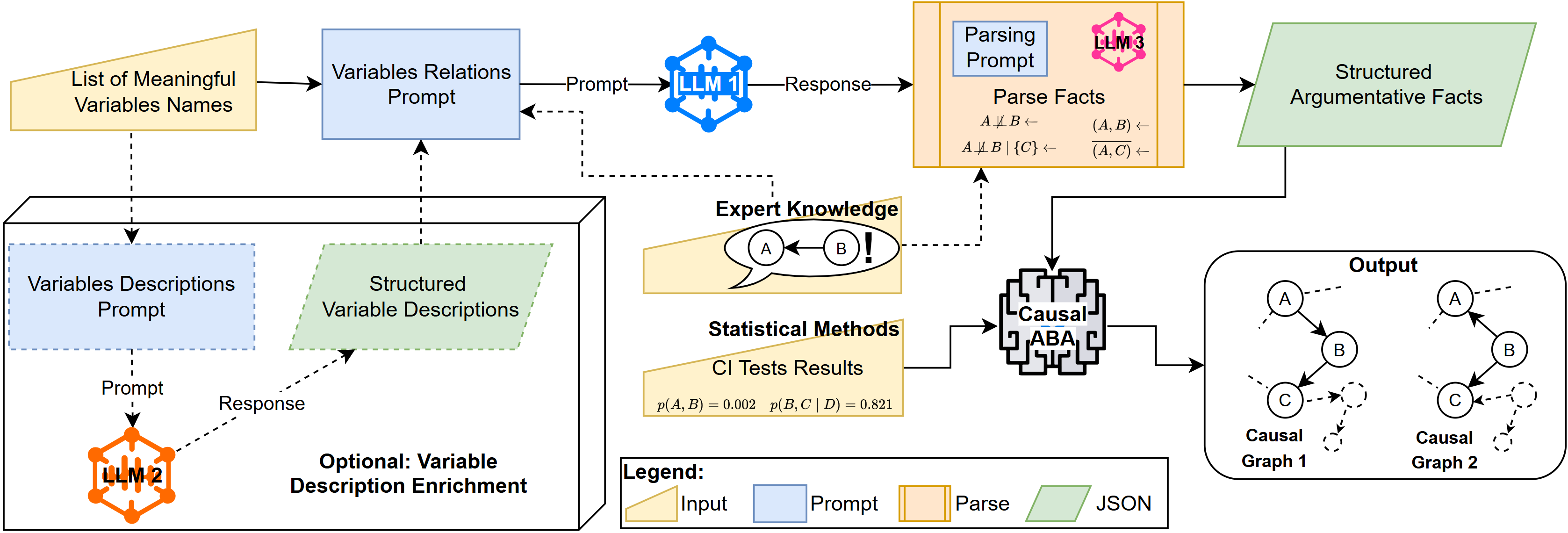

The integration of LLMs into Causal ABA involves a pipeline that elicits causal constraints from variable metadata, such as names and optional descriptions, by prompting LLMs to generate pairwise causal statements.

Figure 1: LLM Integration Pipeline: LLMs generate causal statements from variable metadata, which are structured into assumptions for Causal ABA to infer causal graphs.
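The elicitation step can be sketched as building one prompt per unordered variable pair from the available metadata. This is a minimal illustration, not the paper's implementation: the function name `build_pairwise_prompts`, the prompt wording, and the three answer options are all assumptions, and no LLM is actually called.

```python
from itertools import combinations


def describe(name, description):
    """Render a variable as 'name (description)' when a description exists."""
    return f"{name} ({description})" if description else name


def build_pairwise_prompts(variables):
    """Build one prompt per unordered pair of variables.

    variables: dict mapping variable name -> optional description
               (None or '' when no description is available).
    Returns a dict mapping (a, b) pairs to prompt strings.
    The prompt wording below is hypothetical.
    """
    prompts = {}
    for a, b in combinations(sorted(variables), 2):
        prompts[(a, b)] = (
            f"Variable A: {describe(a, variables[a])}\n"
            f"Variable B: {describe(b, variables[b])}\n"
            "Answer with exactly one of: "
            "'A causes B', 'B causes A', 'no direct causal link'."
        )
    return prompts
```

Each prompt would then be sent to an LLM and the answer parsed into a pairwise causal statement; how the paper words its prompts and parses responses is not stated here.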

The generated constraints, filtered through a consensus mechanism, are introduced into Causal ABA, complementing data-derived conditional independence constraints. This dual-source constraint integration aims to maximize causal inference accuracy by combining the rich prior knowledge encoded in LLMs with empirical data evidence.
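The summary does not specify how the consensus mechanism works. One plausible reading, shown below purely as an assumption, is to query the LLM several times and keep a pairwise statement only when a large enough share of the runs agree on it. The label strings, the `min_agreement` threshold, and the function name are hypothetical.

```python
from collections import Counter


def consensus_filter(samples, min_agreement=0.8):
    """Keep pairwise statements on which repeated LLM runs agree.

    samples: list of dicts, one per LLM run, each mapping a variable
             pair (a, b) to a label such as 'a->b', 'b->a', or 'none'.
    min_agreement: assumed threshold; fraction of all runs that must
                   give the same label for the statement to be kept.
    """
    votes = {}
    for sample in samples:
        for pair, label in sample.items():
            votes.setdefault(pair, Counter())[label] += 1

    kept = {}
    for pair, counter in votes.items():
        label, count = counter.most_common(1)[0]
        # A pair missing from some runs counts against agreement.
        if count / len(samples) >= min_agreement:
            kept[pair] = label
    return kept
```

Statements that survive the filter would be passed to Causal ABA alongside the conditional independence constraints; a stricter threshold trades coverage for fewer incorrect priors.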

Evaluation Protocol and Results

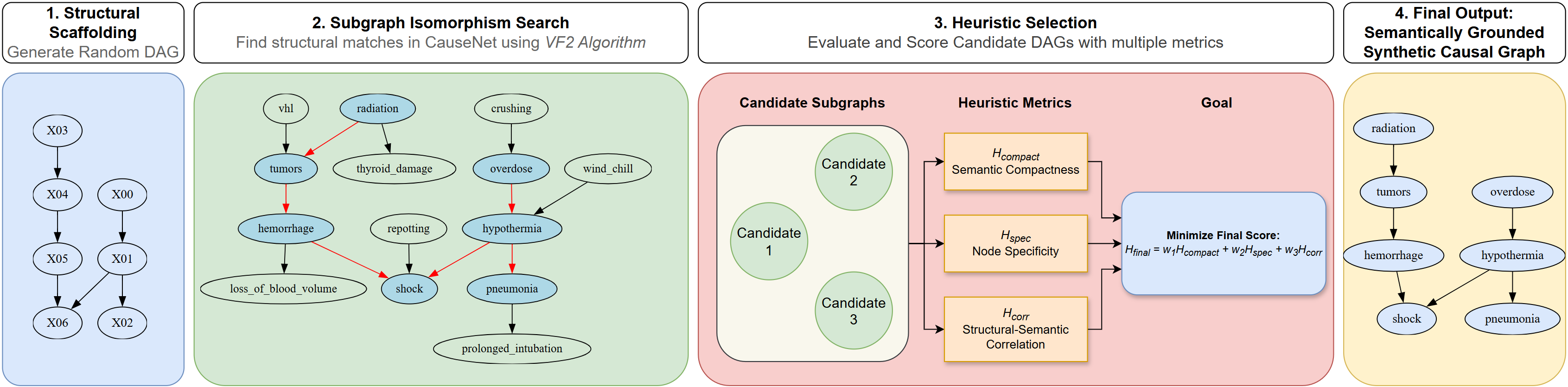

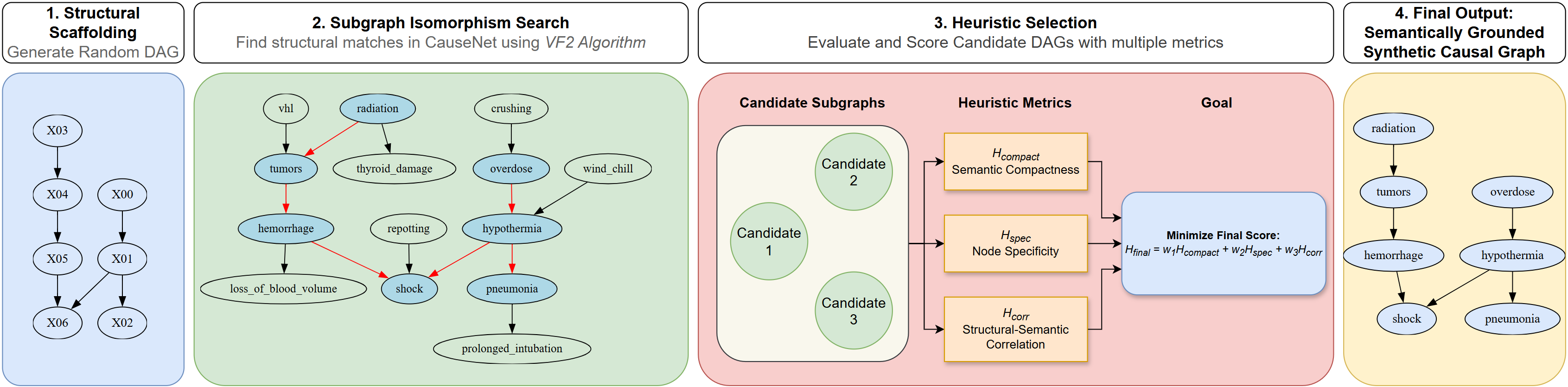

The approach is evaluated on both standard benchmarks and novel synthetic datasets built from CauseNet, a web-extracted knowledge graph. The synthetic protocol is designed to guard against memorization bias: random DAGs are grounded in real-world concepts, and heuristic scoring selects the most semantically coherent subgraphs.

Figure 2: Synthetic Evaluation Protocol: DAGs are grounded in CauseNet, and heuristic scores select the most coherent subgraphs for robust LLM evaluation.
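The heuristic score itself is not described in this summary. As a stand-in, the sketch below scores a candidate grounding by the fraction of DAG edges that also appear as cause-effect pairs in a knowledge graph, and picks the best-scoring candidate. The scoring rule, the function names, and the representation of the knowledge graph as a set of `(cause, effect)` tuples are all assumptions made for illustration.

```python
def coherence_score(dag_edges, assignment, kg_edges):
    """Fraction of DAG edges supported by the knowledge graph.

    dag_edges: iterable of (u, v) node-index pairs of a random DAG.
    assignment: dict mapping each node index to a concept name.
    kg_edges: set of (cause, effect) concept pairs (hypothetical format).
    """
    dag_edges = list(dag_edges)
    if not dag_edges:
        return 0.0
    supported = sum(
        (assignment[u], assignment[v]) in kg_edges for u, v in dag_edges
    )
    return supported / len(dag_edges)


def best_grounding(dag_edges, candidates, kg_edges):
    """Return the candidate assignment with the highest coherence score."""
    return max(candidates, key=lambda a: coherence_score(dag_edges, a, kg_edges))
```

Because the DAG structure is sampled at random and only afterwards mapped to concepts, the resulting graph is unlikely to match any benchmark an LLM may have memorized, while the variable names still carry usable semantics.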

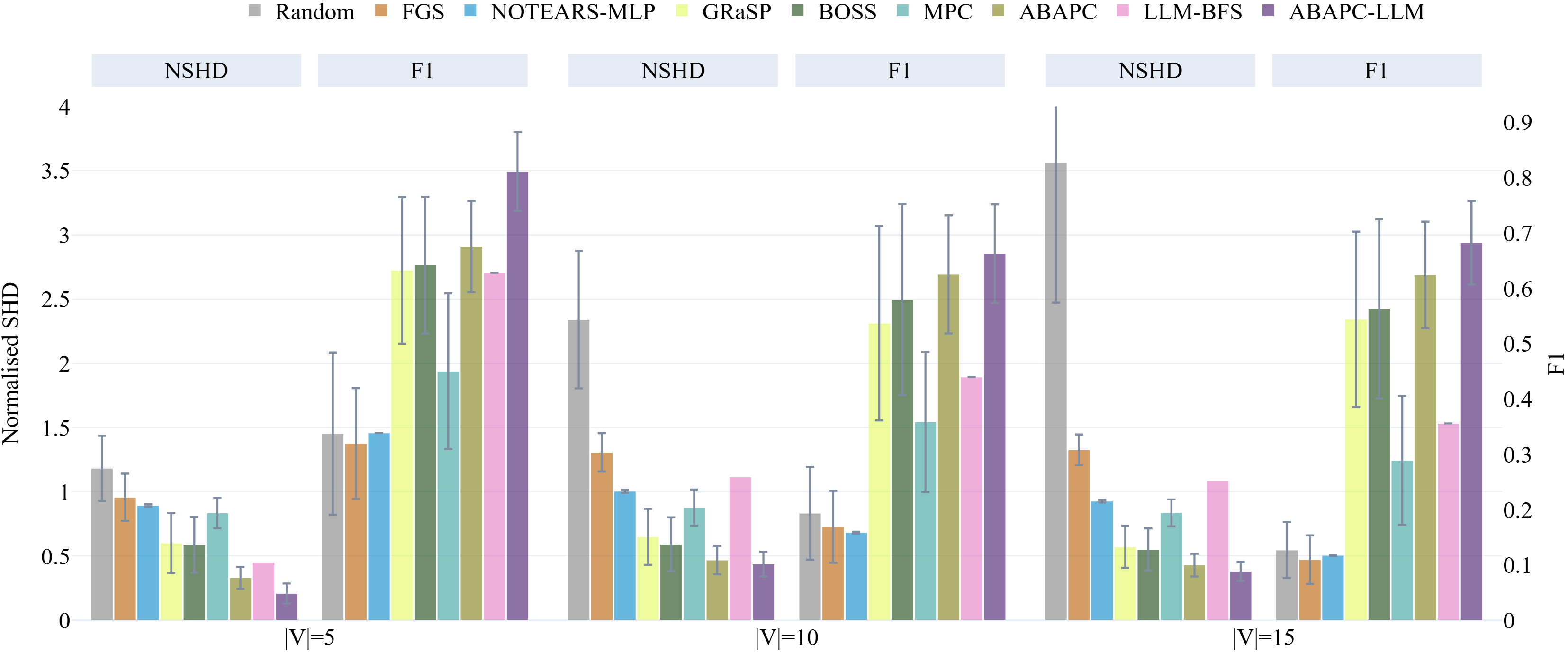

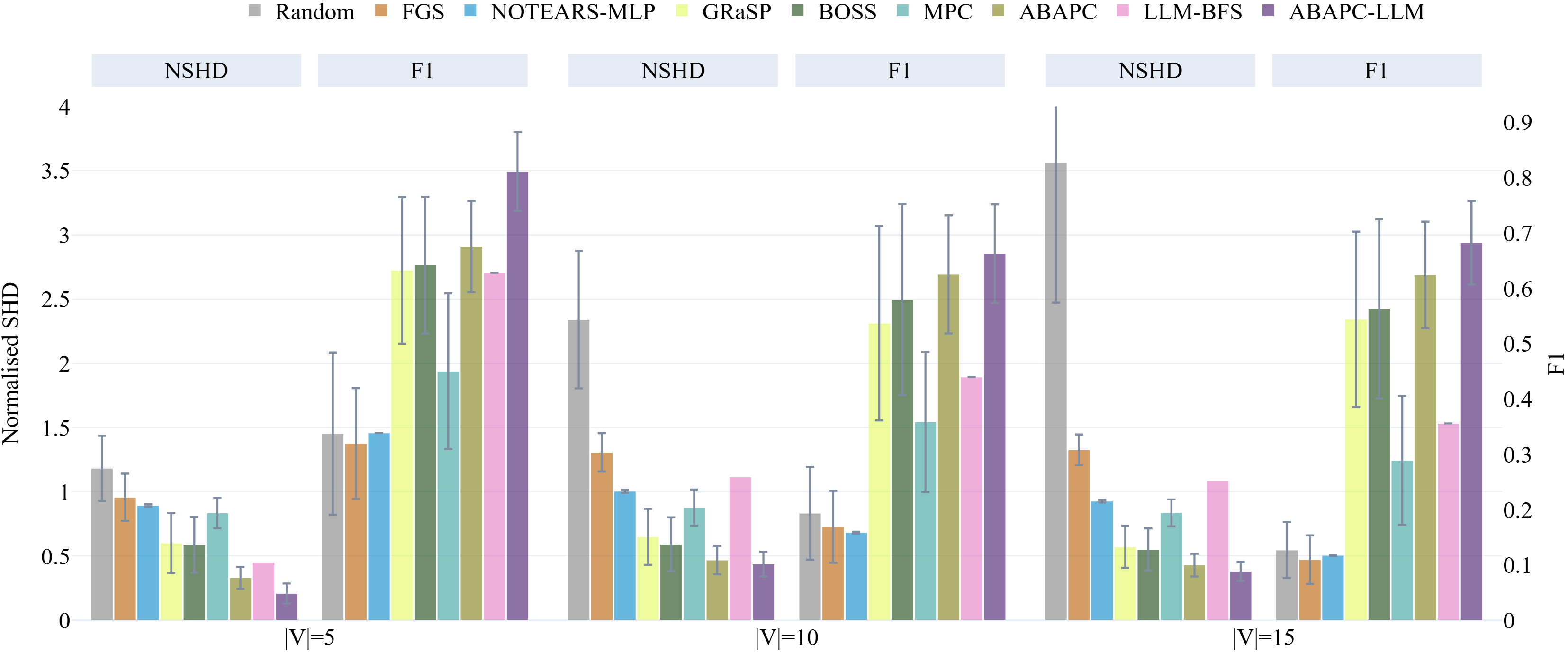

Compared with traditional causal discovery algorithms, the LLM-augmented Causal ABA achieves better normalized Structural Hamming Distance (SHD) and F1-score across the synthetic datasets.

Figure 3: Normalized SHD and F1-score comparisons of LLM-augmented Causal ABA with baselines, indicating superior performance on synthetic datasets.
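For readers unfamiliar with the two metrics, the sketch below computes them for directed graphs given as edge sets. SHD counts missing edges, extra edges, and reversed edges (each reversal counted once). The normalization is an assumption: here SHD is divided by the number of true edges, whereas the paper may normalize differently. F1 is computed over directed edges.

```python
def structural_hamming_distance(true_edges, pred_edges):
    """Count missing, extra, and reversed edges between two DAGs."""
    true_edges, pred_edges = set(true_edges), set(pred_edges)
    true_skeleton = {frozenset(e) for e in true_edges}
    pred_skeleton = {frozenset(e) for e in pred_edges}
    missing = len(true_skeleton - pred_skeleton)
    extra = len(pred_skeleton - true_skeleton)
    # An edge present in both skeletons but with flipped direction.
    reversed_count = sum(
        1 for (u, v) in pred_edges
        if (v, u) in true_edges and (u, v) not in true_edges
    )
    return missing + extra + reversed_count


def normalized_shd(true_edges, pred_edges):
    """SHD divided by the number of true edges (assumed normalization)."""
    n_true = len(set(true_edges))
    if n_true == 0:
        raise ValueError("normalization requires at least one true edge")
    return structural_hamming_distance(true_edges, pred_edges) / n_true


def edge_f1(true_edges, pred_edges):
    """F1-score over directed edges."""
    true_edges, pred_edges = set(true_edges), set(pred_edges)
    tp = len(true_edges & pred_edges)
    if tp == 0:
        return 0.0
    precision = tp / len(pred_edges)
    recall = tp / len(true_edges)
    return 2 * precision * recall / (precision + recall)
```

Lower normalized SHD and higher F1 both indicate a recovered graph closer to the ground truth.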

Interaction of Constraints and Data

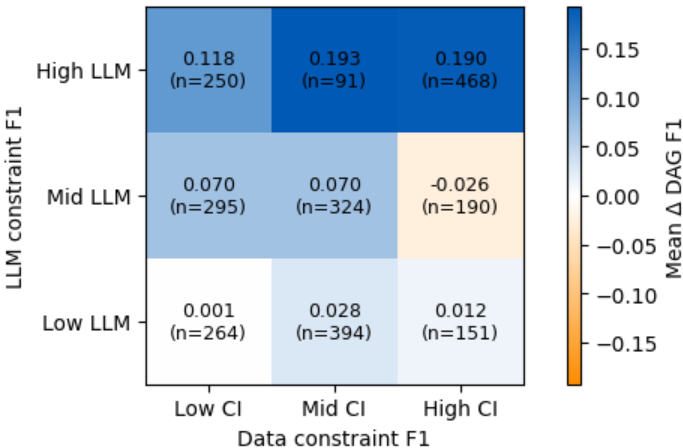

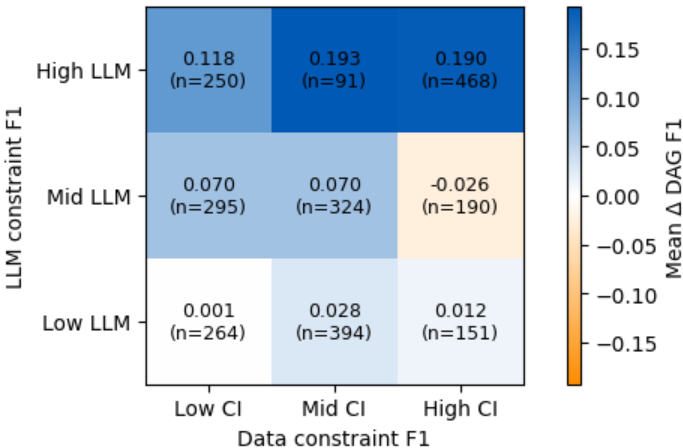

The quality of LLM-derived constraints is pivotal. Heatmaps relate the quality of the LLM-derived and data-derived constraints to the improvement in graph reconstruction accuracy after integration with Causal ABA.

Figure 4: Heatmap showing the relationship between LLM-derived and data-derived constraint quality and graph reconstruction accuracy.

Results indicate that structural improvements are largest when high-quality LLM constraints coincide with strong data-derived evidence. This synergy highlights the value of integrating semantic and statistical signals in a structured way.

Conclusion

The framework is an effective approach to hybrid causal discovery: causal graph inference improves when semantic knowledge from LLMs is carefully integrated with data-derived constraints. Future work could extend the approach to knowledge drawn from scientific texts, explore adaptive confidence calibration for LLM-derived statements, and elicit richer constraints through more nuanced prompt designs.