Agentic Code Reasoning

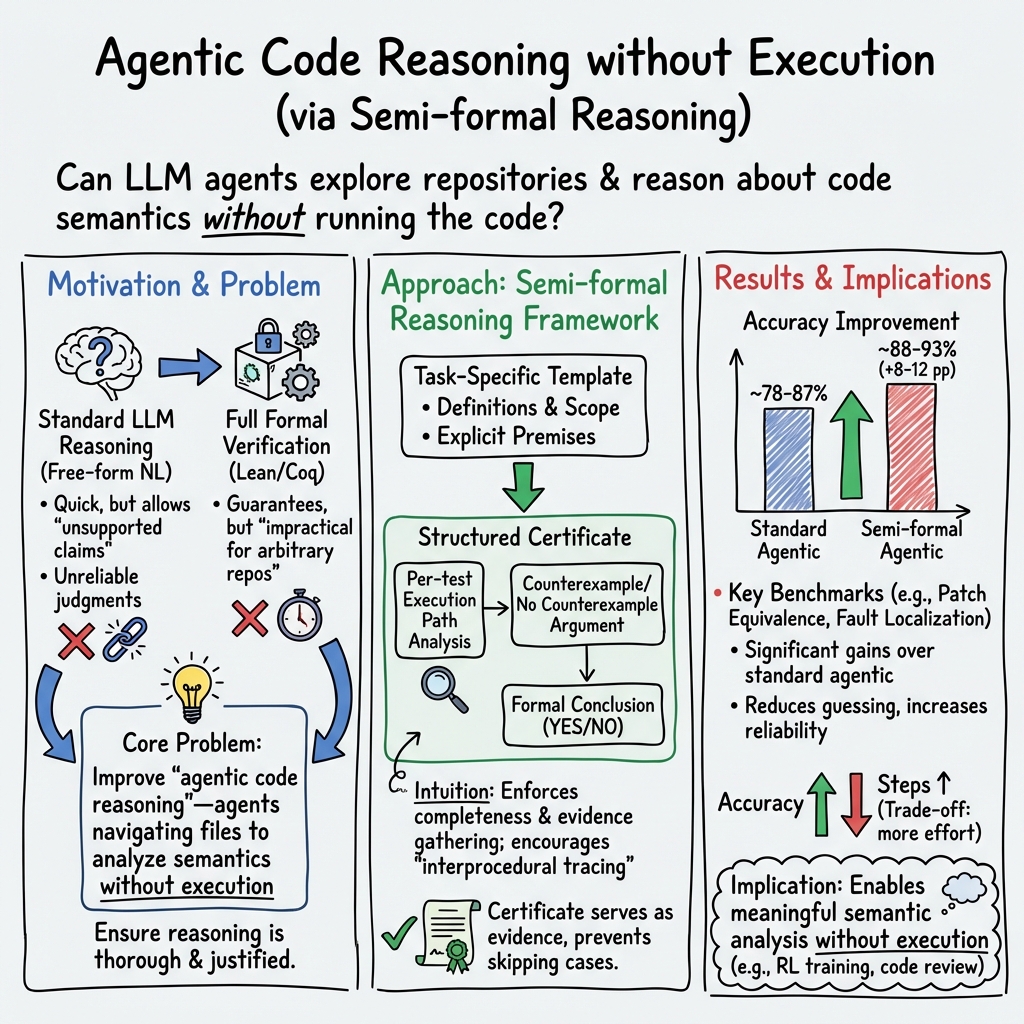

Abstract: Can LLM agents explore codebases and reason about code semantics without executing the code? We study this capability, which we call agentic code reasoning, and introduce semi-formal reasoning: a structured prompting methodology that requires agents to construct explicit premises, trace execution paths, and derive formal conclusions. Unlike unstructured chain-of-thought, semi-formal reasoning acts as a certificate: the agent cannot skip cases or make unsupported claims. We evaluate across three tasks (patch equivalence verification, fault localization, and code question answering) and show that semi-formal reasoning consistently improves accuracy on all of them. For patch equivalence, accuracy improves from 78% to 88% on curated examples and reaches 93% on real-world agent-generated patches, approaching the reliability needed for execution-free RL reward signals. For code question answering on RubberDuckBench (Mohammad et al., 2026), semi-formal reasoning achieves 87% accuracy. For fault localization on Defects4J (Just et al., 2014), semi-formal reasoning improves Top-5 accuracy by 5 percentage points over standard reasoning. These results demonstrate that structured agentic reasoning enables meaningful semantic code analysis without execution, opening practical applications in RL training pipelines, code review, and static program analysis.

Explain it Like I'm 14

What this paper is about

This paper asks a simple question: Can an AI “code detective” understand and compare code by carefully reading it—without running it? The authors call this agentic code reasoning. They show a new way to guide the AI’s thinking, called semi-formal reasoning, that makes the AI write down clear evidence and step-by-step logic before giving an answer. This helps the AI avoid guessing and be more reliable.

What the researchers wanted to find out

In everyday terms, the team wanted to see if an AI could:

- Tell whether two code fixes (patches) behave the same on tests, just by reading the code.

- Find where a bug lives in a big project (fault localization) without running anything.

- Answer deep questions about how code works inside real projects (code question answering).

They also wanted to test whether their structured, evidence-first way of thinking (semi-formal reasoning) works better than the usual “freeform explanation” style.

How they approached the problem

Think of the AI like a careful detective:

- It can open files, search for functions, and follow how data moves through the codebase (like tracking clues across chapters in a long book).

- It cannot run the project or its tests, install dependencies, or peek at commit history.

- Sometimes, it can run tiny standalone Python snippets to check general language behavior (like “How does Python format numbers?”), but not inside the project itself.

The key idea is semi-formal reasoning: the AI fills out a structured “certificate” that requires:

- Stating premises: What files changed, what functions were touched, and what the tests check.

- Tracing execution paths: For each test or behavior, explain which functions run and why.

- Drawing a formal conclusion: Based on the evidence, say “same” or “different” and why.

This structure forces the AI to gather proof instead of making vague claims. It’s like a teacher asking a student to show their work step by step.
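
One way to picture the certificate is as a small record with required fields that can be checked for gaps before an answer is accepted. The sketch below is only an illustration: the names `Certificate`, `TraceEntry`, and `missing_sections` are assumptions made for this example and do not come from the paper's actual template.

```python
from dataclasses import dataclass, field


@dataclass
class TraceEntry:
    """One traced test: which functions run and the predicted outcome."""
    test_name: str
    call_path: list[str]      # functions reached, in order
    predicted_outcome: str    # "pass" or "fail"


@dataclass
class Certificate:
    """Hypothetical container for premises, traces, and a conclusion."""
    premises: list[str] = field(default_factory=list)
    traces: list[TraceEntry] = field(default_factory=list)
    conclusion: str = ""

    def missing_sections(self, required_tests: list[str]) -> list[str]:
        """List what is still missing before the answer should be accepted."""
        missing = []
        if not self.premises:
            missing.append("premises")
        traced = {t.test_name for t in self.traces}
        for name in required_tests:
            if name not in traced:
                missing.append(f"trace:{name}")
        if not self.conclusion:
            missing.append("conclusion")
        return missing


cert = Certificate(
    premises=["Patch A edits utils/dates.py", "test_year checks two-digit years"],
    traces=[TraceEntry("test_year", ["y()", "format()"], "fail")],
)
# One test is untraced and no conclusion has been written yet.
gaps = cert.missing_sections(["test_year", "test_month"])
```

Here `gaps` names the untraced test and the empty conclusion, which is the kind of skipped case the structured format is meant to expose.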

A quick example from the paper:

- Two code fixes look the same at first glance. One uses Python’s built-in `format()` to format a year; the other uses string formatting like `'%02d' % (year % 100)`.

- Standard reasoning assumed both were fine.

- Semi-formal reasoning made the AI search where `format` actually comes from and discovered that in this project, `format` was a different function that expects a date object, not a number, so that version would crash. The patches are not equivalent.
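
The name-shadowing trap in this example can be reproduced in a few lines. This is a minimal sketch, not the project's real code: the module-level `format` helper and the two `two_digit_year_*` functions are hypothetical stand-ins for the project function and the two patches.

```python
import datetime


def format(value, format_string):
    """Hypothetical project helper that shadows Python's built-in format()."""
    # Expects a date-like object; a plain int has no strftime method.
    return value.strftime(format_string)


def two_digit_year_a(d):
    # Patch A: written as if format() were the built-in,
    # but the name resolves to the helper defined above.
    return format(d.year % 100, "02d")


def two_digit_year_b(d):
    # Patch B: plain string formatting, no name lookup involved.
    return "%02d" % (d.year % 100)


d = datetime.date(2007, 3, 1)
try:
    result_a = two_digit_year_a(d)
except AttributeError as exc:
    result_a = f"crash: {type(exc).__name__}"
result_b = two_digit_year_b(d)
```

Read in isolation, both functions look interchangeable. Only tracing where `format` is defined shows that the first one raises an error while the second returns `"07"`.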

What they tested and what they found

The researchers tried their approach on three tasks that appear in real software work.

1) Patch equivalence (Do two patches pass/fail the same tests?)

- Why it matters: If you can check whether two patches behave the same without running tests, you can give fast feedback to training systems and code review tools.

- Result: On a challenging set of patch pairs, accuracy jumped from about 78% (standard reasoning) to about 89% with semi-formal reasoning.

- On real patches with known test specs, the best model reached about 93% accuracy using semi-formal reasoning, better than both quick one-shot answers and simple text-difference scores.
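
The difference between "behaves the same on tests" and "looks similar as text" can be sketched as follows. The function names and the outcome dictionaries are assumptions for illustration; in the paper's setting the per-test outcomes are predicted by reading code, not by running tests. The text score uses `difflib.SequenceMatcher`, which is one simple way to compute a text-difference score.

```python
import difflib


def equivalent_modulo_tests(outcomes_a: dict[str, bool], outcomes_b: dict[str, bool]) -> bool:
    """True when both patches pass and fail exactly the same tests."""
    return outcomes_a == outcomes_b


def text_similarity(patch_a: str, patch_b: str) -> float:
    """Character-level similarity in [0, 1]; says nothing about behavior."""
    return difflib.SequenceMatcher(None, patch_a, patch_b).ratio()


patch_a = "return format(year, '02d')"
patch_b = "return '%02d' % (year % 100)"

# Hypothetical per-test outcomes (True = pass) for each patch.
outcomes_a = {"test_two_digit_year": False, "test_month": True}
outcomes_b = {"test_two_digit_year": True, "test_month": True}

similarity = text_similarity(patch_a, patch_b)
same_behavior = equivalent_modulo_tests(outcomes_a, outcomes_b)
```

A similarity score needs a threshold to become a yes/no verdict, whereas the equivalence check compares outcomes directly; the two can disagree whenever a small textual change flips a test.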

2) Fault localization (Where is the bug?)

- Why it matters: Pinpointing the exact file lines that cause a failing test is a core step in fixing software.

- Result: With semi-formal reasoning and code exploration, Top-5 accuracy (buggy lines are among the top 5 guesses) improved by 5–12 percentage points compared to standard reasoning, reaching around 72% in a small-scale test and showing consistent gains in a larger test.
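
Top-5 accuracy can be computed as sketched below. This uses the simplest "any hit in the first k guesses" rule as an assumption; the paper's exact matching criterion may differ, and the location strings are made up for the example.

```python
def top_k_hit(ranked_predictions: list[str], buggy_locations: set[str], k: int = 5) -> bool:
    """True if any of the first k predictions is a real buggy location."""
    return any(loc in buggy_locations for loc in ranked_predictions[:k])


def top_k_accuracy(cases: list[tuple[list[str], set[str]]], k: int = 5) -> float:
    """Fraction of bugs for which the top-k predictions contain a hit."""
    hits = sum(top_k_hit(preds, truth, k) for preds, truth in cases)
    return hits / len(cases)


# Hypothetical cases: (ranked guesses, true buggy locations).
cases = [
    (["A.java:10", "B.java:4"], {"B.java:4"}),                      # hit at rank 2
    (["A.java:1", "A.java:2", "A.java:3", "A.java:4", "A.java:5",
      "C.java:9"], {"C.java:9"}),                                   # true location is rank 6
]
accuracy_at_5 = top_k_accuracy(cases, k=5)
accuracy_at_6 = top_k_accuracy(cases, k=6)
```

The second case counts as a miss at k=5 and a hit at k=6, which is why the choice of k has to be reported alongside the accuracy.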

3) Code question answering (Answer deep “how does this code behave?” questions)

- Why it matters: Developers often ask nuanced questions about code behavior, edge cases, and how pieces fit together.

- Result: On RubberDuckBench, semi-formal reasoning raised accuracy for one model from about 78% to about 87%. The structure helped prevent “educated guesses” and pushed the AI to cite actual code evidence.

Why this is important:

- The AI can analyze code meaningfully without running it.

- The structured method makes the AI more careful and complete.

- These improvements could reduce the cost and time of verifying code changes in large projects.

Why the results matter

- More reliable AI code reviewers: Because the AI must show evidence, its conclusions are less likely to miss hidden behaviors or assume too much.

- Faster training for coding AIs: If you can verify patch quality without running tests in sandboxes, you can speed up reinforcement learning pipelines and reduce compute costs.

- Practical across many languages and frameworks: The method uses structured prompts (not heavy-duty formal math proofs), so it can flexibly adapt to different projects.

Simple takeaways and future impact

- The main idea: Make AIs “show their work” in a structured way when reading code. This leads to more trustworthy answers.

- The gains: Consistent accuracy boosts across three tough tasks—comparing patches, finding bugs, and answering code questions—without running the code.

- Potential impact:

  - Cheaper, faster tools for code review and testing.

  - Better training signals for AI coding assistants.

  - A new kind of static analysis that uses reasoning templates rather than complex, hand-written rules.

The authors suggest future steps like training models to internalize this structured style, applying it to security tasks (like spotting vulnerabilities), and combining it with light formal checks for even stronger guarantees.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, framed to guide future research.

- Template design sensitivity: No ablation on which semi-formal template components (e.g., premises vs. per-test traces vs. formal conclusion) contribute most to gains; unclear minimal specification that preserves accuracy.

- Scalability of certificate filling: The method requires per-test execution-path tracing; feasibility for large suites (hundreds–thousands of tests) and big monorepos is not quantified or optimized.

- Compute and cost overheads: Step counts are reported, but token usage, latency, and monetary costs of semi-formal reasoning vs. baselines are not measured; no analysis of scalability/cost trade-offs.

- Generalization across LLMs: Results rely on Sonnet-4.5 and Opus-4.5; robustness across open-weight and smaller models, or model families with different reasoning biases, is not evaluated.

- Reproducibility with open models: No evidence that open-weight models (e.g., Llama, Qwen, CodeLlama) achieve similar benefits under the same templates and agent tools.

- Template learning vs. prompting: The paper suggests post-training to internalize structure, but does not test whether fine-tuning or RL on semi-formal outputs reduces overhead or improves reliability.

- Cross-language coverage: Evaluations focus on Python (patch equivalence, QA) and Java (fault localization) with some C++ QA; performance on languages with heavy macros, metaprogramming, advanced type systems, or build systems (e.g., Rust, Go, TypeScript/JS, Scala, Kotlin) is untested.

- Framework and ecosystem breadth: Limited assessment across diverse frameworks (e.g., React, Spring, Rust crates); behavior on repos with code generation, reflection, or heavy metaprogramming is unknown.

- Dependency on test artifacts: Patch equivalence assumes access to F2P/P2P tests or test patches; verification without tests (e.g., issue-only or spec-only settings) is not addressed.

- Equivalence beyond tests: The approach defines equivalence modulo tests; how to reason about semantics not captured by tests or detect specification drift remains open.

- Large test suites without execution: Strategies to prioritize/cluster tests for tracing or to approximate test coverage statically are not explored.

- Ground-truth reliance on LLM-based grading (QA): RubberDuckBench uses multi-model graders; no human adjudication or sensitivity analysis for grader bias, rubric ambiguity, or failure cases.

- Certificate trustworthiness: Certificates are not machine-checkable; there is no automated validation that required sections are complete or faithfully evidence-backed, leaving room for plausible but incorrect reasoning.

- Formal post-verification: Combining semi-formal certificates with automated checkers (e.g., Datalog/SMT) is proposed only as future work; no prototype showing which parts are easily checkable.

- Error mode mitigation: Identified failures (incomplete tracing, third-party library semantics, dismissing subtle differences) are not addressed via concrete retrieval (docs/source), library-aware tools, or reasoning constraints.

- External knowledge integration: The agent cannot fetch online docs; remedies like offline API docs, manpages, or vendor source mirrors to resolve library semantics are not integrated or evaluated.

- Tool-use ablations: No systematic ablation of search tools (grep, ctags, ripgrep, LSP, call-graph builders) or planning strategies to quantify which tools most reduce missed paths.

- Dynamic-semantics blind spots: Non-executable setting cannot capture runtime-dependent behavior (reflection, dynamic imports, concurrency, I/O, environment/version-specific semantics); scope and frequency of such blind spots are not quantified.

- Environment mismatch risk: Agents may run local Python snippets to probe “general language behavior”; no controls for version skew relative to target repo/toolchain which could mislead conclusions.

- Fault localization comparability: Their matching metric deviates from prior work; lack of head-to-head comparisons under standard metrics hampers comparability to existing systems.

- Use of “classes loaded during test execution” (FL): How “loaded classes” are obtained without execution is unclear; if precomputed dynamically, this introduces an execution dependency that is not accounted for in the static-analysis framing.

- Real-world workflow alignment (FL): Excluding stack traces/logs may underrepresent practical debugging scenarios; benefits of semi-formal reasoning when such signals are available remain unknown.

- Dataset selection bias: Curated patch-equivalence set emphasizes high-similarity, hard cases; generalization to naturally distributed patch pairs (including trivial/non-overlapping edits) is unmeasured.

- Contamination and benchmark leakage: While relative comparisons are emphasized, absolute numbers may still be inflated; no contamination-controlled subset or model decontamination is provided.

- Baseline tuning fairness: difflib threshold is tuned on the test set; lack of validation split or cross-validation risks optimistic baseline comparison.

- RL reward noise impact: 93% verifier accuracy implies ~7% label noise; the effect of this noise on RL convergence, stability, and policy quality is not quantified.

- Certificate adherence auditing: No metrics for template adherence (e.g., omissions, boilerplate reuse, unsupported claims) or for detecting “template gaming.”

- Long-context limits: The approach relies on reading code via tools and context windows; no methods for hierarchical indexing, retrieval compression, or summarization to handle very large repos.

- Git/history blind spots: Git tools are disabled; some semantic distinctions depend on historical context or deprecations; impact and potential mitigations are unstudied.

- Multi-file/multi-region bugs: FL performance drops with many regions; methods for aggregating evidence across scattered edits or ranking coverage for N>5 are not proposed.

- Confidence calibration: No analysis of confidence scoring, false positive/negative trade-offs, or how semi-formal structure affects calibration or abstention thresholds.

- Human-in-the-loop usability: No user studies on whether semi-formal certificates are readable, actionable, and trustworthy for developers during code review or debugging.

- Security and safety: Running shell tools in agent loops carries security risks; sandboxing, permissioning, and red-teaming of the verifier workflow are not discussed.

- Release artifacts: Prompts, agents, datasets, and scripts appear in appendices/references; availability and reproducibility (including exact tool versions and configs) are not established.

- Hybridization with static analyzers: No empirical comparison or fusion with classical static analysis (e.g., dataflow, taint, alias analysis) to quantify complementary strengths/weaknesses.

- Generalization to other tasks: While future work is suggested (vulnerability detection, code smells, API misuse), no pilot results show that the semi-formal paradigm extends effectively to these domains.

- Task granularity and planning: The agent plans are not formalized; whether explicit planning (e.g., ReAct + subgoal tracking) improves coverage of codepaths remains unexplored.

- Error-specific interventions: No targeted interventions (e.g., obligating call-graph expansion to a depth, evidence citation checks, or alternate hypothesis validation) are tested to reduce the listed failure modes.
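
To make the reward-noise item above concrete, the arithmetic behind "93% accuracy implies about 7% label noise" can be worked through in a few lines. The batch size, the group size, and the assumption that verifier errors are independent are all illustrative choices, not figures from the paper.

```python
accuracy = 0.93       # verifier accuracy reported for real-world patches
batch_size = 1000     # hypothetical number of rollouts scored
group_size = 8        # hypothetical rollouts compared within one group

# Expected number of rollouts that receive the wrong reward.
expected_wrong = round(batch_size * (1 - accuracy))

# Chance that a group contains at least one wrong reward,
# assuming errors are independent (an assumption, not a measured fact).
p_group_has_error = 1 - accuracy ** group_size
```

Under these assumptions about 70 of every 1000 rewards are wrong, and roughly 44% of groups of eight contain at least one wrong reward, which is why the effect of this noise on training is worth measuring.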

Practical Applications

Practical Applications of “Agentic Code Reasoning”

The paper introduces semi-formal reasoning templates that require LLM agents to enumerate premises, trace code paths, and reach evidence-backed conclusions—enabling high-accuracy, execution-free semantic analysis of code. Below are actionable applications organized by deployment horizon, with sectors, possible tools/products, and key dependencies.

Immediate Applications

These can be piloted today using the paper’s methods (semi-formal certificates, agentic exploration without executing repo code), with reasonable integration effort.

- Execution-free patch equivalence gate for CI/CD

  - Sectors: software across industries (healthcare, finance, energy, robotics, SaaS)

  - What: Pre-screen pull requests or hotfixes to assess whether a proposed patch is semantically equivalent to a known-good fix or meets the behavior specified by fail-to-pass (F2P) tests, without executing the repo.

  - Tools/Products/Workflows: “Patch Equivalence Verifier” GitHub/GitLab bot that attaches a semi-formal certificate (premises, per-test traces, conclusion) to PRs; policy options to auto-flag risky diffs.

  - Assumptions/Dependencies: Highest accuracy (≈93% reported) when F2P test patches are available; requires agentic file search and sufficient model capacity; may underperform when behavior hinges on opaque third-party libraries or dynamic runtime conditions.

- Execution-free RL reward service for training SWE agents

  - Sectors: software tooling, AI/ML platforms

  - What: Replace expensive sandbox/test execution with a verifier API that returns pass/fail plus a certificate as a reward signal for reinforcement learning on code tasks.

  - Tools/Products/Workflows: “Verifier API” microservice; integrated into RL pipelines (e.g., SWE-Gym/R2E-Gym-like setups) to deliver cheaper, scalable feedback.

  - Assumptions/Dependencies: Reliability depends on availability/quality of specifications or F2P tests; careful monitoring for reward hacking; model and step costs vs. CI costs; calibrate for failure modes (e.g., third-party semantics).

- Evidence-backed code review assistant

  - Sectors: software engineering in all domains

  - What: Generate structured change-impact reports that enumerate modified files, traced call paths, implicated tests, and a formal conclusion, reducing reviewer cognitive load.

  - Tools/Products/Workflows: “Semi-formal Review Summarizer” PR bot; IDE extension showing premises/claims tables and links to code lines.

  - Assumptions/Dependencies: Requires repository read access and code search tooling; developers must adopt the certificate format and trust the output; latency/step budget management.

- Fault localization copilot for failing tests

  - Sectors: software, SRE/production engineering

  - What: Suggest Top-N likely buggy regions with code-path evidence when a test fails (without stack traces or executing repo code).

  - Tools/Products/Workflows: IDE plugin or CI comment bot that outputs the ranked list plus supporting traces; integrates with ticketing systems.

  - Assumptions/Dependencies: Needs access to failing test code and relevant sources; performance varies with model capability and project complexity; some bugs (indirection, multi-file, domain-heavy) remain challenging.

- Repository Q&A and onboarding assistant (“rubber duck” with receipts)

  - Sectors: education, internal engineering enablement

  - What: Interactive codebase Q&A that returns answers with function-trace tables, data-flow analysis, and citations to code locations.

  - Tools/Products/Workflows: Chat interface bound to repo; “Ask the Repo” portal for new hires and cross-team support.

  - Assumptions/Dependencies: Requires robust file search and navigation; accuracy can vary with opaque dependencies; best for codebases with clear structure and idioms.

- Change-impact summaries for regression risk assessment

  - Sectors: regulated industries (healthcare, finance), embedded/edge systems

  - What: Identify likely affected pass-to-pass (P2P) tests and modules from a patch by tracing interprocedural paths and enumerating potential behavior changes.

  - Tools/Products/Workflows: “Risk Lens” report attached to PRs; nightly change-impact dashboards.

  - Assumptions/Dependencies: Effectiveness improves with comprehensive tests and coherent module boundaries; static-only view may miss runtime config, reflection, or dynamic code loading.

- Open-source PR triage at scale

  - Sectors: open-source maintainers, foundations, large platform teams

  - What: Batch-score incoming PRs for equivalence to existing fixes or spec conformance, attaching semi-formal certificates to prioritize maintainer attention.

  - Tools/Products/Workflows: Maintainer bot with queue prioritization and auto-feedback comments.

  - Assumptions/Dependencies: Compute budget for multi-step reasoning; repository conventions vary widely; best where tests/specs are present.

- Lightweight documentation enrichment

  - Sectors: software documentation, developer experience

  - What: Auto-generate function trace tables and data-flow sketches for complex modules as living docs, leveraging the semi-formal template structure.

  - Tools/Products/Workflows: “Trace-to-Docs” pipeline that outputs markdown artifacts linked to source lines.

  - Assumptions/Dependencies: Ongoing maintenance to sync with code changes; not a substitute for design-level docs; static view may omit dynamic behavior.

- Duplicate/near-duplicate patch detection in bug bounty/internal fixes

  - Sectors: security response, internal platform teams

  - What: Identify when two independently submitted patches are semantically equivalent modulo tests to avoid duplicate payouts or redundant reviews.

  - Tools/Products/Workflows: “Semantic Deduper” service that clusters patches by semi-formal equivalence.

  - Assumptions/Dependencies: Needs common tests/spec; limited by model’s path coverage and project size.
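
For the RL reward service described in the list above, the step from a verifier verdict to a reward could look like the sketch below. Everything here is hypothetical: `VerifierResult`, its fields, and the choice to return `None` (meaning "fall back to real test execution") when the certificate is incomplete are design assumptions for illustration, not an API described in the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VerifierResult:
    """Hypothetical response shape for an execution-free verifier."""
    verdict: str                # "equivalent" or "different" vs. the reference patch
    certificate_complete: bool  # were all required certificate sections filled in?


def reward_from_result(result: VerifierResult) -> Optional[float]:
    """Map a verdict to a scalar reward, or None to request real test execution."""
    if not result.certificate_complete:
        # Assumed policy: do not trust a verdict without a full certificate.
        return None
    return 1.0 if result.verdict == "equivalent" else 0.0


results = [
    VerifierResult("equivalent", True),
    VerifierResult("different", True),
    VerifierResult("equivalent", False),
]
rewards = [reward_from_result(r) for r in results]
```

Routing incomplete certificates to a slower but trusted check is one way to limit reward hacking, at the cost of some of the compute savings.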

Long-Term Applications

These require additional research, integration with formal/analytical tooling, scaling to larger or safety-critical contexts, or policy standardization.

- Safety-critical software assurance without executing untrusted code

  - Sectors: healthcare (EHR, devices), finance (trading/risk), energy (grid/SCADA), aerospace/automotive, robotics

  - What: Use semi-formal certificates as human-auditable evidence for patch correctness before deployment to safety/mission-critical systems.

  - Tools/Products/Workflows: “Assurance Workbench” combining certificates with checklists; integration into quality gates and change-control boards.

  - Assumptions/Dependencies: Requires stronger guarantees (likely hybrid with static analysis, symbolic execution, or model checking); regulator acceptance and standardization needed.

- Semi-formal security triage (vulnerability detection, API misuse, secure-by-default checks)

  - Sectors: security engineering across industries

  - What: Templates specialized for common vulnerability classes (e.g., auth bypass, deserialization, injection) to triage risk without running code.

  - Tools/Products/Workflows: “Security Certificate Generator” feeding into SIEM/ASOC workflows; developer-facing pre-commit checks.

  - Assumptions/Dependencies: Must be tuned per language/framework; high precision needed to avoid alert fatigue; false positives likely without deeper semantic or dynamic corroboration.

- Hybrid verification stacks (LLM + formal/symbolic engines)

  - Sectors: high-assurance software, compilers, critical infrastructure

  - What: Convert semi-formal premises/traces into machine-checkable obligations verified by SMT solvers, symbolic execution, or type systems.

  - Tools/Products/Workflows: “Certificate-to-Checks” pipeline that compiles claims into constraints; CI that accepts only verified certificates.

  - Assumptions/Dependencies: Nontrivial engineering to map natural-language traces to formal artifacts; language semantics coverage; performance/scalability.

- Language-agnostic, prompt-driven static analysis

  - Sectors: multi-language platforms, monorepos

  - What: Generalize task-specific templates (equivalence, fault localization, QA) into a reusable, cross-language analysis layer that reduces reliance on bespoke static analyzers.

  - Tools/Products/Workflows: “Prompted Analyzer” framework with template library and policy packs.

  - Assumptions/Dependencies: Coverage gaps versus specialized tools; maintenance of template library; handling of dynamic features and metaprogramming.

- Automated library upgrade/migration impact assessment

  - Sectors: enterprise software, cloud platforms

  - What: Compare pre/post-upgrade behavior semi-formally to flag potential incompatibilities and enumerate likely failing tests before running full CI.

  - Tools/Products/Workflows: “Upgrade Risk Profiler” for dependency management pipelines.

  - Assumptions/Dependencies: Requires robust interprocedural tracing across APIs; opaque third-party code limits depth; best used as a pre-screen before execution.

- Supply-chain and governance policy: standardize semi-formal certificates

  - Sectors: public sector procurement, regulated industries, open-source foundations

  - What: Require an evidence-backed “change certificate” (premises, traces, conclusions) for vendor updates, critical patches, or open-source intake.

  - Tools/Products/Workflows: Policy templates; SBOM/attestation extensions embedding certificates.

  - Assumptions/Dependencies: Consensus on schemas and validation criteria; auditor training; privacy constraints on code sharing.

- Repository knowledge graphs built from certificates

  - Sectors: platform engineering, analytics

  - What: Aggregate function traces and data-flow relations into a code intelligence graph to power smarter search, impact analysis, and refactoring planning.

  - Tools/Products/Workflows: “Certificate Indexer” and graph store; IDE integrations for dependency insights.

  - Assumptions/Dependencies: Data freshness and comprehensiveness; de-duplication and confidence weighting across runs/models.

- Curriculum and grading assistants for code reasoning

  - Sectors: education, upskilling programs

  - What: Teach code comprehension using semi-formal templates; auto-grade student certificates against rubrics.

  - Tools/Products/Workflows: LMS plugins; interactive labs with repository exploration.

  - Assumptions/Dependencies: Rubric quality and bias; controlled environments; model availability.

- Continuous equivalence monitoring for monorepos

  - Sectors: big-tech monorepos, large enterprises

  - What: Nightly scans to detect semantically equivalent code changes (or unintended behavior shifts) across services/modules.

  - Tools/Products/Workflows: “Behavior Drift Watcher” with alerting and dashboards.

  - Assumptions/Dependencies: Compute cost for large repos; sampling strategies; coordination with test owners.

- Training specialized verifiers and agents fine-tuned for semi-formal templates

  - Sectors: AI tooling vendors, research labs

  - What: Post-train models to internalize the semi-formal structure for higher accuracy and lower prompt overhead.

  - Tools/Products/Workflows: Fine-tuning pipelines; benchmark suites; continuous evaluation.

  - Assumptions/Dependencies: Access to high-quality supervised traces/certificates; risk of overfitting to template artifacts; domain adaptation per language/ecosystem.
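
The repository knowledge graph idea from the list above can be sketched with a plain call graph: once traced call edges are collected, impact analysis becomes a reverse reachability query. The edge list and function names below are invented for illustration, and a real graph would also need data-flow edges and confidence weights.

```python
from collections import defaultdict, deque


def build_reverse_graph(call_edges):
    """Map each callee to the set of functions that call it."""
    callers = defaultdict(set)
    for caller, callee in call_edges:
        callers[callee].add(caller)
    return callers


def impacted_by(changed, call_edges):
    """All functions that can reach `changed` through calls (breadth-first)."""
    callers = build_reverse_graph(call_edges)
    seen, queue = set(), deque([changed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen


# Hypothetical (caller, callee) edges extracted from certificates.
edges = [
    ("view", "render_date"),
    ("render_date", "format_year"),
    ("test_year", "view"),
    ("cli", "parse_args"),
]
impacted = impacted_by("format_year", edges)
```

A change to `format_year` reaches `render_date`, `view`, and `test_year`, while `cli` is unaffected; the same query could shortlist which tests to trace first.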

Cross-cutting assumptions and risks

- Model capability matters (accuracy varied by model); large repositories may exceed context unless exploration is efficient.

- Static-only analysis can miss runtime-dependent behavior, reflection, or configuration; third-party library semantics are a recurring failure mode.

- Certificates improve transparency but are not machine-checked proofs; high-assurance use cases need hybridization with formal methods.

- Data access, privacy, and IP constraints may limit repository exploration in some organizations.

- Operational costs (tokens, steps, latency) must be balanced against CI/test infrastructure costs and team SLAs.

Glossary

- Agent-computer interface: An interactive interface that lets an AI agent manipulate and inspect codebases through specialized commands. "SWE-agent~\cite{yang2024sweagent} introduced an agent-computer interface that enables LLMs to interact with codebases through specialized commands"

- Agentic code reasoning: An agent’s iterative, evidence-seeking analysis of codebases without running code, to infer semantics across files and dependencies. "we call agentic code reasoning: an agent's ability to navigate files, trace dependencies, and gather context iteratively to perform deep semantic analysis without executing code."

- Agentic exploration: Multi-step repository navigation and evidence gathering (as opposed to single-shot reasoning) during code analysis tasks. "Agentic exploration with semi-formal reasoning achieves the best overall accuracy at 72.1% Top-5, outperforming single-shot semi-formal (63.9%)."

- Agentic verification: Verification where the agent can explore the repository iteratively (without executing it) to substantiate its conclusions. "Our work focuses on agentic verification using a minimal SWE-agent~\cite{yang2024sweagent} setup with access to bash tools."

- Chain-of-thought: An unstructured, free-form reasoning style where intermediate steps are described in natural language. "Unlike unstructured chain-of-thought, semi-formal reasoning acts as a certificate:"

- Coq: A proof assistant and formal language for machine-checked mathematical proofs and program verification. "formal languages like Lean~\cite{ye2025verinabenchmarkingverifiablecode, thakur2025clevercuratedbenchmarkformally}, Coq~\cite{kasibatla2025cobblestonedivideandconquerapproachautomating} or Datalog~\cite{sistla2025verifiedcodereasoningllms}"

- Context window: The maximum text span a model can process at once; code and tests must fit within it for single-shot evaluation. "We first evaluate on 50 bugs from Defects4J where all relevant source files fit within the context window, enabling comparison between single-shot (all code provided upfront) and agentic (iterative file exploration) modes."

- Datalog: A declarative logic programming language used for fact-based reasoning and static analysis verification. "formal languages like Lean~\cite{ye2025verinabenchmarkingverifiablecode, thakur2025clevercuratedbenchmarkformally}, Coq~\cite{kasibatla2025cobblestonedivideandconquerapproachautomating} or Datalog~\cite{sistla2025verifiedcodereasoningllms}"

- Data flow analysis: Tracing how values propagate through code to substantiate behavioral claims. "the template requires a function trace table, data flow analysis, and semantic properties with explicit evidence."

- Defects4J: A benchmark suite of real-world Java bugs used for fault localization and repair evaluations. "For fault localization on Defects4J~\cite{just2014defects4j}, semi-formal reasoning improves Top-5 accuracy by 5 percentage points over standard reasoning."

- difflib: A Python library for computing textual differences and similarity scores, sometimes used as a heuristic verifier. "Recent work from SWE-RL~\cite{wei2025swerl} used the Python difflib library to compute a similarity score as the RL reward."

- Equivalence checking: Formal comparison to determine if two programs exhibit the same behavior under all inputs. "Traditional approaches rely on formal methods such as translation validation~\cite{pnueli1998translation} and equivalence checking~\cite{necula2000translation}."

- Equivalence modulo tests: Two patches are equivalent if they produce identical pass/fail results on the defined test suite. "Two patches are equivalent modulo tests if and only if executing the repository's test suite (F2P ∪ P2P) produces identical pass/fail outcomes for both patches."

- Execution-free verification: Assessing correctness or equivalence without running the target code or tests. "Recent work has explored execution-free verification for code agents."

- Fail-to-Pass (F2P) Tests: Tests added with a fix that fail before the patch and pass after; they validate the fix. "Fail-to-Pass (F2P) Tests: Tests introduced alongside a bug fix that fail before the patch and pass after. These validate that the fix addresses the reported issue."

- Fault localization: The task of identifying the exact buggy code lines that cause observed test failures. "For fault localization, the task is to identify the exact lines of buggy code given only the failing test, without access to stack traces, error messages, or execution information."

- Formal verification: Proving program properties using mathematical logic and proof assistants. "formal verification approaches translate code or reasoning into formal languages like Lean~\cite{ye2025verinabenchmarkingverifiablecode, thakur2025clevercuratedbenchmarkformally}, Coq~\cite{kasibatla2025cobblestonedivideandconquerapproachautomating} or Datalog~\cite{sistla2025verifiedcodereasoningllms}, enabling automated proof checking."

- Function trace table: A structured record listing functions examined, their locations, and verified behaviors during analysis. "the template requires a function trace table, data flow analysis, and semantic properties with explicit evidence."

- Interprocedural reasoning: Reasoning across function and module boundaries by following call chains and dependencies. "The structured format naturally encourages interprocedural reasoning, as tracing program paths requires the agent to follow function calls rather than guess their behavior."

- Lean: A theorem prover and formal language for constructing machine-checked proofs. "formal languages like Lean~\cite{ye2025verinabenchmarkingverifiablecode, thakur2025clevercuratedbenchmarkformally}, Coq~\cite{kasibatla2025cobblestonedivideandconquerapproachautomating} or Datalog~\cite{sistla2025verifiedcodereasoningllms}"

- Multi-model LLM grading: Using multiple LLMs to grade free-form answers against rubrics to increase evaluation reliability. "we use multi-model LLM grading with rubric-based evaluation."

- Pass-to-Pass (P2P) / Regression Tests: Pre-existing tests that must continue to pass after a patch to prevent regressions. "Pass-to-Pass (P2P) / Regression Tests: Existing tests in the repository that must continue passing after the patch is applied."

- Patch equivalence: Determining whether two patches yield the same test outcomes under the shared specification. "Our primary focus is patch equivalence verification: given two patches addressing the same specification, do they produce the same test outcomes?"

- Post-hoc verification: Validating or checking the correctness of an LLM’s reasoning after it has produced an explanation. "A complementary line of work focuses on post-hoc verification of LLM reasoning, translating LLM responses into Datalog facts and using static analysis to verify reasoning steps"

- Program equivalence: The property that two programs behave identically for all valid inputs. "Program equivalence is a fundamental problem in computer science, known to be undecidable in the general case"

- Reinforcement learning (RL) training pipelines: Training setups where agents learn via rewards; here, verification can supply execution-free feedback. "enabling execution-free feedback for RL training pipelines."

- Reward models: Learned models that estimate reward signals (e.g., test outcomes) without executing code. "SWE-RM~\cite{shum2025swerm} trains reward models to approximate test outcomes,"

- RubberDuckBench: A benchmark of repository-level code understanding questions with expert rubrics for evaluation. "For code question answering on RubberDuckBench~\cite{rubberduckbench}, semi-formal reasoning achieves 87% accuracy."

- Semi-formal certificate template: A structured, task-specific template that agents must fill with premises, traces, and conclusions. "Semi-formal Certificate Template (patch equivalence)"

- Semi-formal reasoning: Structured natural-language reasoning requiring explicit premises, execution traces, and formal conclusions. "We introduce semi-formal reasoning, a general approach that bridges this gap."

- Single-shot verification: One-pass reasoning from a static code snapshot without interactive exploration. "We distinguish between single-shot verification, where the model reasons from a static code snapshot, and agentic verification, where the model can actively explore the repository."

- Static program analysis: Analyzing code behavior and properties without execution, using code structure and semantics. "opening practical applications in RL training pipelines, code review, and static program analysis."

- Symbolic execution: Systematically exploring program paths using symbolic inputs to reason about behaviors and constraints. "Combining LLM-based reasoning with lightweight formal methods or symbolic execution could provide stronger guarantees"

- SV-Comp: An annual competition benchmarking software verification tools. "ranking competitively with specialized tools on SV-Comp benchmarks,"

- SWE-agent: A software engineering agent framework enabling LLMs to interact with codebases via specialized commands. "SWE-agent~\cite{yang2024sweagent} introduced an agent-computer interface that enables LLMs to interact with codebases through specialized commands"

- SWE-bench-Verified: A dataset of verified SWE-bench instances with patches and test results used for evaluation. "We source patches from SWE-bench-Verified, drawing on the community-contributed collection of agent traces, generated patches, and test execution results."

- Top-N accuracy: The fraction of cases where the correct answer appears among the top N ranked predictions; in this paper, a bug counts as a hit only if every ground-truth buggy line is covered. "Evaluation measures Top-N accuracy: the percentage of bugs where all ground-truth buggy lines are covered within the top N predictions."

- Translation validation: Checking that two program versions (e.g., before and after transformation) are semantically equivalent. "Traditional approaches rely on formal methods such as translation validation~\cite{pnueli1998translation} and equivalence checking~\cite{necula2000translation}."

- Undecidable: A problem for which no algorithm can always determine the correct answer for all inputs. "True semantic equivalence (determining whether two programs produce identical behavior for all valid inputs) is undecidable in the general case."
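
The "equivalence modulo tests" entry above can be made concrete with a small sketch. It assumes test results are already available as a mapping from test name to pass/fail; the names `TestOutcomes` and `equivalent_modulo_tests` and the example test names are hypothetical, not taken from the paper. It illustrates the definition only, not the paper's execution-free method for predicting these outcomes.

```python
from typing import Dict, Iterable

# Hypothetical representation: test name -> True (pass) / False (fail).
TestOutcomes = Dict[str, bool]


def equivalent_modulo_tests(
    outcomes_a: TestOutcomes,
    outcomes_b: TestOutcomes,
    f2p: Iterable[str],
    p2p: Iterable[str],
) -> bool:
    """Return True if both patches have identical pass/fail outcomes
    on every test in the union of the F2P and P2P sets."""
    suite = set(f2p) | set(p2p)
    # A missing test raises KeyError: outcomes must cover the whole suite.
    return all(outcomes_a[t] == outcomes_b[t] for t in suite)


# Illustrative data only (assumed test names).
patch_a = {"test_fix": True, "test_existing": True}
patch_b = {"test_fix": True, "test_existing": False}  # breaks a regression test
patch_c = {"test_fix": True, "test_existing": True}

print(equivalent_modulo_tests(patch_a, patch_b, ["test_fix"], ["test_existing"]))  # False
print(equivalent_modulo_tests(patch_a, patch_c, ["test_fix"], ["test_existing"]))  # True
```

Note that under this definition two patches that fail the same tests are also equivalent: the check compares outcomes, not correctness.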

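The Top-N accuracy definition quoted in the glossary (all ground-truth buggy lines must be covered within the top N predictions) can be sketched as follows. This is a minimal illustration under assumptions: predictions are modelled as a ranked list of individual `(file, line)` pairs, which may differ from the granularity the paper actually uses, and the name `top_n_accuracy` and the example file names are hypothetical.

```python
from typing import List, Set, Tuple

# Hypothetical representation of a code location: (file path, line number).
Line = Tuple[str, int]


def top_n_accuracy(
    predictions: List[List[Line]],
    ground_truth: List[Set[Line]],
    n: int,
) -> float:
    """Percentage of bugs whose ground-truth lines are ALL contained
    in the first n ranked predictions for that bug."""
    if len(predictions) != len(ground_truth):
        raise ValueError("need one prediction list per bug")
    if not predictions:
        return 0.0
    hits = sum(
        1
        for ranked, truth in zip(predictions, ground_truth)
        if truth <= set(ranked[:n])  # subset test: every buggy line is covered
    )
    return 100.0 * hits / len(predictions)


# Illustrative data only: two bugs with ranked line predictions.
preds = [
    [("A.java", 3), ("A.java", 10)],
    [("B.java", 5), ("C.java", 1), ("B.java", 6)],
]
truth = [
    {("A.java", 10)},
    {("B.java", 5), ("B.java", 6)},
]

print(top_n_accuracy(preds, truth, 1))  # 0.0
print(top_n_accuracy(preds, truth, 2))  # 50.0
print(top_n_accuracy(preds, truth, 3))  # 100.0
```

The subset test is what makes this stricter than the usual "any correct item in the top N" reading: the second bug only counts at N = 3, when both of its buggy lines have appeared.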