R3GW: Relightable 3D Gaussians for Outdoor Scenes in the Wild

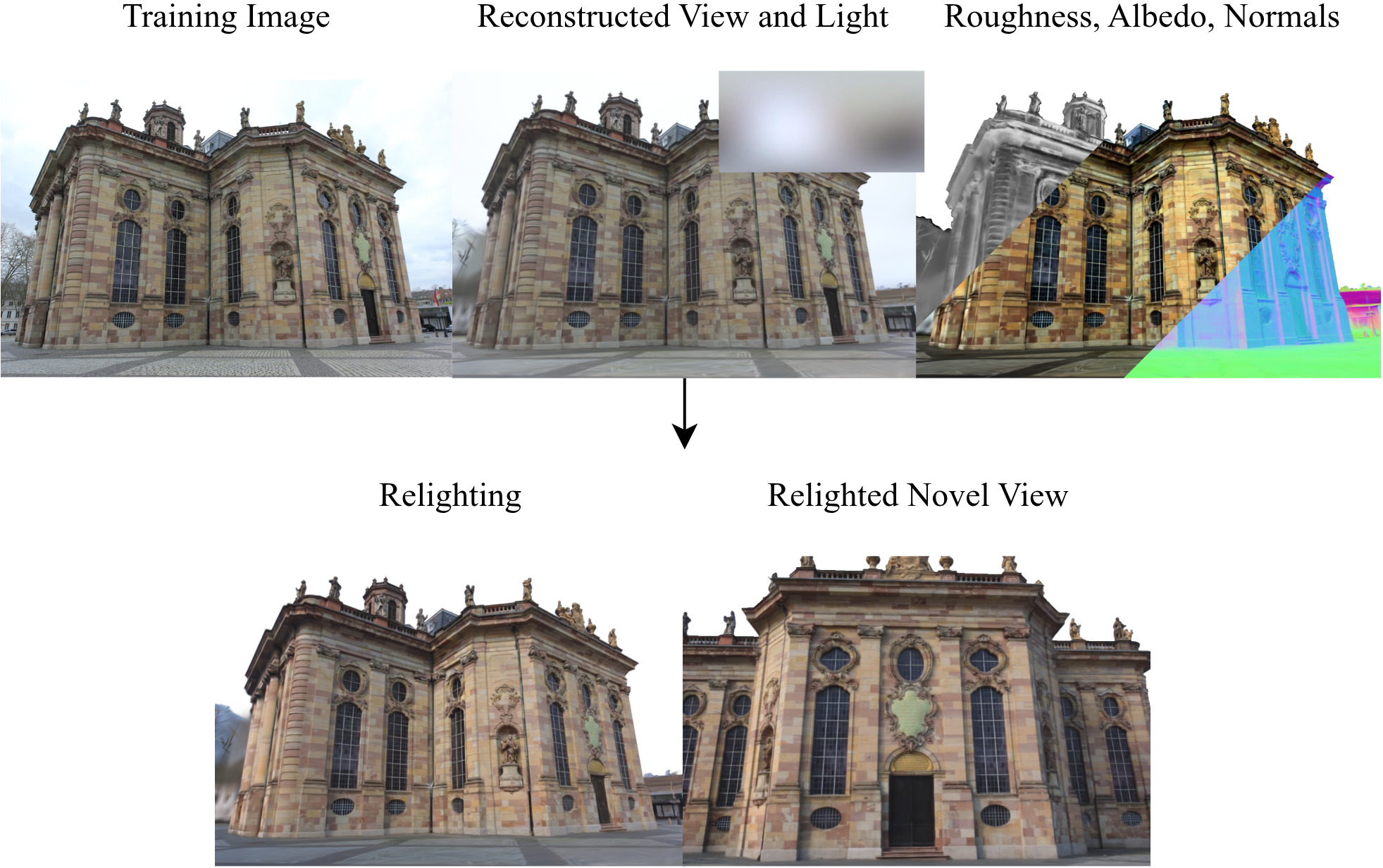

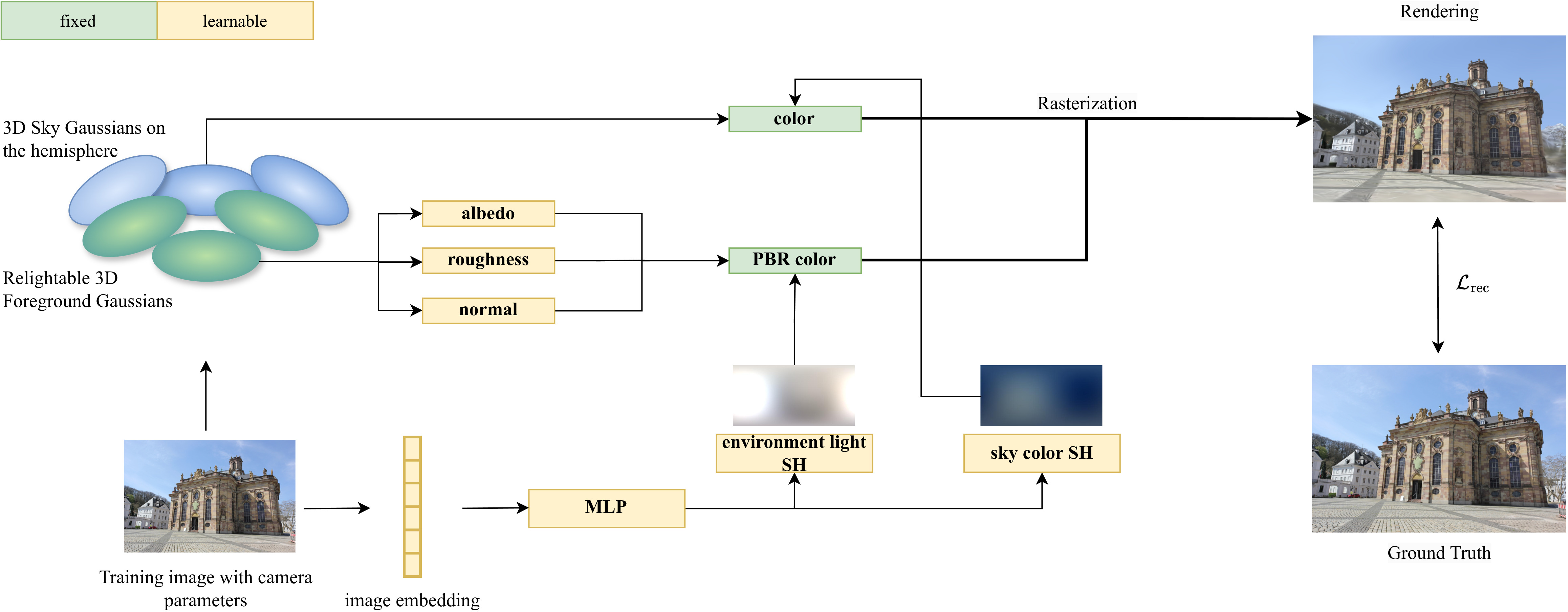

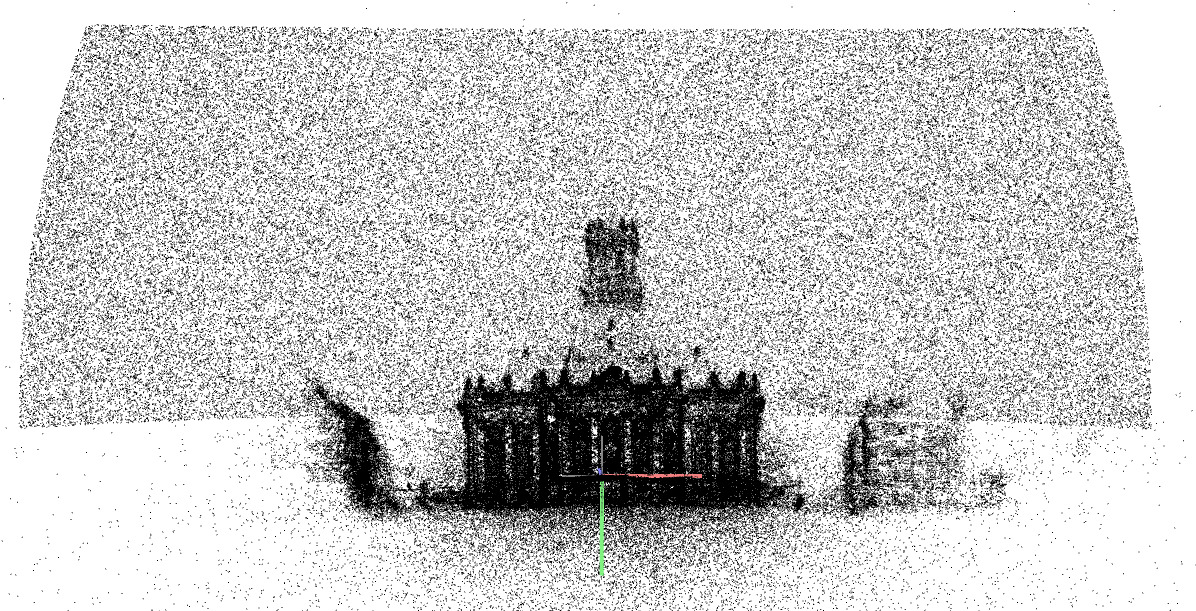

Abstract: 3D Gaussian Splatting (3DGS) has established itself as a leading technique for 3D reconstruction and novel view synthesis of static scenes, achieving outstanding rendering quality and fast training. However, the method does not explicitly model the scene illumination, making it unsuitable for relighting tasks. Furthermore, 3DGS struggles to reconstruct scenes captured in the wild by unconstrained photo collections featuring changing lighting conditions. In this paper, we present R3GW, a novel method that learns a relightable 3DGS representation of an outdoor scene captured in the wild. Our approach separates the scene into a relightable foreground and a non-reflective background (the sky), using two distinct sets of Gaussians. R3GW models view-dependent lighting effects in the foreground reflections by combining Physically Based Rendering with the 3DGS scene representation in a varying illumination setting. We evaluate our method quantitatively and qualitatively on the NeRF-OSR dataset, offering state-of-the-art performance and enhanced support for physically-based relighting of unconstrained scenes. Our method synthesizes photorealistic novel views under arbitrary illumination conditions. Additionally, our representation of the sky mitigates depth reconstruction artifacts, improving rendering quality at the sky-foreground boundary.

Explain it Like I'm 14

R3GW: Relightable 3D Gaussians for Outdoor Scenes in the Wild — A simple explanation

What is this paper about?

This paper shows a new way to rebuild a 3D scene from a bunch of everyday photos and then change the lighting in that scene as if you were turning the sun, sky, or lamps on and off. The method is called R3GW. It works especially well for outdoor photos taken at different times and in different weather, where the lighting keeps changing.

What questions does the paper try to answer?

- How can we make a 3D scene that not only looks real from new camera angles, but also reacts realistically when we change the lighting afterward (relighting)?

- How can we handle outdoor “in the wild” photos where the sky and lighting vary a lot from picture to picture?

- Can we do this quickly and cleanly, without mixing up what’s part of the object’s material (like paint) and what’s just the light shining on it?

How does it work? (In everyday terms)

Think of building a 3D scene by sprinkling thousands of tiny, fuzzy colored dots in space. These dots are called “3D Gaussians,” and together they form the shapes and colors you see. This technique, “3D Gaussian Splatting,” is fast and looks good.

R3GW makes two improvements to handle outdoor scenes:

- It splits the scene into two parts: the foreground (buildings, trees, cars) and the sky. The sky is treated like a special background painted on a big dome, and it does not have “material” like wood or metal. This avoids confusing the sky with shiny surfaces and reduces visual glitches around the skyline.

- It learns how light hits the scene for each photo. It stores the “light from all directions” as a compact 360° description (called an environment map), using a small set of numbers (a math tool called spherical harmonics). This lets the method understand different times of day and weather across your photo collection.
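
To make "a small set of numbers" concrete, here is a minimal Python sketch of a spherical-harmonics light. A degree-L expansion needs (L+1)^2 numbers per color channel, so degree 4 takes 25. The function names and the example coefficients are illustrative assumptions, not the paper's code, and the basis uses one common real-SH sign convention (no Condon-Shortley phase); only the first two bands are evaluated to keep it short.

```python
import numpy as np

def num_sh_coeffs(degree: int) -> int:
    """Number of SH coefficients per color channel up to the given degree."""
    return (degree + 1) ** 2

def sh_basis_deg1(direction):
    """Real SH basis values for bands l=0 and l=1 at a unit direction."""
    d = np.asarray(direction, dtype=float)
    x, y, z = d / np.linalg.norm(d)
    return np.array([0.28209479, 0.48860251 * y, 0.48860251 * z, 0.48860251 * x])

def eval_light_deg1(coeffs_rgb, direction):
    """RGB light arriving from `direction`; coeffs_rgb has shape (4, 3)."""
    return sh_basis_deg1(direction) @ np.asarray(coeffs_rgb, dtype=float)

# Hypothetical light: a constant term plus extra brightness from above (+z).
coeffs = np.zeros((4, 3))
coeffs[0] = [1.0, 1.0, 1.2]   # band 0: overall brightness, slightly blue
coeffs[2] = [0.5, 0.5, 0.5]   # band 1, z term: brighter toward the sky
light_from_above = eval_light_deg1(coeffs, [0.0, 0.0, 1.0])
light_from_below = eval_light_deg1(coeffs, [0.0, 0.0, -1.0])
```

With these made-up numbers the light from above is brighter than the light from below, which is the kind of smooth, low-detail lighting a few SH coefficients can describe.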

For the foreground, R3GW uses a simple physics-based recipe for how light interacts with materials:

- Diffuse light: the basic color of the surface (its “albedo”) lit by the sky and sun.

- Specular light: the shiny highlights you see on glossy surfaces.

This “recipe” is known as a BRDF. R3GW uses a popular one (Cook–Torrance, in a simplified Disney version) and a smart shortcut to keep it fast. You can think of it as: given where the camera is and where the light comes from, the method decides how bright and shiny each tiny dot should be.

Finally, the sky’s color is learned separately, so it can look right for each photo without pretending the sky is a shiny object.
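
The diffuse-plus-specular recipe above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the paper's implementation: the function names, the example light values, and the two lookup numbers (`lut_scale`, `lut_bias`) are hypothetical. The base reflectivity of 0.04 for non-metallic surfaces and the split-sum style combination `light * (F0 * scale + bias)` follow the standard formulation of that shortcut.

```python
import numpy as np

F0_DIELECTRIC = 0.04  # base reflectivity commonly used for non-metallic surfaces

def fresnel_schlick(cos_theta, f0=F0_DIELECTRIC):
    """Schlick's approximation: surfaces reflect more at grazing angles."""
    cos_theta = np.clip(cos_theta, 0.0, 1.0)
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5

def shade(albedo, diffuse_light, specular_light, lut_scale, lut_bias,
          f0=F0_DIELECTRIC):
    """Combine a diffuse term and a split-sum style specular term.

    lut_scale and lut_bias stand in for values that would be read from a
    precomputed BRDF lookup texture (they already fold in the Fresnel effect).
    """
    diffuse = np.asarray(albedo, dtype=float) * np.asarray(diffuse_light, dtype=float)
    specular = np.asarray(specular_light, dtype=float) * (f0 * lut_scale + lut_bias)
    return diffuse + specular

# Hypothetical inputs for one Gaussian.
color = shade(albedo=[0.6, 0.4, 0.3],
              diffuse_light=[1.0, 1.0, 1.0],
              specular_light=[2.0, 2.0, 2.0],
              lut_scale=0.9, lut_bias=0.05)
```

Looking straight at a surface, `fresnel_schlick(1.0)` gives the base value 0.04; at a grazing angle, `fresnel_schlick(0.0)` rises to 1.0, which is why even matte surfaces look shinier near their edges.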

What did they find, and why does it matter?

Here are the main results in simple terms:

- It can relight outdoor scenes: You can swap in new lighting (like a different sky or time of day), and the scene will update its look realistically, including shiny highlights.

- It handles “in the wild” photos better: Because it learns a separate lighting description for each photo and keeps the sky separate from the foreground, it works well with casual photo collections taken under changing conditions.

- Cleaner edges and better geometry: Buildings and trees look sharper against the sky, with fewer halos or depth errors. The method’s way of placing sky far behind the scene helps the 3D depth make more sense.

- Competitive quality, faster than many older methods: It achieves image quality similar to some neural-network approaches (NeRFs) but trains much faster, making it more practical.

Why is this important?

- For creators: It lets artists, game developers, and AR/VR teams turn everyday outdoor photos into 3D scenes that they can relight for different moods or times of day, quickly and with realistic results.

- For research and tools: It shows how to combine fast 3D Gaussian Splatting with physics-based lighting and a smart sky/foreground split, pointing the way to better, more controllable 3D scene tools.

- For future work: The method already handles reflections and varying light; adding things like soft shadows could make it even more realistic.

In short, R3GW helps turn messy, real-world outdoor photos into clean, relightable 3D scenes—fast, flexible, and ready for creative editing.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- No explicit modeling of shadows (direct or soft) or an environment visibility term in the rendering equation; how to incorporate shadowing and occlusion efficiently with 3DGS under varying environment maps remains open.

- Indirect lighting and interreflections are not modeled; methods to capture global illumination (e.g., color bleeding) without sacrificing speed are needed.

- Specular reflections are approximated by Gaussian blurring of SH coefficients (with $l_{\text{light}} = 4$), which likely limits sharp highlights and high-frequency lighting (e.g., sun disks). The fidelity trade-offs vs. cube-map prefiltering or spherical-Gaussian mixtures are unquantified.

- The environment light representation is low-order SH (diffuse up to degree 2, total up to degree 4); sensitivity to SH order and behavior with highly directional or high-frequency lights is not analyzed.

- The sky appearance is learned independently of the environment light and is fixed per image via first-order SH, potentially leading to physically inconsistent relighting (e.g., nighttime environment map with daytime sky). A coupled sky–environment light model is missing.

- At test time, the MLP for per-image sky SH is unavailable; sky is set to white in evaluations. A mechanism to predict sky appearance for test views (or to derive it from the provided environment map) is needed.

- Sky is constrained to a subset of the upper hemisphere ([0, π/2] in polar angle, [0, π] in azimuth). Generalization to full-sphere sky coverage and scenes where sky spans beyond these ranges is untested.

- A single shared sky radius and a fixed hemisphere center are used; effects on parallax, alignment, and boundary artifacts across diverse scene scales and camera baselines are not evaluated.

- The material model fixes metallic at zero and omits anisotropy, clearcoat, subsurface scattering, and specular tint/IOR variation; extension to metals, glass, water, and complex outdoor materials is needed.

- Fresnel reflectivity is fixed to 0.04 (dielectric), preventing per-material (IOR-dependent) variation; the impact on material realism and whether learning improves accuracy is unknown.

- Foreground normals are inferred from each Gaussian’s shortest axis and regularized via depth gradients; the accuracy of this proxy vs. learned per-Gaussian normals or normal maps is not quantified.

- Camera exposure, white balance, tone-mapping, and sensor response variations across in-the-wild images are not modeled; the role of HDR vs. LDR environment maps and camera calibration on relighting fidelity is unexplored.

- The method relies on sky segmentation masks for the fg–sky loss; availability, accuracy, and robustness without ground-truth masks (automatic segmentation or weak supervision) are not addressed.

- Evaluation is limited to three NeRF-OSR scenes; performance across more diverse outdoor environments (materials, weather, haze/fog, seasons, nighttime) and larger-scale scenes remains untested.

- No quantitative metric for relighting fidelity under novel environment maps is provided; benchmarks isolating lighting-edit accuracy (beyond novel-view reconstruction) are missing.

- Alignment of ground-truth environment maps to scene geometry is manual; an automatic pipeline for environment map registration (orientation, intensity scale) is not proposed.

- Handling of transient objects (people, cars), semi-translucent foliage, and non-Lambertian/participating media (mist, haze) is not discussed; robustness in truly unconstrained captures remains uncertain.

- Vegetation and semi-translucent materials are masked during evaluation; how the method handles transmission, scattering, or view-dependent translucency is unaddressed.

- The sky–foreground boundary improvements are shown qualitatively; quantitative metrics for boundary sharpness/halo reduction and ablation of sky-geometry constraints are missing.

- Computational aspects beyond training time (memory use, rendering speed under varied environment maps, scalability to millions of Gaussians, very large scenes) are not reported.

- The impact of SH degree choices (diffuse l=2, environment l=4) and the roughness-dependent SH blurring kernel on accuracy vs. efficiency is not systematically studied.

- Coupling learned lighting to physical sun/skylight models (e.g., separating direct sun via spherical Gaussians and skylight via SH) and using illumination priors is not explored.

- Consistency between edited environment maps and sky color/appearance (e.g., ensuring the sky Gaussians reflect the edited illumination) is not enforced; a joint optimization or conditioning strategy is needed.

- The approach does not estimate or apply per-view occlusion/visibility for environment light; methods to approximate visibility efficiently for 3DGS (e.g., precomputed directional occlusion) remain open.
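
The sky-geometry constraint listed above (polar angle in [0, π/2], azimuth in [0, π], one shared radius, one fixed center) can be illustrated with a short Python sketch that places candidate sky points on that patch of a dome. The function name, the z-up axis convention, the area-uniform sampling, and the example radius are all assumptions made for illustration; the paper's actual initialization may differ.

```python
import numpy as np

def init_sky_points(n, radius, center=(0.0, 0.0, 0.0), seed=0):
    """Sample n points on the dome patch polar in [0, pi/2], azimuth in [0, pi].

    Assumes +z is "up". Sampling cos(polar) uniformly spreads points evenly
    over the sphere's surface area instead of crowding them at the zenith.
    """
    rng = np.random.default_rng(seed)
    cos_polar = rng.uniform(0.0, 1.0, n)      # polar angle in [0, pi/2]
    sin_polar = np.sqrt(1.0 - cos_polar ** 2)
    azimuth = rng.uniform(0.0, np.pi, n)      # azimuth in [0, pi]
    directions = np.stack([sin_polar * np.cos(azimuth),
                           sin_polar * np.sin(azimuth),
                           cos_polar], axis=1)
    return np.asarray(center, dtype=float) + radius * directions

# Hypothetical scene: dome of radius 50 centered at (1, 2, 3).
sky_points = init_sky_points(1000, radius=50.0, center=(1.0, 2.0, 3.0))
```

Because every point sits at the same distance from the center, this sketch also makes the open question about a single shared radius easy to probe: changing `radius` or `center` shifts the whole sky shell relative to the cameras.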

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging R3GW’s relightable 3D Gaussian Splatting for outdoor scenes, its decoupled sky-foreground modeling, and fast training/runtime characteristics.

- Virtual production and VFX previsualization (media/entertainment)
  - What: Capture real outdoor locations with unconstrained photos and create relightable background plates and set extensions; interactively adjust time-of-day or weather-like lighting via environment maps.
  - Tools/products/workflows: “Relightable Location Scan” kit (mobile capture + COLMAP + R3GW training), Unreal/Unity plugin that imports Gaussian scenes and exposes environment-map controls; Nuke/After Effects compositing nodes to match CG to relit plates.
  - Assumptions/dependencies: Static scenes; calibrated cameras via SfM; availability or estimation of environment maps; no explicit shadows, and interreflections are not modeled; metallic surfaces treated as non-metallic (metallic set to 0).

- AR/VR relightable outdoor backdrops and experiences (software, AR/VR)
  - What: Interactive VR tours of landmarks where users adjust illumination; mixed reality backdrops for AR filters or outdoor signage previews with realistic specular view-dependent effects.
  - Tools/products/workflows: WebGL/Unity viewers that stream Gaussian scenes; environment map selector or slider; cloud service for training (~2 hours per scene on an A100-class GPU).
  - Assumptions/dependencies: Multiview capture; sky segmentation masks during training; SH-based lighting (degree 4) limits very high-frequency lighting details; sky treated as non-reflective and independent of material.

- Architectural visualization and real estate marketing (AEC)
  - What: Present outdoor properties/sites under sunrise/noon/dusk lighting; assess façade appearance or material choices with realistic specular highlights (approximate, no explicit shadows).
  - Tools/products/workflows: Cloud viewer for clients; “Capture → COLMAP → R3GW → shareable viewer” pipeline; environment map library (HDRI/LDR) mapped to local coordinates.
  - Assumptions/dependencies: Static scenes; approximate lighting accuracy (no shadow solver); requires robust camera calibration and enough viewpoints to reconstruct geometry and materials.

- Educational visualization of PBR and environment lighting (education)
  - What: Interactive demonstrations of BRDFs, spherical harmonics lighting, and sky-foreground separation; illustrate view-dependent reflectance in real environments.
  - Tools/products/workflows: Browser-based teaching modules; sliders for albedo, roughness, and environment lighting; export of albedo/normal maps for comparison across methods.
  - Assumptions/dependencies: Availability of capture data; simplified Cook-Torrance model without metallics; specular term approximated via split-sum and SH smoothing.

- Content creation and shot planning (media/creator tools)
  - What: Photographers and creators previsualize outdoor shots under different illumination using captured multi-view images; plan time-of-day shoots by relighting the reconstructed scene.
  - Tools/products/workflows: Mobile capture app; quick cloud training; environment map presets; export stills or short clips of novel views and relit conditions.
  - Assumptions/dependencies: Sufficient coverage in capture; environment maps representative of target lighting; consumer-grade devices may require cloud offload for training.

- Improved horizon compositing and sky-foreground boundary handling (media/entertainment, software)
  - What: Reduce halos and depth artifacts at the skyline when compositing CG into plates; leverage decoupled sky Gaussians for sharper outlines.
  - Tools/products/workflows: Compositing pipeline step that imports R3GW depth/foreground separation; sky Gaussians for clean mattes and backgrounds.
  - Assumptions/dependencies: Proper sky region initialization and constraints; accurate COLMAP; sky SH learned per image (or set to neutral for test views if unavailable).

Long-Term Applications

The following applications require additional research, scaling, or productization (e.g., explicit shadowing, dynamic objects, metallics, higher-frequency lighting, and city-scale deployment).

- Urban planning and public lighting policy evaluation (policy, AEC, energy)
  - What: Visualize and assess proposed streetlight changes, glare, and light pollution under realistic, time-varying illumination across neighborhoods.
  - Tools/products/workflows: Stakeholder-facing web platform that relights city squares; integration with solar position models and physically accurate luminance/HDR environment maps.
  - Assumptions/dependencies: Explicit shadow modeling and validated photometric accuracy; support for direct sunlight/skylight decomposition; standardized HDR environment capture.

- Autonomous driving and outdoor robotics simulation (robotics, automotive)
  - What: Generate photorealistic outdoor scenes with controllable lighting for training perception systems to be robust to time-of-day, weather, and specular effects.
  - Tools/products/workflows: Simulator plugins that stream R3GW scenes; APIs to sweep environment maps and viewpoints; domain randomization for lighting.
  - Assumptions/dependencies: Handling transient/moving objects; shadowing and indirect light; scaling to large environments; real-time inference without large GPUs.

- City-scale digital twins with relightable contexts (smart cities, GIS)
  - What: Maintain relightable reconstructions of districts for planning, maintenance, and community engagement; adjust lighting to test interventions.
  - Tools/products/workflows: Distributed capture and incremental training; scene tiling and streaming; environment map inference from weather feeds.
  - Assumptions/dependencies: Multi-scene stitching and memory-efficient representations; automatic sky segmentation; continuous updates and re-training.

- Photorealistic AR product placement outdoors (advertising, e-commerce)
  - What: Place virtual objects (signage, furniture, EV chargers) with accurate reflections and shadows that match the relightable outdoor scene.
  - Tools/products/workflows: AR SDK integrations; on-device relighting using inferred environment maps; automatic material matching for virtual insertions.
  - Assumptions/dependencies: Metallic BRDF support; explicit soft shadow modeling and occlusion reasoning; robust environment map estimation from single frames.

- Daylighting and solar potential analysis (energy, AEC)
  - What: Evaluate façade performance and solar exposure using relightable reconstructions across seasons and times.
  - Tools/products/workflows: Link R3GW scenes to solar path models and physically-based daylight simulators; report generation for compliance.
  - Assumptions/dependencies: Accurate sun/sky spectral modeling, HDR measurements; validated irradiance predictions; shadow/indirect light fidelity.

- Live or near-real-time relightable capture (software, media/entertainment)
  - What: Streamed reconstruction and relighting of outdoor events; adjust scene lighting interactively during capture.
  - Tools/products/workflows: Mobile SLAM + online Gaussian training; incremental sky/foreground updates; environment map estimation pipelines.
  - Assumptions/dependencies: Lightweight training on device; robust segmentation; handling transient crowds and vehicles; stable tracking.

- Standardization of relightable Gaussian scene formats (software ecosystem)
  - What: Interoperable file formats and SDKs for import/export of relightable 3DGS (attributes, BRDFs, sky separation, environment lighting controls).
  - Tools/products/workflows: Open-source converters (Gaussian → mesh or neural fields), DCC plugins (Blender, Unreal), scene inspectors.
  - Assumptions/dependencies: Community adoption; alignment with PBR standards; support for high-frequency lighting and shadow extensions.

- Weather-aware relighting and forecasting integration (software, climate tech)
  - What: Drive environment maps from meteorological data (cloud cover, aerosols) to preview scenes under forecasted conditions.
  - Tools/products/workflows: Environment map generators tied to weather APIs; SH/cubemap synthesis; automated relighting scenarios.
  - Assumptions/dependencies: Accurate sky/weather models; higher-order SH or cubemaps for high-frequency features; validation across climates.

Notes on general assumptions and dependencies that affect feasibility:

- Input quality: Requires well-covered, calibrated multiview captures (SfM via COLMAP) of largely static scenes.

- Lighting model: Environment lighting parameterized with SH (up to degree 4); specular via split-sum approximation and SH smoothing; no explicit shadow modeling in current form.

- Material model: Cook-Torrance BRDF with simplified Disney parameters; metallic set to zero (non-metallic assumption).

- Sky modeling: Decoupled sky Gaussians with first-order SH per image; sky masks influence training; sky SH may be unavailable for new/test views without embedding inference.

- Compute: Training is fast but still GPU-reliant; large-scale or live workflows need incremental/efficient training and streaming.

- Accuracy: For policy/engineering-grade use, require HDR environment maps, explicit sun/sky decomposition, and validated photometric accuracy (shadows, indirect light).
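
The "SH smoothing" mentioned in the lighting-model note can be sketched as a band-wise attenuation of the SH coefficients, which is how a Gaussian-like low-pass filter acts in the SH domain. This is a minimal Python illustration under stated assumptions: the attenuation `exp(-0.5 * l * (l + 1) * sigma**2)` is one standard choice of kernel, and `sigma` is a free parameter here; how the method maps roughness to kernel width is not specified in this summary, so no such mapping is shown.

```python
import numpy as np

def sh_band_index(degree):
    """Band number l for each of the (degree+1)^2 coefficients, band by band."""
    return np.concatenate([np.full(2 * l + 1, l) for l in range(degree + 1)])

def blur_sh(coeffs, sigma):
    """Low-pass filter SH coefficients of shape ((L+1)^2, channels).

    Each band l is scaled by exp(-0.5 * l * (l + 1) * sigma^2), so higher
    bands (finer lighting detail) are damped more strongly.
    """
    coeffs = np.asarray(coeffs, dtype=float)
    degree = int(round(np.sqrt(coeffs.shape[0]))) - 1
    l = sh_band_index(degree)
    weights = np.exp(-0.5 * l * (l + 1) * sigma ** 2)
    return coeffs * weights[:, None]

# Hypothetical degree-4 RGB environment light (25 coefficients per channel).
env = np.ones((25, 3))
blurred = blur_sh(env, sigma=0.5)
```

The constant band (l = 0) is left untouched, so overall brightness is preserved while detail is removed; a larger `sigma` would correspond to a rougher, less mirror-like surface.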

Glossary

- 2D Gaussian Splatting (2DGS): A scene representation that replaces volumetric 3D Gaussians with flat 2D Gaussian disks (surfels) embedded in 3D space, giving better-defined surfaces and normals for reconstruction and relighting. "LumiGauss \cite{kaleta2025lumigauss} introduces a relightable 2D Gaussian Splatting \cite{huang20242d} representation for outdoor scenes captured in the wild."

- 3D Gaussian Splatting (3DGS): A 3D point-based scene representation using Gaussian primitives and differentiable rasterization for fast, high-quality rendering and training. "3D Gaussian Splatting (3DGS) has established itself as a leading technique for 3D reconstruction and novel view synthesis of static scenes, achieving outstanding rendering quality and fast training."

- Albedo: The intrinsic, view-independent diffuse color of a surface, independent of illumination and shading. "parameterized according to a simplified Disney material model \cite{burley2012physically}: albedo and roughness."

- Alpha blending (α-blending): A compositing technique that accumulates colors along the viewing direction using per-splat opacity to produce the final pixel color. "After projection, the color of each pixel in the output image is computed by sorting the splats by depth and accumulating their colors via α-blending:"

- Bidirectional Reflectance Distribution Function (BRDF): A function describing how light is reflected at an opaque surface given incoming and outgoing directions. "The second term, $I_{\text{BRDF}}$, corresponds to the integral of the BRDF specular component, depending on the roughness and the cosine of the angle formed by the viewing direction with the surface normal."

- COLMAP SfM: A Structure-from-Motion pipeline used to recover camera poses and a sparse point cloud from images. "The optimization of a 3D Gaussian Splatting scene begins with a collection of RGB images paired with calibrated cameras obtained from COLMAP SfM \cite{schonberger2016structure}."

- Cook–Torrance BRDF: A physically based microfacet reflectance model with normal distribution (D), Fresnel (F), and geometry (G) terms. "For the foreground, R3GW learns the varying illumination of the scene per image, represented by an environment map and parameterized by Spherical Harmonics (SH). For reflection, a Cook-Torrance BRDF parameterized following the Disney material model \cite{cook1982reflectance,burley2012physically} is used."

- Cube map: A six-faced environment texture used to represent omnidirectional data like environment lighting. "In static lighting scenarios, the integral $I_{\text{specular}}$ can be pre-integrated and stored in the mipmap levels of a cube map \cite{jiang2023gaussianshader,R3DG2023}, following the original split-sum approximation method."

- Deferred shading: A rendering approach that postpones lighting computations until after geometry is rasterized into intermediate buffers. "either by integrating it directly into the color attribute of the Gaussians \cite{R3DG2023} or by applying deferred shading after rasterization \cite{liang2023gs,chen2024gi,wu20253d}."

- Disney material model: A popular, artist-friendly PBR parameterization defining material properties such as albedo, roughness, and metallic. "For reflection, a Cook-Torrance BRDF parameterized following the Disney material model \cite{cook1982reflectance,burley2012physically} is used."

- Environment light: A distant illumination model describing incoming radiance from all directions in the scene’s surroundings. "R3GW learns the environment light based on the foreground pixels of the training images."

- Environment map: A texture representing omnidirectional lighting used to illuminate the scene. "Our method factorizes the scene’s foreground into geometry, material, and illumination, with the lighting represented as an environment map."

- Equirectangular environment map: A latitude-longitude projection used to store spherical environment lighting in a 2D image. "To enable relighting, our approach explicitly models the incoming illumination of the scene as an RGB environment light, represented by an equirectangular environment map and compactly parameterized by SH."

- Fresnel-Schlick reflectivity: An approximation of the Fresnel effect using Schlick’s formula, often parameterized by a base reflectance F0. "The constant $0.04$ corresponds to the Fresnel-Schlick reflectivity for non-metallic surfaces."

- Gamma correction: The nonlinear transformation applied to convert between linear scene-referred colors and display-referred colors. "Before rendering, the color of each foreground Gaussian is gamma-corrected with ."

- Gaussian kernel (in the SH domain): A low-pass filter applied to spherical harmonic coefficients to smooth lighting. "Specifically, the coefficients are convolved channel-wise with a Gaussian kernel in the SH domain."

- Image appearance embedding: A learnable per-image latent vector capturing appearance factors like lighting and sky color. "To handle the varying illumination and sky appearance of the scene, following \cite{kaleta2025lumigauss}, each image is associated with a learnable embedding vector encoding its appearance information."

- Lambertian surface: An ideal diffuse reflector whose appearance is independent of viewing direction. "Furthermore, the approach still relies on the assumption of Lambertian surfaces."

- Lookup table: A precomputed array indexed at runtime to retrieve learned parameters or function values efficiently. "During training, the image appearance embeddings are stored in an optimizable lookup table."

- Lookup texture: A texture storing precomputed values (e.g., BRDF integrals) used during shading by texture lookup. "Given that $I_{\text{BRDF}}$ is independent of the varying environment light, as proposed in \cite{SplitSumApprox}, it is precomputed and stored in a 2D lookup texture."

- Microfacet model: A reflectance model that treats surfaces as aggregates of tiny facets to compute specular reflection via D, F, and G terms. "It models the foreground reflections assuming a Cook-Torrance microfacet-based reflectance model \cite{cook1982reflectance}"

- Mipmap: A hierarchy of prefiltered texture levels enabling efficient minification and pre-integration. "In static lighting scenarios, the integral $I_{\text{specular}}$ can be pre-integrated and stored in the mipmap levels of a cube map \cite{jiang2023gaussianshader,R3DG2023}"

- Neural Radiance Fields (NeRF): An implicit neural representation that maps 3D coordinates and viewing directions to density and radiance for volumetric rendering. "Unlike prior approaches based on NeRF \cite{mildenhall2021nerf}, which represent the scene volume implicitly with a multi-layer perceptron (MLP),"

- Novel view synthesis: The task of rendering images from viewpoints not present in the training data. "3D Gaussian Splatting (3DGS) has established itself as a leading technique for 3D reconstruction and novel view synthesis of static scenes,"

- Physically Based Rendering (PBR): Rendering techniques grounded in physical light transport and energy conservation to produce realistic images. "Recent works \cite{R3DG2023,liang2023gs,jiang2023gaussianshader} introduced a relightable 3DGS scene representation by integrating Physically Based Rendering (PBR) into the rendering pipeline of the method,"

- Rendering equation: An integral equation that expresses outgoing radiance as reflected incident radiance modulated by a BRDF. "The color of a foreground Gaussian is the light exiting from its position along its viewing direction, which is given by the rendering equation for non-emitting surfaces \cite{renderingeq}:"

- Spherical Gaussians: Directional basis functions on the sphere used to model lobed lighting like sunlight. "SOL-NeRF \cite{sun2023sol} utilizes a different lighting representation, modeling the scene illumination as a combination of a skylight, parameterized by SH, and sunlight, parameterized by Spherical Gaussians."

- Spherical Harmonics (SH): Orthogonal basis functions on the sphere used to compactly represent low-frequency functions like diffuse environment lighting. "For the foreground, R3GW learns the varying illumination of the scene per image, represented by an environment map and parameterized by Spherical Harmonics (SH)."

- Split-sum approximation: A technique for efficient image-based lighting that separates the specular integral into a prefiltered environment term and a BRDF term. "Following \cite{jiang2023gaussianshader,R3DG2023}, we compute the specular color using the split-sum approximation technique introduced in \cite{SplitSumApprox},"

- Structure-from-Motion (SfM): A method to estimate 3D structure and camera motion from multiple images. "The positions of the Gaussians are initialized with the sparse point cloud produced by SfM."

- Tile-based rasterizer: A rasterization architecture that partitions the screen into small tiles for efficient, parallel rendering. "To render the scene, 3DGS uses a fast differentiable tile-based rasterizer implemented in CUDA."

- View frustum: The truncated pyramid defining the visible volume of space from a camera, used for culling. "We constrain the sky representation to a subset of the upper hemisphere, as for most of the datasets considering the whole sphere would introduce unnecessary sky Gaussians that do not intersect the view frustum of any training camera."

- Volumetric rendering: Rendering that integrates radiance and transmittance through a participating medium or neural field along camera rays. "In contrast, FEGR \cite{wang2023fegr} uses a hybrid deferred renderer that combines the volumetric rendering of the neural field with mesh extraction to reproduce view-dependent specular highlights and shadows."
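
As a small illustration of the alpha-blending entry in this glossary, the following Python sketch composites depth-sorted splats front to back: each splat contributes its color weighted by its opacity and by the transmittance left over from the splats in front of it. The function name and the example values are illustrative assumptions; a real 3DGS rasterizer does this per pixel in CUDA with opacities that also depend on the projected Gaussian footprint.

```python
import numpy as np

def alpha_blend(colors, alphas):
    """Front-to-back compositing of depth-sorted splats for one pixel.

    colors: (N, 3) RGB per splat, nearest first.
    alphas: (N,) opacity per splat in [0, 1].
    Returns the pixel color and the remaining transmittance.
    """
    colors = np.asarray(colors, dtype=float)
    alphas = np.asarray(alphas, dtype=float)
    pixel = np.zeros(colors.shape[1])
    transmittance = 1.0  # fraction of light still passing through
    for color, alpha in zip(colors, alphas):
        pixel += transmittance * alpha * color
        transmittance *= 1.0 - alpha
    return pixel, transmittance

# A half-transparent red splat in front of an opaque blue one.
pixel, remaining = alpha_blend([[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]], [0.5, 1.0])
```

In this example the result is an even mix of red and blue with no transmittance left, since the opaque splat behind blocks everything further away.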
