Pretrained Vision-Language-Action Models are Surprisingly Resistant to Forgetting in Continual Learning

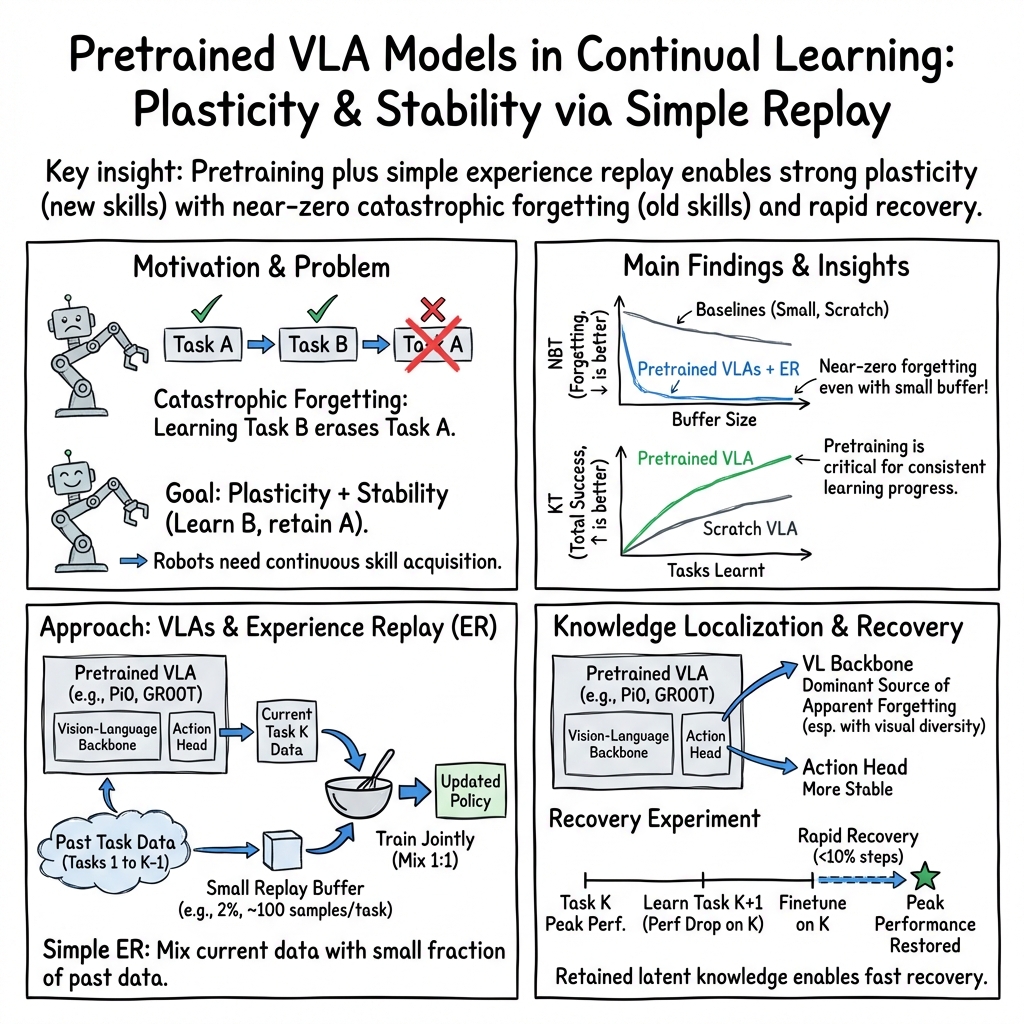

Abstract: Continual learning is a long-standing challenge in robot policy learning, where a policy must acquire new skills over time without catastrophically forgetting previously learned ones. While prior work has extensively studied continual learning in relatively small behavior cloning (BC) policy models trained from scratch, its behavior in modern large-scale pretrained Vision-Language-Action (VLA) models remains underexplored. In this work, we found that pretrained VLAs are remarkably resistant to forgetting compared with smaller policy models trained from scratch. Simple Experience Replay (ER) works surprisingly well on VLAs, sometimes achieving zero forgetting even with a small replay data size. Our analysis reveals that pretraining plays a critical role in downstream continual learning performance: large pretrained models mitigate forgetting with a small replay buffer size while maintaining strong forward learning capabilities. Furthermore, we found that VLAs can retain relevant knowledge from prior tasks despite performance degradation during learning new tasks. This knowledge retention enables rapid recovery of seemingly forgotten skills through finetuning. Together, these insights imply that large-scale pretraining fundamentally changes the dynamics of continual learning, enabling models to continually acquire new skills over time with simple replay. Code and more information can be found at https://ut-austin-rpl.github.io/continual-vla

Explain it Like I'm 14

Explaining “Pretrained Vision-Language-Action Models are Surprisingly Resistant to Forgetting in Continual Learning”

What this paper is about (the big idea)

The paper studies how robots can keep learning new skills over time without forgetting the old ones. Think of it like taking math, science, and history one after another, and still remembering math when you start science. The researchers looked at modern robot “brains” called Vision-Language-Action (VLA) models—systems that see with cameras, read or listen to instructions, and then act—and asked: do these big, already-pretrained models forget less than smaller models trained from scratch?

What the researchers wanted to find out

In simple terms, they asked:

- Do large, pretrained robot models hold onto old skills better when they learn new ones?

- Can a very simple practice trick—replaying a small amount of old examples—stop forgetting?

- How important is pretraining (learning lots of general knowledge before the robot learns specific tasks) for avoiding forgetting?

- If performance on an old skill drops, is the knowledge truly gone—or can it be brought back quickly?

How they tested it (in everyday language)

They used a set of robot tasks called LIBERO (like a course made of many different challenges). The robot learns tasks one after another in a fixed order. After each new task, they checked how well the robot still did on the earlier ones.

Key ideas, explained simply:

- Continual learning: learning new things over time without losing what you learned before.

- Catastrophic forgetting: when learning something new causes a large drop in how well you perform old skills.

- Pretrained VLA: a robot model that has already learned from tons of images, text, and robot demonstrations, so it has strong general understanding before you teach it specific tasks.

- Experience Replay (ER): while learning a new task, the robot also reviews a small set of saved examples from older tasks—like flipping through a few flashcards to keep old material fresh.

- Replay buffer size: how many “flashcards” from past tasks you keep. They tried very small amounts (as low as 0.2%–2% of the data) and larger ones.

- Success rate: how often the robot completes a task correctly.

- Negative Backward Transfer (NBT): a number that shows how much old skills got worse after learning new ones. Near zero means “no forgetting.”
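The summary above describes NBT only informally. As a minimal sketch, the snippet below computes it from a table of success rates using one common formulation (the one used by the LIBERO benchmark); whether the paper uses exactly this formula is an assumption. The function name and the numbers are hypothetical.

```python
from typing import List


def negative_backward_transfer(success: List[List[float]]) -> float:
    """Average drop in success rate on earlier tasks after later training stages.

    success[t][k] is the success rate on task k measured after training on
    task t (only entries with t >= k are used). Assumed LIBERO-style formula.
    """
    num_tasks = len(success)
    if num_tasks < 2:
        return 0.0  # nothing can be forgotten with a single task
    per_task = []
    for k in range(num_tasks - 1):  # the last task has no later stages
        right_after_learning = success[k][k]
        drops = [right_after_learning - success[t][k]
                 for t in range(k + 1, num_tasks)]
        per_task.append(sum(drops) / len(drops))
    return sum(per_task) / len(per_task)


# Hypothetical success rates for three tasks learned in order.
success = [
    [1.00, 0.00, 0.00],  # after task 0
    [0.75, 1.00, 0.00],  # after task 1
    [0.50, 0.75, 1.00],  # after task 2
]
nbt = negative_backward_transfer(success)
print(f"NBT = {nbt:.4f}")
```

A value near zero means old skills held steady; a negative value would mean earlier tasks improved while later ones were learned.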

What they compared:

- Big pretrained VLA models vs. smaller models trained from scratch.

- ER (reviewing past examples) vs. other methods that don’t replay old examples.

- Different amounts of replay data (very tiny to moderate).

- Different levels of pretraining for the same architecture: fully pretrained (vision + robot actions), vision-only pretrained, and no pretraining.

- Which part forgets more: the “seeing/understanding” part (vision-language backbone) or the “moving” part (action head). They “swapped” these parts between training stages to test where forgetting happens.

- Whether “forgotten” skills can be quickly recovered by a short round of fine-tuning.
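To make the Experience Replay comparison concrete, here is a minimal sketch of a per-task replay buffer and a training batch that mixes new-task data with replayed samples. The summary does not specify how the paper mixes the two, so the `replay_ratio` parameter, the function names, and the toy data are assumptions for illustration only.

```python
import random
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[str, int]  # (task name, demo index): a stand-in for real demonstrations


def build_replay_buffer(task_data: Dict[str, Sequence[Sample]],
                        fraction: float, seed: int = 0) -> Dict[str, List[Sample]]:
    """Keep a random `fraction` of each past task's samples (at least one)."""
    rng = random.Random(seed)
    buffer = {}
    for task, samples in task_data.items():
        keep = max(1, round(fraction * len(samples)))
        buffer[task] = rng.sample(list(samples), keep)
    return buffer


def mixed_batch(new_data: Sequence[Sample], buffer: Dict[str, List[Sample]],
                batch_size: int, replay_ratio: float,
                rng: random.Random) -> List[Sample]:
    """Draw a batch with roughly `replay_ratio` of it coming from the buffer."""
    replay_pool = [s for samples in buffer.values() for s in samples]
    n_replay = round(batch_size * replay_ratio) if replay_pool else 0
    # Sample with replacement, since the buffer may be smaller than n_replay.
    batch = rng.choices(replay_pool, k=n_replay) if n_replay else []
    batch += rng.choices(list(new_data), k=batch_size - n_replay)
    rng.shuffle(batch)
    return batch


# Toy data: two old tasks and one new task (hypothetical sizes).
task_data = {
    "task_a": [("task_a", i) for i in range(1000)],
    "task_b": [("task_b", i) for i in range(500)],
}
buffer = build_replay_buffer(task_data, fraction=0.02)  # keep 2% per task
new_data = [("task_c", i) for i in range(1000)]
batch = mixed_batch(new_data, buffer, batch_size=32, replay_ratio=0.25,
                    rng=random.Random(1))
```

Shrinking `fraction` is the knob that corresponds to the "very tiny to moderate" replay amounts compared above.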

What they found (in plain words)

Here are the core results and why they matter:

- Pretrained VLAs forget much less:

  - With just a small replay buffer (as little as about 2% of old data), big pretrained models kept old skills almost perfectly, and sometimes even improved them while learning new ones. This is unusual because smaller models typically forget a lot unless you keep large amounts of old data.

- Simple review beats fancy tricks:

  - The simple “flashcard” method (Experience Replay) worked far better than more complicated methods that try to protect old skills without reviewing old examples. Even a little review went a long way for pretrained models.

- Pretraining is crucial, especially when replay is tiny:

  - When the amount of saved old examples was very small, the gap between pretrained and non-pretrained models got even bigger. Pretraining gave the model strong, reusable building blocks that made it much harder to forget and still easy to learn new tasks.

- No trade-off between learning new things and remembering old ones:

  - The pretrained models didn’t have to choose between “being flexible” (learning new tasks) and “being stable” (remembering old tasks). They did both well. Smaller, non-pretrained models often either forgot a lot or didn’t learn the new tasks very effectively.

- “Forgotten” skills aren’t really gone in pretrained VLAs:

  - Even when a pretrained model’s score on an old task dropped after learning a new one, a short bit of fine-tuning brought the old skill back fast, often in less than 10% of the original training time. In contrast, smaller models trained from scratch had to relearn almost from the beginning.

  - Swapping parts of the model showed that most of the apparent forgetting happened in the “seeing/understanding” part (vision-language backbone), not the “movement” part. Tasks with very different visuals caused more drop, while tasks with similar motions caused less.
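The part-swapping test can be sketched as building a hybrid checkpoint that takes the vision-language backbone from one training stage and the action head from another. This is only an illustration: the parameter-name prefixes `backbone.` and `action_head.`, the function name, and the flat dictionary layout are hypothetical, not the paper's actual checkpoint format.

```python
from typing import Any, Dict


def swap_components(backbone_source: Dict[str, Any],
                    head_source: Dict[str, Any],
                    backbone_prefix: str = "backbone.",
                    head_prefix: str = "action_head.") -> Dict[str, Any]:
    """Combine backbone weights from one checkpoint with head weights from another."""
    hybrid = {}
    for name, value in backbone_source.items():
        if name.startswith(backbone_prefix):
            hybrid[name] = value
    for name, value in head_source.items():
        if name.startswith(head_prefix):
            hybrid[name] = value
    return hybrid


# Toy checkpoints: integers stand in for weight tensors.
after_task_1 = {"backbone.w": 1, "action_head.w": 10}
after_task_2 = {"backbone.w": 2, "action_head.w": 20}

# Old backbone + new action head: if task-1 success recovers with this hybrid,
# the drop is attributable to changes in the backbone rather than the head.
hybrid = swap_components(backbone_source=after_task_1, head_source=after_task_2)
print(hybrid)
```

Evaluating both hybrids (old backbone with new head, and new backbone with old head) on the earlier task is what localizes where the apparent forgetting occurred.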

Why this matters (the impact)

- Simpler, stronger lifelong robot learning:

  - You might not need complicated continual learning tricks for big, pretrained robot models. Strong pretraining plus a small amount of replayed examples can be enough to keep skills fresh while adding new ones.

- Faster recovery and easier maintenance:

  - Because pretrained models keep “hidden” knowledge even when performance dips, robots can quickly regain old skills with brief fine-tuning. That makes lifelong learning more practical in the real world.

- Better design priorities:

  - Investing in broad, high-quality pretraining and smart ways to reuse internal knowledge may be more valuable than building big replay buffers or complex protective algorithms.

In short, the paper shows that large, pretrained robot models can keep learning new tasks over time without forgetting much—especially if they briefly review a few old examples. Even when they seem to forget, the knowledge is still inside and can be brought back quickly. This is good news for building robots that learn throughout their lives.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper, prioritized to guide actionable future work.

- External validity to real-world robotics: Do the forgetting-resistant dynamics of pretrained VLAs under ER persist on physical robots with sensor noise, delayed actuation, contact-rich interactions, longer horizons, and sim-to-real gaps?

- Sensitivity to task order: How robust are the results to different task curricula and permutations (including adversarial sequences)? Quantify order effects and design order-agnostic training strategies.

- Scaling to longer lifelong sequences: What happens with hundreds or thousands of tasks over months of updates? Characterize stability–plasticity trends and recovery behavior as the number of tasks K grows, not just at K=10.

- Replay strategy design: Beyond random sampling, which buffer construction methods (reservoir sampling, class/skill-balanced coresets, prioritization by uncertainty/rarity, diversity-aware selection) minimize forgetting for VLAs under tight memory budgets?

- ER hyperparameters and schedules: How do replay ratios, mixing schedules, sampling temperature, and curriculum interleaving impact backward/forward transfer in VLAs?

- Baseline breadth and strength: Compare ER against a broader set of continual learning methods (e.g., GEM/A-GEM, LwF, MAS, SI, orthogonal gradient constraints, PackNet, distillation-based regularization), with tuned hyperparameters, to substantiate “ER is uniquely effective.”

- Joint training upper bound: How close does continual ER with VLAs get to multi-task joint training performance? Quantify the performance gap and identify failure modes.

- Metric adequacy: Provide normalized forgetting metrics (beyond NBT), explicit forward transfer measures, per-task plasticity–stability trade-offs, and sample-efficiency/safety metrics to avoid confounds from varying initial success rate (SR).

- Mechanistic explanation: Develop a principled account of why pretraining reduces forgetting (e.g., layer-wise representational drift analysis, CKA/RSM stability, Fisher overlap, gradient interference/alignment across tasks, parameter-space geometry).

- Component-level ablations: Systematically test freezing vs partial updates for VL backbone and action head (e.g., adapters/LoRA, gating, sparse updates) to isolate which update patterns preserve prior skills while enabling new ones.

- Pretraining ingredients: Ablate pretraining dataset size, diversity, embodiment coverage, language breadth, and objectives (contrastive vs next-token vs action flow) to identify which components most strongly drive forgetting resistance.

- Model size scaling laws: Quantify how resistance to forgetting and recovery efficiency scale with parameter count and depth; derive practical size–memory–performance trade-offs for VLAs.

- Recovery policies: Formalize on-the-fly recovery triggers (e.g., detection of degradation), minimal finetuning schedules, and their impact on subsequent tasks; evaluate whether periodic micro-recovery improves overall knowledge transfer (KT).

- Language robustness: Assess retention under instruction paraphrases, vocabulary drift, compositional language, negation/quantifiers, and multi-lingual settings; test whether language grounding is a bottleneck for retention.

- Embodiment and sensor shift: Evaluate continual learning across different robots, grippers, camera placements, and sensor modalities (RGB-D, tactile); measure retention under hardware changes.

- Domain and non-iid drift: Stress-test VLAs under evolving environments (lighting, backgrounds, object sets), seasonal/temporal drift, and out-of-distribution tasks to map their failure envelope.

- Action head choices: Compare action decoders (diffusion, autoregressive, flow, policy gradient heads) for their impact on forgetting and recovery; determine if certain heads are inherently more stable.

- Replay from generative models: Investigate synthetic replay (e.g., trajectory generation from the VLA or a learned world model) to reduce storage needs while preserving retention.

- Resource constraints: Characterize memory/compute/energy trade-offs for ER in VLAs; design buffer compression (e.g., feature-level replay) that retains performance under strict on-device limits.

- Safety and negative transfer: Identify cases where pretraining induces harmful biases or negative backward transfer; develop safeguards (constrained optimization, risk-aware replay) to prevent unsafe forgetting-induced behaviors.

- Task diversity mapping: Relate task similarity/diversity to observed forgetting (e.g., shared subskills, visual overlap, action primitives) and use this to design curricula that maximize positive backward transfer.

- Hyperparameter sensitivity: Systematically analyze optimizer choice, learning rate schedules, weight decay, and finetuning step budgets on retention and KT to avoid attributing gains solely to pretraining.

- Dataset quality and noise: Evaluate sensitivity to imperfect demonstrations (noisy labels, suboptimal actions) and unfiltered datasets; determine whether VLAs still resist forgetting under realistic data imperfections.

- Reproducibility and statistical power: Increase seeds, report significance tests, and release full training/evaluation protocols to ensure claims about “near-zero forgetting” are statistically robust.
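One of the buffer construction methods named in the replay-strategy item above is reservoir sampling. As a minimal sketch (standard Algorithm R, not something the paper is stated to use), it keeps a uniform random sample of fixed size from a stream of demonstrations without knowing the stream length in advance. The function name and toy stream are hypothetical.

```python
import random
from typing import Iterable, List, TypeVar

T = TypeVar("T")


def reservoir_sample(stream: Iterable[T], capacity: int, seed: int = 0) -> List[T]:
    """Uniformly sample up to `capacity` items from a stream in one pass."""
    rng = random.Random(seed)
    reservoir: List[T] = []
    for i, item in enumerate(stream):
        if i < capacity:
            reservoir.append(item)  # fill the buffer first
        else:
            j = rng.randint(0, i)   # inclusive on both ends
            if j < capacity:
                reservoir[j] = item  # replace with probability capacity / (i + 1)
    return reservoir


# Toy stream of 1000 demonstration ids, kept under a budget of 20 samples.
sample = reservoir_sample(range(1000), capacity=20)
print(sorted(sample))
```

The appeal under a tight memory budget is that the buffer never grows beyond `capacity`, no matter how many demonstrations arrive.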

Practical Applications

Immediate Applications

The following applications can be deployed now by leveraging pretrained Vision-Language-Action (VLA) models’ resistance to forgetting, simple Experience Replay (ER), and fast skill recovery via finetuning.

- Robotics: “Replay-lite Continual Learning Module” for industrial robots

  - Sector: Manufacturing, logistics, warehousing, retail automation, hospitality

  - What: Deploy ER with small replay buffers (e.g., 2–20% of per-task data, ~100–1000 samples) to incrementally add new tasks (e.g., grasp variants, tool changeovers, shelf restocking, surface wiping) without catastrophic forgetting.

  - Workflow/product:

    - Replay Buffer Manager that enforces per-task budgets and sampling strategies

    - Continual Learning Monitor that reports success rate (SR), Negative Backward Transfer (NBT), and Knowledge Transfer (KT)

    - Rapid Recovery Finetune routine that triggers short finetuning when SR on past tasks dips

  - Assumptions/dependencies: Access to pretrained VLA backbones (e.g., OpenVLA, Pi0, GR00T), curated demonstrations per task, safe on-robot finetuning protocols, tasks within the manipulation domain similar to LIBERO suites, sufficient edge/GPU resources for short finetunes.

- Service robots: Incremental skill updates in hospitals and hotels

  - Sector: Healthcare operations, hospitality, facility services

  - What: Add new cleaning protocols, delivery routes, or item-handling instructions with small ER buffers while maintaining previously learned behaviors.

  - Workflow/product:

    - Nightly ER training using limited replay samples

    - Policy health dashboard to track NBT across critical skills

    - Escalation to “fast restore” finetune when policy drift is detected

  - Assumptions/dependencies: Institutional approval for continual updates, limited data retention aligned with privacy regulations (ER stores small samples), operational schedule for offline updates, existing VLA pretrained on diverse environments.

- Home robotics: Personalized chore learning without forgetting

  - Sector: Consumer robotics

  - What: Teach household tasks (folding, tidying, dish placement) over time; use small ER buffers to avoid forgetting older tasks and quick finetunes to restore performance when needed.

  - Workflow/product:

    - “Skill Library” UI for users to add tasks and monitor SR/NBT

    - On-device replay data budgeting to minimize memory and power

  - Assumptions/dependencies: Safe in-home finetuning, quality demonstrations or teleoperation, robust vision-language grounding for varied home environments.

- Agriculture robots: Low-memory adaptation to crop/variety changes

  - Sector: Agriculture

  - What: Update picking, pruning, or inspection tasks to new cultivars and conditions with small ER buffers; retain core skills (navigation, grasping).

  - Workflow/product:

    - Field replay sampling workflows (e.g., 50–100 key interactions per change)

    - Diagnostic “component swap” tests (backbone vs. action head) to localize representation drift before finetuning

  - Assumptions/dependencies: Reliable data capture in outdoor conditions, pretrained models robust to domain shifts, agronomic safety procedures for updates.

- MLOps for robotics: Lightweight continual learning tooling

  - Sector: Software tooling, AI/ML infrastructure

  - What: Provide off-the-shelf components tailored to VLAs’ continual learning dynamics:

    - NBT/KT metric trackers and alerts

    - Pareto frontier analyzer for buffer-size vs. forgetting trade-offs

    - Component-swapping diagnostic kit (vision-language backbone vs. action head) to guide targeted finetunes

  - Assumptions/dependencies: Integration with existing robotics stacks, standardized logging and evaluation harnesses (e.g., LIBERO-compatible).

- Academic labs: Reproducible continual learning experiments for VLAs

  - Sector: Academia, education

  - What: Use LIBERO suites and open VLA backbones to study low-buffer ER, measure SR/NBT/KT, and probe knowledge retention via quick finetunes.

  - Workflow/product:

    - Benchmark pipelines with fixed task orders, buffer sizes, and monitoring

    - Teaching modules demonstrating stability–plasticity dynamics and diagnostic methods

  - Assumptions/dependencies: Access to datasets, trained checkpoints, compute for short finetunes.

- Data privacy and efficiency: Minimize stored data via small ER buffers

  - Sector: Policy/compliance, enterprise IT

  - What: Adopt ER with small sample budgets to reduce data retention while maintaining performance; document replay sampling policies for audits.

  - Workflow/product:

    - “Replay Data Policy” templates specifying sampling, retention durations, and anonymization

    - Privacy-by-design configurations for continual learning updates

  - Assumptions/dependencies: Legal review for replay samples, governance for update logs and rollbacks.

- Fleet operations: Centralized continual learning orchestration

  - Sector: Robotics-as-a-service providers

  - What: Coordinate replay sampling, ER training, and fast recovery finetunes across multiple sites; push model updates that preserve local skillsets.

  - Workflow/product:

    - Fleet Continual Training Orchestrator

    - Site-specific buffer budgeting and metrics dashboards

  - Assumptions/dependencies: Reliable telemetry, versioning and rollback support, heterogeneous hardware compatibility.
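Several of the workflows above rely on a monitor that triggers a short recovery finetune when the success rate on a past task dips. A minimal sketch of such a trigger follows; the `max_drop` threshold, the function name, and the task names are hypothetical choices for illustration, not values from the paper.

```python
from typing import Dict, List


def tasks_needing_recovery(reference_sr: Dict[str, float],
                           current_sr: Dict[str, float],
                           max_drop: float = 0.1) -> List[str]:
    """Return past tasks whose success rate fell more than `max_drop`.

    reference_sr: success rate recorded right after each task was learned.
    current_sr: latest measured success rate (a missing task counts as 0.0).
    """
    flagged = [task for task, ref in reference_sr.items()
               if ref - current_sr.get(task, 0.0) > max_drop]
    return sorted(flagged)


# Hypothetical monitoring snapshot after adding a new skill.
reference_sr = {"restock_shelf": 0.75, "wipe_surface": 1.0}
current_sr = {"restock_shelf": 0.75, "wipe_surface": 0.5}
to_recover = tasks_needing_recovery(reference_sr, current_sr)
print(to_recover)
```

Each flagged task would then be handed to a brief finetuning job, relying on the finding that pretrained VLAs recover dropped skills quickly.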

Long-Term Applications

These applications will benefit from further research, scaling, standardization, and robustness improvements before broad deployment.

- Lifelong generalist robots that self-improve with tiny memory footprints

  - Sector: Robotics across manufacturing, logistics, home, healthcare

  - What: Persistent learning agents that continuously add and refine skills using minimal replay, maintain near-zero forgetting, and share updates safely across embodiments.

  - Potential tools/products:

    - “Continual Learning OS” for robots (on-device replay scheduling, safety gates, knowledge retention probes)

    - Cross-embodiment skill transfer services leveraging pretrained VL backbones

  - Assumptions/dependencies: Stronger pretraining breadth, robust safety layers for online updates, standardized evaluation of forgetting in open-world settings.

- Standards and certification for continual-learning robots

  - Sector: Policy, regulatory bodies, safety certification

  - What: Develop norms for reporting NBT/KT, replay policies, and recovery procedures; certify continual learning workflows analogous to software patch safety.

  - Potential tools/products:

    - Compliance test suites (stress tests on stability–plasticity trade-offs)

    - Audit-ready logs for replay data and update decisions

  - Assumptions/dependencies: Multi-stakeholder consensus, sector-specific risk models, harmonization across jurisdictions.

- Personalized healthcare/eldercare assistive robots

  - Sector: Healthcare, home care

  - What: Robots that learn patient-specific routines and preferences over time, recovering skills rapidly after protocol changes with minimal data retention.

  - Potential tools/products:

    - Patient-centric skill profiles with consent management for replay samples

    - Safety-aware finetune schedulers that align with clinical oversight

  - Assumptions/dependencies: Clinical validation, human-in-the-loop supervision frameworks, reliable grounding in diverse home/hospital environments.

- Education: Adaptive lab and classroom assistants

  - Sector: Education

  - What: Robots that learn course-specific tasks (demo setup, lab prep) each semester while retaining prior curricula; perform quick recoveries at term transitions.

  - Potential tools/products:

    - Curriculum-aware replay planners

    - Instructor dashboards for SR/NBT/KT and recovery controls

  - Assumptions/dependencies: Stable campus infrastructure, pedagogical alignment, safety and accessibility standards.

- Sustainable edge learning: Energy-aware continual updates

  - Sector: Energy, green IT

  - What: Optimize ER and finetuning to be energy-efficient on edge hardware; leverage small buffers to reduce storage and compute footprints across fleets.

  - Potential tools/products:

    - Energy-aware schedulers that plan updates when renewable energy is available

    - Memory footprint optimizers for replay sampling policies

  - Assumptions/dependencies: Hardware–software co-design, telemetry on energy usage, fleet-level scheduling.

- Cross-modal continual learning beyond robotics

  - Sector: Software, multimodal AI

  - What: Apply insights (pretraining reduces forgetting; small ER buffers suffice; rapid recovery possible) to software assistants, AR/VR agents, and embodied digital avatars.

  - Potential tools/products:

    - Multimodal ER libraries for edge devices

    - Rapid Recovery Finetune APIs for knowledge re-expression

  - Assumptions/dependencies: Comparable pretraining regimes for target domains, clear task predicates and success metrics.

- Marketplace of micro-replay skill updates

  - Sector: Platform/software ecosystems, robotics integrators

  - What: Distribute tiny, privacy-preserving replay bundles and finetune scripts as “skill patches” that improve prior tasks or add variants without full retraining.

  - Potential tools/products:

    - Skill Patch Registry with provenance and safety checks

    - Automated compatibility checks (backbone/action-head diagnostics)

  - Assumptions/dependencies: IP and privacy frameworks for sharing samples, standardized packaging, interoperability with diverse VLAs.

- Safety-first online continual learning

  - Sector: Robotics safety, autonomy

  - What: Real-time or near-real-time adaptation with guardrails that bound forgetting and enable rapid rollback; mixing ER with conservative regularization when needed.

  - Potential tools/products:

    - Safety monitors that gate updates by NBT thresholds

    - Hybrid ER + regularization strategies tuned for high-stakes tasks

  - Assumptions/dependencies: Proven robust metrics under distribution shift, validated rollback pathways, rigorous incident response policies.

Notes on Core Assumptions and Dependencies Across Applications

- Availability and maturity of pretrained VLA backbones (e.g., Pi0, GR00T N1.5, OpenVLA) with broad, diverse pretraining.

- Tasks resembling the manipulation domains evaluated (LIBERO suites); performance may vary under severe domain shifts or non-manipulation tasks.

- Quality and representativeness of small replay samples; replay sampling strategies matter.

- Safe and compliant procedures for on-device or on-prem finetuning (especially in healthcare and public spaces).

- Sufficient compute (edge or cloud) to run brief finetunes; robust telemetry for SR/NBT/KT.

- Organizational readiness for continual updates (versioning, rollback, audit).

- Regulatory acceptance for dynamic learning systems and data retention practices.

Glossary

- Action chunk: A contiguous set of future actions predicted at once by the policy. "predicts an action chunk $a_t$ conditioned on a language instruction $l$ and a history of observations $o_{\le t}$ of length $H$."

- Action head: The module that maps latent representations to action outputs. "which is then used by an action head to predict future actions."

- Backward transfer: Changes in performance on earlier tasks after learning new tasks; can be positive when earlier tasks improve. "positive backward transfer on previously learned tasks"

- Behavior cloning (BC): Imitation learning that trains a policy to mimic expert actions using supervised loss. "behavior cloning (BC) policy models trained from scratch"

- Catastrophic forgetting: Abrupt degradation of performance on previously learned tasks when training on new tasks. "performance on previous tasks seems to degrade from catastrophic forgetting"

- Continual learning: Training a single policy sequentially on multiple tasks while preserving prior knowledge. "Continual learning is a long-standing challenge in robot policy learning,"

- EWC (Elastic Weight Consolidation): A regularization-based continual learning method that penalizes changes to important parameters. "EWC (Kirkpatrick et al., 2017a), which likewise trains on the current task data but adds a regularization penalty"

- Experience Replay (ER): A technique that stores and reuses a subset of past-task data during training on new tasks. "Experience Replay (ER) is a widely used approach in continual learning"

- Finite-horizon Markov Decision Process: A formal task model with states, actions, transitions, and a fixed episode length. "We model a robotic task as a finite-horizon Markov Decision Process: $M = (S, A, T, H, \mu_0)$"

- Forward transfer: The ability to effectively learn new tasks after previous training. "enabling strong forward transfer with little to no forgetting across architectures."

- Goal predicate: A function that evaluates whether a state satisfies the task goal. "we assume access to a goal predicate $g : S \to \{0, 1\}$."

- Imitation learning: Learning policies from expert demonstrations rather than direct reward optimization. "we focus on continual learning via imitation learning."

- Initial state distribution: The probability distribution over starting states for a task. "$\mu_0$ is the initial state distribution"

- Knowledge transfer (KT): A metric capturing aggregate learning progress across tasks. "we additionally analyze knowledge transfer (KT), which measures the aggregate success rate across all tasks"

- Lifelong learning: Another term for continual learning emphasizing ongoing skill acquisition. "continual learning, also known as lifelong learning (Liu et al., 2023b)"

- Multi-view RGB images: Multiple camera views of color images used as visual observations. "Each observation typically includes multi-view RGB images $I_t^1, \ldots, I_t^k$"

- Negative Backward Transfer (NBT): A metric quantifying how much performance on past tasks decreases after learning new ones. "Negative Backward Transfer (NBT) across different replay buffer sizes."

- Pareto frontier: A curve showing trade-offs (e.g., forgetting vs. replay size) where improvements in one dimension may worsen another. "Fig. 4 visualizes this effect through a Pareto frontier that characterizes the trade-off between forgetting (in terms of Negative Backward Transfer) and replay buffer size."

- Pretraining: Training a model on large, diverse datasets before finetuning on downstream tasks. "pretraining plays a critical role in downstream continual learning performance"

- Proprioceptive state: Robot-internal sensing (e.g., joint angles) included in observations. "and the proprioceptive state $q_t$."

- Replay buffer: The memory that stores selected samples from past tasks for ER. "a separate replay buffer."

- Stability-plasticity trade-off: The tension between retaining old knowledge (stability) and acquiring new skills (plasticity). "stability-plasticity trade-off (McCloskey & Cohen, 1989a; French, 1999a)."

- Task-conditioned policy: A single policy whose behavior is conditioned on the current task specification. "using a single task-conditioned policy $\pi(\cdot \mid s, T)$."

- Transition function: The dynamics mapping state-action pairs to next states. "$T : S \times A \to S$ is the transition function."

- Vision-language model (VLM): A foundation model jointly trained on image-text data. "vision-language models (VLMs) pretrained on internet-scale image-text data."

- Vision-Language-Action (VLA) models: Robotic policies combining vision, language, and action modules for control. "Vision-Language-Action models (VLAs) are robotic policies that map visual observations and natural language instructions to actions"

- VLM backbone: The pretrained vision-language encoder used to produce latent representations for control. "with its VLM backbone to obtain a latent representation"
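The Pareto frontier entry in the glossary above can be illustrated with a short sketch that picks the non-dominated configurations when both replay buffer size and NBT should be small. The function name is hypothetical and the (buffer fraction, NBT) pairs are made-up numbers, not results from the paper.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (replay buffer fraction, NBT); lower is better for both


def pareto_frontier(points: List[Point]) -> List[Point]:
    """Return points not dominated by any other point (minimizing both axes)."""
    frontier: List[Point] = []
    best_nbt = float("inf")
    # Sorting by buffer size means a point survives only if it forgets
    # strictly less than every configuration with a smaller or equal buffer.
    for size, nbt in sorted(points):
        if nbt < best_nbt:
            frontier.append((size, nbt))
            best_nbt = nbt
    return frontier


# Made-up measurements for illustration only.
points = [(0.02, 0.05), (0.2, 0.01), (0.1, 0.06), (0.002, 0.3)]
frontier = pareto_frontier(points)
print(frontier)
```

Here the configuration with a 10% buffer is dropped because a 2% buffer already forgets less.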
