Solving an Open Problem in Theoretical Physics using AI-Assisted Discovery

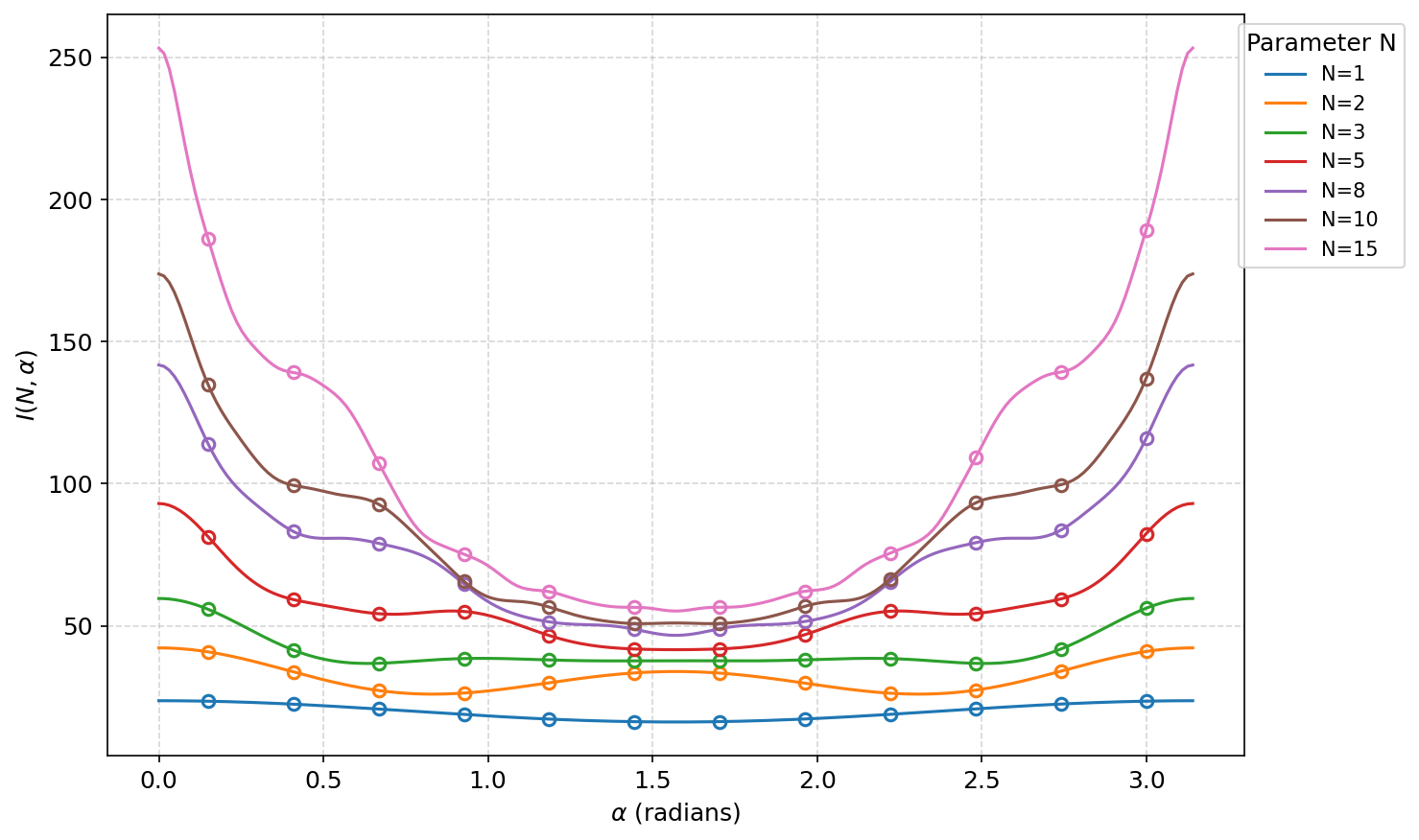

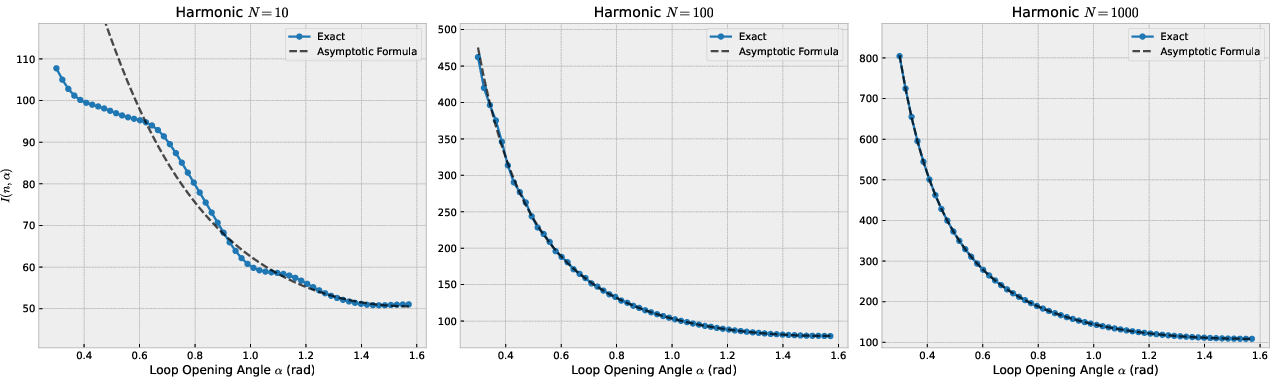

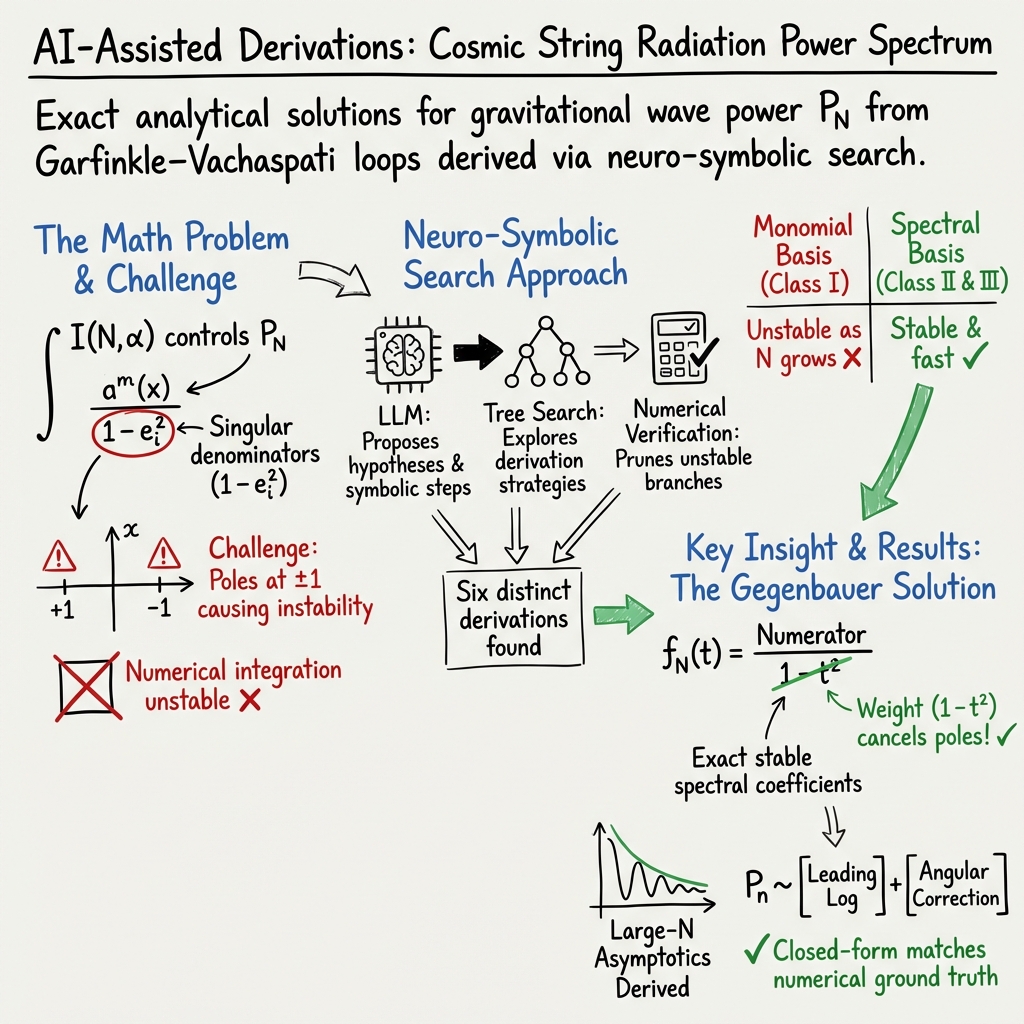

Abstract: This paper demonstrates that artificial intelligence can accelerate mathematical discovery by autonomously solving an open problem in theoretical physics. We present a neuro-symbolic system, combining the Gemini Deep Think LLM with a systematic Tree Search (TS) framework and automated numerical feedback, that successfully derived novel, exact analytical solutions for the power spectrum of gravitational radiation emitted by cosmic strings. Specifically, the agent evaluated the core integral $I(N,\alpha)$ for arbitrary loop geometries, directly improving upon recent AI-assisted attempts \cite{BCE+25} that only yielded partial asymptotic solutions. To substantiate our methodological claims regarding AI-accelerated discovery and to ensure transparency, we detail system prompts, search constraints, and intermittent feedback loops that guided the model. The agent identified a suite of 6 different analytical methods, the most elegant of which expands the kernel in Gegenbauer polynomials $C_l^{(3/2)}$ to naturally absorb the integrand's singularities. The methods lead to an asymptotic result for $I(N,\alpha)$ at large $N$ that both agrees with numerical results and also connects to the continuous Feynman parameterization of Quantum Field Theory. We detail both the algorithmic methodology that enabled this discovery and the resulting mathematical derivations.

Explain it Like I'm 14

Overview

This paper shows how an AI system helped solve a tough math problem from physics. The problem was to work out how much energy in the form of gravitational waves is given off by “cosmic strings” (hypothetical, super‑thin cracks in space-time) as they vibrate. The key math step was a very hard integral (a kind of advanced area‑finding calculation) that humans found tricky because it behaves badly near the edges. The authors built an AI that not only explored many ways to solve it but also found an exact, neat solution and a simple formula that works especially well for large cases.

What questions were they trying to answer?

- Can an AI system actually discover new, correct math results on a real, open problem in physics, not just repeat known tricks?

- Can it find a general, exact solution for a stubborn integral that determines the “power spectrum” (how much wave energy appears at each “note” or harmonic) from a vibrating cosmic string?

- Can it do this in a way that is both fast and numerically stable, and can it find simpler formulas when the harmonic number gets large?

How did they do it?

Think of the task like climbing a mountain with many possible routes:

- The “mountain” is the hard integral that has sharp spikes (called singularities) that make normal calculations unstable.

- The AI is like a team of climbers with a map and a drone. It tries many routes (ideas), checks each one with quick calculations (the “drone” doing numerical tests), and drops the ones that don’t work.

Here’s the setup in simple terms:

- A smart LLM (called Gemini Deep Think) proposes math ideas step by step.

- A “Tree Search” is used, which you can imagine as a branching choose‑your‑own‑adventure: each branch is a different plan (like using different kinds of functions or algebra tricks). The system explores hundreds of these branches.

- Automated feedback acts like a scoreboard: each time the model writes a formula, a high‑precision checker compares it to a trusted numerical answer at random settings. Bad paths are cut; good ones are kept and refined.

- “Negative prompting” tells the AI, “You may not reuse that trick—find another one,” forcing it to discover genuinely different methods rather than repeating the same idea.
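The scoreboard step described in the list above can be sketched as a small randomized checker. This is a minimal illustration under stated assumptions, not the paper's harness: the stand-in integral ∫₀^π dθ/(a + cos θ) = π/√(a² − 1) is a textbook result chosen for convenience (it is not the paper's I(N, α)), and the names `reference_integral`, `candidate_closed_form`, `wrong_candidate`, and `verify` are hypothetical.

```python
import math
import random

def reference_integral(a, n=2000):
    """Composite Simpson's rule for the stand-in integral
    int_0^pi dtheta / (a + cos(theta)), valid for a > 1 (n must be even)."""
    h = math.pi / n
    total = 1.0 / (a + 1.0) + 1.0 / (a - 1.0)  # endpoints theta = 0 and theta = pi
    for k in range(1, n):
        weight = 4.0 if k % 2 else 2.0
        total += weight / (a + math.cos(k * h))
    return total * h / 3.0

def candidate_closed_form(a):
    """Proposed closed form for the stand-in integral: pi / sqrt(a^2 - 1)."""
    return math.pi / math.sqrt(a * a - 1.0)

def wrong_candidate(a):
    """A deliberately incorrect proposal, to show a branch being rejected."""
    return math.pi / a

def verify(candidate, trials=20, rel_tol=1e-8, seed=0):
    """Return True if the candidate matches the numerical reference at
    randomly drawn parameter values, and False at the first mismatch."""
    rng = random.Random(seed)
    for _ in range(trials):
        a = rng.uniform(1.5, 5.0)
        ref = reference_integral(a)
        if abs(candidate(a) - ref) > rel_tol * abs(ref):
            return False
    return True

print(verify(candidate_closed_form))
print(verify(wrong_candidate))
```

In a search loop, a `False` result would mark the branch for pruning, while a `True` result would let it be kept and refined. The tolerance and number of trials here are arbitrary choices for the sketch.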

In math terms, the AI tried three classes of approaches:

- Monomial expansions: break the problem into powers like t², t⁴, t⁶. This is straightforward but can be unstable for big inputs.

- Spectral methods: like splitting a sound into notes, it splits the function into standard “shapes” (Legendre polynomials) that fit the sphere.

- A special “Gegenbauer” method: choose shapes that perfectly match the built‑in weight of the problem so the spikes cancel naturally. That makes the math much cleaner.
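To make the "shapes that match the built-in weight" idea concrete, the sketch below checks numerically that the Gegenbauer polynomials with parameter 3/2 are orthogonal under the weight (1 − t²) on [−1, 1]. It uses the standard three-term recurrence and the standard normalization 2(n+1)(n+2)/(2n+3); the function names and the Simpson-rule quadrature are illustrative choices, not taken from the paper.

```python
def gegenbauer(n, lam, t):
    """Evaluate C_n^{(lam)}(t) with the standard three-term recurrence:
    k*C_k = 2*t*(k + lam - 1)*C_{k-1} - (k + 2*lam - 2)*C_{k-2}."""
    if n == 0:
        return 1.0
    prev, curr = 1.0, 2.0 * lam * t
    for k in range(2, n + 1):
        prev, curr = curr, (2.0 * t * (k + lam - 1.0) * curr
                            - (k + 2.0 * lam - 2.0) * prev) / k
    return curr

def weighted_inner(m, n, steps=4000):
    """Composite Simpson approximation of
    int_{-1}^{1} (1 - t^2) * C_m^{(3/2)}(t) * C_n^{(3/2)}(t) dt."""
    h = 2.0 / steps
    total = 0.0
    for k in range(steps + 1):
        t = -1.0 + k * h
        w = 1.0 if k in (0, steps) else (4.0 if k % 2 else 2.0)
        total += w * (1.0 - t * t) * gegenbauer(m, 1.5, t) * gegenbauer(n, 1.5, t)
    return total * h / 3.0

def expected_norm(n):
    """Closed-form value of the inner product when m == n."""
    return 2.0 * (n + 1) * (n + 2) / (2 * n + 3)

# Off-diagonal entries should be near zero; diagonal entries should match the norm.
for m, n in [(1, 3), (2, 4), (3, 3), (4, 4)]:
    print(m, n, weighted_inner(m, n), expected_norm(n) if m == n else 0.0)
```

Because the factor (1 − t²) is exactly the orthogonality weight of this family, a 1/(1 − t²)-type denominator multiplied by the weight leaves a regular integrand, which is the cancellation the bullet above describes.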

A human-AI handoff was also used: after the AI found several methods, a researcher asked a bigger model to double‑check and simplify, which fixed a small detail and helped turn an infinite sum into a tidy, closed formula.

What did they find, and why is it important?

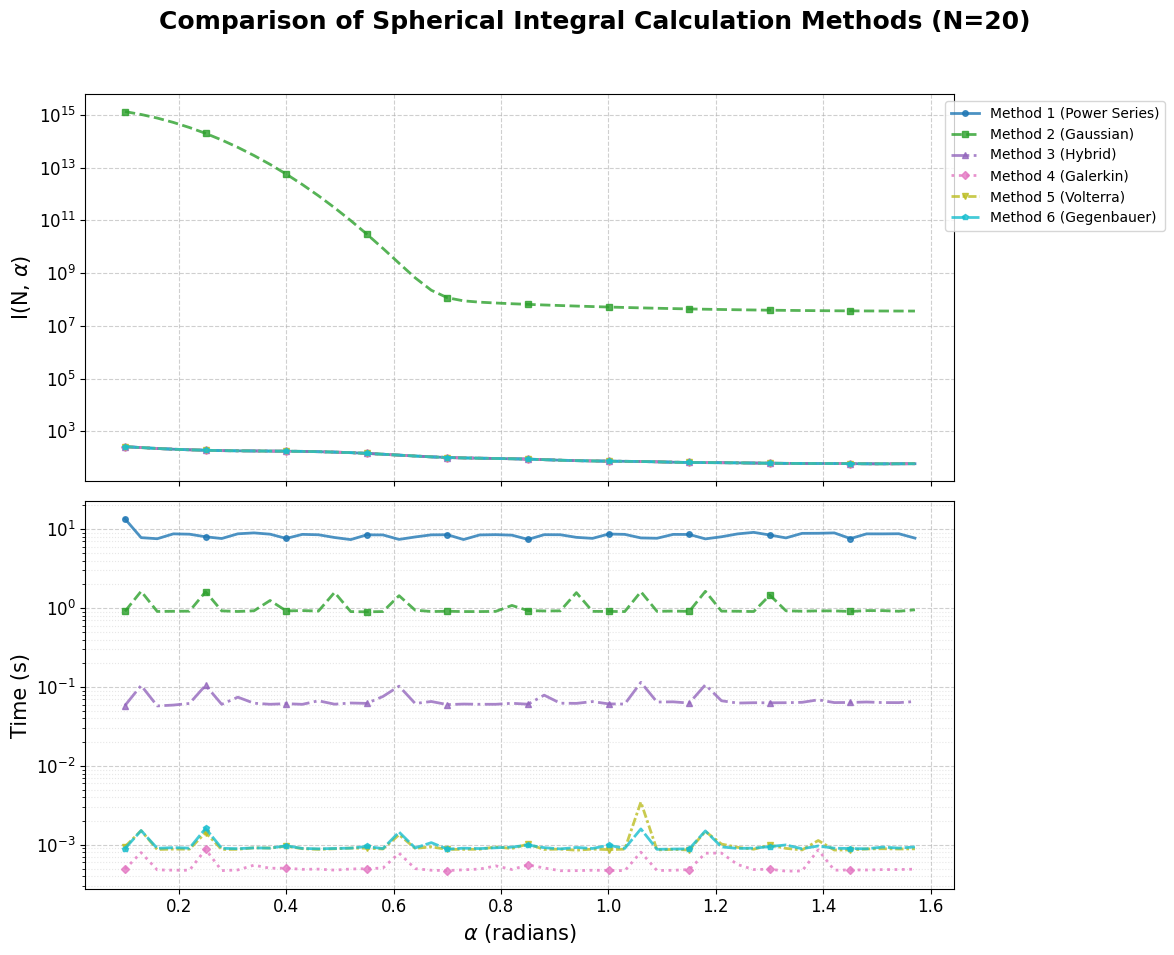

- Six distinct solution paths: The AI discovered six different ways to solve the integral—three based on plain power series, two spectral methods, and one special Gegenbauer method.

- An exact solution: The most elegant solution uses Gegenbauer polynomials. These “shapes” are tailored to the problem so the nasty parts cancel out. This led to an exact, closed‑form solution for the key quantity.

- Fast and stable computation: The spectral and Gegenbauer methods are not only accurate but also much faster and more reliable than the monomial (power‑series) ones, especially for high harmonics (large N).

- Strong agreement with numerical checks: When compared with precise computer calculations, the exact formulas matched extremely well across many test cases.

- A simple large‑N formula: For high harmonics, the authors derived a compact “asymptotic” formula (a good approximation for large N). In everyday terms, it tells you how the energy at those higher “notes” depends on N and the angle α in a very simple way, involving logarithms. That’s valuable because such formulas are easier to use and understand in big‑picture physics.

Why this matters:

- It’s a clear example that AI can do more than guess—it can help find real, new math results, check them, and present them in usable forms.

- For physics, having exact and fast formulas makes it easier to model signals from cosmic strings, which might contribute to the background hum of gravitational waves we measure.

What’s the bigger impact?

- AI as a discovery partner: The system didn’t just compute; it explored ideas, tested them, and found multiple correct routes—like having a creative junior researcher who also runs careful experiments.

- Transparency and repeatability: The authors share prompts, checks, and constraints so others can repeat the process. That’s important for science.

- Human + AI teamwork: A careful human review step improved the results, showing that the best outcomes often come from collaboration.

- Future potential: The same approach could attack other hard integrals and problems in math, physics, and engineering—speeding up the path from puzzle to solution.

In short, the paper shows that with the right guardrails and feedback, AI can help discover new math in physics problems, find exact and practical formulas, and do it faster and more reliably than many traditional methods.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list distills what remains missing, uncertain, or unexplored in the paper, phrased to guide concrete follow-on research:

- Scope of applicability to loop geometries: The derivations are demonstrated for the Garfinkle–Vachaspati loop setup; it remains unclear how (and under what assumptions) the methods generalize to arbitrary loop shapes (non-planar loops, loops with cusps/kinks, or small-scale structure) and whether the same integral structure persists.

- Exact finite-N closed form: Beyond spectral series and tail sums, the paper does not present a single closed-form expression for I(N, α) at finite N; either deriving such a form (in terms of special functions) or proving its non-existence is open.

- Explicit formulas for C_{2j} at finite N: The Gegenbauer-to-Legendre mapping yields tail sums for C_{2j}, but closed-form, finite-N expressions for individual coefficients C_{2j} are not provided; finding them (or tight bounds) would enable rigorous error control.

- Rigorous convergence and limit exchanges: Several steps (e.g., replacing oscillatory kernels by distributional limits with a small mass regulator, interchanging sums/integrals, and applying the Riemann–Lebesgue lemma) lack fully rigorous justification and conditions (uniformity in α, dominated convergence requirements); formal proofs and domains of validity are needed.

- Uniform asymptotic error bounds: The large-N asymptotic formula lacks quantified remainder terms and rates (e.g., O(1/N) or O(1/N²)), as well as proof of uniformity in α; deriving explicit error bounds and their α-dependence is essential.

- Behavior near singular angles (α → 0, π): The asymptotic expression includes factors like 1/sin² α and logs of trigonometric functions, indicating divergences near α = 0 or π; a matched asymptotic analysis (or physical regularization) and precise limiting prescriptions are missing.

- Parity and finite-N oscillations: The paper notes finite-N parity effects but does not quantify them or provide systematic correction terms; a controlled expansion capturing oscillatory corrections would improve practical use.

- Spectral truncation strategy and guarantees: While spectral methods are efficient, no a priori truncation criteria (J_max as a function of N and tolerance) or rigorous truncation error bounds are provided; practical guidelines and proofs are needed.

- Numerical baseline specification: The “high-precision numerical calculation” used for verification is insufficiently specified (quadrature scheme, handling of integrand singularities, error control, adaptive strategies); detailed methodology and error budgets are necessary for reproducibility.

- Reproducibility artifacts: Full code, prompts, model versions, random seeds, and scripts to reproduce all figures are not released; without these, independent replication is limited.

- Model dependence and ablations: Sensitivity to LLM version/size, prompt wording, negative-prompting strategies, and the contribution of each pipeline component (LLM vs. TS vs. numerical feedback) is not systematically evaluated; ablation studies are needed.

- Human-in-the-loop reliance: The final analytic simplifications required manual intervention and an upgraded model; a quantitative assessment of where autonomy breaks down and how to automate these steps is open.

- Formal verification: Given the reported initial error in Method 5, integrating computer algebra systems or proof assistants to certify identities, recurrences, and convergence statements is an unresolved need.

- Conditioning and stability remedies: Method 5 shows conditioning spikes near specific α, and Method 2 (Gaussian lifting) fails at moderate N; developing preconditioners, alternative normalizations, or basis choices that eliminate these pathologies remains open.

- Stable evaluation of spherical Bessel functions: Accurate, stable computation of j_ℓ(A) for large A and ℓ is crucial but not detailed; selecting asymptotic regimes, recurrence directions, or continued fractions with certified errors would strengthen the numerical pipeline.

- Complexity constants and memory: While several methods are O(N), the constants, memory usage, and practical scaling for large N are not quantified; profiling and asymptotic/empirical complexity analyses are missing.

- Edge cases and definitions at α = 0, π: The integral’s definition and evaluation exactly at the singular angles are not treated; clear limiting procedures and consistency with physical expectations are required.

- Validation against physical observables: The analytic results are not propagated to loop ensemble predictions or compared to observational constraints (e.g., PTA backgrounds); connecting the mathematics to astrophysical inferences is left open.

- Generalization of the framework: It is untested how well the neuro-symbolic methodology transfers to other classes of integrals (different weights, multiple directions e_1, e_2, e_3, higher-dimensional spheres S^d, or non-spherical manifolds); benchmarks on a broader suite of problems are needed.

- Alternative orthogonal bases: The paper focuses on Gegenbauer C_l^{(3/2)}; exploring ultraspherical families with different λ or other weighted bases that could further optimize conditioning and convergence is unexplored.

- Robustness of Tree Search: The search explored ~600 nodes with 80% pruning, but scaling behavior with problem complexity, risk of reward hacking, and failure modes are not characterized; developing metrics and stopping criteria is an open area.

- Novelty assurance: There is no audit to ensure the solution pathways were not memorized from training data; documenting checks for data contamination and provenance would strengthen the novelty claim.

- Uncertainty quantification: Reported numerical comparisons lack uncertainty bars combining quadrature error, series truncation error, and floating-point error; a comprehensive uncertainty budget per method is missing.

- Stabilization of monomial approaches: While labeled unstable, it is untested whether resummation, compensated summation, or orthogonalized monomial bases could salvage Methods 1–3; investigating such stabilizations may broaden applicability.

- Multi-harmonic and multi-direction extensions: Whether the techniques handle correlations across multiple harmonics or integrals involving more than two directions (e.g., e_1, e_2, e_3) is not addressed; extending the formalism is an open direction.
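The point about recurrence directions for spherical Bessel functions in the list above can be illustrated with a short experiment. The sketch below compares the forward recurrence with a Miller-style backward recurrence against a power-series reference for j_ℓ(x). It is a generic demonstration of a well-known numerical effect, not the paper's pipeline; the function names, the `extra` starting offset, and the test point are assumptions made for illustration.

```python
import math

def sph_bessel_series(l, x, terms=40):
    """Reference value from the ascending power series
    j_l(x) = x^l * sum_k (-x^2/2)^k / (k! * (2l+2k+1)!!)."""
    coeff = 1.0
    for odd in range(1, 2 * l + 2, 2):  # builds 1 / (2l+1)!!
        coeff /= odd
    total, term = 0.0, coeff
    for k in range(terms):
        total += term
        term *= -x * x / (2.0 * (k + 1) * (2 * l + 2 * k + 3))
    return total * x ** l

def sph_bessel_upward(l, x):
    """Forward recurrence j_{k+1} = (2k+1)/x * j_k - j_{k-1}.
    Rounding errors are amplified once k exceeds x."""
    j_prev = math.sin(x) / x
    if l == 0:
        return j_prev
    j_curr = math.sin(x) / x ** 2 - math.cos(x) / x
    for k in range(1, l):
        j_prev, j_curr = j_curr, (2 * k + 1) / x * j_curr - j_prev
    return j_curr

def sph_bessel_downward(l, x, extra=25):
    """Miller-style backward recurrence, normalised with j_0(x) = sin(x)/x.
    Assumes x is not close to a multiple of pi (a zero of j_0)."""
    start = l + extra
    f_next, f_curr = 0.0, 1.0  # arbitrary seeds at indices start+1 and start
    wanted = None
    for k in range(start, 0, -1):
        if k == l:
            wanted = f_curr
        f_next, f_curr = f_curr, (2 * k + 1) / x * f_curr - f_next
    if l == 0:
        wanted = f_curr
    return wanted * (math.sin(x) / x) / f_curr

l, x = 15, 1.0
ref = sph_bessel_series(l, x)
print("series  ", ref)
print("downward", sph_bessel_downward(l, x))
print("upward  ", sph_bessel_upward(l, x))
```

For ℓ well above x the forward result is dominated by amplified rounding error, while the backward result tracks the series. For large arguments and orders a production implementation would also need overflow control and a normalization that avoids zeros of j_0, which this sketch does not attempt.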

Practical Applications

Immediate Applications

The paper’s methods and results can already be translated into concrete tools and workflows across domains. Below are actionable use cases, with sector tags and key dependencies.

- Drop-in spectral module for cosmic-string power spectrum modeling [Sector: astrophysics/astronomy]

- What: Implement Method 6 (Gegenbauer) and the asymptotic formula for fast, stable evaluation of the power spectrum P_N(α) in Pulsar Timing Array (PTA) and gravitational-wave pipelines.

- Why: Orders-of-magnitude speedups and improved numerical stability for large N when scanning broad parameter grids (enabling faster likelihood evaluations).

- How: Provide a Python/C++ library (with special-function backends for spherical Bessel and Ci/Cin) callable from PTA analysis frameworks (e.g., enterprise, libstempo).

- Dependencies/assumptions: Accurate special-function libraries (e.g., SciPy, Boost), validation around α→0 or α→π (where sin α is small), and basic unit tests against numerical quadrature for regression.
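As a small example of the kind of regression test mentioned above, the sketch below evaluates the Cin special function, Cin(z) = ∫₀^z (1 − cos t)/t dt, in two independent ways: its power series and direct quadrature of the defining integral. The function names are hypothetical, and the series is only suitable for moderate z; a library backend would be the practical choice for large arguments.

```python
import math

def cin_series(z, terms=60):
    """Power series Cin(z) = sum_{k>=1} (-1)^(k+1) * z^(2k) / (2k * (2k)!).
    Suitable for moderate |z|; cancellation grows for large z."""
    total = 0.0
    power_over_fact = 1.0  # holds z^(2k) / (2k)! as k advances
    for k in range(1, terms + 1):
        power_over_fact *= z * z / ((2 * k - 1) * (2 * k))
        total += (-1) ** (k + 1) * power_over_fact / (2 * k)
    return total

def cin_quadrature(z, steps=2000):
    """Composite Simpson's rule on the defining integral.
    The integrand is written as 2*sin(t/2)^2 / t to avoid subtracting
    nearly equal numbers, and its limit at t = 0 is 0."""
    def integrand(t):
        if t == 0.0:
            return 0.0
        s = math.sin(0.5 * t)
        return 2.0 * s * s / t
    h = z / steps
    total = integrand(0.0) + integrand(z)
    for k in range(1, steps):
        total += (4.0 if k % 2 else 2.0) * integrand(k * h)
    return total * h / 3.0

for z in (0.5, 1.0, 3.0):
    print(z, cin_series(z), cin_quadrature(z))
```

Cin is related to the ordinary cosine integral by Cin(z) = γ + ln z − Ci(z), with γ the Euler-Mascheroni constant, so a library that provides Ci can supply Cin as well.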

- Spherical convolution acceleration and stability improvements [Sector: computer graphics, vision, geosciences]

- What: Use the Funk–Hecke-based spectral formulation (Legendre expansion) to compute spherical convolutions (e.g., environment lighting, precomputed radiance transfer, diffusion MRI orientation functions) with improved conditioning.

- Why: High-frequency content and near-singular kernels often destabilize naive expansions; the paper’s spectral/Gegenbauer approach removes these pathologies.

- How: Integrate a “SphericalConvolution” routine in graphics/vision toolkits, supporting P_l and C_l^{(3/2)} bases with auto-selection for stability.

- Dependencies/assumptions: Spherical harmonics infrastructure already in place; numerical stability verified for specific domain kernels.

- Regularization of singular integrals via Gegenbauer weighting [Sector: electromagnetics, acoustics, wireless]

- What: Apply the weight-matched Gegenbauer expansion to integrals with 1/(1−t²)-type singularities (e.g., antenna radiation patterns, scattering) for stable evaluation.

- Why: Cancels singular denominators analytically, enabling robust and fast evaluation at high orders.

- How: Provide a “Gegenbauer-regularizer” utility that transforms problem kernels before numerical integration or spectral projection.

- Dependencies/assumptions: Knowledge of kernel structure and singularities; availability of orthogonal polynomial routines; validation on representative geometries.

- Auto-Galerkin and Volterra code generator for spectral solvers [Sector: scientific software/engineering simulation]

- What: Package Methods 4 (Galerkin matrix) and 5 (Volterra recurrence) as a code generator that emits stable, O(N) spectral solvers (tridiagonal SPD systems, forward recurrences).

- Why: Engineers and computational scientists often need fast, stable spectral solvers; automated generation reduces time-to-solution and errors.

- How: A CLI tool that ingests orthogonality weight and basis, emits compiled routines (C++/Python) for coefficient recovery and convolution.

- Dependencies/assumptions: Trusted linear algebra backends; test harness with reference integrals; domain-specific basis choices.
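The O(N) cost quoted above for tridiagonal SPD systems comes from the fact that such systems can be solved by a single forward sweep and a single back-substitution. The sketch below is the generic Thomas algorithm applied to a small illustrative matrix (diagonal 2, off-diagonals −1); it is not the paper's Galerkin matrix, whose entries are not given here, and `solve_tridiagonal` is a hypothetical name.

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm: solve a tridiagonal system in O(N) operations.
    lower[i] multiplies x[i-1] in row i (lower[0] is unused);
    upper[i] multiplies x[i+1] in row i (upper[-1] is unused).
    No pivoting is performed, which is safe for symmetric positive
    definite or diagonally dominant matrices."""
    n = len(diag)
    c = [0.0] * n  # modified super-diagonal
    d = [0.0] * n  # modified right-hand side
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom if i < n - 1 else 0.0
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x

# Illustrative SPD system whose exact solution is [1, 2, 3, 4, 5].
n = 5
lower = [0.0] + [-1.0] * (n - 1)
diag = [2.0] * n
upper = [-1.0] * (n - 1) + [0.0]
rhs = [0.0, 0.0, 0.0, 0.0, 6.0]
solution = solve_tridiagonal(lower, diag, upper, rhs)
print(solution)
```

A code generator of the kind described would emit a routine like this with the matrix entries filled in from the chosen basis and weight.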

- AI-guided math discovery harness for lab use [Sector: academia/industrial R&D]

- What: Adopt the neuro-symbolic workflow (LLM + Tree Search with PUCT + automated numerical feedback + negative prompting) as an internal “math bench.”

- Why: Rapidly explores multiple derivation strategies, prunes unstable paths, and cross-validates results; improves reproducibility and discovery velocity.

- How: Provide a templated Jupyter extension (“MathBench”) that enforces function signatures, auto-runs numerical baselines, injects exceptions/errors back into the LLM, and logs search trees.

- Dependencies/assumptions: Access to a capable LLM (e.g., Gemini-class), numerical baselines for verification, governance around data/IP.
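For readers unfamiliar with PUCT, the sketch below shows one common form of the selection rule (the AlphaZero-style score Q + c · P · √N_parent / (1 + n_child)). The source states only that a PUCT approach was used, so the exact formula, the constant `c_puct`, and the node values below are assumptions invented for illustration.

```python
import math

def puct_score(q_value, prior, parent_visits, child_visits, c_puct=1.5):
    """Exploitation term Q plus an exploration bonus that is large for
    children with a high prior and few visits."""
    return q_value + c_puct * prior * math.sqrt(parent_visits) / (1 + child_visits)

def select_child(children, c_puct=1.5):
    """Return the child dict with the highest PUCT score.
    Each child has keys: name, q (mean reward), prior, visits."""
    parent_visits = sum(ch["visits"] for ch in children)
    return max(
        children,
        key=lambda ch: puct_score(ch["q"], ch["prior"], parent_visits,
                                  ch["visits"], c_puct),
    )

# Made-up statistics for three candidate strategies at one tree node.
children = [
    {"name": "monomial expansion", "q": 0.20, "prior": 0.3, "visits": 10},
    {"name": "Legendre spectral", "q": 0.60, "prior": 0.4, "visits": 12},
    {"name": "Gegenbauer basis", "q": 0.50, "prior": 0.3, "visits": 0},
]
print(select_child(children)["name"])
```

With these numbers the unvisited branch wins despite a lower mean reward, which is the exploration behaviour the rule is designed to produce; as a branch accumulates visits, its bonus shrinks and the mean reward dominates.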

- Quality assurance for symbolic-to-numeric pipelines [Sector: scientific computing/software QA]

- What: Integrate an automated checker (like the paper’s harness) into CI pipelines to verify symbolic manipulations against high-precision numerics, detecting cancellation/instability.

- Why: Prevents subtle math errors and numerical pathologies from silently shipping in scientific software.

- How: CI hooks that execute proposed closed-form code on randomized parameter sets and compare to high-precision references (with thresholds).

- Dependencies/assumptions: High-precision libraries (mpmath/Boost.Multiprecision); compute budget for CI; metrics for conditioning and error bounds.
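A checker of this kind is aimed at failures such as catastrophic cancellation. The sketch below shows the effect on a simple expression unrelated to the paper's integral: (1 − cos x)/x² evaluated literally versus the algebraically identical form 2 sin²(x/2)/x². The function names are hypothetical.

```python
import math

def naive(x):
    """(1 - cos x) / x^2 evaluated literally; loses digits for small x
    because 1 and cos(x) are nearly equal."""
    return (1.0 - math.cos(x)) / (x * x)

def stable(x):
    """Algebraically identical form 2*sin(x/2)^2 / x^2, which avoids
    the subtraction of nearly equal numbers."""
    s = math.sin(0.5 * x)
    return 2.0 * s * s / (x * x)

def relative_gap(x):
    """Relative disagreement between the two evaluations."""
    return abs(naive(x) - stable(x)) / abs(stable(x))

for x in (1e-1, 1e-4, 1e-8):
    print(x, naive(x), stable(x), relative_gap(x))
```

Both forms agree closely at x = 0.1, but for very small x the literal form drifts far from the correct limit of 1/2. A CI hook could flag any proposed formula whose disagreement with a stable or high-precision reference exceeds a chosen threshold.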

- Rapid asymptotics prototyper [Sector: physics/quantitative finance/applied math]

- What: Wrap the paper’s approach to derive and validate large-parameter asymptotics (e.g., stationary phase, steepest descent variants) with automated cross-checks against numerics.

- Why: Many integrals in QFT, statistical physics, and option pricing require robust asymptotics; the harness can accelerate hypothesis → validation cycles.

- How: “AsymptoticSynth” plugin that drives the LLM to produce a leading-log plus remainder structure and verifies across regimes.

- Dependencies/assumptions: Representative parameter sweeps for validation; access to special functions; careful handling near turning points/singularities.

- Teaching and tutoring modules in advanced mathematical methods [Sector: education]

- What: Classroom-ready notebooks that reproduce the six methods, show failure modes (e.g., cancellation), and demonstrate spectral vs monomial conditioning.

- Why: Students see multiple solution paths, numerical pitfalls, and verification best practices; builds intuition for stable computation.

- How: Open educational resources with auto-graders and harness-based feedback on student derivations/code.

- Dependencies/assumptions: Instructor access to LLMs (optional), numerical libs, and sandboxed compute.

- Reference implementation for spherical integrals in medical imaging [Sector: healthcare (imaging)]

- What: Apply spectral/Gegenbauer techniques to diffusion MRI spherical integrals and spherical deconvolution steps for more stable high-order reconstructions.

- Why: High angular resolution methods can suffer from instability; weight-matched orthogonalization improves numerical behavior.

- How: Package as a plugin for DIPY or MRtrix with benchmarks on publicly available datasets.

- Dependencies/assumptions: Domain-specific validation; integration with existing pipelines; careful handling of noise and regularization.

- Multi-method cross-validation for critical calculations [Sector: all]

- What: Use negative prompting to force the LLM to produce independent derivations (e.g., monomial vs spectral), providing internal consistency checks for high-stakes results.

- Why: Reduces risk of single-path hallucinations or hidden errors.

- How: A “dual-derivation” workflow in internal R&D SOPs, with auto-comparison and escalation when discrepancies exceed thresholds.

- Dependencies/assumptions: LLM diversity of methods; numerical oracle availability for tie-breaking; human-in-the-loop for adjudication.

Long-Term Applications

The work also points to more ambitious directions that require further research, scaling, or standardization before broad deployment.

- Autonomous discovery engines for mathematics and theoretical physics [Sector: academia]

- What: End-to-end systems that propose, validate, and refine proofs/derivations with hierarchical verification and minimal human intervention.

- Why: Scales exploration of open problems; triages promising paths; documents full search provenance.

- Dependencies/assumptions: Stronger formal verification (proof assistants), better guardrails against hallucination, and richer numerical oracles where closed-form ground truths do not exist.

- CAS-integrated “AI mathematician” with stability-aware solvers [Sector: software]

- What: Integrate LLM+TS+feedback into computer algebra systems (e.g., Mathematica, Maple, SymPy) to auto-select numerically stable representations (e.g., Gegenbauer over Legendre when weights mismatch).

- Why: Elevates CAS from manipulation to principled, stability-aware computation.

- Dependencies/assumptions: Deep integration with CAS internals, special-function coverage, and standardized conditioning diagnostics.

- Near–real-time gravitational-wave data analysis at scale [Sector: astrophysics/astronomy]

- What: Use the asymptotic closed-form to enable low-latency inference on cosmic-string signals across large parameter spaces (PTAs, LISA).

- Why: Enables rapid hypothesis testing and follow-up strategies.

- Dependencies/assumptions: Validation on end-to-end pipelines, extension to broader loop geometries and astrophysical priors, stress-testing under noise/systematics.

- Automated design of stable spectral/PDE solvers [Sector: engineering simulation/energy/robotics]

- What: Generalize “Auto-Galerkin/Auto-Volterra” to PDEs (radiative transfer, Helmholtz, elasticity) and control (Riccati equations) with stability-first code synthesis.

- Why: Reduces manual trial-and-error in selecting bases, preconditioners, and boundary treatments.

- Dependencies/assumptions: Domain constraints encoding (BCs, geometries), verified preconditioning libraries, and performance tuning on heterogeneous hardware.

- QFT and HEP integral assistant using Feynman-parameter pathways [Sector: high-energy physics]

- What: Systematically derive parameterizations and asymptotics of multi-loop integrals, propose contour deformations, and validate numerically.

- Why: Bridges symbolic derivation and numeric regulators (dimensional regularization, mass cutoffs) with auditable steps.

- Dependencies/assumptions: High-precision multi-dimensional integration or sector decomposition tools; tight integration with community codes.

- Policy frameworks for AI-accelerated science (transparency and credit) [Sector: policy]

- What: Guidelines that mandate disclosure of prompts, search constraints, and verification harnesses when publishing AI-assisted results; norms for attribution and authorship.

- Why: Ensures reproducibility and accountability; supports community trust.

- Dependencies/assumptions: Alignment across journals, funders, and institutions; practical tooling to attach artifacts to publications.

- AI-augmented peer review and math verification services [Sector: scholarly publishing]

- What: Third-party services that reproduce claimed derivations with independent LLM+TS runs and numerical checks, flagging instability or gaps.

- Why: Raises the bar for mathematical rigor and reduces reviewer burden.

- Dependencies/assumptions: Compute and model access; standardized benchmarks and acceptance thresholds.

- Enterprise R&D copilots for theoretical/algorithmic innovation [Sector: pharma, finance, energy, aerospace]

- What: Internal copilots that propose multiple derivations, test stability, and produce deployment-ready code for domain-specific integrals/approximations (e.g., Fourier-based option pricing, scattering in materials).

- Why: Shortens time from idea to reliable prototype; encourages method diversity and cross-validation.

- Dependencies/assumptions: Domain data confidentiality, compliance, and robust governance; customization for organization-specific kernels and constraints.

- Numerical stability audits as a standard artifact [Sector: cross-domain]

- What: Make conditioning and cancellation analyses first-class outputs (plots, metrics, regression tests) alongside code and theory.

- Why: Codifies best practices surfaced in the paper (e.g., failure of monomial expansions at large N) into enforceable standards.

- Dependencies/assumptions: Tooling to compute conditioning indicators; community consensus on metrics.

- Curriculum redesign around neuro-symbolic problem solving [Sector: education]

- What: Integrate tree-search-based exploration, negative prompting for multiple methods, and automated feedback into advanced math/physics curricula.

- Why: Trains students to value method diversity, rigor, and stability.

- Dependencies/assumptions: Scalable access to LLMs, instructor training, and assessment frameworks that reward process as well as result.

Notes on Assumptions and Dependencies (cross-cutting)

- Model capability: Results depend on access to advanced reasoning LLMs (Gemini-class or equivalent) and effective prompt design.

- Verification oracles: The approach presumes availability of high-precision numerical baselines; for some open problems, these may be unavailable or computationally expensive.

- Numerical libraries: Reliable special-function implementations and high-precision arithmetic (e.g., mpmath, Boost) are crucial to stability.

- Human-in-the-loop: Final verification and interpretation often require expert oversight, especially when generalizing beyond the demonstrated integral.

- Reproducibility: Publishing prompts, search constraints, and test harnesses is essential to trust and adoption; institutions may need policy and tooling support.

- Generalization boundaries: Methods shown for spherical integrals and specific singularities; adaptation to other geometries/kernels may require new basis choices and analysis.

Glossary

- Associated Legendre polynomials: Special functions extending Legendre polynomials with an order parameter, often used in solving angular parts of spherical problems. "this corresponds to a transient matrix conditioning issue near a root of the associated Legendre polynomials."

- Bauer plane wave expansion: An expansion expressing trigonometric plane waves in Legendre polynomials with spherical Bessel coefficients. "we use the Bauer plane wave expansion"

- Catastrophic cancellation: A numerical instability where subtracting nearly equal numbers causes significant loss of precision. "catastrophic cancellation, unstable monomial sums or ill conditioned basis transformations"

- Chebyshev: A family of orthogonal polynomials useful for approximation and spectral methods. "basis expansions (e.g., power series, Legendre, Chebyshev, Jacobi, Gegenbauer)"

- Cin(z): The generalized cosine integral function defined by Cin(z) ≡ ∫₀^z (1−cos t)/t dt. "The resulting integral is the standard definition of the generalized cosine integral function :"

- Complementary Cosine Integral: A variant of the cosine integral appearing in closed-form asymptotic expressions. "finite closed-form expression involving the Complementary Cosine Integral, improving the solution from an exact infinite series to a fully analytical finite form."

- Cosmic strings: Hypothetical one-dimensional topological defects in spacetime that can emit gravitational radiation. "gravitational radiation emitted by cosmic strings."

- Eikonal propagators: High-energy limit approximations of propagators in quantum field theory, treating trajectories as straight lines. "We treat the denominators as Quantum Field Theory (QFT) massless eikonal propagators."

- Feynman parameterization: A method to combine multiple propagator denominators into a single integral over auxiliary parameters. "connects to the continuous Feynman parameterization of Quantum Field Theory."

- Funk-Hecke Convolution Theorem: A theorem that diagonalizes spherical convolutions in terms of spherical harmonics or Legendre polynomials. "exploiting the Funk-Hecke Convolution Theorem."

- Galerkin Matrix: A linear system obtained by projecting equations onto a finite basis, used in spectral/Galerkin methods. "Method 4: Spectral Galerkin (Matrix Method)"

- Gamma function: A generalization of the factorial to real and complex arguments, with Γ(n) = (n−1)! for positive integers n. "The radial integral can be evaluated directly as "

- Garfinkle-Vachaspati string: A specific model of cosmic string used in gravitational radiation calculations. "the power emitted by a Garfinkle-Vachaspati string is governed by the integral:"

- Gegenbauer polynomials: Orthogonal polynomials generalizing Legendre and Chebyshev polynomials, suited to weights like 1−t². "expands the kernel in Gegenbauer polynomials to naturally absorb the integrand's singularities."

- Gravitational radiation: Ripples in spacetime (gravitational waves) produced by accelerating masses. "gravitational radiation emitted by cosmic strings."

- Harmonic Numbers: The sequence H_k = ∑_{i=1}^{k} 1/i, appearing in asymptotic analyses. "which is precisely the difference between consecutive even Harmonic Numbers ():"

- Jacobi: A family of orthogonal polynomials that generalize many classical polynomial families. "basis expansions (e.g., power series, Legendre, Chebyshev, Jacobi, Gegenbauer)"

- Legendre differential equation: The ODE whose solutions are Legendre polynomials, arising in spherical problems. "derive a recurrence relation for by multiplying the Legendre differential equation by and integrating"

- Legendre polynomials: Orthogonal polynomials on [−1,1] used in spherical expansions and solving angular PDEs. "analytical expansion in Legendre polynomials difficult due to the non-matching weight functions."

- PUCT: Predictor plus upper confidence bound; a tree search strategy balancing exploration and exploitation. "The TS algorithm utilized a predictor plus upper confidence bound (PUCT) approach to balance exploitation and exploration of novel solution strategies."

- Pulsar Timing Arrays: Observational networks that detect low-frequency gravitational waves via pulsar timing variations. "following observations of the stochastic background by Pulsar Timing Arrays."

- Quantum Field Theory (QFT): The theoretical framework combining quantum mechanics and special relativity to describe fields and particles. "We treat the denominators as Quantum Field Theory (QFT) massless eikonal propagators."

- Riemann-Lebesgue lemma: A result stating that Fourier transforms of integrable functions vanish at infinity, used to justify distributional limits. "The Riemann-Lebesgue lemma then implies that the kernel converges to a strict distribution."

- Spherical Bessel function: Special functions j_l(x) related to radial solutions in spherical coordinates. "where is the spherical Bessel function of the first kind."

- Spherical convolution: Convolution defined on the sphere, diagonalized by spherical harmonics/Legendre polynomials. "Since is a spherical convolution, if , then we can use the Funk-Hecke Theorem"

- Symmetric positive definite (SPD) matrix: A matrix with positive eigenvalues and symmetry, ensuring stable solvability of linear systems. "Thus, is a symmetric positive definite tridiagonal matrix."

- Tree Search (TS): A combinatorial search over branching solution states, often guided by scoring and pruning. "Tree Search (TS) algorithm"

- Volterra Recurrence: A recurrence relation approach inspired by Volterra-type integral/differential equations for sequential coefficient computation. "Method 5: Spectral Volterra (Recurrence Method)"
