- The paper introduces a unified taxonomy for latent space, establishing its evolution from a neural byproduct to a central computational principle.

- It analyzes four mechanisms (architecture, representation, computation, and optimization) and how they contribute to model efficiency and scalability.

- It demonstrates latent space's role in boosting reasoning, planning, and multi-modal integration while highlighting challenges in evaluation and interpretability.

The Latent Space in Language-Based Models: A Unified Perspective

Introduction and Motivation

The latent space paradigm has emerged as a central substrate for computation in language-based models such as large language models (LLMs), vision-language models (VLMs), vision-language-action models (VLAs), and broader agentic architectures. Moving beyond explicit, token-centric perspectives, the survey "The Latent Space: Foundation, Evolution, Mechanism, Ability, and Outlook" (2604.02029) provides a taxonomy and synthesis of latent space research. The authors delineate the conceptual, mechanistic, and functional dimensions of latent space, emphasizing its transition from a byproduct of neural computation to a first-class operational and design principle.

The study highlights how latent spaces address structural limitations of explicit space—redundancy, discretization bottlenecks, inefficiency, and semantic loss—thereby enabling more expressive, efficient, and scalable intelligence.

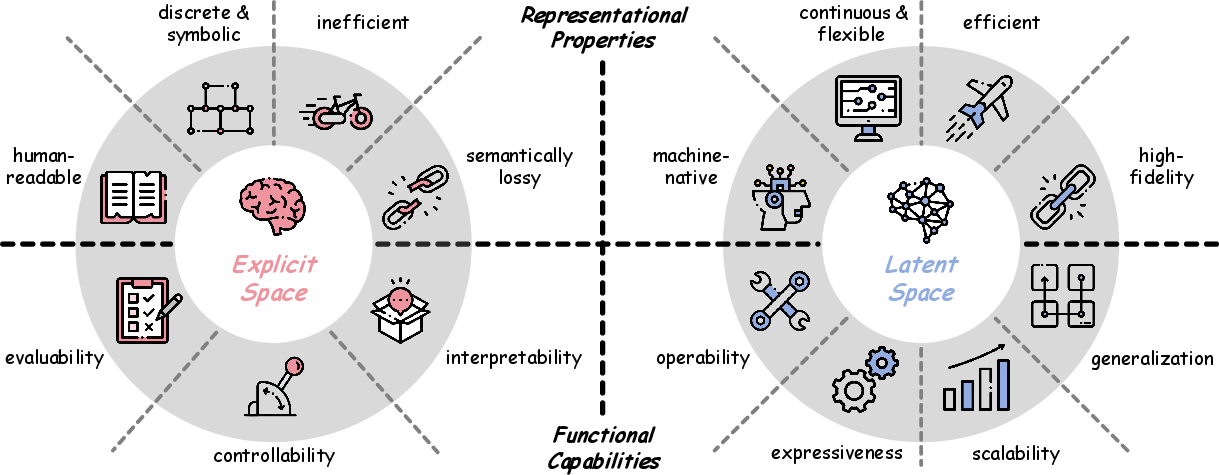

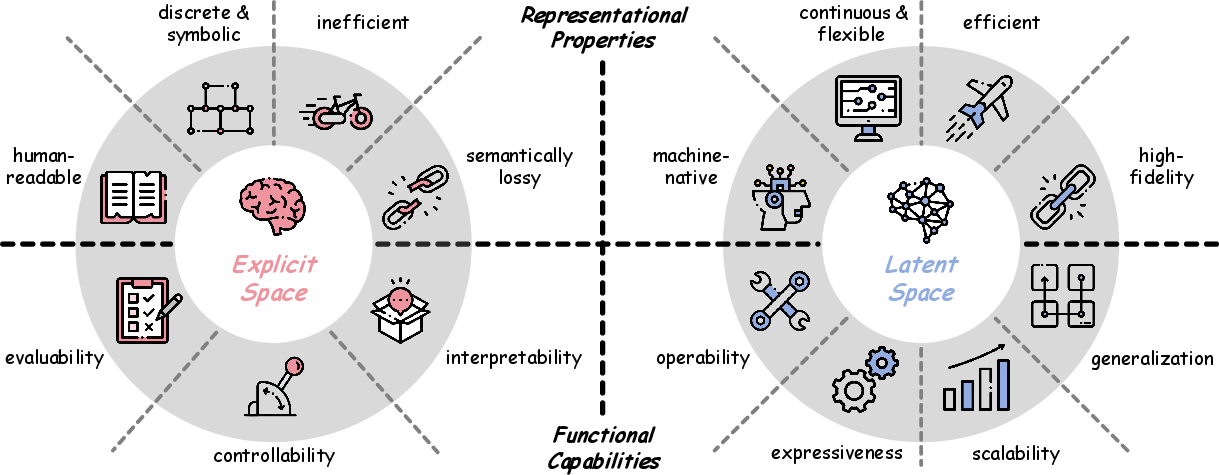

Figure 1: Latent vs. explicit spaces—the former exhibits higher semantic fidelity, continuity, and operational flexibility, while the latter is constrained by human-interpretable discrete tokens.

Conceptual Foundation: Latent vs. Explicit Space

Latent space in language-based models comprises internal, continuous, high-dimensional activations encoding not only semantics but also relational, syntactic, and contextual features. Unlike explicit space, which operates on discrete, interpretable token sequences, latent representations lack direct human readability yet provide:

- Continuous, machine-native vectorization;

- More compact, flexible, and information-rich encoding;

- Reduced sequential and conversion inefficiencies;

- Higher representational fidelity, capturing subtleties lost in tokenization (Hao et al., 2024, Zhu et al., 18 May 2025).

Figure 1 contrasts the two spaces along axes of expressivity, efficiency, and fidelity, underscoring a shift toward latent-centric computation as the primary locus of abstraction and inference.

Evolutionary Trajectory of Latent Space Research

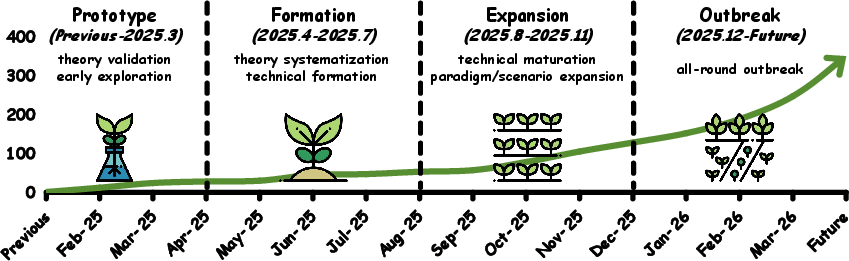

The field’s evolution is traced through four major stages:

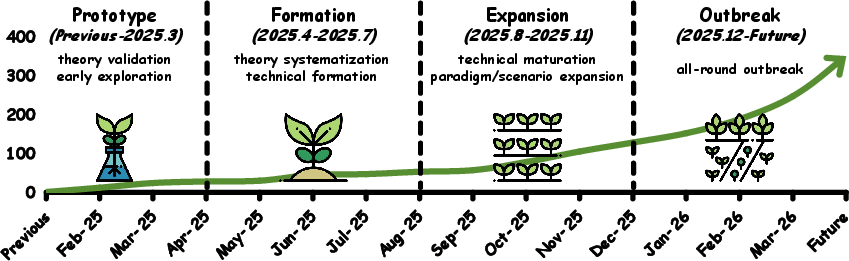

Figure 2: The development of latent space research, categorized into Prototype, Formation, Expansion, and Outbreak stages, with timeline and method diversity.

Prototype: Early validation that LLM activations encode reasoning-like processes (Hao et al., 2024, Liu et al., 2024), with demonstration of compressed chains-of-thought and latent self-evaluation. Methods such as COCONUT instantiate continuous, looped latent reasoning, bypassing explicit tokenization.

Formation: Theoretical systematization, such as reasoning-by-superposition analysis (Zhu et al., 18 May 2025), and rigorous complexity results on expressiveness and parallelism (Gozeten et al., 29 May 2025, Saunshi et al., 24 Feb 2025). Here, latent frameworks extend to multimodal regimes.

Expansion: Proliferation of domain-specific adaptations (latent memory (Zhang et al., 29 Sep 2025), visual reasoning (Li et al., 29 Sep 2025), multi-agent latent collaboration (Zou et al., 25 Nov 2025), and latent action representations (Bu et al., 9 May 2025)), along with technical advancements in scalable inference and RL-augmented optimization. Fragmentation becomes evident as latent strategies diverge across tasks and modalities.

Outbreak: Consolidation and sophistication, marked by architectural specialization (recurrent/looped transformers, concept-level computation), advanced optimization (RL, reward shaping), and cross-modal unification. Latent operation emerges as a foundational systems paradigm, yet challenges in standardization, evaluation, and interface specification become central.
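The continuous, looped latent reasoning mentioned for the Prototype stage can be illustrated with a toy contrast between a token-space loop and a latent loop. This is a minimal sketch under stated assumptions, not the COCONUT implementation: the two-token vocabulary `EMBED`, the weight matrix `W`, and all function names are hypothetical, and a real model would use a full transformer forward pass in place of `step`.

```python
import math

# Toy 2-d "model": every value below is made up for illustration.
EMBED = {"a": [1.0, 0.0], "b": [0.0, 1.0]}   # token -> embedding
W = [[0.6, 0.4], [-0.4, 0.6]]                # hidden-state update matrix


def step(h):
    """One stand-in 'forward pass': a linear map followed by tanh."""
    return [math.tanh(sum(W[i][j] * h[j] for j in range(2))) for i in range(2)]


def nearest_token(h):
    """Decode a hidden state to the token with the highest dot-product score."""
    return max(EMBED, key=lambda t: sum(x * y for x, y in zip(EMBED[t], h)))


def explicit_rollout(h, n_steps):
    """Token-space loop: decode to a discrete token each step, then re-embed it."""
    tokens = []
    for _ in range(n_steps):
        h = step(h)
        tok = nearest_token(h)
        tokens.append(tok)
        h = EMBED[tok]   # discretization: only the token identity survives
    return h, tokens


def latent_rollout(h, n_steps):
    """Latent loop: feed the continuous hidden state straight back in."""
    for _ in range(n_steps):
        h = step(h)
    return h
```

Starting from `[1.0, 0.0]`, `explicit_rollout` snaps back to a vocabulary embedding after every step, whereas `latent_rollout` ends on a state that matches no token embedding, which is the extra information a latent loop can carry forward.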

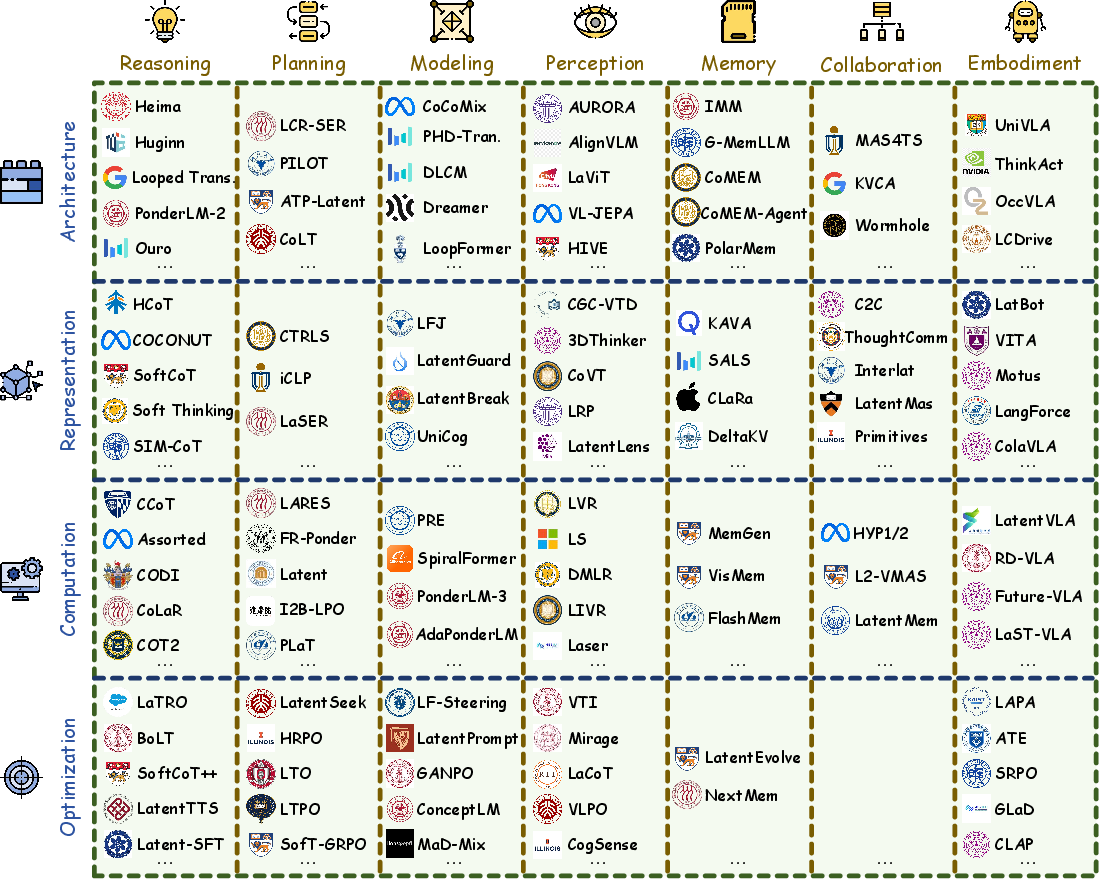

Latent Space Taxonomy: Mechanism and Ability

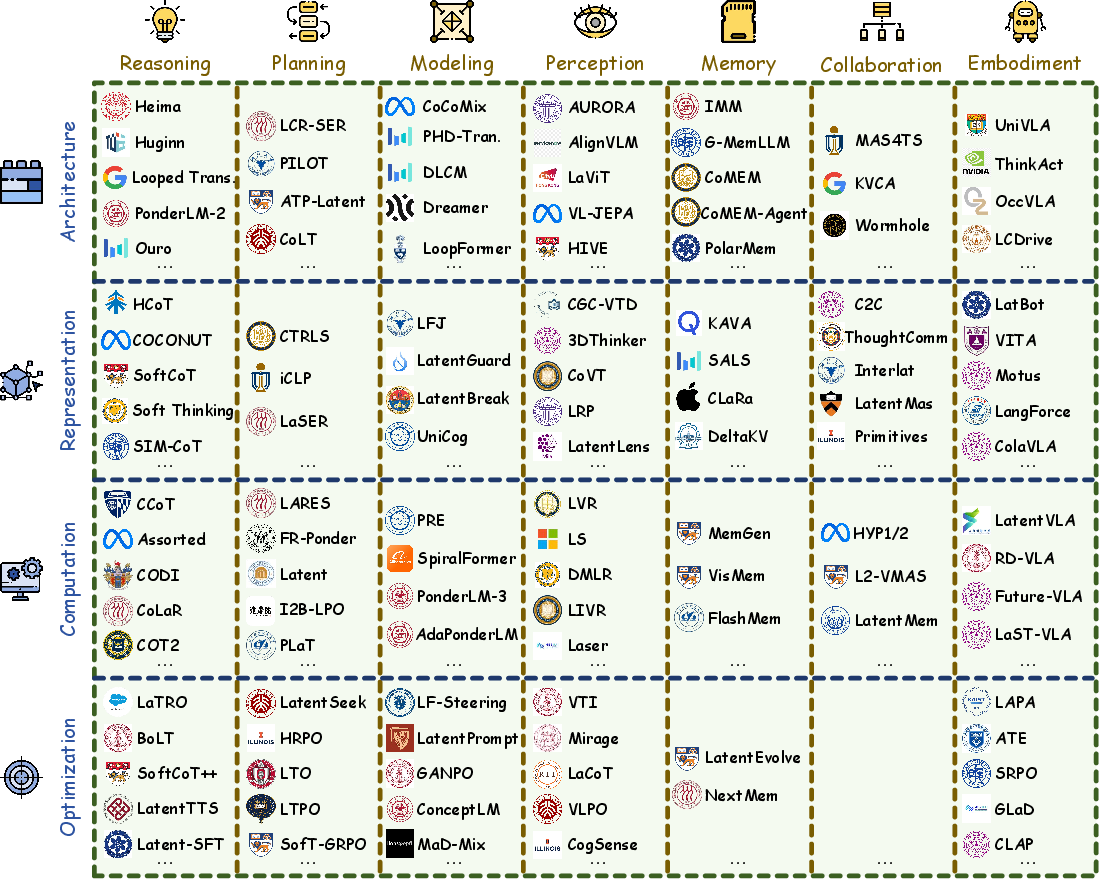

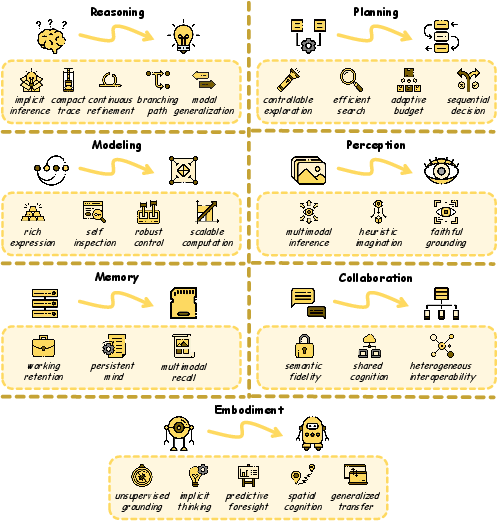

Figure 3: Taxonomy of latent space approaches, classified along Mechanism (architecture, representation, computation, optimization) and Ability (reasoning, planning, modeling, perception, memory, collaboration, embodiment) axes.

Mechanistic Organization

Architecture: Native integration of latent computation into the backbone or through modular/auxiliary components, progressing from shallow recurrence to joint cross-modal systems.

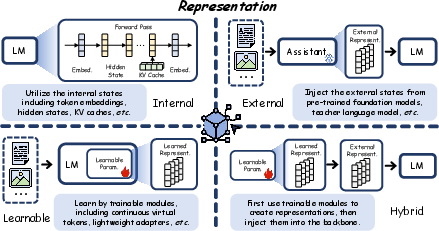

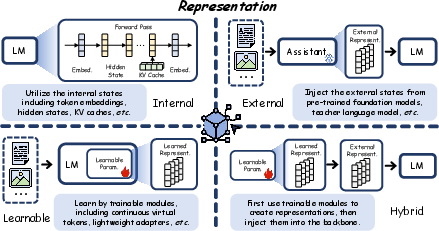

Representation: Four cardinal forms are identified—internal (backbone activations), external (auxiliary model outputs), learnable (dedicated modules), and hybrid (learned plus external injection), as detailed in Figure 4.

Figure 4: The four representational paradigms—Internal, External, Learnable, and Hybrid—each enabling distinct integration with the core model.

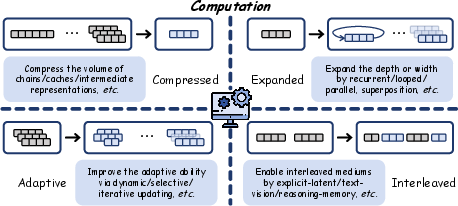

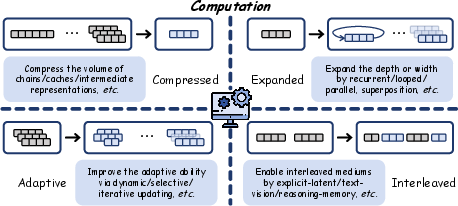

Computation: Latent computation spans compressed (trace/state/feature compaction), expanded (recurrent/parallel/superpositioned inference), adaptive (dynamic control, instance-level compute allocation), and interleaved (mixing symbolic/latent, modality-bridged, or agent-tied reasoning) regimes, as in Figure 5.

Figure 5: Operational schemas for latent computation: compression, expansion, adaptation, and heterogeneous interleaving.
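The adaptive regime, where compute is allocated per instance, can be sketched as a weight-tied block that is re-applied until the latent state stops changing. The code below is an illustrative assumption rather than a method from the survey: `block` is a stand-in for a shared layer, and the stopping rule (`tol`, `max_steps`) is a hypothetical choice.

```python
import math


def block(h):
    """Stand-in for one shared (weight-tied) layer.

    The map is a contraction (slope at most 0.5), so repeated application converges.
    """
    return [0.5 * math.tanh(x) + 0.1 for x in h]


def adaptive_loop(h, tol=1e-4, max_steps=50):
    """Re-apply the same block until the latent stops changing or the budget runs out.

    Returns the final latent and the number of block applications used.
    """
    for n in range(1, max_steps + 1):
        new_h = block(h)
        delta = max(abs(a - b) for a, b in zip(new_h, h))
        h = new_h
        if delta < tol:
            break
    return h, n
```

An input that starts close to the fixed point (for example `[0.198]`) halts after a few iterations, while one that starts far away (for example `[3.0]`) uses more, which is the sense in which depth becomes an instance-level quantity.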

Optimization: Methods encompass pre-training (native autoregressive/auxiliary/reinforcement objectives), post-training (fine-tuning, contrastive/distillation/specialized reward shaping), and inference-level manipulation (test-time scaling, gradient-based latent updates, reward-guided search).
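Inference-level manipulation through gradient-based latent updates can be sketched as gradient ascent on the latent vector alone, with model weights left untouched. This is a minimal sketch under assumptions: the quadratic `reward`, its `target`, and the step size `lr` are hypothetical placeholders for whatever differentiable reward signal a real system would supply.

```python
def reward(z, target):
    """Hypothetical differentiable reward: negative squared distance to a target latent."""
    return -sum((a - b) ** 2 for a, b in zip(z, target))


def reward_grad(z, target):
    """Analytic gradient of the reward above with respect to z."""
    return [-2.0 * (a - b) for a, b in zip(z, target)]


def refine_latent(z, target, lr=0.1, steps=20):
    """Gradient ascent on the latent only; no model parameters are updated."""
    for _ in range(steps):
        g = reward_grad(z, target)
        z = [a + lr * gi for a, gi in zip(z, g)]
    return z
```

With `lr=0.1`, each step shrinks the gap to `target` by a factor of 0.8, so the reward increases monotonically. A real reward would not have a closed-form gradient and would rely on automatic differentiation instead.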

Functional Abilities Enabled by Latent Space

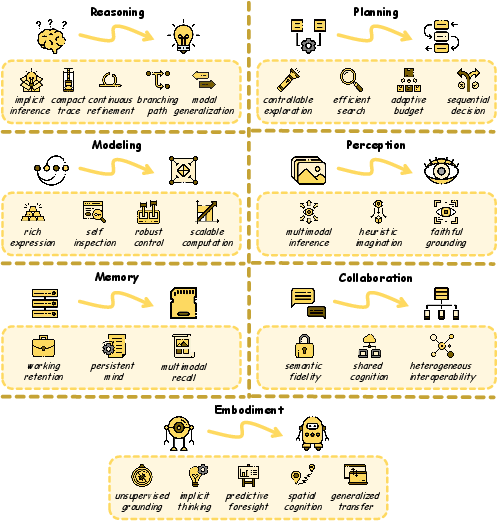

Figure 6: Core latent space-enabled abilities: Reasoning, Planning, Modeling, Perception, Memory, Collaboration, and Embodiment.

Reasoning: Latent reasoning supports implicit, compact, and high-fidelity inference, branching exploration (parallel reasoning paths/trajectory superposition), and seamless transition to multimodal, non-textual substrates. Theoretical and empirical results show latent-driven methods can match or surpass explicit CoT in efficiency and accuracy (Zhu et al., 18 May 2025, Hao et al., 2024).
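The branching exploration described above can be pictured as a single continuous vector that carries several candidate paths at once. The sketch below is a toy illustration of that idea on a hypothetical five-node graph. It is not the construction analyzed in the cited work, and `EDGES`, `expand`, and `frontier` are made-up names.

```python
# Hypothetical directed graph: EDGES[u] lists the nodes reachable from u in one hop.
EDGES = {0: [1, 2], 1: [3], 2: [3], 3: [4], 4: []}
N = len(EDGES)


def one_hot(node):
    """Latent state that commits to a single node, like a discrete token would."""
    return [1.0 if i == node else 0.0 for i in range(N)]


def expand(latent):
    """Push each active node's weight along its outgoing edges, then renormalize."""
    out = [0.0] * N
    for u, weight in enumerate(latent):
        for v in EDGES[u]:
            out[v] += weight
    total = sum(out)
    return [x / total for x in out] if total else out


def frontier(latent):
    """Nodes that carry non-zero weight in the superposed state."""
    return {i for i, x in enumerate(latent) if x > 0}
```

One call to `expand(one_hot(0))` yields a state whose frontier is `{1, 2}`: both branches are held in the same vector, whereas a discrete step would have to pick one of them and explore the other later.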

Planning: Differentiable, policy-optimized latent trajectories enable controllable and adaptive search, superior exploration, and efficient resource allocation for sequential decision tasks—substantiated by RL-based latent optimization and test-time gradient adjustment (Zheng et al., 9 Nov 2025, Li et al., 19 May 2025).

Modeling: Latent space architectures extend expressiveness, accommodate robust self-inspection (probing, visualization, semantics-aware steering), and support scalable compute through recurrent/looped transformers, which come with theoretical guarantees of increased capacity and generalization (Saunshi et al., 24 Feb 2025, Zhu et al., 29 Oct 2025).

Perception: Vision and vision-language models gain high-fidelity multimodal integration by representing and manipulating spatial, geometric, and 3D structure directly in latent space, which improves sample efficiency and alleviates hallucination (Li et al., 29 Sep 2025, Yang et al., 20 Jun 2025, Yu et al., 14 Nov 2025).

Memory: Latent memory modules replace context-window-dependent, stateless LLM operation with persistent, differentiable, and adaptive knowledge stores, enhancing working and episodic memory, cross-modal recall, and self-evolving agent behavior (Zhang et al., 29 Sep 2025).
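A persistent, differentiable knowledge store of the kind described above can be sketched as a key-value memory over latent vectors with a soft (softmax-weighted) read. This is an assumed, simplified design for illustration only: the `LatentMemory` class, the cosine scoring, and the `temperature` parameter are hypothetical and do not reproduce any cited system.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


class LatentMemory:
    """Toy key-value store whose keys and values are latent vectors."""

    def __init__(self):
        self.keys = []
        self.values = []

    def write(self, key, value):
        """Append one (key, value) pair; entries persist across reads."""
        self.keys.append(list(key))
        self.values.append(list(value))

    def read(self, query, temperature=0.1):
        """Soft read: softmax over key similarities, then a weighted average of values."""
        if not self.keys:
            raise ValueError("memory is empty")
        scores = [cosine(query, k) / temperature for k in self.keys]
        top = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        dim = len(self.values[0])
        return [sum(w * v[i] for w, v in zip(weights, self.values)) / total
                for i in range(dim)]
```

Because the read is a smooth weighted average rather than a hard lookup, the same operation could in principle be trained end to end. A lower `temperature` makes the read behave more like nearest-neighbor retrieval.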

Collaboration: Direct latent communication channels in multi-agent systems enable high-bandwidth, low-latency state sharing and non-symbolic, heterogeneous collaboration, outperforming text-mediated protocols in throughput and semantic fidelity (Zou et al., 25 Nov 2025, Zheng et al., 23 Oct 2025).
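The fidelity gap between text-mediated and latent communication can be illustrated with a toy example in which one agent passes a 2-d state to another. The sketch assumes both agents share the same latent space, which real heterogeneous systems would have to arrange through some alignment step. The vocabulary `VOCAB` and all function names are hypothetical.

```python
# Hypothetical shared vocabulary: each word stands for one point in a 2-d latent space.
VOCAB = {
    "left": [-1.0, 0.0],
    "right": [1.0, 0.0],
    "up": [0.0, 1.0],
    "down": [0.0, -1.0],
}


def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def send_as_text(state):
    """Sender picks the single word whose embedding is closest to its state."""
    return min(VOCAB, key=lambda w: sq_dist(VOCAB[w], state))


def receive_text(word):
    """Receiver can only recover the embedding of the word it was sent."""
    return VOCAB[word]


def send_as_latent(state):
    """Latent channel: the vector itself is the message."""
    return list(state)
```

For a state such as `[0.7, 0.6]`, the text channel transmits `"right"` and the receiver reconstructs `[1.0, 0.0]`, losing the upward component, while the latent channel delivers the state unchanged.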

Embodiment: In vision-language-action models and robots, latent action representation and predictive simulation afford unsupervised grounding, transfer across morphologies, and spatial imagination beyond what is feasible with symbolic interfaces (Bu et al., 9 May 2025, Huang et al., 22 Jul 2025).

Challenges and Open Problems

Despite significant progress, latent space computation remains limited by:

- Evaluability: Lack of process-level benchmarks—most evaluation is output-based; distinguishing genuine, structured latent inference from spurious or shortcut solutions is an open issue.

- Controllability: Reliable semantic steering and safe intervention in latent space are hindered by the opacity and high dimensionality of activations, aggravating issues of safety and behavioral alignment.

- Interpretability: The absence of human-legible intermediate states hampers process auditability and hinders adoption in critical domains; standalone interpretability methods for latent computation are still emerging.

These challenges impede standardization and comparative evaluation, raising the stakes for further theoretical and methodological development.

Implications and Future Directions

The survey positions latent space as the probable substrate for next-generation intelligence, especially in regimes where explicit computation is intractable, lossy, or uneconomical. Key directions include:

- Theoretical unification of explicit and latent spaces as complementary computational regimes, with rigorous expressiveness, accuracy, and alignment analysis (2509.25239, Zhu et al., 18 May 2025).

- Cross-modal expansion, making latent space the joint workspace for language, vision, action, memory, and multi-agent planning.

- Systematic benchmarking and governance methodologies—process-level metrology, constraint enforcement, and explainability frameworks.

- Design of models and agentic systems where latent computation, not token-level generation, defines the organizational core, with explicit outputs reserved for reporting, instruction, and external verification.

Conclusion

This survey establishes latent space as a foundational, machine-native paradigm that is rapidly restructuring the mechanics, architecture, and reach of modern intelligent systems. By organizing the field along two axes, Mechanism (architecture, representation, computation, optimization) and Ability (reasoning, planning, modeling, perception, memory, collaboration, embodiment), it delineates clear trajectories for future progress. Crucially, as latent-centric computation expands, the stringency of evaluation, control, and interpretability must increase commensurately to ensure trustworthy, scalable, and safe deployment across modalities and domains.