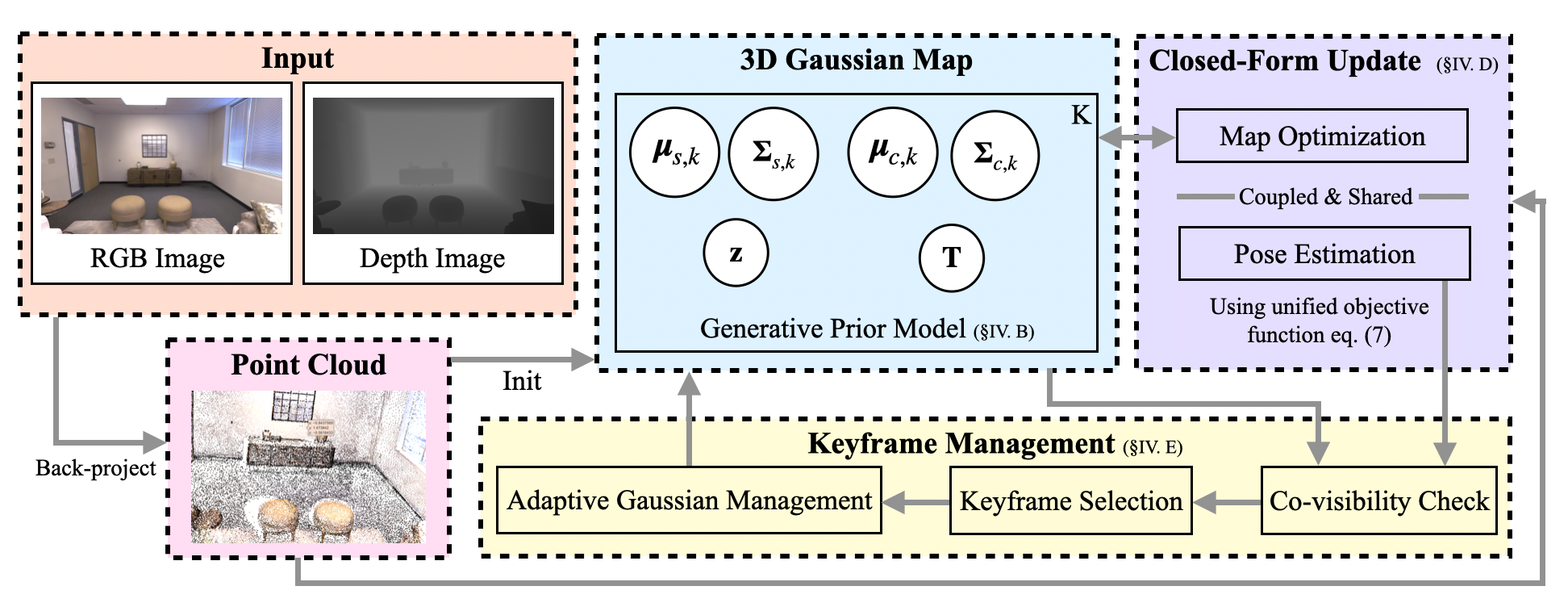

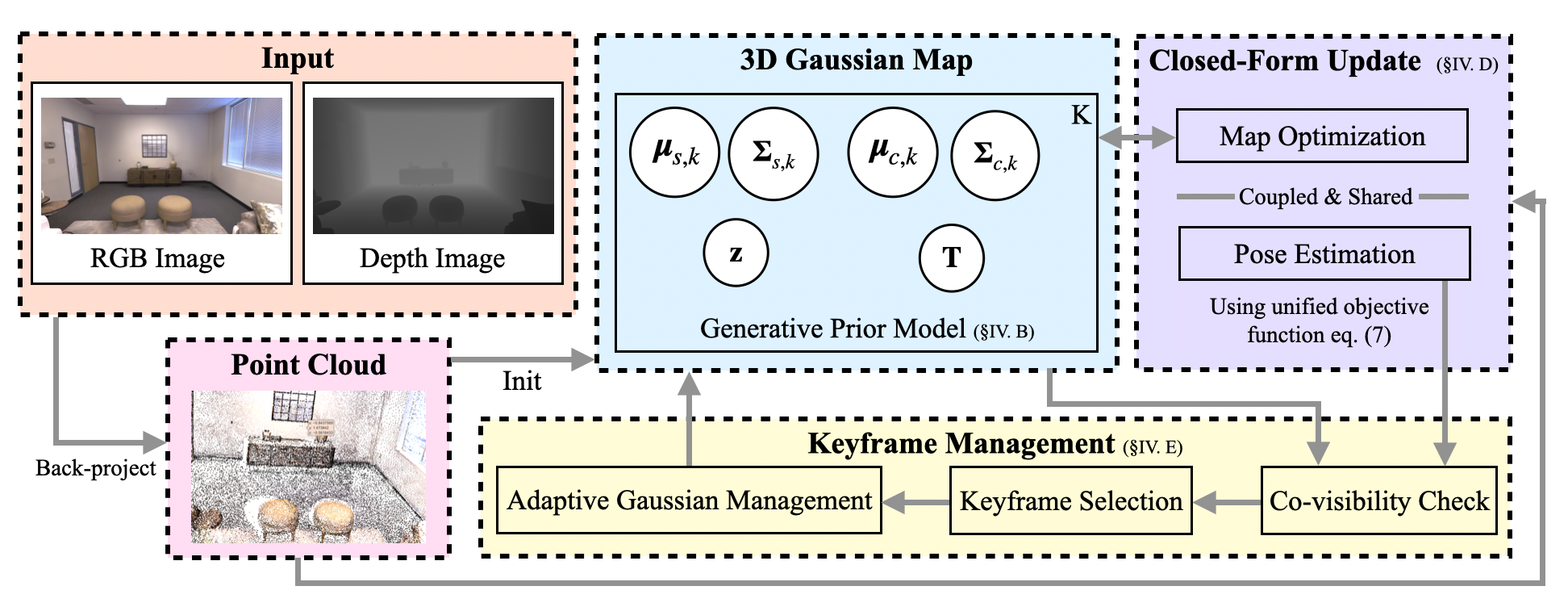

- The paper introduces a unified probabilistic SLAM model by jointly optimizing camera pose and 3D Gaussian map parameters via closed-form variational updates.

- It leverages adaptive Gaussian management and mean-field variational Bayesian inference to ensure robust tracking and high-fidelity 3D reconstruction.

- Empirical tests on Replica, TUM-RGBD, and AR-TABLE show competitive localization accuracy and rendering quality compared to state-of-the-art methods.

Variational Bayesian Gaussian Splatting for Simultaneous Localization and Mapping: An Expert Analysis

Introduction and Motivation

VBGS-SLAM introduces a fully probabilistic framework for dense RGB-D SLAM that integrates variational Bayesian inference with 3D Gaussian Splatting. Traditional SLAM systems built on point clouds or voxel grids suffer from poor scalability, high memory overhead, and inadequate representation of unobserved regions. Neural implicit approaches, notably MLP-based methods and NeRF, are more compact but incur prohibitive retraining costs and lack efficient streaming updates. Recent advances in 3D Gaussian Splatting (3DGS) have improved scene representation and adaptability, but existing SLAM variants (e.g., SplaTAM, MonoGS) rely on deterministic, gradient-based pose optimization, are vulnerable to initialization issues and catastrophic forgetting, and lack principled uncertainty modeling.

VBGS-SLAM is formulated to overcome these limitations by coupling camera pose estimation and scene mapping within a unified generative probabilistic model. Leveraging multivariate Gaussian conjugacy and variational inference, the system achieves closed-form updates over both pose and map parameters, explicitly maintains posterior uncertainties, and propagates them through the mapping process. This design enhances robustness, mitigates drift, and supports real-time rendering and novel view synthesis.

Figure 1: VBGS-SLAM system pipeline: RGB-D inputs are back-projected to seed an adaptive probabilistic 3D Gaussian map, which is jointly optimized with SE(3) pose variables via closed-form variational updates.

Methodological Contributions

VBGS-SLAM models the environment as a mixture of spatial and color Gaussians, with camera poses parameterized on the Lie group SE(3). In the generative model, spatial positions are conditioned on latent pose variables: rather than treating the pose as a fixed conditioning parameter, VBGS-SLAM elevates it to a latent random variable with its own prior and variational posterior factor. This joint modeling propagates pose uncertainty directly into the inference of point assignments and map updates.
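
To make the idea of a pose with its own prior and posterior concrete, the following is a minimal toy sketch, not the paper's SE(3) model. It assumes a translation-only 2D "pose" `t`, isotropic Gaussian observation noise, and a Gaussian prior on `t`; under those assumptions the posterior is available in closed form. The function name `translation_posterior` and all numeric values are hypothetical.

```python
# Toy illustration only: a translation-only "pose" in 2D, not the paper's SE(3) model.
# Assumed model: y_i = x_i + t + noise, noise ~ N(0, obs_var * I),
# with prior t ~ N(prior_mean, prior_var * I).

def translation_posterior(map_pts, obs_pts, obs_var, prior_mean, prior_var):
    """Return (posterior mean per axis, posterior variance) of the translation t."""
    n = len(map_pts)
    # Precisions add when a Gaussian prior is combined with Gaussian likelihoods.
    post_precision = 1.0 / prior_var + n / obs_var
    post_var = 1.0 / post_precision
    post_mean = []
    for axis in range(2):
        residual_sum = sum(y[axis] - x[axis] for x, y in zip(map_pts, obs_pts))
        post_mean.append(
            post_var * (prior_mean[axis] / prior_var + residual_sum / obs_var)
        )
    return post_mean, post_var


map_pts = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
# Observations: the map points shifted by (0.5, -0.2).
obs_pts = [(0.5, -0.2), (1.5, -0.2), (0.5, 0.8), (1.5, 0.8)]
mean, var = translation_posterior(
    map_pts, obs_pts, obs_var=0.01, prior_mean=(0.0, 0.0), prior_var=1.0
)
```

The posterior variance shrinks as more points are observed, which is the kind of pose uncertainty that a joint model can pass on to point assignment and map updates.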

Inference is performed with mean-field variational Bayes, which minimizes the KL divergence between the approximate and true posteriors or, equivalently, maximizes the evidence lower bound (ELBO). Closed-form update rules are derived for the spatial and color Gaussians as well as for the pose posterior on the tangent space of SE(3). For tractability, the pose likelihood is linearized with a first-order Taylor expansion. The resulting updates for the pose mean and covariance allow the pose to be optimized concurrently with the map parameters in a single pass.
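
For reference, the standard identities behind this paragraph can be written as follows. The notation is generic and assumed here, not taken from the paper: $\mathcal{D}$ denotes the observations, $\mathbf{z}$ all latent variables (assignments, Gaussian parameters, pose), and $q$ the variational distribution.

$$
\log p(\mathcal{D}) = \underbrace{\mathbb{E}_{q}\big[\log p(\mathcal{D}, \mathbf{z}) - \log q(\mathbf{z})\big]}_{\text{ELBO}} + \mathrm{KL}\big(q(\mathbf{z}) \,\|\, p(\mathbf{z} \mid \mathcal{D})\big)
$$

Because $\log p(\mathcal{D})$ does not depend on $q$, maximizing the ELBO is the same as minimizing the KL term. Under the mean-field factorization $q(\mathbf{z}) = \prod_j q_j(\mathbf{z}_j)$, the optimal factor for each block satisfies

$$
\log q_j^{*}(\mathbf{z}_j) = \mathbb{E}_{q_{-j}}\big[\log p(\mathcal{D}, \mathbf{z})\big] + \text{const},
$$

which has a closed form when the model is conjugate. A first-order linearization of a pose-dependent function $f$ around the current estimate $\hat{T} \in SE(3)$, with a tangent-space increment $\boldsymbol{\xi} \in \mathbb{R}^6$, typically looks like

$$
f\big(\hat{T} \exp(\boldsymbol{\xi}^{\wedge})\big) \approx f(\hat{T}) + J \boldsymbol{\xi}, \qquad J = \left.\frac{\partial f\big(\hat{T} \exp(\boldsymbol{\xi}^{\wedge})\big)}{\partial \boldsymbol{\xi}}\right|_{\boldsymbol{\xi} = 0}.
$$

The right-perturbation convention used here is an assumption; the paper's convention may differ.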

Keyframe management employs co-visibility and temporal constraints to regulate insertion, reducing redundancy and preventing drift. Adaptive Gaussian management ensures coverage and sparsity, with new Gaussians inserted in underrepresented regions and redundant splats pruned.
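
The management rules described above might be sketched as follows. This is a hypothetical illustration: the overlap measure (intersection-over-union of visible Gaussian IDs), the way the two constraints are combined, the pruning criterion (mixture weight), and every threshold are assumptions, not the paper's values.

```python
# Hypothetical sketch: thresholds, the overlap measure, and the pruning rule
# are assumptions for illustration, not the paper's method.

def covisibility(visible_a, visible_b):
    """Intersection-over-union of the Gaussian IDs visible in two frames."""
    union = visible_a | visible_b
    if not union:
        return 0.0
    return len(visible_a & visible_b) / len(union)


def should_insert_keyframe(frame_idx, visible_ids, last_kf_idx, last_kf_visible,
                           min_gap=5, max_overlap=0.8):
    """Insert a keyframe only if enough frames have passed and the view has changed."""
    if frame_idx - last_kf_idx < min_gap:  # temporal constraint
        return False
    # Co-visibility constraint: low overlap means the frame adds new information.
    return covisibility(visible_ids, last_kf_visible) < max_overlap


def prune_gaussians(weights, min_weight=0.01):
    """Return the IDs of Gaussians whose mixture weight is at least min_weight."""
    return {gid for gid, w in weights.items() if w >= min_weight}


last_visible = {1, 2, 3, 4, 5}
current_visible = {4, 5, 6, 7, 8}
insert_now = should_insert_keyframe(10, current_visible, 0, last_visible)
kept = prune_gaussians({0: 0.5, 1: 0.005, 2: 0.495})
```

Requiring both conditions keeps near-duplicate frames out of the keyframe set; a real system could equally trigger on either condition alone.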

Empirical Results

Testing on diverse datasets (Replica, TUM-RGBD, AR-TABLE) demonstrates strong numerical results, including:

- Localization: On Replica, VBGS-SLAM achieves an average ATE of 0.33 cm, outperforming SplaTAM (0.36 cm) and Point-SLAM (0.53 cm). On AR-TABLE, VBGS-SLAM attains the best ATE (5.94 cm) and maintains stable tracking across challenging sequences, while other GS-based baselines suffer tracking failures due to abrupt map changes.

- 3D Reconstruction: On Replica, VBGS-SLAM reaches a PSNR of 37.94 dB, an SSIM of 0.95, and an LPIPS of 0.097 at 0.465 FPS, which is competitive with or superior to MonoGS and significantly better than NICE-SLAM and SplaTAM. On AR-TABLE, VBGS-SLAM achieves 35.24 dB PSNR, 0.079 LPIPS, and 1.374 FPS with the lowest GPU usage among the baselines.

- Robustness: Integration with IMU propagation yields only modest improvements (e.g., 0.23 cm gain in tracking accuracy), indicating that the probabilistic pose inference is sufficiently stable and accurate, unlike baselines reliant on geometric or motion cues.
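
For readers unfamiliar with the metrics quoted above, here is a minimal sketch of how ATE (as an RMSE over positions) and PSNR are commonly computed. It assumes the estimated trajectory is already expressed in the ground-truth frame (benchmark evaluations usually perform a rigid alignment first, which is omitted here) and that pixel intensities lie in `[0, max_value]`. The function names are illustrative, not from the paper.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of per-frame position error; trajectories are assumed pre-aligned."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(p, q))
        for p, q in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def psnr(rendered, reference, max_value=1.0):
    """PSNR in dB for two equally sized flat lists of pixel intensities."""
    if len(rendered) != len(reference) or not rendered:
        raise ValueError("images must be non-empty and of equal size")
    mse = sum((a - b) ** 2 for a, b in zip(rendered, reference)) / len(rendered)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


ate = ate_rmse([(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
               [(3.0, 4.0, 0.0), (0.0, 0.0, 0.0)])
quality = psnr([0.5, 0.5], [0.6, 0.4])
```

Lower ATE and LPIPS are better, while higher PSNR and SSIM are better, which is how the comparisons in the list should be read.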

Figure 2: Qualitative novel view rendering comparison on AR-TABLE and TUM-RGBD; VBGS-SLAM (rightmost column) exhibits superior edge coherence and texture preservation versus MonoGS and SplaTAM.

The ablation studies confirm that the Bayesian pipeline delivers robust results without depending on inertial sensors, and that adaptive management retains both compactness and accuracy over long trajectories.

Theoretical and Practical Implications

The joint probabilistic formulation in VBGS-SLAM enables explicit uncertainty propagation between map and pose estimates, which enhances robustness to initialization and mitigates catastrophic forgetting. Both traits are rarely addressed in previous GS-SLAM variants. The closed-form updates eliminate the computational bottlenecks of gradient descent, realizing real-time performance and efficient streaming integration.

Practically, VBGS-SLAM broadens SLAM applicability for robotics, AR/VR, and real-time 3D reconstruction in unconstrained and large-scale environments. The approach supports high-fidelity rendering and maintains structural detail with fewer artifacts at depth discontinuities, critical for perceptual tasks.

Theoretically, the variational Bayesian approach establishes a foundation for scalable SLAM architectures with principled uncertainty quantification. It invites further developments in dynamic scene modeling (with motion priors), multi-sensor fusion, and, potentially, online adaptation of prior distributions to tackle out-of-distribution scenarios.

Future Directions

Potential extensions include:

- Dynamic Scenes: Incorporation of temporal priors to model moving objects and environments.

- Multi-Sensor Fusion: Integration with LiDAR, IMU, and vision, leveraging the probabilistic backbone for uncertainty-aware sensor fusion.

- Efficient Map Maintenance: Advanced adaptive management strategies for lifelong mapping, loop closure, and redundancy elimination.

- Non-Euclidean Manifolds: Exploration of more expressive priors for pose and scene representation, enhancing modeling capacity in complex topologies.

- Streaming Bayesian Updates: Investigation of online streaming Bayesian inference for continuous learning and adaptation.

Conclusion

VBGS-SLAM delivers a fully Bayesian, closed-form variational pipeline for dense RGB-D SLAM, improving over existing 3DGS-based approaches through robust uncertainty modeling and efficient joint optimization of pose and map parameters. The system achieves state-of-the-art tracking, rendering, and runtime efficiency, with qualitative and quantitative advantages demonstrated across synthetic and real-world benchmarks. Its design paves the way for further advances in scalable, uncertainty-aware SLAM systems and multi-modal scene understanding.