- The paper introduces a reliance-control framework to balance human oversight and AI automation in software engineering.

- The framework is based on qualitative analysis of 22 expert interviews and delineates a spectrum from self-reliance to full automation.

- It offers actionable guidance for tool selection, process governance, and SE education to mitigate risks while enhancing productivity.

Towards an Appropriate Level of Reliance on AI in Software Engineering

Introduction

The proliferation of AI-powered tools, most notably LLMs, has created new modes of human-AI collaboration in software engineering (SE). While AI assistance can drive productivity and enhance developer workflows, concerns about overreliance and underreliance persist. Unchecked reliance on AI systems risks the atrophy of core SE skills, a reduction in critical team interactions, and the introduction of novel operational hazards, as demonstrated by notable real-world failures. Conversely, excessive caution or lack of engagement with these systems may forfeit the benefits AI offers. The paper "Towards an Appropriate Level of Reliance on AI: A Preliminary Reliance-Control Framework for AI in Software Engineering" (2604.10530) introduces a reliance-control framework designed to systematize and balance developer interaction with AI, emphasizing both human control and judicious use of automation.

Context, Motivation, and Related Work

Empirical evidence indicates significant variance in how developers calibrate their trust in and reliance on AI. While recent industry and empirical research demonstrates notable productivity improvements with LLMs, prominent failures, such as unauthorized infrastructure changes and database loss caused by agentic AI tools, underscore the need for mechanisms to modulate trust, dependence, and control. Overreliance on AI can manifest as cognitive and behavioral atrophy, as shown by measurable reductions in user performance across neural, linguistic, and practical dimensions. Team communication may also suffer, with developers deferring to AI rather than engaging in peer discourse regarding critical engineering decisions.

Prior research has investigated how interface frictions, explanations, source attribution, and uncertainty cues influence appropriate trust calibration [bo:2025, kim:2025, ma:2023]. However, these works focus predominantly on the interaction or UI level. The reliance-control framework instead conceptualizes reliance and control as two orthogonal, co-evolving dimensions, integrating technical affordances and socio-technical contexts.

The Reliance-Control Framework

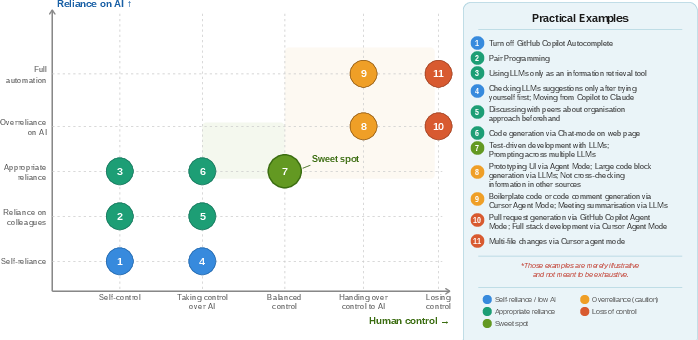

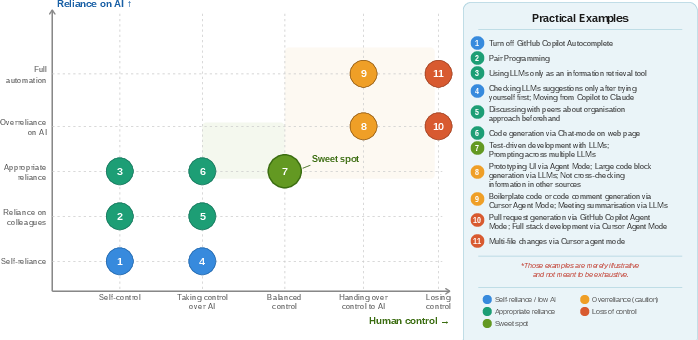

The framework emerges from a grounded-theory qualitative analysis of 22 interviews with practitioners who utilize LLM-powered tools across diverse geographies and tasks. The authors formalize two axes: "control" (ranging from self-control to full delegation) and "reliance" (ranging from self-reliance to uncritical acceptance and automation).

Figure 1: The reliance-control framework surfaces the joint spectrum of control and reliance in developer-AI collaboration in SE contexts.

Distinct configurations at the intersection of these axes correspond to core patterns and risks in developer-AI interaction:

- Self-control and self-reliance—Exercised when developers disable AI suggestions to reinforce core competencies or avoid cognitive dependence.

- Balanced control and appropriate reliance—Characterized by intentional leveraging of LLM automation while actively supervising AI outputs, e.g., via agent-assisted TDD with iterative human review.

- Handing over control with overreliance or automation—Evidenced when AI is authorized to conduct code generation, large-scale refactoring, or infrastructure-level changes without adequate human supervision, frequently leading to reduced codebase comprehension or introduction of critical defects.

The framework identifies the "sweet spot" for SE practice as the region defined by balanced control and appropriate reliance, where productivity gains and risk mitigation are jointly optimized.
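The three configurations above can be sketched as a small lookup over the two axes. This is only an illustrative encoding, not something the paper provides: the enum names, the three-level discretization of each axis, and the `describe` function are assumptions made here for clarity.

```python
from enum import Enum


class Control(Enum):
    """Hypothetical discretization of the control axis."""
    SELF = 0        # developer keeps full control
    BALANCED = 1    # developer supervises AI actions
    DELEGATED = 2   # control handed over to the AI


class Reliance(Enum):
    """Hypothetical discretization of the reliance axis."""
    SELF = 0         # developer relies on own skills
    APPROPRIATE = 1  # AI output is used but reviewed
    OVER = 2         # AI output accepted uncritically


def describe(control: Control, reliance: Reliance) -> str:
    """Map a (control, reliance) pair to one of the named configurations."""
    if control is Control.SELF and reliance is Reliance.SELF:
        return "self-control and self-reliance"
    if control is Control.BALANCED and reliance is Reliance.APPROPRIATE:
        return "balanced control and appropriate reliance (sweet spot)"
    if control is Control.DELEGATED and reliance is Reliance.OVER:
        return "handing over control with overreliance"
    # Off-diagonal combinations are not named in the summary above.
    return "unnamed combination"


print(describe(Control.BALANCED, Reliance.APPROPRIATE))
```

Treating the axes as separate types keeps the point that control and reliance are distinct dimensions, even though the named configurations fall on the diagonal.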

Implications for SE Practice

The framework provides actionable guidance for multiple SE stakeholders:

- Tool selection and team management: SE leaders can use the framework to tailor the level of permissible AI control to the team’s experience. Novices are best served by tools and configurations that prioritize human intervention, even at the cost of some automation, while advanced practitioners can more safely leverage autonomous agentic modes.

- Process governance: The framework enables explicit documentation of when full automation is permissible and when strict oversight is mandated, fostering shared accountability for AI-generated code.

- SE education: Educators are encouraged to modulate AI tool exposure by student seniority. Early-stage learners should be limited to interaction modes that amplify human control, preventing premature dependence and ensuring that foundational skills are acquired.
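One way a team might write down such guidance is as an explicit policy table that caps AI autonomy by experience level. The sketch below is a minimal illustration under assumptions: the mode names (`suggest_only`, `supervised_agent`, `autonomous_agent`), the experience tiers, and the `is_permitted` helper are hypothetical and do not come from the paper or any specific tool.

```python
# Hypothetical autonomy modes, ordered from most human control to least.
AUTONOMY_LEVELS = ["suggest_only", "supervised_agent", "autonomous_agent"]

# Hypothetical team policy: the highest autonomy mode allowed per experience tier.
MAX_AUTONOMY = {
    "novice": "suggest_only",
    "intermediate": "supervised_agent",
    "advanced": "autonomous_agent",
}


def is_permitted(experience: str, requested_mode: str) -> bool:
    """Return True if the requested mode does not exceed the tier's cap."""
    cap = AUTONOMY_LEVELS.index(MAX_AUTONOMY[experience])
    return AUTONOMY_LEVELS.index(requested_mode) <= cap


print(is_permitted("novice", "autonomous_agent"))    # False
print(is_permitted("advanced", "autonomous_agent"))  # True
```

Keeping the policy in a plain table makes it easy to document and review alongside other process rules, which fits the goal of shared accountability.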

Implications for Research Agendas

The reliance-control paradigm highlights several future research trajectories:

- Empirical task-level validation: Quantitative evaluations across development tasks and toolchains are needed to refine the optimum reliance-control configurations for various SE contexts.

- Expertise-dependent customization: As developer expertise modulates attitudes towards and efficacy with AI, further work should investigate adaptive interactions that dynamically adjust both reliance and control affordances.

- Lifecycle- and context-dependent reliance: AI contributes asymmetrically across SE lifecycle stages; understanding task granularity is essential for workflow orchestration.

Speculative Trajectories for AI in SE

The reliance-control framework offers a foundation for the design of AI-mediated tooling that is dynamically context- and user-aware. Anticipated developments include adaptive agents capable of detecting risk contexts (e.g., critical code changes) and automatically escalating control requirements, thereby minimizing both practical and organizational hazards. The paradigm succinctly frames research into explainability, error mitigation, and human-in-the-loop design as core drivers for achieving robust and sustainable AI-augmented SE practice.
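A very simple form of the risk-aware escalation described above could be approximated with path-based rules. The following is a minimal sketch under assumptions: the glob patterns, the control labels (`auto_apply`, `human_approval`), and the `required_control` function are hypothetical, and a real adaptive agent would likely use richer signals than file paths.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns marking changes that a team considers high risk.
CRITICAL_PATTERNS = ["infra/*", "migrations/*", "*.tf"]


def required_control(changed_paths, default="auto_apply"):
    """Escalate to human approval if any changed path matches a critical pattern."""
    for path in changed_paths:
        if any(fnmatchcase(path, pattern) for pattern in CRITICAL_PATTERNS):
            return "human_approval"
    return default


print(required_control(["src/app.py"]))                        # auto_apply
print(required_control(["src/app.py", "infra/prod/main.tf"]))  # human_approval
```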

Conclusion

This work offers a structured framework for conceptualizing and managing the dual axes of reliance and control in developer-AI collaboration. Through explicit mapping of operational modes and practical exemplification, the framework assists SE practitioners, educators, and tool designers in maximizing the benefits of LLM-driven automation while safeguarding against overreliance and loss of critical skill. Further empirical validation and refinement will enhance its prescriptive value and inform the development of adaptive, risk-aware AI tooling in software engineering (2604.10530).