- The paper demonstrates that deep neural networks, despite their opaque internals, can effectively guide hypothesis generation in science.

- It employs case studies in low-dimensional topology and seismology to show that DNN outputs improve exploration without compromising rigorous justification.

- The study argues that confining opaque DNN outputs to the context of discovery preserves justificatory standards while still letting those outputs drive new research.

Deep Learning Opacity in Scientific Discovery

Introduction

"Deep Learning Opacity in Scientific Discovery" (2206.00520) addresses a core epistemological challenge for contemporary AI: the tension between the epistemic opacity of deep neural networks (DNNs) and the standards of scientific justification. Much of the philosophical literature has emphasized the problems that unexplainable, black-box models pose for scientific knowledge. Yet recent DL-enabled advances in science suggest that these models routinely yield substantial progress without compromising rigorous justificatory standards. The paper reconciles this apparent conflict by attending to how DNNs are actually deployed in scientific practice, appealing to the classic philosophical distinction between the context of discovery and the context of justification.

Characterizing Epistemic Opacity in Deep Learning

The paper provides a precise analysis of neural network opacity. In one sense DNNs are mathematically transparent: their architectures are fully specified by design and their weights are fixed by training. However, the function they induce, and more critically the rationale behind its mapping from inputs to outputs, is generally unintelligible and unfathomable. Following the distinctions drawn by Zerilli and Creel, the paper divides epistemic access into tractability, intelligibility, and fathomability. DNNs are operationally tractable but rarely intelligible or fathomable at the algorithmic or structural level: a scientist typically cannot understand, even in principle, the semantic function linking input features to predictions.

This analytic distinction underwrites much of the philosophical skepticism: in scientific research—especially where models serve as the basis for acceptance or justification of new scientific claims—opacity is seen to undermine explainability, justification, and value-alignment.

The Discovery-Justification Distinction in Practice

The central argument pivots on a classic distinction, tracing back to Reichenbach and Popper: the context of discovery versus the context of justification. While the context of justification focuses on the warrant for scientific claims, requiring accessible and intelligible reasoning chains, the context of discovery is concerned with the processes of hypothesis generation, model selection, and intuition-guiding heuristics. The paper argues that scientists routinely and explicitly restrict the epistemically opaque outputs of DNNs to the context of discovery: DNNs guide conjecture-generation or exploration, but do not themselves serve as foundations for justificatory scientific claims.

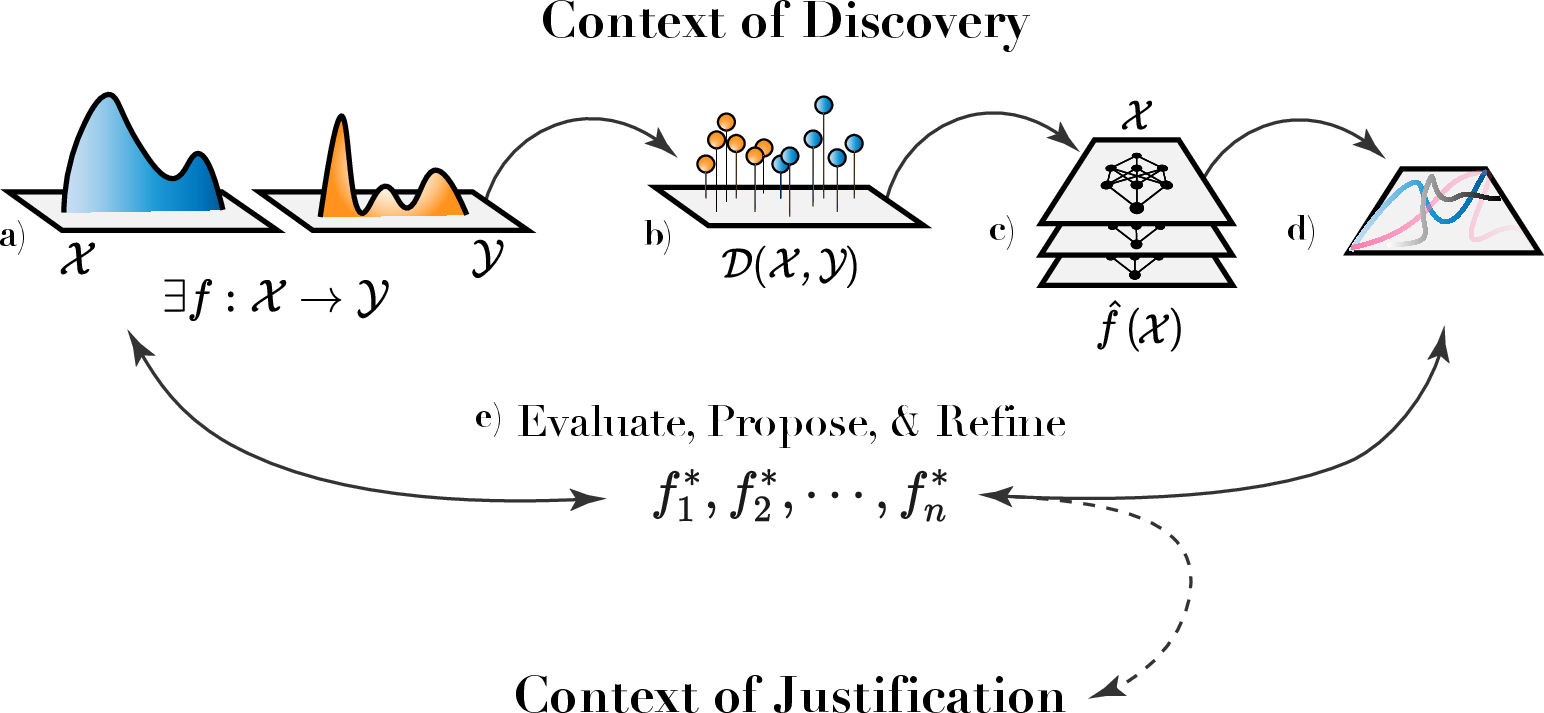

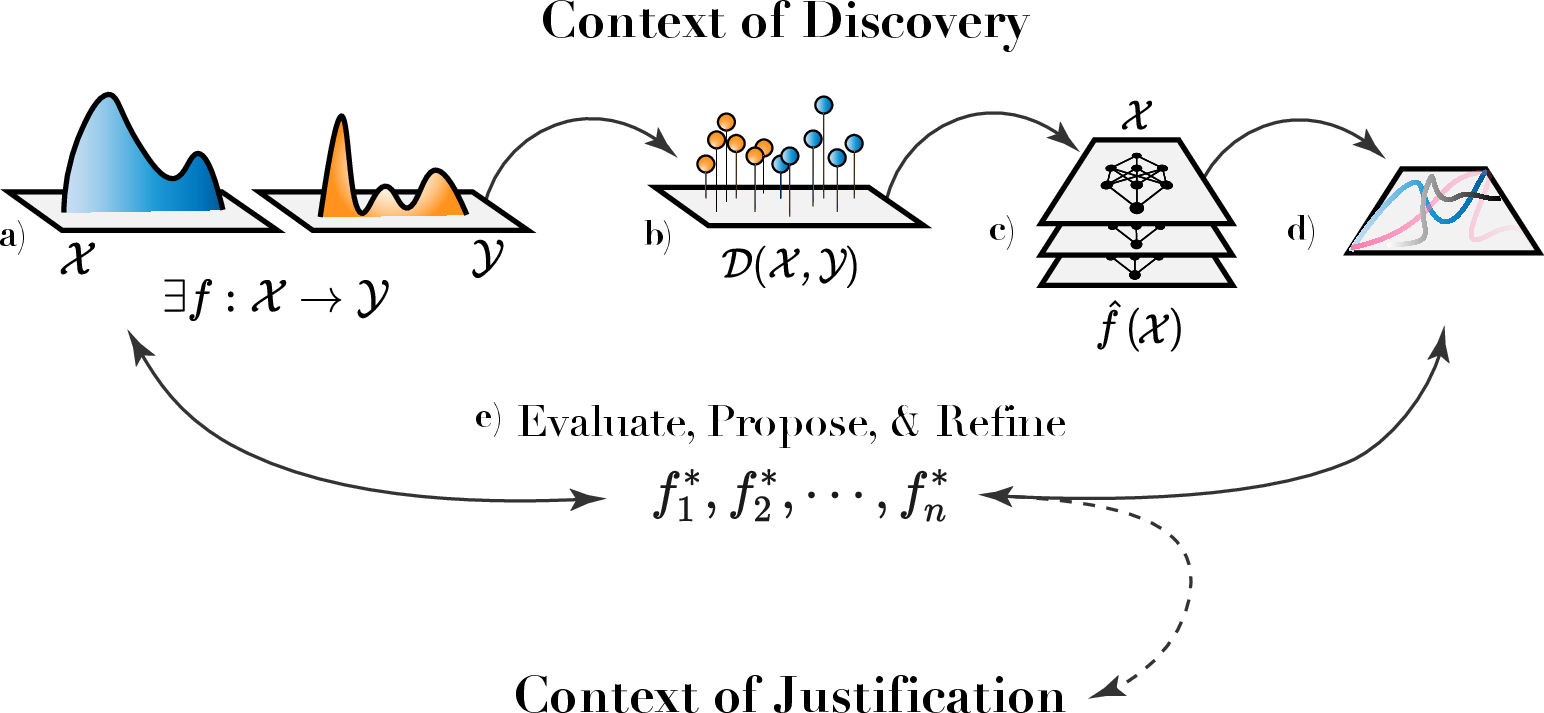

Figure 1: The experimental and inferential pipeline: DNN outputs are confined to the discovery context, supporting hypothesis generation and exploration, without being part of justificatory arguments for final scientific claims.

In this paradigm, DNN-based results catalyze scientific investigations by pointing toward new relationships or highlighting features worthy of closer theoretical or experimental scrutiny. Crucially, the opacity of the DNN is epistemically irrelevant to the final justification for any resulting scientific claim, which remains anchored in traditionally accepted forms of argument (proof, experimental replication, or theoretical modeling).
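
The separation between discovery and justification described above can be sketched as a small data structure. This is a minimal illustration under an assumed workflow: opaque model outputs are logged only as heuristic motivation, and a conjecture counts as accepted only once it carries a justification of an accepted kind. The `Conjecture` class, its methods, and the warrant labels (`proof`, `replication`, `theoretical_model`) are hypothetical names chosen for the sketch, not something the paper specifies.

```python
from dataclasses import dataclass, field

# Hypothetical labels for the forms of warrant the text treats as justificatory
# (proof, experimental replication, theoretical modeling).
JUSTIFICATORY_KINDS = {"proof", "replication", "theoretical_model"}


@dataclass
class Conjecture:
    """A candidate claim whose discovery may have been prompted by an opaque model."""
    statement: str
    heuristic_sources: list[str] = field(default_factory=list)
    justifications: list[tuple[str, str]] = field(default_factory=list)

    def add_heuristic(self, source: str) -> None:
        # Context of discovery: any source (e.g. a DNN saliency map) may motivate
        # the conjecture; it is recorded but never counted as warrant.
        self.heuristic_sources.append(source)

    def add_justification(self, kind: str, reference: str) -> None:
        # Context of justification: only accepted, transparent forms of warrant.
        if kind not in JUSTIFICATORY_KINDS:
            raise ValueError(f"{kind!r} is not an accepted form of justification")
        self.justifications.append((kind, reference))

    def is_accepted(self) -> bool:
        # Acceptance depends on justifications alone, never on heuristic sources.
        return bool(self.justifications)


if __name__ == "__main__":
    c = Conjecture("Invariant A is bounded by a function of invariant B")
    c.add_heuristic("dnn_saliency_map")
    print(c.is_accepted())  # False: a DNN output alone does not justify
    try:
        c.add_justification("dnn_prediction", "model run 17")
    except ValueError as err:
        print(err)
    c.add_justification("proof", "hypothetical manuscript")
    print(c.is_accepted())  # True
```

The design choice is that `is_accepted` never inspects `heuristic_sources`, so how opaque a discovery aid was has no bearing on whether a claim is accepted, which mirrors the paper's point that opacity is irrelevant to final justification.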

Case Studies: Epistemic Opacity as Epistemically Irrelevant

Case 1: Deep Learning-Guided Mathematical Intuition

The first case analyzes the work of Davies et al. (Nature 2021) in low-dimensional topology. The researchers used DNNs to reveal a relationship between geometric (hyperbolic) and algebraic knot invariants that had been suspected but never precisely characterized. Trained on a database of such invariants, the network predicted an algebraic invariant, the knot signature, from hyperbolic invariants with an accuracy of about 0.78 against a baseline of 0.25. This provided strong, though non-justificatory, evidence of an underlying mathematical relationship. Using gradient-based saliency analysis, the researchers then isolated the specific invariants that contributed most to predictive accuracy, guiding mathematicians toward formulating and refining new conjectures.
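
Gradient-based saliency can be illustrated with a minimal NumPy sketch under explicit assumptions: a synthetic table stands in for the knot-invariant database, only columns 0 and 2 actually influence the label, and a tiny one-hidden-layer network replaces the model used by Davies et al. Saliency here is taken to be the mean absolute gradient of the predicted probability with respect to each input. The majority-class baseline is an assumed comparison and is not necessarily how the 0.25 baseline above was defined; all function names are hypothetical.

```python
import numpy as np


def make_data(n=2000, d=5, seed=0):
    """Synthetic stand-in for an invariant table: only columns 0 and 2 affect the label."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, d))
    logits = 2.0 * X[:, 0] - 1.5 * X[:, 2] + 0.5 * rng.normal(size=n)
    return X, (logits > 0).astype(float)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(params, X):
    W1, b1, W2, b2 = params
    h = np.tanh(X @ W1 + b1)        # hidden activations, shape (n, hidden)
    return sigmoid(h @ W2 + b2), h  # predicted probabilities, shape (n,)


def train_mlp(X, y, hidden=16, lr=0.2, steps=3000, seed=1):
    """One-hidden-layer tanh network fit by full-batch gradient descent on mean BCE."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W1 = rng.normal(scale=0.1, size=(d, hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=0.1, size=hidden)
    b2 = 0.0
    for _ in range(steps):
        p, h = forward((W1, b1, W2, b2), X)
        dz = (p - y) / n                        # d(mean BCE) / d(logit)
        da = np.outer(dz, W2) * (1.0 - h ** 2)  # backpropagate through tanh
        W2 = W2 - lr * (h.T @ dz)
        b2 = b2 - lr * dz.sum()
        W1 = W1 - lr * (X.T @ da)
        b1 = b1 - lr * da.sum(axis=0)
    return W1, b1, W2, b2


def gradient_saliency(params, X):
    """Mean absolute gradient of the predicted probability w.r.t. each input feature."""
    W1, _, W2, _ = params
    p, h = forward(params, X)
    dlogit_dx = ((1.0 - h ** 2) * W2) @ W1.T      # shape (n, d)
    dp_dx = (p * (1.0 - p))[:, None] * dlogit_dx  # chain rule through the sigmoid
    return np.abs(dp_dx).mean(axis=0)


if __name__ == "__main__":
    X, y = make_data()
    params = train_mlp(X, y)
    p, _ = forward(params, X)
    accuracy = ((p > 0.5) == (y == 1)).mean()
    baseline = max(y.mean(), 1.0 - y.mean())  # majority-class baseline (assumed)
    print(f"accuracy {accuracy:.2f} vs. baseline {baseline:.2f}")
    print("features ranked by saliency:", np.argsort(gradient_saliency(params, X))[::-1])
```

On this synthetic data the ranking should place features 0 and 2 first, which is the kind of pointer saliency gave the mathematicians. As the text stresses, such a ranking motivates a conjecture but proves nothing.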

The critical insight is that the final step—formal proof of the resulting theorem—does not rely on the DNN’s predictions or internal logic; justification rests entirely on deductive argument, aligning with disciplinary epistemic norms. Thus, DNN opacity presents no epistemic barrier.

Case 2: Deep Learning for Improved Geophysical Theory

The second case examines DNN-enabled advances in seismology (DeVries et al., Nature 2018). Here, a deep learning model substantially improved forecasts of aftershock locations (AUC = 0.85, versus 0.583 for the classical Coulomb failure stress change criterion). Detailed comparison of the network's output distributions with those of prior theoretical models revealed discrepancies pointing toward overlooked geophysical features. By correlating the DNN-derived probability fields with physical parameters, scientists isolated three stress-related quantities that were not explicit inputs to the model but accounted for its superior predictions.
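
The two analytical steps in this case, scoring forecasts by AUC and correlating model output with candidate physical quantities, can be sketched in NumPy. The AUC is computed as the probability that a randomly chosen positive site outscores a randomly chosen negative one, with ties counting one half. Everything else is an assumption for illustration: the stress tensors are random symmetric matrices, the "model scores" are a hypothetical noisy stand-in for a DNN's output, and von Mises stress, maximum shear stress, and the tensor trace are illustrative candidate quantities, not a claim about which three quantities the original study identified.

```python
import numpy as np


def roc_auc(scores, labels):
    """AUC as P(score of random positive > score of random negative); ties count 1/2."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))


def von_mises(s):
    """Von Mises equivalent stress for symmetric tensors of shape (..., 3, 3)."""
    s11, s22, s33 = s[..., 0, 0], s[..., 1, 1], s[..., 2, 2]
    s12, s23, s13 = s[..., 0, 1], s[..., 1, 2], s[..., 0, 2]
    return np.sqrt(0.5 * ((s11 - s22) ** 2 + (s22 - s33) ** 2 + (s33 - s11) ** 2)
                   + 3.0 * (s12 ** 2 + s23 ** 2 + s13 ** 2))


def max_shear(s):
    """Maximum shear stress: half the spread of the principal stresses."""
    principal = np.linalg.eigvalsh(s)  # eigenvalues in ascending order
    return 0.5 * (principal[..., -1] - principal[..., 0])


def rank_candidates(model_scores, candidates):
    """Pearson correlation between the model's output and each candidate quantity."""
    return {name: float(np.corrcoef(model_scores, q)[0, 1])
            for name, q in candidates.items()}


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    A = rng.normal(size=(500, 3, 3))
    stress = 0.5 * (A + np.swapaxes(A, 1, 2))  # random symmetric stress-change tensors
    vm = von_mises(stress)
    # Hypothetical stand-in for DNN output: a noisy function of von Mises stress.
    model_scores = vm + 0.3 * rng.normal(size=vm.shape)
    labels = vm > np.median(vm)  # hypothetical aftershock labels
    print("model AUC:", round(roc_auc(model_scores, labels), 3))
    candidates = {
        "von_mises": vm,
        "max_shear": max_shear(stress),
        "trace": np.trace(stress, axis1=1, axis2=2),
    }
    for name, r in rank_candidates(model_scores, candidates).items():
        print(f"{name:>10s}: r = {r:+.2f}")
```

The pairwise AUC computation uses memory proportional to the number of positive-negative pairs, which is fine for a sketch; a rank-based formula scales better to large spatial grids.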

As in the mathematical case, the model’s opacity is irrelevant to justification: the final, improved scientific theory is justified by classical means, since it accounts for the empirical evidence, integrates with established physical principles, and provides deeper explanatory value.

Implications for the Philosophy of Science and Future AI Practice

By situating DNN opacity within a context-sensitive framework, the paper proposes a nuanced account of the epistemic standing of black-box AI in scientific discovery. Applying DNNs as heuristic engines in the context of discovery does not transgress justificatory standards, provided that outputs are not treated as finished scientific claims without further, transparent grounding.

Practically, this account underscores the continued need for careful methodological controls and disciplinary norms governing how and where DNNs are deployed. Theoretically, it suggests that future AI systems, however opaque, can remain epistemically productive in science as long as they are integrated into workflows that robustly separate exploratory heuristics from claims in need of justification.

Conclusion

This work reconciles the optimistic adoption of deep learning in science with the epistemic worries raised by its opacity. By carefully tracking the contexts in which DNN opacity matters, it dissolves the apparent contradiction and offers an operational template for responsible scientific use. The approach bears on both the philosophical analysis of AI-driven science and the practical design of scientific workflows as machine learning models grow more powerful and more inscrutable.