Learning from learning machines: a new generation of AI technology to meet the needs of science

Abstract: We outline emerging opportunities and challenges to enhance the utility of AI for scientific discovery. The distinct goals of AI for industry versus the goals of AI for science create tension between identifying patterns in data versus discovering patterns in the world from data. If we address the fundamental challenges associated with "bridging the gap" between domain-driven scientific models and data-driven AI learning machines, then we expect that these AI models can transform hypothesis generation, scientific discovery, and the scientific process itself.

Summary

- The paper establishes that conventional AI models excel in prediction but fail to yield scientific insight due to limited extrapolation and interpretability.

- It advocates for the development of scientifically validated surrogate models that distill black-box representations into testable, mechanistic hypotheses.

- It introduces a taxonomy of model structures—global, local, and intermediate-scale—highlighting the need for advanced statistical tools in AI-driven science.

Toward AI-Enabled Scientific Understanding: Bridging Data-Driven Learning and the Scientific Method

Introduction and Motivation

This paper, "Learning from learning machines: a new generation of AI technology to meet the needs of science" (2111.13786), provides a rigorous assessment of the fundamental mismatch between current AI/ML methodologies—optimized primarily for industrial and commercial applications—and the methodological requirements for scientific discovery. The authors argue that while modern AI excels at predictive performance, it rarely offers true scientific insight due to its design focus: optimizing for generalization on held-out data, rather than for extrapolation, interpretability, or counterfactual reasoning central to the scientific method.

The paper systematically articulates the emerging opportunities, and the theoretical challenges that must be resolved, for AI to fulfill its transformative potential in scientific domains. It advocates the development of 'surrogate models' that are both scientifically interpretable and counterfactually validated.

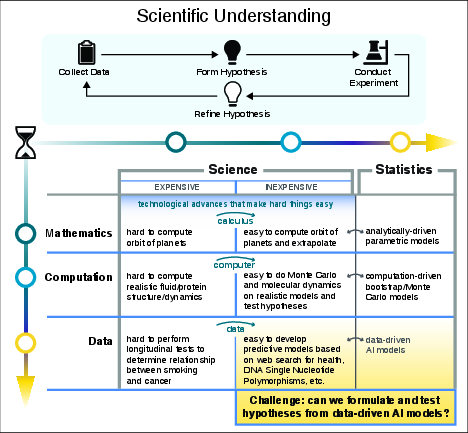

Figure 1: Cumulative improvement in the scientific method and associated statistical tools, showing key transitions: calculus, electronic computers, and modern AI/ML enabling previously intractable modeling tasks.

Scientific Models versus Data-Driven Learning Machines

Scientific and industrial goals pull AI in different directions. Industrial AI prioritizes deployment and predictive utility on i.i.d. data, guided by statistical generalization frameworks. Scientific modeling, in contrast, seeks mechanisms and explanatory frameworks capable of extrapolation, that is, of making predictions about regimes and outliers not observed during model fitting. Statisticians and domain scientists need to interrogate fitted models for mechanisms, not merely for predictive success [box71p, bickel2015mathematical]. Classic examples, such as Newtonian mechanics, illustrate the difference between curve fitting (phenomenology) and inductive discovery of underlying principles (theory).

The paper highlights the lack of established tools for interpreting data-driven AI models in a fashion analogous to traditional statistical interrogation—confidence intervals, hypothesis tests, uncertainty quantification—underscoring the urgent need for scientifically meaningful integration of AI into the hypothesis-discovery lifecycle.

Generalization, Extrapolation, and Outliers

The notion of generalization, central in ML, differs fundamentally from extrapolation, which is crucial in science. Current AI models are typically validated through train/test splits, which measure how well a model reproduces phenomena drawn from the same distribution as the training data. Such validation says little about whether the model captures the underlying mechanisms or can predict well outside the training regime (i.e., extrapolate).

Scientific workflows demand:

- Models leading to testable hypotheses for different regimes

- Mechanisms to recognize and learn from interesting outliers, which may indicate new science

AI models trained for commercial generalization minimize generalization error but often lack robustness and extrapolative capacity, making them brittle in scientific applications, especially when encountering unexpected regimes or rare events.
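The gap between held-out generalization and extrapolation can be made concrete with a toy experiment. The sketch below is an illustration only and is not taken from the paper: `true_law` is a hypothetical ground-truth mechanism, and a 1-nearest-neighbor lookup stands in for a purely data-driven model. The model is scored once on held-out points from the training range and once on a regime it never observed.

```python
import random

def true_law(x):
    # Hypothetical ground-truth mechanism, assumed only for this illustration.
    return x * x

def nearest_neighbor_predict(train, x):
    # Purely data-driven predictor: return the label of the closest training input.
    _, nearest_y = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest_y

def mse(train, xs):
    # Mean squared error of the predictor against the true law on inputs xs.
    errors = [(nearest_neighbor_predict(train, x) - true_law(x)) ** 2 for x in xs]
    return sum(errors) / len(errors)

rng = random.Random(0)
train = [(x, true_law(x)) for x in (rng.uniform(0.0, 1.0) for _ in range(200))]
held_out = [rng.uniform(0.0, 1.0) for _ in range(200)]       # same distribution as training
extrapolation = [rng.uniform(2.0, 3.0) for _ in range(200)]  # regime never observed

in_dist_error = mse(train, held_out)
extrap_error = mse(train, extrapolation)
print(f"held-out MSE:      {in_dist_error:.6f}")
print(f"extrapolation MSE: {extrap_error:.6f}")
```

A standard train/test split would report only the first number, which is small here. The second number is large because the lookup model can never return a value outside the range of labels it has seen.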

Explainability, Interpretability, and the Limits of XAI

The work draws a critical distinction between explainability (how a model works) and interpretability (why a model works in terms of underlying system properties). Explainable AI (XAI) techniques, such as saliency maps and relevance attribution, highlight which inputs influence model outputs but rarely connect these to underlying scientific mechanisms or causal relationships. They aid diagnostics and exploratory analysis but seldom deliver interpretability or support hypothesis-driven scientific reasoning.

The authors argue that most XAI approaches interrogate only the mechanics of the model, not the world it represents, and that interpretability in the scientific sense can arise only through counterfactual analysis and validation against mechanisms not present in the training data.
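A small sketch can show why attribution on a model differs from a counterfactual statement about the world. Everything here is hypothetical and chosen for illustration: `world` is an assumed data-generating mechanism in which only `cause` matters, and `model` is an assumed fitted predictor that relies on a proxy variable which happened to equal `cause` in the training data. A finite-difference sensitivity plays the role of a saliency score.

```python
def world(cause, proxy):
    # Hypothetical mechanism: only `cause` affects the outcome.
    return 2.0 * cause

def model(cause, proxy):
    # Hypothetical fitted model that relies on the proxy, which equaled
    # `cause` throughout the (assumed) training data.
    return 2.0 * proxy

def sensitivity(fn, point, index, eps=1e-3):
    # Finite-difference change in fn when one input is nudged by eps.
    shifted = list(point)
    shifted[index] += eps
    return (fn(*shifted) - fn(*point)) / eps

point = (1.0, 1.0)  # on the training distribution, where proxy == cause

model_saliency = [sensitivity(model, point, i) for i in range(2)]
world_effect = [sensitivity(world, point, i) for i in range(2)]

print("prediction matches world on-distribution:", model(*point) == world(*point))
print("model saliency (cause, proxy):", model_saliency)
print("world effect   (cause, proxy):", world_effect)
```

The saliency score correctly reports that the model depends on the proxy, yet intervening on the proxy changes nothing in the assumed world. Only the counterfactual probe of `world` exposes the mismatch.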

Learning Representations and Extracting Structure

Modern AI models compute representations and decision functions, typically formulated as f(x)=h(g(x)), where g is feature extraction or representation mapping and h is the decision function. Although representations are empirically essential, their scientific content is generally opaque. Recent success stories (e.g., AlphaFold) illustrate that deep models can learn emergent features implicitly, but extracting new theory or interpretable mechanisms from them remains challenging.
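The decomposition f(x)=h(g(x)) can be written out directly. In the sketch below, `g` and `h` are hand-written stand-ins chosen for illustration (a squared-radius feature and a threshold rule); in a deep model both would be learned, and `g` would usually be far less readable than this.

```python
def g(x):
    # Representation map: raw 2-D point -> a single feature (squared radius).
    x1, x2 = x
    return (x1 * x1 + x2 * x2,)

def h(z, threshold=1.0):
    # Decision function acting on the representation: a simple threshold rule.
    return 1 if z[0] <= threshold else 0

def f(x):
    # The full model is the composition of representation and decision.
    return h(g(x))

inside = f((0.5, 0.5))   # squared radius 0.5, inside the unit circle
outside = f((1.0, 1.0))  # squared radius 2.0, outside the unit circle
print(inside, outside)
```

Because this `g` is a named physical quantity, the decision rule in `h` reads as a hypothesis about the system. The difficulty described above is that learned representations rarely come with such a reading.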

Furthermore, complex domains such as neuroscience and genetics underscore the difficulty of identifying relevant abstractions, scales, or representations a priori—necessitating AI/ML systems that can assist in discovering new semantic structures directly from data.

Taxonomy of Model Structures: Global, Local, and Intermediate-Scale

The paper introduces a taxonomy (and corresponding formalism) for structures that AI models can learn:

- Global Sparsity: Models depend on a small set of parameters (e.g., sparse regression).

- Local Sparsity: Models are low-dimensional only within local neighborhoods. The overall parameter count is high, but only a few parameters matter in any one region (e.g., in cancer, many genes are implicated across the population, but only a few in any given tumor).

- Intermediate-Scale Structure: State-of-the-art AI models (deep nets) appear to learn complex, coupled, locally low-dimensional features that do not aggregate into globally sparse forms, nor into simple local patches. Analytical tools for these are underdeveloped, and their explanatory power is mostly uncharted.

(Figure 2)

Figure 2: Taxonomy of structure in statistical and AI models: global sparsity (top left), local sparsity (top right), and complex intermediate-scale structure (middle and bottom) characteristic of deep AI.

The authors emphasize that while statistical theory is well-developed for global and some local models, it does not yet provide tools for understanding the intermediate-scale structures implicit in learned deep models. This is a principal barrier for integrating ML into scientific methodology.
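The difference between global and local sparsity can be illustrated with two toy functions. This is a sketch under stated assumptions, not the paper's formalism: both functions, the ten-dimensional input, and the finite-difference probe `active_coordinates` are hypothetical constructions. The probe lists which input coordinates change the output at a given point.

```python
def globally_sparse(x):
    # Depends on the same two coordinates everywhere in input space.
    return 3.0 * x[2] - 2.0 * x[7]

def locally_sparse(x):
    # The integer part of x[0] selects a region; within each region
    # exactly one other coordinate determines the output.
    region = int(x[0]) % 5
    return x[1 + region]

def active_coordinates(fn, x, eps=1e-4, tol=1e-9):
    # Indices whose small perturbation changes fn at the point x.
    base = fn(x)
    active = set()
    for i in range(len(x)):
        shifted = list(x)
        shifted[i] += eps
        if abs(fn(shifted) - base) > tol:
            active.add(i)
    return active

# One probe point in the interior of each of the five regions.
points = [[r + 0.5] + [1.0] * 9 for r in range(5)]

global_sets = [active_coordinates(globally_sparse, p) for p in points]
local_sets = [active_coordinates(locally_sparse, p) for p in points]

print("global: per point", [sorted(s) for s in global_sets])
print("local:  per point", [sorted(s) for s in local_sets])
print("local:  union    ", sorted(set().union(*local_sets)))
```

For the locally sparse function, each point has a single active coordinate while the union over regions contains five, mirroring the tumor example in the list above. No comparably simple probe is known for the intermediate-scale case, which is the gap the authors point to.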

Surrogate Models and Scientific Counterfactuals

To address this barrier, the authors advocate for the construction of 'scientifically validated surrogate models'—simpler, interpretable models distilled from complex AI models but amenable to counterfactual and hypothesis-driven interrogation. Critically, these are not mere distillations for efficiency (as in standard knowledge distillation [hinton2015distilling]); they must support both mechanistic understanding and robust extrapolation.

Surrogate models should map the internal representations and decision functions of black-box models into minimal, testable scientific hypotheses. For example, symbolic regression can sometimes extract closed-form equations relating variables, and surrogate models constructed this way can often extrapolate better than the original black-box model in novel regimes [cranmer2020discovering, udrescu2020ai].
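The idea of distilling a black box into a closed form that extrapolates can be sketched in a few lines. The example below is a deliberately tiny stand-in for symbolic regression and is not the method of the cited works: `hidden_law`, the nearest-neighbor `black_box`, and the four-term candidate `library` are all assumptions made for illustration. The surrogate is chosen by fitting each one-term form y = c * phi(x) to the black box's outputs by least squares.

```python
import random

def hidden_law(x):
    # Hypothetical mechanism that generated the data (unknown to the modeler).
    return 2.0 * x * x

rng = random.Random(1)
train = [(x, hidden_law(x)) for x in (rng.uniform(0.0, 1.0) for _ in range(300))]

def black_box(x):
    # Stand-in for an opaque learned model: 1-nearest-neighbor lookup.
    return min(train, key=lambda pair: abs(pair[0] - x))[1]

# Hypothetical library of candidate closed forms y = c * phi(x).
library = {
    "x": lambda x: x,
    "x^2": lambda x: x * x,
    "x^3": lambda x: x ** 3,
    "sqrt(x)": lambda x: x ** 0.5,
}

def fit_surrogate(model, queries):
    # Return (name, coefficient, sum of squared errors) of the best one-term form.
    targets = [model(x) for x in queries]
    best = None
    for name, phi in library.items():
        feats = [phi(x) for x in queries]
        c = sum(p * t for p, t in zip(feats, targets)) / sum(p * p for p in feats)
        sse = sum((c * p - t) ** 2 for p, t in zip(feats, targets))
        if best is None or sse < best[2]:
            best = (name, c, sse)
    return best

queries = [i / 100 for i in range(1, 101)]  # probe only inside the training range
name, coef, _ = fit_surrogate(black_box, queries)

def surrogate(x):
    return coef * library[name](x)

x_new = 3.0  # outside the training range
print(f"surrogate: y = {coef:.3f} * {name}")
print(f"x = {x_new}: truth {hidden_law(x_new):.2f}, "
      f"black box {black_box(x_new):.2f}, surrogate {surrogate(x_new):.2f}")
```

The surrogate is fitted only to the black box's behavior inside the training range, yet it remains usable at `x_new` because it commits to a functional form. Whether that form is right is exactly what counterfactual validation would have to test.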

The authors argue that the creation and validation of such surrogates will be as transformative for AI-driven science in the 21st century as the introduction of model chemistries and computational surrogates was for the empirical sciences in the 20th.

Implications and Future Directions

The paper identifies several necessary methodological advances for AI to enable scientific discovery:

- Extraction of Surrogate Models: Techniques for deriving explicit representations (g) and decision rules (h) from black-box models, yielding interpretable mechanisms.

- Statistical and Scientific Validation: Procedures for testing hypotheses and quantifying uncertainty in surrogate models, integrating counterfactual and experimental reasoning.

- Use-Case Driven Development: Emphasis on practical scientific domains (e.g., synthetic biology, precision medicine) as motivation and testbed for method development.
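For the statistical validation item in the list above, one familiar option is a bootstrap interval on a surrogate's fitted parameter. The sketch below is an assumption-laden illustration rather than a procedure from the paper: the data are synthetic, `true_coef` and the noise level are made up, and the surrogate is a one-parameter line through the origin.

```python
import random

rng = random.Random(42)
true_coef = 2.0  # hypothetical value used to generate synthetic data
xs = [rng.uniform(0.0, 1.0) for _ in range(200)]
data = [(x, true_coef * x + rng.gauss(0.0, 0.1)) for x in xs]

def fit_slope(sample):
    # Least-squares slope for the surrogate y = c * x (no intercept).
    return sum(x * y for x, y in sample) / sum(x * x for x, _ in sample)

def bootstrap_interval(sample, n_resamples=1000, level=0.95):
    # Percentile bootstrap: refit on resampled data and read off quantiles.
    estimates = sorted(
        fit_slope([rng.choice(sample) for _ in sample])
        for _ in range(n_resamples)
    )
    lo_idx = int((1 - level) / 2 * n_resamples)
    hi_idx = int((1 + level) / 2 * n_resamples) - 1
    return estimates[lo_idx], estimates[hi_idx]

point_estimate = fit_slope(data)
low, high = bootstrap_interval(data)
print(f"slope estimate: {point_estimate:.3f}")
print(f"95% bootstrap interval: [{low:.3f}, {high:.3f}]")
```

An interval of this kind quantifies sampling uncertainty only. It does not address whether the surrogate's functional form holds outside the sampled regime, which is where counterfactual and experimental checks come in.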

The practical implications are profound: AI models that are interpretable, robust, and discover mechanisms can accelerate innovation in fields ranging from materials science to genomics. Moreover, interpretable surrogates play a key role in societal domains—such as law and medicine—where the ability to interrogate and trust model conclusions is critical for social and ethical acceptance.

Theoretically, the authors chart a new research frontier focused on the interplay between representation learning, model structure, and the scientific method—a blend of statistical learning theory, experimental design, and causal inference [pearl2019seven].

Conclusion

This work presents a comprehensive framework and research agenda for aligning the objectives of AI with the epistemic goals of science. The authors highlight the urgent need for interpretability and counterfactual validation in AI models intended for scientific discovery. They emphasize that progress requires the creation of surrogate models that enable mechanistic insight and reliable extrapolation, rather than simply delivering predictive accuracy. Success in this agenda will unlock AI's potential as an indispensable tool for hypothesis generation, theory formation, and discovery in the sciences.

References:

- (2111.13786) "Learning from learning machines: a new generation of AI technology to meet the needs of science"

- [cranmer2020discovering] "Discovering Symbolic Models from Deep Learning with Inductive Biases"

- [udrescu2020ai] "AI Feynman: a physics-inspired method for symbolic regression"

- [hinton2015distilling] "Distilling the Knowledge in a Neural Network"

- [box71p] G.E.P. Box, "Science and Statistics"

- [bickel2015mathematical] "Mathematical Statistics: Basic Ideas and Selected Topics, Volume I"

- [pearl2019seven] J. Pearl, "The seven tools of causal inference, with reflections on machine learning"

Related Papers

- From Kepler to Newton: Explainable AI for Science (2021)

- The Future of Fundamental Science Led by Generative Closed-Loop Artificial Intelligence (2023)

- Decoding complexity: how machine learning is redefining scientific discovery (2024)

- AI Descartes: Combining Data and Theory for Derivable Scientific Discovery (2021)

- Explain the Black Box for the Sake of Science: the Scientific Method in the Era of Generative Artificial Intelligence (2024)

- Towards Scientific Discovery with Generative AI: Progress, Opportunities, and Challenges (2024)

- Advancing the Scientific Method with Large Language Models: From Hypothesis to Discovery (2025)

- AI4Research: A Survey of Artificial Intelligence for Scientific Research (2025)

- From AI for Science to Agentic Science: A Survey on Autonomous Scientific Discovery (2025)

- Explainable AI: Learning from the Learners (2026)
