- The paper introduces a novel framework that replaces the Euclidean metric with the 2-Wasserstein distance to robustly summarize data via convex polytopes.

- It establishes theoretical guarantees including existence, uniqueness, and statistical consistency while employing Rényi entropy for regularization.

- Empirical evaluations demonstrate the algorithm's effectiveness on both synthetic and real datasets, highlighting its interpretability and robustness to outliers.

Wasserstein Archetypal Analysis: Theory, Algorithms, and Empirical Evaluation

Archetypal Analysis (AA) is a classical unsupervised learning paradigm that summarizes multivariate data via convex polytopes, with the polytope vertices interpreted as "archetypes": extreme exemplars of the dataset. In its original formulation, AA minimizes the average squared Euclidean distance from the data points to their projections onto a polytope whose vertices lie within the convex hull of the dataset, yielding archetypes that explain the general characteristics of the data.
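For reference, the classical objective described above can be written as follows. This is a sketch in assumed notation (data points $x_1,\dots,x_n$, archetypes $a_1,\dots,a_k$, probability simplex $\Delta_k$), none of which appears in the summary itself:

```latex
\min_{a_1,\dots,a_k \,\in\, \operatorname{conv}\{x_1,\dots,x_n\}}
\;\frac{1}{n}\sum_{i=1}^{n}\;
\min_{\alpha \in \Delta_k}
\Bigl\| x_i - \sum_{j=1}^{k} \alpha_j a_j \Bigr\|^2,
\qquad
\Delta_k = \Bigl\{ \alpha \in \mathbb{R}^k : \alpha_j \ge 0,\ \sum_{j=1}^{k} \alpha_j = 1 \Bigr\}.
```

The inner minimum is the squared distance from $x_i$ to the polytope spanned by the archetypes, and the constraint on the $a_j$ keeps that polytope inside the convex hull of the data.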

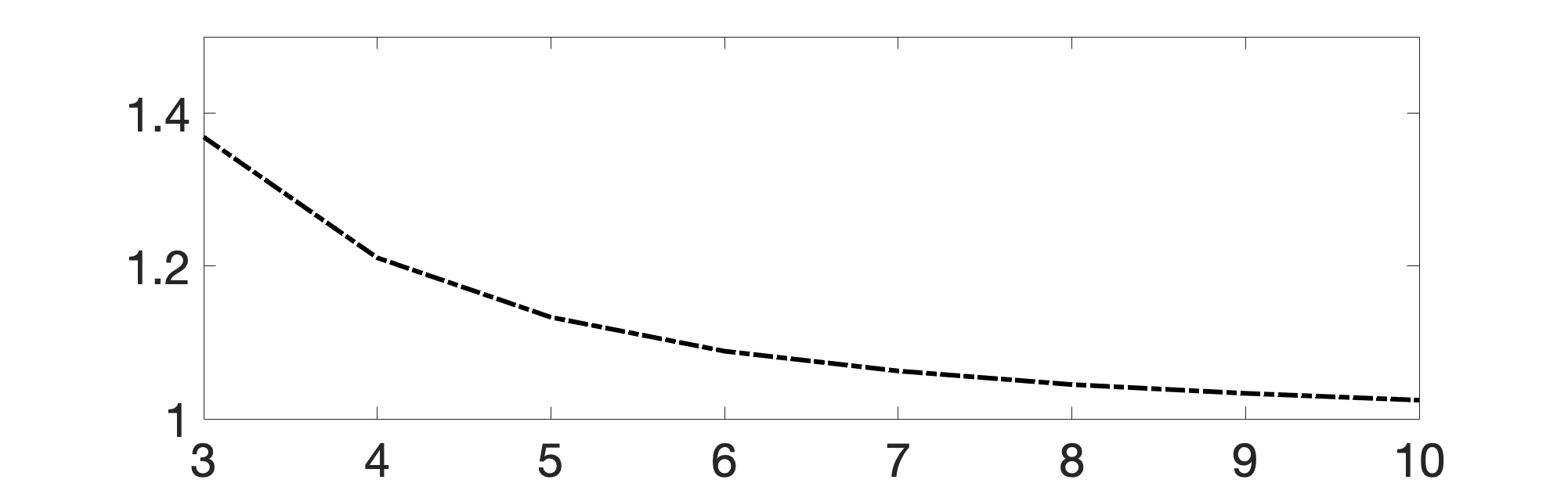

However, AA suffers from fundamental issues when the data-generating distribution is unbounded or contains outliers, due to the quadratic loss. To address these deficiencies, the paper introduces Wasserstein Archetypal Analysis (WAA), replacing the Euclidean metric with the 2-Wasserstein metric, thus leveraging optimal transport to robustly fit polytopal distributions to empirical or continuous data. For fixed k, WAA seeks the convex k-gon (or polytope in higher dimensions) whose uniform measure is closest to the data distribution μ in Wasserstein distance.
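To make the objective concrete, here is a minimal one-dimensional sketch, where the "polytope" is an interval [a, b]. It is not the paper's algorithm. It assumes the standard quantile-function formula for the 2-Wasserstein distance on the real line, and the function names `w2_squared_to_interval` and `waa_interval` are hypothetical. Under that assumption the objective is a convex quadratic in (a, b − a), so the minimizer has a closed form.

```python
def w2_squared_to_interval(data, a, b):
    """Squared 2-Wasserstein distance between the empirical measure of `data`
    and the uniform measure on [a, b] (requires a <= b), computed from
    quantile functions: integral over t in [0, 1] of (Q_data(t) - (a + (b - a) t))^2."""
    xs = sorted(data)
    n = len(xs)
    mean = sum(xs) / n
    second = sum(x * x for x in xs) / n
    # integral of Q_data(t) * t dt, using Q_data = x_(i) on ((i-1)/n, i/n]
    m1 = sum(x * (2 * i - 1) for i, x in enumerate(xs, start=1)) / (2 * n * n)
    s = b - a
    return second - 2 * (a * mean + s * m1) + a * a + a * s + s * s / 3


def waa_interval(data):
    """Interval [a, b] minimizing w2_squared_to_interval(data, a, b).
    Setting the gradient in (a, b - a) to zero gives
    b - a = 12 * m1 - 6 * mean and a = mean - (b - a) / 2."""
    xs = sorted(data)
    n = len(xs)
    mean = sum(xs) / n
    m1 = sum(x * (2 * i - 1) for i, x in enumerate(xs, start=1)) / (2 * n * n)
    length = 12 * m1 - 6 * mean
    a = mean - length / 2
    return a, a + length
```

For example, `waa_interval([(i - 0.5) / 10 for i in range(1, 11)])` returns an interval close to [0, 1], as one would expect for evenly spread data.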

Theoretical Results

The paper systematically analyzes the existence, uniqueness, and statistical consistency of the WAA minimization problem, and introduces a Rényi entropy regularization, controlled by a parameter ε, to obtain guarantees in greater generality.

These results are technically rigorous, leveraging measure-theoretic properties, optimal transport theory, and variational analysis.

Algorithmic Methods

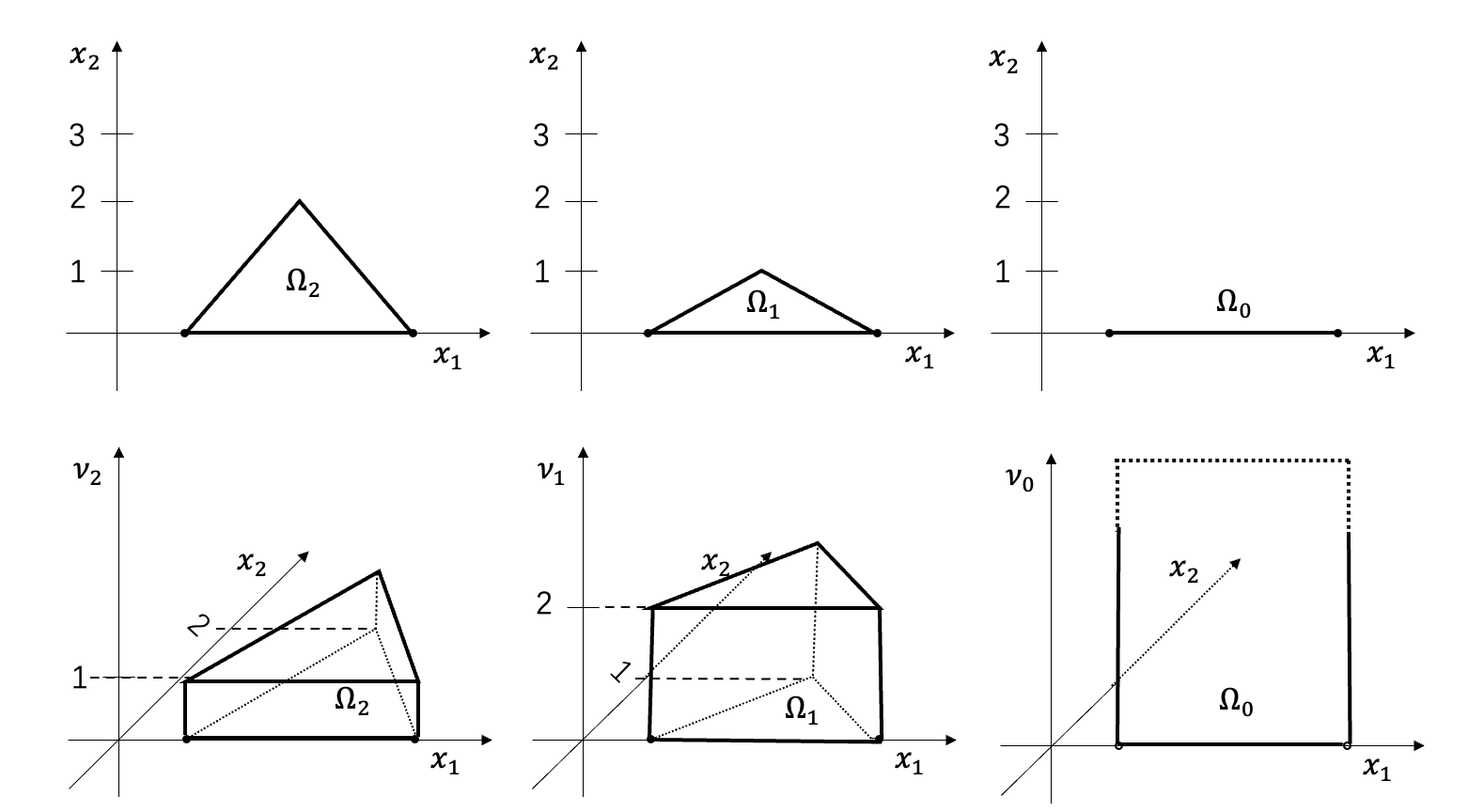

The paper proposes a gradient-based alternating minimization algorithm for the two-dimensional case with empirical measures, built on the semi-discrete formulation of optimal transport:

- The algorithm uses the dual formulation of the 2-Wasserstein metric: for a discrete measure μ and the uniform measure ν on the polytope, the optimal transport map is given by a weighted Voronoi tessellation (power diagram) of the polytope, which allows direct computation and efficient optimization.
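The semi-discrete dual mentioned above takes the following standard form. The notation is assumed rather than taken from the summary: $\mu = \sum_i m_i \delta_{x_i}$ is the data measure, $\nu$ is the uniform measure on the polytope $\Omega$, and $w \in \mathbb{R}^n$ are the weights of the power diagram.

```latex
W_2^2(\mu,\nu)
= \max_{w \in \mathbb{R}^n}
\Bigl\{ \sum_{i=1}^{n} m_i\, w_i
+ \int_{\Omega} \min_{1 \le i \le n} \bigl( |y - x_i|^2 - w_i \bigr)\, d\nu(y) \Bigr\},
\qquad
L_i(w) = \bigl\{ y \in \Omega : |y - x_i|^2 - w_i \le |y - x_j|^2 - w_j \ \ \forall j \bigr\}.
```

The sets $L_i(w)$ are the weighted Voronoi (Laguerre) cells. At a maximizer $w^*$ the cells satisfy $\nu(L_i(w^*)) = m_i$, and the optimal map sends each cell $L_i(w^*)$ to its data point $x_i$.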

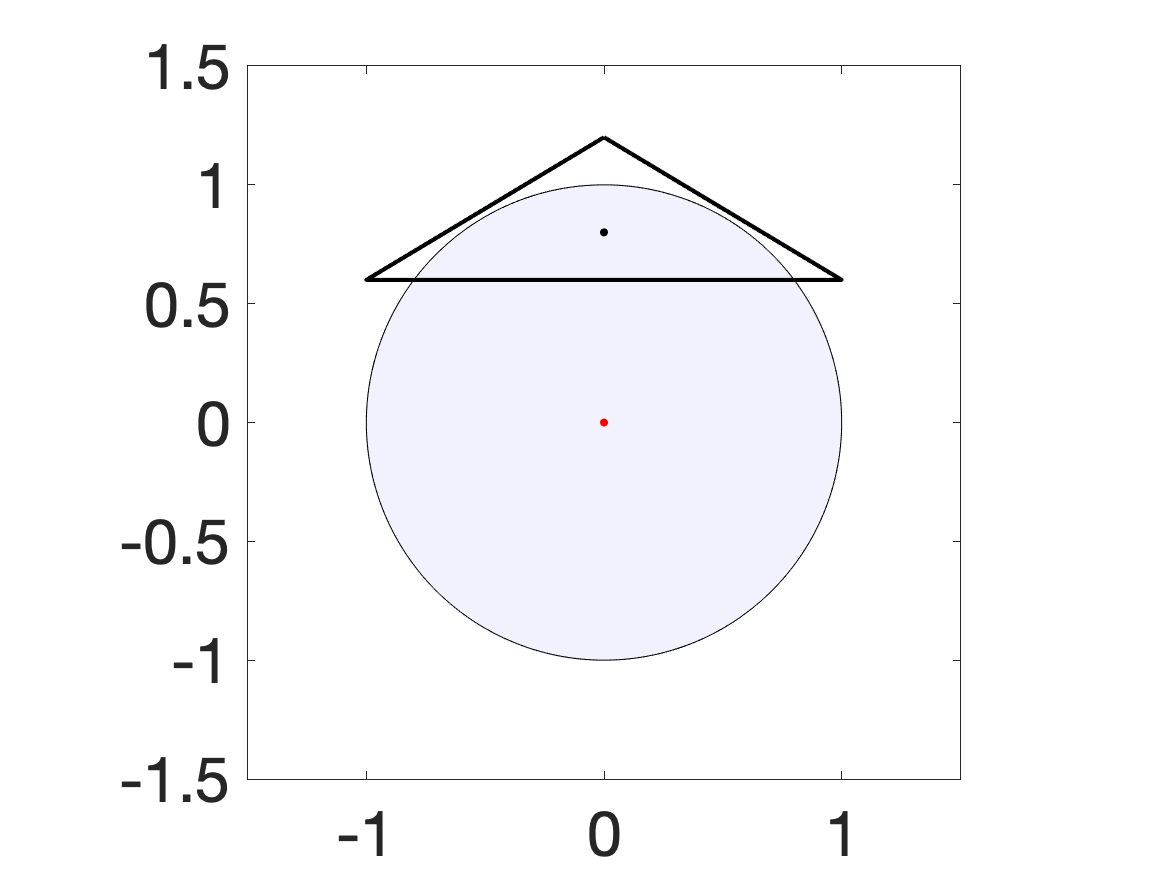

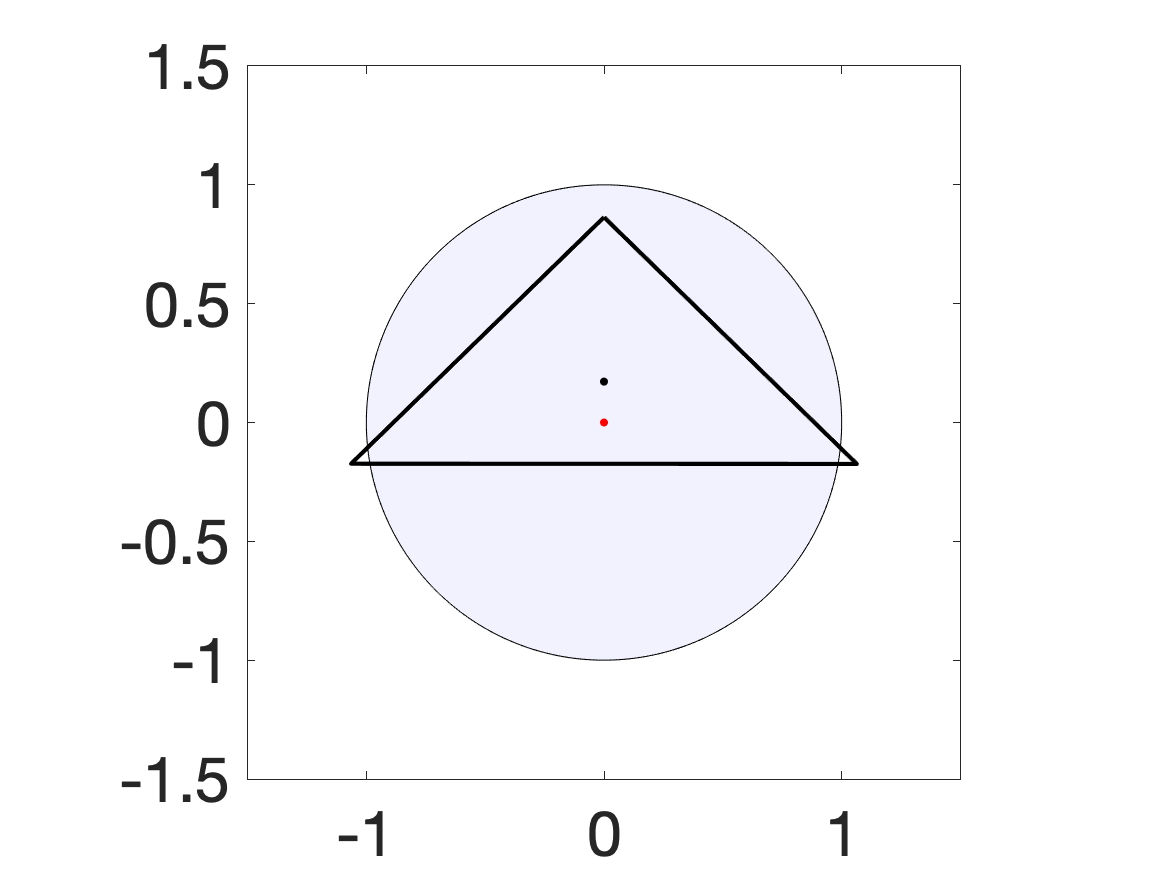

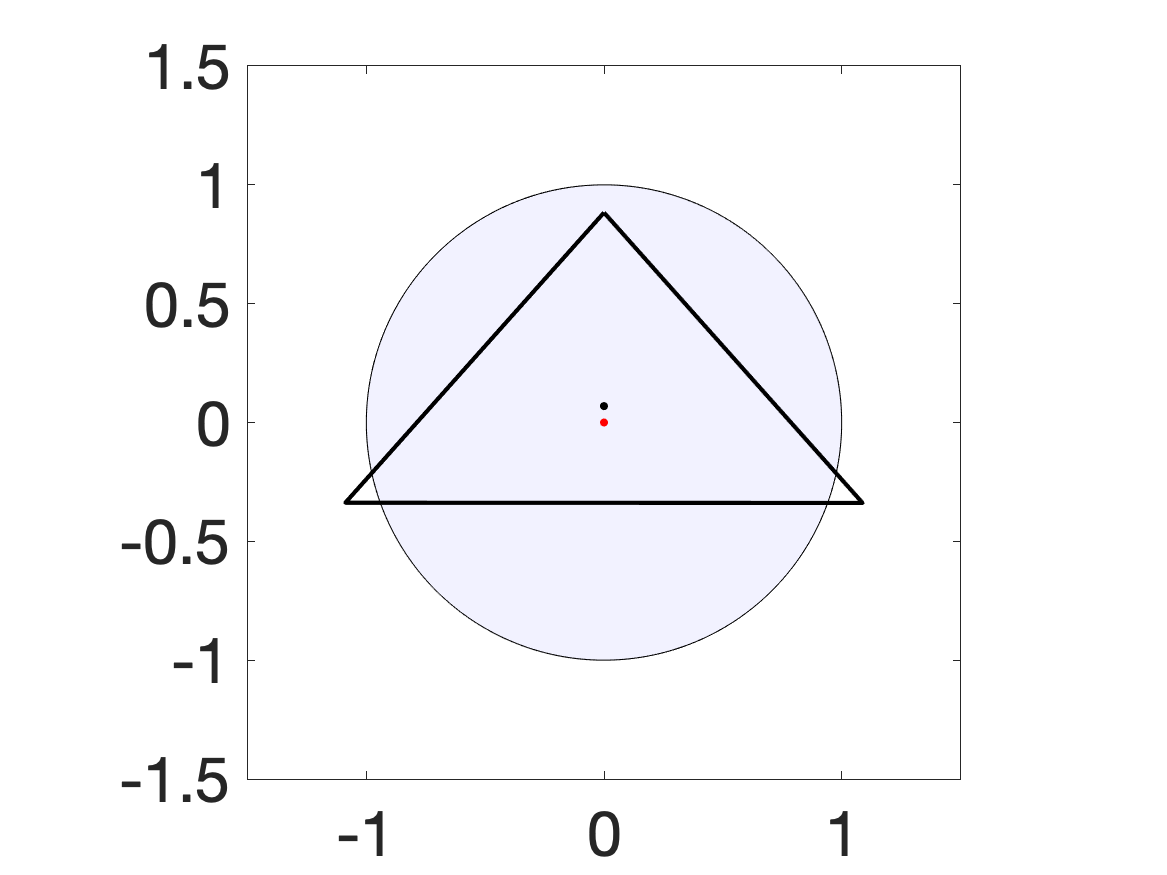

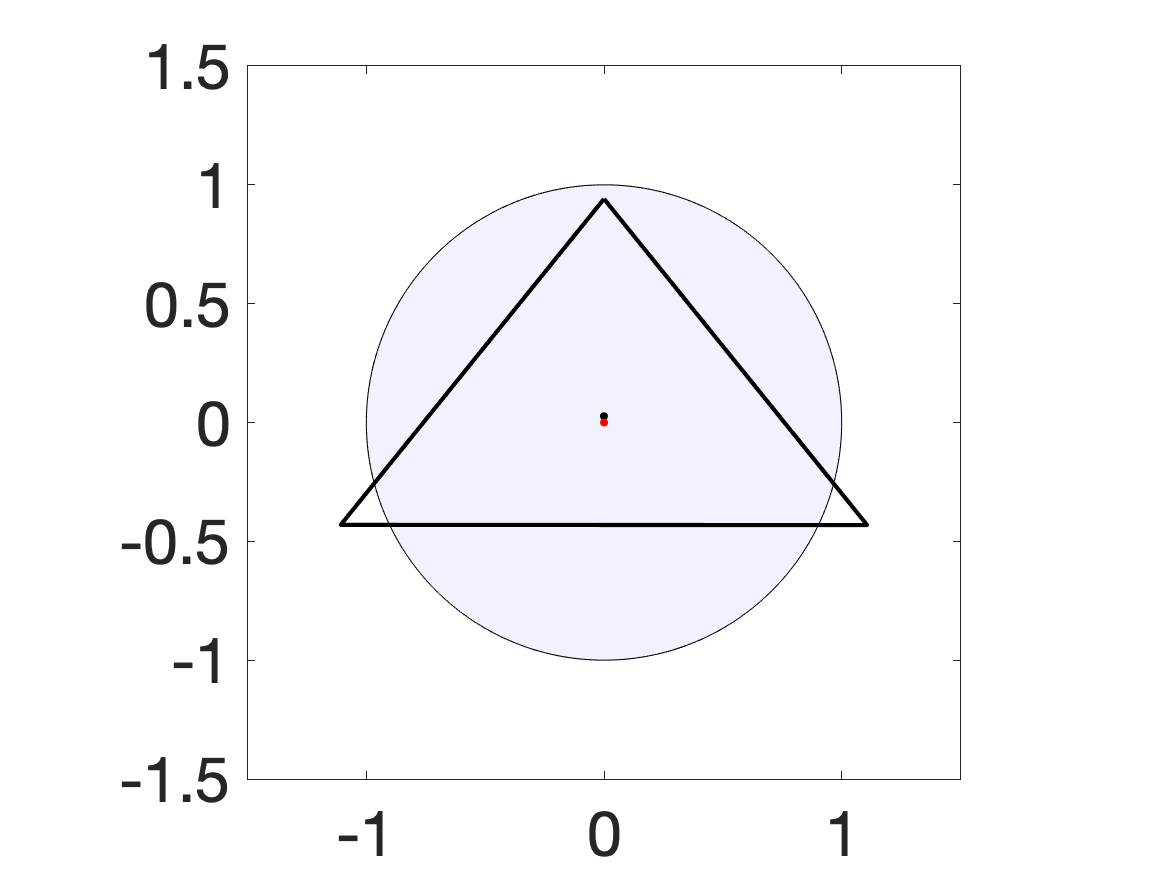

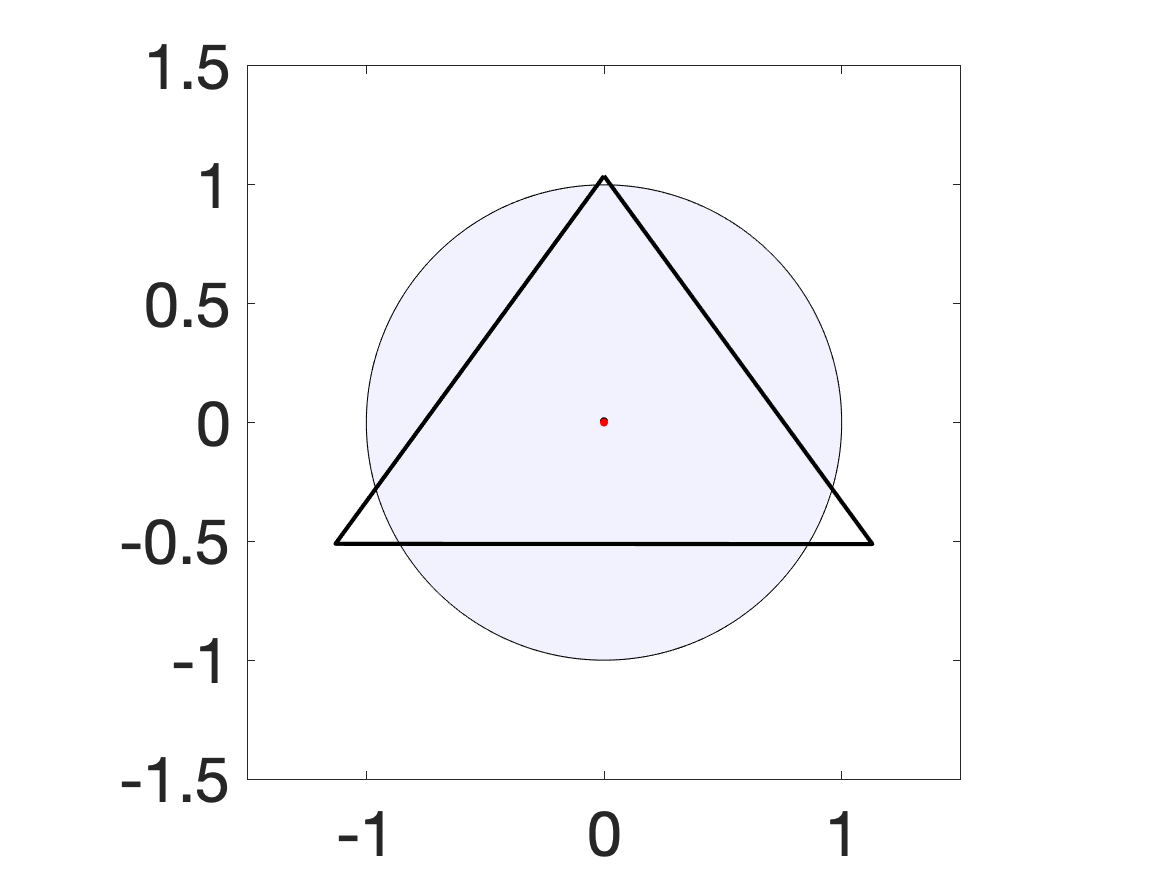

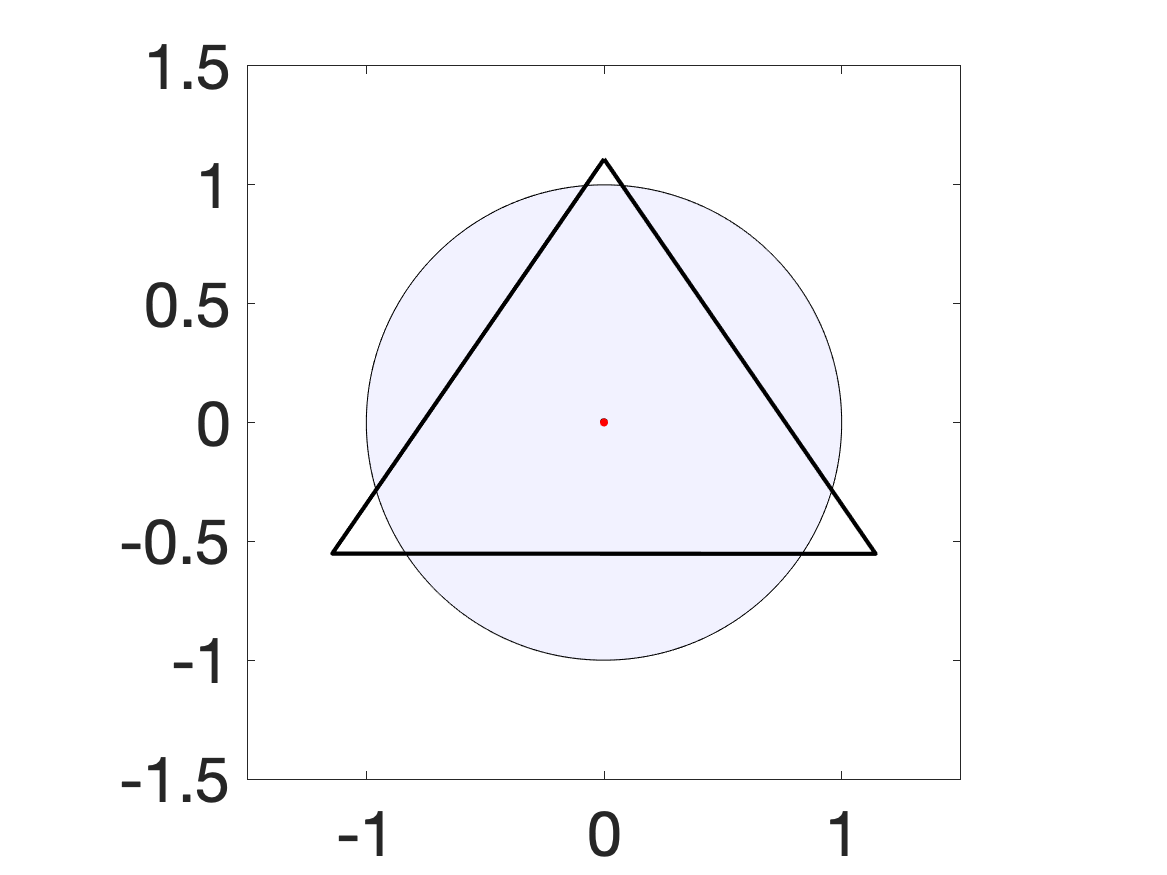

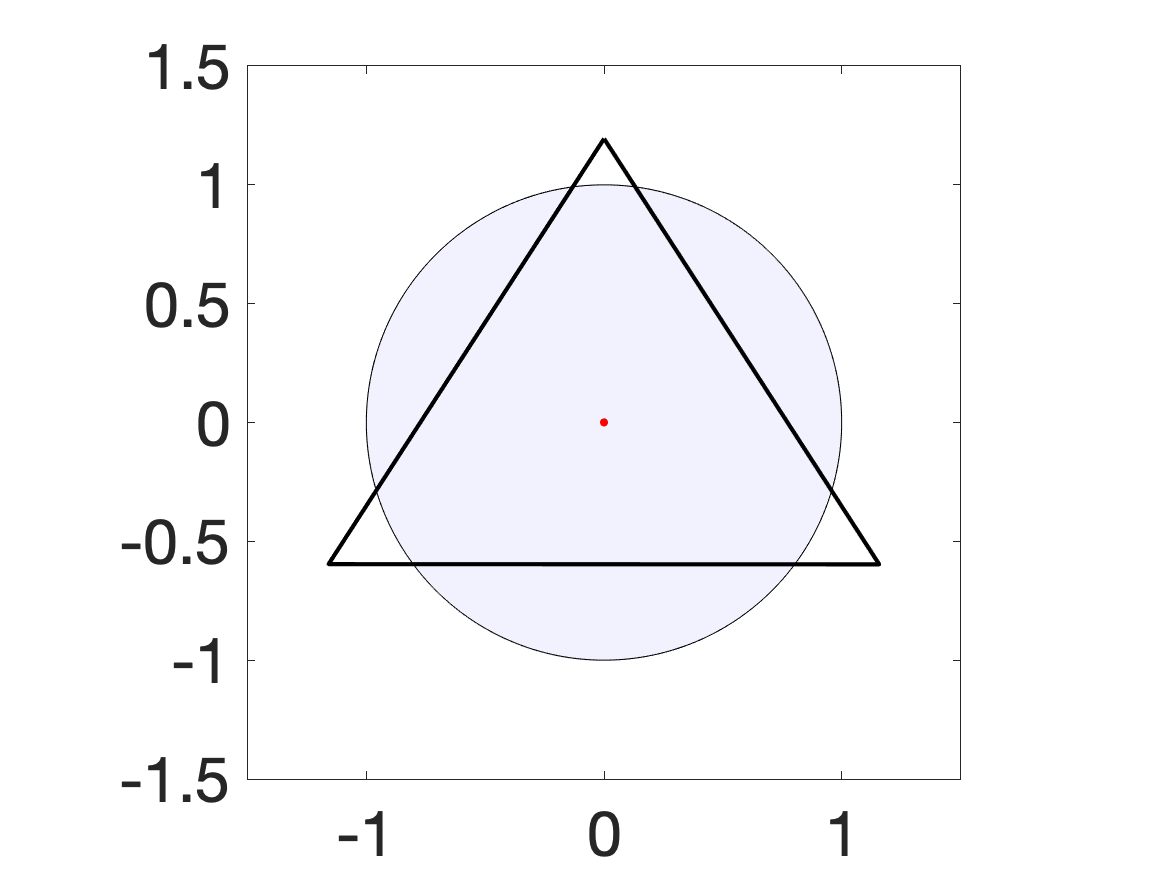

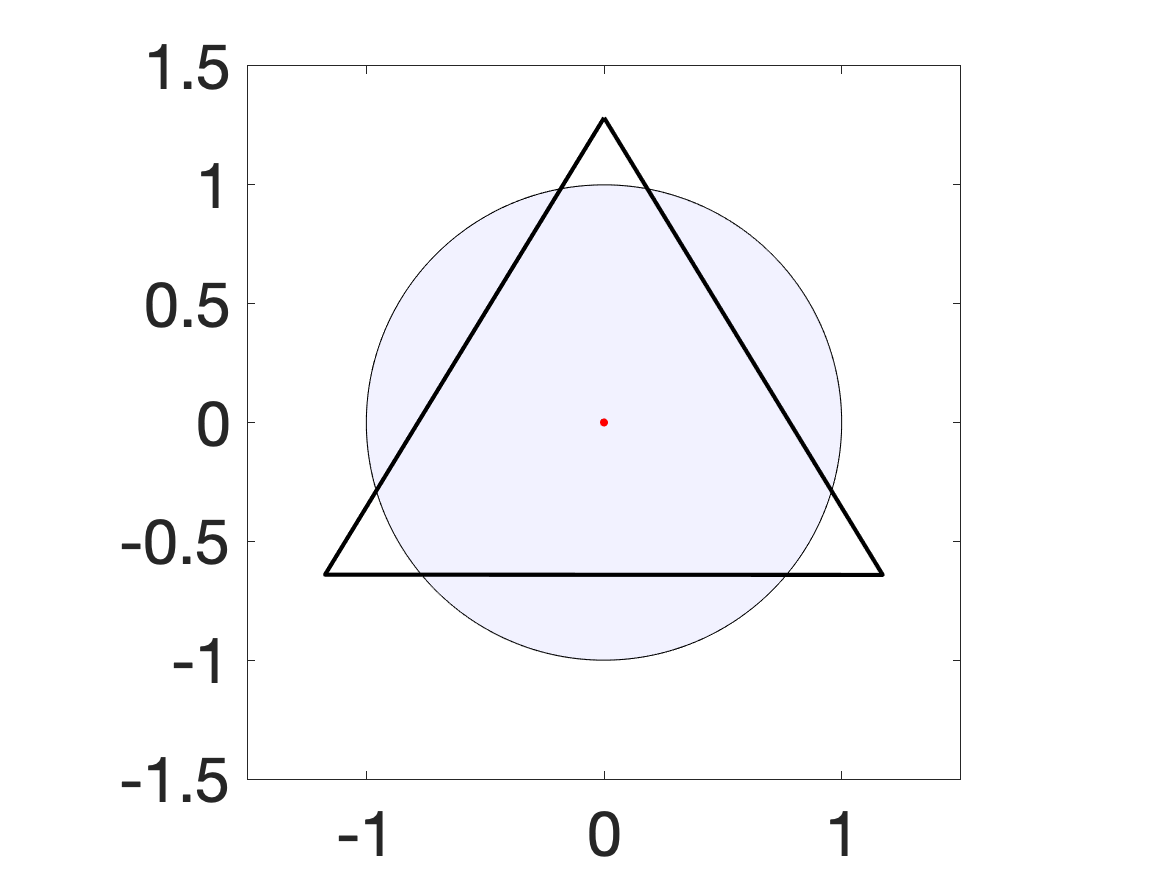

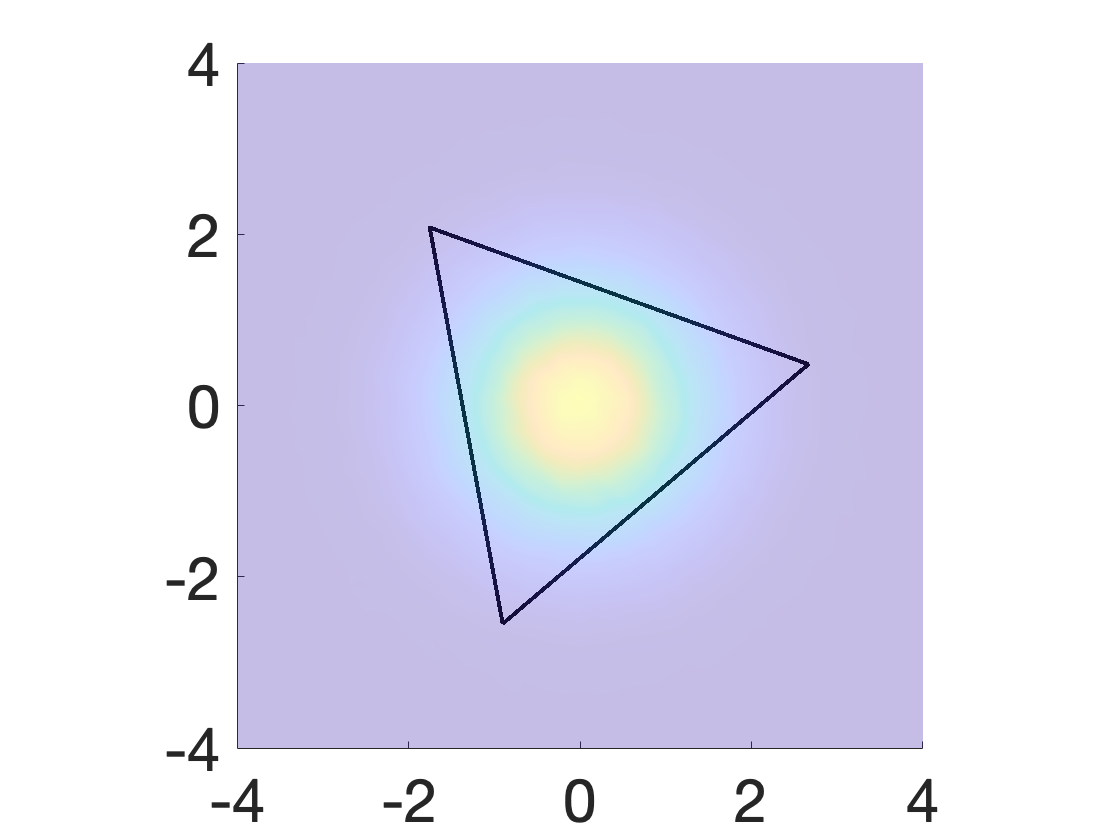

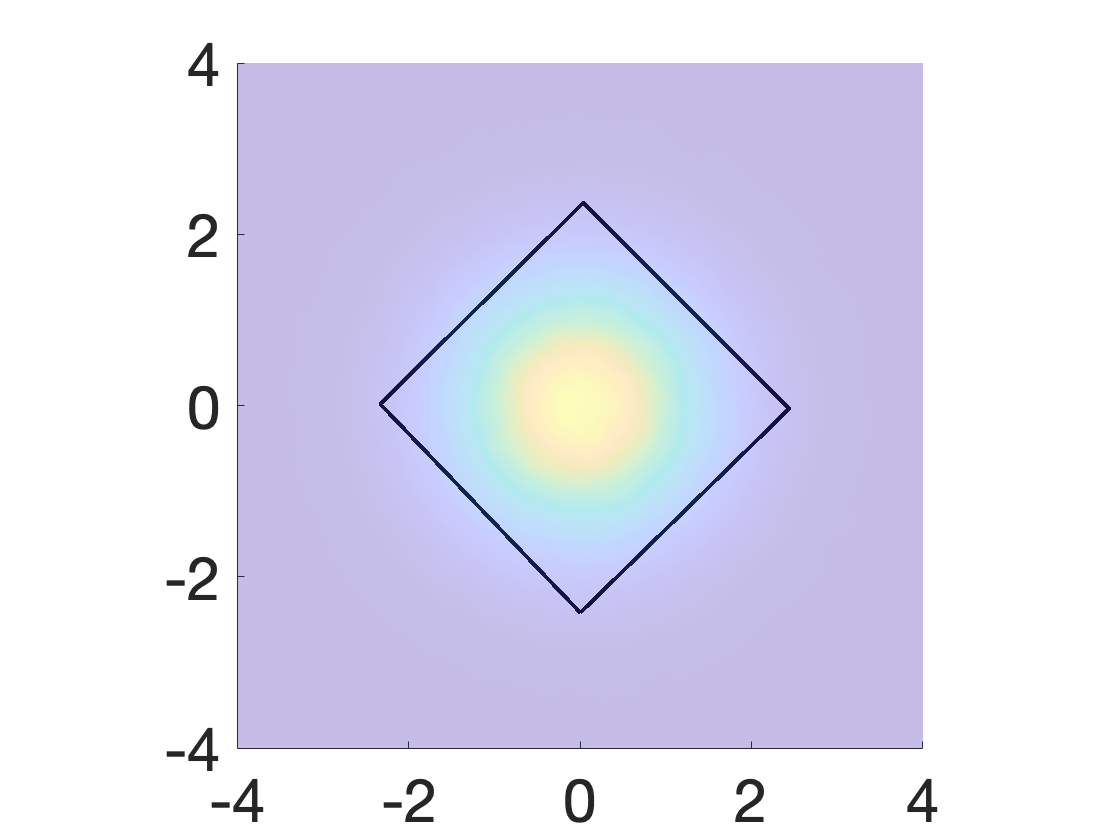

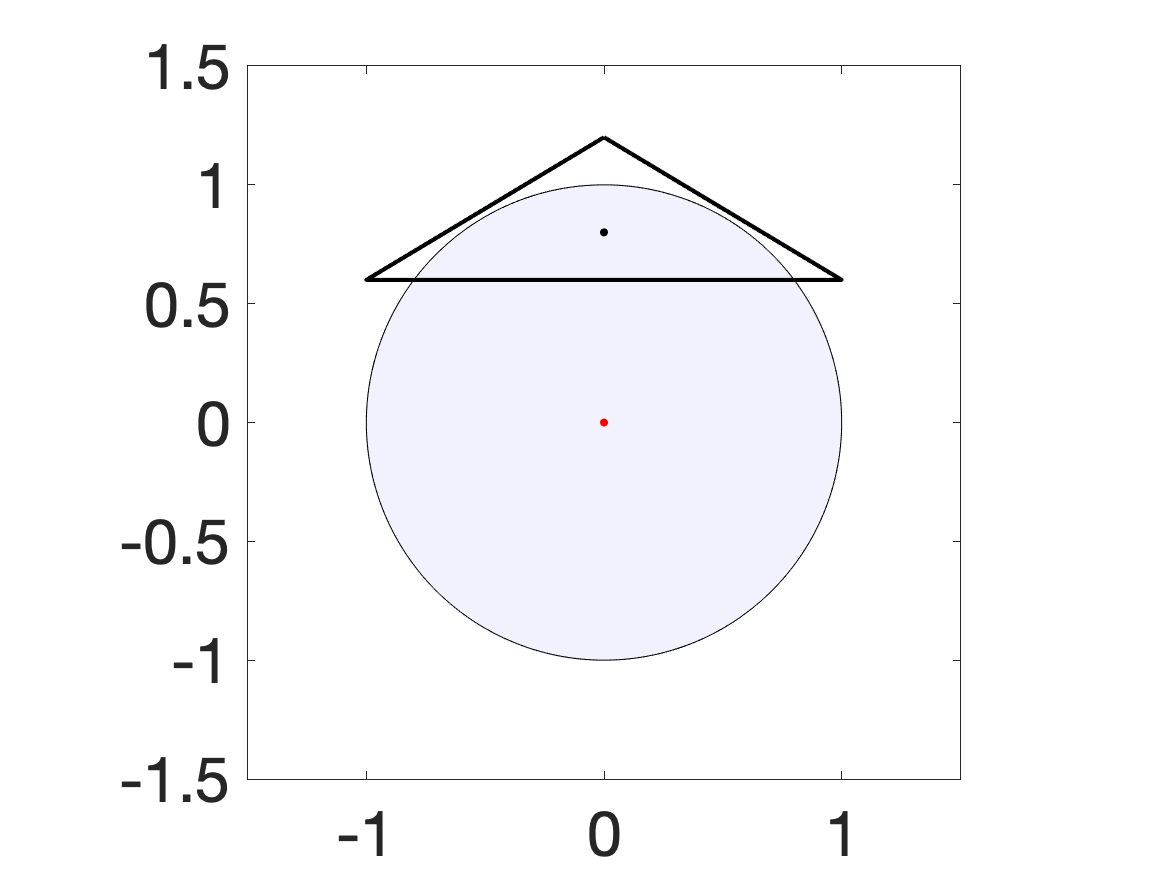

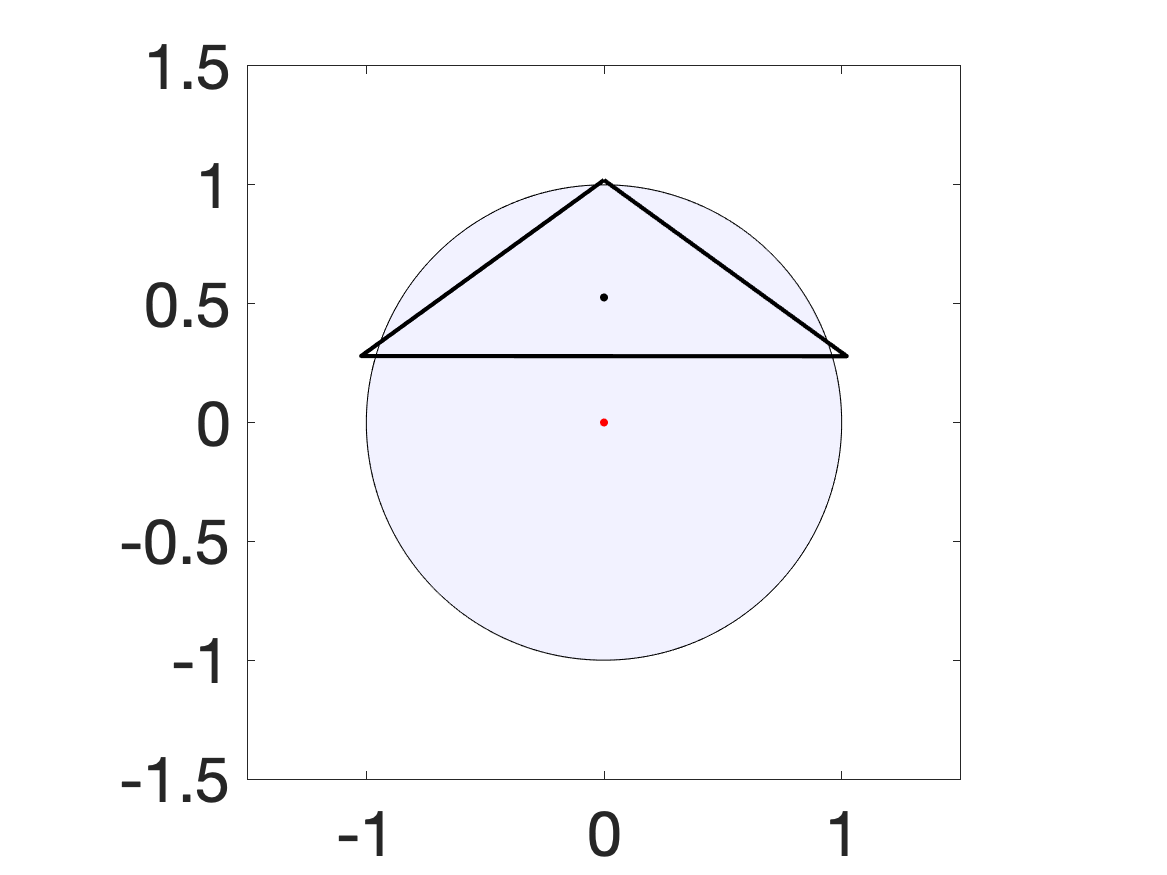

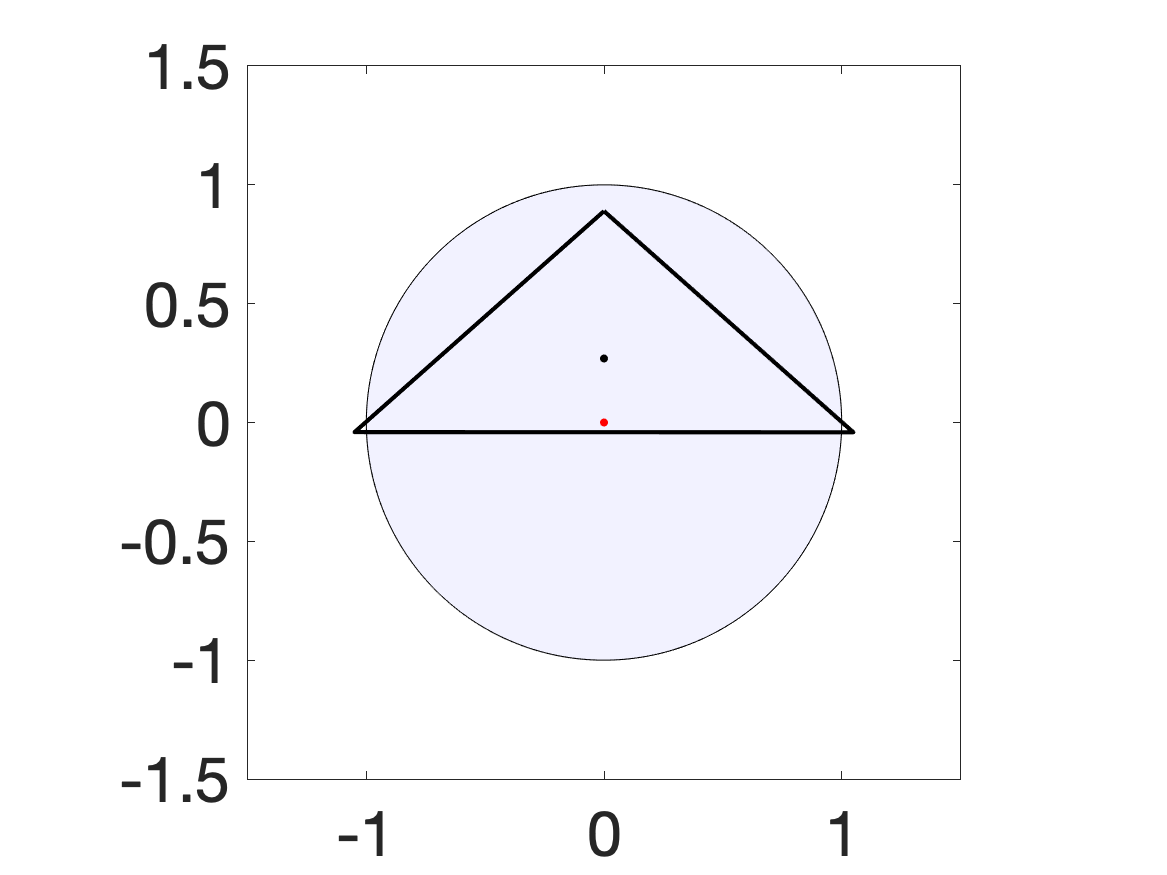

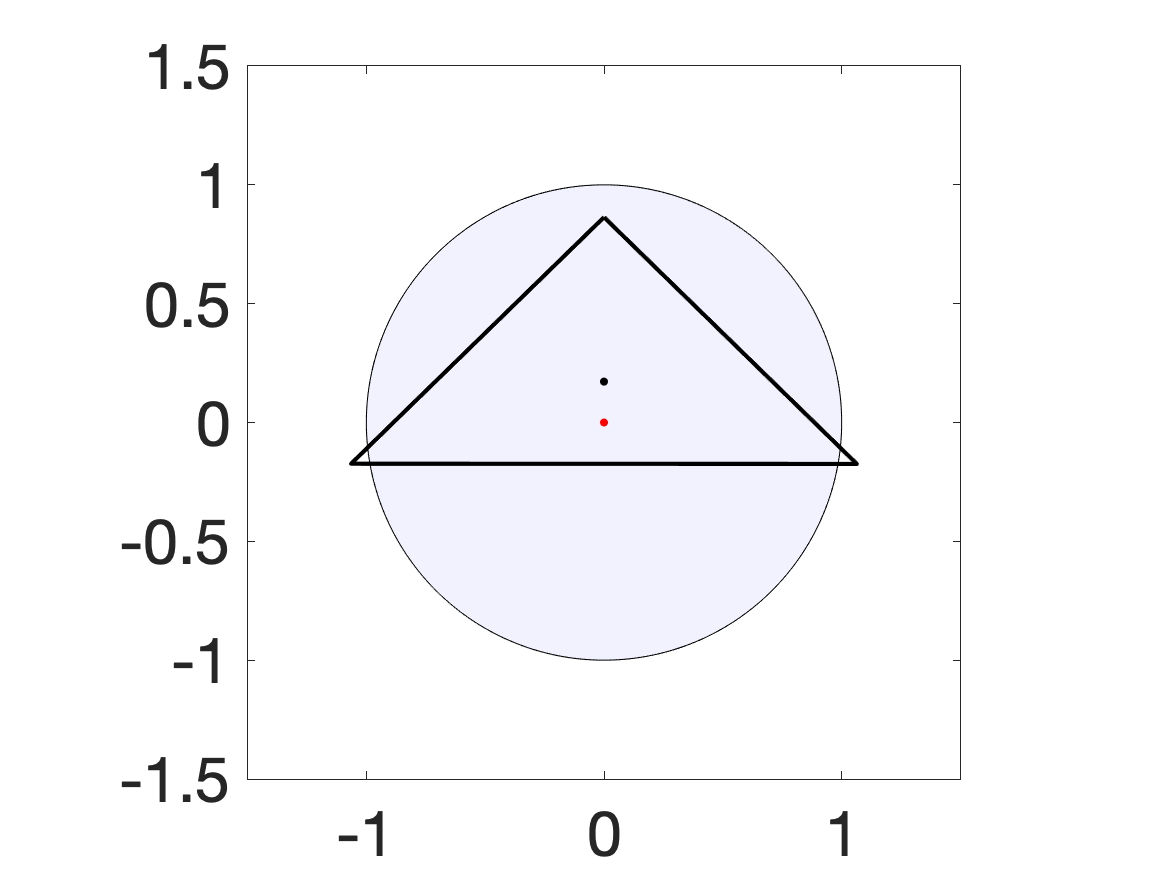

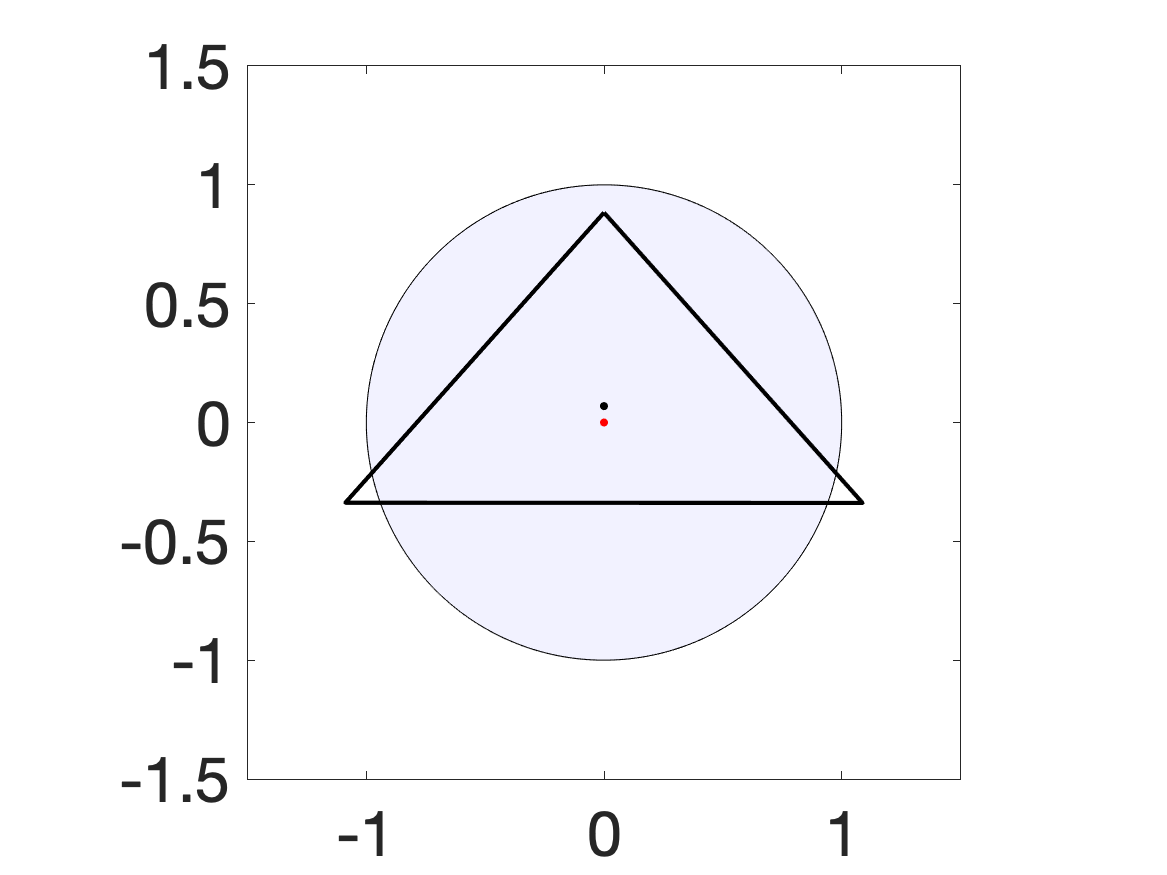

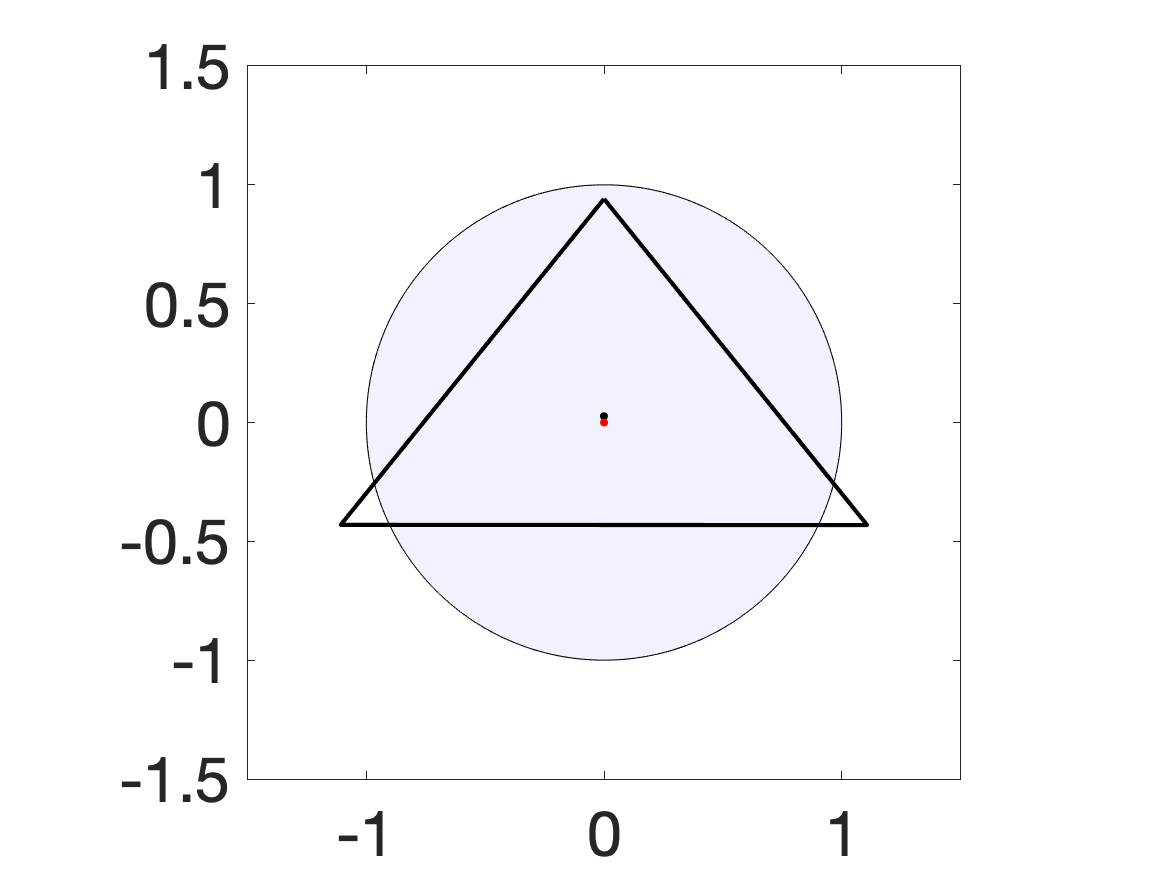

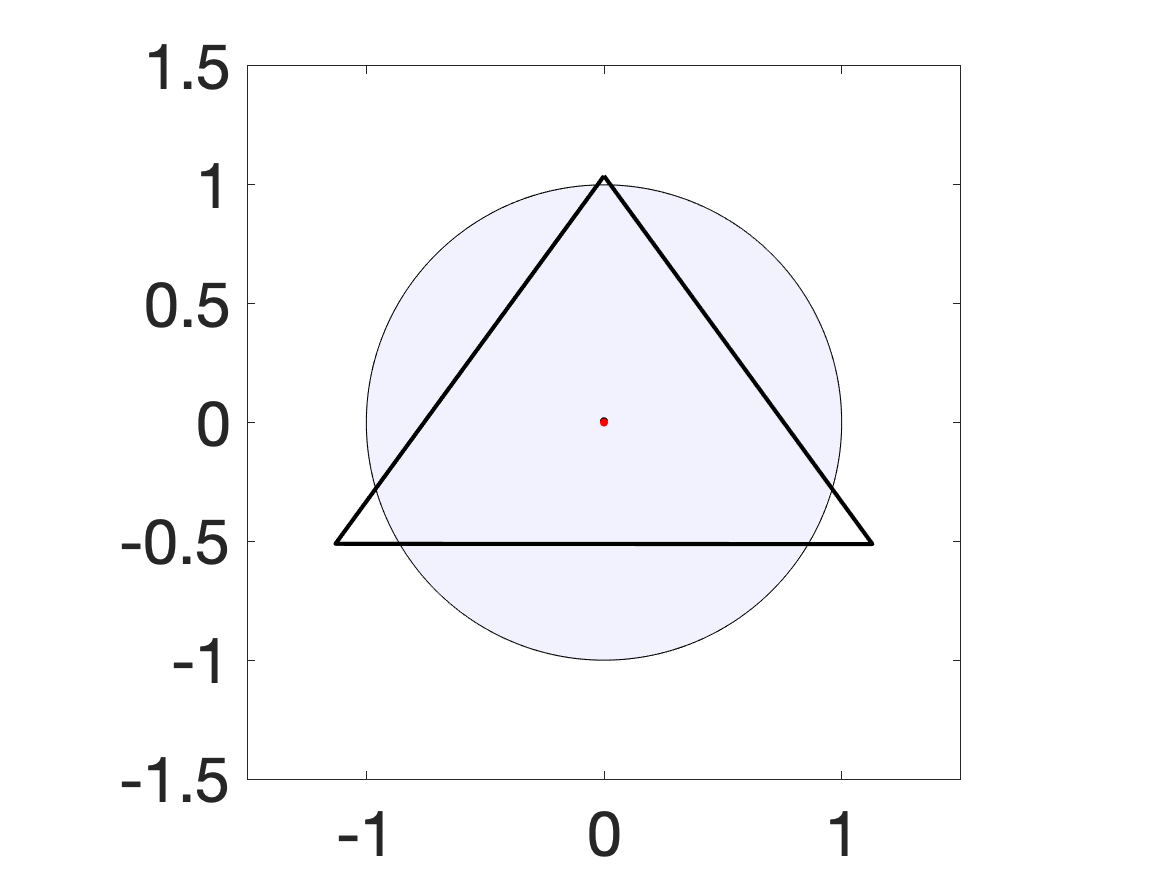

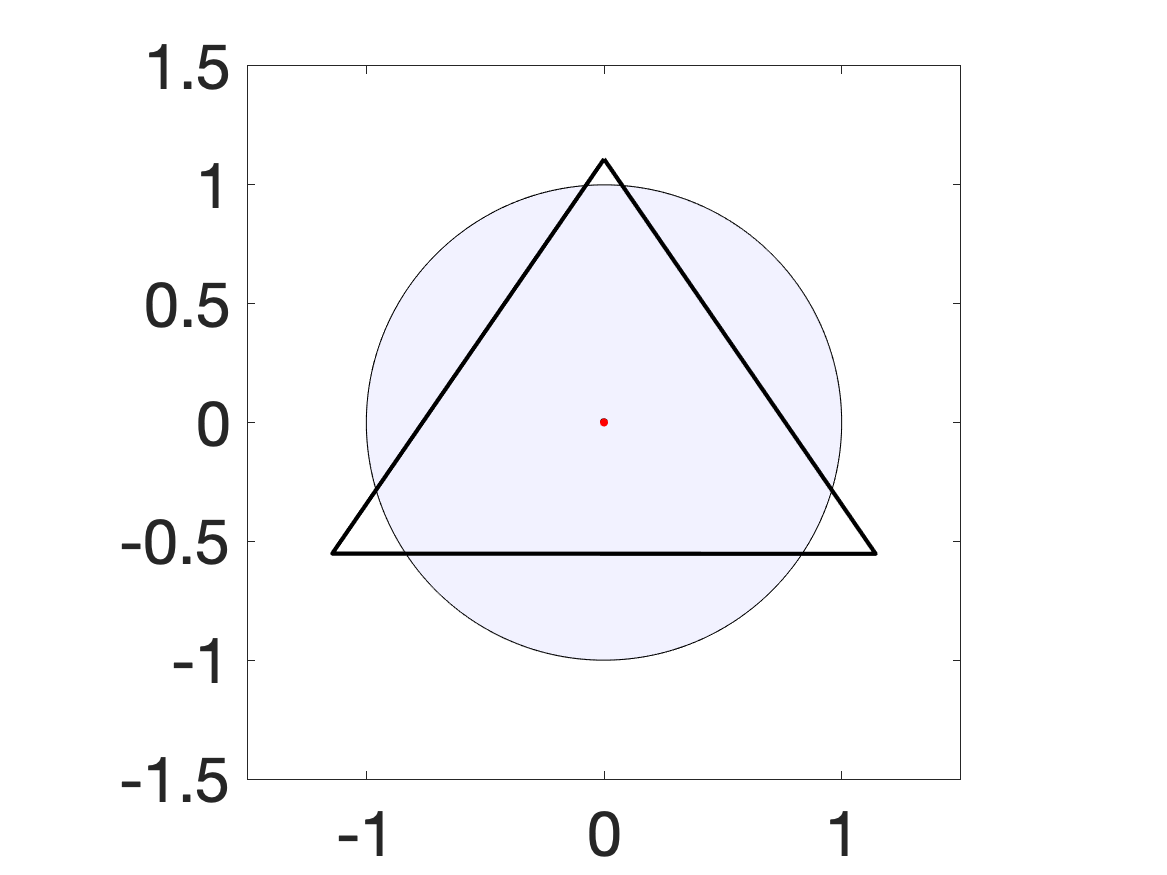

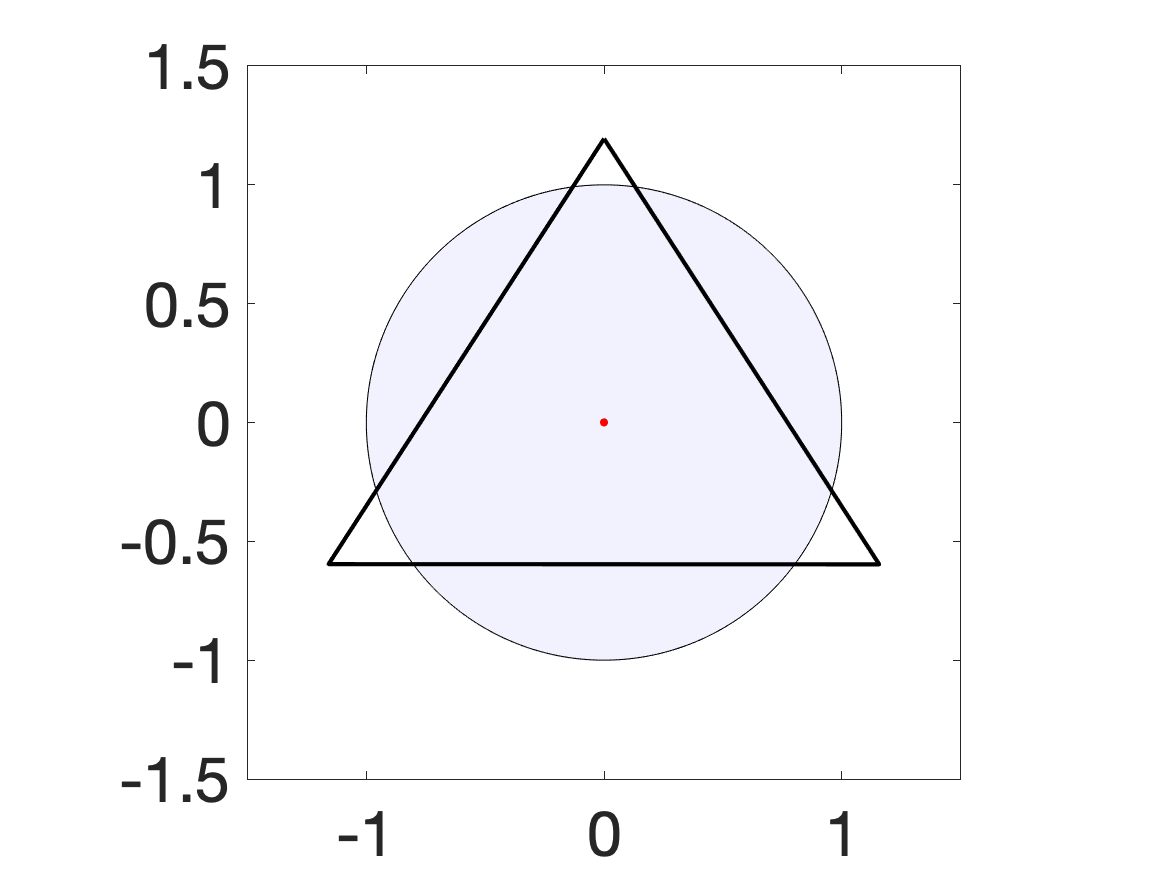

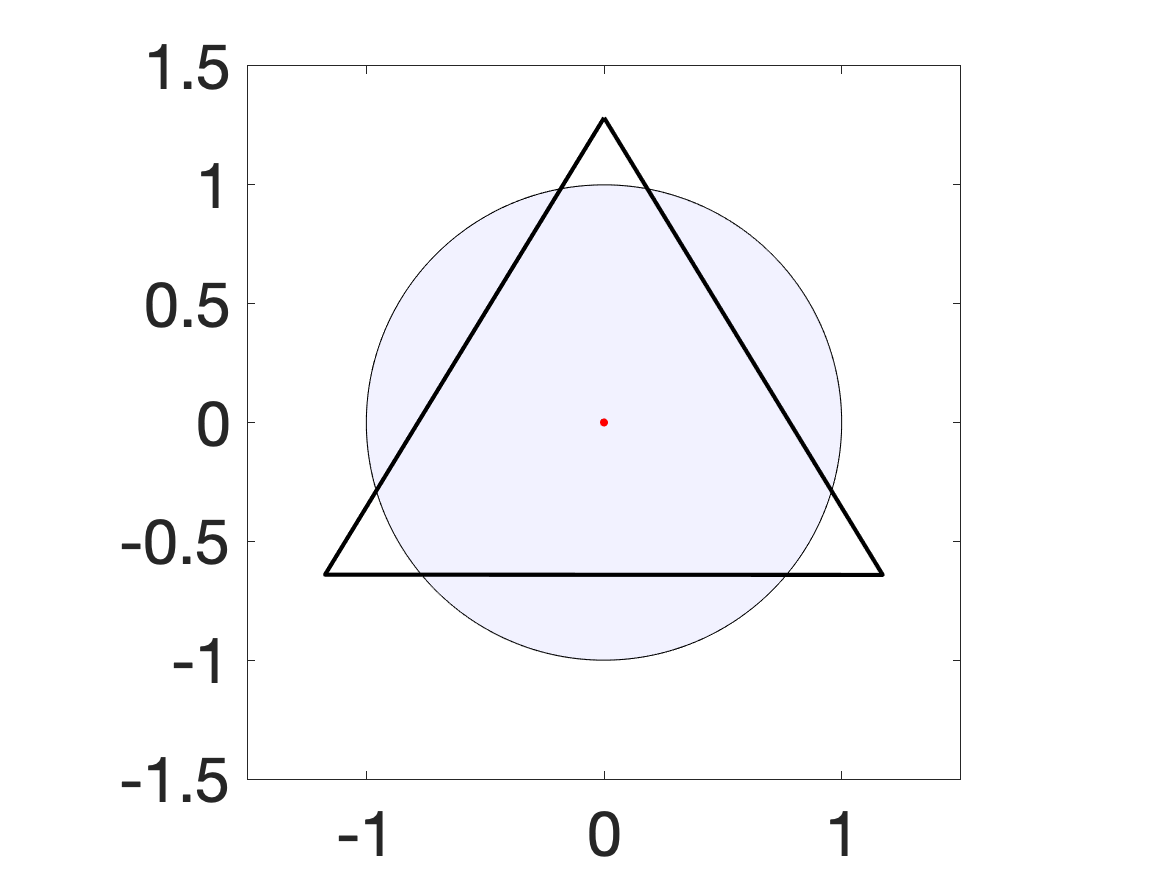

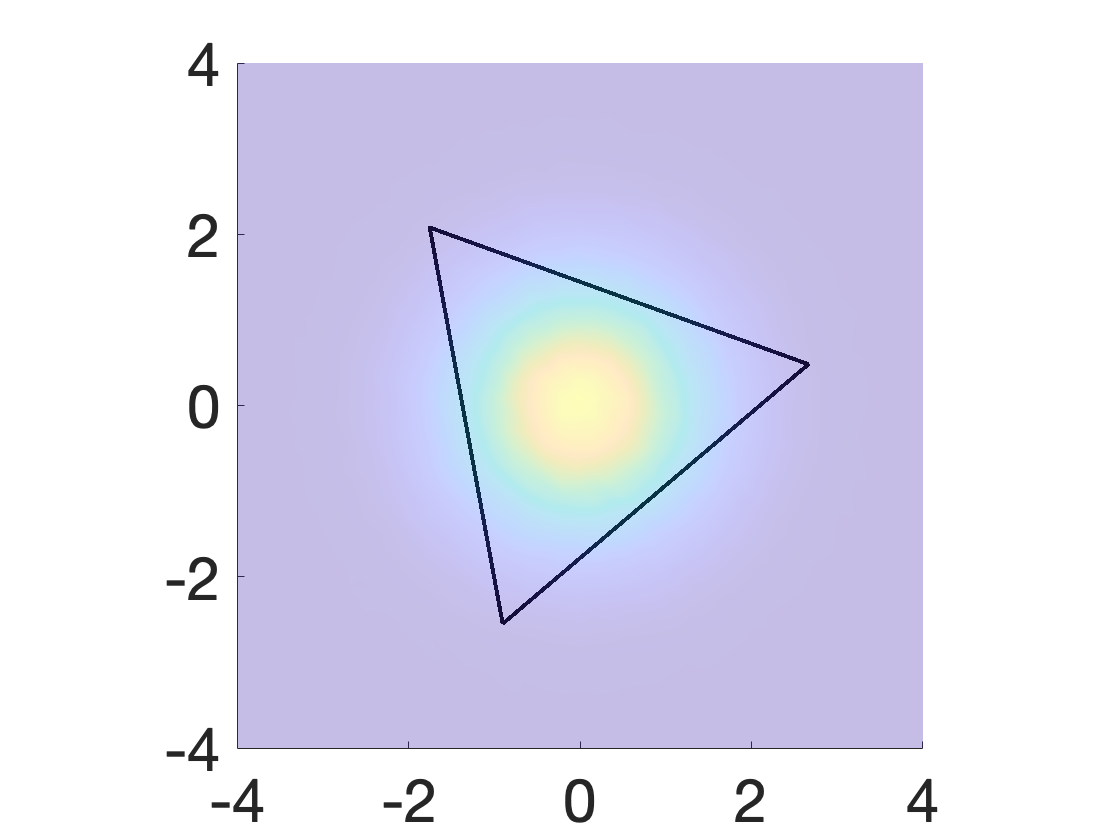

Figure 2: Snapshots of the gradient-based algorithm evolving the triangle solution over iterations for uniform disk data.

- The alternating method ascends in the dual variable (the weights of the Voronoi tessellation) and descends in the polytope vertex positions, using explicit formulae for shape derivatives of integrals over polytopes (Proposition on shape derivatives).
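The dual-ascent half of this alternating scheme can be sketched as below, for a fixed triangle. This is an illustration under stated assumptions, not the paper's implementation: the uniform measure on the triangle is replaced by Monte Carlo samples instead of exact power-diagram integrals, and the function names, step size, and iteration count are hypothetical. The vertex-descent step, which relies on the shape-derivative formulae, is omitted.

```python
import random


def sample_triangle(a, b, c, m, seed=0):
    """Draw m points uniformly from triangle abc (Monte Carlo stand-in for nu)."""
    rng = random.Random(seed)
    pts = []
    for _ in range(m):
        u, v = rng.random(), rng.random()
        if u + v > 1.0:  # reflect the point back into the triangle
            u, v = 1.0 - u, 1.0 - v
        pts.append((a[0] + u * (b[0] - a[0]) + v * (c[0] - a[0]),
                    a[1] + u * (b[1] - a[1]) + v * (c[1] - a[1])))
    return pts


def laguerre_masses(sites, weights, samples):
    """Fraction of samples in each weighted Voronoi (Laguerre) cell.
    A sample y is assigned to the site i minimizing |y - x_i|^2 - w_i."""
    counts = [0] * len(sites)
    for px, py in samples:
        best, best_cost = 0, float("inf")
        for i, (sx, sy) in enumerate(sites):
            cost = (px - sx) ** 2 + (py - sy) ** 2 - weights[i]
            if cost < best_cost:
                best, best_cost = i, cost
        counts[best] += 1
    return [c / len(samples) for c in counts]


def dual_ascent(sites, samples, step=0.1, iters=300):
    """Gradient ascent on the dual weights for equal site masses 1/n.
    The i-th partial derivative of the dual is 1/n - nu(cell_i)."""
    n = len(sites)
    w = [0.0] * n
    for _ in range(iters):
        masses = laguerre_masses(sites, w, samples)
        w = [wi + step * (1.0 / n - mi) for wi, mi in zip(w, masses)]
    return w, laguerre_masses(sites, w, samples)
```

Starting from zero weights (an ordinary Voronoi tessellation), the ascent grows cells that hold too little mass and shrinks those that hold too much, until each data point receives roughly its share 1/n of the triangle.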

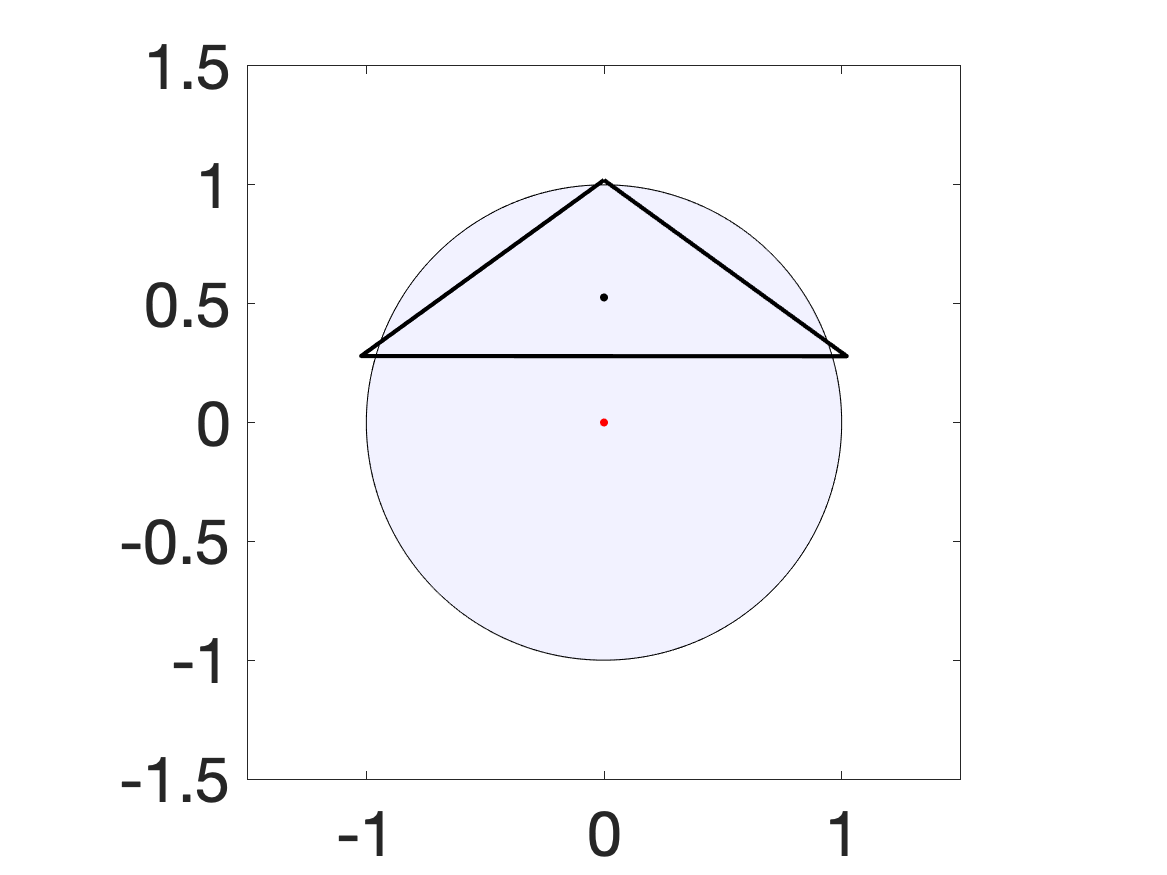

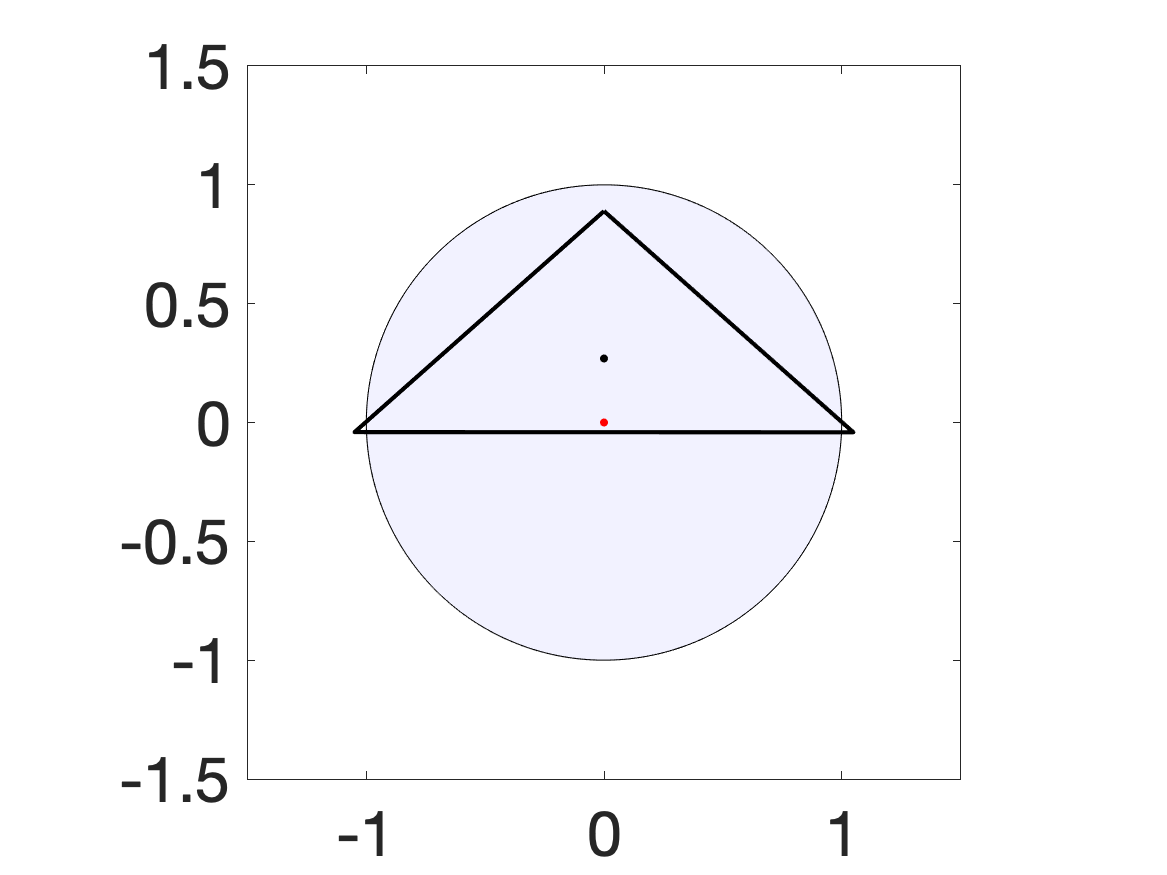

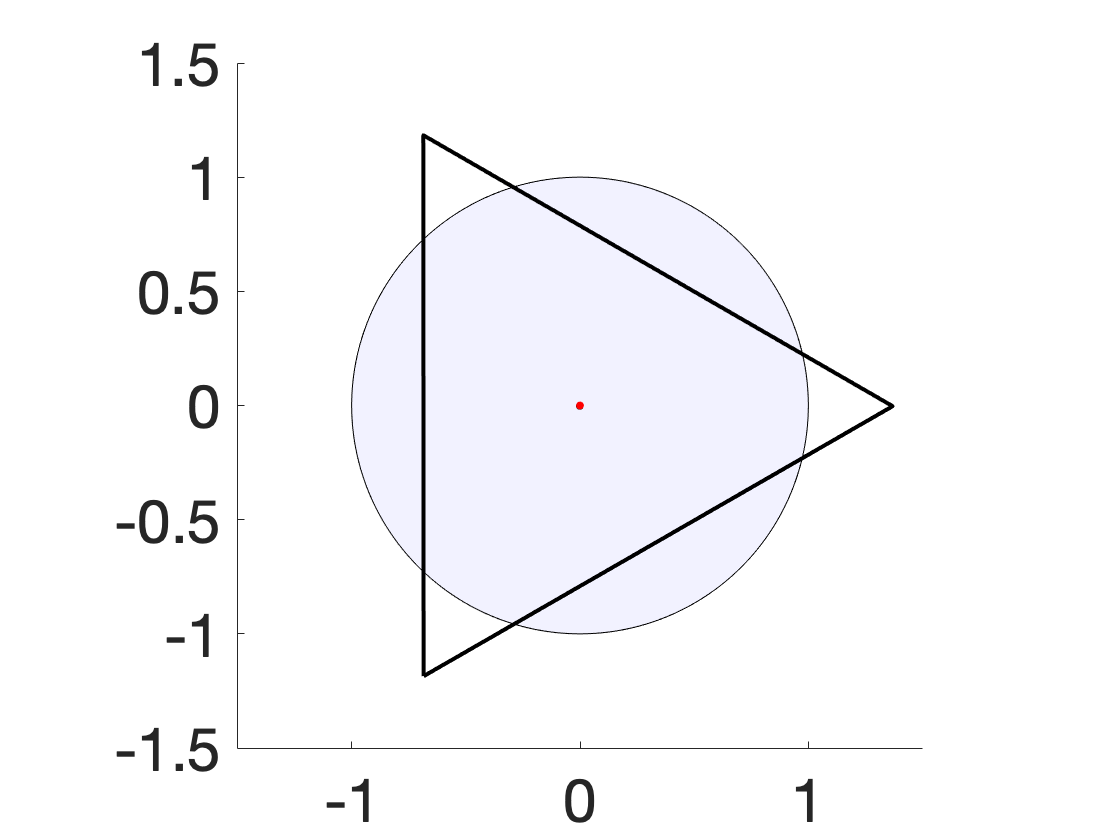

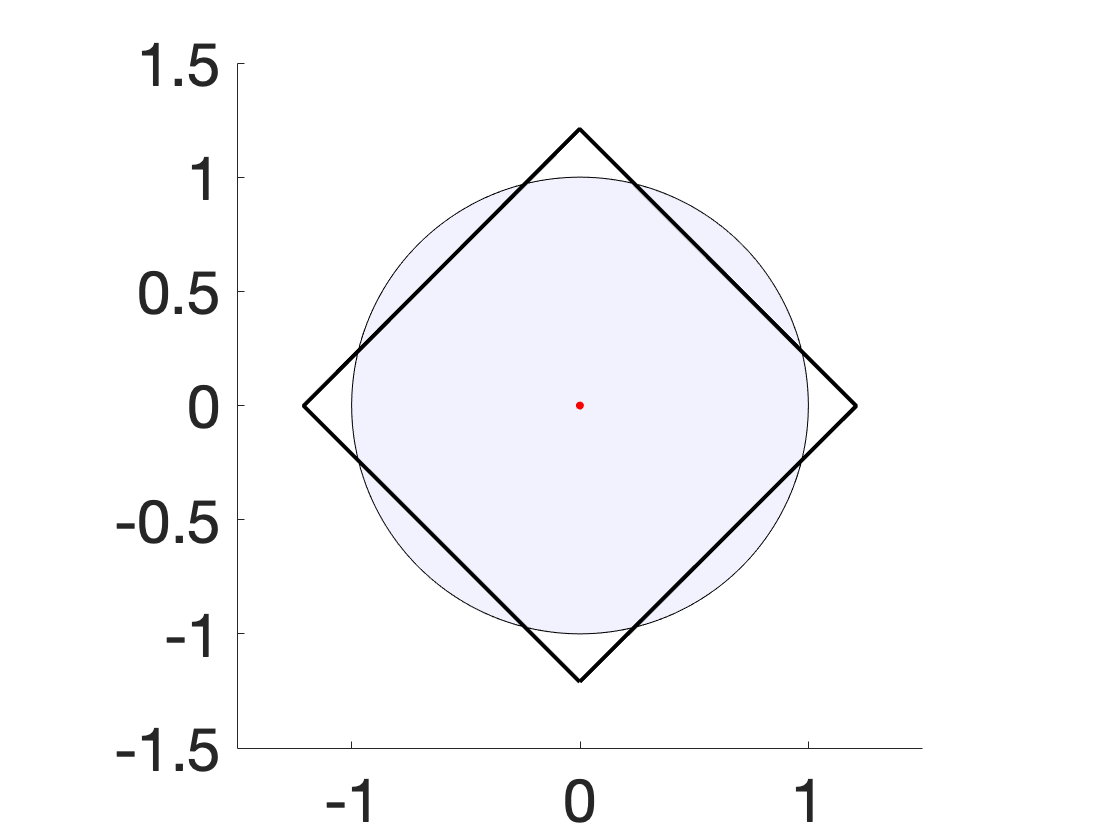

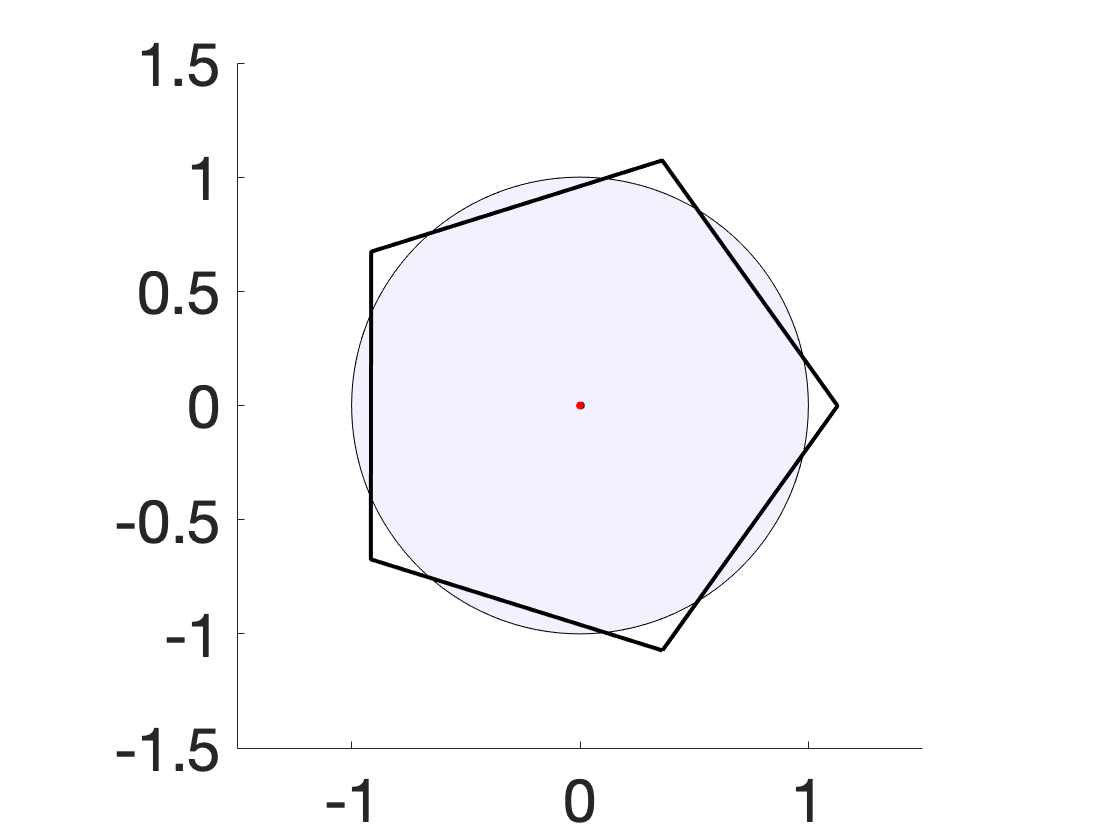

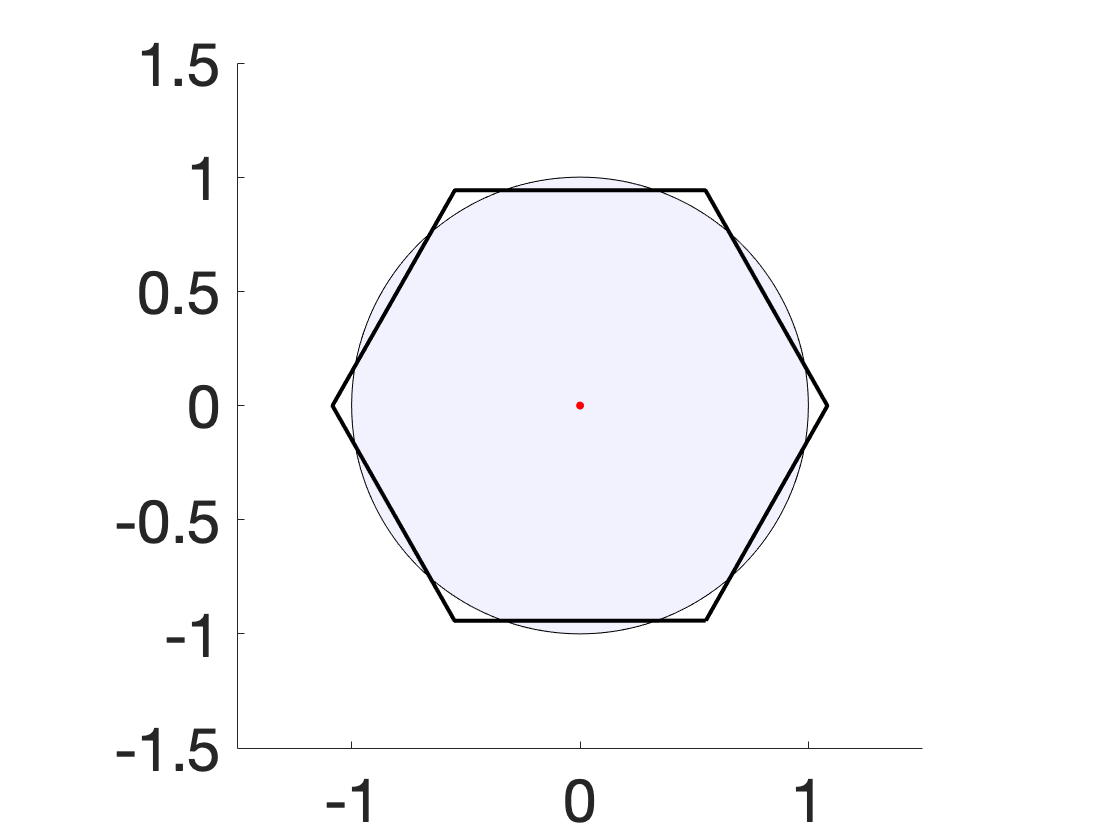

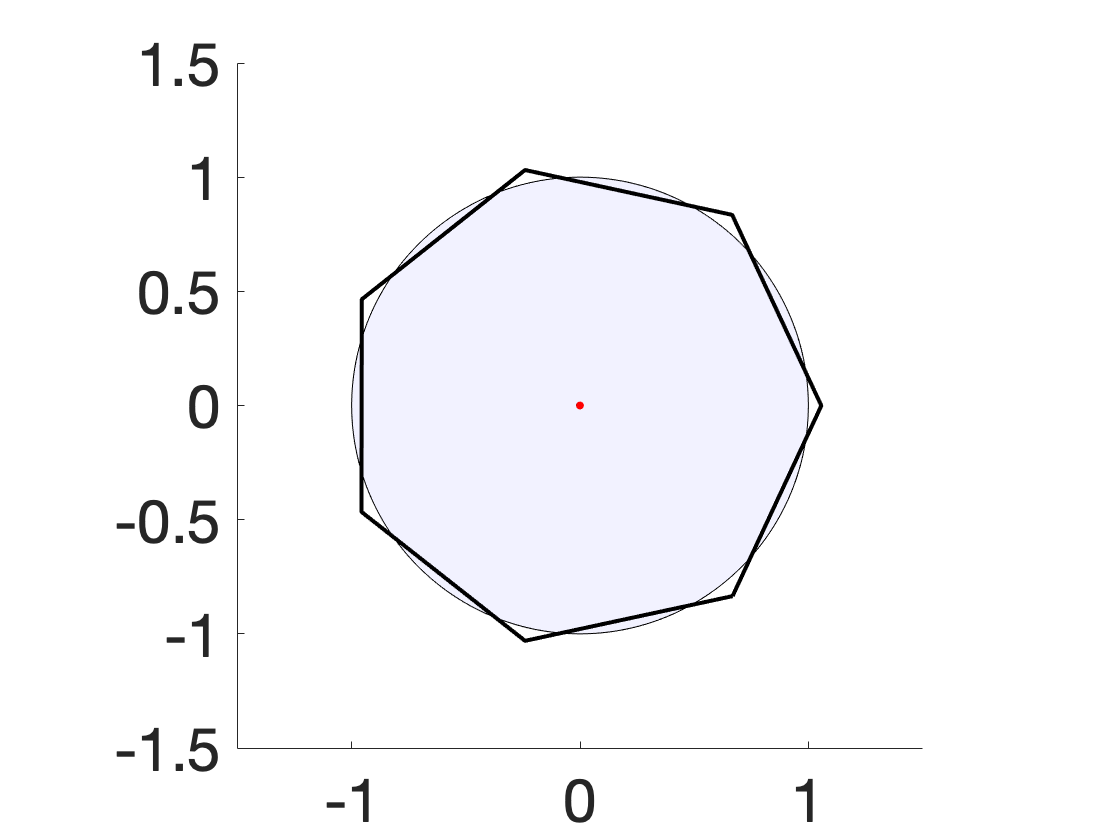

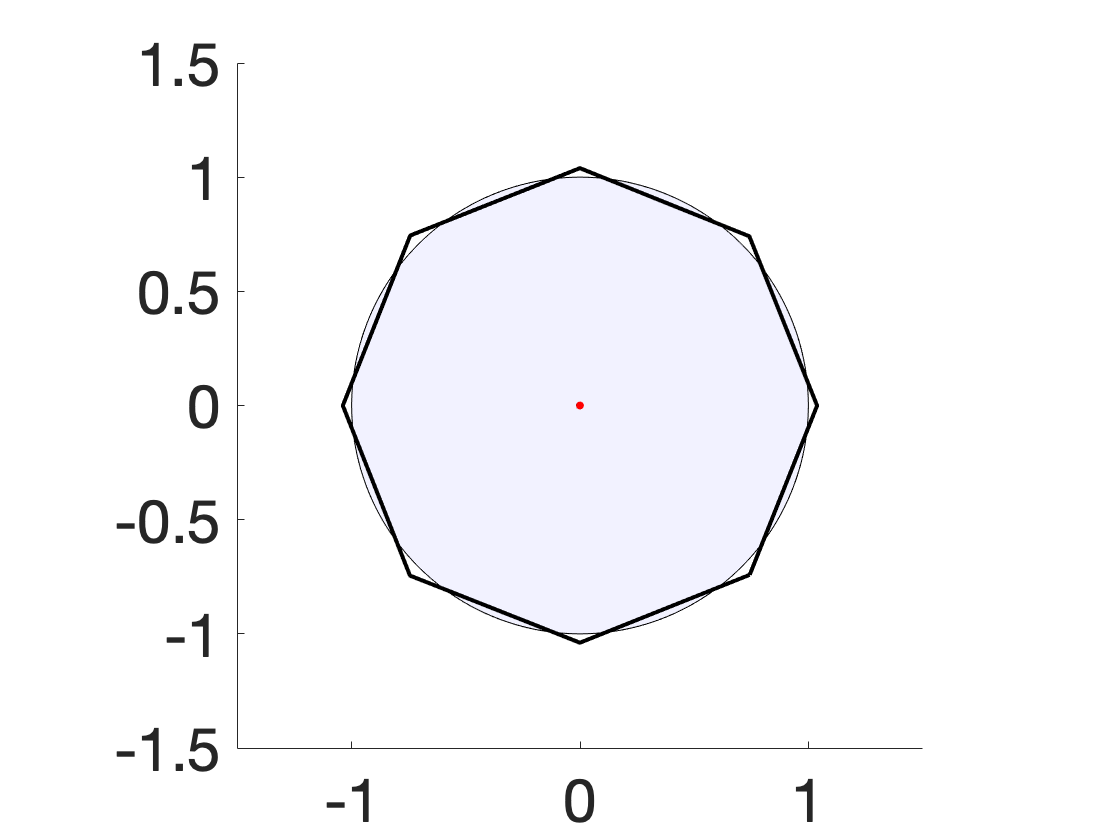

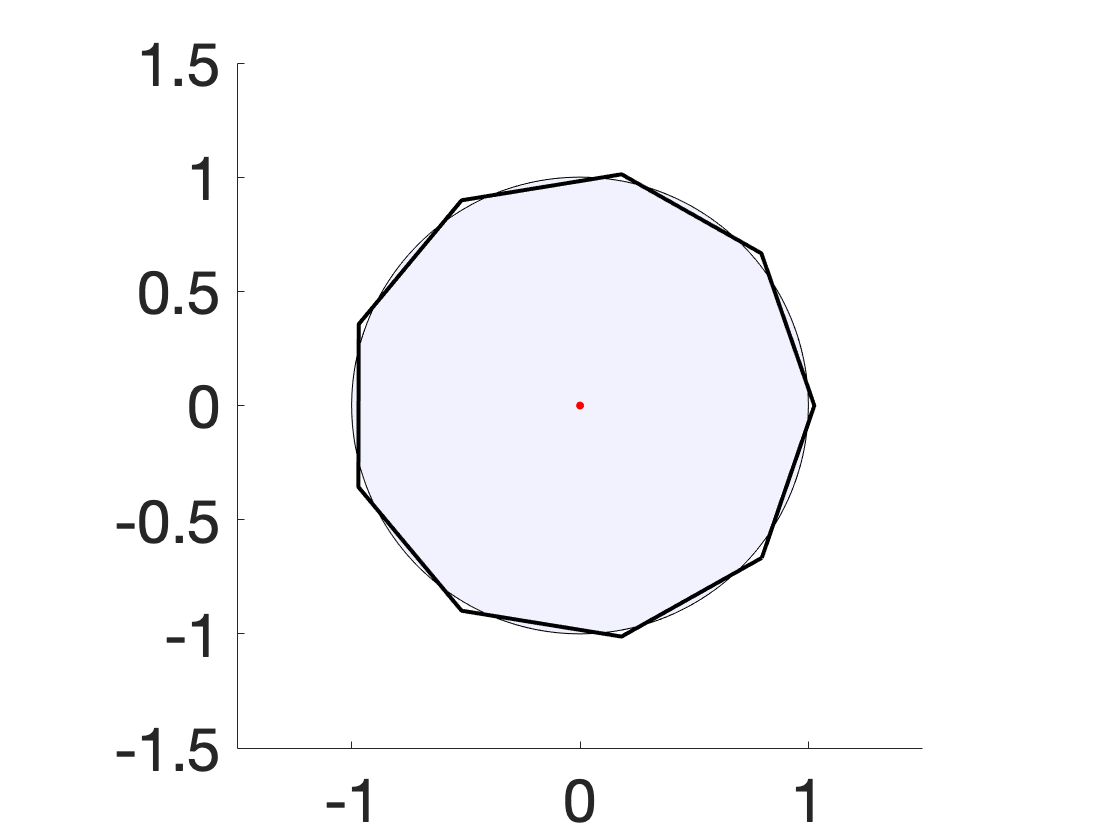

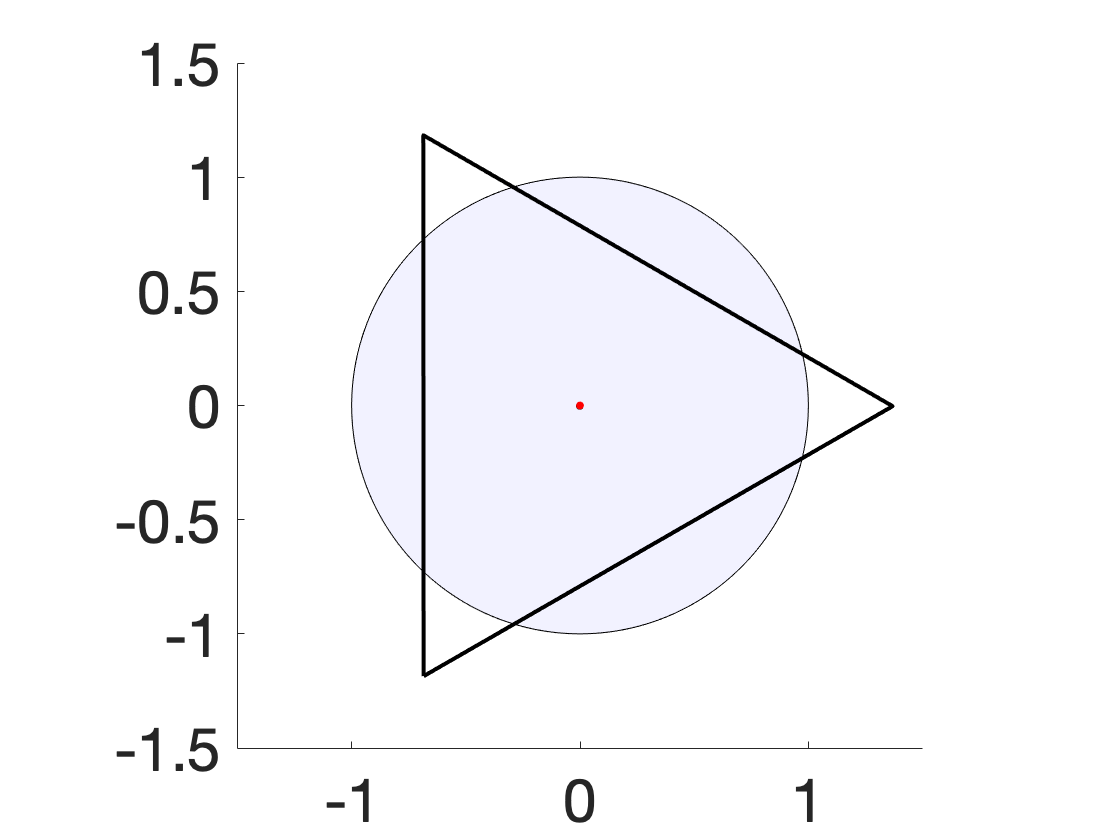

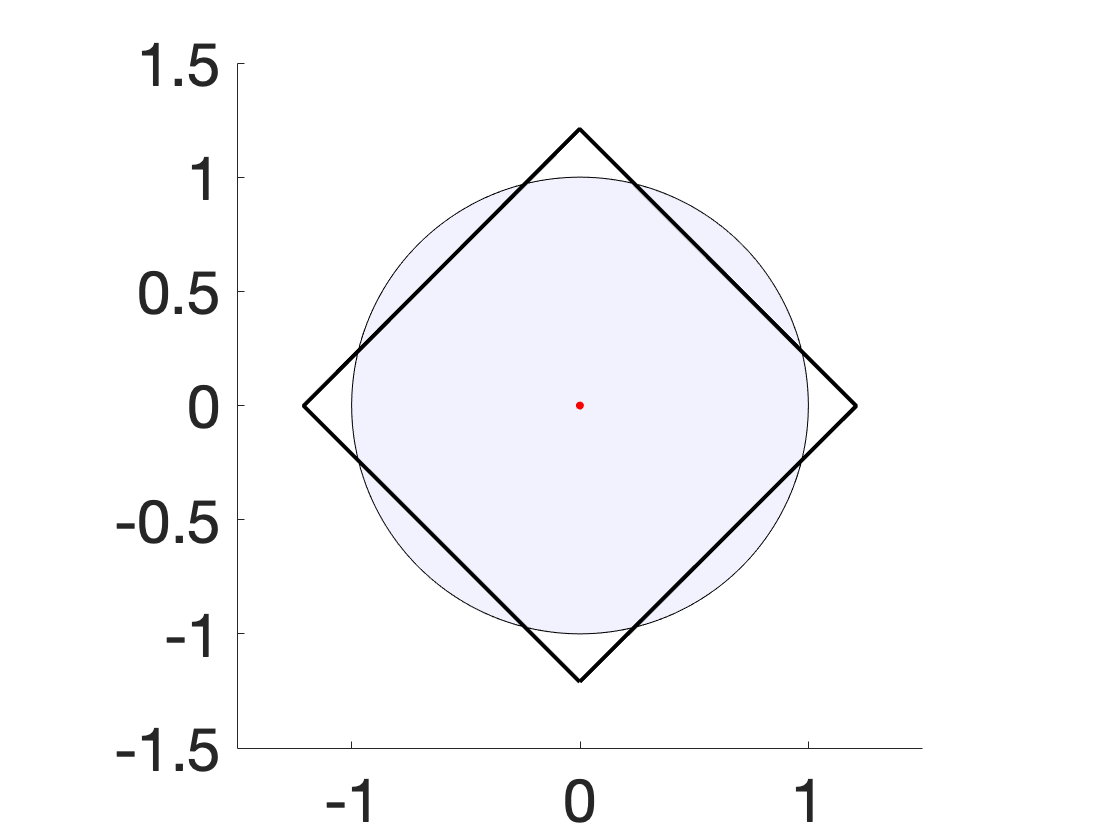

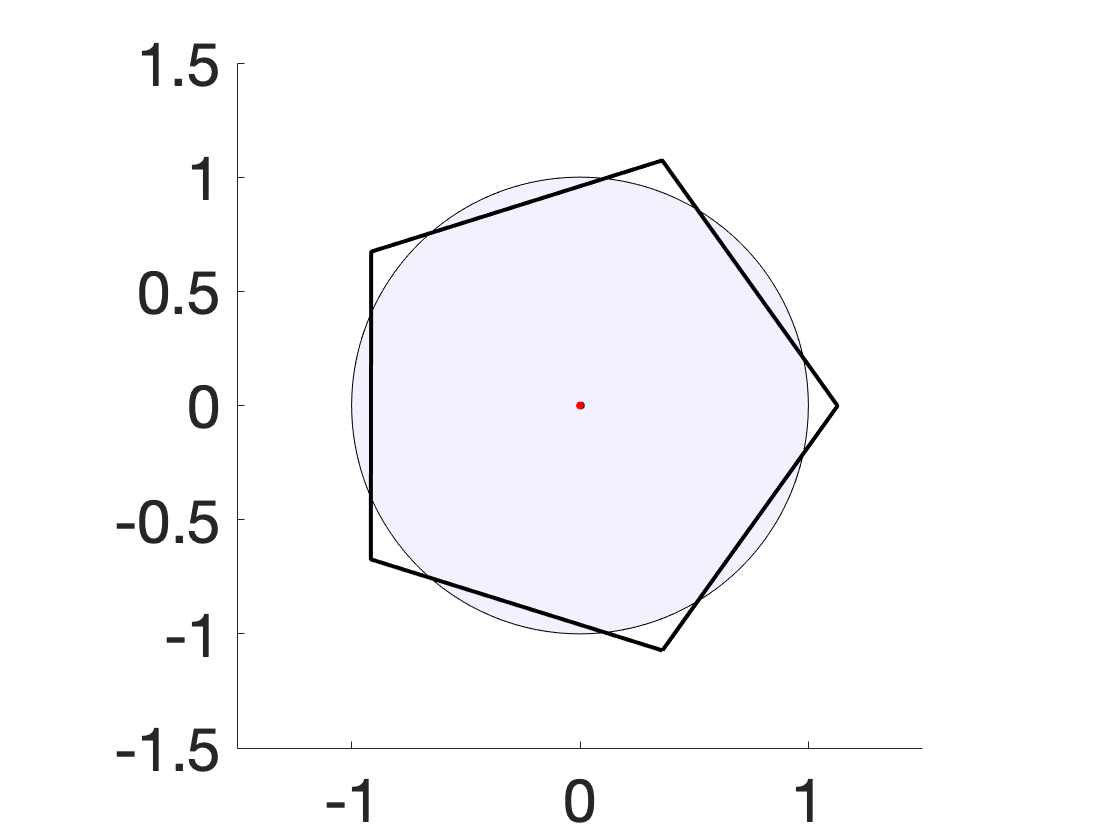

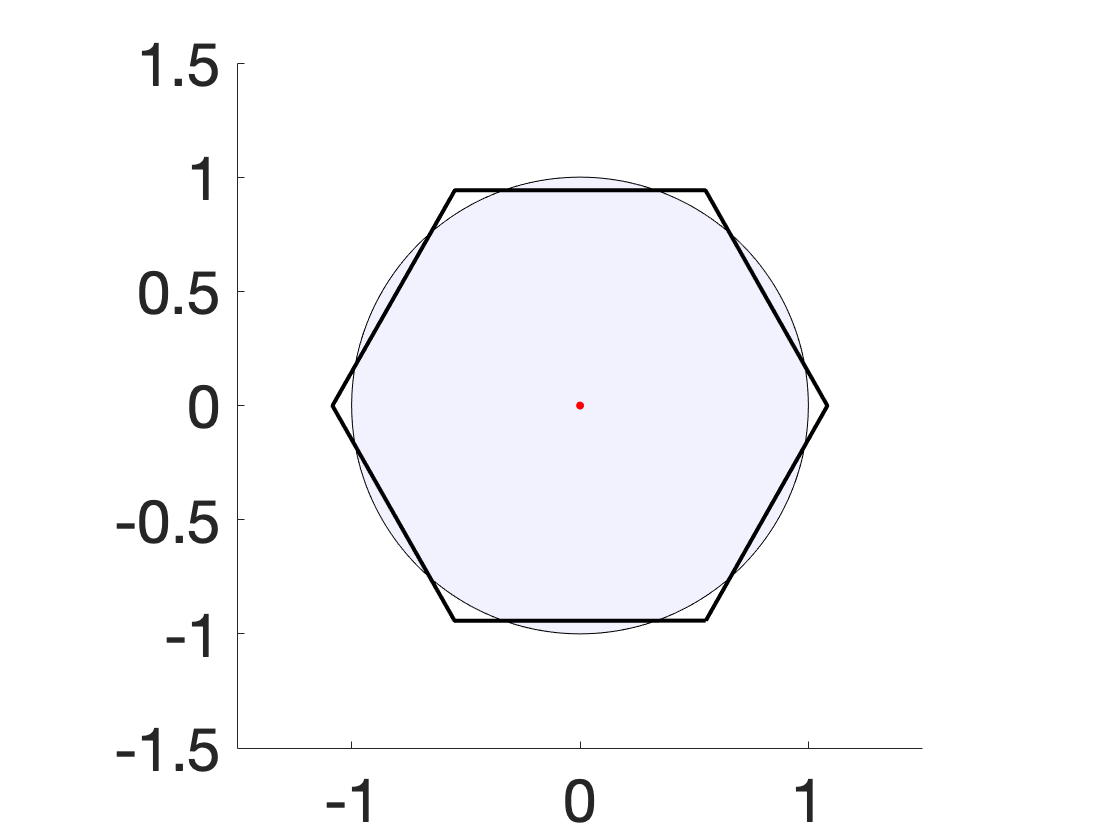

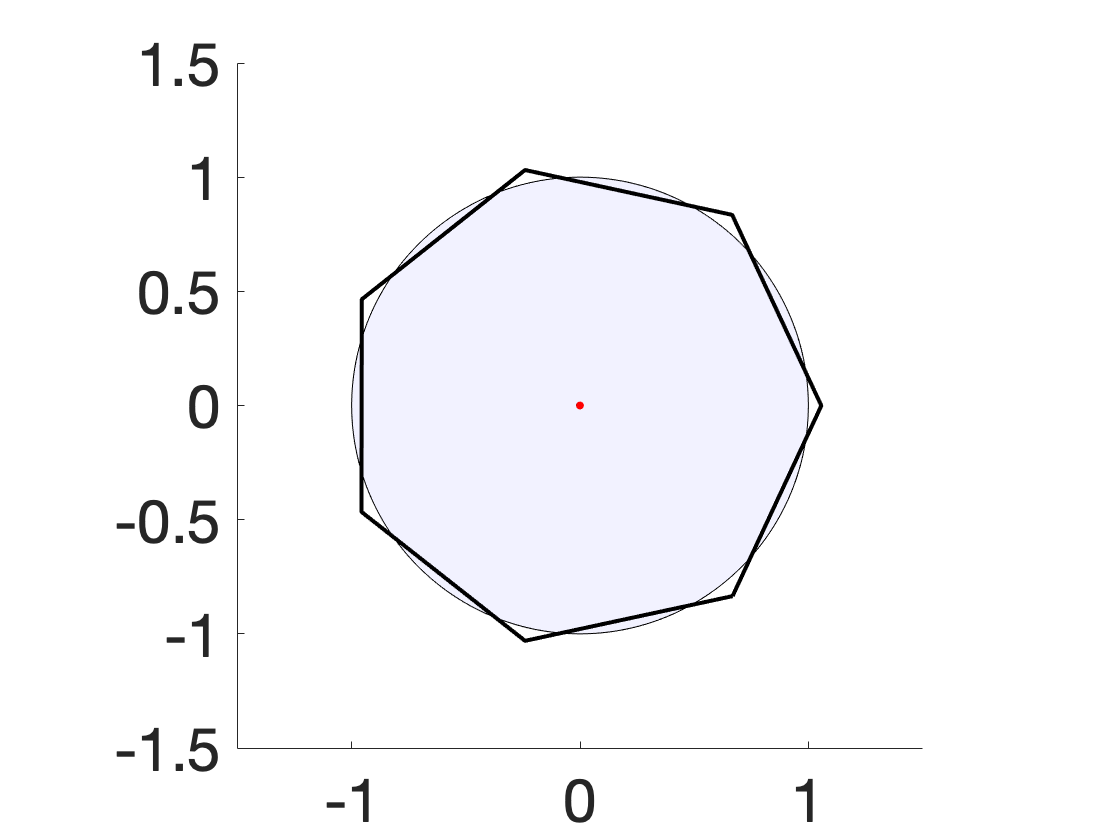

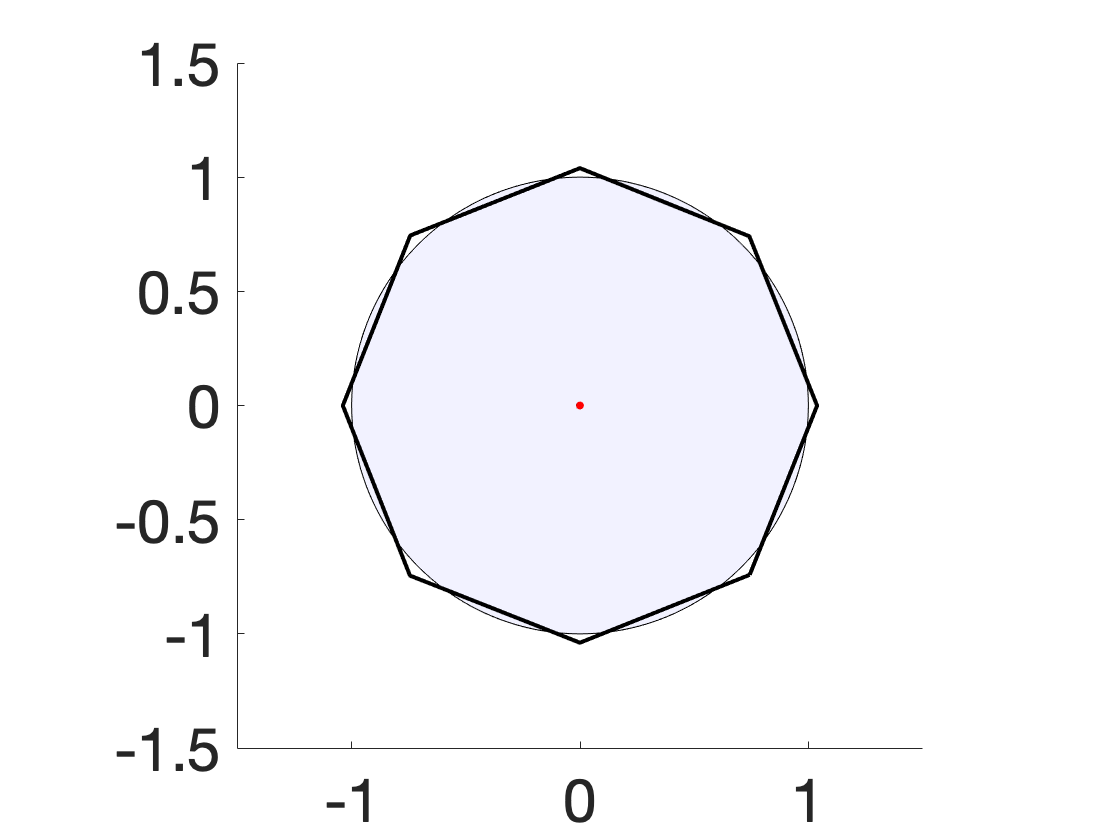

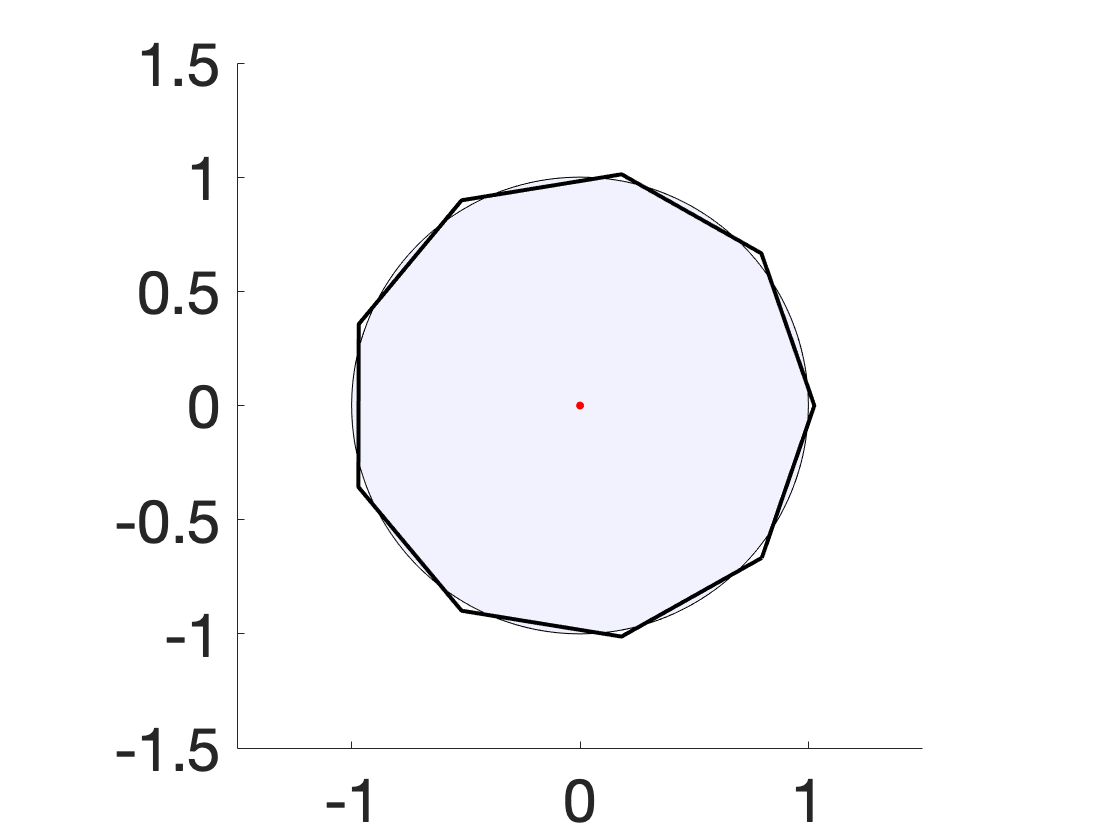

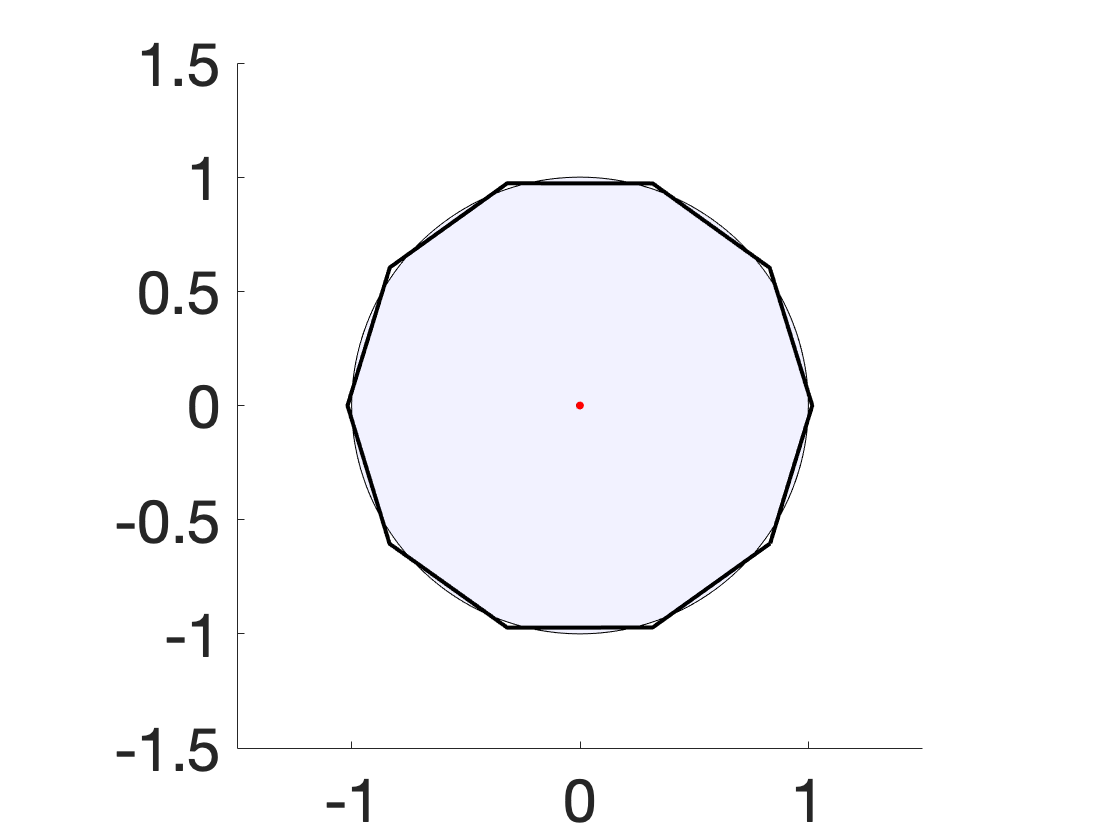

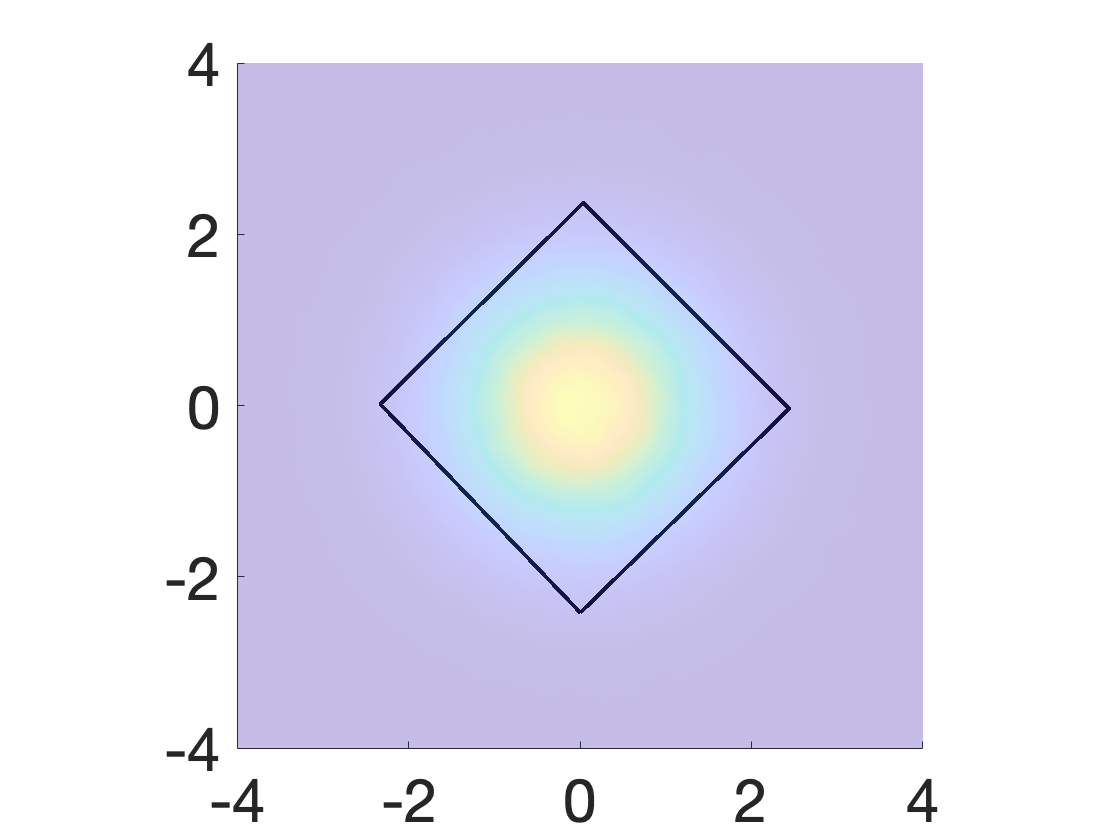

Figure 3: Solutions for varying k show regular polygons emerge as optimal archetypes for uniform disk data.

Numerical experiments demonstrate the robustness and versatility of the approach on both synthetic (uniform disk, Gaussian) and real datasets (COVID-19 positivity rates).

Empirical Analysis and Observations

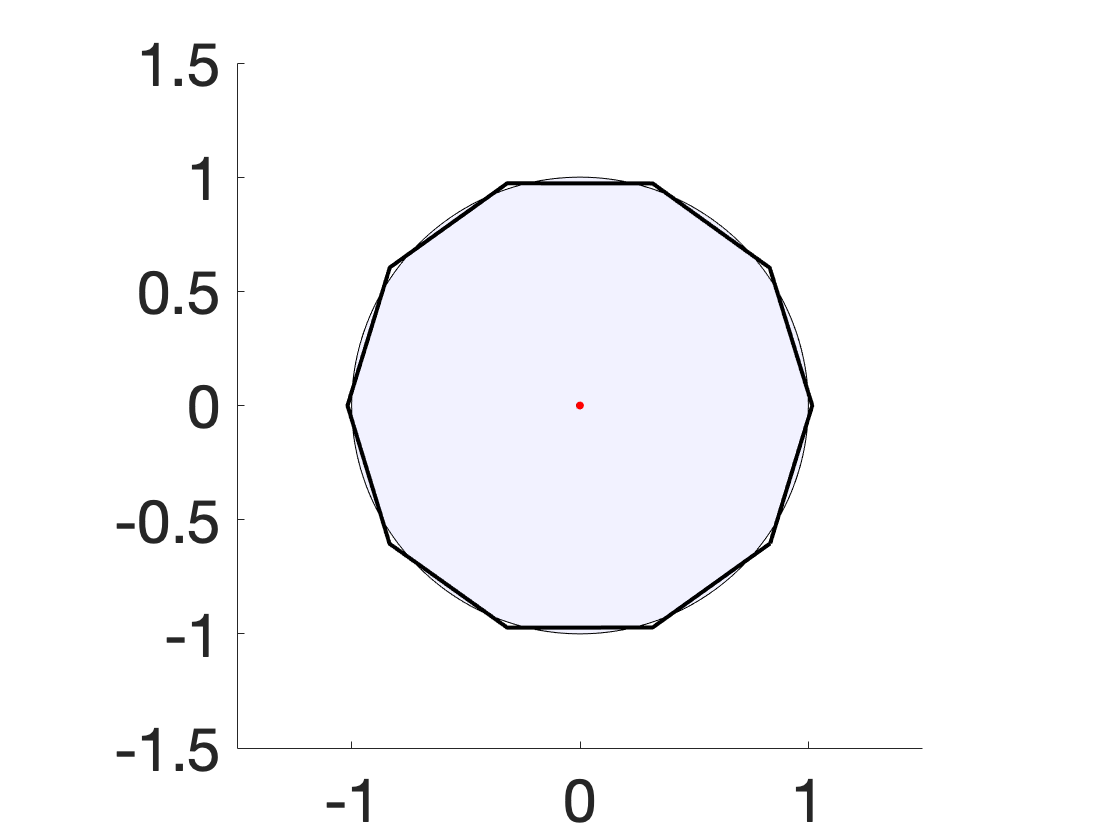

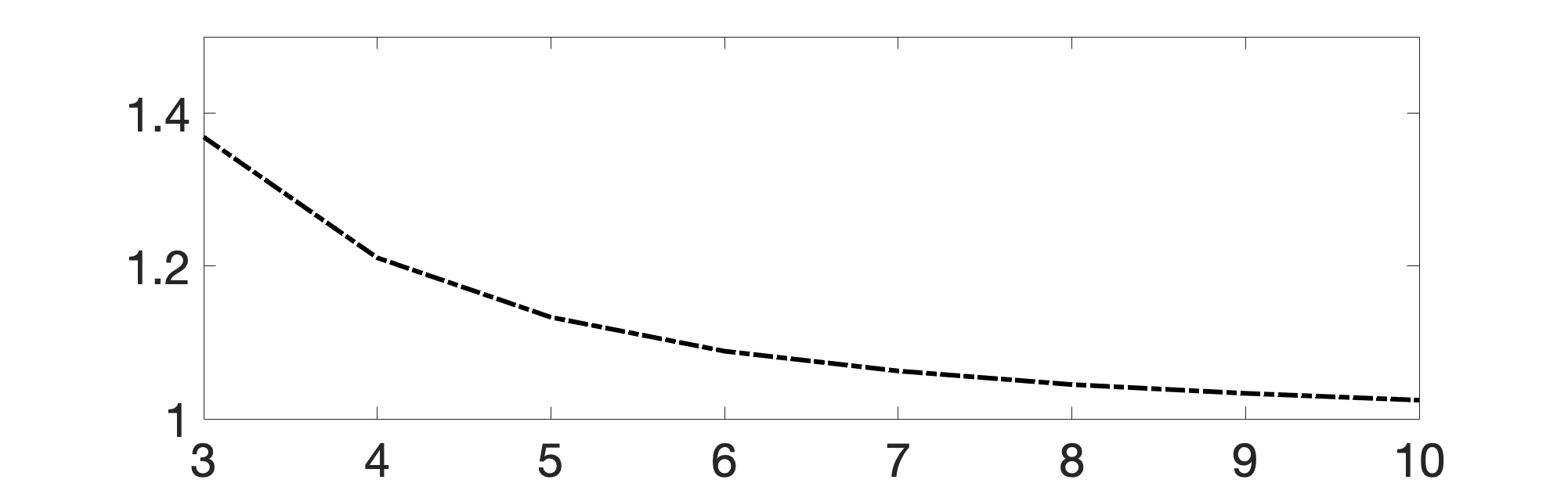

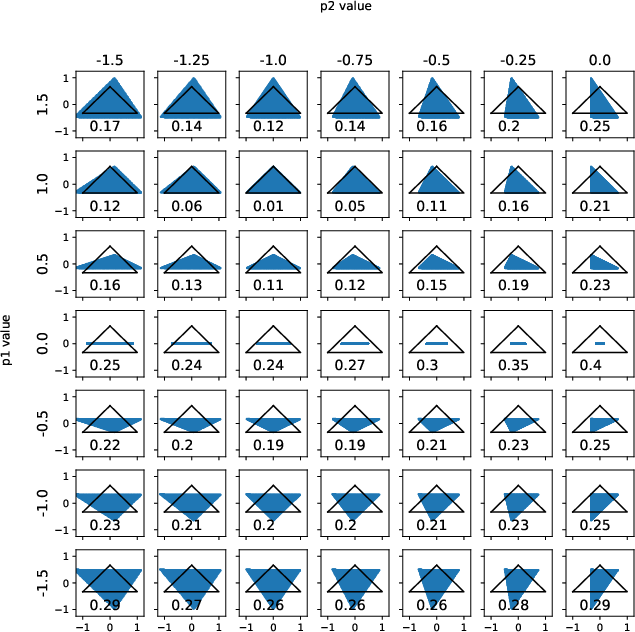

- Regular Polytopes as Archetypes: For isotropic distributions (uniform disk, Gaussian), the optimal WAA polytopes found numerically are regular (equilateral triangles, squares), regardless of initialization. This aligns with theoretical expectations for symmetry-constrained minimization.

Figure 4: Archetypal polygons for Gaussian data, showing interior solutions and dimensional consistency for k=3,4.

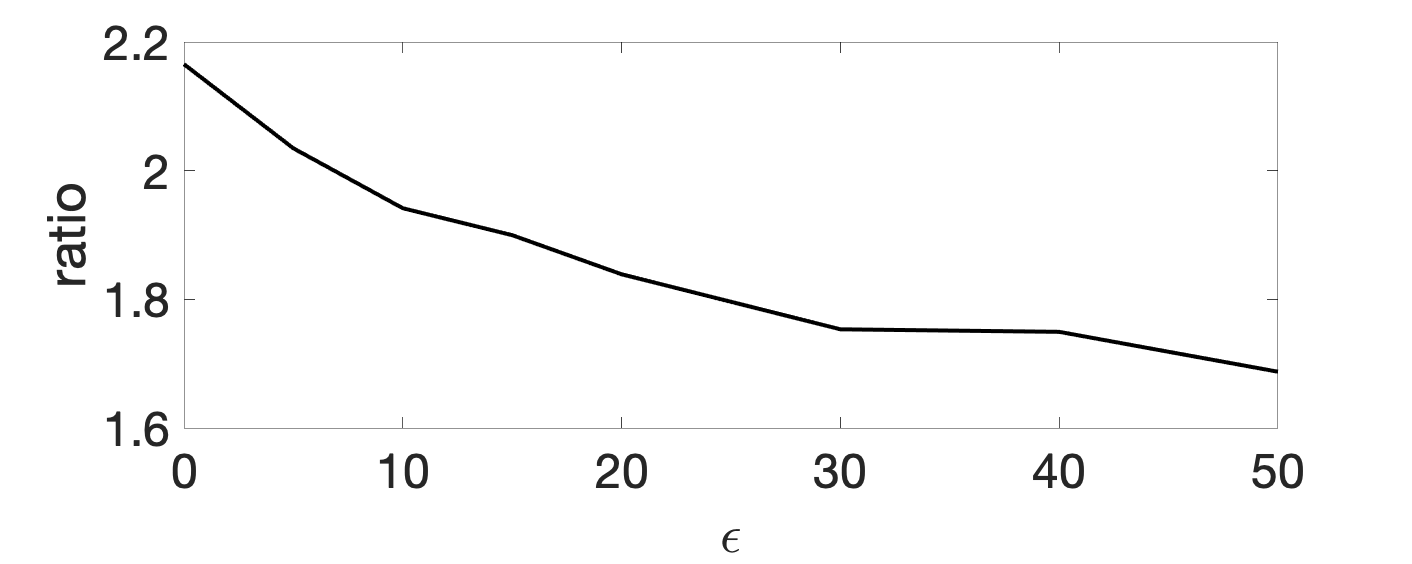

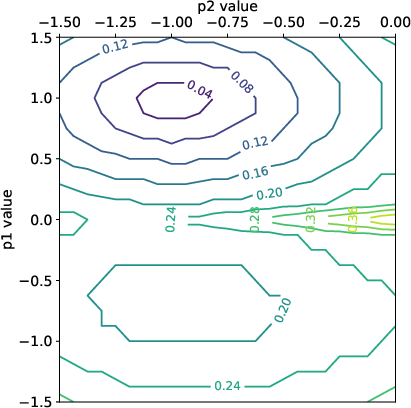

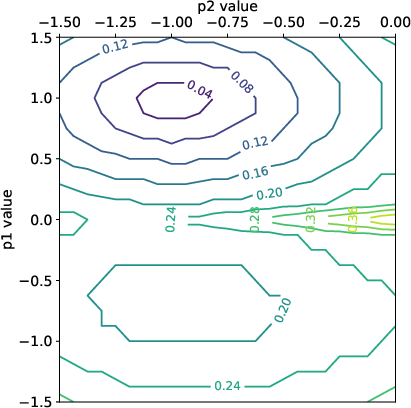

Figure 6: Energy landscape for triangle-to-triangle Wasserstein minimization exhibits local minima, indicating non-convexity.

- Application to COVID-19 Data: WAA archetypes provide interpretable summarization of US states' pandemic trajectories in principal component space, outperforming k-means in robustness to outliers and yielding easily interpretable exemplars.

Implications and Future Directions

Practically, WAA provides a robust alternative to classical AA for summarizing datasets with heavy tails or outliers. Theoretically, the work exposes important open questions including:

- Extending existence proofs for ε=0 to higher dimensions and singular measures

- Establishing conditions for uniqueness up to invariance groups

- Generalizing to p-Wasserstein metrics (p≠2) and other divergences

- Developing efficient algorithms for d>2, possibly through entropic regularization or back-and-forth schemes

The analysis and methodology support WAA as a powerful, flexible tool for unsupervised summarization of multivariate distributions, particularly in settings where classical AA fails.

Conclusion

The paper rigorously formulates and analyzes Wasserstein Archetypal Analysis, providing theoretical existence and statistical consistency results, introducing a Rényi entropy regularization for generality, and developing a gradient-based semi-discrete algorithm. Empirical studies support the theoretical claims and illustrate WAA's advantages in robustness and interpretability. The implications span both methodological advances in unsupervised learning with optimal transport and practical improvements for archetype-based data summarization, paving the way for further algorithmic and theoretical development in high-dimensional and non-Euclidean settings.