- The paper presents AdaTest++, an extension of the auditing tool AdaTest that supports human-AI collaboration in auditing LLMs for biases and errors.

- The tool employs natural language prompting, dynamic tree visualization, and expert-derived prompt templates to improve audit effectiveness.

- User studies demonstrated that AdaTest++ enables diverse failure detection strategies, supporting robust sensemaking and deeper audit processes.

Supporting Human-AI Collaboration in Auditing LLMs with LLMs

This paper presents enhancements to an existing AI-driven auditing tool, AdaTest, by integrating human-AI collaborative processes to improve the identification and management of biases and errors in LLMs. The enhanced tool, AdaTest++, facilitates the auditing process for AI practitioners by leveraging the strengths of both human insights and AI-driven test generation.

Motivation and Background

The deployment of LLMs in applications such as chatbots and content moderation systems has exposed vulnerabilities in these models, including biases and irresponsible behaviors. Traditional auditing methods, led by human red teams, often lack scalability and coverage. Recent approaches integrate LLMs into the auditing process, but they are heavily system-driven and leave the human auditor little room to direct the exploration of potential failures.

AdaTest++ aims to address these challenges by supporting sensemaking and human-AI communication during audits. This involves enabling users to prompt LLMs with specific requests, organizing discovered test cases for better insight, and utilizing reusable prompt templates that encapsulate expert strategies.

Key Enhancements in AdaTest++

Natural Language Prompting

AdaTest++ introduces a free-form input box in which users request test cases from the LLM in natural language. The notion of similarity behind LLM-driven test suggestions is opaque to the auditor, so this feature gives auditors an explicit way to steer generation toward specific areas of interest.

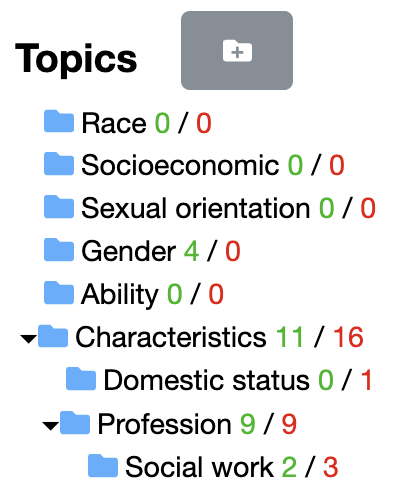
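As a rough illustration of how a free-form request could be turned into a generation prompt, the sketch below combines the auditor's request with existing tests from the current topic. It is not AdaTest++'s implementation: the function names `build_test_request` and `suggest_tests`, the prompt wording, and the stand-in generator are all assumptions made for this example.

```python
from typing import Callable, List


def build_test_request(topic: str, request: str, examples: List[str], n: int = 5) -> str:
    """Combine the auditor's free-form request with existing tests (hypothetical format)."""
    lines = [
        f"Topic under audit: {topic}",
        f"Auditor request: {request}",
        "Existing tests in this topic:",
    ]
    lines += [f"- {ex}" for ex in examples]
    lines.append(f"Write {n} new test inputs, one per line.")
    return "\n".join(lines)


def suggest_tests(prompt: str, generate: Callable[[str], str]) -> List[str]:
    """Send the prompt to any text generator and split its reply into test inputs."""
    reply = generate(prompt)
    # Drop blank lines and leading bullet markers from the reply.
    return [line.lstrip("- ").strip() for line in reply.splitlines() if line.strip()]


def fake_generate(prompt: str) -> str:
    """Stand-in for a real LLM call, so the sketch runs offline."""
    return "- Oh great, another delay.\n- Wonderful, it broke again."


prompt = build_test_request(
    topic="sentiment analysis",
    request="Write sentences that use sarcasm",
    examples=["I love waiting in line."],
    n=2,
)
print(suggest_tests(prompt, fake_generate))
```

Passing the generator in as a callable keeps the sketch independent of any particular LLM client.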

Test Organization and Visualization

A dynamic tree visualization gives users an overview of the auditing landscape: it displays the test cases organized by topic and highlights which areas are passing or failing. This helps the user keep track of a large and growing set of tests.

Figure 1: An illustration of the implemented tree visualization.

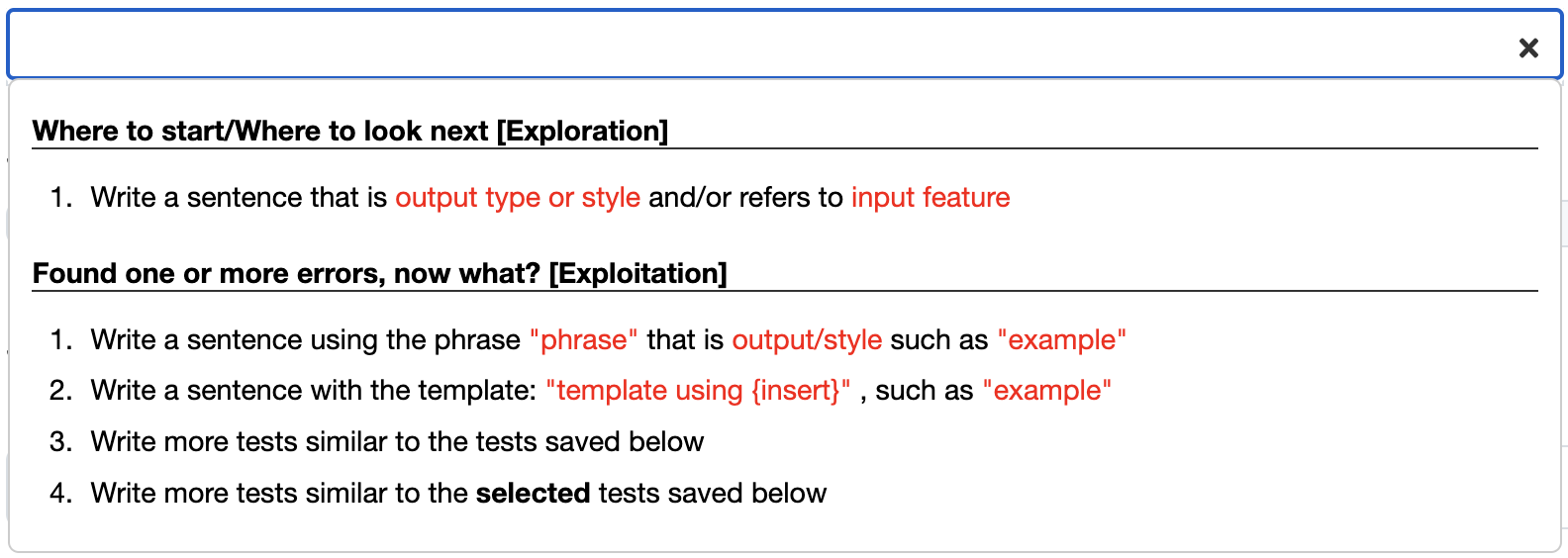

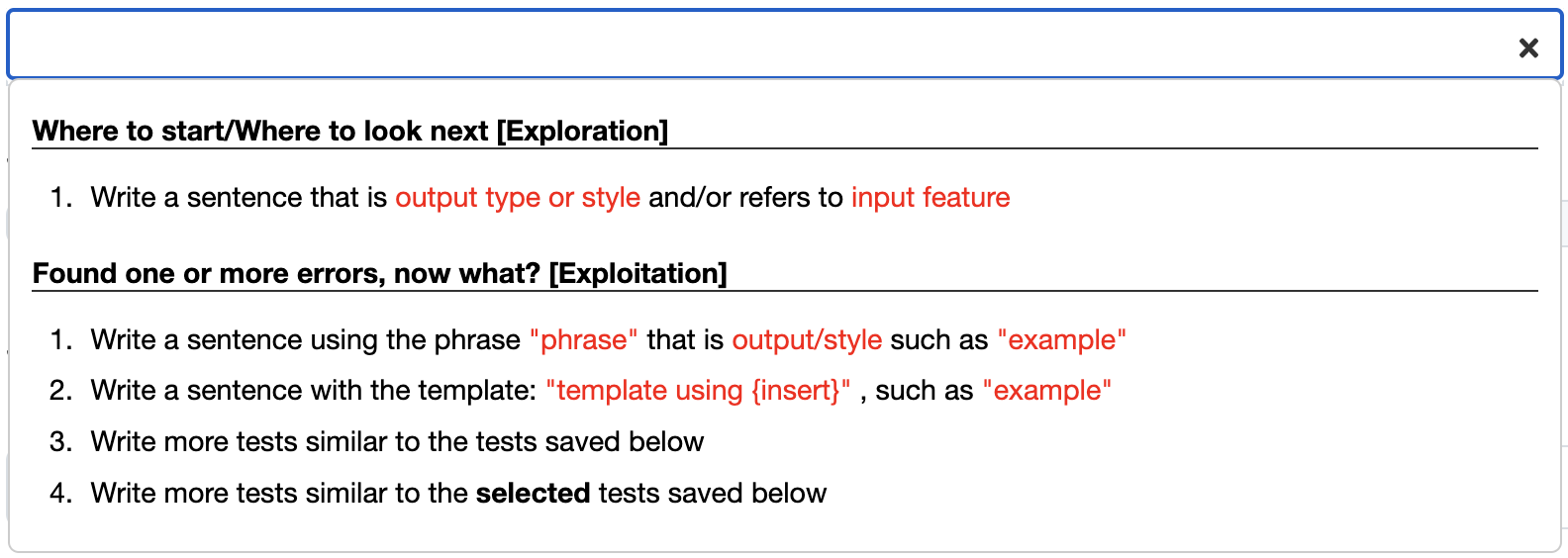
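The data structure behind such a view can be sketched as a topic tree whose nodes aggregate pass and fail counts from their subtopics. This is a minimal sketch under assumptions, not the tool's actual code: the `TopicNode` class, its methods, the text rendering, and the sample tests and their pass/fail labels are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class TopicNode:
    """One topic in a hypothetical test tree; tests are (input text, passed?) pairs."""
    name: str
    tests: List[Tuple[str, bool]] = field(default_factory=list)
    children: Dict[str, "TopicNode"] = field(default_factory=dict)

    def add_test(self, path: List[str], text: str, passed: bool) -> None:
        """File a test under the subtopic at `path`, creating nodes as needed."""
        node = self
        for part in path:
            if part not in node.children:
                node.children[part] = TopicNode(part)
            node = node.children[part]
        node.tests.append((text, passed))

    def counts(self) -> Tuple[int, int]:
        """Return (passed, failed) totals for this topic and all of its subtopics."""
        passed = sum(1 for _, ok in self.tests if ok)
        failed = len(self.tests) - passed
        for child in self.children.values():
            p, f = child.counts()
            passed += p
            failed += f
        return passed, failed

    def render(self, depth: int = 0) -> List[str]:
        """Render the tree as indented lines, one per topic, with its totals."""
        p, f = self.counts()
        lines = [f"{'  ' * depth}{self.name} (pass={p}, fail={f})"]
        for child in self.children.values():
            lines.extend(child.render(depth + 1))
        return lines


# Illustrative data only: the tests and their pass/fail labels are made up.
root = TopicNode("sentiment")
root.add_test(["negation"], "I don't dislike it.", False)
root.add_test(["negation", "double negation"], "It's not that I never liked it.", True)
root.add_test(["sarcasm"], "Oh great, another delay.", False)
print("\n".join(root.render()))
```

Because totals roll up from subtopics, a parent topic with many failures stands out even when the failing tests sit several levels below it.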

Prompt Templates

Based on insights gathered from expert interviews, AdaTest++ incorporates prompt templates that distill effective auditing strategies into reusable formats. These templates guide less experienced auditors in generating relevant test cases by specifying input features and desired stylistic outputs.
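One way to picture a reusable prompt template is as a string with named slots for an input feature and an output style. The sketch below uses Python's standard `string.Template`. The template wordings, the `TEMPLATES` dictionary, and the `fill_template` helper are illustrative assumptions, not the templates shipped with AdaTest++.

```python
from string import Template

# Hypothetical templates; the wording is invented for illustration.
TEMPLATES = {
    "feature": Template("Write a test that contains $feature."),
    "style": Template("Write a test in the style of $style."),
    "feature_and_style": Template(
        "Write a test that contains $feature, written in the style of $style."
    ),
}


def fill_template(name: str, **slots: str) -> str:
    """Fill the named template; raises KeyError if the name or a slot is missing."""
    return TEMPLATES[name].substitute(**slots)


print(fill_template("feature_and_style", feature="a double negation", style="a product review"))
```

Using `substitute` rather than `safe_substitute` means a forgotten slot fails loudly instead of sending a half-filled prompt to the model.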

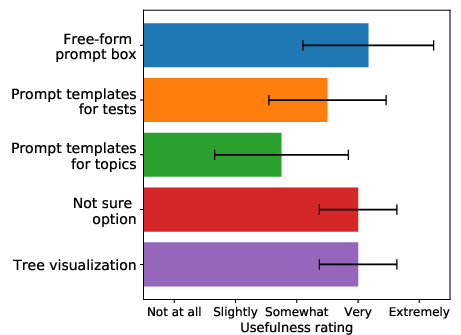

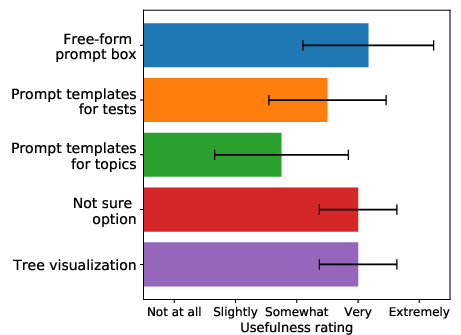

Figure 2: Usefulness of the design components introduced in AdaTest++ as rated by user study participants.

User Study and Findings

A user study was conducted with AI practitioners to assess the efficacy of AdaTest++. The study indicated that AdaTest++ successfully supports auditors through various sensemaking stages such as schema development and hypothesis testing. Participants were able to identify a wide array of failures, some previously unreported, by capitalizing on the combined strengths of human oversight and AI-driven generation processes.

Quantitatively, participants achieved failure detection rates comparable to those of established tools. Qualitatively, they used a diverse range of strategies informed by domain expertise and personal experience. The tool's extensions were notably effective in helping auditors explore failures in both depth and breadth.

The study highlights the strengths of AdaTest++ in facilitating effective and efficient auditing processes. Future work should focus on enhancing user support in formulating prompts and managing cognitive biases. Additionally, research should explore broader application domains and long-term integration strategies in organizational auditing practices.

Conclusion

AdaTest++ represents a step towards more collaborative and flexible auditing processes for LLMs, enhancing human-AI interaction and supporting a deeper understanding of model behavior. As LLMs proliferate in sensitive applications, such auditing tools are crucial for detecting bias preemptively and ensuring responsible AI deployment.