Better Generalization with Semantic IDs: A Case Study in Ranking for Recommendations

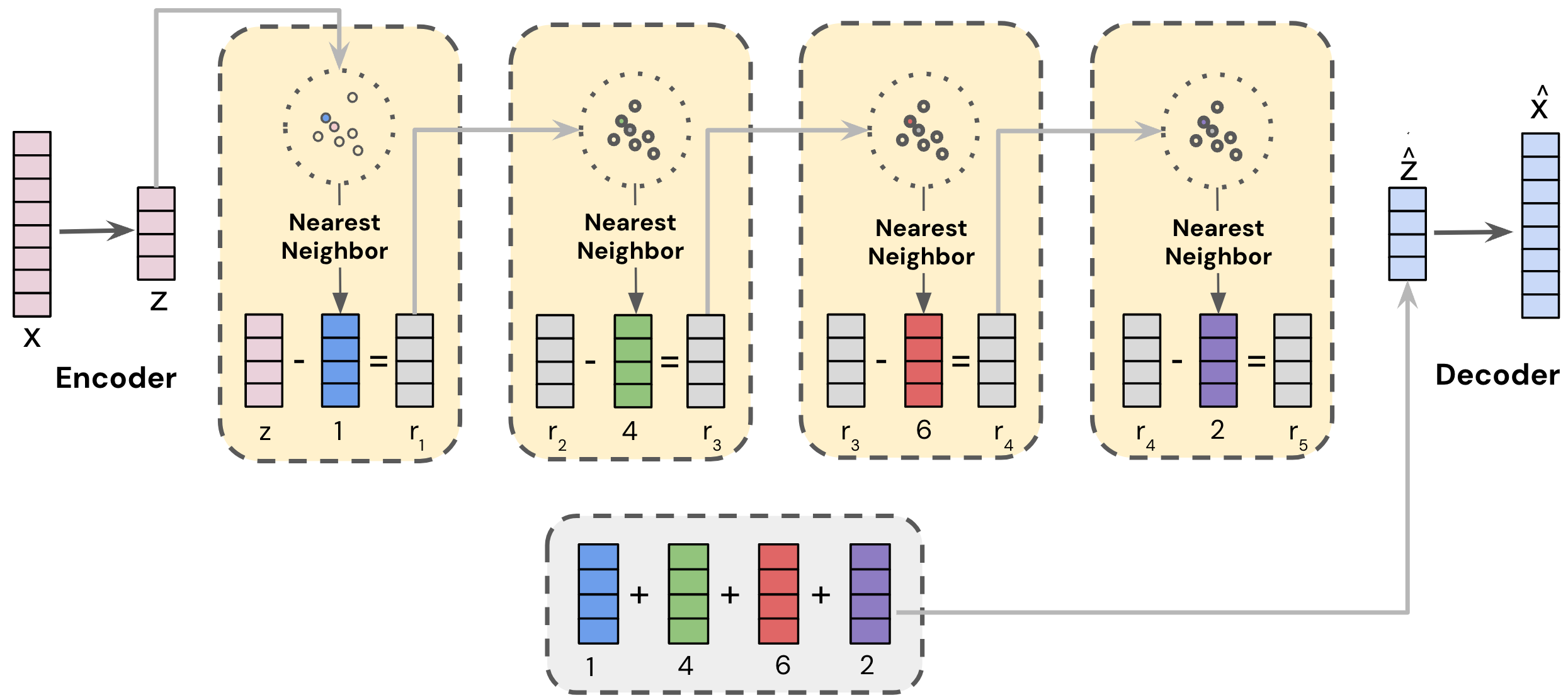

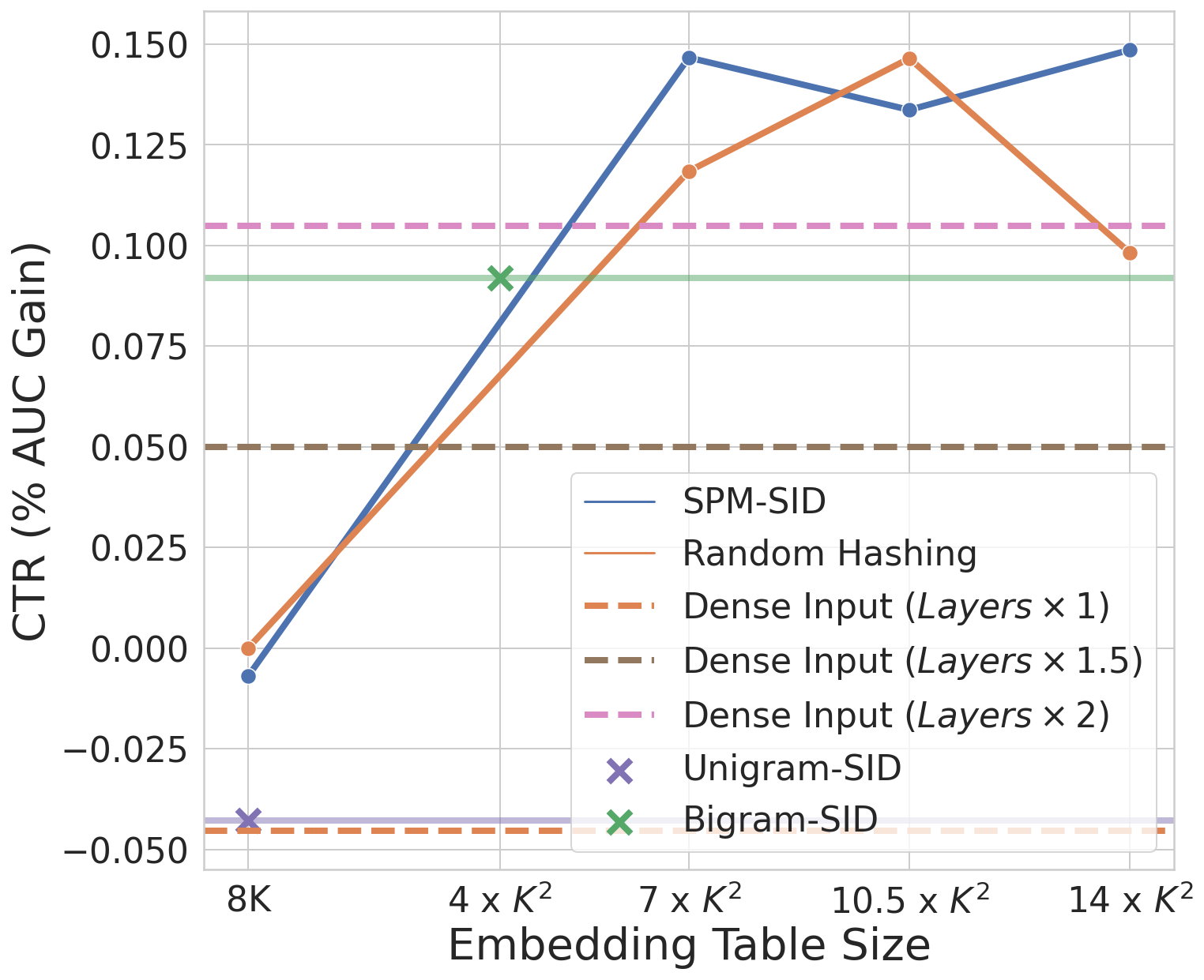

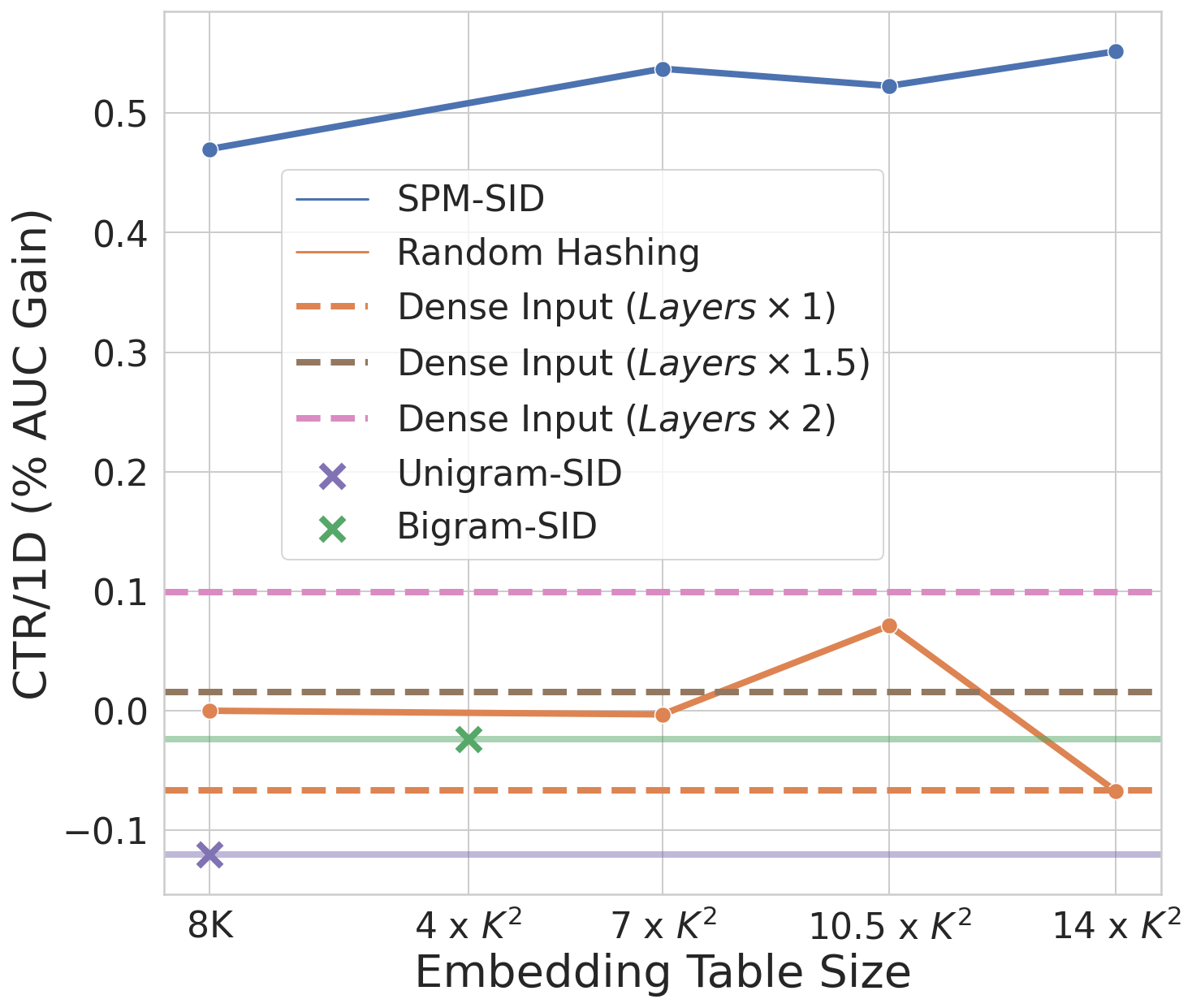

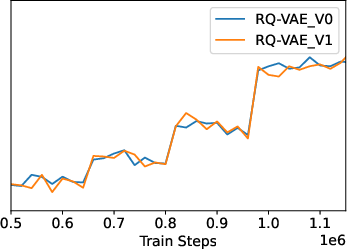

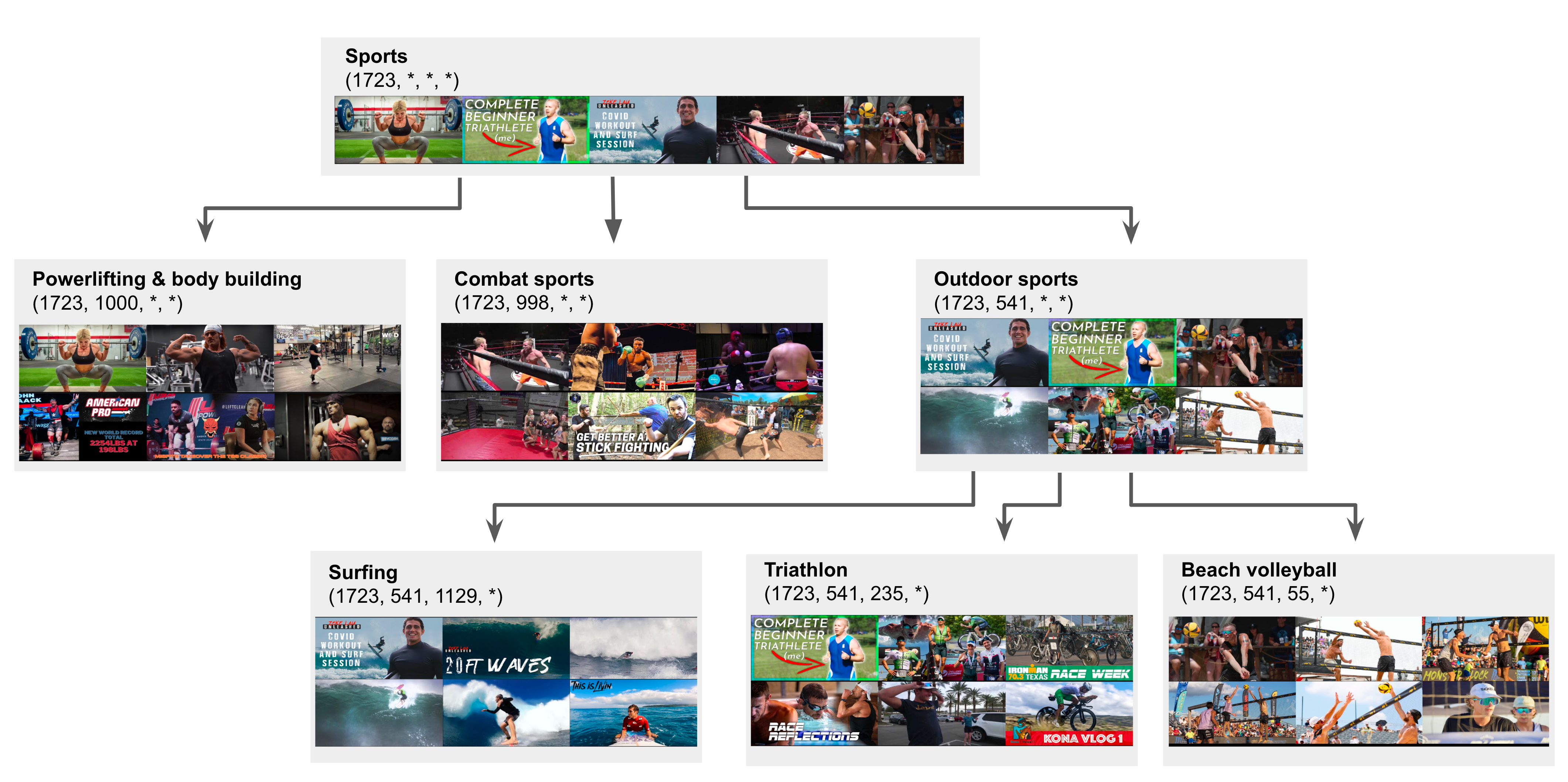

Abstract: Randomly hashed item IDs are used ubiquitously in recommendation models. However, the representations learned from random hashing prevent generalization across similar items, which makes it hard to learn unseen and long-tail items, especially when the item corpus is large, power-law distributed, and evolving dynamically. In this paper, we propose using content-derived features as a replacement for random IDs. We show that simply replacing ID features with content-based embeddings can cause a drop in quality due to reduced memorization capability. To strike a good balance between memorization and generalization, we propose to use Semantic IDs (a compact discrete item representation, learned from frozen content embeddings using RQ-VAE, that captures the hierarchy of concepts in items) as a replacement for random item IDs. As with content embeddings, the compactness of Semantic IDs poses a problem for easy adaptation in recommendation models. We propose novel methods for adapting Semantic IDs in industry-scale ranking models by hashing sub-pieces of the Semantic ID sequences. In particular, we find that the SentencePiece model commonly used in LLM tokenization outperforms manually crafted pieces such as N-grams. To this end, we evaluate our approaches in a real-world ranking model for YouTube recommendations. Our experiments demonstrate that Semantic IDs can replace the direct use of video IDs, improving generalization on new and long-tail item slices without sacrificing overall model quality.
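To make the two ideas in the abstract concrete, the following is a minimal sketch, not the paper's implementation. It assumes fixed random codebooks in place of a trained RQ-VAE, uses plain residual quantization to turn a content embedding into a Semantic ID, and then hashes position-tagged N-gram sub-pieces into embedding-table rows. The names `make_codebooks`, `semantic_id`, `ngram_pieces`, and `hash_bucket`, the position tagging, and all sizes are assumptions chosen for illustration.

```python
import hashlib
import random


def make_codebooks(levels, codebook_size, dim, seed=0):
    """Random stand-in codebooks (assumption); a real RQ-VAE learns these."""
    rng = random.Random(seed)
    return [[[rng.gauss(0.0, 1.0) for _ in range(dim)]
             for _ in range(codebook_size)]
            for _ in range(levels)]


def semantic_id(embedding, codebooks):
    """Residual quantization: pick the nearest codeword at each level,
    subtract it, and quantize what is left at the next level."""
    residual = list(embedding)
    codes = []
    for codebook in codebooks:
        best = min(
            range(len(codebook)),
            key=lambda k: sum((r - c) ** 2
                              for r, c in zip(residual, codebook[k])),
        )
        codes.append(best)
        residual = [r - c for r, c in zip(residual, codebook[best])]
    return tuple(codes)


def ngram_pieces(codes, n):
    """Contiguous n-grams, each tagged with its start level (assumption)."""
    return [(i, tuple(codes[i:i + n])) for i in range(len(codes) - n + 1)]


def hash_bucket(piece, num_buckets):
    """Deterministically map a sub-piece to an embedding-table row."""
    digest = hashlib.sha256(repr(piece).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_buckets


# Hypothetical sizes: 4 levels, 8 codewords per level, 16-dim embeddings.
codebooks = make_codebooks(levels=4, codebook_size=8, dim=16)
item_rng = random.Random(1)
item_embedding = [item_rng.gauss(0.0, 1.0) for _ in range(16)]

sid = semantic_id(item_embedding, codebooks)
bigram_rows = [hash_bucket(p, 1000) for p in ngram_pieces(sid, 2)]
print(sid, bigram_rows)
```

Because the earlier levels of the code are chosen first, items with similar content embeddings tend to share a prefix, and therefore share the embedding rows of their leading sub-pieces. A learned segmentation such as SentencePiece would replace `ngram_pieces` with variable-length pieces chosen from the data; that step is not shown here.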

