AIOS: LLM Agent Operating System

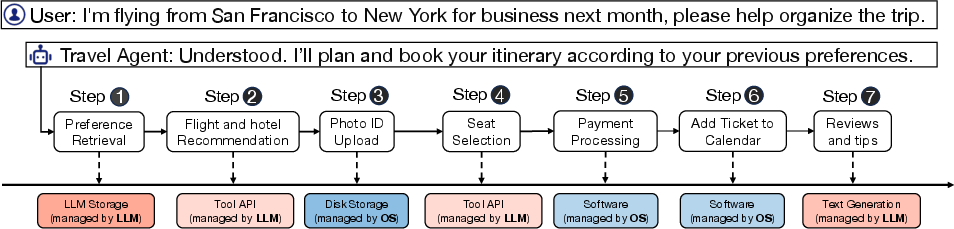

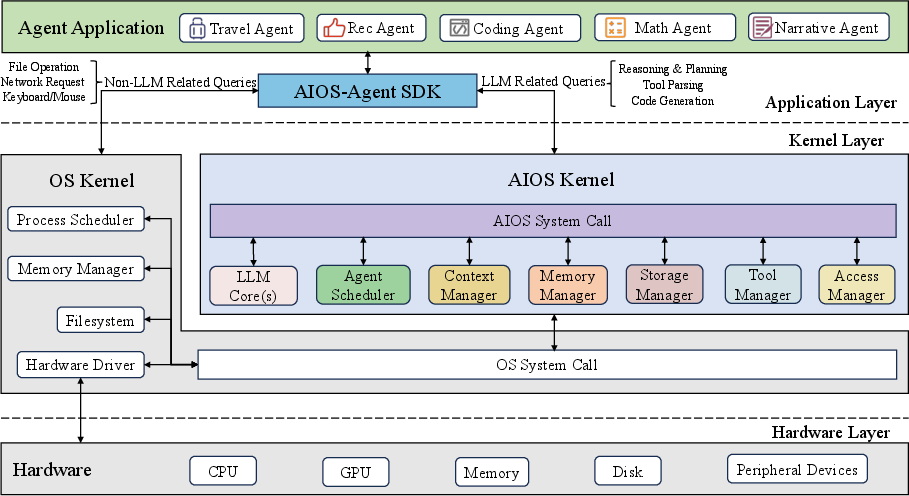

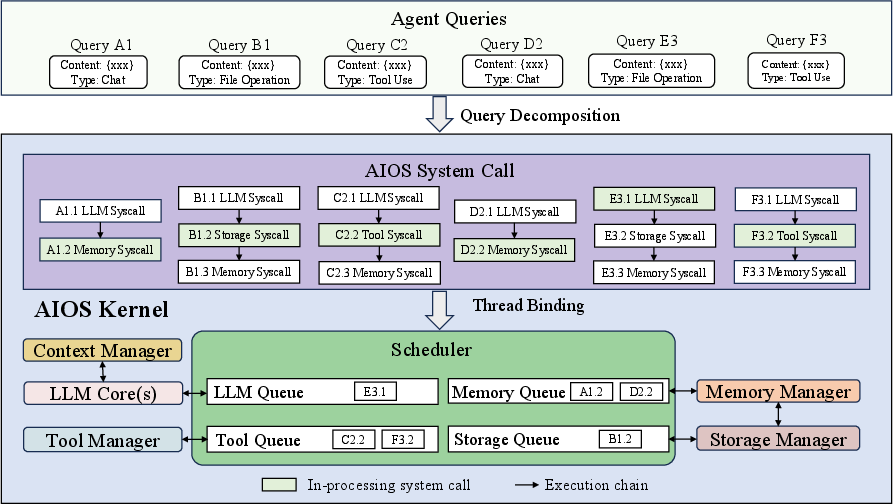

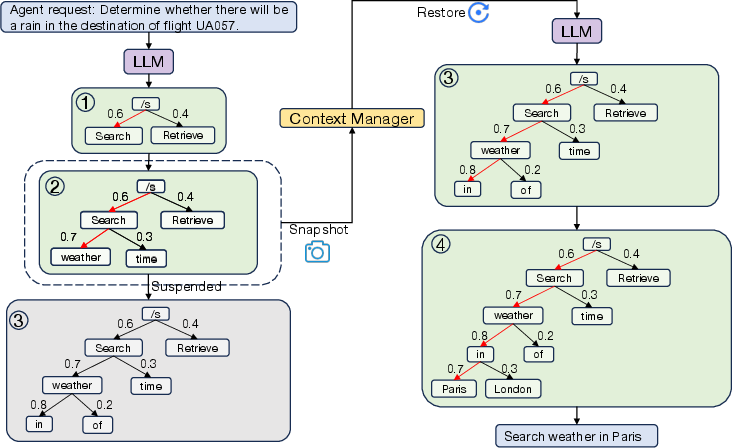

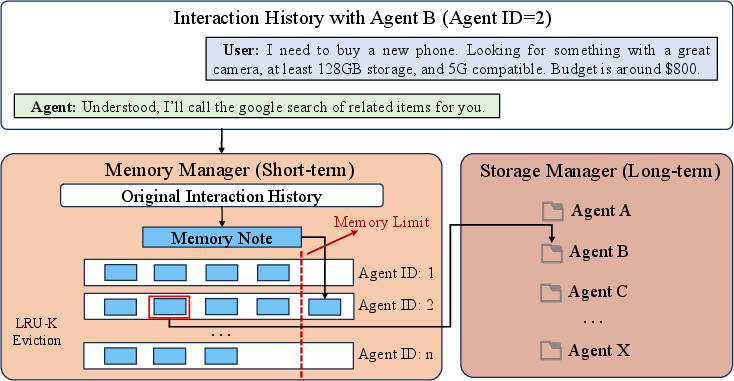

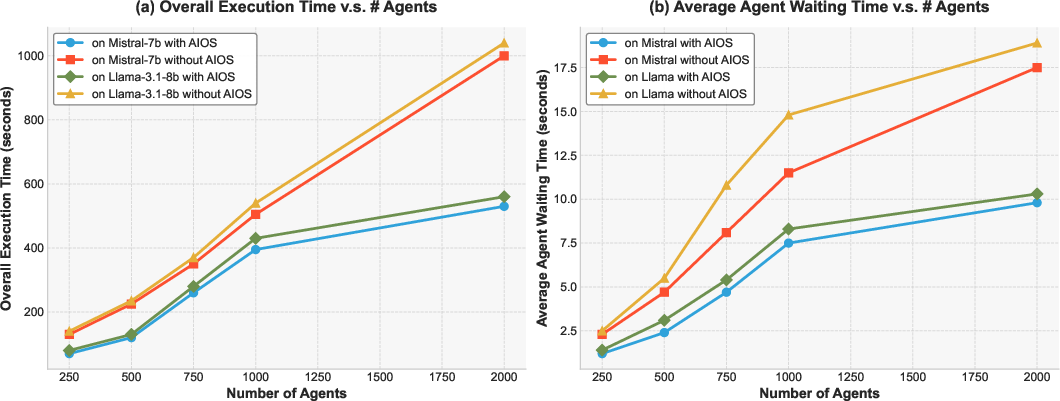

Abstract: LLM-based intelligent agents face significant deployment challenges, particularly in resource management. Allowing agents unrestricted access to LLM or tool resources can lead to inefficient, or even harmful, resource allocation and utilization. Furthermore, the absence of proper scheduling and resource management mechanisms in current agent designs hinders concurrent processing and limits overall system efficiency. To address these challenges, this paper proposes AIOS (LLM-based AI Agent Operating System), an architecture for managing LLM-based agents. AIOS introduces a novel way of serving LLM-based agents: it isolates resources and LLM-specific services from agent applications into an AIOS kernel, which provides fundamental services (e.g., scheduling, context management, memory management, storage management, and access control) for runtime agents. To enhance usability, AIOS also includes the AIOS SDK, a comprehensive suite of APIs for using the functionalities provided by the AIOS kernel. Experimental results demonstrate that AIOS can achieve up to 2.1x faster execution when serving agents built with various agent frameworks. The source code is available at https://github.com/agiresearch/AIOS.
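To make the split between agent applications and the kernel concrete, here is a minimal sketch, assuming a single shared LLM and a first-in-first-out scheduling policy chosen only for illustration. `Syscall`, `ToyKernel`, `submit`, and `run` are hypothetical names, not the AIOS SDK's API, and the LLM is stubbed with a plain Python function. The point is that agents never call the LLM directly: they submit requests to the kernel, which checks access rights and decides the order in which requests reach the shared resource.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict, List, Set, Tuple


@dataclass
class Syscall:
    """Hypothetical request an agent submits to the kernel instead of calling the LLM itself."""
    agent: str
    prompt: str


@dataclass
class ToyKernel:
    """Illustrative kernel that owns the LLM and serves agents through one queue.

    Not the AIOS implementation; names and policy are assumptions for this sketch.
    """
    llm: Callable[[str], str]                       # stand-in for the shared LLM resource
    allowed: Set[str] = field(default_factory=set)  # agents granted LLM access (access control)
    queue: Deque[Syscall] = field(default_factory=deque)
    log: List[Tuple[str, str]] = field(default_factory=list)

    def submit(self, call: Syscall) -> bool:
        """Admit a request only if its agent has been granted access; reject it otherwise."""
        if call.agent not in self.allowed:
            return False
        self.queue.append(call)
        return True

    def run(self) -> Dict[str, List[str]]:
        """Drain the queue in FIFO order, so requests from different agents are
        served by arrival time rather than by whichever agent grabs the LLM first."""
        results: Dict[str, List[str]] = {}
        while self.queue:
            call = self.queue.popleft()
            reply = self.llm(call.prompt)
            self.log.append((call.agent, call.prompt))
            results.setdefault(call.agent, []).append(reply)
        return results


# Usage: two permitted agents interleave their requests; an unknown agent is refused.
kernel = ToyKernel(llm=lambda p: p.upper(), allowed={"travel_agent", "math_agent"})
kernel.submit(Syscall("travel_agent", "plan a trip"))
kernel.submit(Syscall("math_agent", "solve 2+2"))
kernel.submit(Syscall("rogue_agent", "read secrets"))  # returns False: not in `allowed`
kernel.submit(Syscall("travel_agent", "book a hotel"))
print(kernel.run())
# → {'travel_agent': ['PLAN A TRIP', 'BOOK A HOTEL'], 'math_agent': ['SOLVE 2+2']}
```

Because every request passes through one kernel-owned queue, the ordering policy and the permission check live in a single place; replacing the FIFO `popleft` loop with, say, a round-robin or priority policy would change scheduling without touching any agent code.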