- The paper demonstrates that Dynamic Activation Composition dynamically adjusts steering intensity using KL divergence to balance fluency and multi-property conditioning.

- It details an activation extraction and injection process employing contrasting prompt pairs to compute targeted steering vectors for controlling language, safety, and formality.

- Experiments show that the method outperforms static steering techniques, preserving fluency while conditioning on multiple properties across languages.

Multi-property Steering of LLMs with Dynamic Activation Composition

The paper "Multi-property Steering of LLMs with Dynamic Activation Composition" evaluates strategies for conditioning LLMs on multiple properties at inference time. It introduces Dynamic Activation Composition (Dyn), a method that adapts the steering intensity throughout generation to maintain both high conditioning efficacy and output fluency.

Introduction

With the rapid evolution of LLMs, improving the controllability of these models has become pivotal for ensuring safe deployment in real-world applications. Traditional techniques such as RLHF result in permanent behavioral modifications, which can degrade downstream generation quality. Inference-time interventions, on the other hand, provide targeted changes during generation without the costs associated with retraining. This paper investigates multi-property steering, which involves conditioning generation on multiple aspects such as language, safety, and formality.

Activation steering builds on the linear representation hypothesis, which holds that high-level properties are encoded as directions in a model's activation space, so adding a suitable vector to the activations shifts generation toward the property. Previous evaluations mostly focused on single-property steering; this paper extends the evaluation to multi-property steering and benchmarks Dynamic Activation Composition as a new approach.

Methodology

Activation Extraction and Injection

The paper extracts activations from contrastive prompt pairs that demonstrate opposite behaviors or properties. At each generation step, the difference between the activations of the two sides of the pair forms the steering vector, which is then added to the model's activations, scaled by an intensity parameter α.
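The extraction and injection step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper's implementation: averaging activations over several examples before taking the difference is an assumption, and the names `mean_vector`, `steering_vector`, and `inject` are hypothetical. Activations are represented as flat lists of floats rather than real model tensors.

```python
from typing import List, Sequence

Vector = List[float]


def mean_vector(rows: Sequence[Sequence[float]]) -> Vector:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(rows)
    return [sum(column) / n for column in zip(*rows)]


def steering_vector(
    pos_acts: Sequence[Sequence[float]],
    neg_acts: Sequence[Sequence[float]],
) -> Vector:
    """Difference between mean activations of the two contrastive prompt sets.

    Assumption: activations are averaged over examples before subtracting.
    """
    pos_mean = mean_vector(pos_acts)
    neg_mean = mean_vector(neg_acts)
    return [p - q for p, q in zip(pos_mean, neg_mean)]


def inject(hidden: Sequence[float], delta: Sequence[float], alpha: float) -> Vector:
    """Add the steering vector to an activation, scaled by the intensity alpha."""
    return [h + alpha * d for h, d in zip(hidden, delta)]


# Toy activations for prompts showing the property (pos) and its opposite (neg).
delta = steering_vector([[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [1.0, 1.0]])
steered = inject([0.0, 1.0], delta, alpha=2.0)
```

With a fixed `alpha`, the same intensity is applied at every generation step, which is the static setting that the dynamic method is compared against.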

Dynamic Activation Composition adapts the steering intensity αi at each step using information gain derived from steering vectors. It calculates the Kullback-Leibler divergence between the model's token probability distributions under strong steering and unsteered conditions, bounding it to limit disruptions in output fluency.
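As a rough sketch of this idea, the per-step intensity can be computed as a capped KL divergence between the strongly steered and unsteered next-token distributions. The cap `alpha_max`, its default value, and the function names below are assumptions made for illustration; the paper may restrict the comparison to a subset of tokens or bound the value differently.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(p || q) in nats; eps guards against log(0)."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0.0
    )


def dynamic_alpha(
    steered_logits: Sequence[float],
    unsteered_logits: Sequence[float],
    alpha_max: float = 2.0,  # hypothetical cap, not taken from the paper
) -> float:
    """Steering intensity for one step: KL(steered || unsteered), capped."""
    p = softmax(steered_logits)
    q = softmax(unsteered_logits)
    return min(kl_divergence(p, q), alpha_max)


# When steering barely changes the prediction, the intensity stays small.
small = dynamic_alpha([1.0, 0.0], [0.0, 0.0])
# When the two distributions disagree strongly, the cap applies.
large = dynamic_alpha([10.0, 0.0, 0.0], [0.0, 0.0, 10.0])
```

The intuition is that once the unsteered model already produces the desired property, the two distributions agree, the divergence shrinks, and the intervention fades out, which limits unnecessary damage to fluency.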

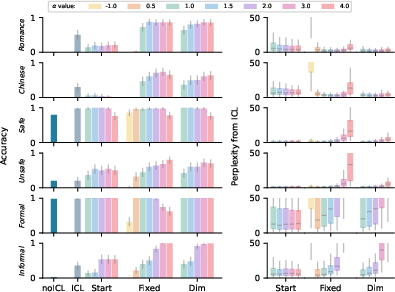

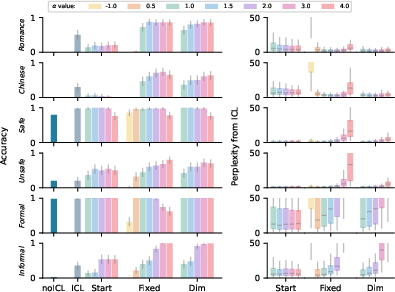

Figure 1: Multi-property steering results for different languages combined with the Unsafe and Informal properties. Dyn shows superior fluency while achieving high steering performance.

Experiments and Results

Single-property Steering

For single-property steering, the paper tests different configurations for language switching, safety assurance, and formality adjustment. The study finds that the effectiveness of each steering strategy depends on the property, with dynamic steering yielding the best fluency-accuracy trade-off across these properties.

Figure 2: Steering accuracy for Romance languages, Chinese, Safe, Unsafe, Formal, and Informal settings, measured against multiple α intensities.

Dynamic Activation Composition

The Dyn approach demonstrates a capacity to dynamically adjust steering intensity, ensuring minimal fluency disruption while maintaining property conditioning. It exhibits superior fluency in multi-property scenarios compared to static steering methods.

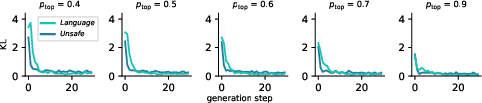

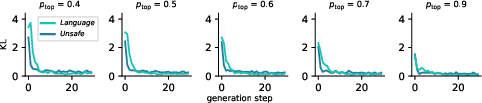

Figure 3: Average αi scores for Unsafe and Language properties, illustrating optimal steering intensity adjustment during generation.

The paper benchmarks steering for four languages (Italian, French, Spanish, Chinese) combined with safety or formality, showing that Dyn handles these combined tasks effectively without prior tuning of the steering intensity.
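Conditioning on several properties at once can be pictured as adding one scaled steering vector per property to the same activation. The sketch below assumes a simple additive combination with an independent intensity per property; the function name `compose` and the property keys are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Sequence


def compose(
    hidden: Sequence[float],
    deltas: Dict[str, Sequence[float]],
    alphas: Dict[str, float],
) -> List[float]:
    """Return hidden + sum over properties of alpha[k] * delta[k].

    Assumption: properties combine additively, each with its own intensity.
    Properties without an entry in `alphas` are left unsteered.
    """
    out = list(hidden)  # copy so the caller's activation is not mutated
    for name, delta in deltas.items():
        alpha = alphas.get(name, 0.0)
        for j, d in enumerate(delta):
            out[j] += alpha * d
    return out


hidden = [1.0, 1.0]
deltas = {"language": [1.0, 0.0], "safety": [0.0, 2.0]}
# In a dynamic setting, these intensities would be recomputed at every step.
alphas = {"language": 0.5, "safety": 1.5}
steered = compose(hidden, deltas, alphas)
```

Keeping a separate intensity per property lets one property's steering fade while another stays active, instead of sharing a single hand-tuned value across all of them.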

Conclusion

Dynamic Activation Composition represents an advancement in steering LLMs, facilitating flexible conditioning of multiple properties concurrently. This approach holds promise for improving LLM alignment with diverse application requirements, reducing the necessity for pre-defined, property-specific adjustments. Future research may explore its application to larger LLMs and investigate interpretability of dynamic steering behaviors.

Figure 4: L² norm of steering vectors at the start of generation across languages, showing that the vectors for the Romance languages are similar.

These findings underscore the significance of adaptive steering techniques for real-world AI applications demanding nuanced, multifaceted controllability.