- The paper introduces conceptors as a novel method to control LLM activations, enabling precise manipulation of outputs during inference.

- It applies Boolean operations on ellipsoidal representations to combine multiple steering goals more effectively than additive techniques.

- Experimental results demonstrate that conceptor steering consistently outperforms additive methods, enhancing AI safety and performance.

Steering LLMs using Conceptors: Improving Addition-Based Activation Engineering

Introduction

The paper "Steering LLMs using Conceptors: Improving Addition-Based Activation Engineering" (2410.16314) addresses the challenge of controlling LLM outputs, which is crucial for mitigating risks such as misinformation and bias. Established methods such as RLHF and fine-tuning are costly and often generalize poorly, while prompt engineering can produce inconsistent results. The paper introduces conceptors as a novel approach to activation engineering, offering a more reliable way to manipulate LLM activations during inference.

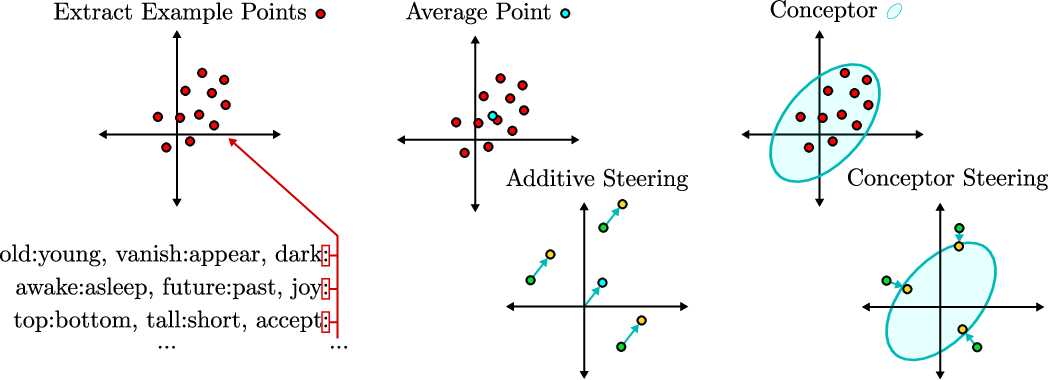

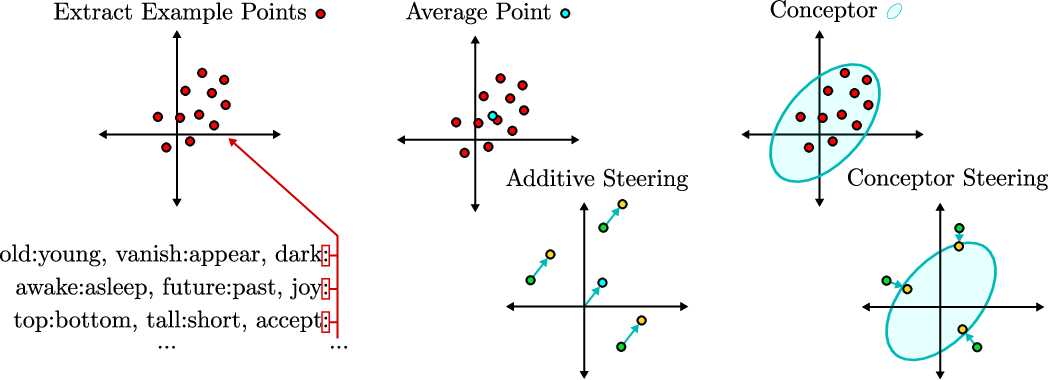

Figure 1: Illustration showing the basic geometric difference between additive and conceptor steering using a set of activations for the antonym task. Additive steering acts as a translation of the activation vectors by a fixed steering vector. Conceptor steering acts as a (soft) projection onto a target ellipsoid.

Background on Activation Engineering

Activation engineering modifies LLM activations at inference time without altering the model parameters. In the standard additive approach, a steering vector is computed by averaging the activations of a set of examples and is then added to the residual stream to push the model's outputs toward a desired behavior. This approach can be unreliable when the underlying activation patterns are complex. Conceptors represent a set of activations as an ellipsoidal region rather than a single vector, so they capture more of the structure of that set and allow finer-grained control.
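The additive baseline described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the paper's code: the function names, the scaling factor `beta`, and the random arrays standing in for cached residual-stream activations are all assumptions made for the example.

```python
import numpy as np

def additive_steering_vector(activations: np.ndarray) -> np.ndarray:
    """Average example activations (shape: n_examples x d) into one vector."""
    return activations.mean(axis=0)

def steer_additive(h: np.ndarray, v: np.ndarray, beta: float = 1.0) -> np.ndarray:
    """Translate an activation h by the scaled steering vector v."""
    return h + beta * v

# Random data standing in for activations cached from task examples (assumption).
rng = np.random.default_rng(0)
examples = rng.normal(loc=2.0, size=(50, 8))

v = additive_steering_vector(examples)
h = rng.normal(size=8)                      # one activation to be steered
h_steered = steer_additive(h, v, beta=0.5)  # pure translation by 0.5 * v
```

Every activation is shifted by the same vector regardless of its position, which is the translation behavior shown in Figure 1.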

Conceptors and Boolean Operations

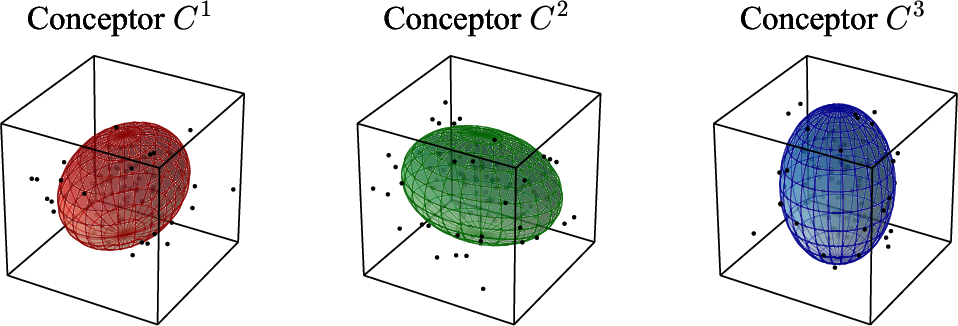

Conceptors are matrices that describe regions of a network's state space as ellipsoids, which allows more sophisticated manipulation of neural activations than a single vector does. They have previously been used to control RNNs and to prevent catastrophic forgetting in other settings. The paper exploits the fact that conceptors can be combined with Boolean operations, which lets multiple steering goals be merged more effectively than with the traditional additive approach.

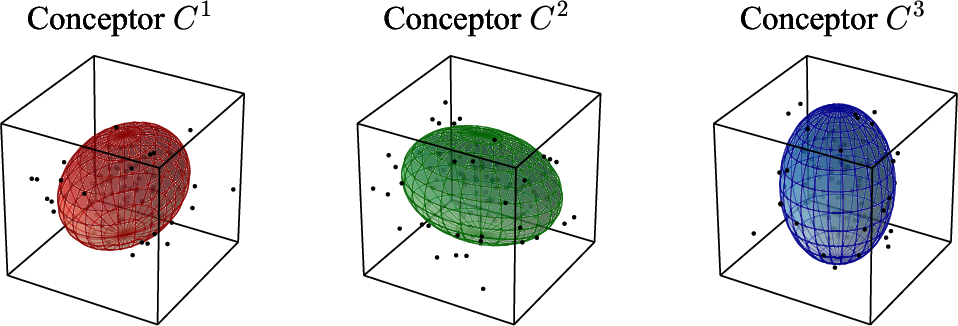

Figure 2: Illustration of three conceptors as ellipsoids that capture the state space region of different sets of neural activations in 3D space.
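As a rough sketch of how such an ellipsoid can be obtained from a set of activations, the code below uses the standard conceptor definition from the conceptor literature, C = R (R + α⁻² I)⁻¹, where R is the correlation matrix of the activations and α is the aperture. The summary above does not spell out this formula, so the function names, the `aperture` and `beta` parameters, and the random data are assumptions for illustration only.

```python
import numpy as np

def compute_conceptor(X: np.ndarray, aperture: float) -> np.ndarray:
    """Conceptor for activations X (n_examples x d): C = R (R + aperture^-2 I)^-1."""
    n, d = X.shape
    R = X.T @ X / n                                   # correlation matrix, d x d
    return R @ np.linalg.inv(R + (aperture ** -2) * np.eye(d))

def steer_conceptor(h: np.ndarray, C: np.ndarray, beta: float = 1.0) -> np.ndarray:
    """Softly project h onto the ellipsoid described by C, then rescale by beta."""
    return beta * (C @ h)

# Random data with very different variance per direction (assumption).
rng = np.random.default_rng(0)
scales = np.array([3.0, 2.0, 1.0, 0.5, 0.1, 0.05])
X = rng.normal(size=(200, 6)) * scales

C = compute_conceptor(X, aperture=1.0)
h = rng.normal(size=6)
h_steered = steer_conceptor(h, C)
```

The eigenvalues of `C` lie between 0 and 1: directions in which the example activations vary strongly are kept almost unchanged, while directions with little variance are shrunk toward zero. This is the "soft projection" contrasted with translation in Figure 1.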

The paper investigates Boolean operations like AND, OR, and NOT on conceptors, facilitating the combination of multiple steering matrices to achieve more complex steering tasks. This flexibility enables LLMs to maintain performance across varied and composite functions.
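The Boolean operations can be sketched as follows, using the definitions commonly given in the conceptor literature: NOT C = I − C, C₁ AND C₂ = (C₁⁻¹ + C₂⁻¹ − I)⁻¹, and OR via De Morgan's law. The inverse-based AND shown here assumes both conceptors are invertible; the general definition handles singular matrices differently. The helper names and the small diagonal example matrices are hypothetical.

```python
import numpy as np

def conceptor_not(C: np.ndarray) -> np.ndarray:
    """NOT: the complement of the region described by C."""
    return np.eye(C.shape[0]) - C

def conceptor_and(C1: np.ndarray, C2: np.ndarray) -> np.ndarray:
    """AND for invertible conceptors: (C1^-1 + C2^-1 - I)^-1."""
    I = np.eye(C1.shape[0])
    return np.linalg.inv(np.linalg.inv(C1) + np.linalg.inv(C2) - I)

def conceptor_or(C1: np.ndarray, C2: np.ndarray) -> np.ndarray:
    """OR via De Morgan: NOT(NOT C1 AND NOT C2)."""
    return conceptor_not(conceptor_and(conceptor_not(C1), conceptor_not(C2)))

# Two toy conceptors with eigenvalues strictly between 0 and 1 (assumption).
C1 = np.diag([0.9, 0.5, 0.1])
C2 = np.diag([0.2, 0.5, 0.8])

C_and = conceptor_and(C1, C2)   # keeps only directions both conceptors keep
C_or = conceptor_or(C1, C2)     # keeps directions either conceptor keeps
```

In this toy case each diagonal entry of `C_and` is no larger than the corresponding entries of `C1` and `C2`, and each entry of `C_or` is no smaller, which matches the intuition of intersecting and merging ellipsoids.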

Computational Complexity and Implementation

While conceptors are computationally intensive, with matrix operations at $O(n^3)$ complexity, the steering mechanism is still cheaper than alternatives like RLHF or full model fine-tuning. The conceptor steering matrix can be fused with existing model weight matrices, optimizing the inference process without significant overhead.
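The fusion idea rests on associativity of matrix products: if a linear layer with weights W reads the steered activation β·C·h, the same output is obtained from the precomputed matrix β·W·C applied to h directly. The sketch below checks this for a single linear map only. Which weight matrices are fused in practice is not stated here, so `W`, the dimensions, and `beta` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_out = 8, 16

# Hypothetical downstream linear layer that reads the steered activation.
W = rng.normal(size=(d_out, d_model))

# A toy conceptor built from a random correlation matrix (assumption).
A = rng.normal(size=(d_model, d_model))
R = A @ A.T / d_model
C = R @ np.linalg.inv(R + 0.1 * np.eye(d_model))
beta = 1.5

# One-off precomputation: fold the steering matrix into the layer weights.
W_fused = beta * (W @ C)

h = rng.normal(size=d_model)
y_two_step = W @ (beta * (C @ h))   # steer first, then apply the layer
y_fused = W_fused @ h               # single matrix-vector product at inference
```

After the one-off product `W @ C`, inference uses a weight matrix of the same shape as before, so steering adds no extra per-token matrix multiplication in this simplified setting.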

Experiments and Results

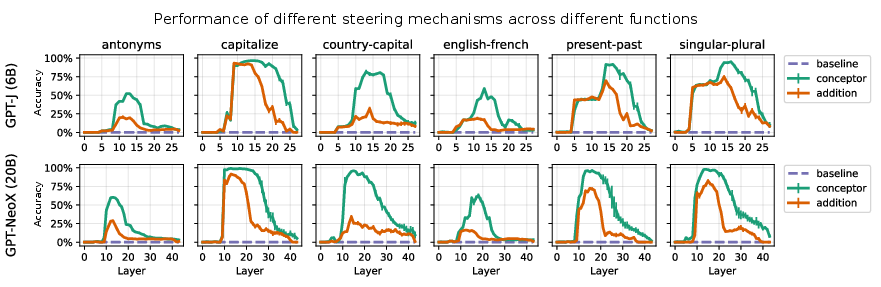

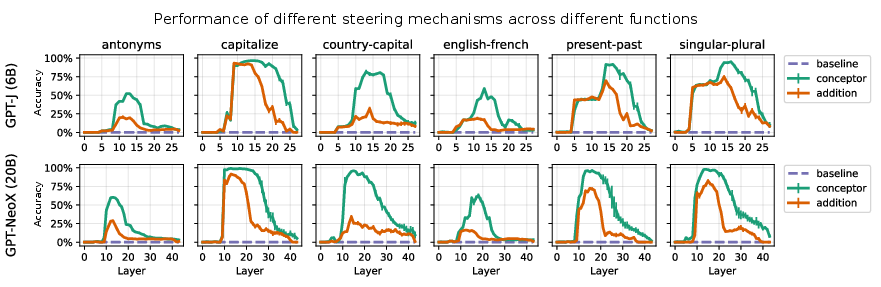

The paper reports experiments with EleutherAI’s GPT-J and GPT-NeoX models, comparing conceptor-based steering to additive steering on six function tasks, including antonyms and language translation. Results demonstrate that conceptor-based steering consistently outperforms additive steering across all tasks and layers tested.

Figure 3: Comparison of the accuracy on all six function tasks for conceptor-based steering against additive steering across all layers for GPT-J and GPT-NeoX.

Mean-centering improves performance for both methods, but conceptors remain superior, even without this enhancement. Boolean operations on conceptors further boost steering effectiveness, particularly for composite functions, indicating their robustness in complex steering scenarios.

Conclusion

Conceptors represent a promising advancement in activation engineering for LLMs, offering precise control and reliable output manipulation. The paper suggests further research into the impact of conceptors on overall model capabilities and into their scalability. Despite the higher computational cost and the additional hyperparameters, conceptors offer significant potential to enhance AI safety, debias models, and align AI outputs more closely with human values.