- The paper presents SC-MCTS*, integrating three statistically normalized reward models to enhance reasoning accuracy on the Blocksworld dataset.

- It refines the UCT strategy with a tuned exploration constant and an improved backpropagation scheme, and uses speculative decoding to achieve an average 51.9% speed improvement per node.

- The study demonstrates that SC-MCTS* outperforms methods like RAP-MCTS and CoT, offering greater interpretability and efficiency in LLM reasoning.

Interpretable Contrastive Monte Carlo Tree Search Reasoning

Introduction

The paper presents an enhancement to LLM reasoning through a novel Monte Carlo Tree Search (MCTS) approach called Speculative Contrastive MCTS (SC-MCTS*). It addresses limitations of previous methods, such as slow search and inadequate reward models, and aims to significantly improve both reasoning accuracy and speed without extensive model training or domain-specific adaptation.

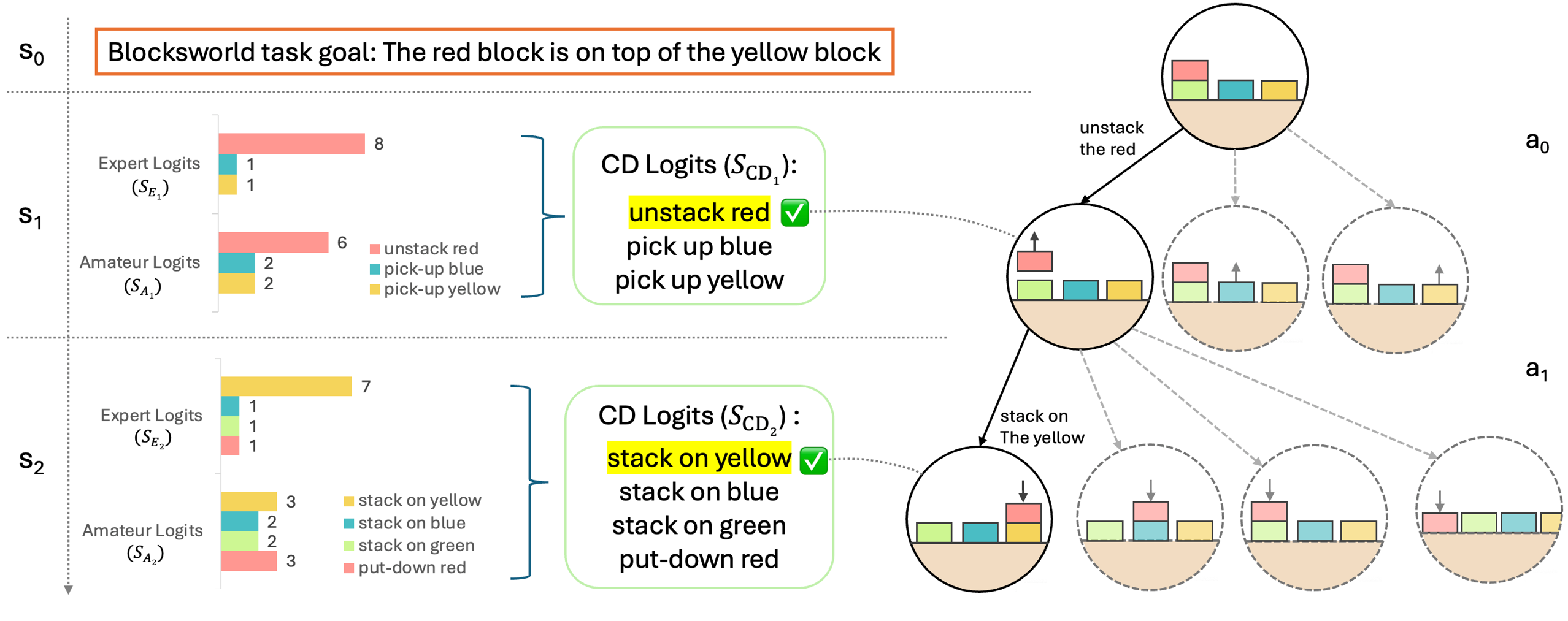

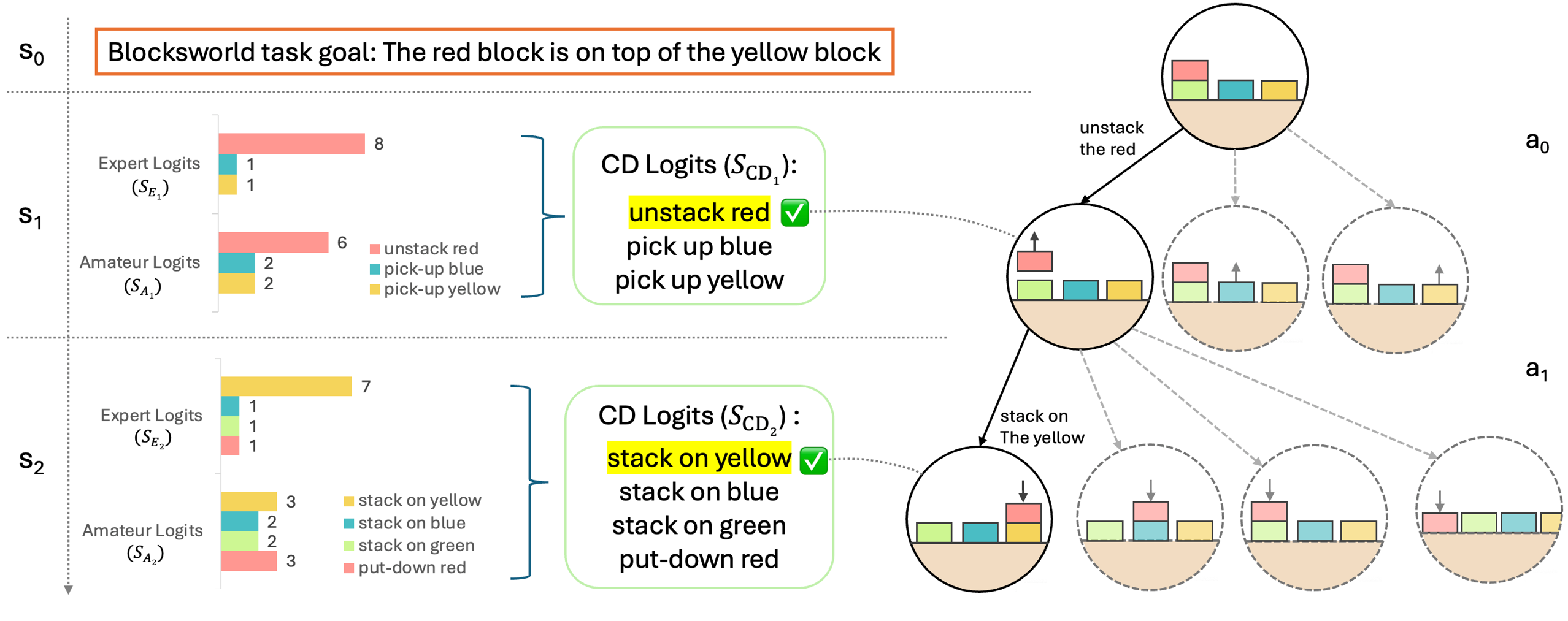

Figure 1: An overview of SC-MCTS∗. We employ a novel reward model based on the principle of contrastive decoding to guide MCTS reasoning on the Blocksworld multi-step reasoning dataset.

Methodology

The core of SC-MCTS* lies in three components: its reward modeling, a refined UCT node-selection strategy, and an improved backpropagation scheme.

- Multi-Reward Design: SC-MCTS* introduces three reward models to guide MCTS reasoning: contrastive JS divergence, log-likelihood, and self-evaluation. Each reward is normalized using statistics of its empirical distribution so that the three can be combined online, which improves both interpretability and performance.

- Node Selection Strategy: The exploration constant in UCT (Upper Confidence bounds applied to Trees) is crucial for effective node selection. SC-MCTS* tunes this constant through thorough quantitative experiments so that the exploration term contributes meaningfully to reasoning outcomes.

- Backpropagation Refinement: The refined backpropagation lets SC-MCTS* capture paths that progress smoothly, favoring those that approach the goal so that value propagates more effectively along them.
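
The multi-reward design can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the contrastive reward is assumed to be the mean Jensen-Shannon divergence between expert and amateur next-token distributions over a step's tokens, and normalization is assumed to be a z-score using a mean and standard deviation estimated from previously collected reward samples. The names `contrastive_js_reward`, `RewardNormalizer`, and `combined_reward`, and any weights passed to them, are hypothetical.

```python
import math
from statistics import mean, pstdev
from typing import Dict, List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    # Numerically stable softmax over a vocabulary-sized logit vector.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl(p: Sequence[float], q: Sequence[float]) -> float:
    # KL(p || q); terms with p_i == 0 contribute nothing.
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    # Jensen-Shannon divergence: symmetric and bounded by ln 2.
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def contrastive_js_reward(expert_logits: List[List[float]],
                          amateur_logits: List[List[float]]) -> float:
    # Assumption: average token-level JS divergence between the expert and
    # amateur distributions over the tokens of one reasoning step.
    divs = [js_divergence(softmax(e), softmax(a))
            for e, a in zip(expert_logits, amateur_logits)]
    return sum(divs) / len(divs)


class RewardNormalizer:
    # Assumption: z-score normalization with statistics from prior samples.
    def __init__(self, samples: Sequence[float]):
        self.mu = mean(samples)
        self.sigma = pstdev(samples) or 1.0  # guard against zero spread

    def __call__(self, r: float) -> float:
        return (r - self.mu) / self.sigma


def combined_reward(raw: Dict[str, float],
                    normalizers: Dict[str, RewardNormalizer],
                    weights: Dict[str, float]) -> float:
    # Weighted sum of normalized rewards, e.g. keys "js", "loglik", "self_eval".
    return sum(weights[k] * normalizers[k](raw[k]) for k in raw)
```

Normalizing first puts the three signals on a comparable scale, so the weights express relative importance rather than compensating for rewards measured in different units.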
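
For reference, the standard UCT rule that SC-MCTS* refines scores each child by its mean value plus an exploration bonus weighted by a constant `c`: UCT = Q/N + c * sqrt(ln N_parent / N). The sketch below is the textbook formula, not the paper's tuned variant; the `Node` class and `select_child` function are hypothetical, and no specific value of `c` from the paper is assumed.

```python
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    visits: int = 0
    value_sum: float = 0.0
    children: List["Node"] = field(default_factory=list)


def uct_score(child: Node, parent_visits: int, c: float) -> float:
    # Unvisited children get an infinite score so they are tried first.
    if child.visits == 0:
        return math.inf
    exploit = child.value_sum / child.visits
    explore = c * math.sqrt(math.log(parent_visits) / child.visits)
    return exploit + explore


def select_child(node: Node, c: float) -> Node:
    # Pick the child with the highest UCT score.
    return max(node.children, key=lambda ch: uct_score(ch, node.visits, c))


if __name__ == "__main__":
    a = Node(visits=8, value_sum=6.4)  # mean 0.8, well explored
    b = Node(visits=2, value_sum=1.0)  # mean 0.5, rarely visited
    root = Node(visits=10, children=[a, b])
    print(select_child(root, c=0.0) is a)  # True: pure exploitation
    print(select_child(root, c=1.0) is b)  # True: exploration bonus wins
```

Because the exploration term is added directly to the mean reward, the useful range of `c` depends on the scale of the rewards, which is one reason reward normalization and tuning the exploration constant go hand in hand.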

Experiments

The effectiveness of SC-MCTS* was evaluated on the Blocksworld dataset, comparing it with existing methods such as RAP-MCTS and Chain-of-Thought (CoT) across several LLM configurations (Llama-3.1-70B, GPT-4o, etc.).

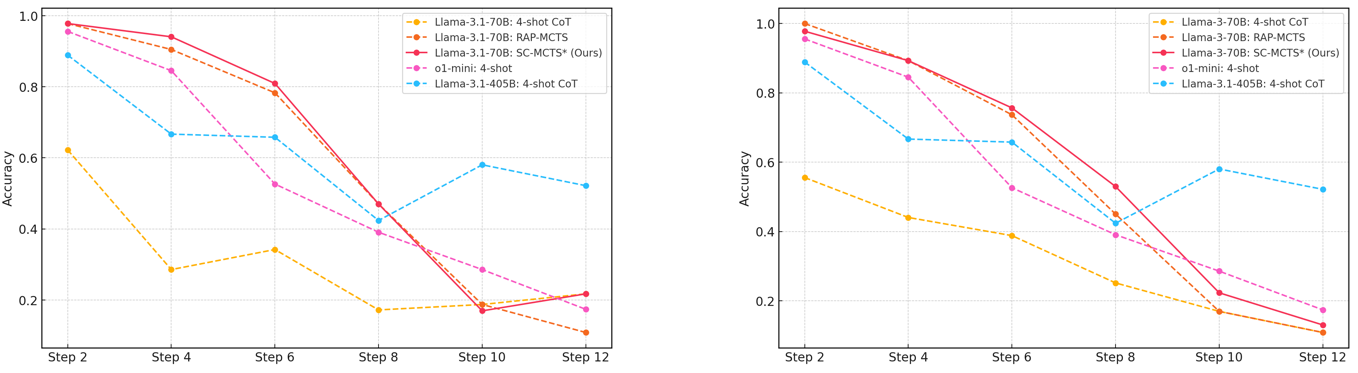

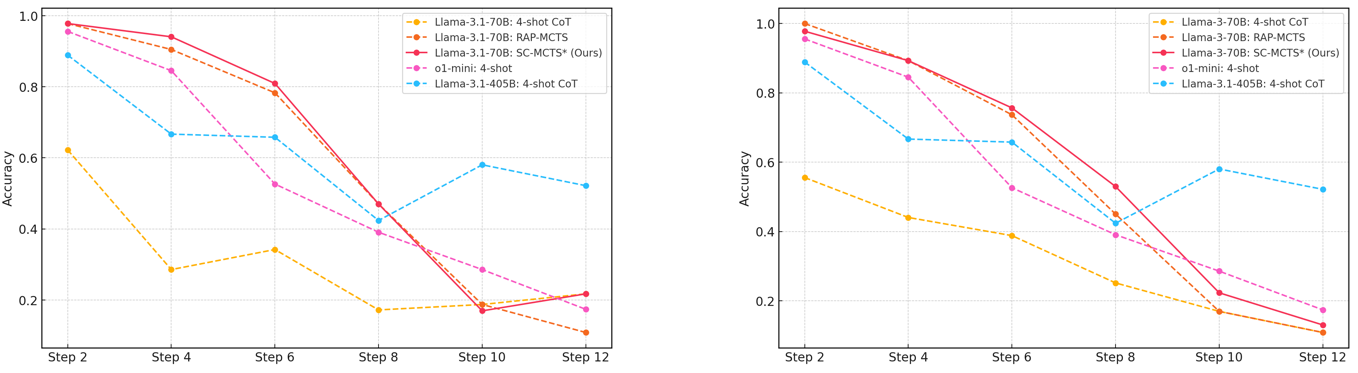

In terms of accuracy across reasoning steps, SC-MCTS* consistently outperformed RAP-MCTS and CoT in both the easy and hard reasoning modes.

Figure 2: Accuracy comparison of various models and reasoning methods on the Blocksworld multi-step reasoning dataset across increasing reasoning steps.

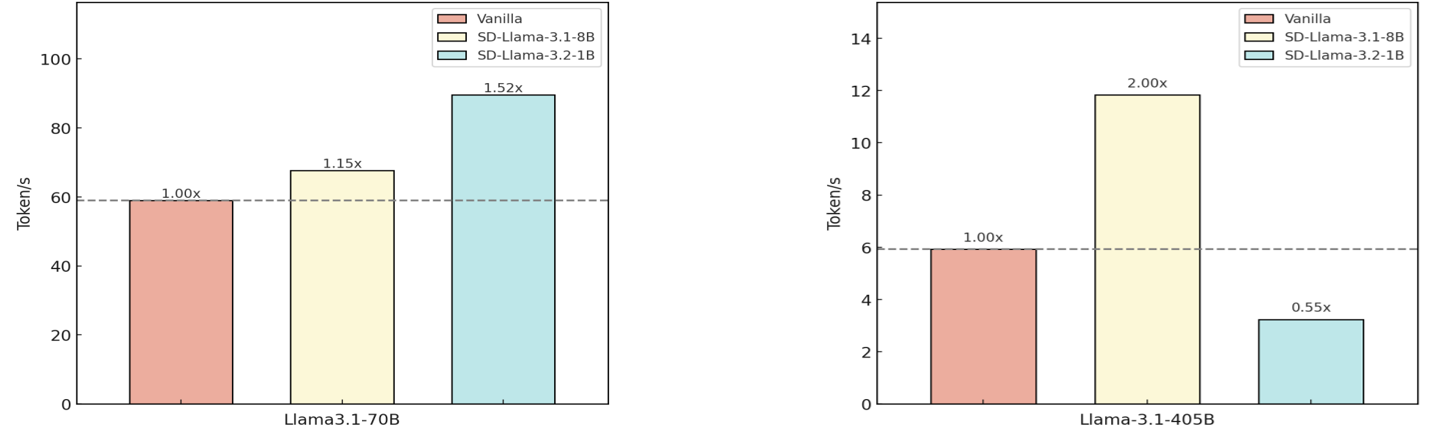

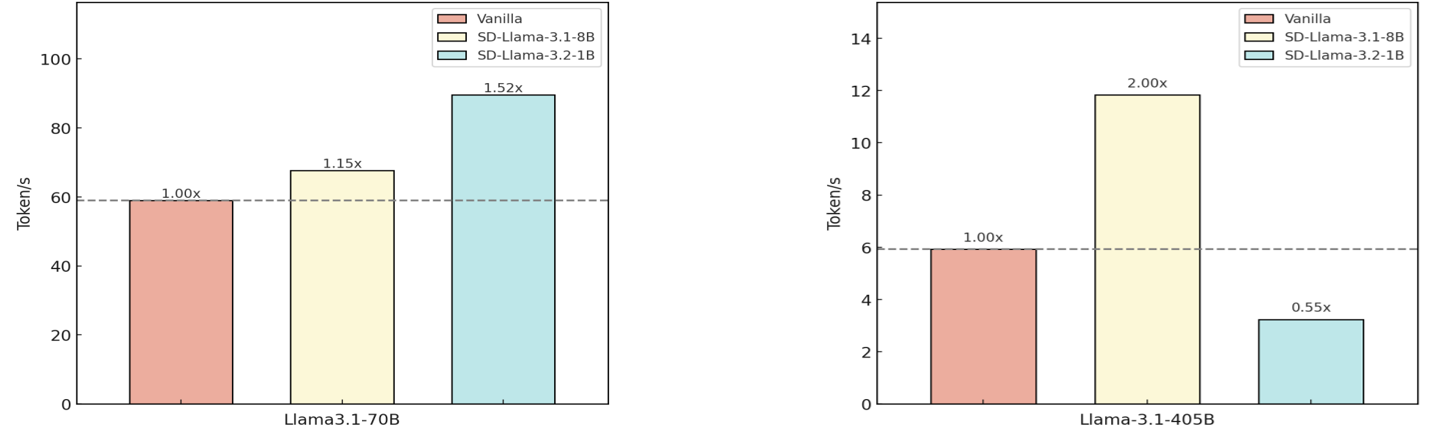

In terms of speed, SC-MCTS* used speculative decoding to achieve an average per-node speed improvement of 51.9%, demonstrating superior computational efficiency.

Figure 3: Speedup comparison of different model combinations. For speculative decoding, we use Llama-3.2-1B and Llama-3.1-8B as amateur models with Llama-3.1-70B and Llama-3.1-405B as expert models, measured by average node-level reasoning speed in MCTS on the Blocksworld multi-step reasoning dataset.
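
The per-node speedup comes from speculative decoding: a small amateur (draft) model proposes several tokens, and the large expert (target) model verifies them and keeps the longest agreeing prefix. The sketch below is a toy greedy variant under stated assumptions: models are stand-in functions returning the next token id greedily, and the names `speculative_decode`, `target`, and `draft` are hypothetical. Standard speculative decoding for sampling uses a rejection-based acceptance rule instead, and scores all drafted positions in a single expert forward pass.

```python
from typing import Callable, List, Tuple

# Toy stand-in for a language model: maps a token sequence to its greedy next token.
Model = Callable[[List[int]], int]


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       max_new: int, k: int = 4) -> Tuple[List[int], int]:
    seq = list(prompt)
    target_passes = 0  # verification rounds; one batched expert pass each in practice
    while len(seq) - len(prompt) < max_new:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal, ctx = [], list(seq)
        for _ in range(k):
            tok = draft(ctx)
            proposal.append(tok)
            ctx.append(tok)
        # 2. The expert verifies the proposal. For clarity it is called per position;
        #    a real implementation scores all k + 1 positions in one forward pass.
        target_passes += 1
        accepted: List[int] = []
        for tok in proposal:
            expected = target(seq + accepted)
            if tok != expected:
                accepted.append(expected)  # replace the first mismatch with the expert token
                break
            accepted.append(tok)
        else:
            accepted.append(target(seq + accepted))  # all accepted: take a bonus token
        seq.extend(accepted)
    return seq[: len(prompt) + max_new], target_passes
```

Because every kept token equals the expert's own greedy choice, the output matches plain greedy decoding with the expert alone; the speedup depends on how often the amateur's proposals are accepted, since each accepted run saves expert passes.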

Implications and Future Work

SC-MCTS* not only advances the performance for complex reasoning tasks in LLMs but also demonstrates a pathway to more interpretable and efficient AI reasoning systems. The insights gained from the reward model design and UCT strategy refinement illustrate possibilities for broader applications and adaptations in reasoning-centric AI models.

Future research could focus on refining step-splitting methods so that they generalize across reasoning tasks without domain-specific dependencies. Other potential directions include integrating additional metric-based reward models to further enhance accuracy and interpretability.

Conclusion

SC-MCTS* represents a substantial improvement over existing reasoning systems for LLMs, offering increased accuracy, speed, and interpretability without the need for complex learning processes or excessive computational resources. The paper's methodology and results highlight the potential for scalable, efficient, and interpretable approaches in AI-based reasoning frameworks.