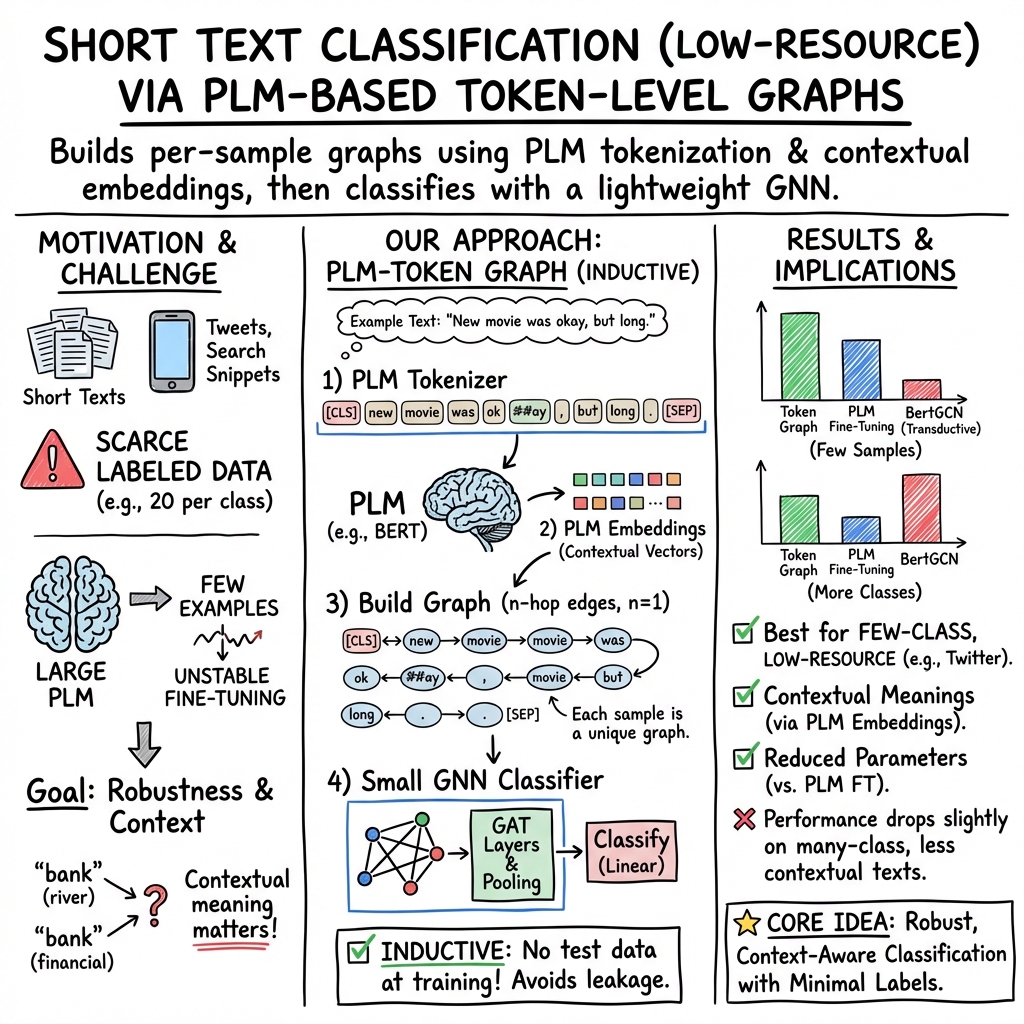

- The paper presents a novel token-level graph method that integrates PLM tokenization to tackle short text classification with limited labeled data.

- It constructs distinct graphs per sample, capturing contextual semantic embeddings while reducing model parameters for increased efficiency.

- Experimental results on benchmarks like Twitter and MR demonstrate competitive accuracy and macro F1 scores compared to traditional methods.

Token-Level Graphs for Short Text Classification

Introduction

The paper "Token-Level Graphs for Short Text Classification" (2412.12754) addresses the challenge of classifying short texts, a critical task in Information Retrieval (IR) systems such as search engines and social media analytics. Traditional methods, including fine-tuning Pre-trained Language Models (PLMs), often struggle when labeled data is limited. Recent progress in graph machine learning has sparked interest in graph-based approaches to improve performance in such low-resource settings.

Existing graph-based methods have two frequent limitations: they cannot distinguish the meanings of identical terms in different contexts, and many rely on transductive learning, which requires the test samples to be part of the graph at training time. To overcome these issues, the paper proposes constructing text graphs from the tokens produced by a PLM tokenizer, which allows both semantic and contextual information to be extracted. This approach also mitigates the vocabulary constraints of previous methods and reduces the number of model parameters, improving training stability and efficiency.

Methodology

Token-Based Graph Construction

The authors introduce a method where each text sample is transformed into a token-based graph. The graph construction process includes the following stages:

- Text Preprocessing: Each text T is tokenized into a sequence using a PLM tokenizer, resulting in tokens that form the nodes of the graph.

- Graph Structure: Each node is connected to every other node within n hops of it in the token sequence, so the edges encode local word order and context.

- Node Embeddings: Features for each node are generated by embedding the tokenized sequence via the PLM, capturing context-dependent semantic representations.

This approach facilitates inductive learning by creating distinct graphs for each sample and leveraging the contextual embeddings provided by PLMs.
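The construction steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: `toy_tokenize` is a hypothetical whitespace stand-in for a PLM tokenizer, `build_token_graph` and `n_hop` are names chosen here, and edges are assumed to be undirected without self-loops. In a real pipeline the node features would be the PLM's contextual embeddings, which are omitted here.

```python
from typing import List, Tuple


def toy_tokenize(text: str) -> List[str]:
    """Hypothetical stand-in for a PLM tokenizer (assumption: whitespace split)."""
    return text.lower().split()


def build_token_graph(tokens: List[str], n_hop: int) -> List[Tuple[int, int]]:
    """Connect each token position to every other position within n_hop steps.

    Returns directed edge pairs (i, j). Both directions are emitted, so the
    graph is effectively undirected. Self-loops are left out (assumption).
    """
    edges = []
    last = len(tokens) - 1
    for i in range(len(tokens)):
        # Window of neighbouring positions, clipped to the sequence bounds.
        for j in range(max(0, i - n_hop), min(last, i + n_hop) + 1):
            if i != j:
                edges.append((i, j))
    return edges


# One graph per text sample: nodes are token positions, not vocabulary entries.
tokens = toy_tokenize("great movie with weak ending")
edges = build_token_graph(tokens, n_hop=2)
print(len(tokens), "nodes,", len(edges), "edges")
```

Because nodes correspond to token positions rather than vocabulary entries, two occurrences of the same word become separate nodes, and each can carry its own context-dependent embedding.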

Experimental Setup

Experiments were conducted on standard short text classification datasets, including Twitter, MR, Snippets, and TagMyNews. The models used BERT for tokenization and Graph Attention Networks with reduced dimensions to minimize computational overhead. Training used 20 labeled samples per class, and each experiment was repeated five times for robustness. The setup also shows that the method is feasible without GPU acceleration.
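A few-shot protocol of this kind (a fixed number of labeled samples per class, repeated over several random draws) could be set up roughly as follows. This is only a sketch: the function name `sample_few_shot`, the use of integer seeds 0 to 4, and the toy label list are assumptions made for illustration, and the paper's exact sampling procedure may differ.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def sample_few_shot(labels: Sequence[int], k: int, seed: int) -> List[int]:
    """Return sorted indices of k randomly chosen samples per class.

    Raises ValueError (from random.sample) if a class has fewer than k samples.
    """
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)  # local generator keeps each run reproducible
    chosen: List[int] = []
    for label in sorted(by_class):
        chosen.extend(rng.sample(by_class[label], k))
    return sorted(chosen)


# Toy labels: 3 classes with 50 samples each (made up purely for illustration).
labels = [c for c in range(3) for _ in range(50)]

# Five repetitions, each with a different seed and 20 samples per class.
splits = [sample_few_shot(labels, k=20, seed=s) for s in range(5)]
print([len(split) for split in splits])
```

Reporting the mean and spread over the repeated draws reduces the influence of any single lucky or unlucky selection of training samples.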

The method was compared against various graph-based and traditional baselines. Token-based graphs outperformed them in most scenarios, particularly where fewer training samples were available.

Results

In controlled experiments, the token-level graph approach achieved the highest or competitive classification accuracy and macro F1 scores, performing particularly well on datasets such as Twitter and MR. The results indicate that while transductive methods like BertGCN benefit from having more training samples, the token-based method maintained robust performance across datasets with limited context.
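For readers unfamiliar with the two reported metrics, the sketch below shows how accuracy and macro F1 are commonly computed. The labels are toy values, not results from the paper, and the function names are chosen here. Macro F1 averages the per-class F1 scores with equal weight, so small classes count as much as large ones. The set of classes is assumed to be the union of the true and predicted labels.

```python
from typing import Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Unweighted mean of per-class F1 = 2*TP / (2*TP + FP + FN)."""
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # A class with no true or predicted samples contributes 0 (assumption).
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy example: one of the two class-0 samples is misclassified as class 1.
y_true = [0, 0, 1, 1]
y_pred = [0, 1, 1, 1]
print(accuracy(y_true, y_pred), round(macro_f1(y_true, y_pred), 4))
```

In this toy case the per-class F1 scores are 2/3 for class 0 and 4/5 for class 1, so macro F1 is their mean, while accuracy is 3/4.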

Discussion and Conclusion

The presented approach offers a robust mechanism for short text classification by integrating PLM tokenization with graph-based learning. It performs particularly well when training samples are limited, providing stable performance without requiring a transductive setup. The paper also argues that combining PLM-derived semantic embeddings with graph neural networks can yield a lightweight, efficient classification tool suitable for diverse IR applications.

Overall, this research extends current text classification paradigms with a model that handles token-level semantics effectively and overcomes common challenges of graph-based classifiers in low-resource settings. Future work may focus on extending the approach to domain-specific datasets and on further refining the graph construction process.