- The paper introduces a taxonomy for LLM memory, categorizing mechanisms along dimensions of object, form, and time.

- It argues that memory in LLM-driven AI systems parallels the main types of human memory: sensory, working, explicit, and implicit.

- It discusses practical applications and challenges, including personalized memory in user interactions and multimodal data integration.

Overview of Memory Mechanisms in LLMs

Memory mechanisms are a core component of LLM-driven AI systems and parallel aspects of human memory. They encode, store, and retrieve information so that a system can draw on earlier context and interaction history when performing a task.

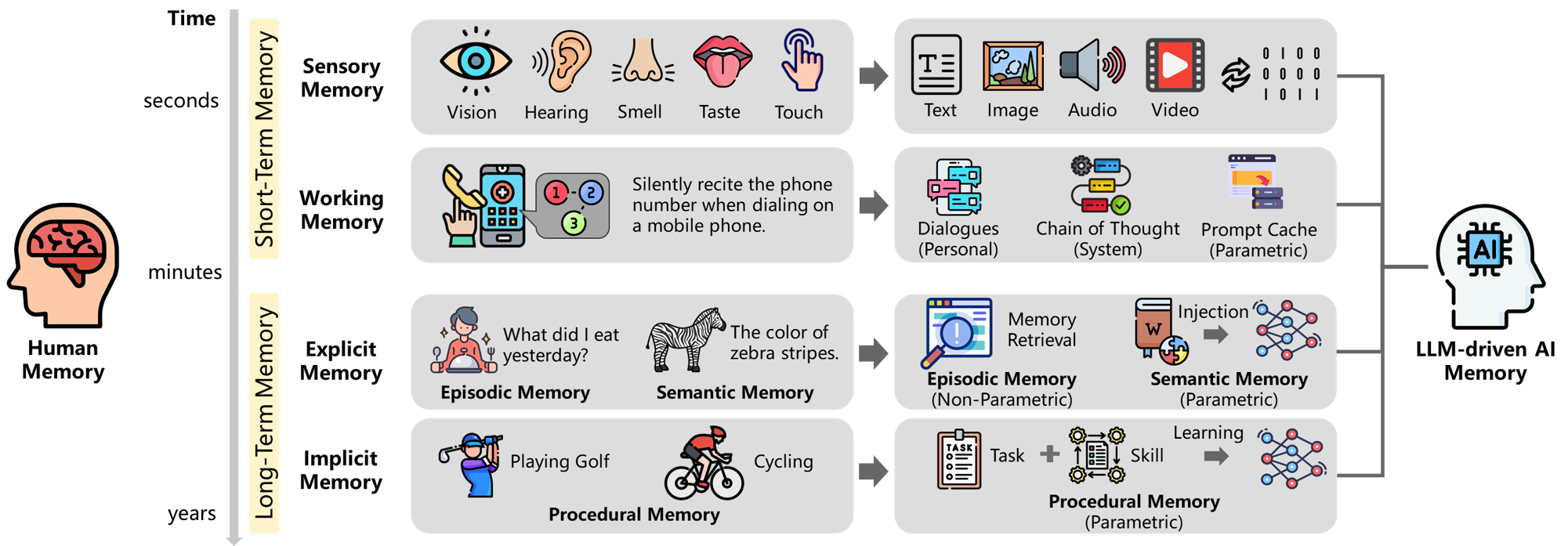

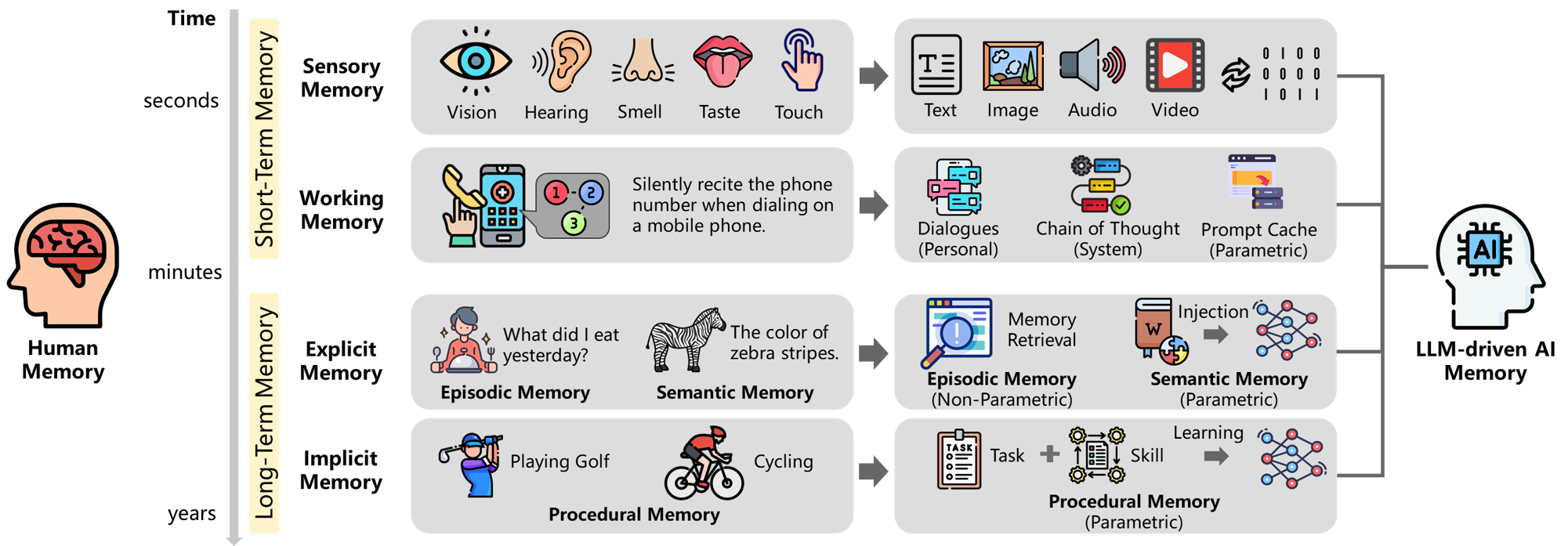

Figure 1: Illustrating the parallels between human and AI memory.

Human Memory vs. AI Memory

Human Memory

Human memory can be classified into short-term memory, including sensory and working memory, and long-term memory, which includes explicit and implicit memory. The dynamics of human memory involve encoding, storage, retrieval, consolidation, reconsolidation, and forgetting—all contributing to a complex, adaptive memory system.

Memory in AI Systems

AI memory in LLM-driven systems mirrors human memory in several ways:

- Sensory Memory: Like human sensory memory, AI systems take in external data and hold it briefly before further processing.

- Working Memory: AI systems utilize working memory to maintain context during tasks, akin to human short-term processing.

- Explicit Memory: LLMs have explicit memory for retaining factual knowledge, similar to human semantic memory.

- Implicit Memory: AI systems develop implicit memory for task execution, akin to procedural memory in humans.

These parallels highlight how insights into human cognition can inspire enhancements in AI memory design.
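As a rough illustration of the working-memory parallel, the sketch below keeps only the most recent turns of a conversation in a bounded buffer. The `WorkingMemory` class and the `max_turns` parameter are hypothetical names chosen for this example; the paper does not prescribe this design.

```python
from collections import deque


class WorkingMemory:
    """Bounded buffer of recent conversation turns (hypothetical helper)."""

    def __init__(self, max_turns: int = 3) -> None:
        # A deque with maxlen drops the oldest item once the buffer is full.
        self._turns = deque(maxlen=max_turns)

    def add(self, turn: str) -> None:
        self._turns.append(turn)

    def context(self) -> str:
        # Join the retained turns into the text that would be sent to the model.
        return "\n".join(self._turns)


wm = WorkingMemory(max_turns=2)
for turn in ["user: hi", "assistant: hello", "user: what did I just say?"]:
    wm.add(turn)

# Only the two most recent turns remain; the first one has been forgotten.
print(wm.context())
```

A fixed-size buffer is the simplest possible policy; real systems may instead summarize or selectively retain older turns.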

Categorization of AI Memory

The paper introduces a taxonomy for LLM-driven AI memory with three dimensions: object (personal or system), form (parametric or non-parametric), and time (short-term or long-term). This framework supports a structured exploration of AI memory capabilities, offering insights into system adaptability and personalization.

Quadrants of AI Memory

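Because each of the three dimensions takes two values, the categorization forms eight (2 × 2 × 2) quadrants, each addressing a distinct role within AI systems. Grouped by object, they fall into two families: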

- Personal Memory: Enhances user personalization through non-parametric or parametric approaches, improving context-awareness and individual experience.

- System Memory: Supports advanced reasoning and task management, facilitating both short-term computational efficiency and long-term capability evolution.
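The eight combinations can be enumerated directly from the three dimensions. The sketch below is only an illustration; the constant names and the ordering of the output are choices made for this example and do not reflect any numbering used in the paper.

```python
from itertools import product

# The three binary dimensions of the taxonomy.
OBJECTS = ("personal", "system")
FORMS = ("non-parametric", "parametric")
TIMES = ("short-term", "long-term")

# The Cartesian product yields every (object, form, time) combination.
quadrants = list(product(OBJECTS, FORMS, TIMES))

for obj, form, time in quadrants:
    print(f"{obj} / {form} / {time}")

print(f"total: {len(quadrants)}")
```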

Research and Applications

Personal Memory

Personal memory tailors AI interactions to individual users by incorporating their preferences and interaction histories, as in systems such as ChatGPT Memory and MemoryBank. Both non-parametric approaches (external stores consulted at inference time) and parametric approaches (knowledge encoded in model weights) are used to adapt responses to user needs.
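To make the non-parametric approach concrete, here is a minimal sketch of an external per-user store with naive keyword-overlap retrieval. It is not how ChatGPT Memory or MemoryBank work; `PersonalMemoryStore`, `remember`, and `recall` are hypothetical names, and a practical system would likely use embedding-based retrieval rather than word overlap.

```python
from dataclasses import dataclass, field


@dataclass
class PersonalMemoryStore:
    """Toy external (non-parametric) store of per-user facts."""

    facts: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, user_id: str, fact: str) -> None:
        self.facts.setdefault(user_id, []).append(fact)

    def recall(self, user_id: str, query: str, top_k: int = 2) -> list[str]:
        # Score each stored fact by the number of words it shares with the query.
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(fact.lower().split())), fact)
            for fact in self.facts.get(user_id, [])
        ]
        # Keep only facts with some overlap, best match first.
        relevant = sorted(
            (item for item in scored if item[0] > 0),
            key=lambda item: item[0],
            reverse=True,
        )
        return [fact for _, fact in relevant[:top_k]]


store = PersonalMemoryStore()
store.remember("alice", "prefers vegetarian recipes")
store.remember("alice", "lives in Lisbon")
store.remember("bob", "prefers spicy recipes")

hits = store.recall("alice", "suggest some recipes for dinner")
# Retrieved facts would be prepended to the prompt before calling the model.
prompt = "Known about user: " + "; ".join(hits) + "\nUser: suggest some recipes for dinner"
print(prompt)
```

Keeping the memory outside the model means facts can be added, inspected, or deleted per user without retraining, which is the main practical appeal of the non-parametric form.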

System Memory

System memory extends the reasoning and planning abilities of AI systems, improving task performance through methods such as ReAct and Voyager. Through continuous self-refinement and adaptation, AI systems develop robust strategies for complex task execution.

Challenges and Future Directions

The paper identifies several open challenges for memory in LLM-driven systems:

- Multimodal Memory: Integrating diverse data types (text, images, audio) to enhance system adaptability.

- Stream Memory: Developing dynamic, real-time memory systems to complement static approaches.

- Comprehensive Memory: Constructing collaborative memory systems to parallel human cognitive processes.

- Shared Memory: Encouraging cross-domain knowledge sharing among AI systems.

Future directions involve enhancing privacy protection amidst collective data usage and enabling automated system evolution for continuous improvement.

Conclusion

The paper underscores the significance of memory systems in advancing AI capabilities in the context of LLMs. By drawing parallels with human memory, analyzing current frameworks, and identifying future challenges, the paper lays the groundwork for developing more intelligent, adaptable, and collaborative AI systems rooted in sophisticated memory architectures.