- The paper introduces DeBiasMe, a metacognitive AIED intervention designed to mitigate biases in human-AI interactions.

- It employs deliberate friction and bi-directional collaboration to prompt reflection and mitigate cognitive biases during interactions.

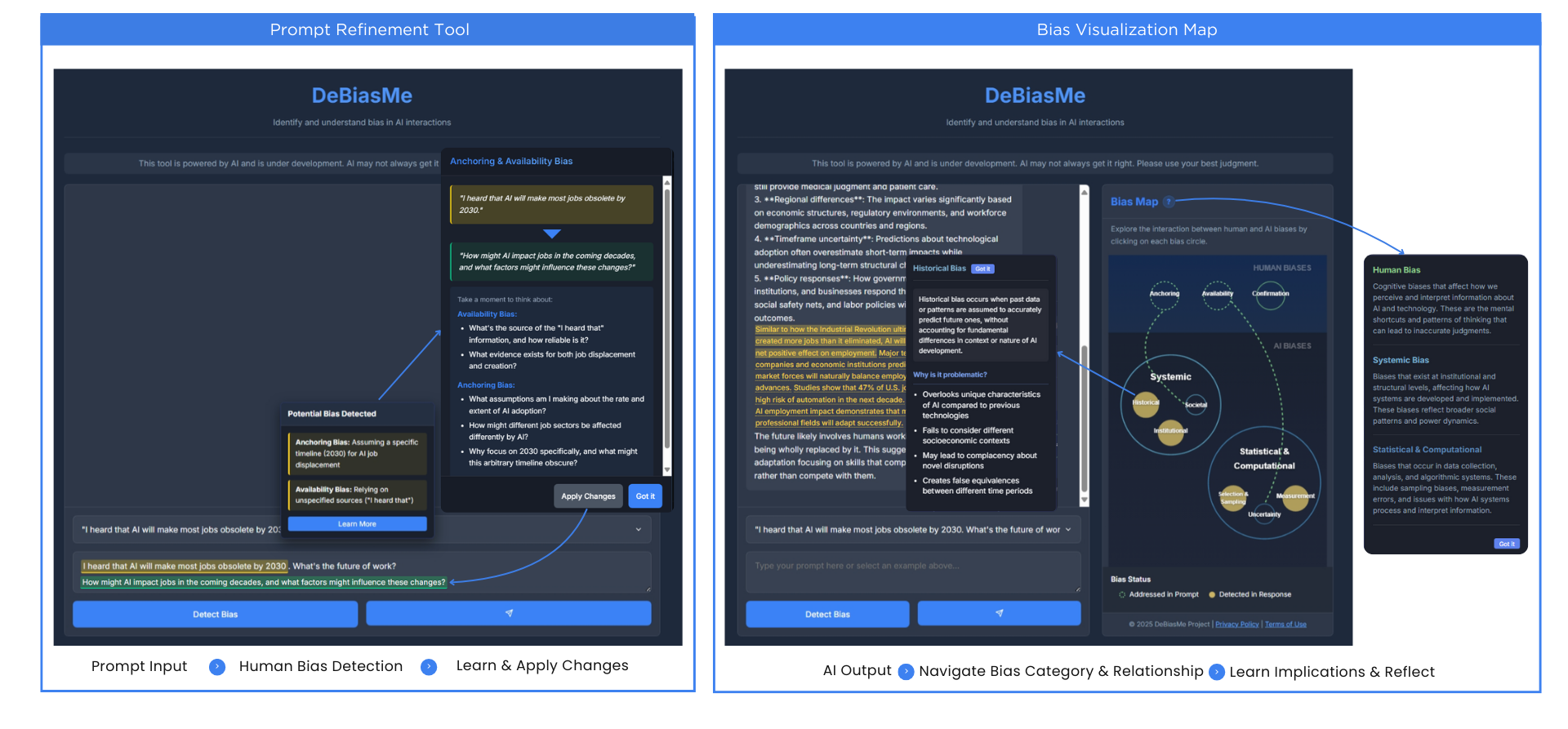

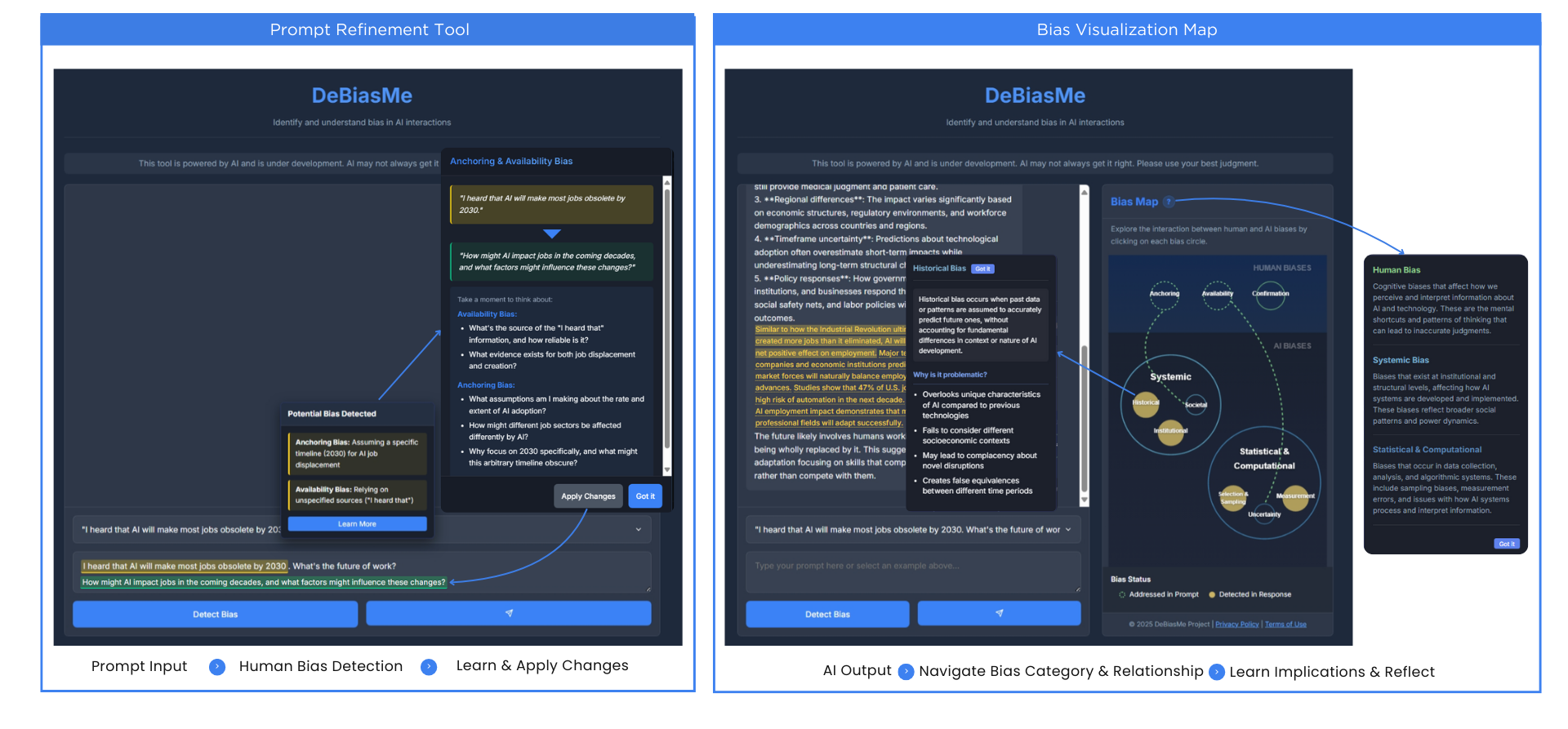

- DeBiasMe features a prompt refinement tool and bias visualization map to enhance bias recognition and AI literacy among students.

The paper "DeBiasMe: De-biasing Human-AI Interactions with Metacognitive AIED (AI in Education) Interventions" (2504.16770) explores the transformative role of generative AI technologies in academic environments, focusing on cognitive biases in human-AI interactions. It advocates for metacognitive AI literacy interventions designed to help university students critically engage with AI tools while addressing biases across human-AI interaction workflows. This paper argues for a comprehensive approach to AI literacy that prioritizes awareness of human bias and metacognitive competencies, empowering users to actively navigate biases inherent in AI systems.

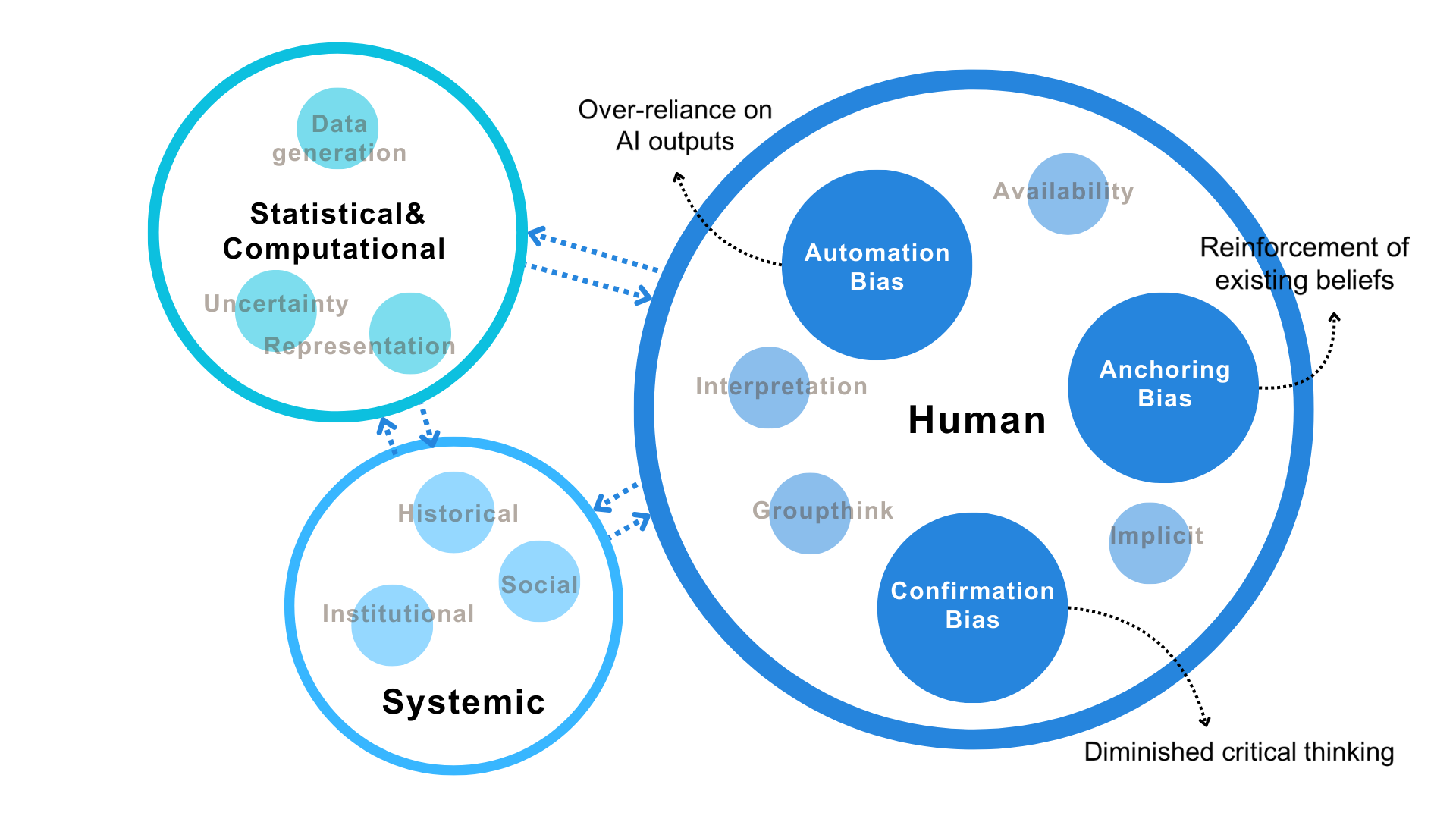

The paper highlights several cognitive biases, such as anchoring, confirmation, and automation bias, that adversely affect how people interact with AI. It argues that human biases interact dynamically with the algorithmic and systemic biases embedded in AI systems and amplify them, thereby impairing critical thinking and decision-making.

Figure 1: Interacting categories of bias in human-AI interaction.

To counter these risks, the paper positions metacognitive support as critical to AI literacy frameworks, because it enables learners to monitor, evaluate, and regulate their own decision-making. By fostering reflection and critical engagement, metacognitive tools can shift students from passive AI consumers to active collaborators in human-AI ecosystems.

Framework and Design Solutions

The paper introduces "Deliberate Friction" and "Bi-directional Human-AI Collaboration" as foundational frameworks for developing AI literacy interventions.

Deliberate Friction: This design principle introduces strategic friction points into human-AI interaction workflows to prompt reflection and reduce cognitive biases. By intentionally disrupting otherwise seamless human-AI interfaces, the approach encourages users to exercise independent judgment rather than over-relying on automated systems.

Bi-directional Collaboration: This framework treats humans as integral participants in AI workflows, enabling interventions at both the input formulation and output interpretation stages. The model aims for robust human-AI synergy through enhanced interpretability and transparency, with the goal of improving bias recognition and mitigation.
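To make the two frameworks concrete, the following is a minimal sketch, assuming a simple text interface in which a friction point is a reflective question the user must answer before the workflow continues. Friction is placed at both the input and output stages, mirroring the bi-directional model. The names `FrictionLog`, `friction_point`, and `run_interaction`, the question wording, and the `min_chars` length check are all hypothetical illustrations; the paper does not specify an implementation, and a length threshold is only a crude stand-in for judging a reflection.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class FrictionLog:
    """Hypothetical record of the reflections a user writes at each friction point."""
    entries: list[tuple[str, str]] = field(default_factory=list)


def friction_point(stage: str, question: str, answer_fn: Callable[[str], str],
                   log: FrictionLog, min_chars: int = 10) -> bool:
    """Pause the workflow, ask a reflective question, and log the answer.

    Returns True only if the reflection meets a crude minimum-length threshold
    (an assumption for illustration, not a measure of reflection quality).
    """
    reflection = answer_fn(question).strip()
    log.entries.append((stage, reflection))
    return len(reflection) >= min_chars


def run_interaction(prompt: str, model: Callable[[str], str],
                    answer_fn: Callable[[str], str], log: FrictionLog) -> Optional[str]:
    # Input stage: ask the user to examine how the question is framed.
    if not friction_point("input", f"What does this prompt assume? {prompt!r}", answer_fn, log):
        return None  # halt until the user writes a substantive reflection
    output = model(prompt)
    # Output stage: ask the user to evaluate the answer before accepting it.
    if not friction_point("output", "What would you verify before trusting this answer?",
                          answer_fn, log):
        return None
    return output
```

In use, `model` would wrap a call to an AI system and `answer_fn` would collect the user's typed reflection; both are passed in as plain functions so the friction logic stays independent of any particular interface.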

Figure 2: Interactive prototype and frictional design elements.

Case Study: DeBiasMe

The paper presents DeBiasMe, a work-in-progress metacognitive AIED intervention designed to enhance awareness of cognitive biases through educational technology. DeBiasMe incorporates two primary components:

Prompt Refinement Tool: Before users submit a prompt, they receive feedback that highlights potential biases in it, fostering awareness of how questions are framed and helping them avoid framings that invite biased suggestions from the outset.

Bias Visualization Map: After an interaction, users engage with an interactive map that identifies bias types in AI outputs, shows visual connections between the detected biases, and lets users explore their implications and possible mitigation strategies.
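Both components can be sketched together under strong simplifying assumptions: bias cues are detected with hand-written regular expressions, and the map is a small adjacency structure that links detected bias types. `BIAS_CUES`, `BIAS_LINKS`, `detect_cues`, and `build_bias_map` are hypothetical names, and the cue patterns and links are illustrative only; the paper does not describe how DeBiasMe detects or connects biases, and real detection would need far more than keyword matching.

```python
from __future__ import annotations

import re

# Hypothetical cue patterns per bias type (illustrative, not from the paper).
BIAS_CUES: dict[str, list[str]] = {
    "confirmation": [r"\bprove that\b", r"\bconfirm that\b", r"\bwhy is .+ (better|worse)\b"],
    "anchoring": [r"\baround \d+", r"\bstarting from\b"],
    "automation": [r"\bjust tell me\b", r"\bgive me the answer\b"],
}

# Hypothetical relationships between bias types, used to draw edges on the map.
BIAS_LINKS: dict[str, list[str]] = {
    "confirmation": ["anchoring"],
    "anchoring": ["confirmation"],
    "automation": ["confirmation"],
}


def detect_cues(text: str) -> dict[str, list[str]]:
    """Return each bias type whose cue patterns match the text, with the matched spans."""
    hits: dict[str, list[str]] = {}
    for bias, patterns in BIAS_CUES.items():
        matches = [m.group(0) for p in patterns for m in re.finditer(p, text, re.IGNORECASE)]
        if matches:
            hits[bias] = matches
    return hits


def build_bias_map(detected: list[str]) -> dict[str, set[str]]:
    """Build an undirected adjacency map restricted to the detected bias types."""
    graph: dict[str, set[str]] = {}
    for bias in detected:
        graph.setdefault(bias, set())  # keep isolated biases visible as nodes
        for other in BIAS_LINKS.get(bias, []):
            if other in detected:
                graph[bias].add(other)
                graph.setdefault(other, set()).add(bias)
    return graph
```

In this sketch the same `detect_cues` function would run on the prompt before submission (the refinement step) and on the AI output afterward, with `build_bias_map` supplying the nodes and edges that an interactive front end could render.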

Implications and Future Directions

The paper invites stakeholders, including educators, designers, and AI developers, to consider design and evaluation methods for scaffolding mechanisms, bias visualization, and frameworks that enhance AI literacy. While DeBiasMe focuses on individual bias mitigation, collaborative learning environments call for further inquiry.

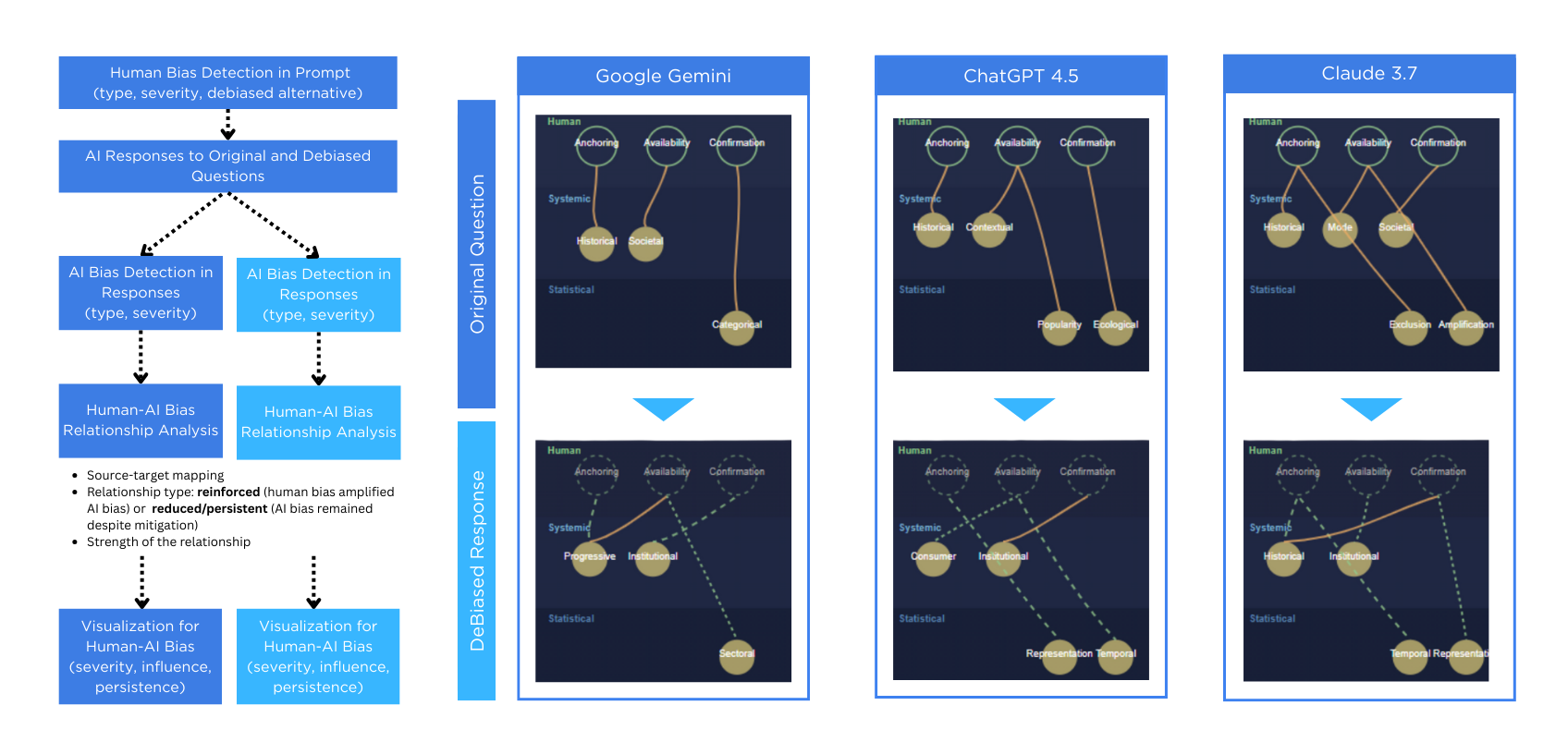

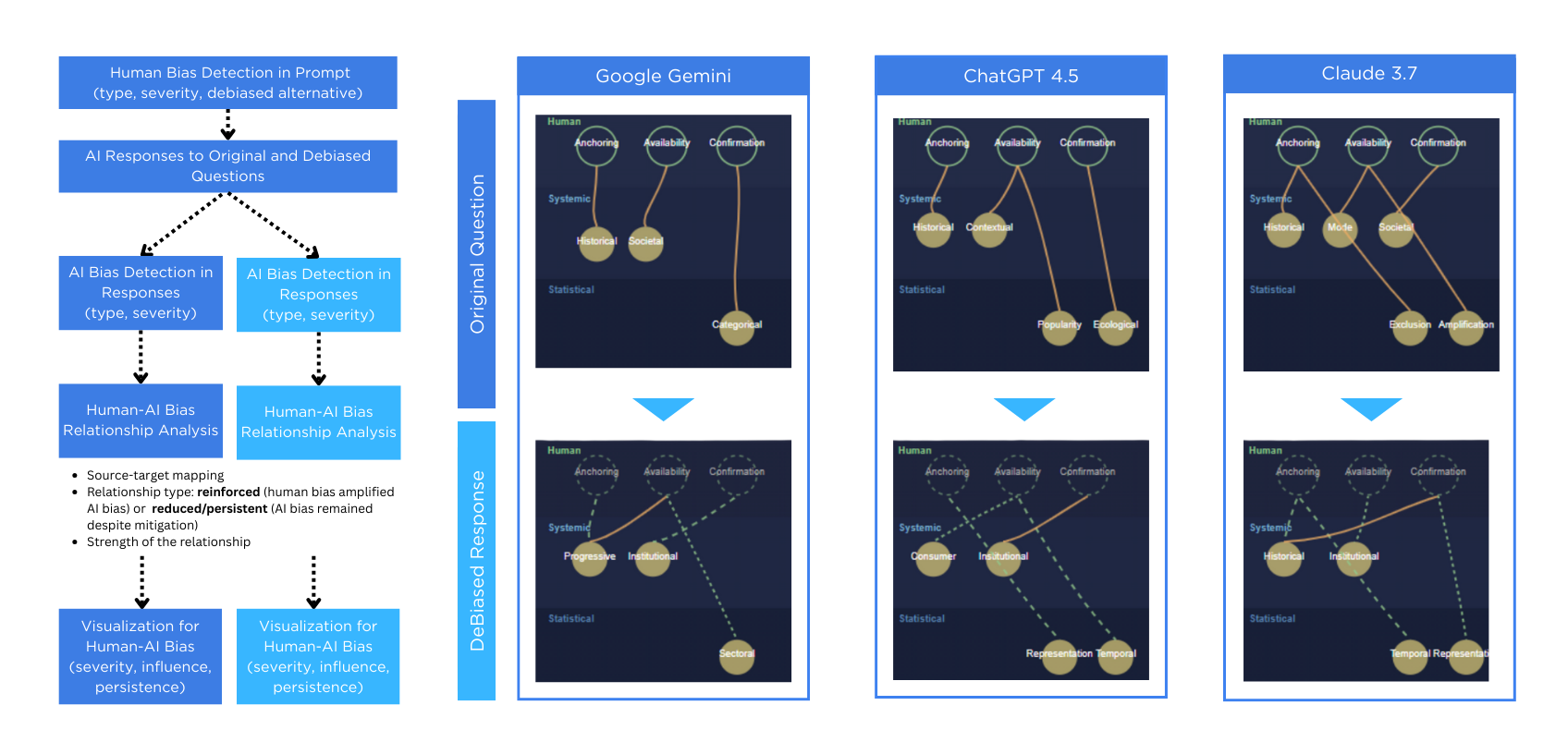

Figure 3: Developing bias visualization: a structured comparison of biased and debiased alternatives.

The paper's multidisciplinary approach enriches discourse on AI-augmented reasoning, emphasizing the epistemic and ethical dimensions central to navigating AI's role in education and broader society. The tool encourages students to critically assess AI assistance, promoting strategic cognitive augmentation rather than automated problem-solving.

Conclusion

Advancing AI literacy requires integrating metacognitive skills to support thoughtful human-AI collaboration. The DeBiasMe intervention exemplifies AI literacy frameworks that prioritize bias awareness and metacognitive competencies, enabling students to manage complex interactions effectively in AI-augmented learning environments. Future research should continue developing tools and methods that equip end users with critical thinking competencies for healthy and productive engagement with AI technologies.