- The paper introduces a neuroscience-inspired architecture that aligns AI modules with human neural processes to improve agentic reasoning.

- It employs layered sensory input, dynamic memory, and centralized reasoning modules to mimic hierarchical cognition and adaptive decision-making.

- The framework integrates neuro-symbolic learning to enhance real-time adaptability and contextual awareness in autonomous systems.

Nature's Insight: A Novel Framework and Comprehensive Analysis of Agentic Reasoning Through the Lens of Neuroscience

Introduction

The paper presents a neuroscience-inspired framework for advancing agentic reasoning in autonomous AI systems. Agentic reasoning is what lets machines move from executing predefined tasks to independently addressing complex problems and environmental uncertainty. Despite significant advances in AI, existing approaches are not grounded in a comprehensive account of biological reasoning, which combines hierarchical cognition, multimodal integration, and the dynamic interactions observed in the human brain.

Neuroscience-Inspired Framework for Agentic Reasoning

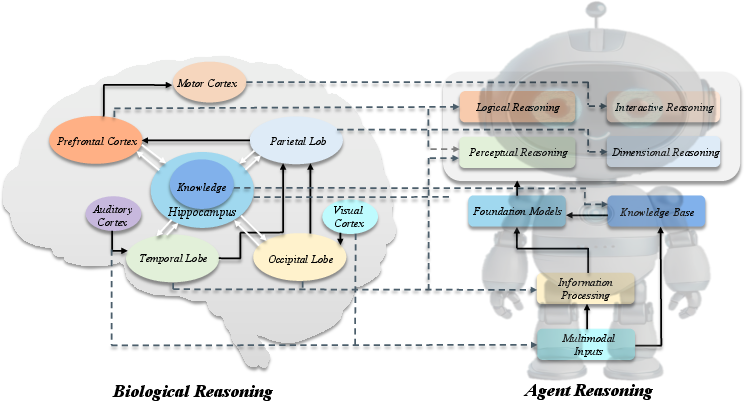

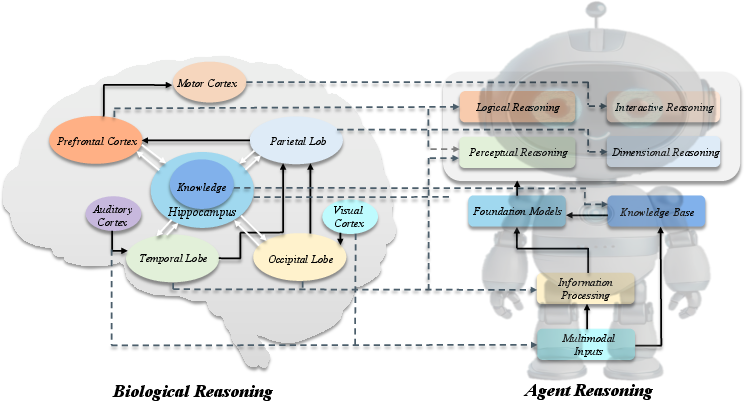

Figure 1: The proposed neuroscience-inspired framework for agentic reasoning, illustrating the mapping between human brain functions and AI agent modules.

The proposed framework draws parallels between human cognitive processes and AI agent architectures. In human cognition, sensory inputs are processed through modality-specific cortices, integrated in higher association areas, and linked with predictive coding mechanisms to facilitate decision-making (Figure 1). This biological organization inspires a corresponding AI architecture with four modules:

- Sensory Input Module: Encodes environmental data, allowing the agent to perceive complex stimuli.

- Information Processing Module: Processes input in layers, mimicking the human cortices, and uses foundation models to build a base-level understanding of it.

- Factual Memory Storage: A dynamic knowledge base analogous to the hippocampus, improving contextual awareness.

- Centralized Reasoning Module: Facilitates adaptive and context-aware decision-making, akin to the prefrontal cortex.

The framework aims to replicate the recursive and integrated nature of human reasoning, enabling AI agents to handle ambiguity and evolve through interaction.
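The four modules above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the paper's implementation: the names `FactualMemory`, `sensory_input`, `process_information`, `central_reasoning`, and `agent_step` are hypothetical, a keyword lookup stands in for a foundation model, and the traffic-light scenario is invented for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FactualMemory:
    """Dynamic knowledge base (the hippocampus analogue in the framework)."""
    facts: dict[str, str] = field(default_factory=dict)

    def store(self, key: str, value: str) -> None:
        self.facts[key] = value

    def recall(self, key: str) -> str | None:
        return self.facts.get(key)


def sensory_input(raw: str) -> list[str]:
    """Sensory Input Module: encode a raw observation as lowercase tokens."""
    return raw.lower().split()


def process_information(tokens: list[str]) -> dict[str, str]:
    """Information Processing Module: keyword stand-in for a foundation model."""
    features: dict[str, str] = {}
    if "red" in tokens:
        features["light"] = "red"
    elif "green" in tokens:
        features["light"] = "green"
    return features


def central_reasoning(features: dict[str, str], memory: FactualMemory) -> str:
    """Centralized Reasoning Module: combine the current percept with memory."""
    light = features.get("light") or memory.recall("light")
    if light is None:
        return "wait"  # nothing perceived and nothing remembered
    return "stop" if light == "red" else "go"


def agent_step(raw: str, memory: FactualMemory) -> str:
    """Run one perceive-process-reason cycle, then update memory."""
    features = process_information(sensory_input(raw))
    action = central_reasoning(features, memory)
    for key, value in features.items():
        memory.store(key, value)
    return action


memory = FactualMemory()
for observation in ["fog everywhere", "The light is RED", "fog everywhere"]:
    print(observation, "->", agent_step(observation, memory))
```

The third observation carries no usable percept, so the decision falls back on what memory holds from the previous step. That fallback is a toy version of the contextual awareness the framework attributes to factual memory.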

Classification and Implementation of Reasoning Behaviors

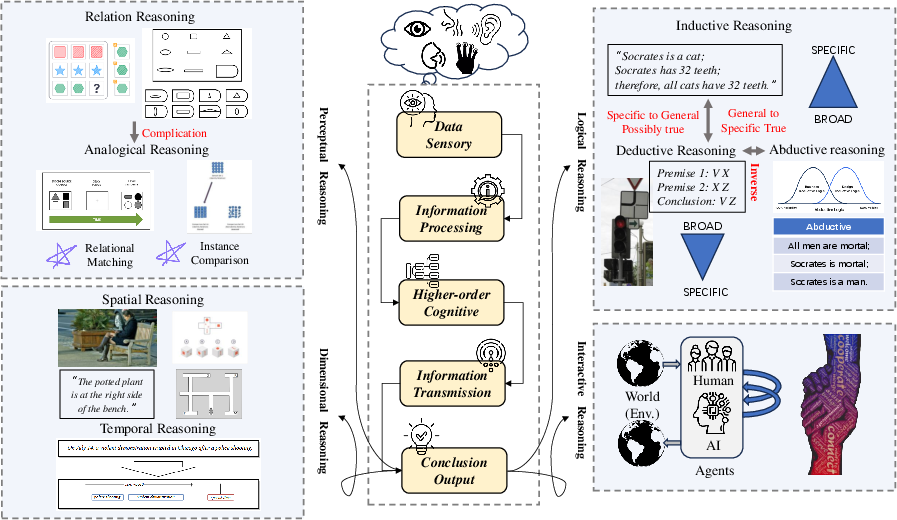

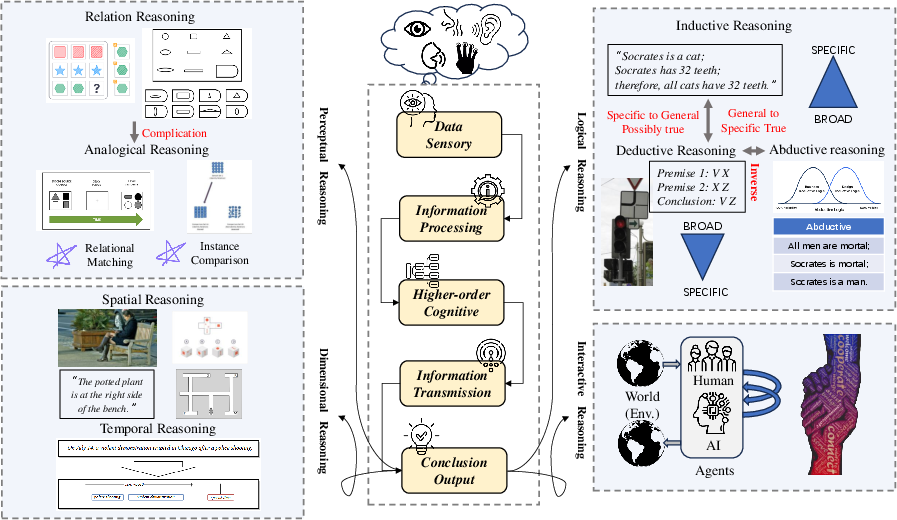

Figure 2: Overview of reasoning processes and behavior classification from a neuro-perspective, emphasizing multimodal integration and higher-order cognition.

The paper groups reasoning behaviors into five categories, each serving a different cognitive function (Figure 2):

- Perceptual Reasoning: Engages multisensory perception for pattern recognition, with AI systems mimicking human analogy detection and relational matching.

- Dimensional Reasoning: Integrates spatial and temporal inference; AI employs topological and hierarchical reasoning, reflecting parietal lobe processes.

- Relational Reasoning: Involves analogical thinking, guiding logical relational operations in AI systems.

- Logical Reasoning: Encompasses inductive, deductive, and abductive logic, facilitating structured thought processes.

- Interactive Reasoning: Focuses on collaboration and dynamic interaction, crucial for social and autonomous agent systems.

This taxonomy gives a comprehensive view of how biological inspiration can be translated into computational implementations, and it marks out domains for further research and application.
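To make the logical reasoning category concrete, the sketch below contrasts deduction, induction, and abduction on toy data. It is an illustration only: the functions `deduce`, `induce`, and `abduce`, the rule format, and the example facts are assumptions made for this example and do not come from the paper.

```python
from __future__ import annotations

Rule = tuple[frozenset[str], str]  # (premises, conclusion)


def deduce(facts: set[str], rules: list[Rule]) -> set[str]:
    """Deduction: forward-chain until no rule adds a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


def induce(observations: list[tuple[str, str]]) -> dict[str, str]:
    """Induction: generalize 'every X is Y' when all observed X share Y."""
    seen: dict[str, set[str]] = {}
    for category, prop in observations:
        seen.setdefault(category, set()).add(prop)
    return {c: next(iter(p)) for c, p in seen.items() if len(p) == 1}


def abduce(observation: str, rules: list[Rule]) -> list[set[str]]:
    """Abduction: list premise sets that would explain the observation."""
    return [set(p) for p, conclusion in rules if conclusion == observation]


rules: list[Rule] = [
    (frozenset({"rain"}), "wet_grass"),
    (frozenset({"sprinkler"}), "wet_grass"),
    (frozenset({"wet_grass"}), "slippery"),
]

print(deduce({"rain"}, rules))
print(induce([("swan", "white"), ("swan", "white"), ("cat", "black"), ("cat", "white")]))
print(abduce("wet_grass", rules))
```

Deduction yields conclusions that must hold given the rules, induction proposes a generalization that later observations could overturn, and abduction returns candidate explanations without choosing among them.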

Neurosymbolic Integration and Future Innovations

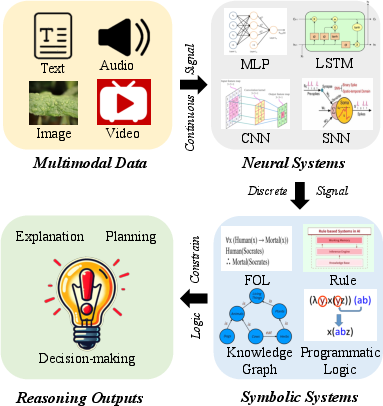

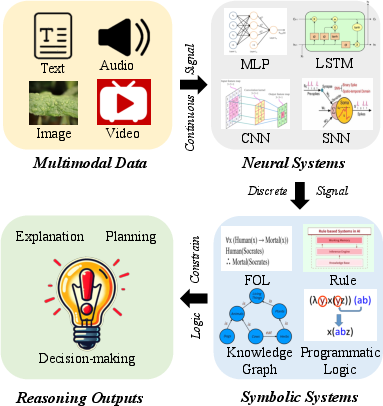

The paper posits that current AI models predominantly operate under static architectures and lack the real-time adaptive refinement typical of human cognition. It argues that integrating neuro-symbolic learning systems is necessary to overcome this limitation.

Figure 3: Neuro-symbolic learning process, leveraging multimodal signals processed via neural systems for logical reasoning.

Neuro-symbolic systems could enhance adaptability by learning structured representations directly from sensory data and applying logical operations to them to derive reasoning outputs. This hybrid approach balances pattern recognition with rigorous logical inference, bringing AI closer to human-like reasoning.
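The two-stage idea can be sketched as a neural stage that turns raw signals into symbols, followed by a symbolic stage that applies rules to them. This is a simplified illustration under assumptions: the hand-set `WEIGHTS` stand in for a trained perception model (nothing is learned here), and the predicates, rules, and function names are hypothetical rather than taken from the paper.

```python
import math

# Hypothetical hand-set (weights, bias) pairs over [proximity, brightness],
# standing in for a trained neural perception model.
WEIGHTS = {
    "obstacle_ahead": ([4.0, 0.0], -2.0),
    "is_dark": ([0.0, -4.0], 2.0),
}

# Hypothetical symbolic rules: (premises, conclusion).
RULES = [
    ({"obstacle_ahead"}, "brake"),
    ({"is_dark"}, "lights_on"),
    ({"brake", "lights_on"}, "hazard_mode"),
]


def perceive(signal):
    """Neural stage: score each predicate with a single logistic unit."""
    confidences = {}
    for predicate, (weights, bias) in WEIGHTS.items():
        z = sum(w * x for w, x in zip(weights, signal)) + bias
        confidences[predicate] = 1.0 / (1.0 + math.exp(-z))
    return confidences


def symbolize(confidences, threshold=0.5):
    """Bridge: keep predicates whose confidence exceeds the threshold."""
    return {p for p, c in confidences.items() if c > threshold}


def reason(symbols):
    """Symbolic stage: forward-chain over RULES until nothing new is derived."""
    known = set(symbols)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in RULES:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


signal = [0.9, 0.1]  # close object, low brightness
print(sorted(reason(symbolize(perceive(signal)))))
```

Keeping the threshold step separate from the rule engine makes the hand-off between pattern recognition and logical inference explicit. In a system closer to what the summary describes, the perception weights would be learned from sensory data rather than fixed by hand.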


Conclusion

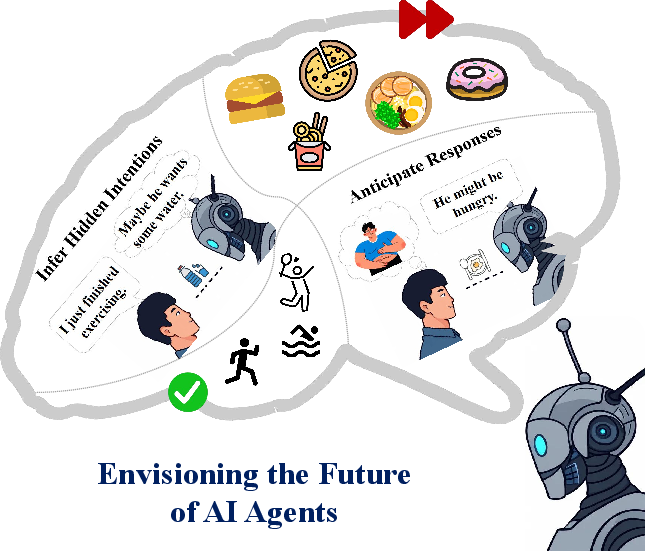

In sum, the paper offers a foundational and pragmatic framework for advancing agentic reasoning by bridging cognitive neuroscience and artificial intelligence. It argues that by mimicking neural mechanisms, AI can achieve more generalizable, adaptive, and cognitively aligned reasoning, which is pivotal for autonomous systems in both physical and virtual environments. Future research is expected to explore the integration of neuro-symbolic approaches and dynamic reasoning models, with emphasis on adaptability, sensing, and cognitive interaction.