- The paper finds that memory management, specifically selective memory addition and history-based deletion, strongly influences the long-term performance of LLM agents.

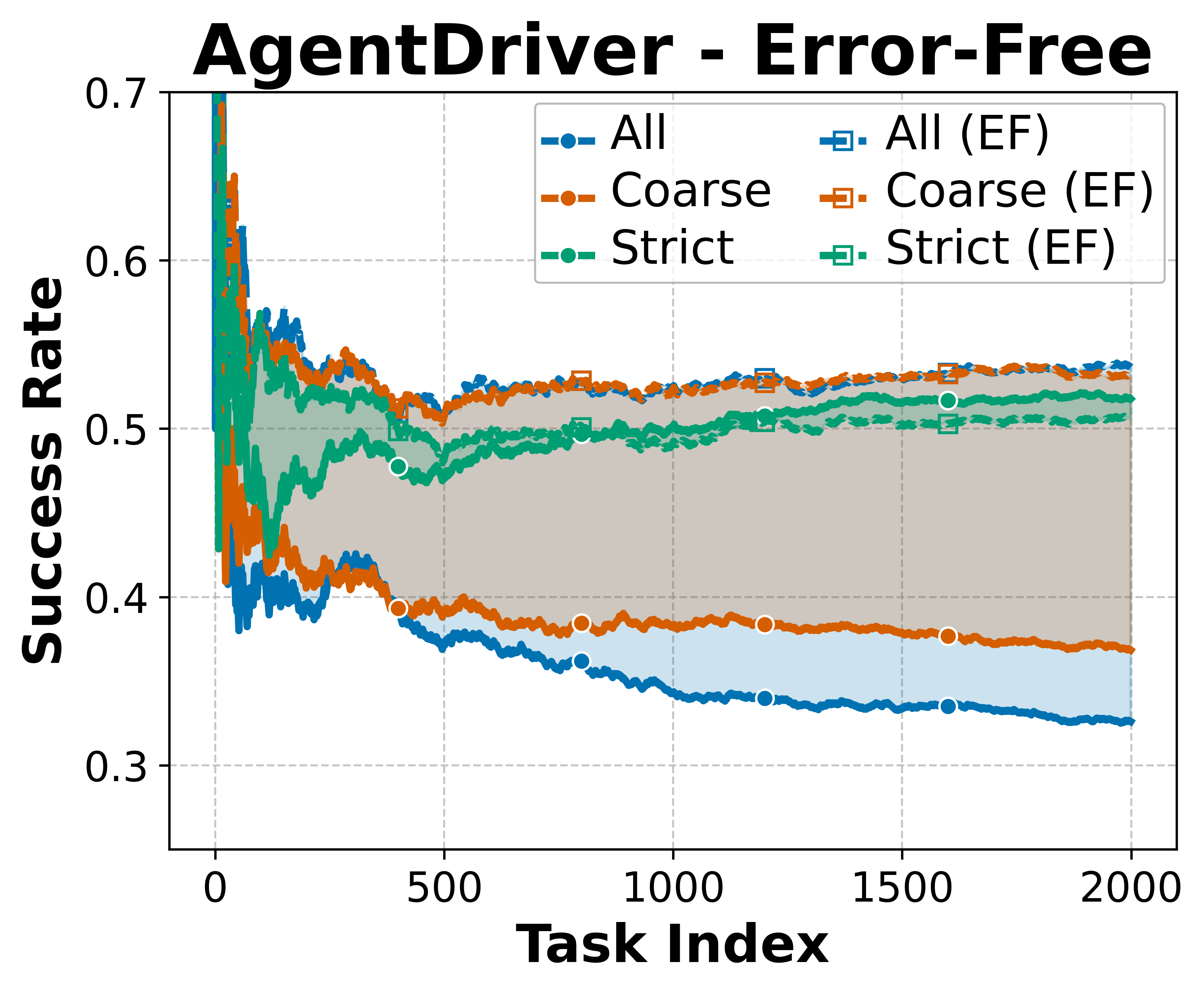

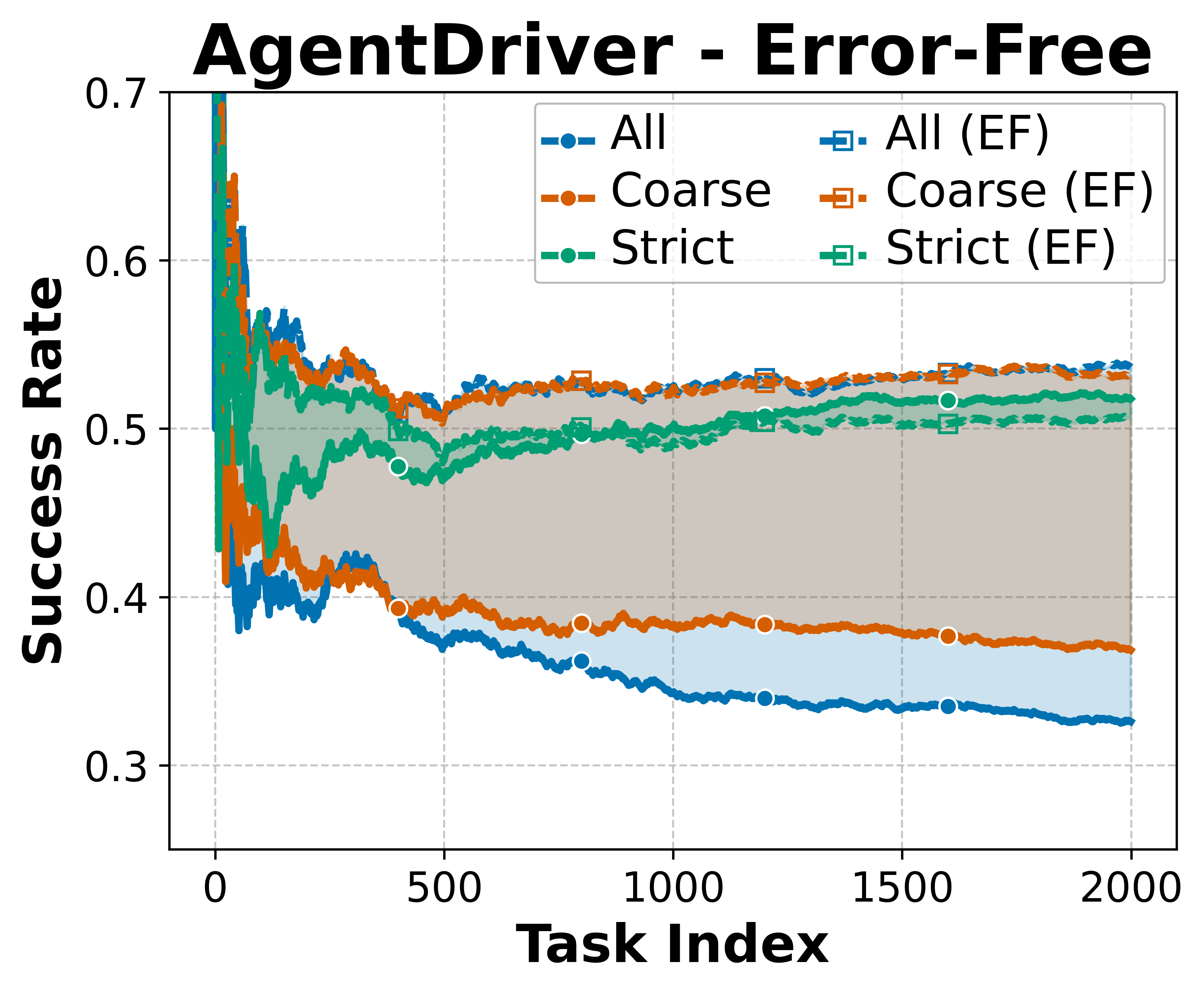

- The study runs controlled experiments that compare evaluators, showing that strict and coarse evaluators yield distinct self-improvement patterns.

- The results demonstrate that strategic memory deletion combined with quality memory addition mitigates error propagation and misaligned experience replay in agents.

How Memory Management Impacts LLM Agents: An Empirical Study of Experience-Following Behavior

Introduction

The paper "How Memory Management Impacts LLM Agents: An Empirical Study of Experience-Following Behavior" examines how memory management choices affect the long-term performance of LLM-based agents. It focuses on two fundamental operations: memory addition and memory deletion. The study identifies the experience-following property of LLM agents and addresses two challenges that follow from it: error propagation and misaligned experience replay. The analysis draws on controlled experiments across several agent architectures and tasks, and it offers practical guidance for designing memory systems that support robust agent performance.

Memory Dynamics and Behavioral Insights

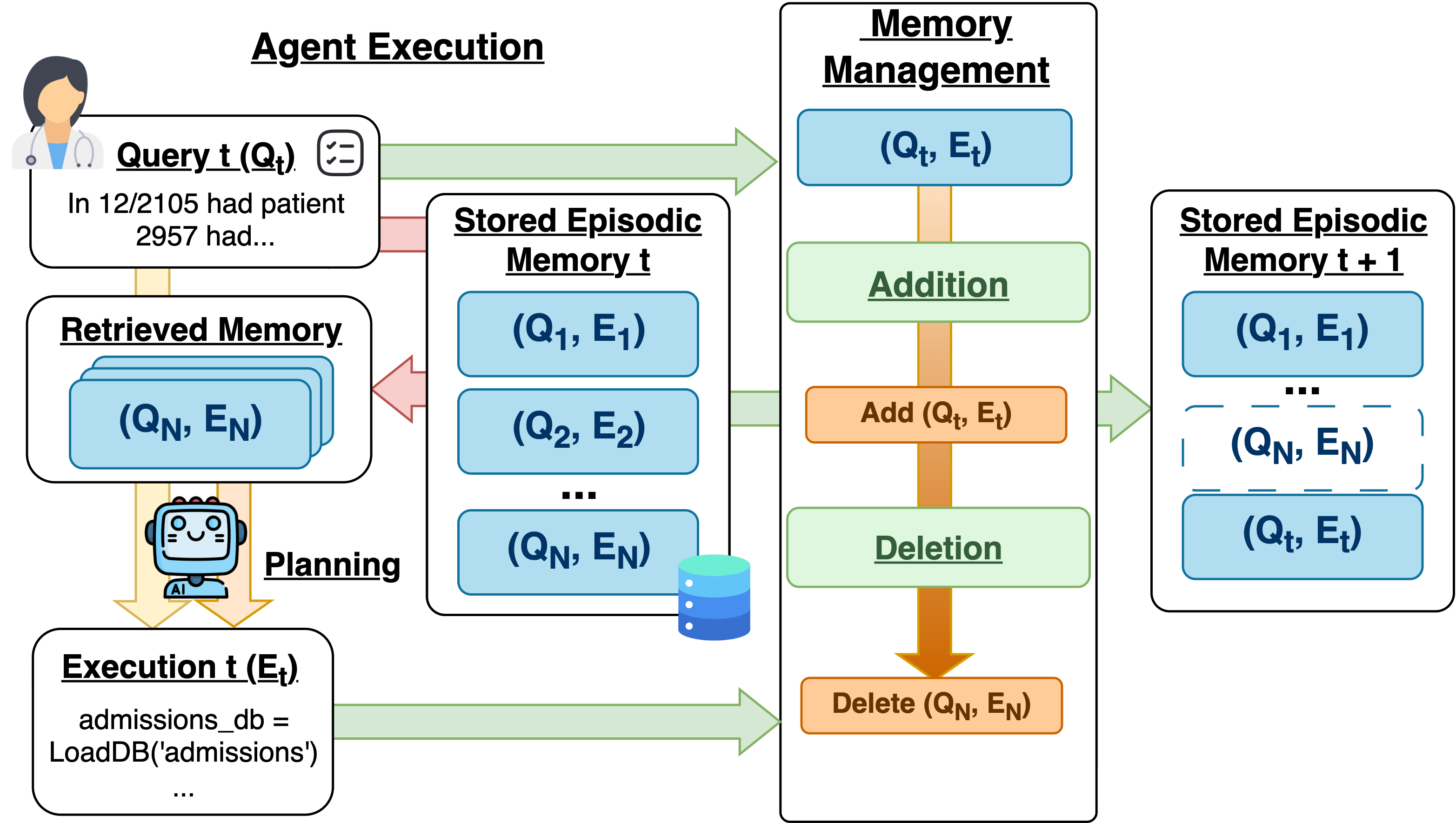

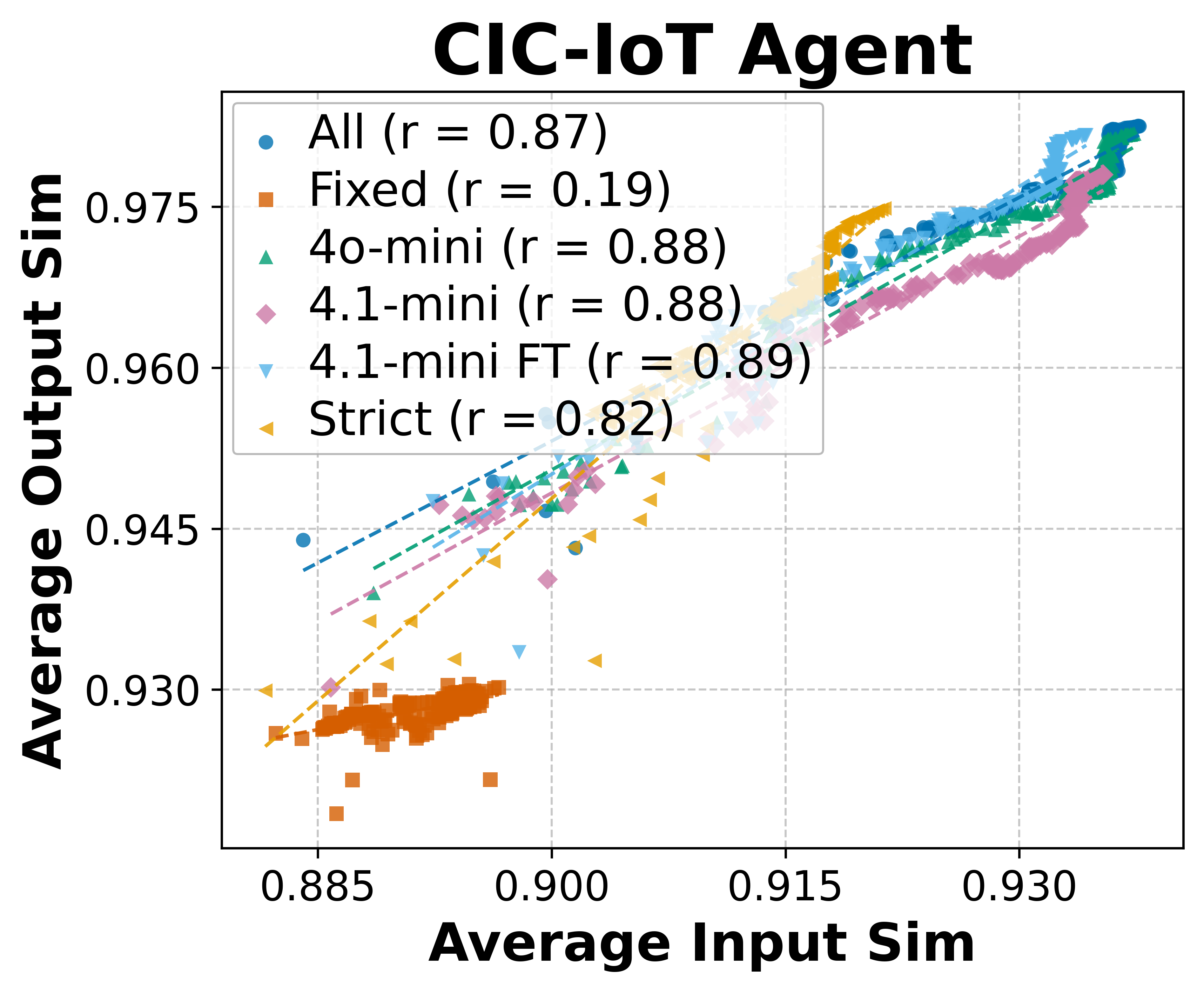

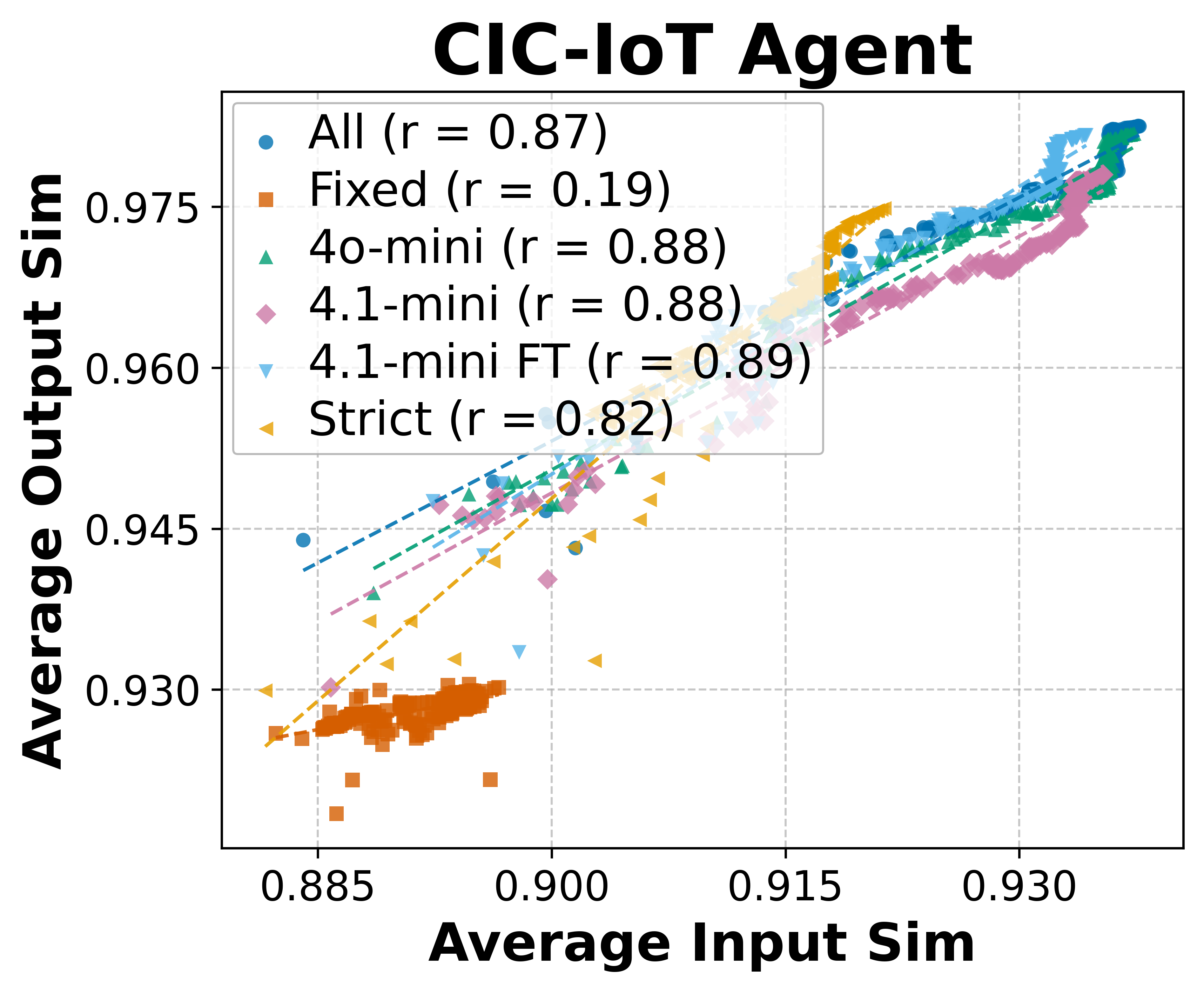

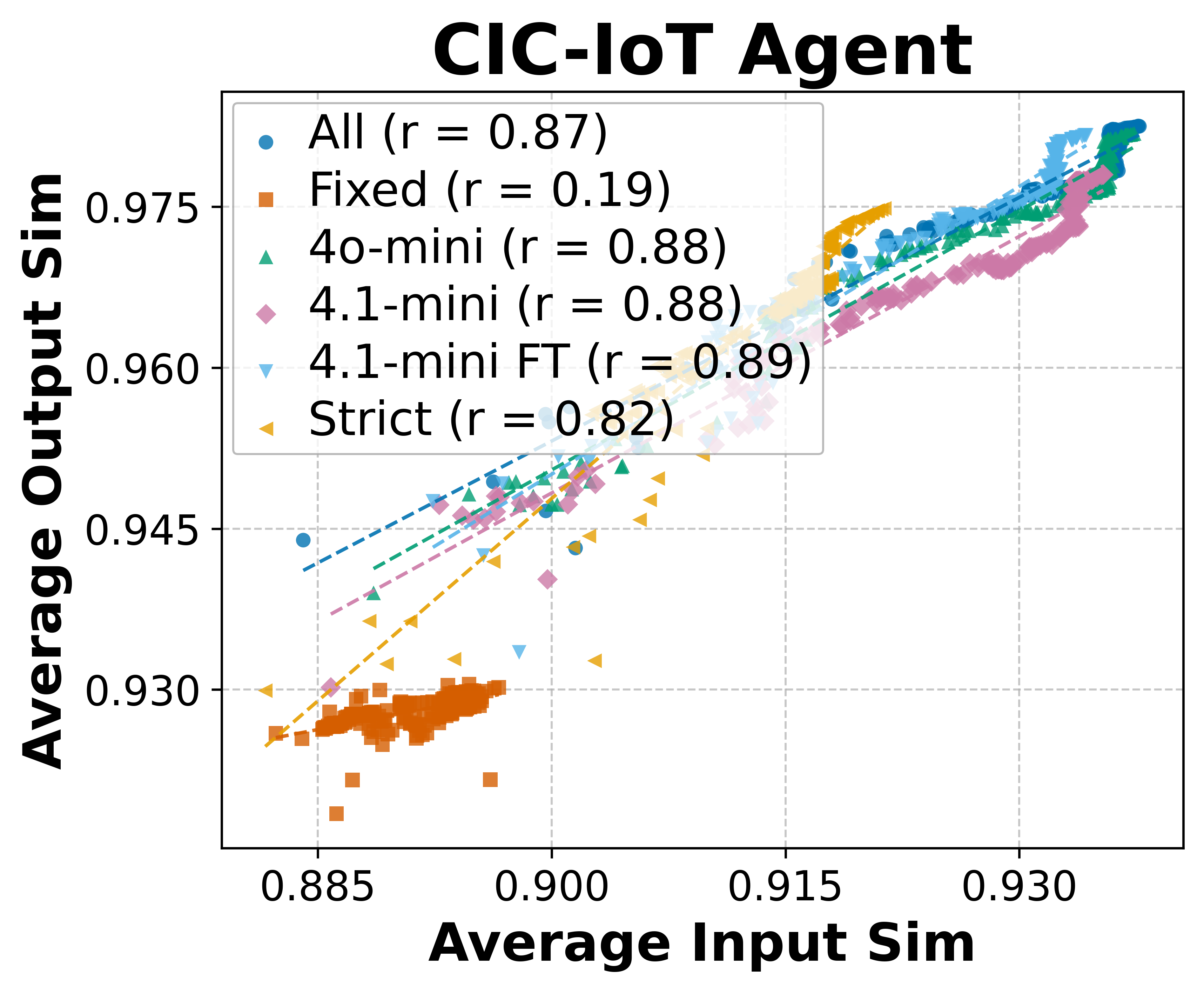

Memory in an LLM agent changes continually as records are added and deleted. The paper identifies a key property called experience-following: high similarity between a new task input and a retrieved memory input tends to go with high similarity between the agent's new output and the retrieved output. In other words, LLM agents tend to replicate stored outputs when they face similar tasks. This property creates two challenges. The first is error propagation, where a flawed record is retrieved, imitated, and so carries its errors into later executions. The second is misaligned experience replay, where some prior executions offer negligible or misleading value when used as demonstrations for new tasks.
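One way to make the experience-following property concrete is to correlate input similarity with output similarity over a set of executions. The sketch below is illustrative only: it assumes inputs and outputs have already been turned into numeric vectors by some embedding step, and the function names (`cosine`, `pearson`, `experience_following_score`) are hypothetical and not taken from the paper, whose exact similarity metrics are not described in this summary.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y) if spread_x and spread_y else 0.0


def experience_following_score(executions):
    """Correlate input similarity with output similarity.

    Each item in `executions` is a 4-tuple of vectors (an assumed layout):
    (new_input, retrieved_input, new_output, retrieved_output).
    """
    input_sims = [cosine(q, rq) for q, rq, _, _ in executions]
    output_sims = [cosine(o, ro) for _, _, o, ro in executions]
    return pearson(input_sims, output_sims)
```

A score near 1 on real execution logs would be consistent with experience-following; a score near 0 would suggest that retrieved demonstrations have little influence on the outputs.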

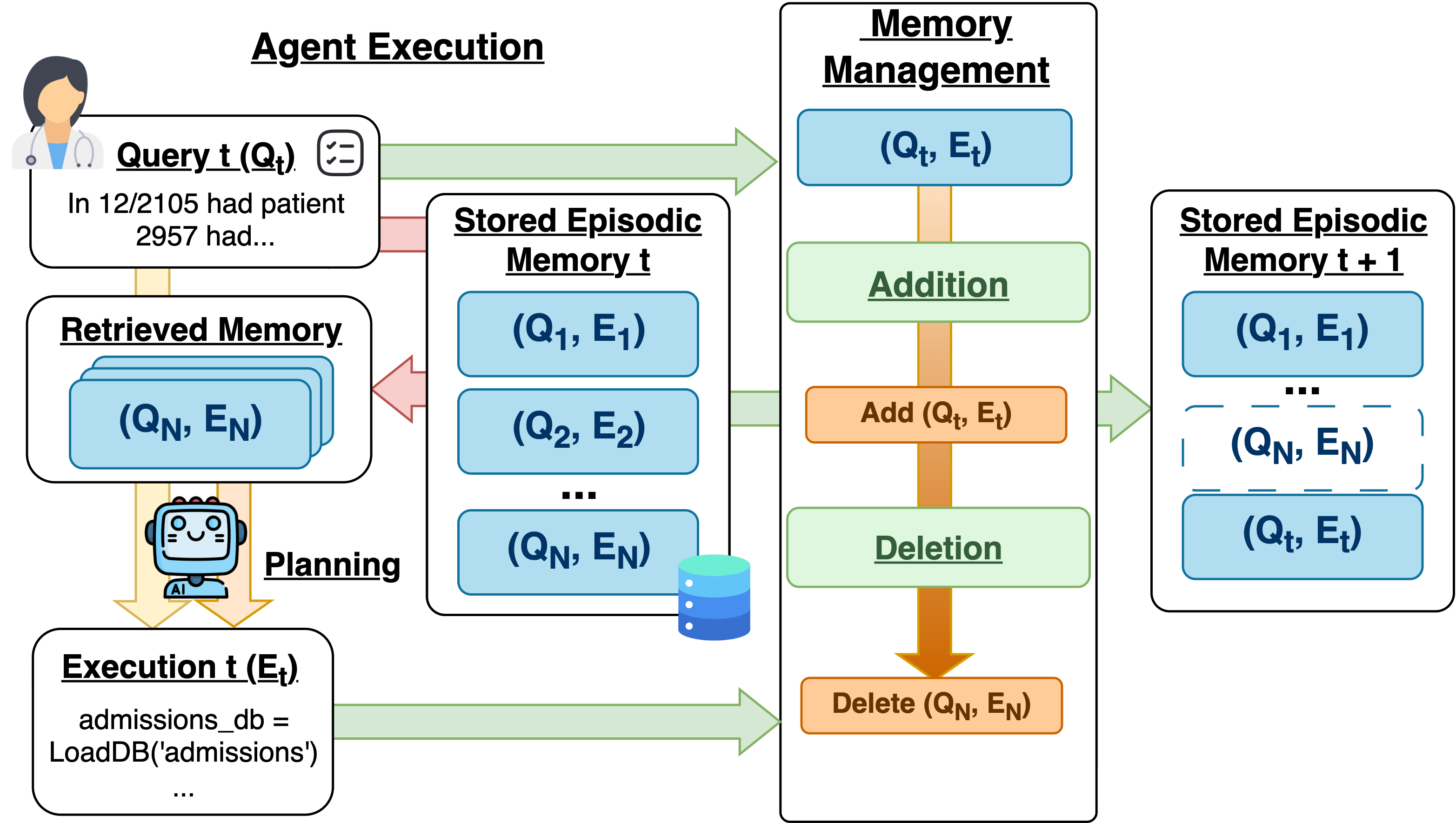

Figure 1: Illustration of the memory management workflow after each agent execution.
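The workflow in Figure 1 can be approximated by a single step function: retrieve the most similar record, run the agent with it as a demonstration, then decide whether to store the new execution. This is a minimal sketch under stated assumptions, not the paper's implementation. Word-overlap (Jaccard) similarity stands in for whatever retriever the agents actually use, and `run_step`, `agent`, and `evaluator` are hypothetical names.

```python
from typing import Callable, List, Optional, Tuple

Record = Tuple[str, str]  # (task query, agent output)


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity; a stand-in for an embedding-based retriever."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    union = words_a | words_b
    return len(words_a & words_b) / len(union) if union else 0.0


def run_step(
    memory: List[Record],
    query: str,
    agent: Callable[[str, Optional[Record]], str],
    evaluator: Callable[[str, str], bool],
) -> str:
    """One execution followed by one memory-addition decision."""
    # Retrieve the stored record whose query is most similar to the new one.
    demo = max(memory, key=lambda rec: jaccard(query, rec[0]), default=None)
    # The agent sees the demonstration; experience-following means its
    # output tends to resemble demo[1] when the queries are similar.
    output = agent(query, demo)
    # Selective addition: store the execution only if the evaluator accepts it.
    if evaluator(query, output):
        memory.append((query, output))
    return output
```

With an evaluator that accepts everything, a single bad record can be retrieved, imitated, and stored again, which is the error-propagation loop described above.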

Memory Addition

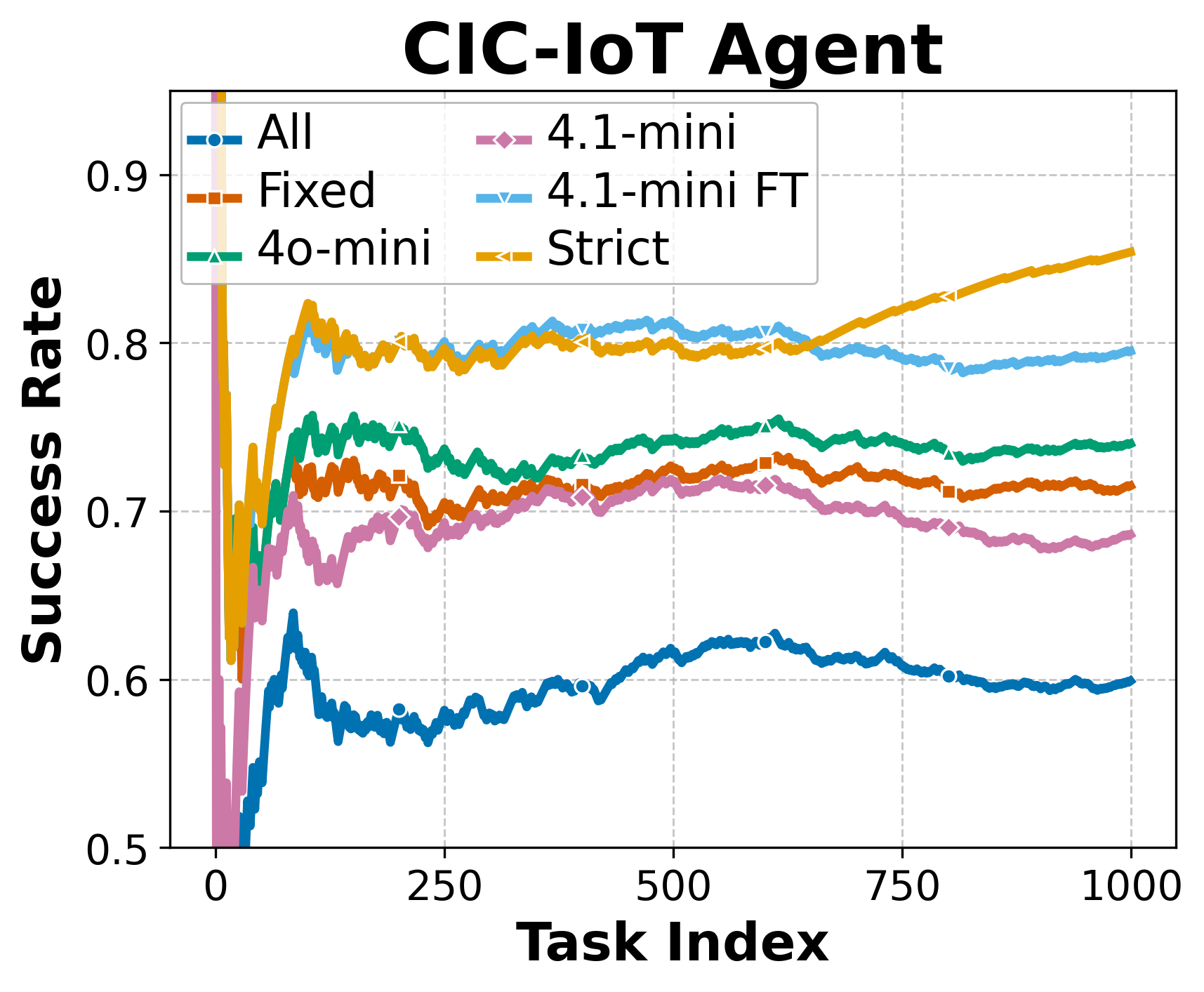

Memory addition determines whether a completed task's execution should be stored in the memory bank. The paper explores different strategies for this, ranging from adding all task records to selective approaches based on automatic or human evaluations. It reveals that execution quality coupled with memory size significantly influences long-term agent performance (Table 1).

Methodologies

The paper evaluates multiple memory addition strategies:

- Add-All Approach: Stores every task execution, regardless of quality.

- Selective Addition: Stores an execution only if an evaluator judges it to be of sufficient quality. The choice of evaluator, automatic (coarse) or human (strict), significantly affects outcomes.

- Fixed-Memory Baseline: Keeps the initial memory unchanged and adds nothing.
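The three strategies above differ only in the rule that decides whether a finished execution enters memory, which a short sketch can make explicit. The class and function names here (`Record`, `MemoryBank`, `add_all`, `selective_add`, `fixed_memory`) are hypothetical, and the evaluator is modeled as a plain callable returning a boolean, which is an assumption about its interface rather than the paper's design.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Record:
    query: str
    output: str


@dataclass
class MemoryBank:
    records: List[Record] = field(default_factory=list)


def add_all(memory: MemoryBank, record: Record) -> bool:
    """Add-all: store every execution, good or bad."""
    memory.records.append(record)
    return True


def selective_add(
    memory: MemoryBank,
    record: Record,
    evaluator: Callable[[Record], bool],
) -> bool:
    """Selective addition: store the record only if the evaluator accepts it.

    A strict evaluator (for example, a human check) and a coarse one
    (for example, an LLM judge) plug in through the same callable.
    """
    if evaluator(record):
        memory.records.append(record)
        return True
    return False


def fixed_memory(memory: MemoryBank, record: Record) -> bool:
    """Fixed-memory baseline: never add anything to the initial memory."""
    return False
```

Keeping the evaluator as a parameter means a strict and a coarse evaluator can be compared on the same agent without changing the surrounding code.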

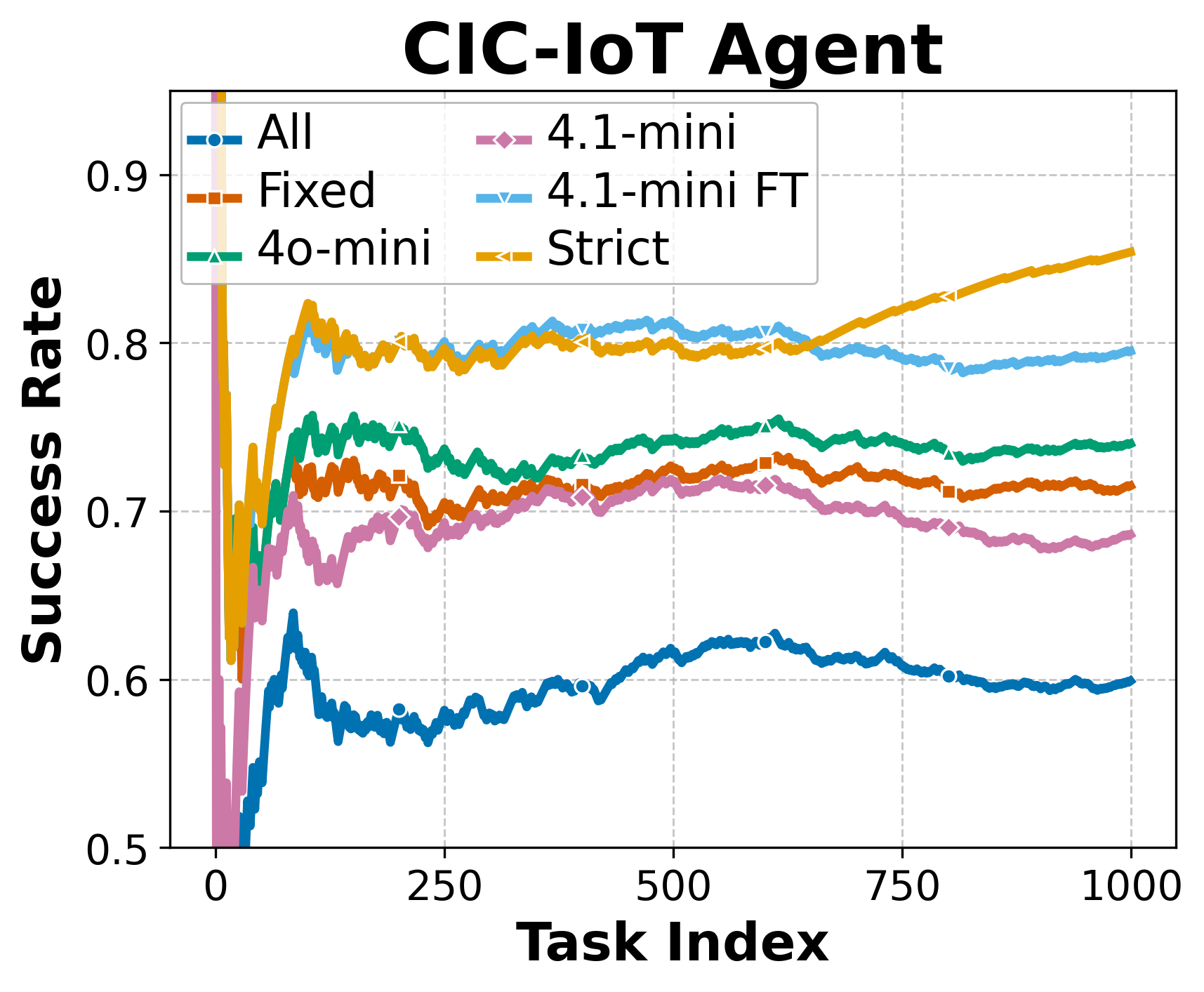

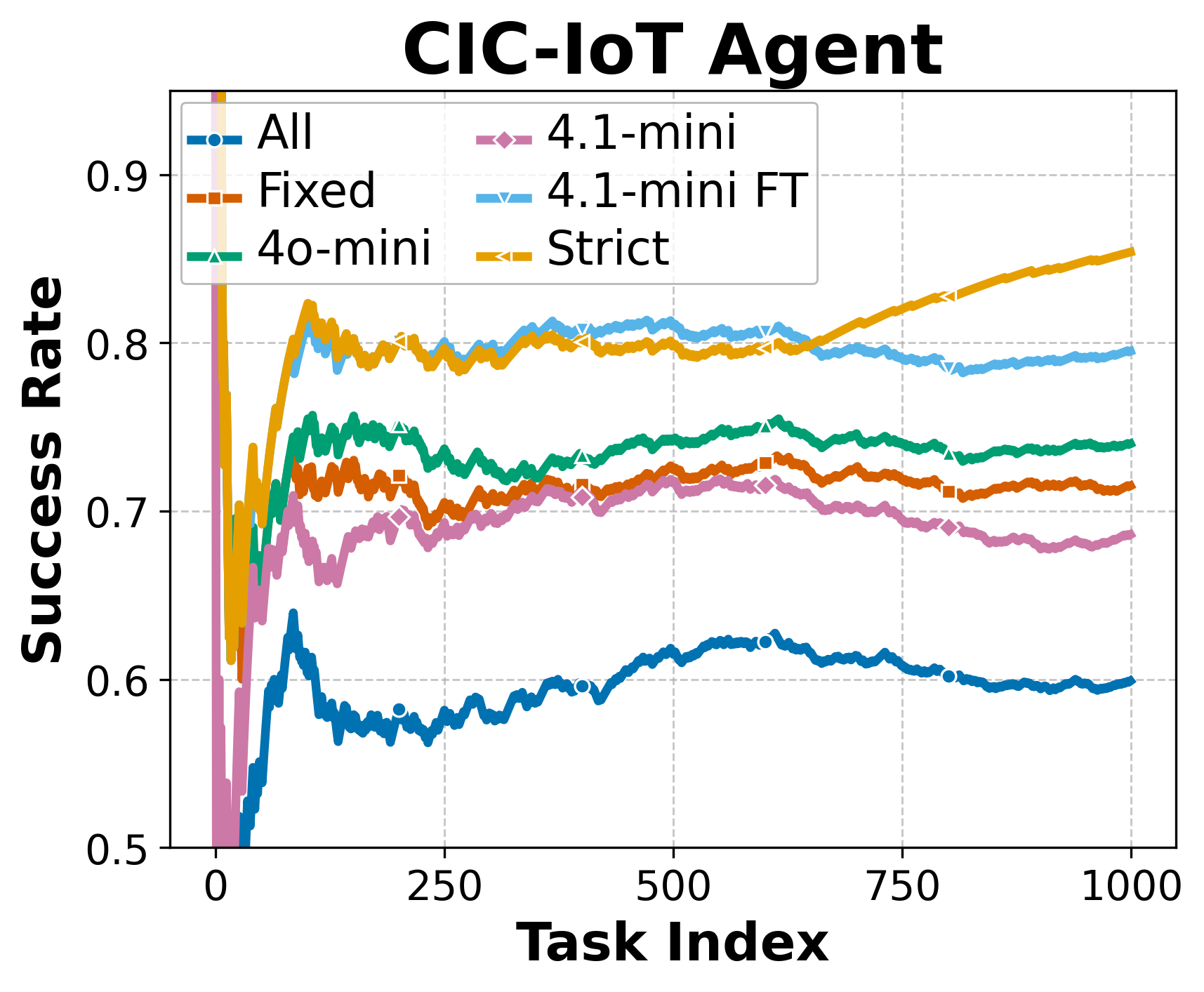

Figure 2: Performance trend for EHRAgent and AgentDriver. 4o-mini, 4.1-mini, and 4.1-mini FT denote different coarse evaluators from the GPT series. Both the strict evaluator and some coarse evaluators exhibit consistent self-improvement over time.

Memory Deletion

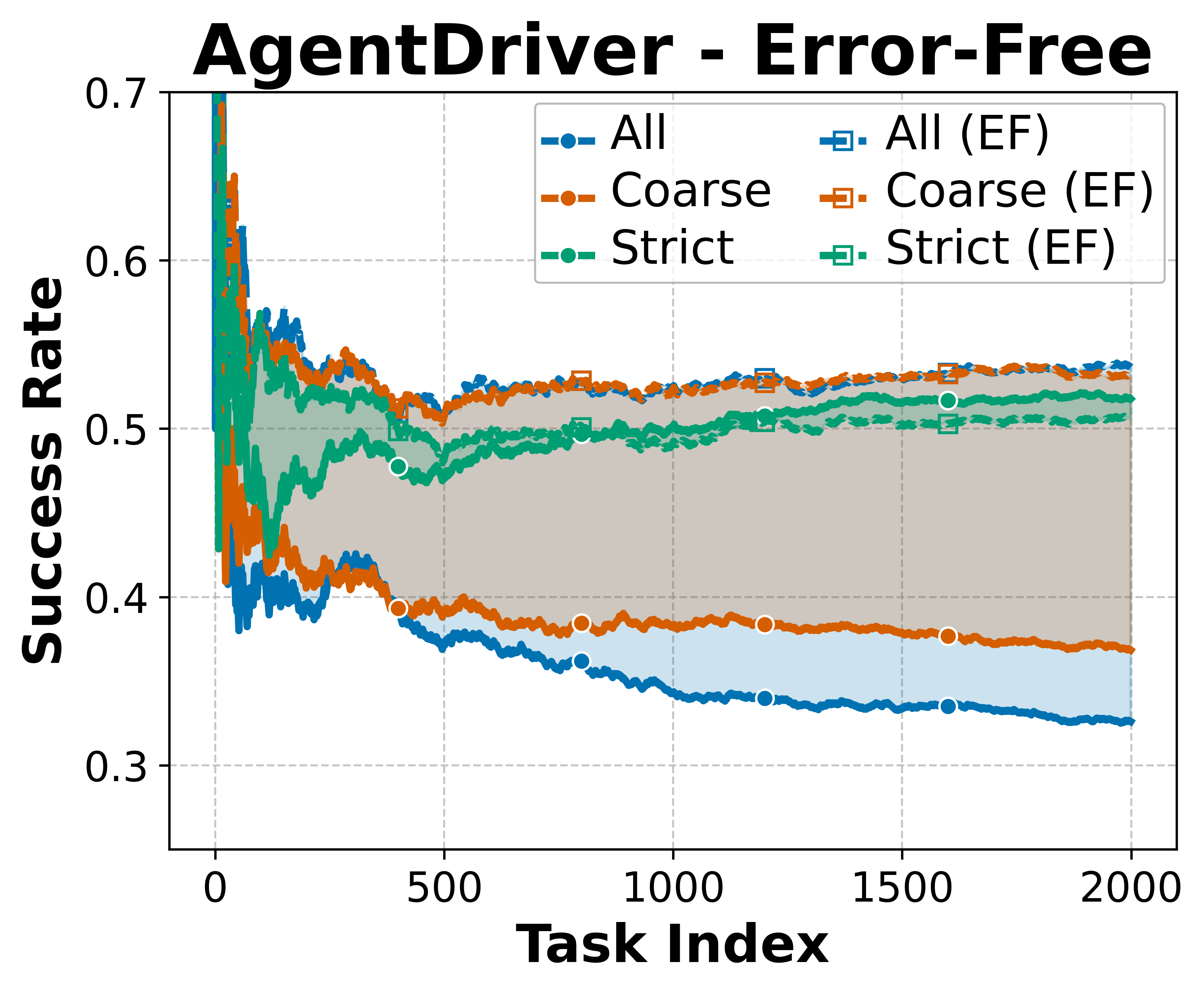

Memory deletion is essential due to storage constraints and involves removing memory entries deemed ineffective or redundant. The paper examines three strategies:

- Periodic Deletion: Removes records according to their retrieval statistics over a period of time.

- History-Based Deletion: Removes records according to how useful they proved when retrieved in the past.

- Combined Approach: Combines retrieval statistics with the utility evaluator's judgments of past usefulness.
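The deletion strategies above can be sketched as filters over the memory bank. The thresholds and field names below (`retrievals_in_period`, `utility_scores`, `min_retrievals`, `min_uses`, `min_avg_utility`) are assumptions made for illustration; this summary does not give the paper's exact criteria or parameter values.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Record:
    query: str
    output: str
    retrievals_in_period: int = 0  # times retrieved in the current period
    utility_scores: List[float] = field(default_factory=list)  # one per past retrieval


def periodic_deletion(records: List[Record], min_retrievals: int) -> List[Record]:
    """Keep only records retrieved at least `min_retrievals` times this period."""
    return [r for r in records if r.retrievals_in_period >= min_retrievals]


def history_based_deletion(
    records: List[Record], min_uses: int, min_avg_utility: float
) -> List[Record]:
    """Drop records that were used often enough to judge and judged poorly."""
    kept = []
    for r in records:
        uses = len(r.utility_scores)
        if uses >= min_uses and sum(r.utility_scores) / uses < min_avg_utility:
            continue  # enough evidence, and the evidence says it is unhelpful
        kept.append(r)
    return kept


def combined_deletion(
    records: List[Record],
    min_retrievals: int,
    min_uses: int,
    min_avg_utility: float,
) -> List[Record]:
    """Apply the retrieval-count filter first, then the utility filter."""
    survivors = periodic_deletion(records, min_retrievals)
    return history_based_deletion(survivors, min_uses, min_avg_utility)
```

The `min_uses` guard is a design choice in this sketch: a record with only one or two retrievals has too little history to judge, so it is retained until more evidence accumulates.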

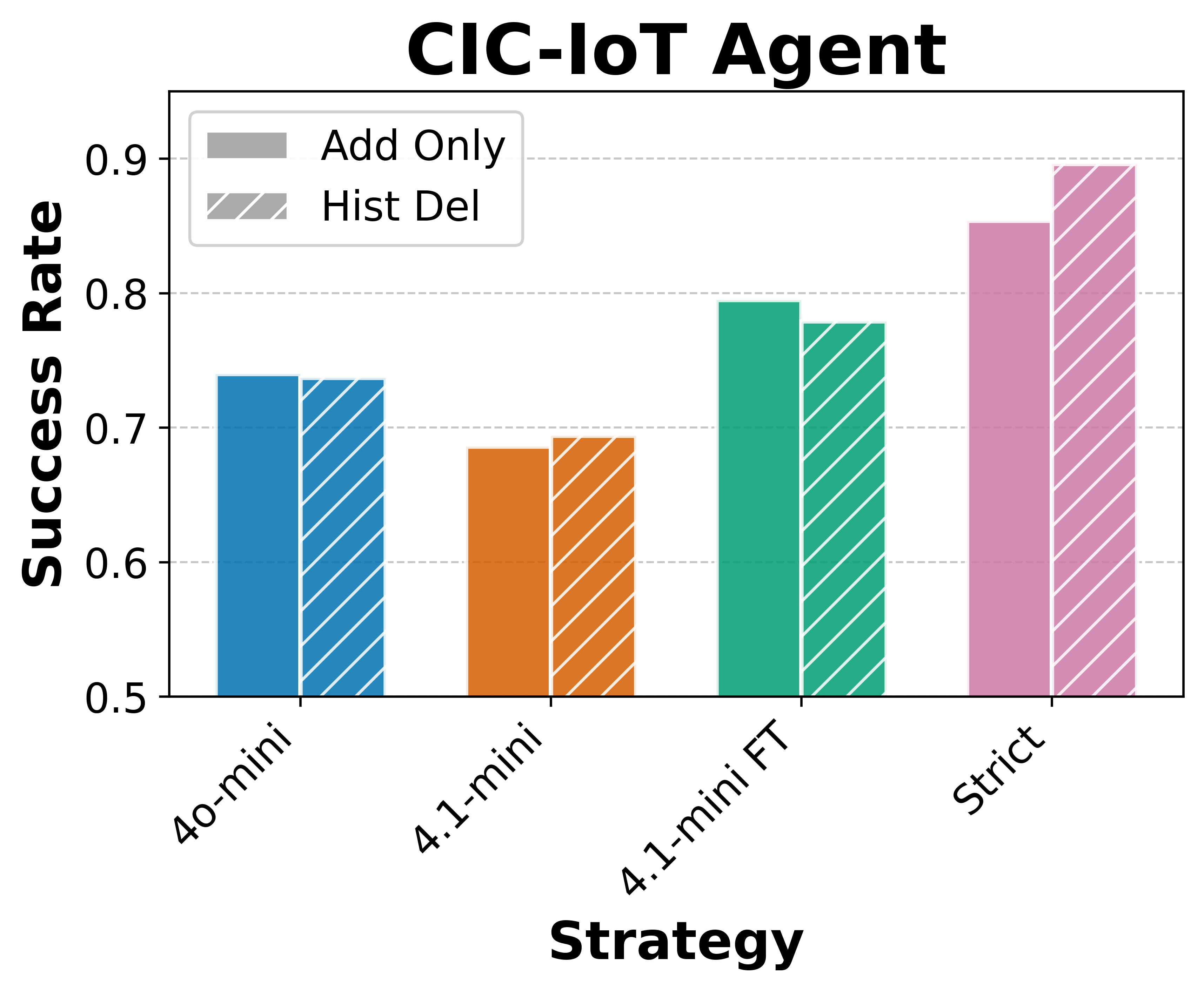

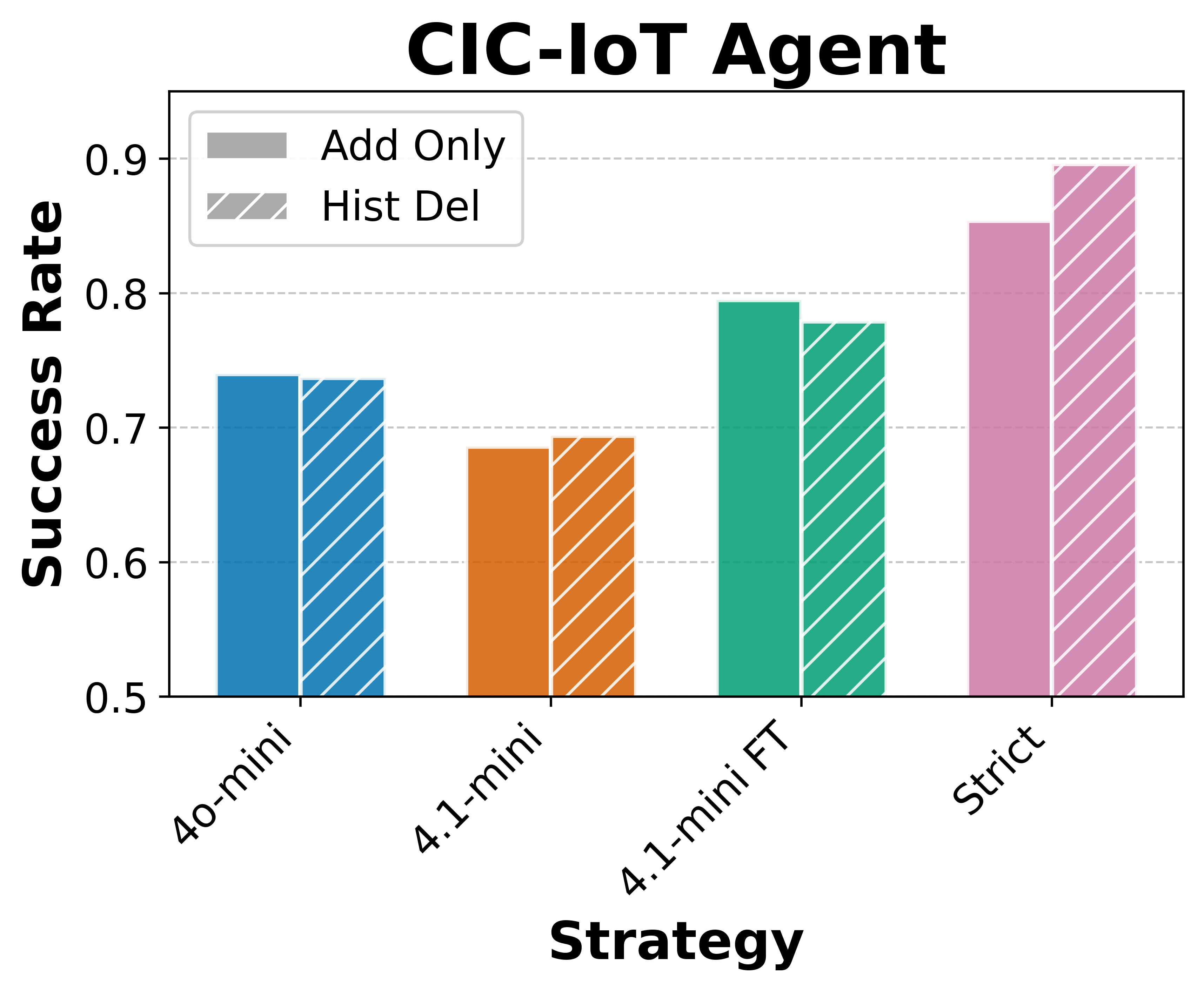

Results demonstrate that history-based deletion often enhances performance, especially when utilizing strict evaluators to maintain useful memory records.

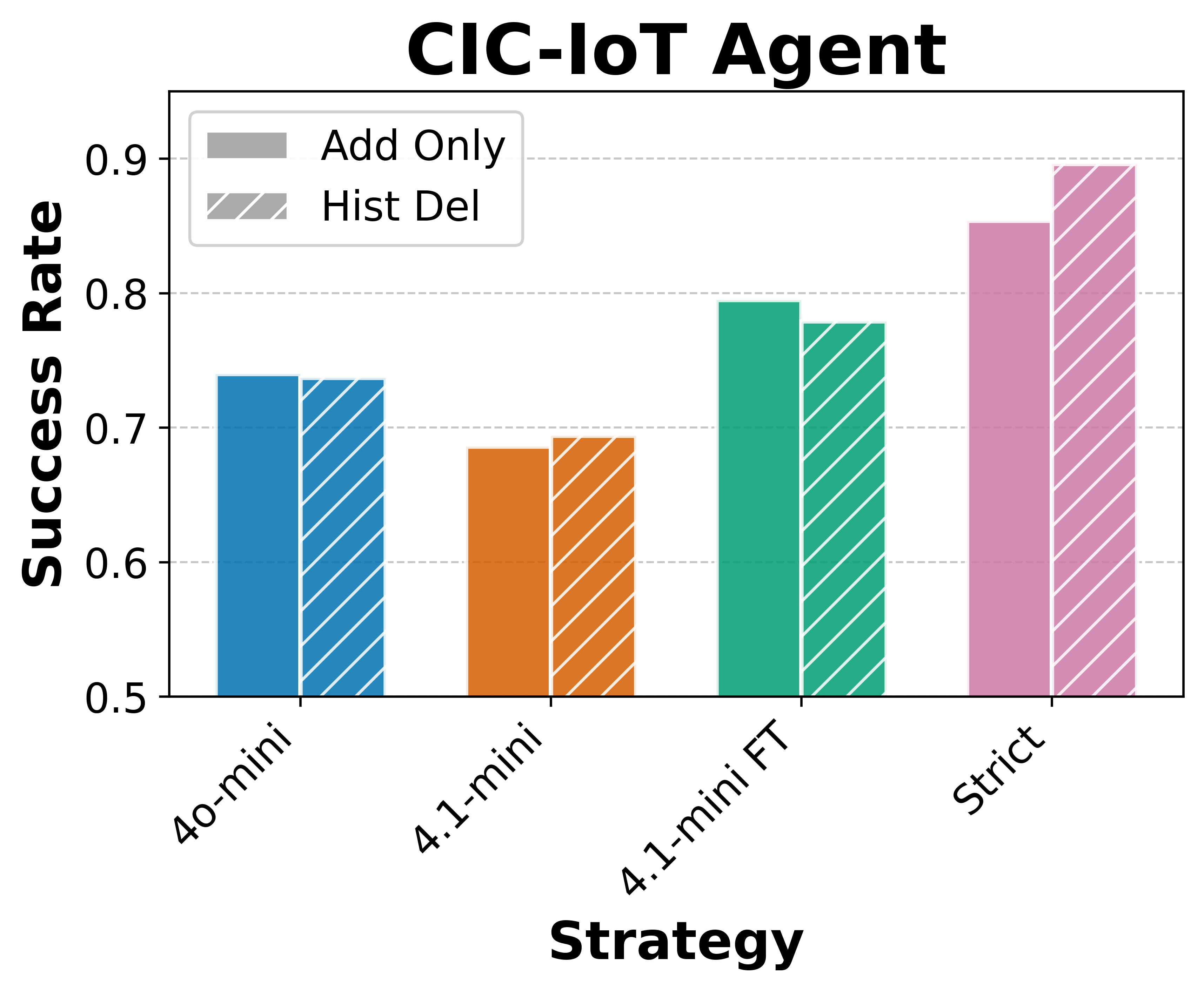

Figure 3: Performance comparison after applying history-based deletion with different evaluators.

Addressing Challenges: Error Propagation and Misaligned Replay

Error propagation and misaligned experience replay are the two main risks that follow from experience-following. As the paper demonstrates, evaluating memory records helps mitigate both: assessing execution quality before a record is stored keeps flawed executions out of memory, which improves performance on future tasks.

In history-based deletion, the utility evaluator assesses the effectiveness of past experiences, ensuring the removal of detrimental entries that might misalign with current objectives. This approach significantly reduces potential noise introduced through indiscriminate memory addition.

Figure 4: Comparison between deleted and retained records for RegAgent.

Implications and Future Directions

The empirical findings underscore the importance of sophisticated memory management strategies tailored to agentic architectures. The paper's conclusions advocate for the design of adaptive, quality-focused memory systems that leverage evaluative insights for both addition and deletion. The research suggests further exploration into automated evaluators and hybrid methods accommodating evolving task distributions.

Conclusion

The paper analyzes memory management in LLM agents and offers evidence-based strategies for improving long-term performance. By addressing error propagation and misaligned experience replay, it lays the groundwork for designing adaptive, resilient memory systems for LLM agents.