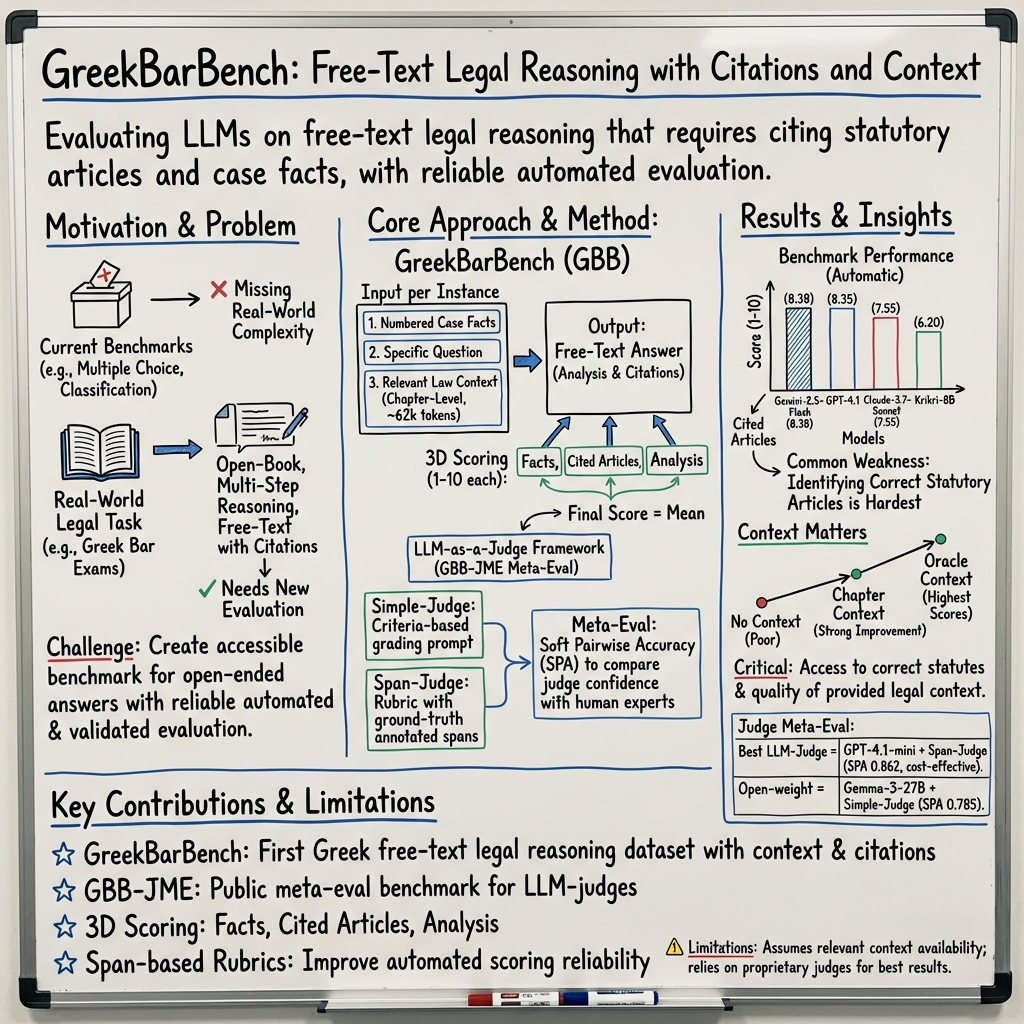

- The paper presents GreekBarBench, a new benchmark for evaluating LLMs on free-text legal reasoning and statutory citation, inspired by the Greek Bar exams.

- It employs a three-dimensional scoring system (Facts, Cited Articles, and Analysis) to mirror the complexity of real-world legal reasoning.

- An evaluation of 13 LLMs shows promising results but also notable citation errors, highlighting key areas for improvement in legal AI applications.

GreekBarBench: A Challenging Benchmark for Free-Text Legal Reasoning and Citations

Introduction

The paper "GreekBarBench: A Challenging Benchmark for Free-Text Legal Reasoning and Citations" (2505.17267) introduces GreekBarBench, a novel benchmark that evaluates the legal reasoning capabilities of large language models (LLMs) using questions inspired by the Greek Bar exams. The benchmark targets a significant gap in existing legal NLP benchmarks, which often focus on classification tasks or multiple-choice questions that do not fully capture the complexity of legal reasoning. GreekBarBench requires models not only to identify and analyze legal facts but also to cite statutory articles, pushing the boundaries of what LLMs can achieve in realistic legal scenarios.

Benchmark Design and Methodology

GreekBarBench covers five legal domains drawn from the Greek Bar exams: Civil Law, Criminal Law, Commercial Law, Public Law, and the Lawyers' Code. The questions call for open-ended answers, citation of the relevant statutory articles, and multi-hop reasoning, a skill set that mirrors the practical reasoning performed by legal professionals. The benchmark employs a novel three-dimensional scoring system covering Facts, Cited Articles, and Analysis. This methodology lets the benchmark assess separately each model's ability to comprehend the case facts, cite the correct legal statutes, and construct a valid argument.
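The three scoring dimensions can be pictured as a small record per answer. The sketch below is illustrative only: the class name `DimensionScores`, the 1 to 10 range, and the unweighted mean used to combine the dimensions are assumptions made for this example, since this summary does not state the paper's exact scale or aggregation rule.

```python
from dataclasses import dataclass

# Assumed scale: a placeholder range, not taken from the paper.
MIN_SCORE, MAX_SCORE = 1, 10


@dataclass(frozen=True)
class DimensionScores:
    """Scores for one answer along the three dimensions named in the text."""
    facts: int
    cited_articles: int
    analysis: int

    def __post_init__(self):
        # Reject values outside the assumed scale.
        for name in ("facts", "cited_articles", "analysis"):
            value = getattr(self, name)
            if not MIN_SCORE <= value <= MAX_SCORE:
                raise ValueError(
                    f"{name}={value} is outside [{MIN_SCORE}, {MAX_SCORE}]"
                )

    def overall(self) -> float:
        # Assumption: the three dimensions are weighted equally.
        return (self.facts + self.cited_articles + self.analysis) / 3


def benchmark_score(per_question: list[DimensionScores]) -> float:
    """Average the per-answer overall scores across all questions."""
    if not per_question:
        raise ValueError("no scored answers")
    return sum(s.overall() for s in per_question) / len(per_question)
```

Keeping the dimensions separate until the last step makes it easy to see, for instance, that an answer with strong analysis still lost points on citations.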

To evaluate LLMs on GreekBarBench, the authors adopt an "LLM-as-a-judge" methodology, in which an LLM scores the candidate responses. This approach is augmented with a span-based rubric system that improves the alignment between LLM scores and human expert scores. A meta-evaluation benchmark, GBB-JME, is developed to quantify the correlation between LLM-generated scores and human evaluations.
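To make the idea of judge-versus-human agreement concrete, the following sketch computes a rank correlation between two lists of scores for the same answers. It uses Spearman's rank correlation as a stand-in: this summary does not say which agreement statistic GBB-JME uses, so the choice of metric and the function names here are assumptions for illustration.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of 1-based positions in the run
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def spearman(judge_scores, human_scores):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    if len(judge_scores) != len(human_scores):
        raise ValueError("score lists must have the same length")
    if len(judge_scores) < 2:
        raise ValueError("need at least two scored answers")
    return pearson(average_ranks(judge_scores), average_ranks(human_scores))
```

A value near 1 means the judge orders the answers much as the human experts do, while a value near 0 means the two orderings are essentially unrelated.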

Evaluation and Results

The paper reports an extensive evaluation of 13 proprietary and open-weight LLMs on GreekBarBench. The evaluation reveals that although top-performing models show promising capabilities, they are still prone to significant errors, particularly when citing the applicable statutory articles. This underscores a critical limitation of current LLMs: they can generate seemingly coherent and knowledgeable responses, yet they have not mastered the precision that legal citation requires.

The GreekBarBench results show that leading models like Gemini-2.5-Flash and GPT-4.1 achieve the highest scores, yet they remain below the performance of an average human expert. Notably, the benchmark highlights that a model's proficiency in internalizing and retrieving legal knowledge is pivotal, as demonstrated by the substantial performance improvements observed when models are supplied with the relevant legal context.

Implications and Future Directions

The introduction of GreekBarBench provides a finer granularity in evaluating the legal reasoning capabilities of LLMs, facilitating the development of models that are both more robust and aligned with human legal reasoning. As LLMs continue to play a more significant role in legal NLP applications, benchmarks like GreekBarBench will be instrumental in shaping the future trajectory of AI-aided legal expertise.

The paper outlines critical pathways for future research, including improvements in context comprehension and retrieval-augmented generation strategies that could further bolster a model's legal reasoning capabilities. Additionally, enhancing models to handle more nuanced legal language and interpret context-specific legal requirements could prove transformative for their eventual deployment in real-world legal settings.

Conclusion

GreekBarBench sets a new standard for the evaluation of LLMs in legal reasoning, offering a comprehensive and challenging benchmark that closely mimics the complexities of real-world legal analysis. While the benchmark highlights existing limitations in LLMs, particularly in legal citation, it also points toward exciting avenues for further research and development in LLMs capable of tackling sophisticated legal reasoning tasks. The continued advancement of these capabilities is crucial for the more widespread adoption and reliability of legal AI applications.