- The paper introduces physics-based ASICs that harness natural physical processes to improve energy efficiency and computational throughput.

- It outlines a co-design methodology that combines top-down algorithm selection with bottom-up hardware strategies, with performance measured against state-of-the-art digital hardware.

- Applications range from neural network inference to scientific simulation, potentially reducing energy consumption while boosting performance.

Solving the Compute Crisis with Physics-Based ASICs

Introduction: The Compute Crisis

The demands of AI have pushed the computing industry into an unsustainable compute crisis. In 2023, data centers consumed approximately 200 TWh of electricity for AI operations, a figure projected to reach 260 TWh by 2026. The rapid expansion of AI applications drives this energy demand and also raises financial costs: training an advanced model is predicted to cost more than \$1 billion by 2027, owing to the limitations of existing computing hardware. These constraints are compounded by the approaching limits of CMOS scaling, which expose a core inefficiency of conventional architectures: they do not fully exploit the computing power inherent in the physics of the hardware.

Physics-based Application-Specific Integrated Circuits (ASICs) offer a promising alternative that removes the abstraction layers impeding efficiency. By using the intrinsic dynamics of physical processes to compute, these ASICs aim to maximize energy efficiency and throughput, which in turn changes how algorithms and hardware are co-designed. The vision is a shift towards energy-efficient, physically integrated computation for core AI workloads such as neural network inference, sampling, and optimization.

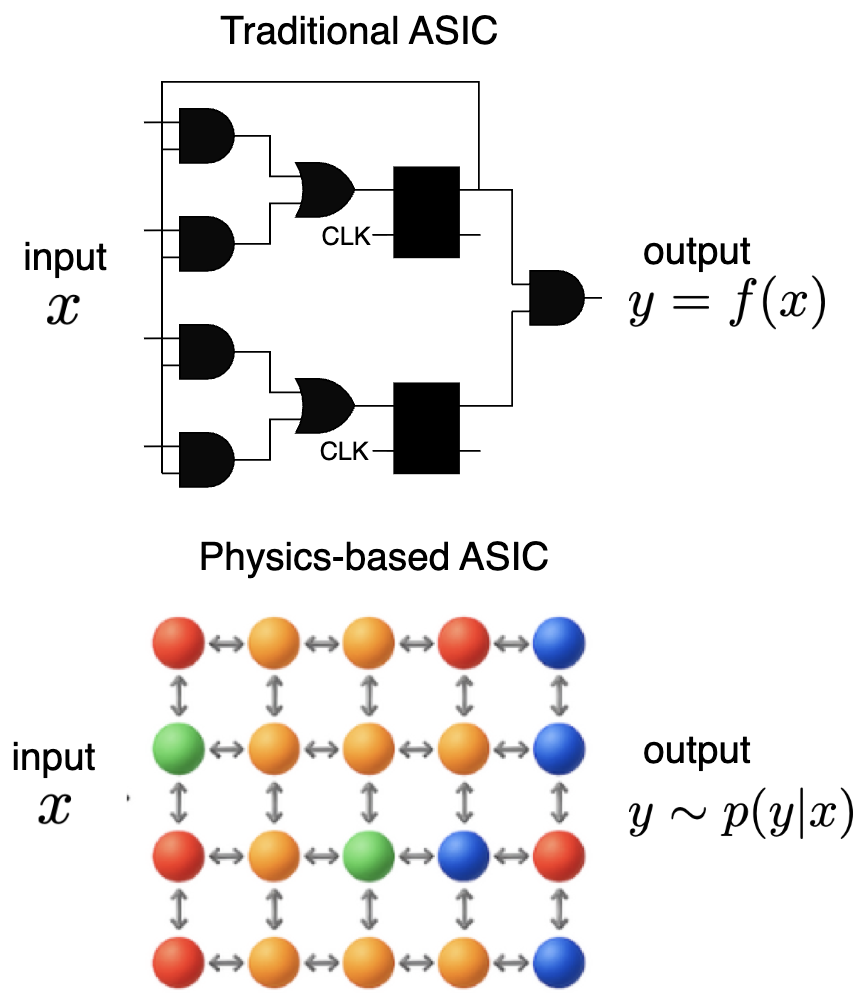

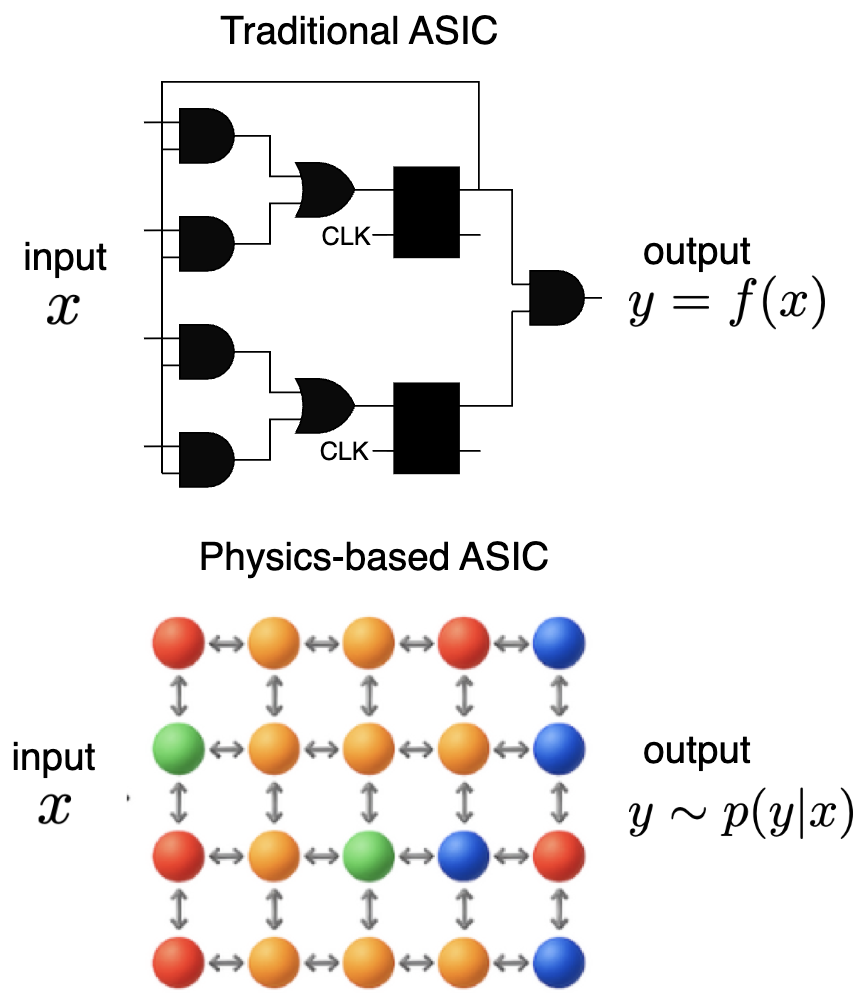

Physics-based ASICs represent a radical departure from conventional ASIC design: they exploit naturally occurring physical phenomena rather than suppressing them. Traditional ASICs enforce idealized abstractions, such as the separation of memory and computation, statelessness, unidirectionality, determinism, and synchronization. In contrast, physics-based ASICs embrace statefulness, bidirectionality, and stochasticity in order to follow the underlying physical processes closely and put them to use.

Figure 1: Traditional ASICs versus Physics-based ASICs, illustrating differences in memory and computation, statefulness, and information flow.

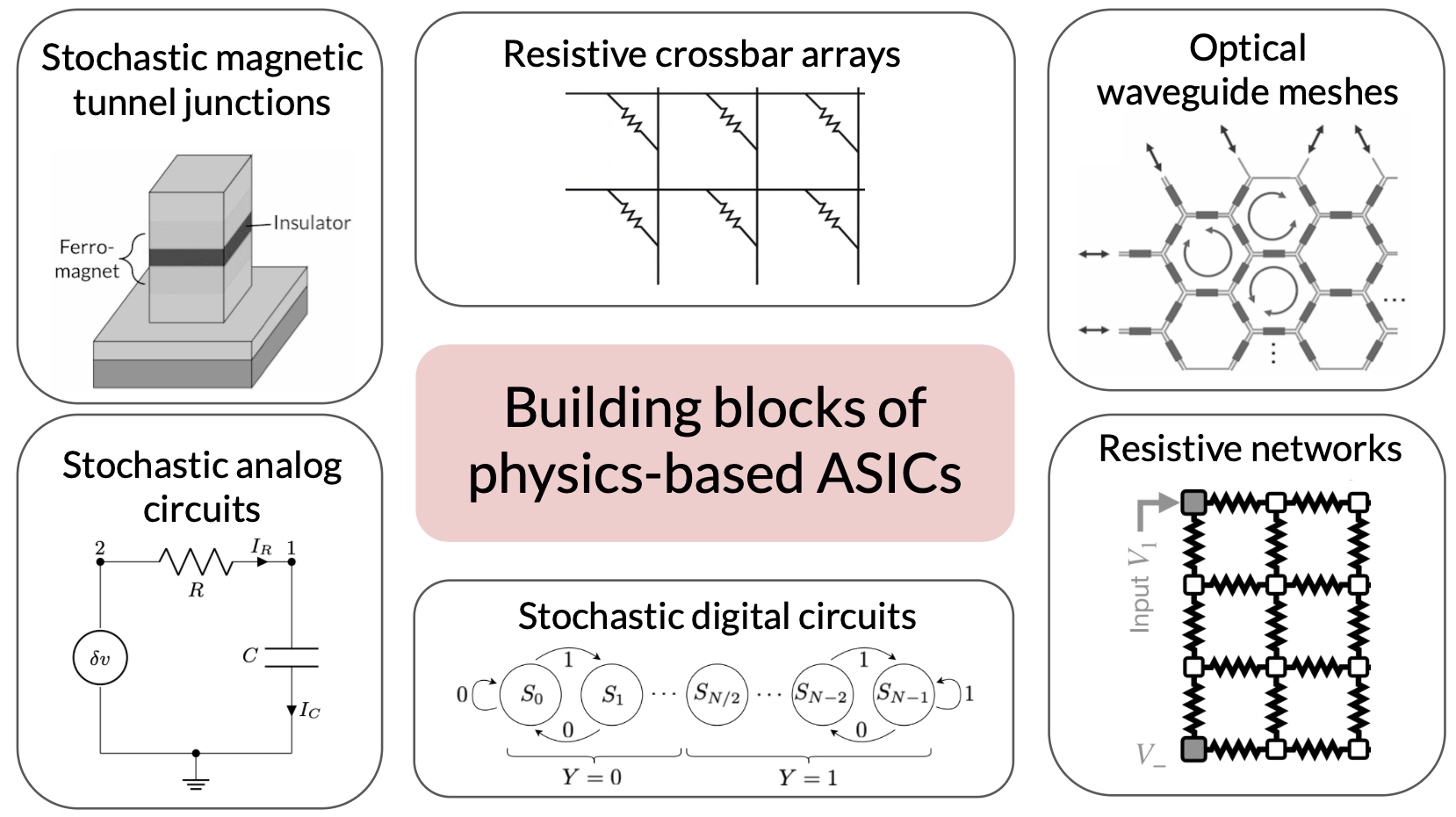

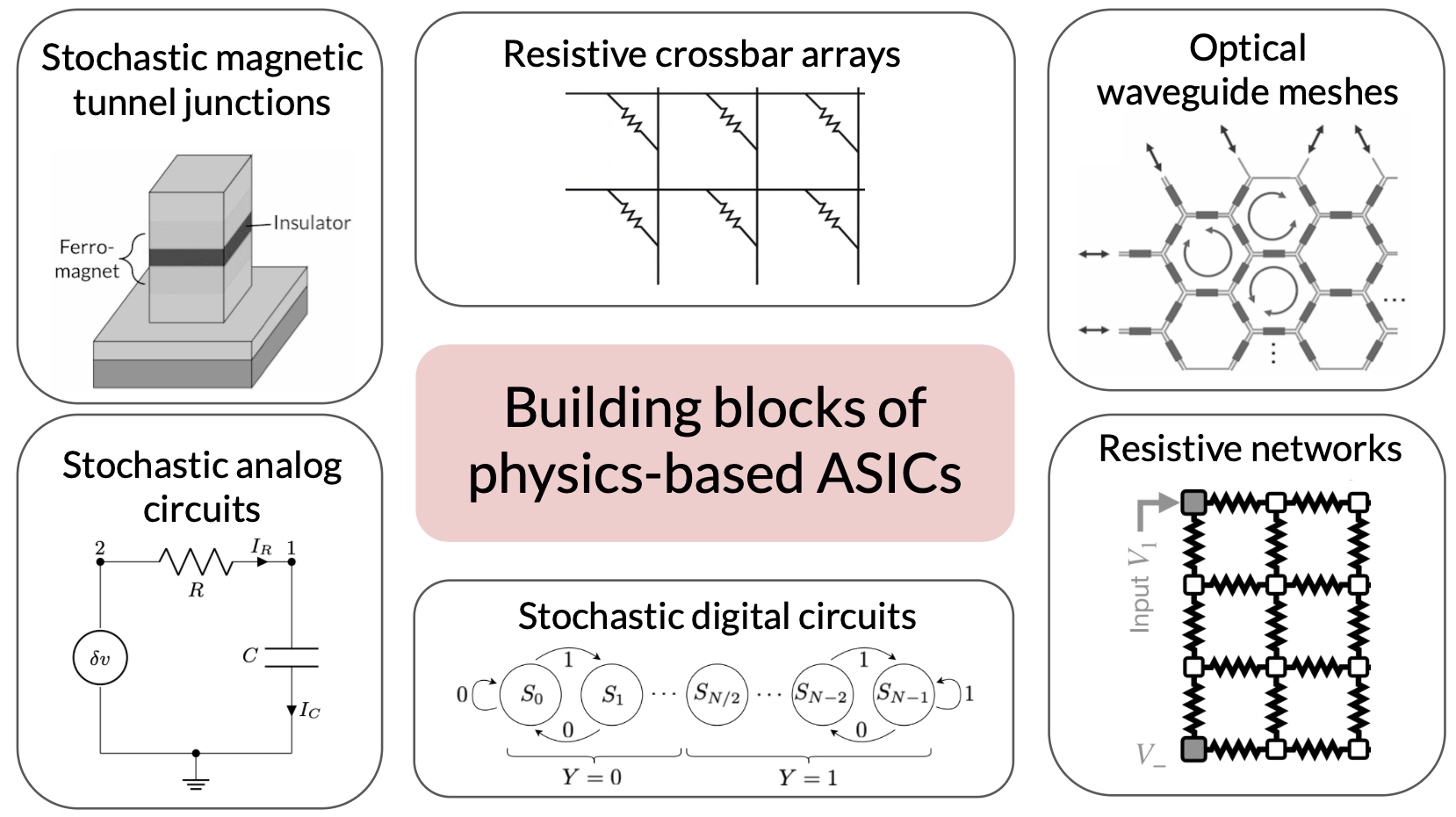

These ASICs employ a variety of physical structures. Paradigms such as memristive circuits and Ising machines embrace nonlinear, dynamic interactions instead of fixed deterministic logic. By reducing the overhead of enforcing idealized behaviors, these designs promise substantial gains in energy efficiency, performance, and scaling. Notably, reversible computing architectures show how little energy needs to be dissipated when information erasure is avoided.
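To make the Ising-machine paradigm more concrete, here is a minimal software sketch, not a description of any particular hardware. It assumes the standard Ising energy, E = -Σ J_ij s_i s_j over pairs i < j with spins of ±1, and emulates stochastic relaxation with simulated annealing, which is a swapped-in software technique. The function names, the coupling matrix, and the cooling schedule are illustrative assumptions and do not come from the paper.

```python
import math
import random


def ising_energy(spins, couplings):
    """Ising energy E = -sum_{i<j} J_ij * s_i * s_j for spins in {-1, +1}."""
    n = len(spins)
    return -sum(
        couplings[i][j] * spins[i] * spins[j]
        for i in range(n)
        for j in range(i + 1, n)
    )


def anneal(couplings, steps=2000, t_start=2.0, t_end=0.05, seed=0):
    """Emulate stochastic relaxation of an Ising system (assumed schedule).

    `couplings` is assumed to be a symmetric matrix with a zero diagonal.
    """
    rng = random.Random(seed)
    n = len(couplings)
    spins = [rng.choice((-1, 1)) for _ in range(n)]
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        fraction = step / max(steps - 1, 1)
        temperature = t_start * (t_end / t_start) ** fraction
        i = rng.randrange(n)
        # Local field felt by spin i from all other spins.
        field = sum(couplings[i][j] * spins[j] for j in range(n) if j != i)
        # Energy change if spin i were flipped.
        delta = 2.0 * spins[i] * field
        # Metropolis rule: always accept downhill moves, sometimes uphill ones.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            spins[i] = -spins[i]
    return spins


if __name__ == "__main__":
    # Four spins with uniform ferromagnetic coupling (hypothetical example).
    J = [[0.0 if i == j else 1.0 for j in range(4)] for i in range(4)]
    final = anneal(J)
    print(final, ising_energy(final, J))
```

For the small ferromagnetic example, the spins should settle into an aligned configuration, the lowest-energy state. A hardware Ising machine would reach such states through its own physical dynamics, with no explicit update loop.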

Figure 2: Common physical structures used as building blocks for physics-based ASICs, each mapped to distinct computational operations.

Design Strategies

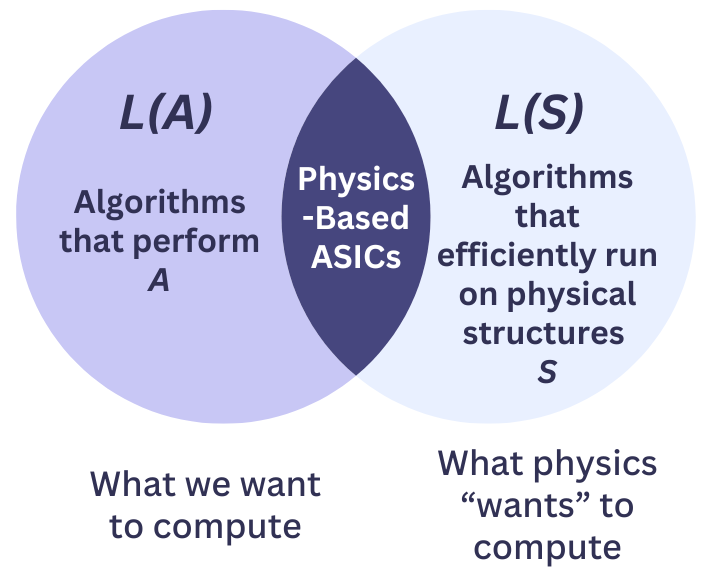

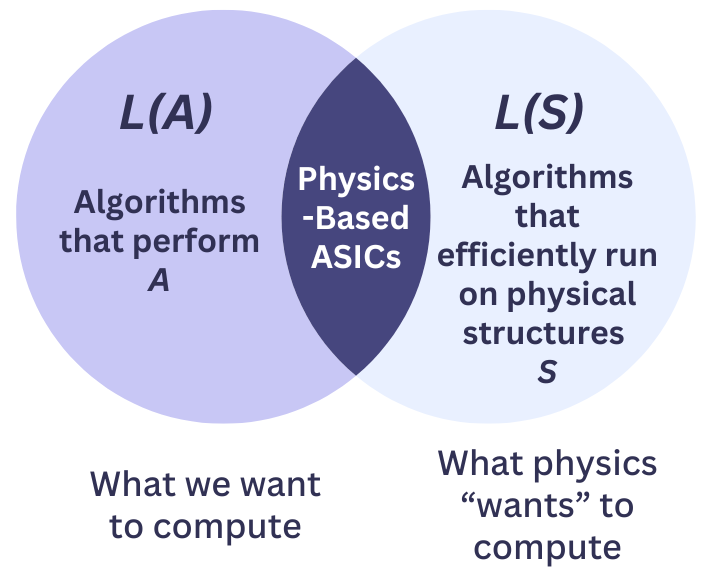

Effective design of physics-based ASICs demands a balance between top-down and bottom-up approaches, each informing the selection of algorithms and hardware. The challenge lies in maximizing the intersection between the algorithms that could serve a target application and the computational primitives that the hardware's physical structure can realize efficiently. The same principle applies to platforms such as quantum computing, which fall within the broader scope of physics-based ASICs.

Figure 3: A Venn diagram illustrating the design principle of maximizing overlap between algorithms and physical structures.

Performance metrics for these ASICs focus on runtime and energy efficiency, expressed as ratios against state-of-the-art (SOTA) digital hardware. These ratios determine whether an algorithm counts as efficient for a given physical structure, a judgment further refined by the energy-time trade-offs intrinsic to physical computation. The paper also stresses algorithmic co-design as a way to mitigate constraints such as Amdahl's Law, which caps the overall speedup an ASIC can deliver according to the fraction of the computation it can accelerate.
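The effect of Amdahl's Law on these metrics can be sketched with a few lines of Python. The formula is the standard one, speedup = 1 / ((1 - p) + p / s), where p is the fraction of the workload that can be accelerated and s is the accelerator's speedup on that fraction. The `performance_ratios` helper is a hypothetical illustration: defining each ratio as the digital figure divided by the physics-based figure is an assumption, since this summary does not give the paper's exact formula.

```python
def amdahl_speedup(accelerated_fraction: float, accelerator_speedup: float) -> float:
    """Overall speedup when only part of a workload is accelerated (Amdahl's Law)."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must lie in [0, 1]")
    if accelerator_speedup <= 0.0:
        raise ValueError("accelerator_speedup must be positive")
    serial_part = 1.0 - accelerated_fraction
    return 1.0 / (serial_part + accelerated_fraction / accelerator_speedup)


def performance_ratios(t_digital, e_digital, t_physical, e_physical):
    """Hypothetical runtime and energy ratios against a digital baseline.

    Assumed convention: values above 1 mean the physics-based device is
    faster or more energy-efficient than the digital reference.
    """
    return t_digital / t_physical, e_digital / e_physical


if __name__ == "__main__":
    # Even a very fast accelerator is capped by the part it cannot touch.
    for speedup in (10.0, 100.0, 1e6):
        print(speedup, amdahl_speedup(0.9, speedup))
```

With 90% of the workload accelerated, the overall speedup stays below 10 however fast the accelerator becomes. This is why co-design aims to raise the fraction of the computation that maps onto the hardware as well as the raw speed of the hardware.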

Applications: Broad Implications

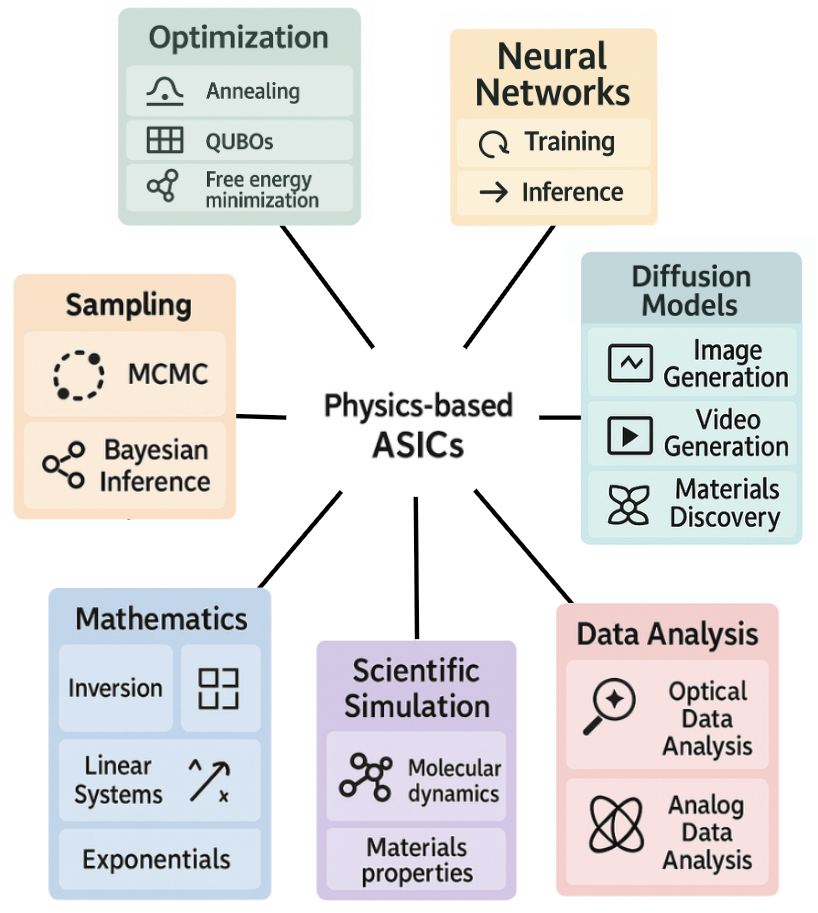

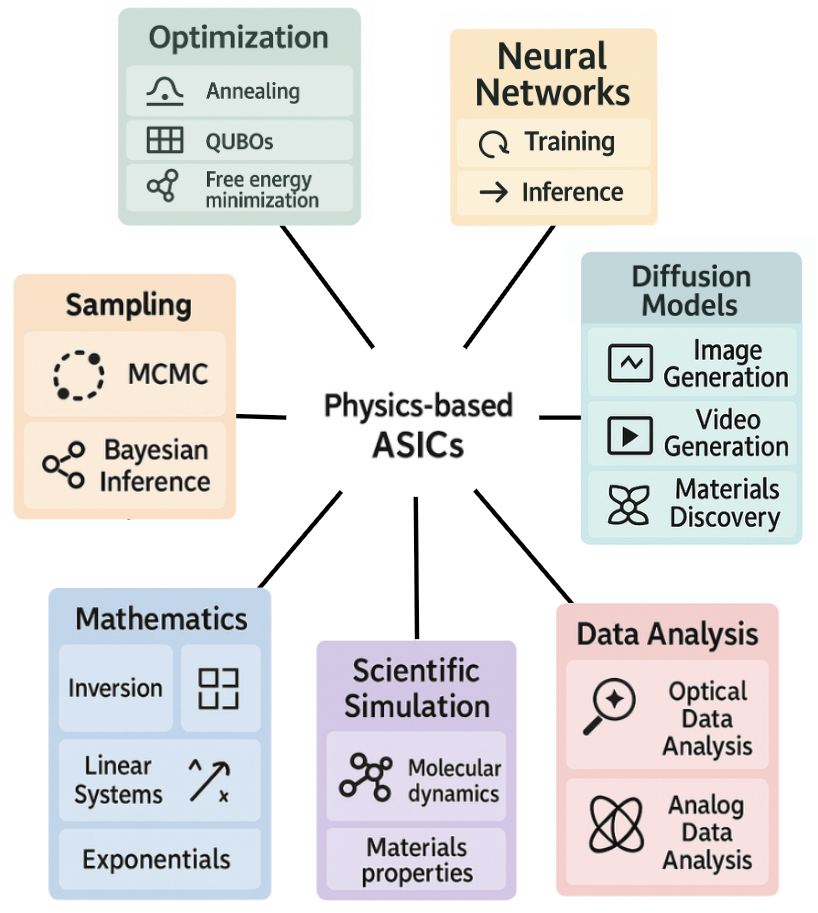

The potential applications of physics-based ASICs span both physics-driven and physics-inspired domains. Notably, artificial neural networks (ANNs) are inherently resilient to the imprecision of analog computation, which aligns them well with the capabilities of physics-based hardware. Similarly, diffusion models and optimization algorithms benefit from the direct physical mapping that such ASICs enable.
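The resilience claim can be illustrated with a toy experiment. The sketch below assumes a single linear layer and models analog imprecision as independent Gaussian noise added to every weight. Both the noise model and the helper names are assumptions made for illustration, not the paper's method. It measures how often the noisy layer picks the same output class as the exact one.

```python
import random


def noisy_matvec(weights, x, noise_std, rng):
    """Matrix-vector product with Gaussian noise added to each weight (assumed model)."""
    return [
        sum((w + rng.gauss(0.0, noise_std)) * xi for w, xi in zip(row, x))
        for row in weights
    ]


def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=values.__getitem__)


def agreement_rate(weights, x, noise_std, trials=1000, seed=0):
    """Fraction of noisy evaluations whose predicted class matches the exact one."""
    rng = random.Random(seed)
    exact = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    target = argmax(exact)
    hits = sum(
        argmax(noisy_matvec(weights, x, noise_std, rng)) == target
        for _ in range(trials)
    )
    return hits / trials


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]  # hypothetical two-class linear layer
    x = [1.0, 0.2]                # input with a clear margin between classes
    for std in (0.01, 0.1, 1.0):
        print(std, agreement_rate(W, x, std))
```

When the margin between classes is large relative to the noise, the predicted class rarely changes, which is the intuition behind running inference on imprecise analog hardware. Real devices have other non-idealities that this toy model ignores.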

Figure 4: Applications of physics-based ASICs, highlighting their role in sampling, optimization, and scientific simulation.

Scientific simulation emerges as a strong candidate for physics-based acceleration: mesoscopic simulations and molecular dynamics are long-standing computational challenges that physics-based hardware could address more efficiently. Physics-based ASICs thus hold potential for major advances in modeling physical phenomena, and may also benefit more abstract AI applications through improved data analysis methods.

Conclusion

Physics-based ASICs offer a needed evolution in computing technology in response to the multifaceted compute crisis. By integrating and exploiting the dynamics of physical processes, these ASICs offer considerable improvements in energy efficiency and computational capability, and could also unlock new AI capabilities. As the field progresses, standard software abstractions and scalable integration strategies remain crucial to widespread adoption. Physics-based ASICs align technological innovation with the underlying principles of physical reality, which positions them to address the pressing demands of a rapidly growing AI landscape.