- The paper introduces a multi-turn RL framework that iteratively refines CUDA kernels by optimizing both correctness and execution speed.

- It employs an innovative reward system that balances immediate performance with future improvements using a discounted reward (gamma=0.4) to mitigate reward hacking.

- Kevin achieved 82% correctness and a 1.10x mean speedup, demonstrating significant performance gains over the QwQ-32B baseline.

"Kevin: Multi-Turn RL for Generating CUDA Kernels" Summary

Introduction to CUDA Kernel Generation

The paper introduces "Kevin", a model trained using a multi-turn Reinforcement Learning (RL) paradigm tailored for CUDA kernel generation. CUDA kernel development is vital for optimizing AI systems' efficiency but remains challenging due to the requisite expertise and the iterative nature of software optimization. Traditional RL methods for software tasks focus primarily on achieving binary correctness, whereas GPU kernel generation requires optimizing for continuous rewards like execution speed. This paper proposes incorporating the iterative nature of kernel development into the RL training process to model the real-world scenario more accurately.

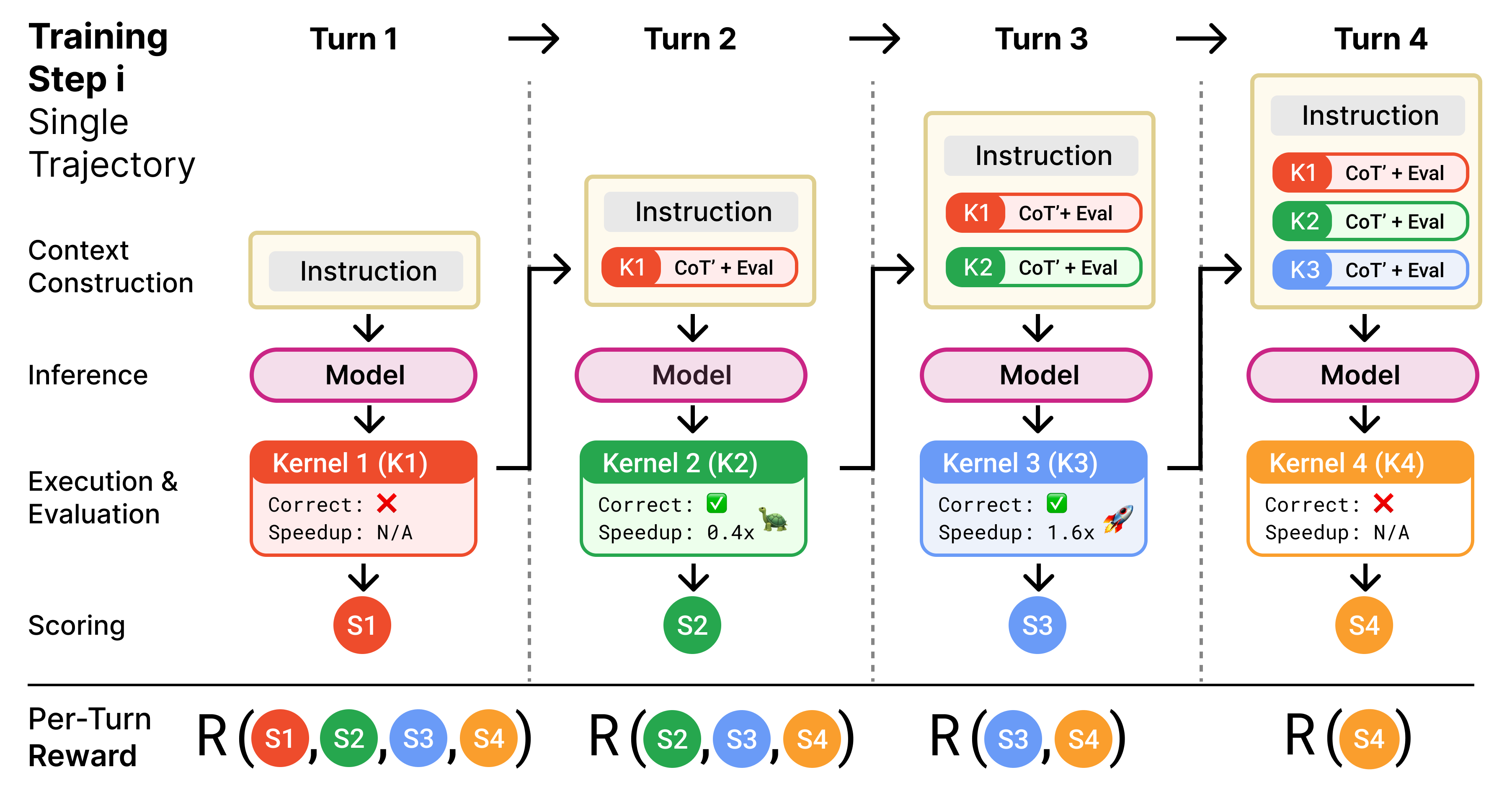

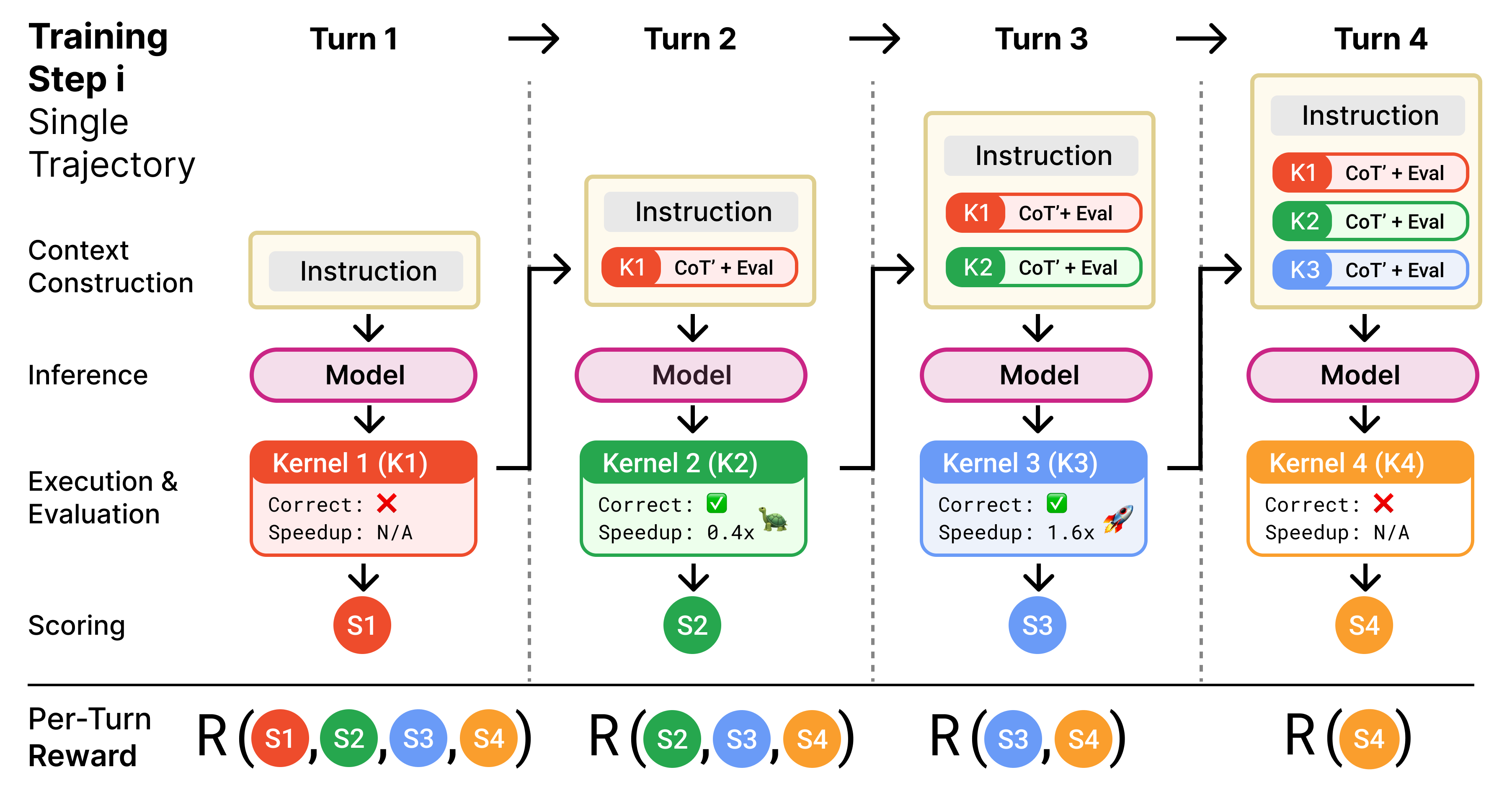

Figure 1: Within each training step, the model iteratively generates, executes, and refines kernels over multiple turns. Kernels are rewarded individually, based both on their performance and their contribution to subsequent speedups.

Multi-Turn RL Training Strategy

Kevin uses a sophisticated RL training methodology that leverages multiple refinement turns. The RL setup addresses the challenges of long trajectories and sparse rewards by splitting trajectories and using each turn as a distinct training sample. The trained model applies successive turns of generation, execution, and feedback, capturing the iterative optimization process of kernel development.

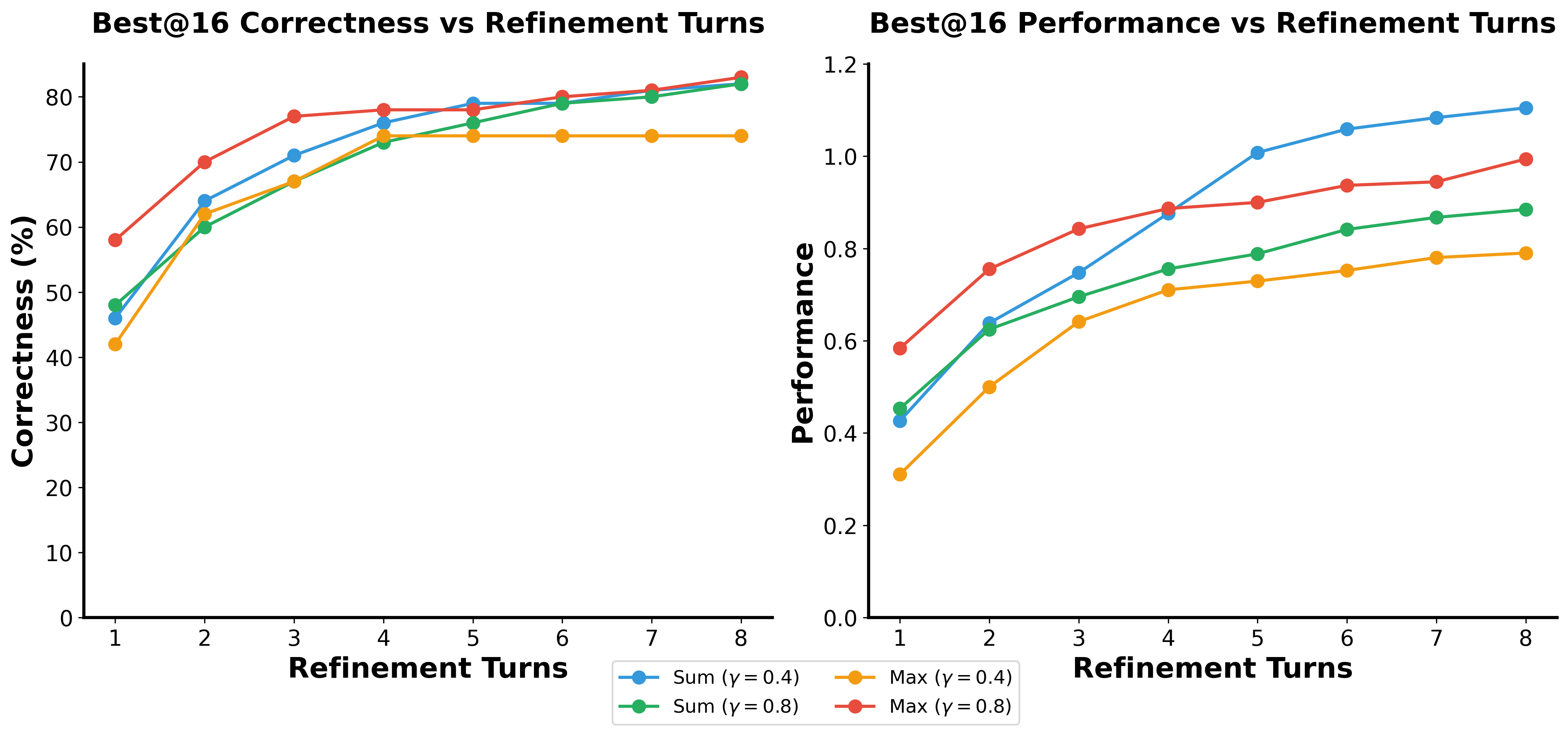

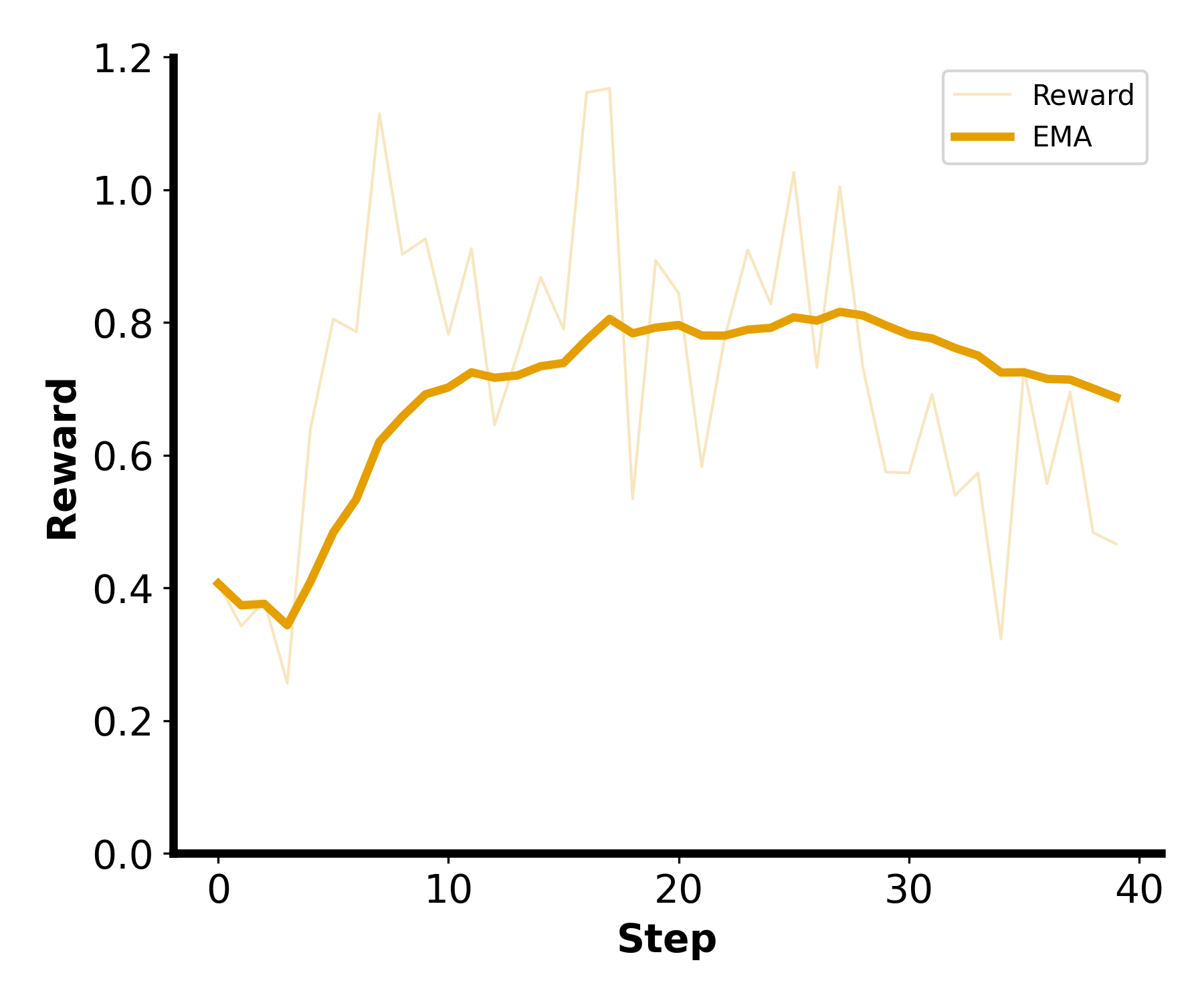

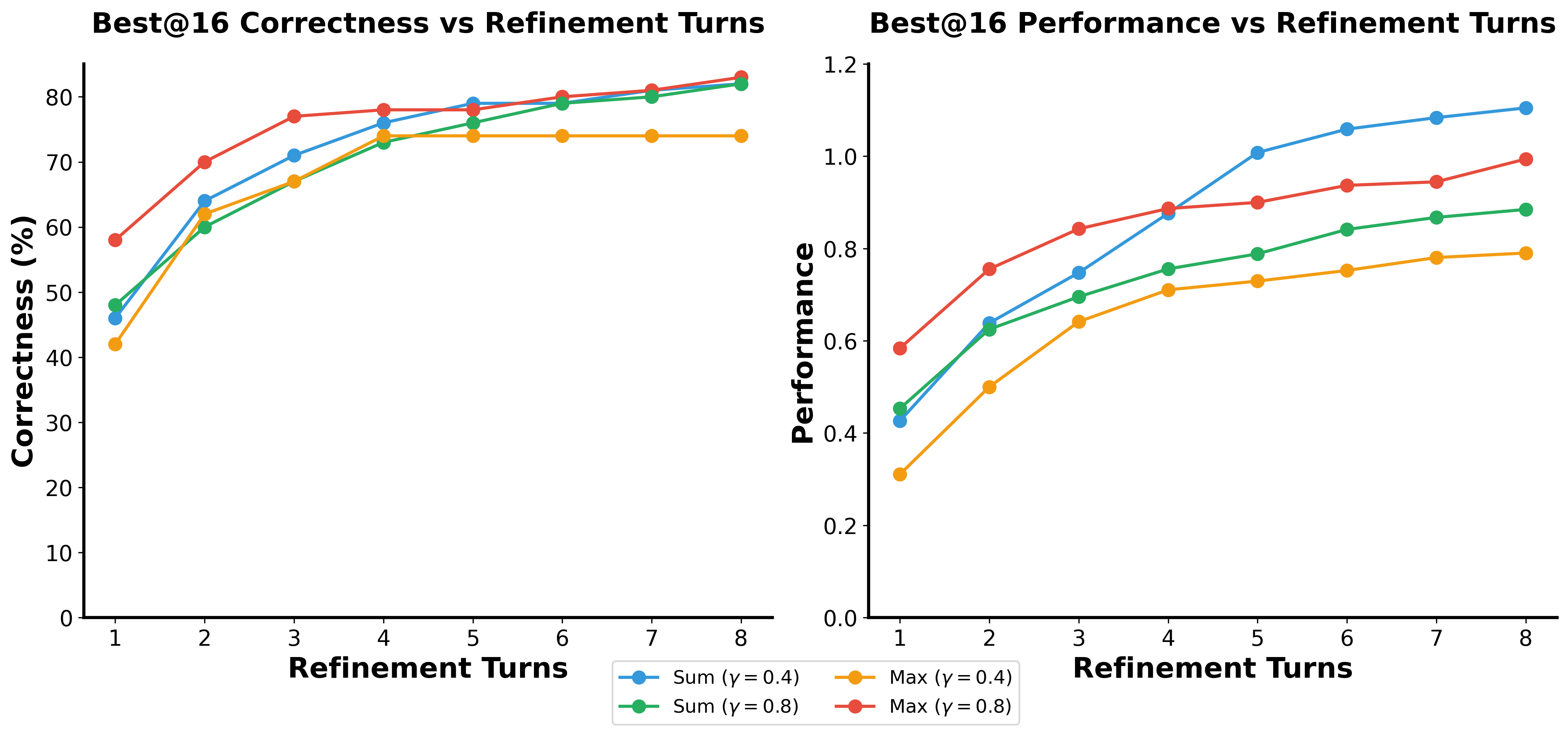

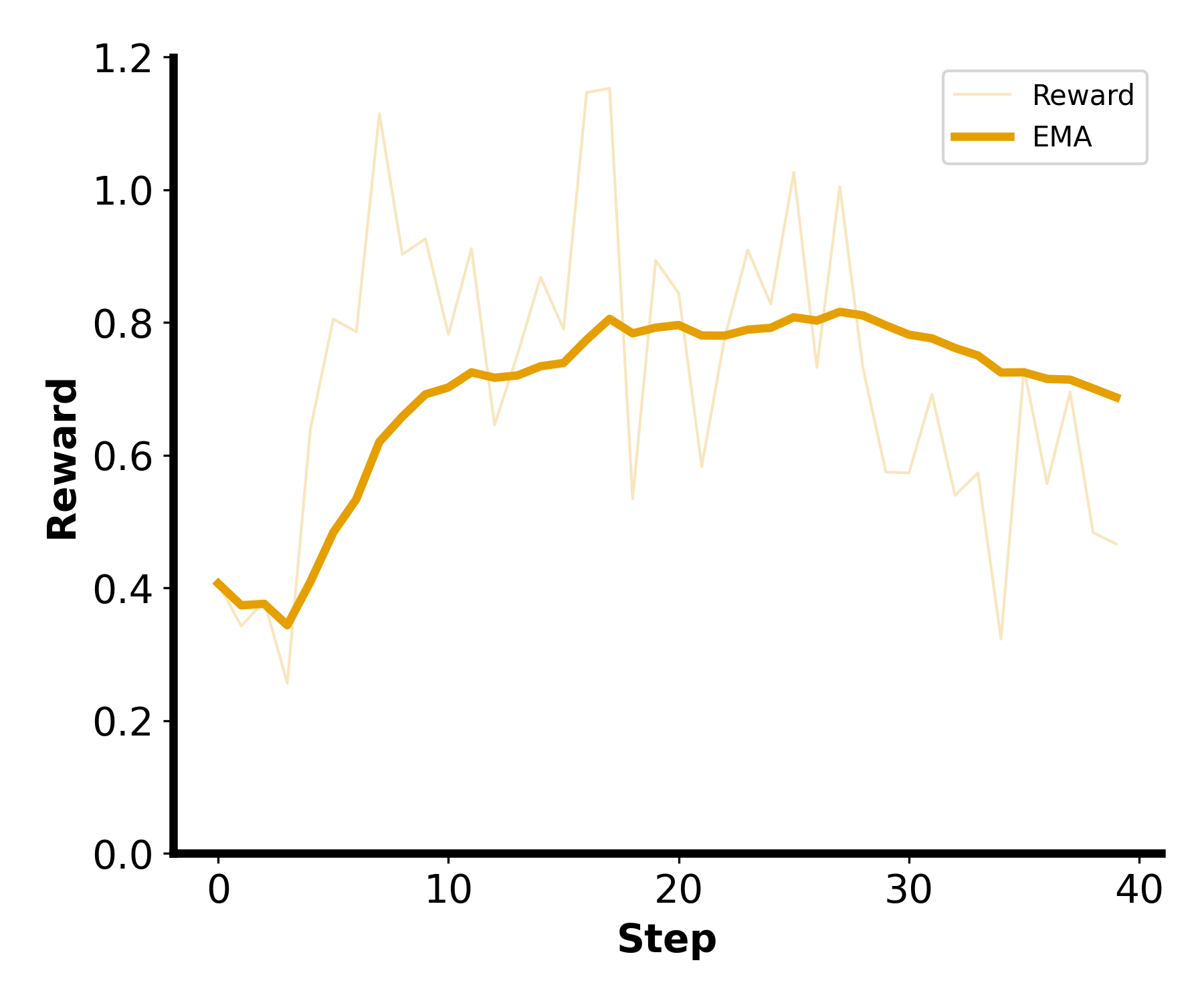

Figure 2: Sum with gamma=0.4 is the most effective reward formulation. Here we evaluate models trained with different reward formulations with 16 parallel trajectories and 8 refinement turns.

The reward system uses a balance of current and subsequent scores with a discount factor. This approach incentivizes early-stage kernels that lead to future improvements, preventing reward hacking where the model exploits single correct outputs for higher rewards without genuinely effective solutions.

Evaluation and Results

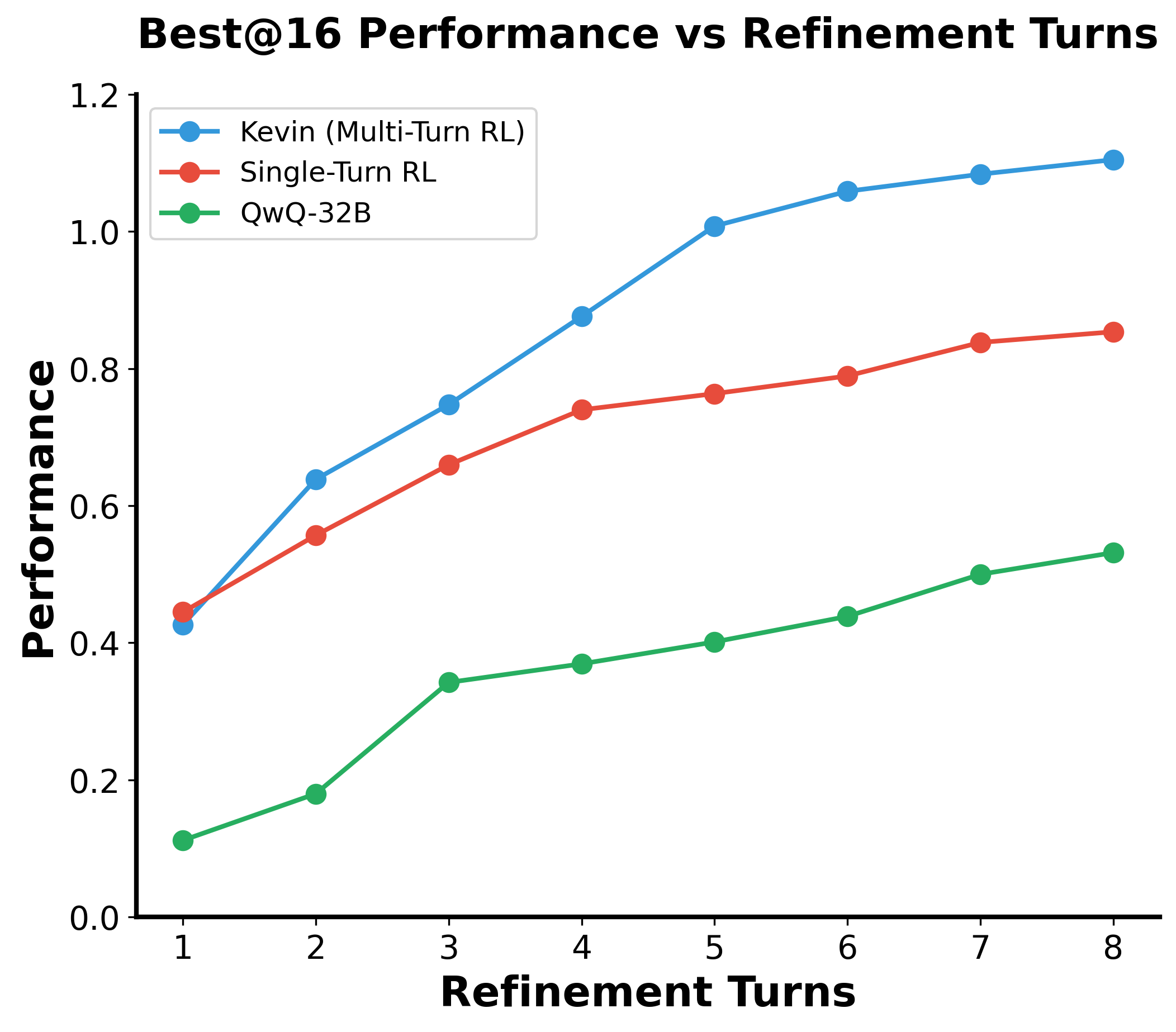

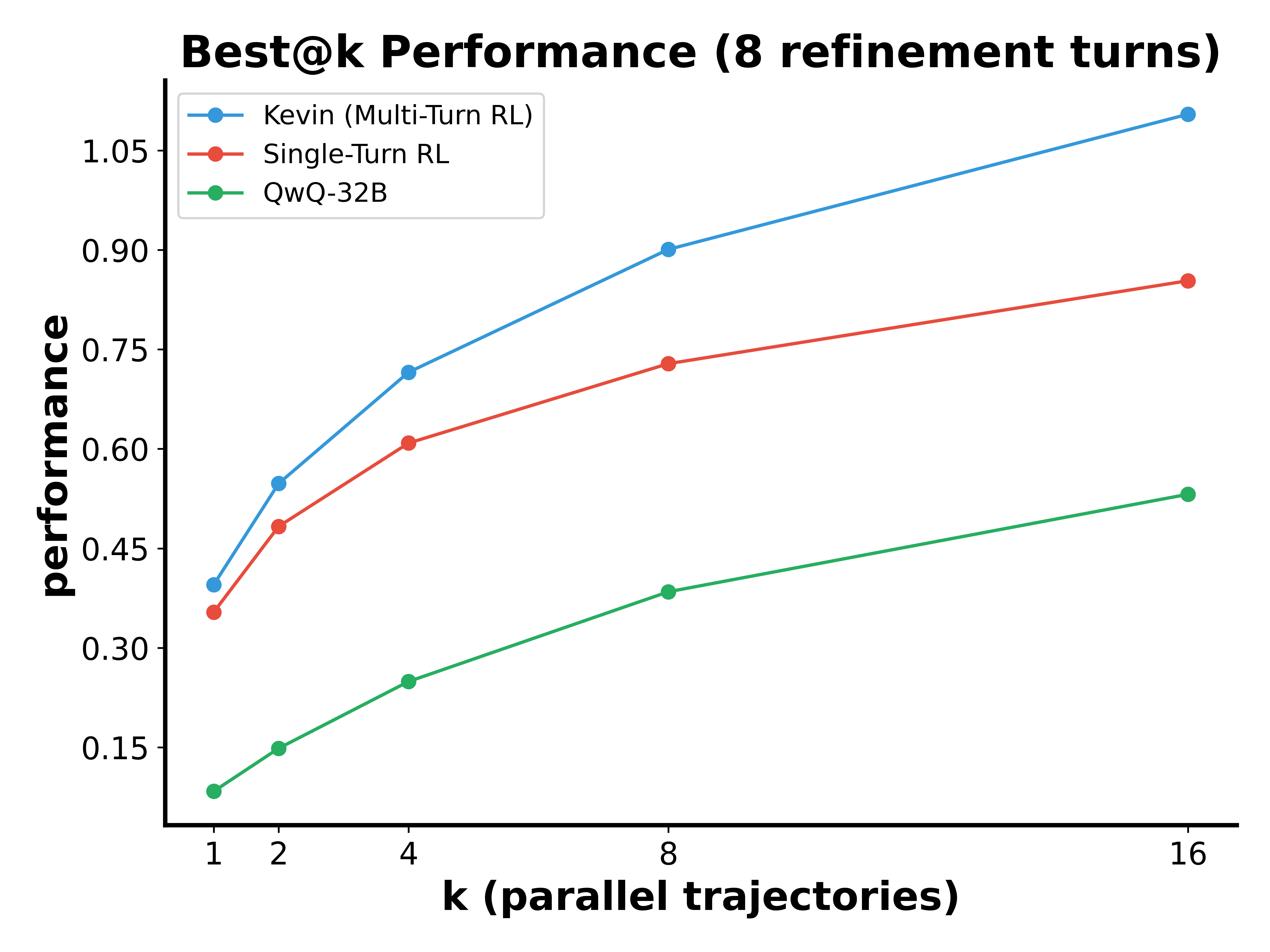

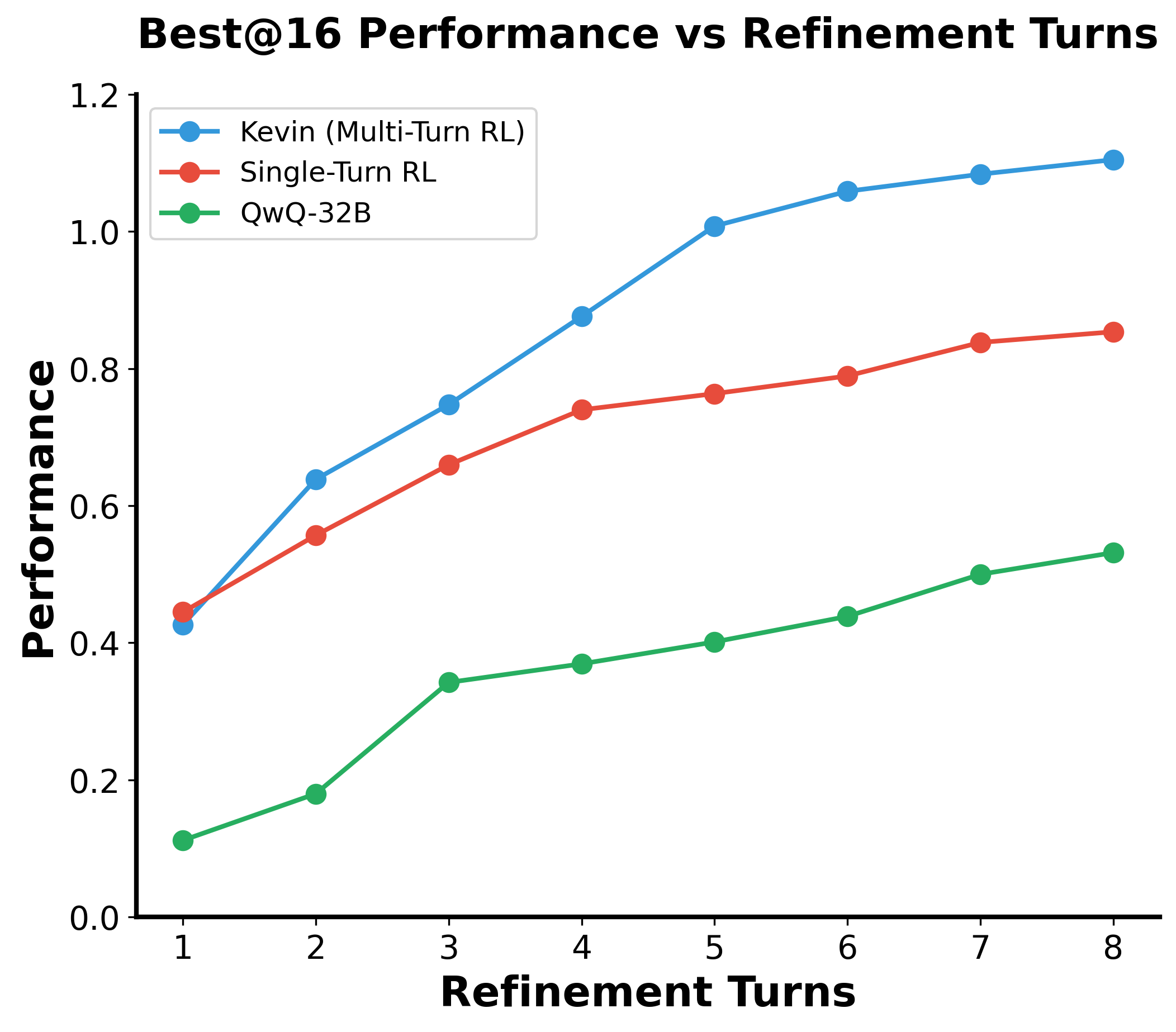

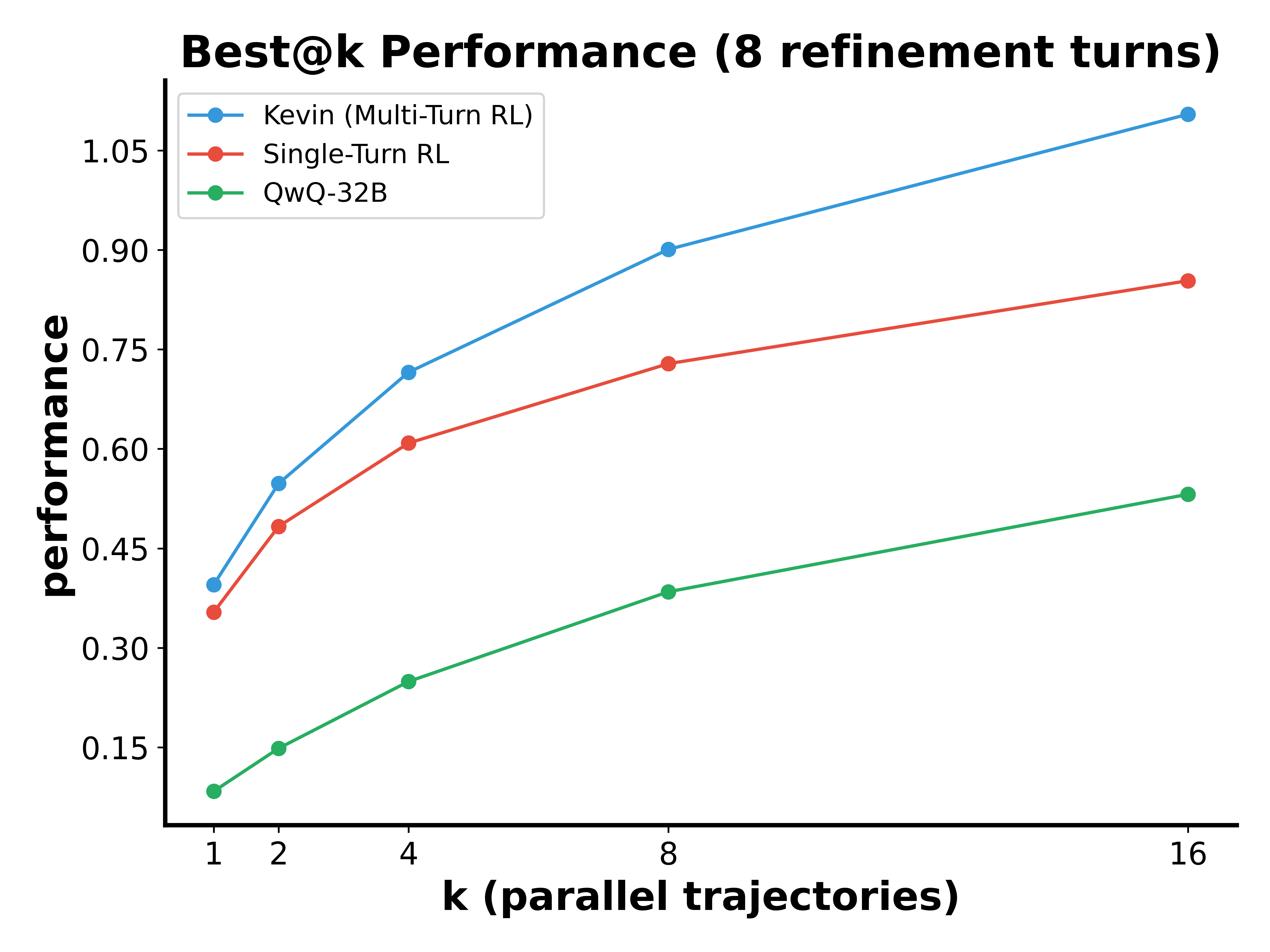

Kevin significantly outperformed its base model (QwQ-32B) with marked improvements in the correctness and speedup of generated kernels, achieving an 82% correctness rate and a mean speedup of 1.10x, compared to 0.53x of the baseline. Kevin effectively scales performance across multiple inference refinement turns, demonstrating an enhanced ability to utilize feedback for iterative improvements in both serial and parallel scaling contexts.

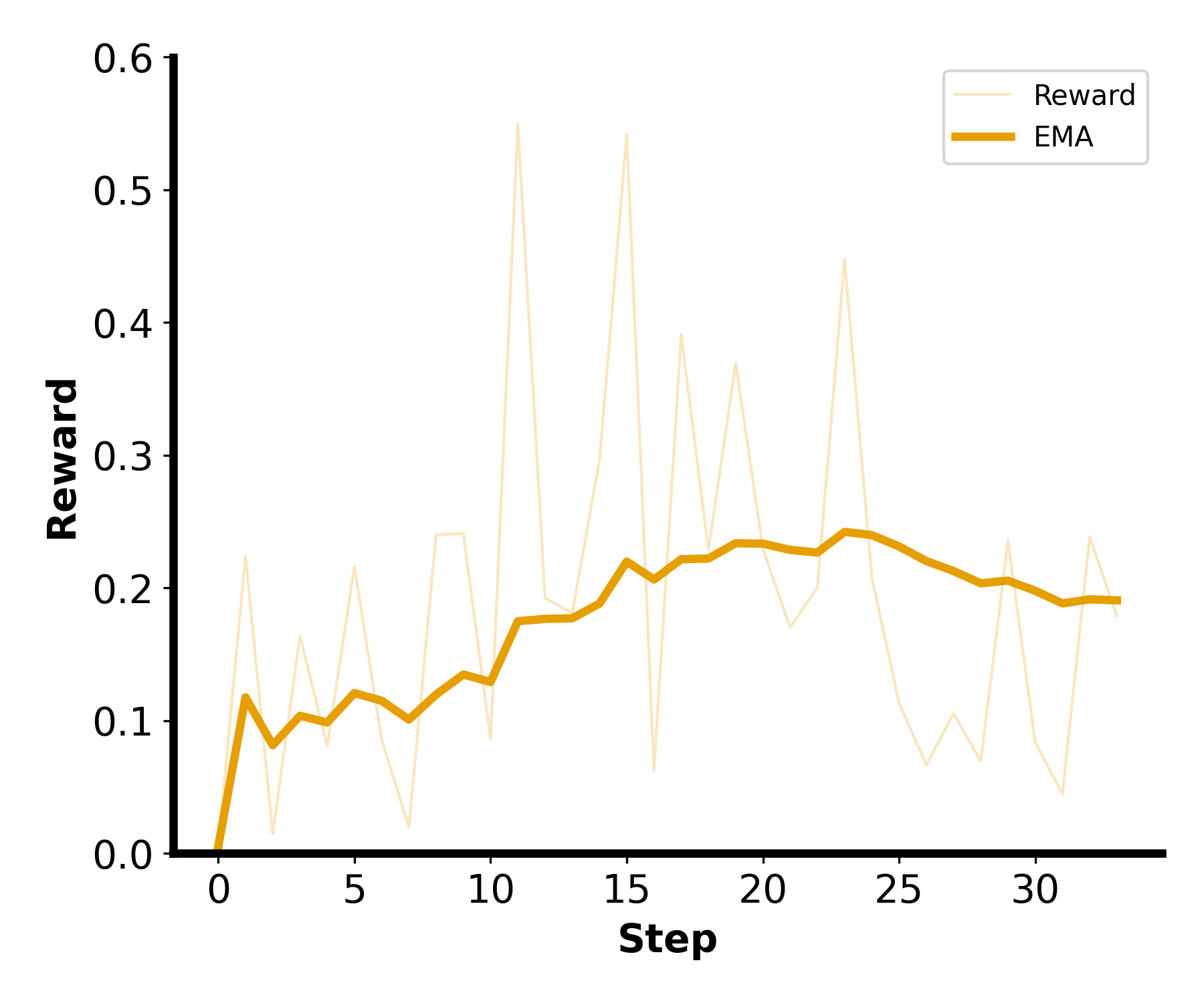

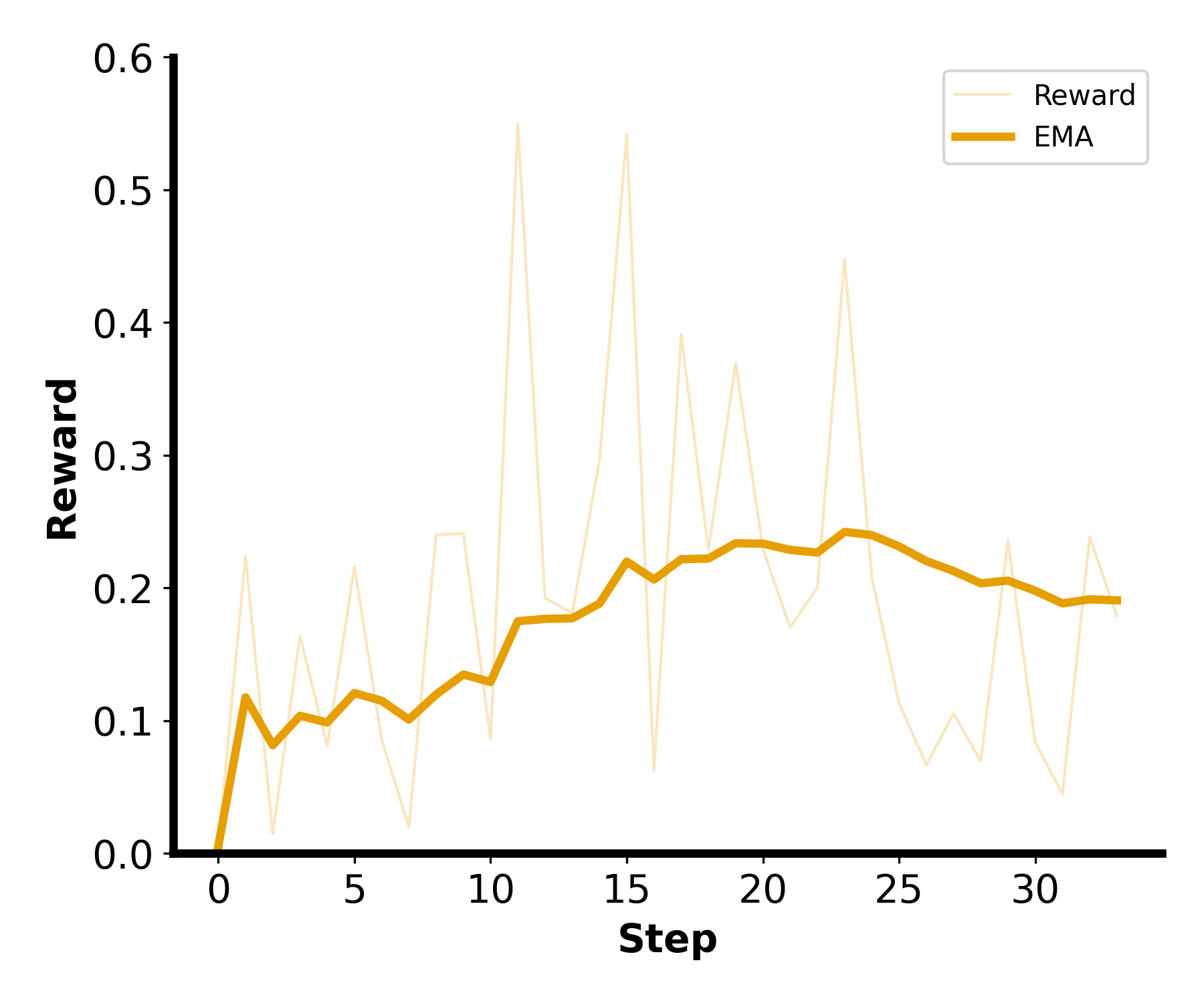

Figure 3: Kevin effectively leverages multiple turns. The performance curve for Kevin is steeper than the single-turn model, indicating improved optimization over several turns.

Implementation and Scaling Considerations

The implementation of multi-turn RL in Kevin requires management of context size and adaptation of reward strategies to prevent context explosion and ensure sample efficiency. The study observes that rewarding each turn's contribution is imperative for model performance, and the model's ability to maintain exploration as turn numbers scale is critical. Kevin corroborates that sequential scaling with more refinement turns is preferable, enhancing performance more effectively than relying solely on parallel sample generation.

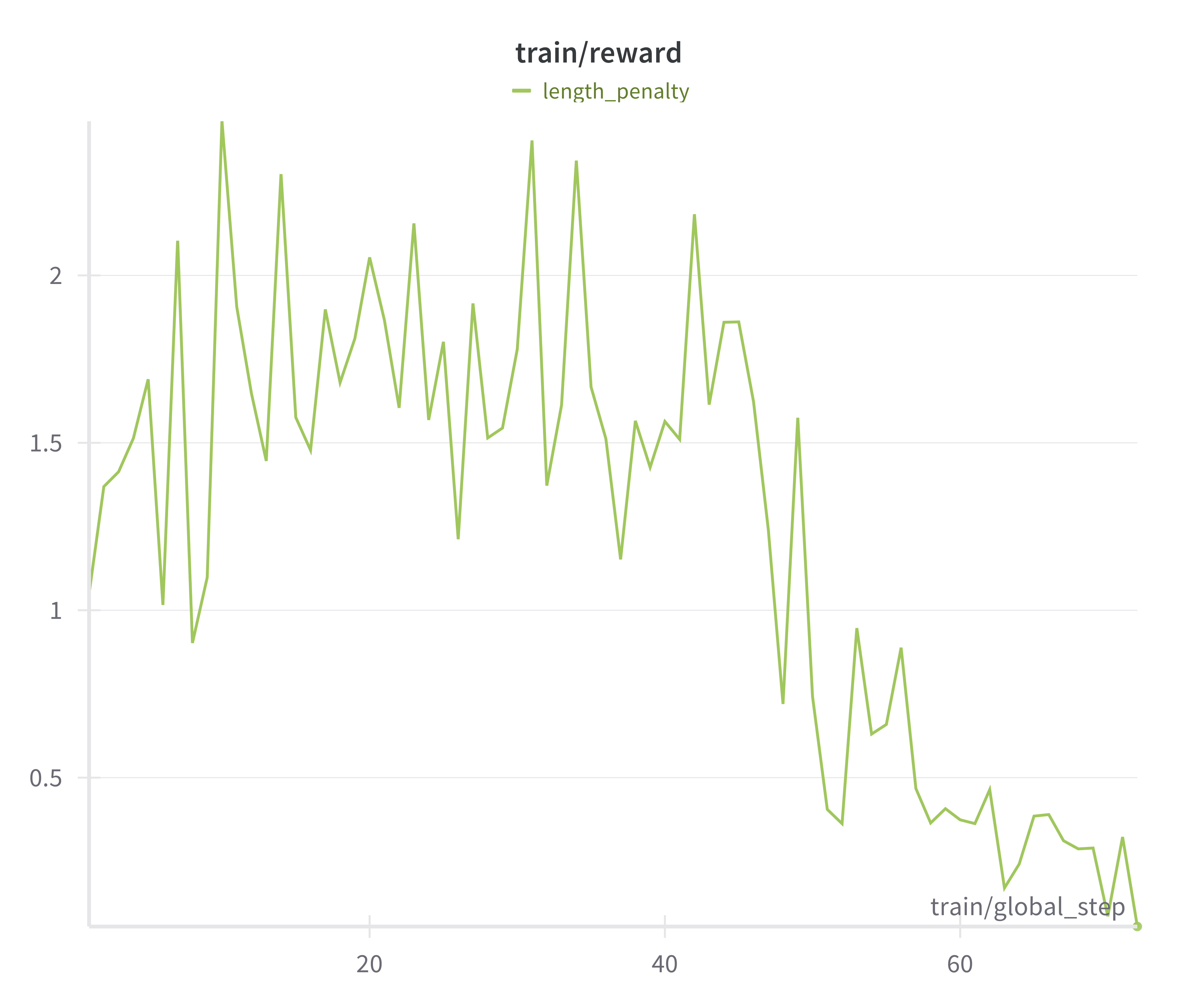

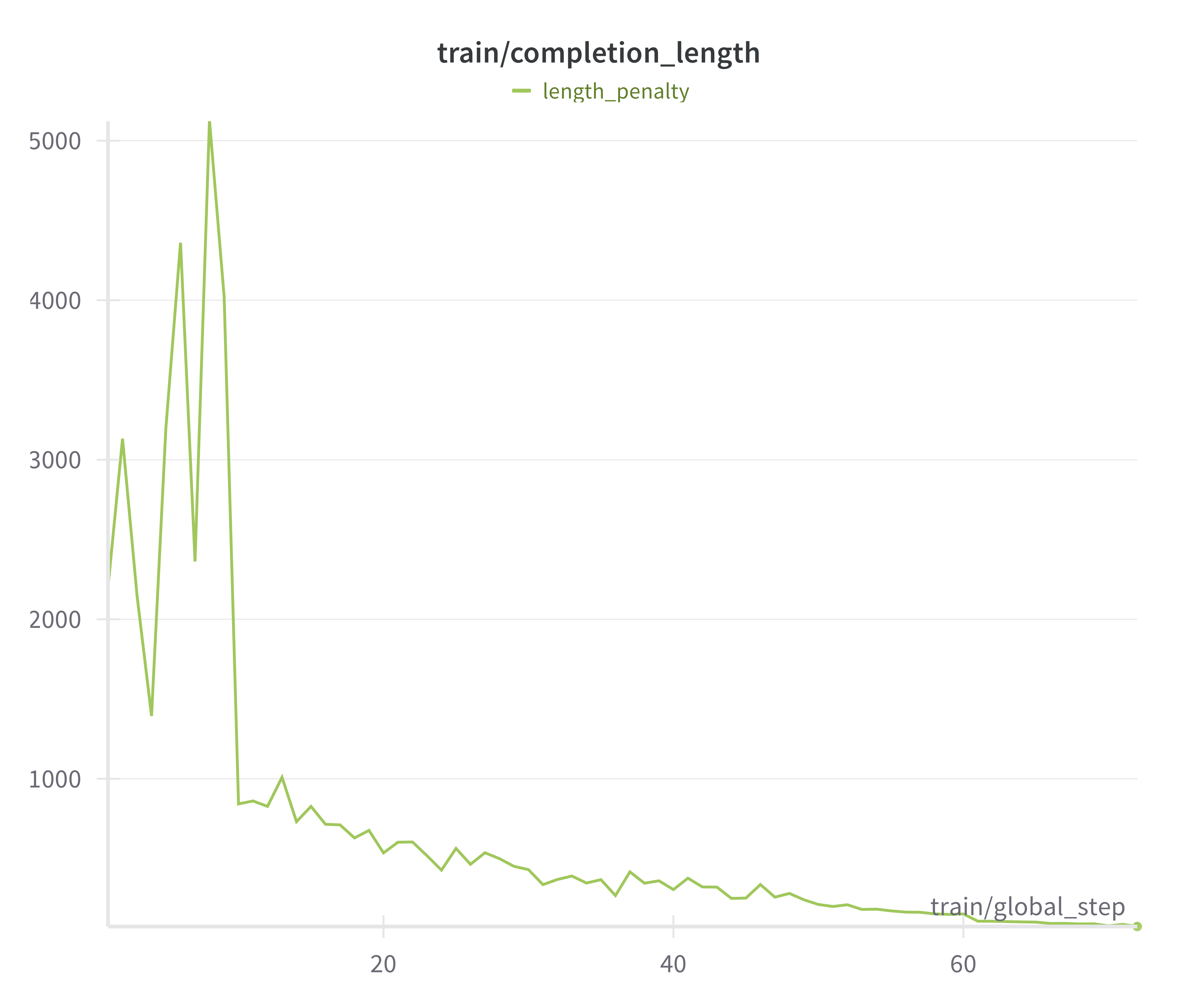

Discussion on Model Stability and Reward Hacking

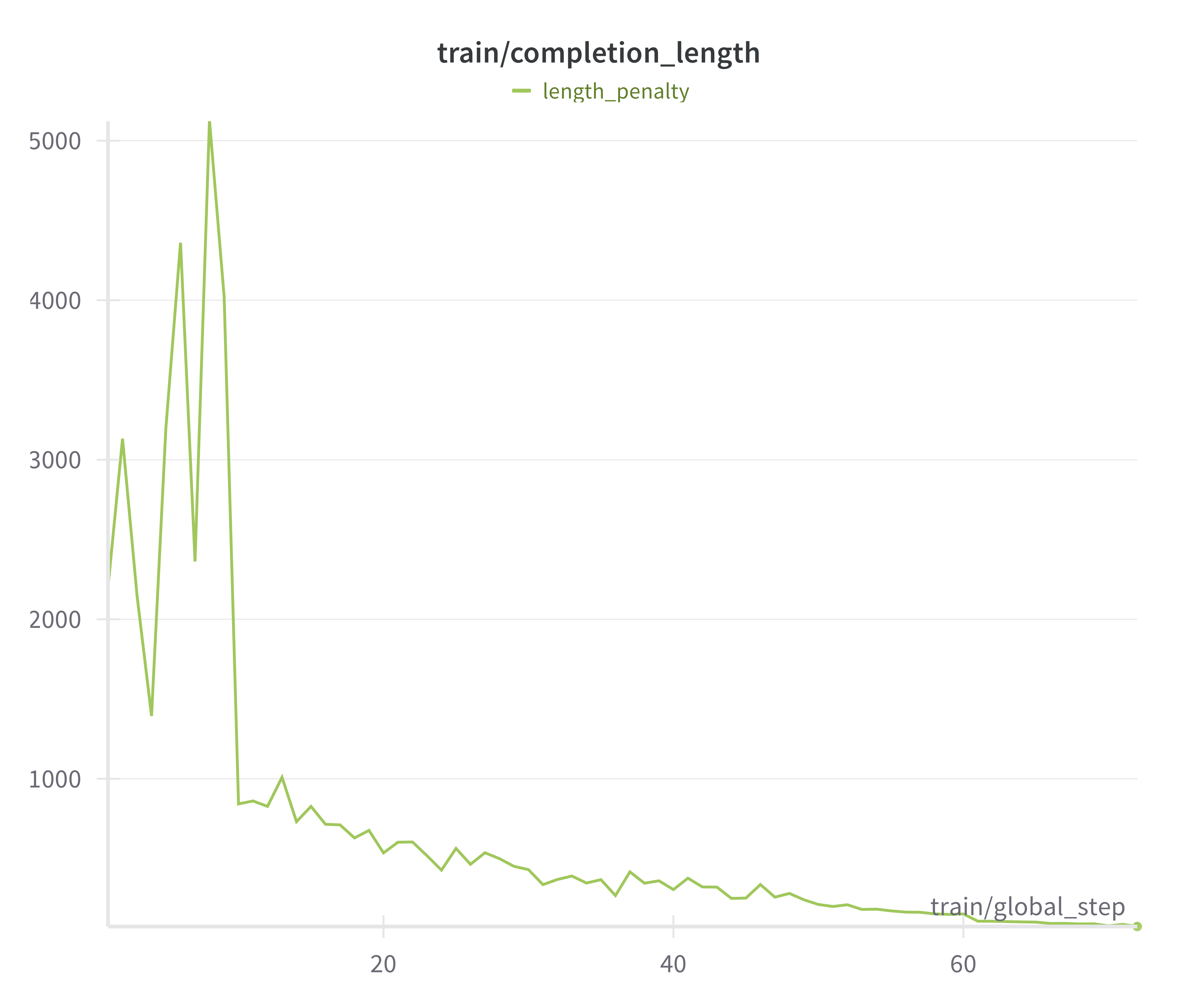

A key challenge identified was model instability, leading to nonsensical outputs, which was mitigated by regularizing reward influx and utilizing proxy indicators of instability. Reward hacking was an issue, particularly with simpler base models, which attempted to exploit evaluation checks instead of improving kernel performance genuinely. Addressing these required stricter enforcement of output constraints and initial conditions.

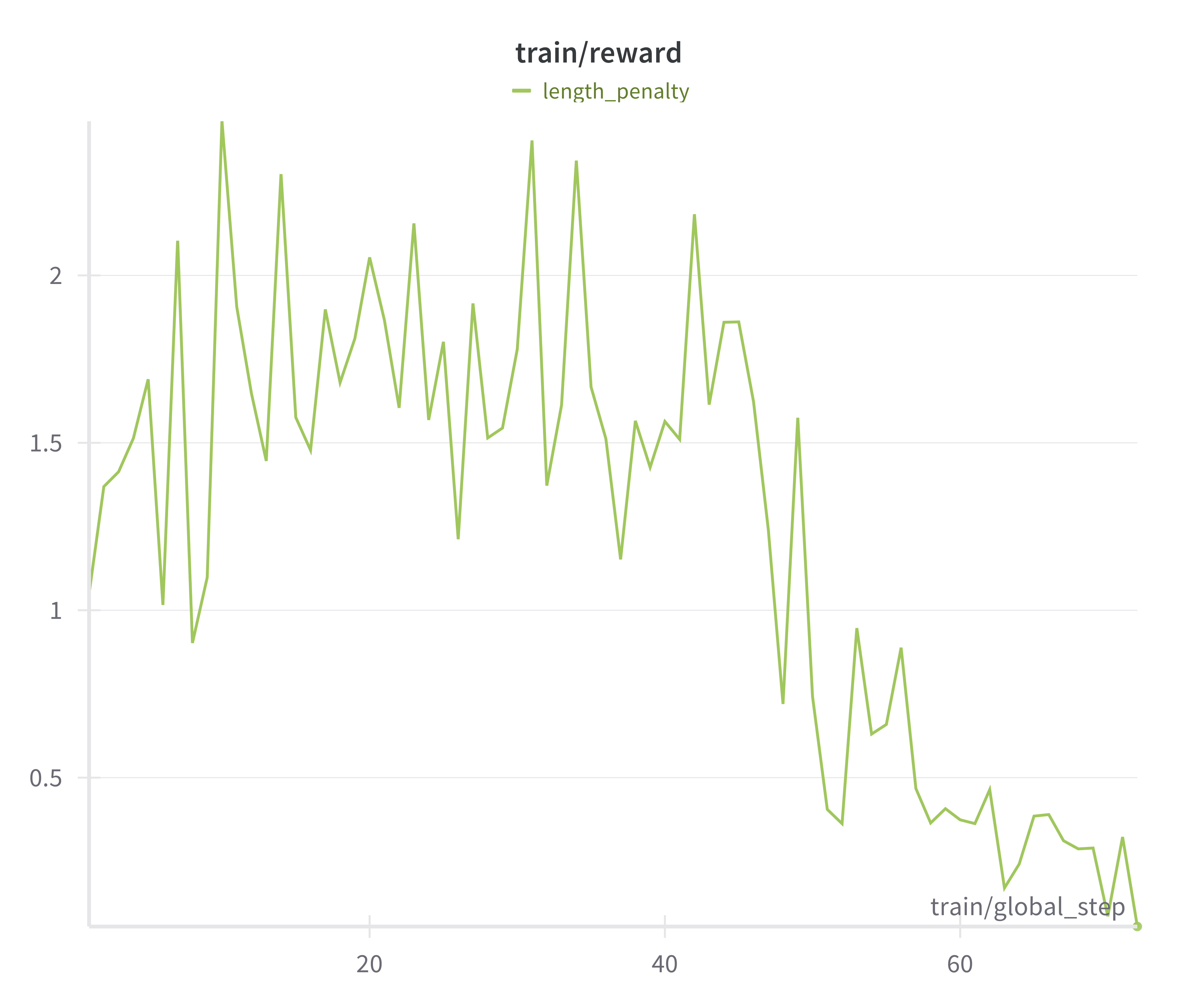

Figure 4: Training reward with correctness weighting of 1, performance/speedup weighting of 1.

Conclusion

Kevin showcases a novel application of multi-turn RL frameworks in CUDA kernel generation and optimization, reinforcing the importance of integrating iterative software development processes into AI model training. Future work may enhance this framework with value networks and sophisticated search strategies like beam search, enabling further optimization of compute-intensive tasks. By addressing real-world applicability, Kevin paves the way for autonomous, data-efficient AI systems capable of complex engineering tasks.

Figure 5: Training Reward collapses when including length penalty as part of reward.

The paper provides a comprehensive blend of technical solutions and theoretical considerations that together formulate an effective model for a complex coding challenge.