- The paper presents a zero-shot pipeline that synthesizes high-DoF articulated objects from static inputs without relying on curated motion data.

- It leverages part-aware mesh recovery, Monte Carlo Tree Search for kinematic tree inference, and DW-CAVL optimization to precisely estimate joint parameters.

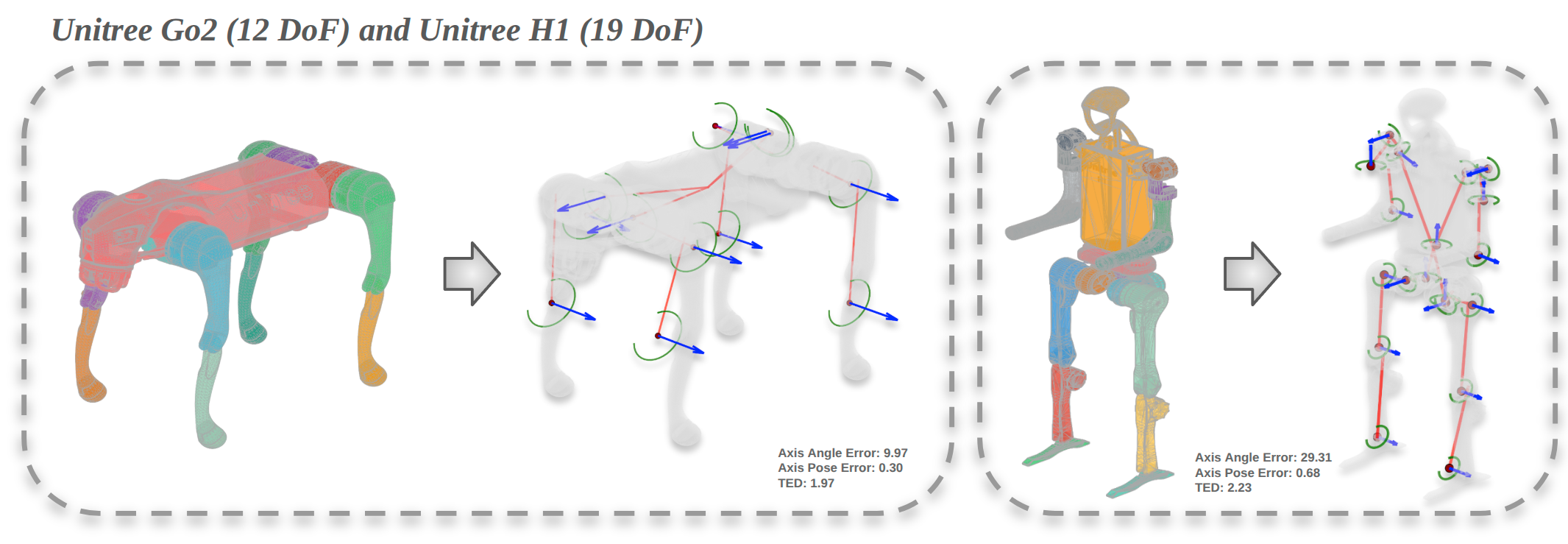

- Evaluations on PartNet-Mobility and robot platforms demonstrate reduced joint error metrics and successful deployment in simulation and real-world manipulation tasks.

Kinematify: Open-Vocabulary Synthesis of High-DoF Articulated Objects

Motivation and Contributions

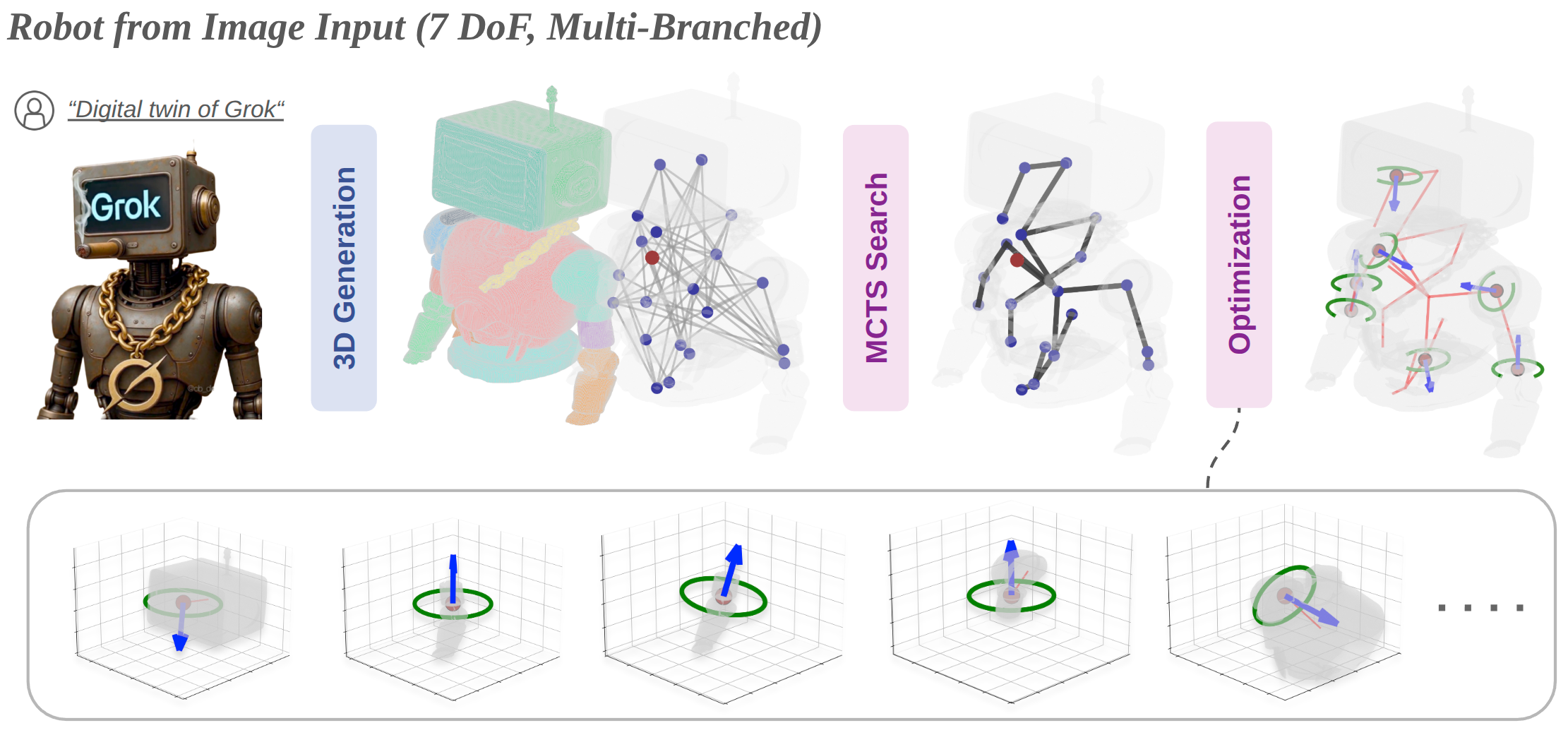

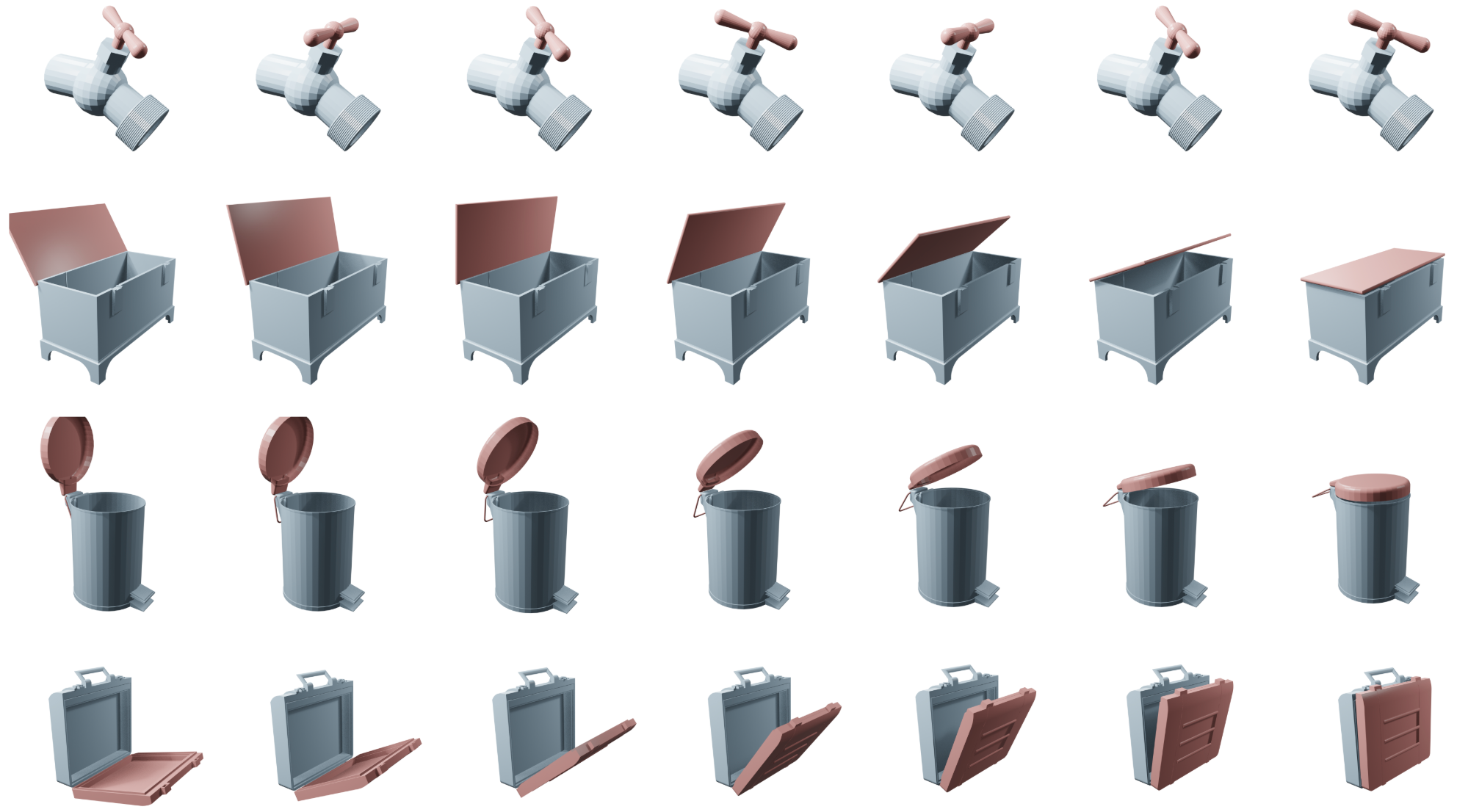

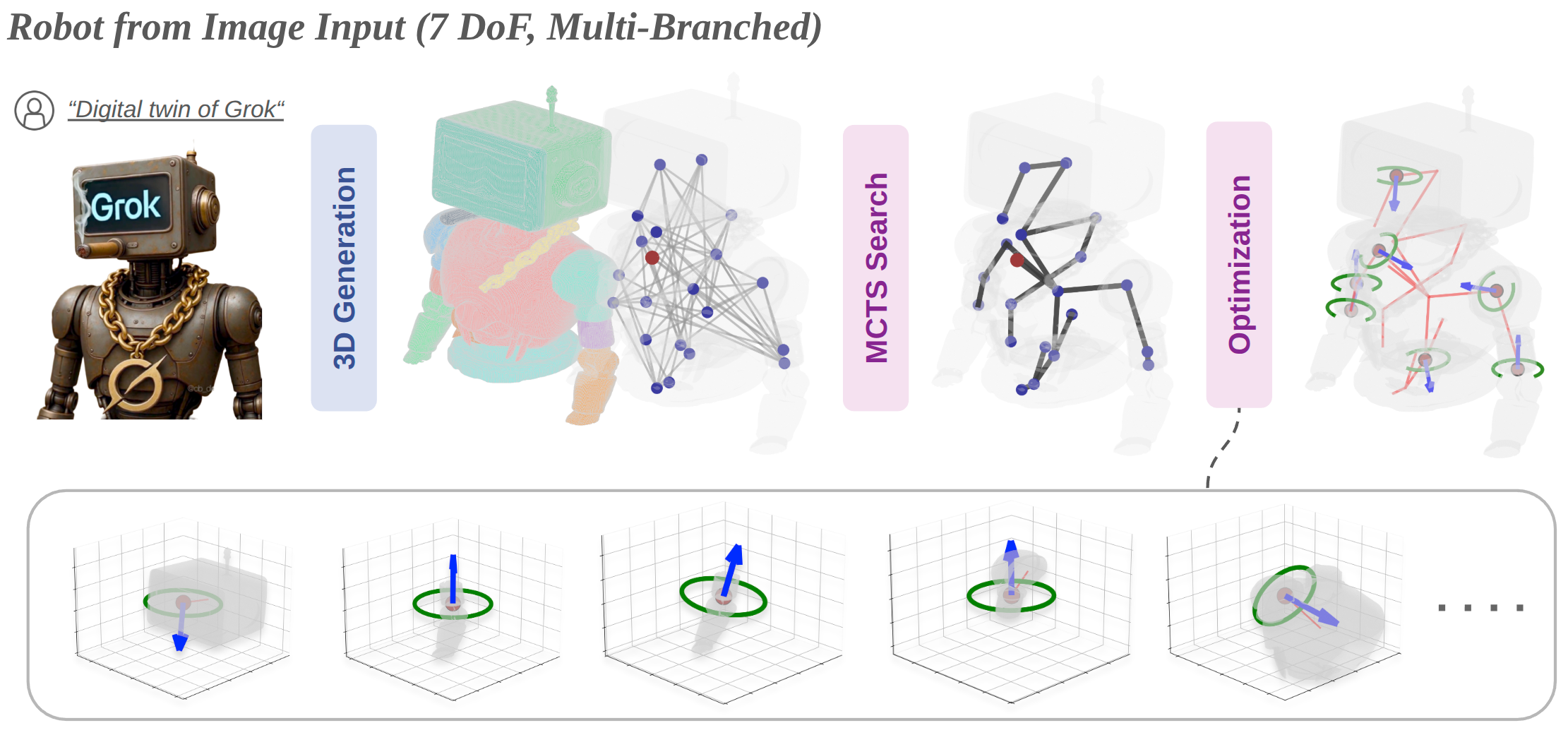

Kinematify introduces a zero-shot pipeline for synthesizing articulated objects and robots with high degrees of freedom (DoF) directly from a single RGB image or a textual description. The method removes the dependence on motion data or curated articulation priors common in prior work. It addresses two central challenges: (i) inferring multi-branched kinematic topologies for high-DoF objects, and (ii) estimating joint parameters from static geometry. The core innovations are part-aware 3D foundation models for mesh reconstruction, Monte Carlo Tree Search (MCTS) with structural priors for kinematic tree inference, and geometry-driven optimization (DW-CAVL) for joint parameter recovery. The output is functionally valid and physically consistent, and URDF generation enables direct deployment on simulation and robotics platforms.

Pipeline Overview

The pipeline comprises four stages: part-aware mesh recovery, contact graph construction, kinematic tree inference, and joint parameter optimization.

A 3D foundation model generates a segmented mesh from image or text input. The contact graph captures candidate relations between parts using signed distance fields (SDFs). MCTS infers a kinematic tree, resolving attachment ambiguities via reward terms that encode hierarchy, symmetry, structural regularity, static stability, and contact strength. Joint types are predicted by vision-language models (VLMs), and joint parameters are optimized with the DW-CAVL objective, which leverages near-contact geometry.

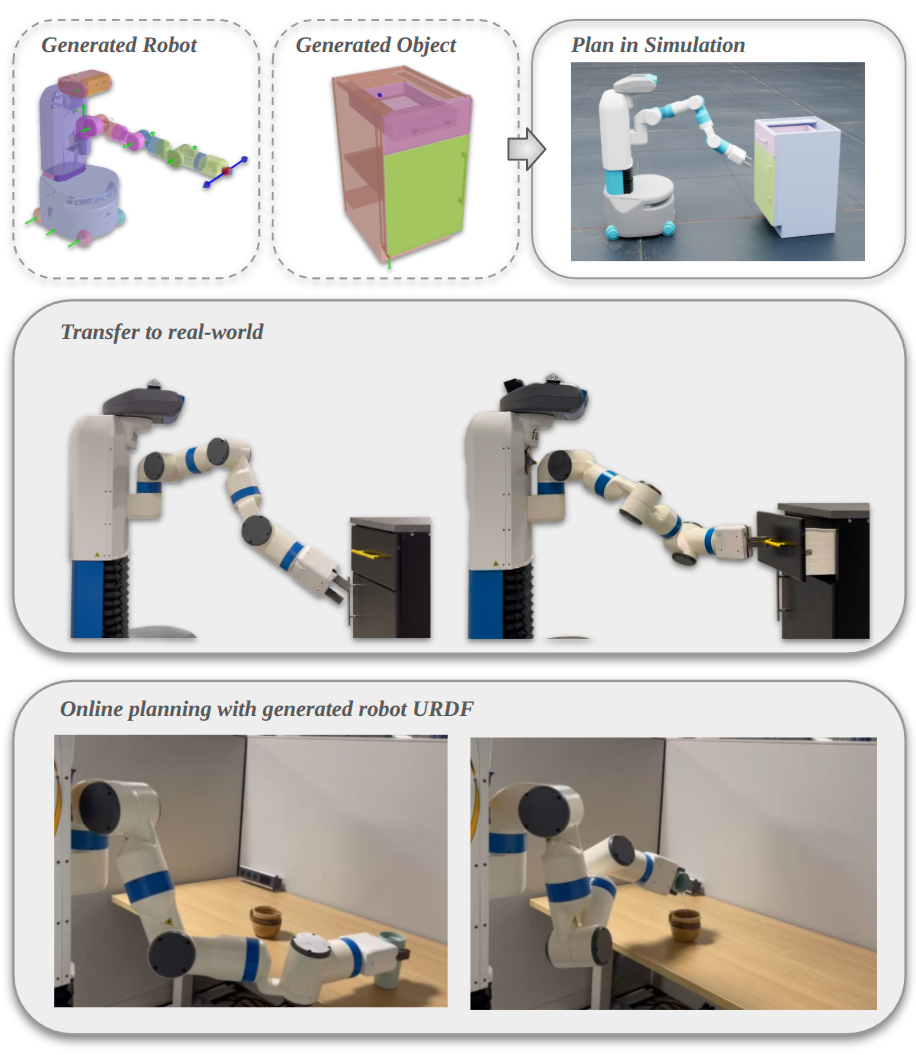

Figure 1: Pipeline of Kinematify for recovering articulated robots from a single RGB image, culminating in optimization of revolute axes and joint parameters via DW-CAVL.

Part segmentation employs part-aware 3D foundation models to construct meshes with robust geometric fidelity. SDFs are trained per part and mutual surface proximity is computed to determine contact, forming the edge structure of the contact graph. The segmentation process discards parts with insufficient geometric spread, mitigating false positives and ensuring structural coherence.
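The contact-graph step can be illustrated with a minimal sketch. It assumes each part is available as a sampled surface point set and replaces the per-part SDF query with a brute-force nearest-point distance; the function names and the thresholds `contact_tol` and `min_spread` are hypothetical and are not taken from the paper.

```python
import numpy as np

def min_surface_distance(points_a, points_b):
    """Smallest Euclidean distance between two sampled surface point sets."""
    diff = points_a[:, None, :] - points_b[None, :, :]
    return float(np.sqrt((diff ** 2).sum(axis=-1)).min())

def build_contact_graph(parts, contact_tol=0.01, min_spread=1e-3):
    """Return (kept_names, edges), where edges maps (a, b) -> proximity distance.

    contact_tol and min_spread are illustrative thresholds (assumptions).
    """
    # Discard parts whose bounding box is too small along every axis.
    kept = {
        name: pts for name, pts in parts.items()
        if (pts.max(axis=0) - pts.min(axis=0)).max() >= min_spread
    }
    names = sorted(kept)
    edges = {}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            d = min_surface_distance(kept[a], kept[b])
            if d <= contact_tol:  # parts close enough to count as touching
                edges[(a, b)] = d
    return names, edges

# Toy parts: a base, a door almost touching it, a distant lid, a tiny speck.
parts = {
    "base": np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.1]]),
    "door": np.array([[1.005, 0.0, 0.0], [1.005, 1.0, 0.0], [1.005, 0.0, 1.0]]),
    "lid": np.array([[0.0, 0.0, 5.0], [1.0, 0.0, 5.0], [0.0, 1.0, 5.0]]),
    "speck": np.array([[0.5, 0.5, 0.5], [0.5, 0.5, 0.5002]]),
}
names, edges = build_contact_graph(parts)
print(names, edges)
```

In this toy input the speck is dropped for insufficient spread and only the base and door are linked. A real implementation would query learned or analytic SDFs rather than compare raw points pairwise.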

Kinematic Tree Inference via Monte Carlo Tree Search

The kinematic inference stage uses MCTS with an objective that balances five reward terms: structural regularity (depth, degree), static stability (center-of-mass support), contact strength, symmetry (within discovered clusters), and hierarchy (penalizing children larger than their parents). BFS initialization orients the contact graph, and constraints based on part symmetry prohibit spurious intra-cluster links. Rollouts greedily complete the tree, and terminal rewards are backed up through the search tree to guide selection. This search resolves multi-branch and symmetry ambiguities that defeat greedy strategies.
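The following sketch illustrates only the BFS initialization and a toy two-term reward (hierarchy and contact strength); the MCTS loop itself and the other three reward terms are omitted. The root choice (largest part), the reward weights, and all part names and numbers are assumptions made for illustration.

```python
from collections import deque

def bfs_orient(edges, root):
    """Orient an undirected contact graph into a child -> parent map via BFS."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    parent = {root: None}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for nxt in sorted(neighbors.get(node, ())):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)
    return parent

def tree_reward(parent, volume, strength, w_hier=1.0, w_contact=1.0):
    """Toy reward: penalize children larger than parents, reward strong contacts."""
    score = 0.0
    for child, par in parent.items():
        if par is None:
            continue
        if volume[child] > volume[par]:
            score -= w_hier
        score += w_contact * strength[frozenset((child, par))]
    return score

# Hypothetical arm with one spurious torso-hand contact.
edges = [("torso", "upper_arm"), ("upper_arm", "forearm"),
         ("forearm", "hand"), ("torso", "hand")]
volume = {"torso": 10.0, "upper_arm": 3.0, "forearm": 2.0, "hand": 1.0}
strength = {frozenset(e): s for e, s in zip(edges, [0.9, 0.8, 0.7, 0.1])}

root = max(volume, key=volume.get)  # assumption: the largest part is the root
bfs_tree = bfs_orient(edges, root)
chain_tree = {"torso": None, "upper_arm": "torso",
              "forearm": "upper_arm", "hand": "forearm"}

r_bfs = tree_reward(bfs_tree, volume, strength)
r_chain = tree_reward(chain_tree, volume, strength)
print(bfs_tree, r_bfs, r_chain)
```

Here plain BFS attaches the hand directly to the torso through the weak spurious contact, while the serial chain scores higher under the toy reward. This is the kind of ambiguity a reward-guided search is meant to resolve.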

Geometry-Driven Joint Parameter Optimization

Joint reasoning synthesizes orthographic view sets for type prediction and hinge/pivot parameter estimation. Weighted PCA and contact normals inform candidate axes and pivots. Differentiable rigid motions are parameterized for revolute and prismatic joints. The DW-CAVL loss comprises three terms: consistency (preserving near-contact), collision (minimizing interpenetration under motion), and pivot regularization (anchoring to contact centroid). Candidate axes are ranked and refined, with top-scoring parameters selected for the final URDF export.
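A minimal sketch of the revolute case follows, assuming near-contact points are given as an array. It proposes candidate axes with weighted PCA, applies a rigid rotation via Rodrigues' formula, and ranks candidates with a simplified objective containing only a consistency term and a pivot regularizer. The collision term is omitted, and the exact form of the DW-CAVL loss is not given in this summary, so `revolute_objective` and its weight `w_pivot` are hypothetical stand-ins.

```python
import numpy as np

def rotate_about_axis(points, axis, pivot, angle):
    """Rotate points by `angle` radians about the line through `pivot` along `axis`."""
    k = axis / np.linalg.norm(axis)
    p = points - pivot
    cos_a, sin_a = np.cos(angle), np.sin(angle)
    # Rodrigues' rotation formula, applied row-wise.
    rotated = (p * cos_a
               + np.cross(k, p) * sin_a
               + np.outer(p @ k, k) * (1.0 - cos_a))
    return rotated + pivot

def weighted_pca_axes(points, weights):
    """Weighted mean and principal directions (rows, by decreasing variance)."""
    w = weights / weights.sum()
    mean = w @ points
    centered = points - mean
    cov = (centered * w[:, None]).T @ centered
    _, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    return mean, eigvecs[:, ::-1].T

def revolute_objective(axis, pivot, contact_pts, contact_centroid, angles, w_pivot=0.1):
    """Simplified stand-in: contact points should stay in place under rotation
    (consistency) and the pivot should stay near the contact centroid."""
    consistency = 0.0
    for a in angles:
        moved = rotate_about_axis(contact_pts, axis, pivot, a)
        consistency += np.mean(np.sum((moved - contact_pts) ** 2, axis=1))
    consistency /= len(angles)
    pivot_reg = np.sum((pivot - contact_centroid) ** 2)
    return float(consistency + w_pivot * pivot_reg)

# Toy hinge: contact points lie along a vertical line at x = 1.
contact_pts = np.array([[1.0, 0.0, 0.0], [1.0, 0.0, 0.5], [1.0, 0.0, 1.0]])
centroid, candidates = weighted_pca_axes(contact_pts, np.ones(len(contact_pts)))
angles = np.linspace(-0.5, 0.5, 5)
losses = [revolute_objective(ax, centroid, contact_pts, centroid, angles)
          for ax in candidates]
best_axis = candidates[int(np.argmin(losses))]
quarter_turn = rotate_about_axis(np.array([[1.0, 0.0, 0.0]]),
                                 np.array([0.0, 0.0, 1.0]), np.zeros(3), np.pi / 2)
print(best_axis, losses)
```

In this toy case the selected axis is parallel to the hinge line, because rotating about it leaves the contact points fixed. A fuller version would make the axis and pivot differentiable parameters and add an interpenetration penalty.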

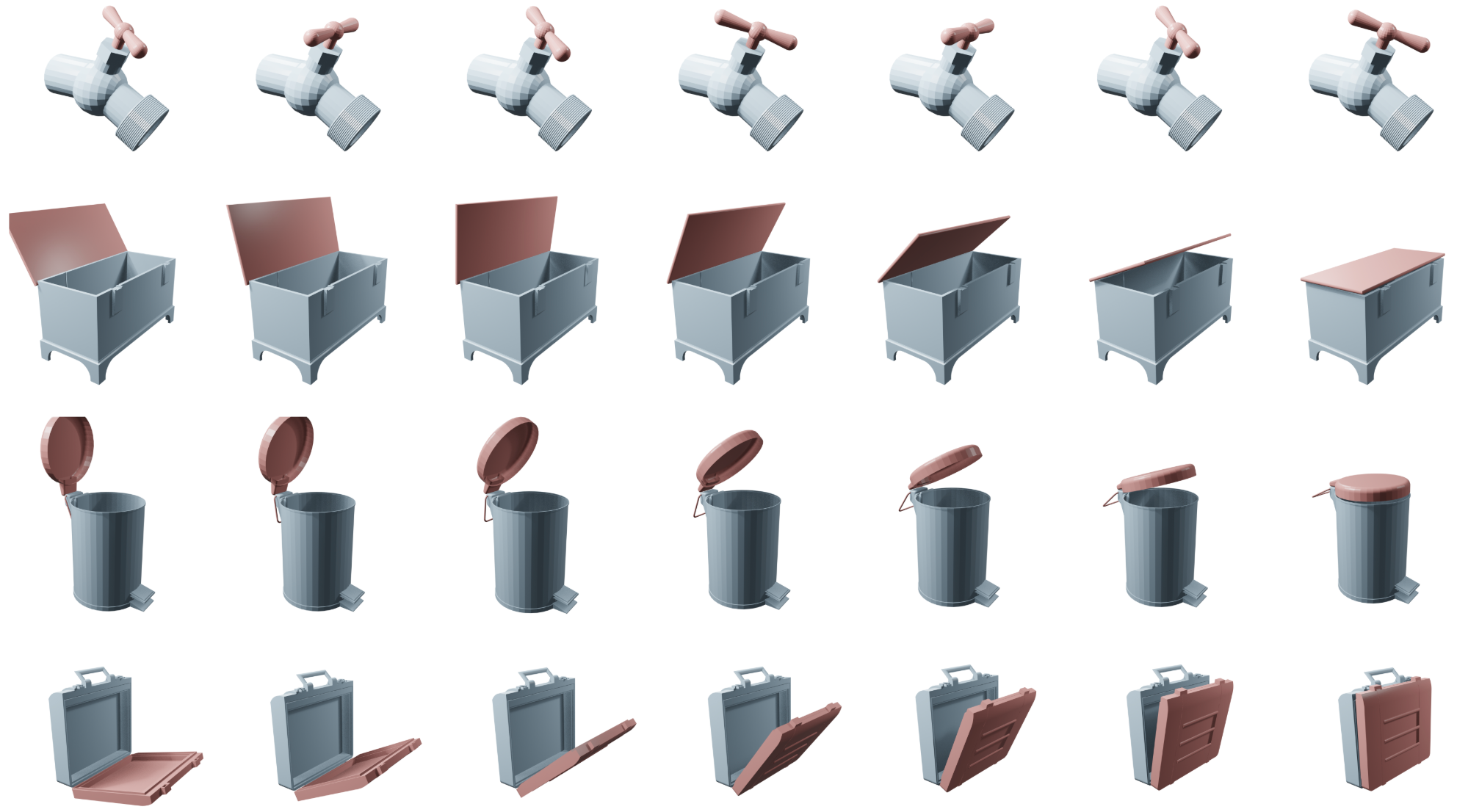

Figure 2: Examples of articulated objects synthesized by Kinematify exhibiting diverse joint configurations.

Experimental Evaluation and Numerical Results

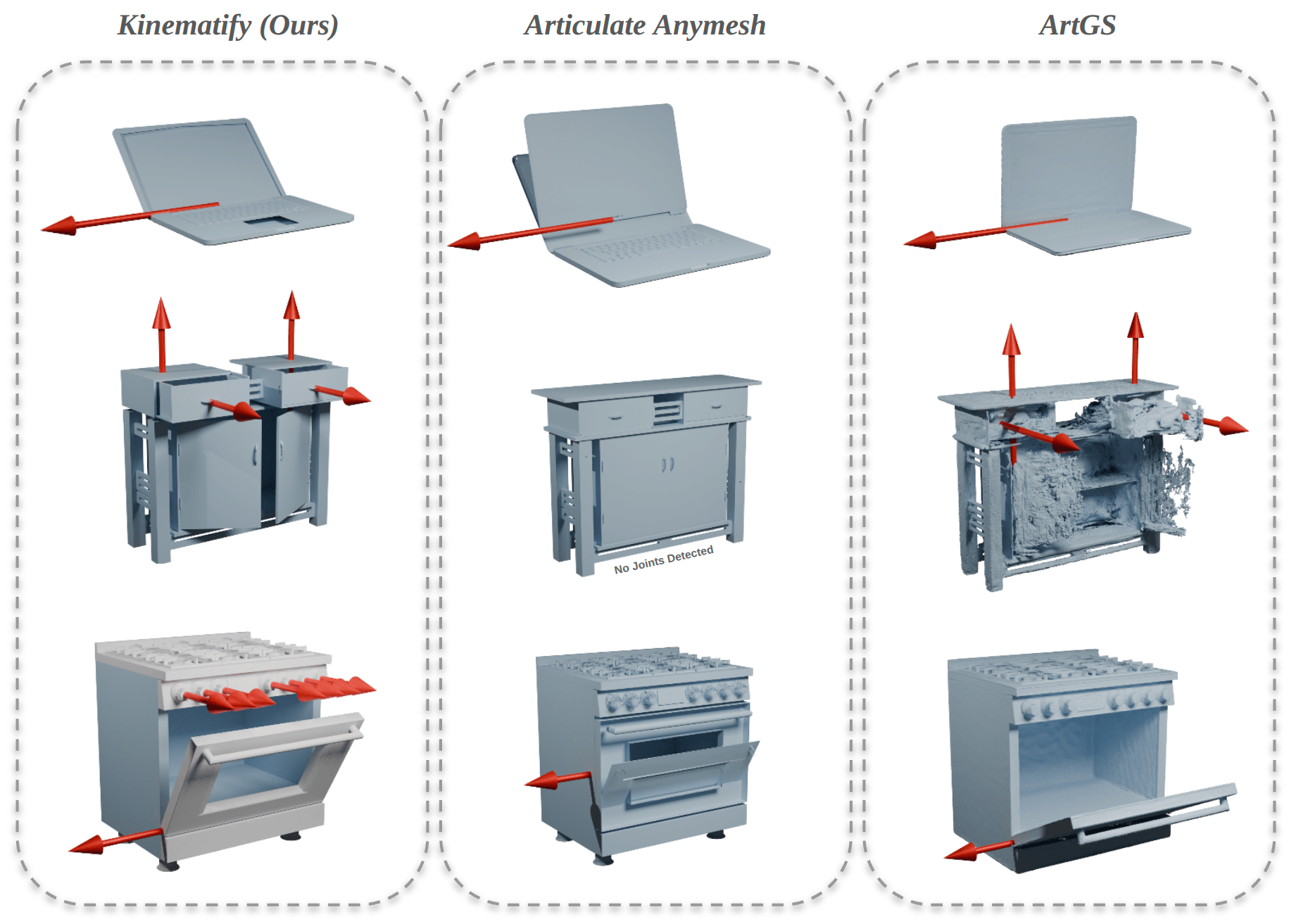

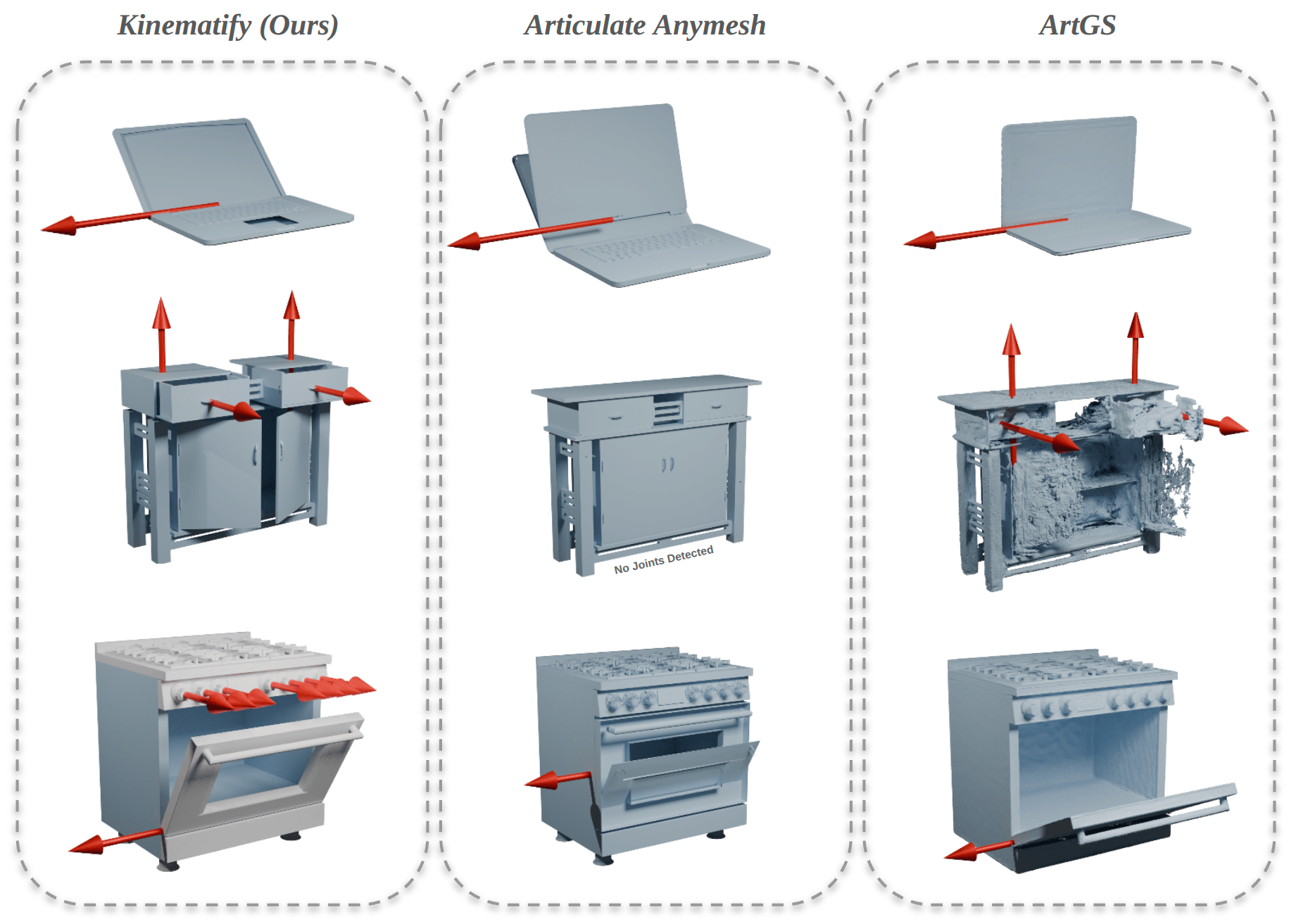

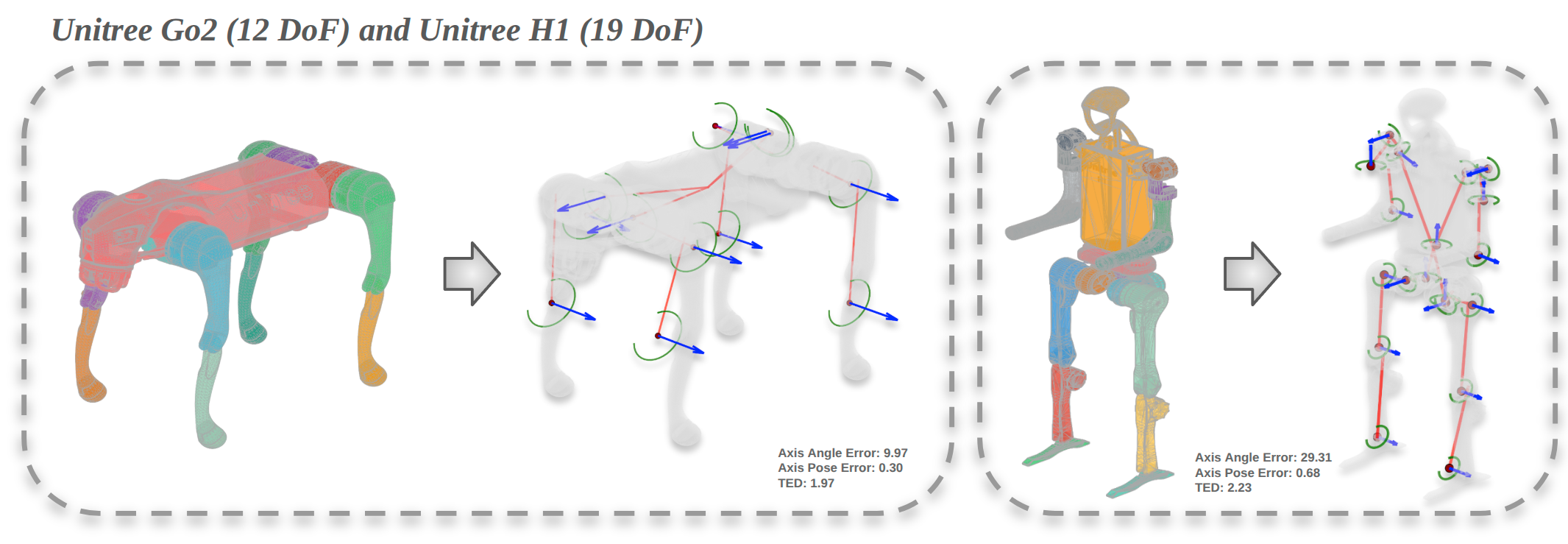

Kinematify is evaluated on PartNet-Mobility and six robot platforms spanning 1–19 DoFs. Metrics include axis angle error, axis position error, and tree edit distance (TED). Against the baselines Articulate Anymesh and ArtGS, Kinematify achieves the lowest axis angle error and competitive axis position error on everyday objects. On robot platforms, TED is significantly reduced, indicating better recovery of the kinematic structure. End-to-end evaluations from RGB input show a modest increase in error for robots, which have tighter tolerances, while results on everyday objects remain robust.
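The two joint-axis metrics can be sketched as follows. The summary does not give the paper's exact definitions, so this assumes common ones: a sign-invariant angle between axis directions, and the shortest distance between the predicted and ground-truth axis lines. The function names are hypothetical.

```python
import numpy as np

def axis_angle_error_deg(axis_pred, axis_gt):
    """Angle in degrees between two axis directions, ignoring sign."""
    a = axis_pred / np.linalg.norm(axis_pred)
    b = axis_gt / np.linalg.norm(axis_gt)
    cos = np.clip(abs(a @ b), 0.0, 1.0)
    return float(np.degrees(np.arccos(cos)))

def axis_position_error(pivot_pred, axis_pred, pivot_gt, axis_gt, eps=1e-8):
    """Shortest distance between two 3D lines, each given by a point and a direction."""
    a = axis_pred / np.linalg.norm(axis_pred)
    b = axis_gt / np.linalg.norm(axis_gt)
    offset = pivot_pred - pivot_gt
    n = np.cross(a, b)
    n_norm = np.linalg.norm(n)
    if n_norm < eps:
        # Parallel lines: distance from one pivot to the other line.
        return float(np.linalg.norm(np.cross(offset, b)))
    return float(abs(offset @ n) / n_norm)

z = np.array([0.0, 0.0, 1.0])
flipped = axis_angle_error_deg(z, np.array([0.0, 0.0, -2.0]))
diagonal = axis_angle_error_deg(np.array([1.0, 0.0, 0.0]), np.array([1.0, 1.0, 0.0]))
parallel = axis_position_error(np.array([0.3, 0.4, 7.0]), z, np.zeros(3), z)
skew = axis_position_error(np.zeros(3), np.array([1.0, 0.0, 0.0]),
                           np.array([0.0, 0.0, 2.0]), np.array([0.0, 1.0, 0.0]))
print(flipped, diagonal, parallel, skew)
```

Ignoring the sign of the axis treats a hinge and its flipped direction as the same joint. This choice is reasonable when joint limits are not compared, but it is an assumption here.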

Figure 3: Qualitative comparison of articulation recovery across Kinematify, Articulate Anymesh, and ArtGS, highlighting joint direction accuracy (red lines).

Figure 4: Demonstrations on high-DoF robots: Unitree Go2 (12 DoF) and Unitree H1 (19 DoF), showcasing segmentation, tree inference, and optimized joint parameters.

Ablation Study and Component Analysis

Ablations demonstrate the necessity of both MCTS-based topology inference and DW-CAVL optimization. Replacing MCTS with BFS yields higher TED due to suboptimal tree regularity and symmetric attachment errors. Removing the pivot regularization (anchor) term from DW-CAVL causes significant degradation in joint parameter accuracy, as pivots drift from their true centers under collision-only optimization. The full pipeline achieves the best balance between topology and joint parameter estimation.

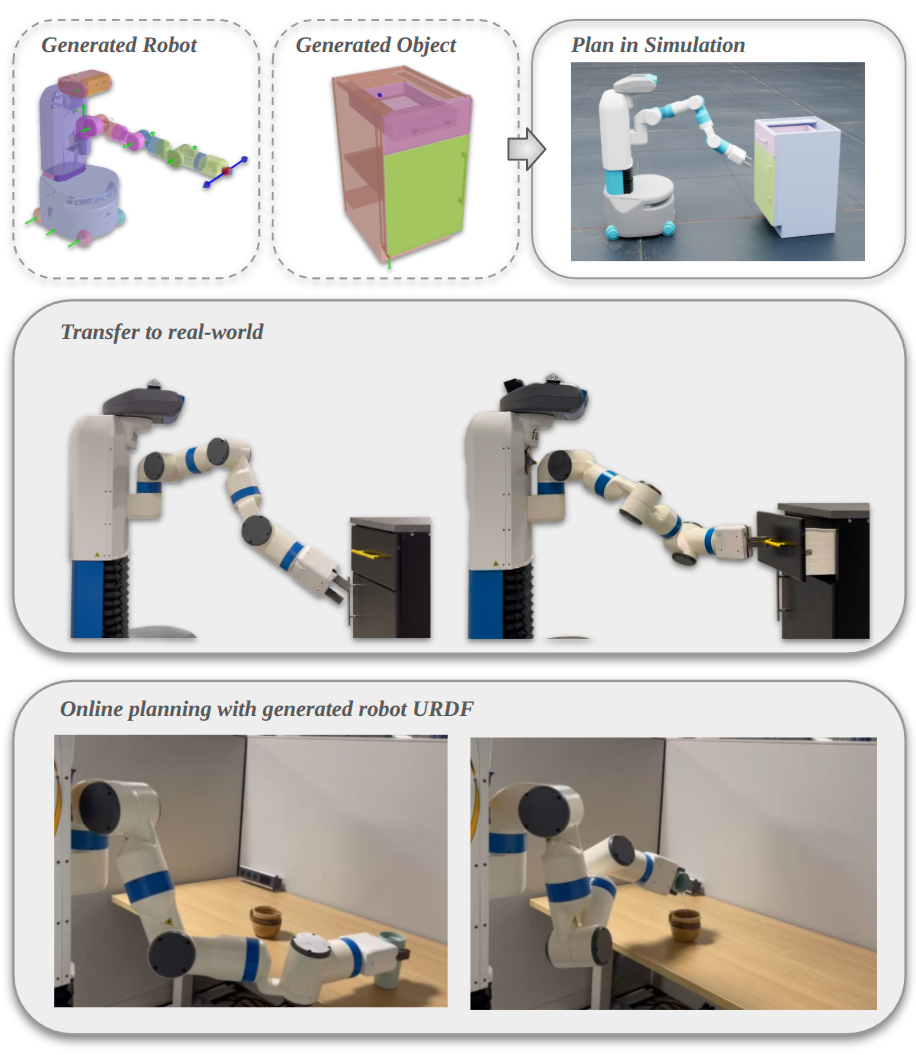

Real-World Deployment and Practical Implications

Exported URDFs enable direct simulation and execution of manipulation tasks in Isaac Sim and on real hardware (e.g., Fetch robot). The pipeline supports online planning with MoveIt, as validated in drawer-opening and pouring tasks without collision, verifying physical consistency of inferred kinematics. Kinematify thus streamlines articulation modeling for downstream robotics applications, including policy learning and manipulation.
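As an illustration of the export step, the sketch below writes a minimal URDF containing two links and one revolute joint, using only the Python standard library. The dictionary layout, link names, and the limit, effort, and velocity values are hypothetical. The links carry no visual, collision, or inertial data, which a simulator would normally require.

```python
import xml.etree.ElementTree as ET

def _fmt(vec):
    """Format a 3-vector as a space-separated URDF attribute string."""
    return " ".join(f"{v:g}" for v in vec)

def build_urdf(name, links, joints):
    """Build a minimal URDF string from link names and joint dictionaries."""
    robot = ET.Element("robot", name=name)
    for link in links:
        ET.SubElement(robot, "link", name=link)
    for j in joints:
        joint = ET.SubElement(robot, "joint", name=j["name"], type=j["type"])
        ET.SubElement(joint, "parent", link=j["parent"])
        ET.SubElement(joint, "child", link=j["child"])
        # Joint frame origin, expressed in the parent link frame.
        ET.SubElement(joint, "origin", xyz=_fmt(j["pivot"]), rpy="0 0 0")
        # Joint axis, expressed in the joint frame.
        ET.SubElement(joint, "axis", xyz=_fmt(j["axis"]))
        lower, upper = j["limits"]
        ET.SubElement(joint, "limit", lower=str(lower), upper=str(upper),
                      effort="10", velocity="1")  # placeholder values
    return ET.tostring(robot, encoding="unicode")

# Hypothetical cabinet with one hinged door.
urdf = build_urdf(
    "cabinet",
    links=["base", "door"],
    joints=[{
        "name": "door_hinge", "type": "revolute",
        "parent": "base", "child": "door",
        "pivot": (1.0, 0.0, 0.5), "axis": (0.0, 0.0, 1.0),
        "limits": (0.0, 1.57),
    }],
)
print(urdf)
```

The pivot and axis estimated by the joint optimization map onto the `origin` and `axis` elements of each joint, which is what planners and simulators read from the file.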

Figure 5: Kinematify-generated URDFs enable cross-platform planning and execution for robots and objects in simulation and real environments.

Limitations and Future Outlook

The method presupposes reliable segmentation and an accurate contact graph. Spurious decorative geometry or errant mesh seams can mislead tree inference and attachment. Weighting of structural priors (especially symmetry) is a critical mitigation for false contacts but remains heuristic. Potential future directions include jointly refining part segmentation and contact reliability, adaptive reward schedules in MCTS based on cluster variance, and end-to-end learning of structural priors.

Conclusion

Kinematify is an automated, open-vocabulary pipeline for high-DoF articulated object synthesis from static inputs, removing the dependence on curated datasets or motion sequences. By integrating part-aware mesh recovery, reward-driven MCTS, and geometry-driven joint optimization (DW-CAVL), the method achieves greater accuracy in joint estimation and kinematic structure reconstruction, directly enabling physical simulation and manipulation. Kinematify advances the practical automation of articulation modeling and lays groundwork for broader generalization in robotic self-perception and interaction.