- The paper shows that Transformers trained on arithmetic problems with context-dependent token meanings develop symbolic reasoning mechanisms.

- It identifies key mechanisms such as commutative copying, identity recognition, and closure-based cancellation to handle variable token assignments.

- Model performance scaled with context length and generalized well to unseen algebraic groups, indicating robust abstract reasoning capabilities.

In-Context Algebra Research Summary

Introduction

The paper "In-Context Algebra" (2512.16902) investigates the mechanisms that Transformer models develop when trained to solve arithmetic problems in which the meanings of tokens are not fixed but vary from one sequence to the next. This setup contrasts with traditional studies, where token embeddings carry fixed meanings. Although the task requires reasoning about variable symbols in finite algebraic groups, the models achieve high accuracy and generalize to unseen algebraic groups. The findings depart from previously observed geometric representations and point instead to symbolic reasoning strategies built on in-context token interactions.

Task Description

The task involves solving arithmetic problems by manipulating variable tokens whose meanings are context-dependent. It incorporates sequences of algebraic group operations where the vocabulary and semantics of tokens vary across sequences.

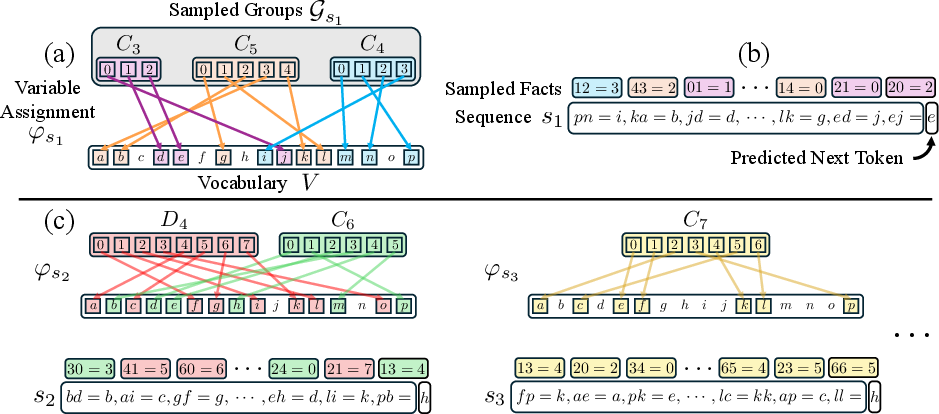

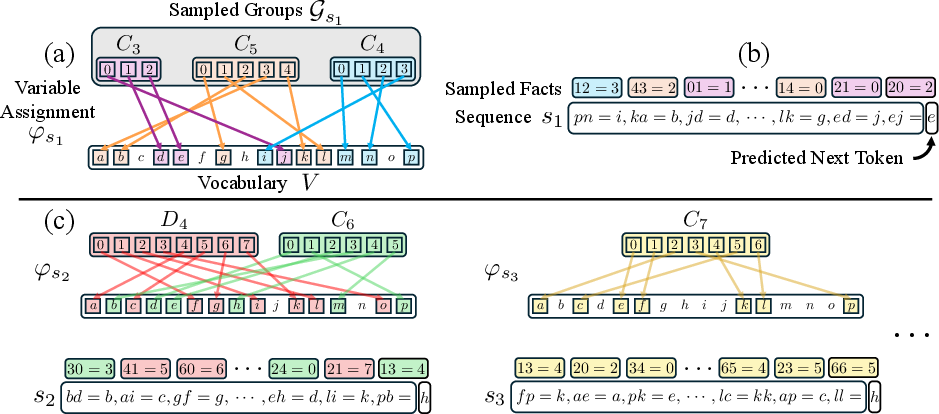

Figure 1: Data generation proceeds through variable assignment and sequence generation, with sample diversity ensuring that token meanings vary across sequences.

Each sequence presents a mix of facts drawn from algebraic operations within sampled groups. Tokens serve purely as variables, forcing the model to infer the structure from relational in-context information rather than from static embeddings. The complexity of the task is increased by randomizing group-to-variable assignments per sequence, demanding abstract reasoning to deduce correct outcomes.
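The generation process described above can be sketched roughly as follows. This is a minimal illustration, not the paper's pipeline: it assumes a single cyclic group Z_n, and the names `cyclic_group_table`, `sample_sequence`, and `vocab` are hypothetical.

```python
import random

def cyclic_group_table(n):
    """Cayley table of the cyclic group Z_n under addition mod n."""
    return [[(a + b) % n for b in range(n)] for a in range(n)]

def sample_sequence(n, vocab, num_facts, rng):
    """Build one sequence of facts with a fresh element-to-token assignment.

    Assumption: facts are sampled uniformly with replacement; the paper's
    actual sampling scheme may differ.
    """
    table = cyclic_group_table(n)
    # A new random assignment per sequence means a token carries no fixed meaning.
    tokens = rng.sample(vocab, n)
    facts = []
    for _ in range(num_facts):
        a, b = rng.randrange(n), rng.randrange(n)
        facts.append((tokens[a], tokens[b], tokens[table[a][b]]))
    return tokens, facts

rng = random.Random(0)
vocab = list("abcdefghijklmnop")  # hypothetical variable-token vocabulary
tokens, facts = sample_sequence(5, vocab, 8, rng)
for x, y, z in facts:
    print(f"{x} * {y} = {z}")
```

Because `tokens` is resampled on every call, the same token can stand for different group elements in different sequences, so the only way to answer a query is to read the relations off the context.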

The Transformers exhibited strong performance that scaled with context length and demonstrated phase transitions indicative of discrete skill acquisition, aligning with prior studies of grokking and gradual learning phases in Transformers.

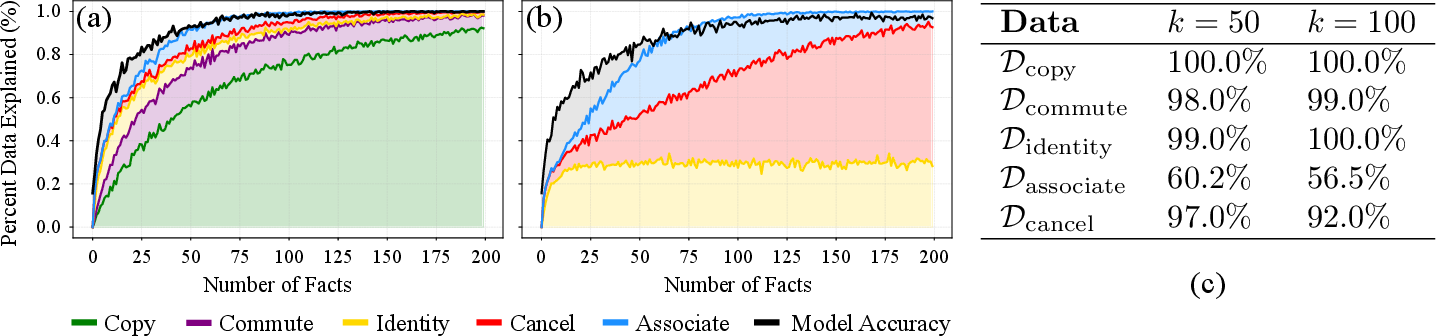

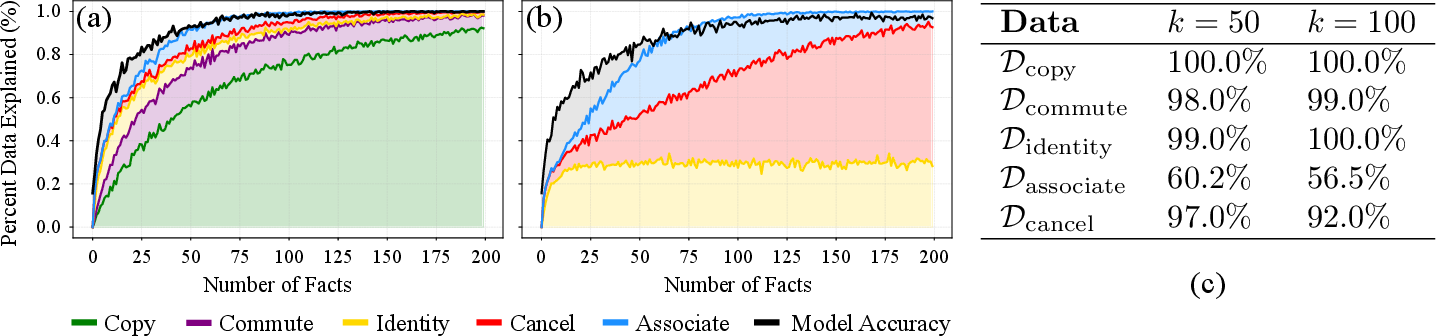

Figure 2: Algorithmic coverage and empirical model performance showing near-perfect accuracy across algorithmic distributions, with unexplained model performance in shaded gray regions.

Accuracy continued to increase with longer sequences, especially for higher-order groups, which require more facts to reach maximum accuracy. Despite these higher requirements, models successfully generalized to unseen groups and maintained performance across varying sequential structures.
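The dependence on group order can be made concrete with a simple count (a back-of-the-envelope sketch, not a result taken from the paper). A group of order $n$ has $n^2$ ordered products, so a context containing $k$ distinct facts directly reveals the fraction

$$\frac{k}{n^2}$$

of the Cayley table. For a fixed context length, this fraction shrinks quadratically as $n$ grows, which is consistent with larger groups needing more facts before accuracy saturates.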

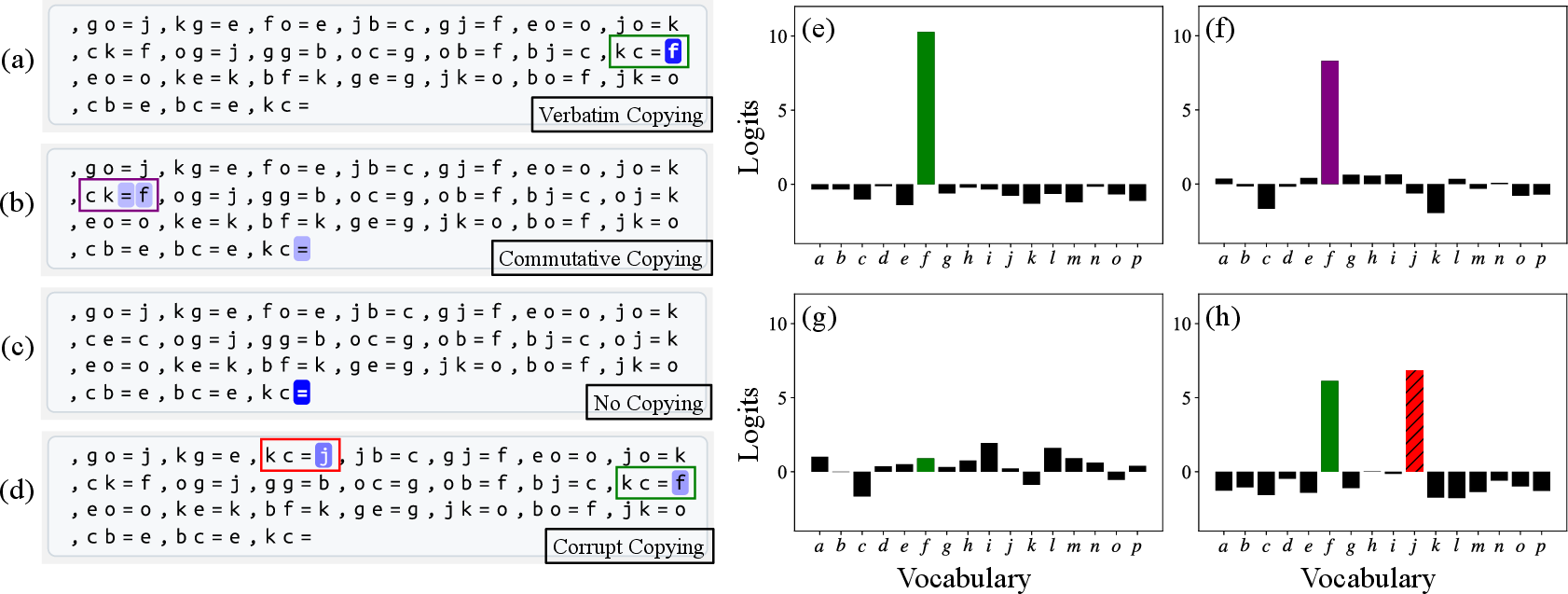

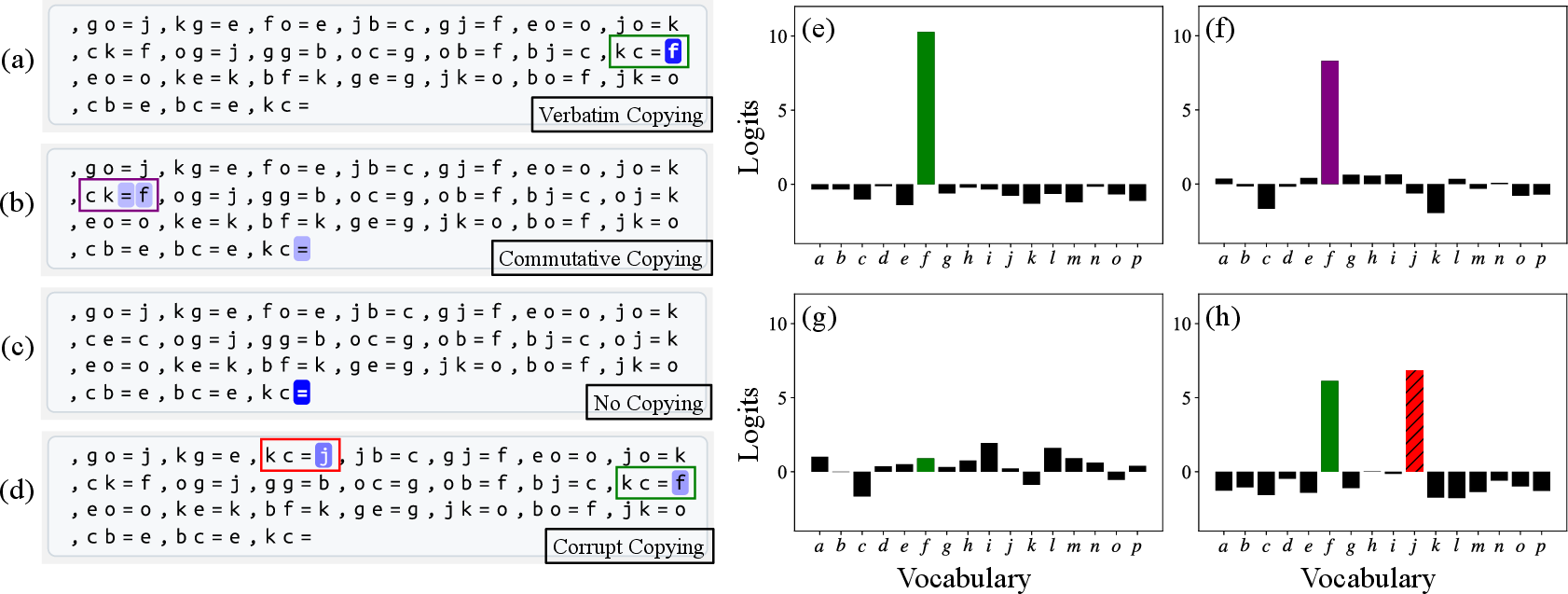

Figure 3: An analysis of the copying mechanism showing direct logit contributions across sequence variations, underlying the model's ability to promote the correct tokens.

Hypothesized Symbolic Mechanisms

Three primary mechanisms were identified in this study:

- Commutative Copying: A mechanism where a specific attention head facilitates the copying of answers by recognizing commutative pairs from context.

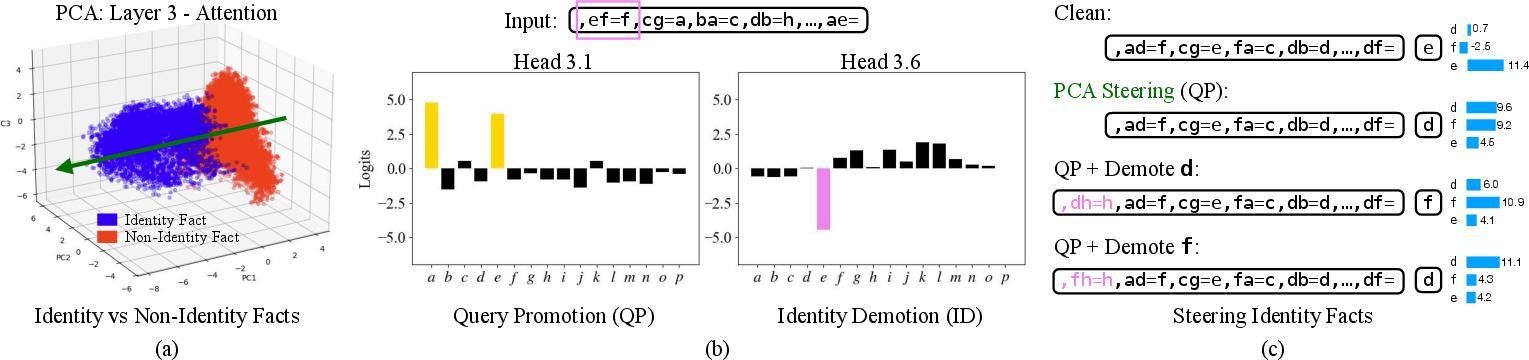

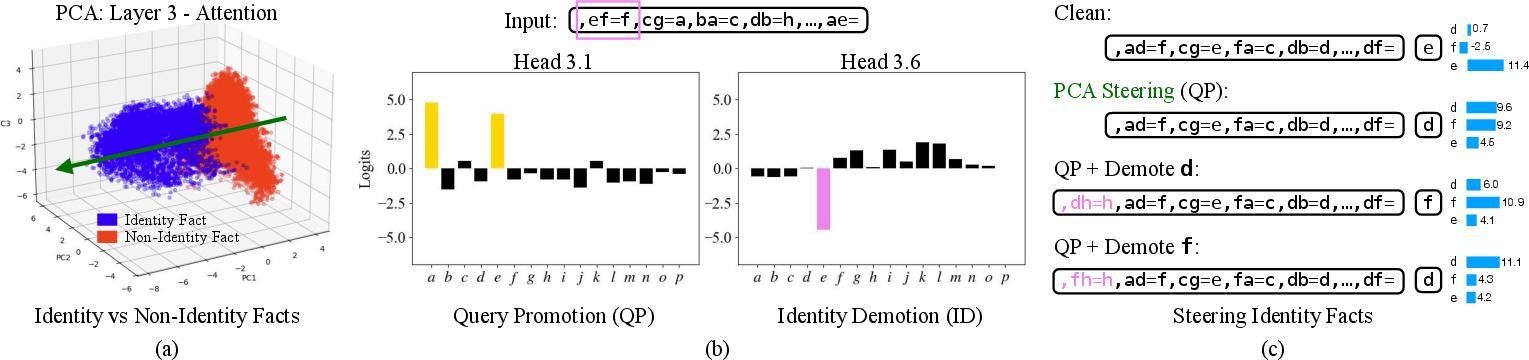

- Identity Element Recognition: In sequences involving identity elements, attention heads distinguish these elements through learned symbolic reasoning, effectively separating identity facts from non-identity ones.

- Closure-Based Cancellation: This involves tracking group membership, where valid answers are constrained by eliminating possibilities using closure and cancellation laws.
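The three mechanisms above can be written out as explicit symbolic rules over a context of facts `(a, b, c)` meaning a * b = c. This is only an illustrative sketch of the rules themselves, not of the attention circuits that implement them; all function names are hypothetical, and `commutative_copy` assumes the group is commutative.

```python
def commutative_copy(facts, x, y):
    """Copy the answer if the context contains x*y=z or y*x=z (commutative groups only)."""
    for a, b, c in facts:
        if (a, b) == (x, y) or (a, b) == (y, x):
            return c
    return None

def find_identity(facts):
    """Return a token shown to be the identity by some fact, else None.

    In a group, a*b=b forces a to be the identity, and a*b=a forces b to be.
    """
    for a, b, c in facts:
        if c == b:
            return a
        if c == a:
            return b
    return None

def identity_answer(facts, x, y):
    """Answer x*y when one operand is a known identity element."""
    e = find_identity(facts)
    if e is None:
        return None
    if x == e:
        return y
    if y == e:
        return x
    return None

def closure_cancellation(facts, x, y):
    """Narrow the candidates for x*y using closure and cancellation.

    Closure: the answer is a group member (approximated here by tokens seen so far,
    so the true answer is missed if it has not appeared in the context).
    Cancellation: x*b=c with b != y rules out c, as does a*y=c with a != x.
    """
    candidates = {t for fact in facts for t in fact} | {x, y}
    for a, b, c in facts:
        if a == x and b != y:
            candidates.discard(c)
        if b == y and a != x:
            candidates.discard(c)
    return candidates

# Z_3 with a hypothetical assignment p=0, q=1, r=2.
facts = [("q", "p", "q"), ("q", "q", "r"), ("r", "q", "p")]
print(commutative_copy(facts, "q", "r"))      # p, copied from r*q=p
print(identity_answer(facts, "p", "r"))       # r, since p is the identity
print(closure_cancellation(facts, "q", "r"))  # {'p'}
```

In this small example each rule is enough on its own to pin down an answer; in longer contexts they would more plausibly act as complementary sources of evidence.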

Implications and Future Directions

The research holds noteworthy implications for symbolic reasoning within Transformer architectures, challenging the prevailing perspective that relies heavily on geometric embeddings. The domain of algebraic structures provides a fertile testing ground for further exploration of symbolic reasoning in neural networks.

Figure 4: PCA decomposition shows clear separation of identity facts, which is crucial for effective identity recognition.

Future research could explore the trade-offs between symbolic and geometric reasoning, particularly when adapting Transformer models to problem settings in which token meanings must be inferred from context. Additionally, extending these findings to larger pre-trained models might yield deeper insights into symbolic computational strategies in neural architectures.

Conclusion

The study provides insight into the symbolic mechanisms developed by Transformers, offering a complementary perspective to work on arithmetic with fixed-meaning tokens. By training models in a setting where token meanings vary by context, the researchers show that neural models can develop abstract reasoning strategies without pre-encoded solutions. The results lay groundwork for future work on symbolic reasoning in neural networks.