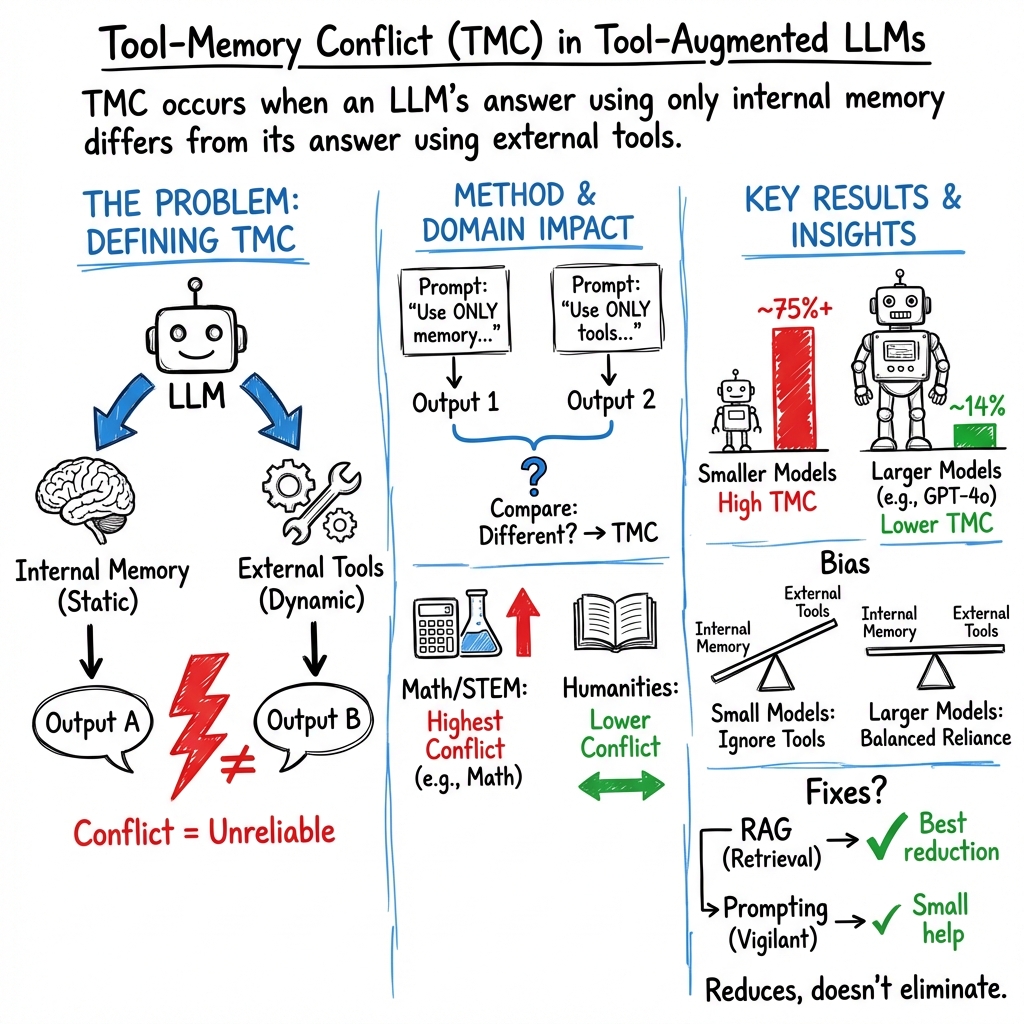

- The paper introduces Tool-Memory Conflict (TMC) as a novel inconsistency between LLMs’ static memory and dynamic tool outputs, particularly in STEM domains.

- It employs extensive experiments to assess when TMC arises, showing that model scale and domain-specific strategies affect conflict resolution efficacy.

- Findings indicate that integrating precise computational modules and retrieval-augmented generation improves reliability in tool-augmented LLM responses.

Introduction

The integration of external tools into LLMs has enhanced their utility across various applications, particularly by extending their capabilities to interact with dynamic and domain-specific information sources. Despite these advantages, tool-augmented LLMs face potential epistemic inconsistencies when outputs from external tools contradict the parametric knowledge embedded in the models. This paper introduces the concept of Tool-Memory Conflict (TMC), a novel knowledge inconsistency in tool-augmented LLMs, focusing on the divergence of internal and external outputs when addressing identical queries, especially in STEM-related tasks.

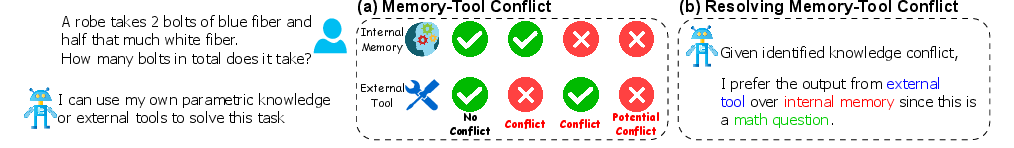

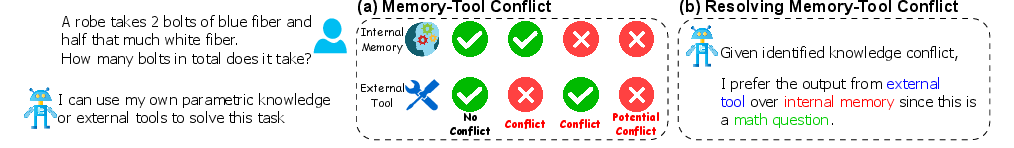

Figure 1: Illustration of Tool-Memory Conflict (TMC). Green and red indicate correct and incorrect outputs, respectively, compared to the ground-truth answer.

This investigation aims to comprehensively evaluate the conditions under which TMC emerges, identify the prioritization tendencies of LLMs when faced with TMC, and assess the effectiveness of current conflict resolution techniques.

Problem Definition and Examples

TMC occurs when the internal parametric output of a tool-augmented LLM conflicts with the output it produces when using external tools. Formally, given a tool-augmented LLM $f$ that can use a tool set $T$ to answer a query $q$, TMC arises when $f(q) \neq f(q; T)$, where $f(q)$ is the answer from parametric memory alone and $f(q; T)$ is the answer produced with tool access. Scenarios in which TMC arises include discrepancies in mathematical computation, factual data retrieval, code execution, and medical diagnosis. The core issue is reconciling an LLM's static memory with dynamic tool interactions so that its decisions remain reliable and coherent.
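The definition above can be sketched as a simple check. This is a minimal illustration, not the paper's implementation: `answer_from_memory` and `answer_with_tools` are hypothetical callables standing in for $f(q)$ and $f(q; T)$, and the exact-match comparison after normalization is an assumed criterion for deciding when two answers differ.

```python
from dataclasses import dataclass
from typing import Callable


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(answer.strip().lower().split())


@dataclass
class ConflictRecord:
    query: str
    memory_answer: str  # f(q): answer from parametric memory only
    tool_answer: str    # f(q; T): answer produced with tool access

    @property
    def is_conflict(self) -> bool:
        # Assumed criterion: answers conflict if they differ after normalization.
        return normalize(self.memory_answer) != normalize(self.tool_answer)


def detect_tmc(
    query: str,
    answer_from_memory: Callable[[str], str],  # hypothetical stand-in for f(q)
    answer_with_tools: Callable[[str], str],   # hypothetical stand-in for f(q; T)
) -> ConflictRecord:
    """Query both modes once and record whether their answers disagree."""
    return ConflictRecord(query, answer_from_memory(query), answer_with_tools(query))


# Stub callables for illustration only; a real setup would call an LLM.
record = detect_tmc(
    "What is 17 * 23?",
    answer_from_memory=lambda q: "381",
    answer_with_tools=lambda q: "391",
)
print(record.is_conflict)
```

Exact matching is the simplest choice; free-form answers would likely need a more tolerant comparison, such as numeric parsing or a semantic-equivalence judge.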

Causes and Differences from Existing Conflicts

TMC stems primarily from temporal information mismatches, misinformation from tools, and incorrect tool usage. Unlike context-memory or inter-context conflicts, TMC involves tool-generated knowledge which functions externally and is often dynamic, while contextual knowledge is static at retrieval and integrated within the model’s generation process. Additionally, TMC inherently involves a real-time clash between current external data and pre-existing model knowledge.

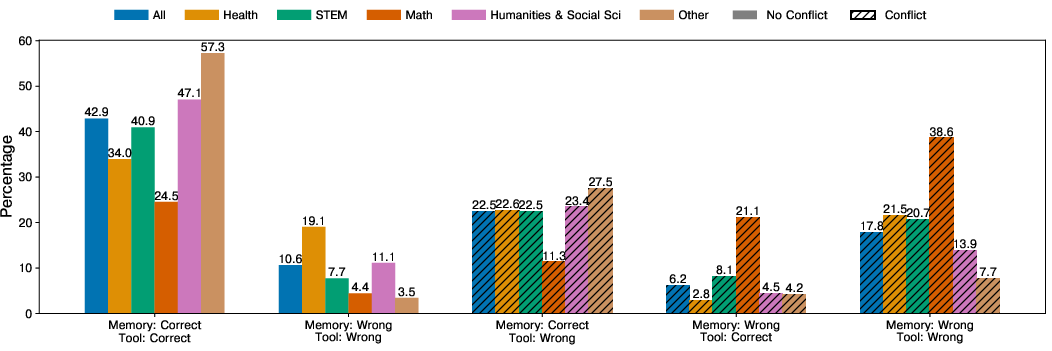

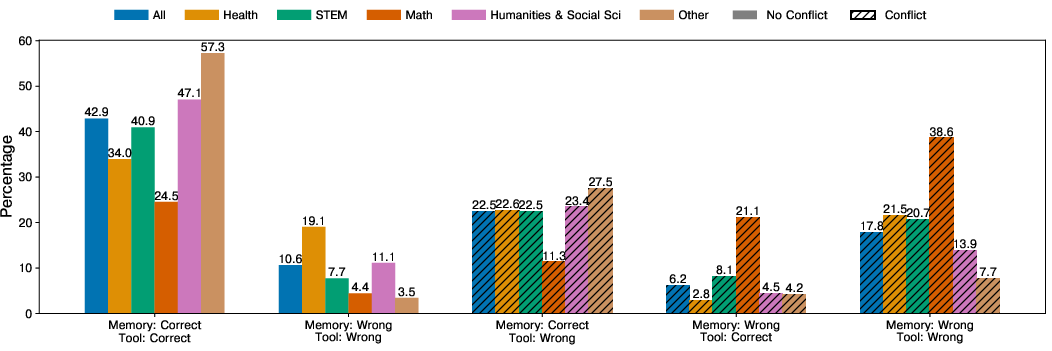

Figure 2: Tool-Memory Conflict across different domains.

Impact and Resolution

Understanding TMCs is vital for discerning the limitations of LLMs and assessing tool reliability. Diagnosing and addressing TMC can lead to improvements in model design and better tool integration strategies. Current conflict resolution involves analyzing the internal bias of LLMs and applying prompting techniques or Retrieval-Augmented Generation (RAG) to mitigate inconsistencies.

Experimental Setup and Results

Evaluation Methodology

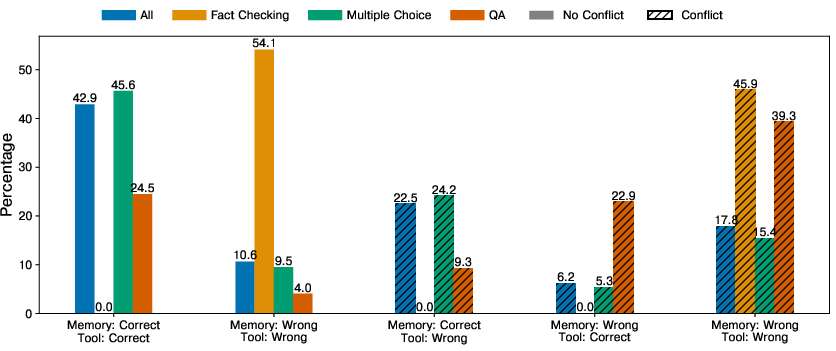

The study examines TMC across various datasets and LLM models capable of tool augmentation. Conflict prevalence and conditions are assessed, identifying domain-specific TMC sensitivities and the effect of model scale on conflict frequency.
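One way to express conflict prevalence per domain is as the fraction of queries whose memory and tool answers disagree. The sketch below assumes a hypothetical record format of `(domain, memory_answer, tool_answer)` tuples and a case-insensitive exact-match comparison; neither is taken from the paper.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def conflict_rate_by_domain(
    records: Iterable[Tuple[str, str, str]],
) -> Dict[str, float]:
    """Return the share of conflicting queries for each domain.

    Each record is (domain, memory_answer, tool_answer); this layout is an
    assumption made for illustration.
    """
    totals: Dict[str, int] = defaultdict(int)
    conflicts: Dict[str, int] = defaultdict(int)
    for domain, memory_answer, tool_answer in records:
        totals[domain] += 1
        if memory_answer.strip().lower() != tool_answer.strip().lower():
            conflicts[domain] += 1
    return {domain: conflicts[domain] / totals[domain] for domain in totals}


# Toy data for illustration only; these are not results from the study.
sample = [
    ("math", "381", "391"),
    ("math", "12", "12"),
    ("history", "1945", "1945"),
]
rates = conflict_rate_by_domain(sample)
print(rates)
```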

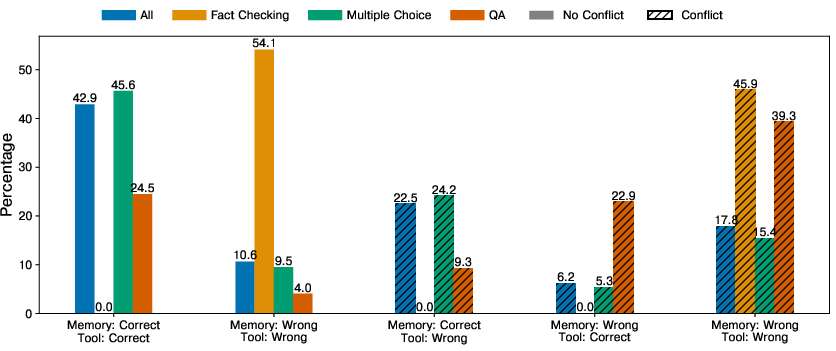

Figure 3: Tool-Memory Conflict across different types of tasks.

Findings

The investigation reveals that TMC is prevalent across all evaluated models and tasks, and occurs most often in math and other STEM domains. Larger models such as GPT-4o and LLaMA-3.3 exhibit fewer conflicts, which the study attributes to their stronger internal representations. The study underscores the need for domain-specific conflict mitigation strategies, such as integrating precise computational modules for math tasks or employing hybrid retrieval tactics for long-tail knowledge.

Tool bias and memory bias metrics further highlight tendencies of LLMs to either rely heavily on external tools or internal memory, affecting overall accuracy. Existing techniques like vigilant prompting show limited effectiveness, while RAG offers a more robust approach to resolving TMC by grounding model responses in up-to-date, external information.
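The summary does not give formulas for these metrics, so the following is only a sketch under an assumed definition: among queries where the memory and tool answers conflict, tool bias is the fraction of final answers that match the tool output, and memory bias is the fraction that match the memory output. The function name and the `(memory_answer, tool_answer, final_answer)` tuple layout are hypothetical.

```python
from typing import Dict, Iterable, Tuple


def bias_metrics(cases: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """Compute assumed tool-bias and memory-bias rates over conflict cases.

    Each case is (memory_answer, tool_answer, final_answer). Cases where the
    memory and tool answers agree are ignored, since no side is being chosen.
    """
    conflicts = [(m, t, f) for m, t, f in cases if m != t]
    if not conflicts:
        return {"tool_bias": 0.0, "memory_bias": 0.0}
    n = len(conflicts)
    followed_tool = sum(1 for m, t, f in conflicts if f == t)
    followed_memory = sum(1 for m, t, f in conflicts if f == m)
    return {"tool_bias": followed_tool / n, "memory_bias": followed_memory / n}


# Toy data for illustration only; these are not results from the study.
cases = [
    ("4", "5", "5"),  # final answer follows the tool
    ("p", "q", "q"),  # final answer follows the tool
    ("a", "b", "a"),  # final answer follows memory
    ("1", "2", "3"),  # final answer follows neither
    ("x", "x", "x"),  # no conflict, ignored
]
metrics = bias_metrics(cases)
print(metrics)
```

Under this definition the two rates need not sum to one, because a model can produce a final answer that matches neither source.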

Conclusion

This paper identifies and characterizes Tool-Memory Conflict (TMC) in tool-augmented LLMs, demonstrating its prevalence and challenges across multiple models and task types. The findings emphasize the need for improved conflict resolution mechanisms, such as enhanced retrieval-augmented techniques, to achieve more reliable and interpretable LLM outputs. Future work should explore broader model evaluations and novel methodologies to effectively mitigate TMC and enhance the coherence of tool-augmented LLMs.