Gabor Fields: Orientation-Selective Level-of-Detail for Volume Rendering

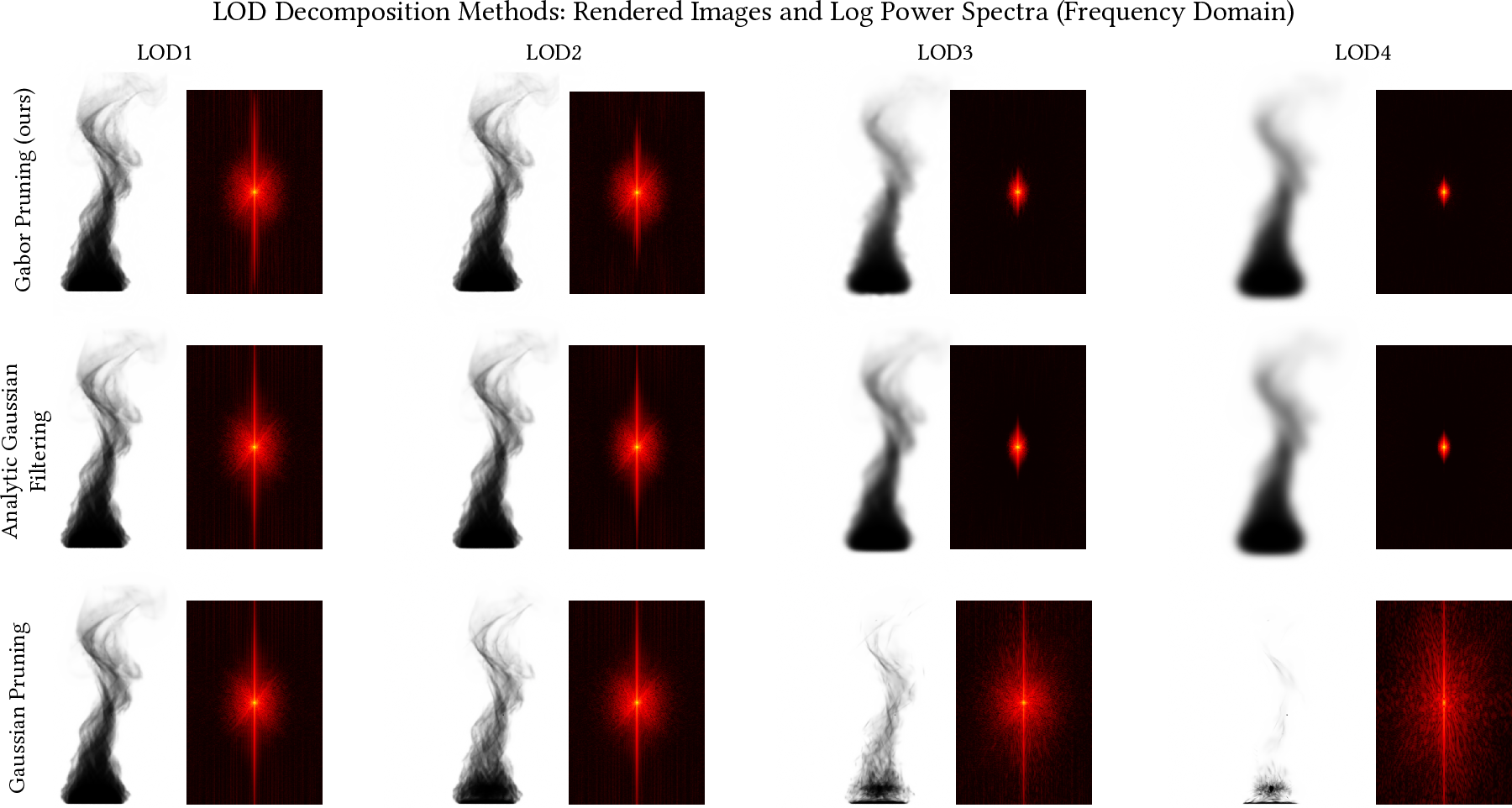

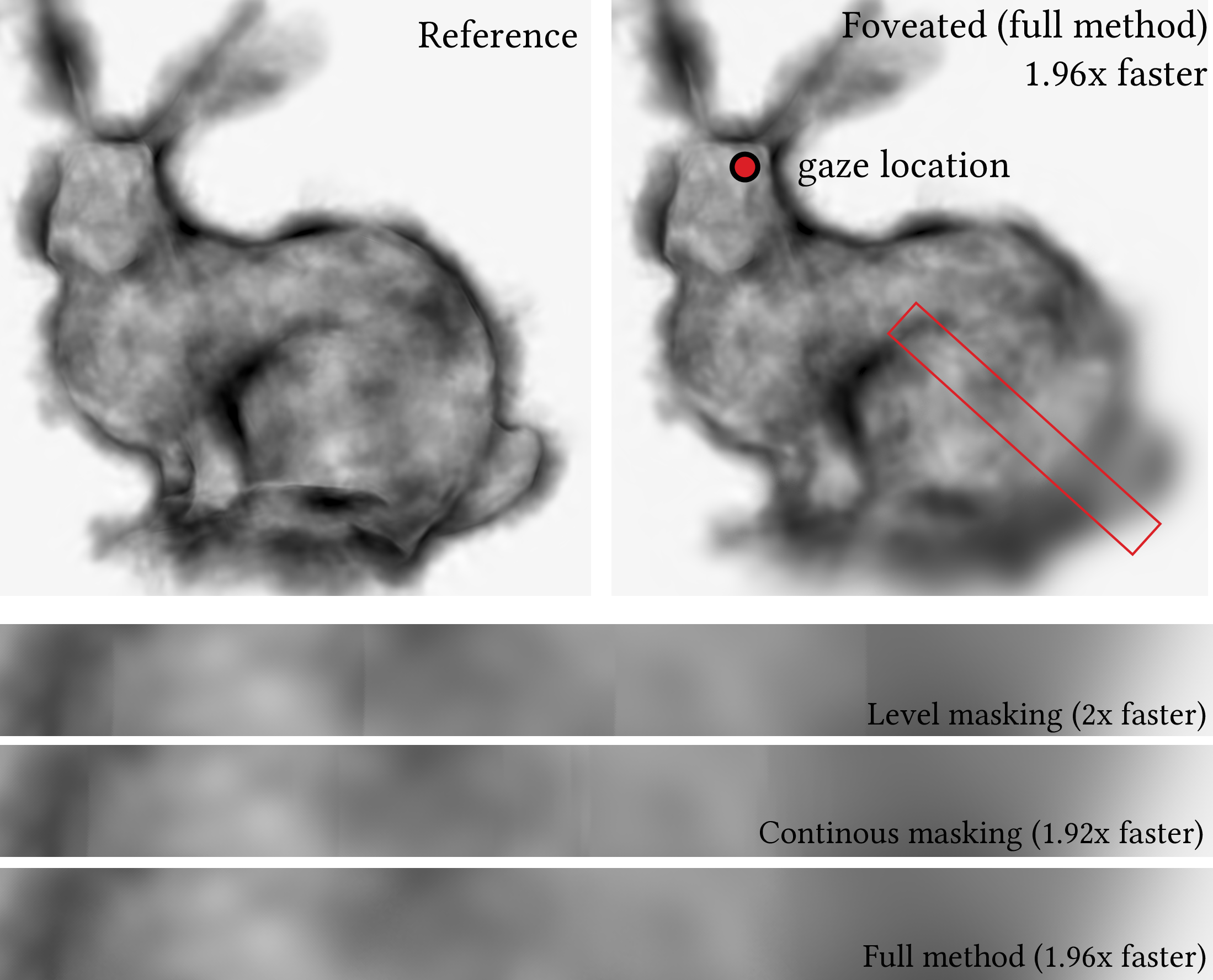

Abstract: Gaussian-based representations have enabled efficient physically-based volume rendering at a fraction of the memory cost of regular, discrete, voxel-based distributions. However, several remaining issues hamper their widespread use. One of the advantages of classic voxel grids is the ease of constructing hierarchical representations by either storing volumetric mipmaps or selectively pruning branches of an already hierarchical voxel grid. Such strategies reduce rendering time and eliminate aliasing when lower levels of detail are required. Constructing similar strategies for Gaussian-based volumes is not trivial. Straightforward solutions, such as prefiltering or computing mipmap-style representations, lead to increased memory requirements or expensive re-fitting of each level separately. Additionally, such solutions do not guarantee a smooth transition between different hierarchy levels. To address these limitations, we propose Gabor Fields, an orientation-selective mixture of Gabor kernels that enables continuous frequency filtering at no cost. The frequency content of the asset is reduced by selectively pruning primitives, directly benefiting rendering performance. Beyond filtering, we demonstrate that stochastically sampling from different frequencies and orientations at each ray recursion enables masking substantial portions of the volume, accelerating ray traversal time in single- and multiple-scattering settings. Furthermore, inspired by procedural volumes, we present an application for efficient design and rendering of procedural clouds as Gabor-noise-modulated Gaussians.

Explain it Like I'm 14

Gabor Fields: A simple explanation

What is this paper about?

This paper introduces a new way to store and render 3D “foggy” stuff—like smoke, clouds, and hair volumes—so it looks good up close and far away, and renders faster. The authors call their method Gabor Fields. The key idea is to build the volume out of tiny “blobs with ripples” that each prefer a certain detail size and direction. Because of that, you can easily turn detail up or down (level of detail) without needing extra copies of the volume.

What questions are the authors trying to answer?

In everyday terms, the paper asks:

- How can we make 3D fog/smoke/clouds look good at different distances without storing lots of extra versions?

- Can we keep rendering physically correct lighting (light scattering in fog) but make it faster?

- Is there a way to use math to quickly figure out how much a light ray is dimmed as it passes through these tiny shapes?

- Can we convert old-style “voxel” volumes (big 3D grids) into this new format?

- Can the same idea help artists make realistic procedural clouds efficiently?

How the method works (in plain language)

Before the method, a quick picture to keep in mind: imagine building a cloud out of many tiny jelly-like blobs. Some blobs are smooth (Gaussians). Others are the same blobs but with soft stripes running through them (Gabor kernels). Those stripes have a favorite size (how fine the detail is) and direction (which way the stripes run).
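As a rough illustration of the "blob with ripples" picture, the sketch below evaluates a smooth blob and a rippled blob at a point. The function names, the isotropic envelope, and the parameters `sigma`, `freq`, `direction`, and `phase` are assumptions made for illustration; the paper's kernels are anisotropic and its exact parameterization is not reproduced here.

```python
import numpy as np

def gaussian_blob(x, center, sigma):
    """Smooth blob: isotropic Gaussian envelope (an illustrative simplification)."""
    offset = np.asarray(x, float) - np.asarray(center, float)
    return np.exp(-0.5 * np.sum(offset ** 2) / sigma ** 2)

def gabor_blob(x, center, sigma, freq, direction, phase=0.0):
    """Rippled blob: the same Gaussian envelope multiplied by a cosine wave.

    freq is in cycles per unit length; direction is the axis across which the
    stripes alternate (normalized here). All names are hypothetical.
    """
    d = np.asarray(direction, float)
    d = d / np.linalg.norm(d)
    offset = np.asarray(x, float) - np.asarray(center, float)
    ripple = np.cos(2.0 * np.pi * freq * np.dot(d, offset) + phase)
    return gaussian_blob(x, center, sigma) * ripple

center = [0.0, 0.0, 0.0]
# At the center the cosine is at its crest; half a period away it is at its trough.
peak = gabor_blob([0.0, 0.0, 0.0], center, sigma=1.0, freq=2.0, direction=[1, 0, 0])
trough = gabor_blob([0.25, 0.0, 0.0], center, sigma=1.0, freq=2.0, direction=[1, 0, 0])
```

Note that `trough` is negative: a rippled blob adds and subtracts density, which is why it is layered on top of a positive smooth base rather than used alone.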

The key ideas

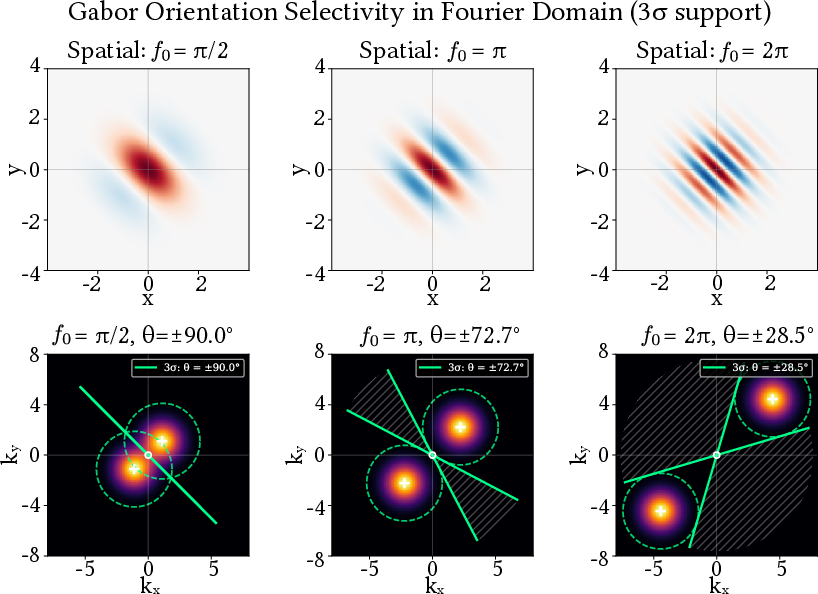

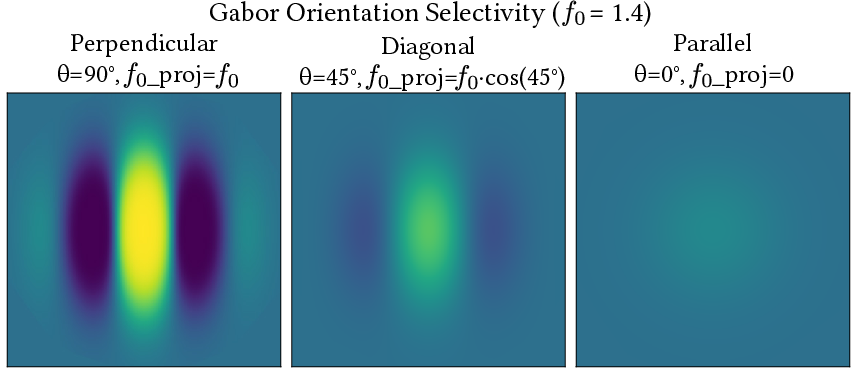

- Detail as “frequency”: Fine details are like high pitches in music; broad, soft shapes are like low pitches. If each blob is “tuned” to a particular detail size, you can “mute” certain bands of detail—just like an equalizer on a music player.

- Direction as “orientation”: The ripples in a blob point a certain way. If a light ray travels in a direction that doesn’t “match” those ripples, that blob barely matters to the ray—and you can skip it to save time.
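To make the orientation idea concrete, here is a small numerical check under simplifying assumptions: an isotropic Gaussian envelope, zero phase, and an infinitely long ray through the blob's center. In that setting the line integral has a textbook closed form proportional to exp(-(2π f a σ)² / 2), where `a` is the cosine between the ray and the direction across the stripes. The function names are hypothetical, and the paper's finite-segment integrals (which involve the complex error function) are not reproduced.

```python
import numpy as np

def line_integral_closed_form(sigma, freq, alignment):
    """Integral of exp(-t^2 / (2 sigma^2)) * cos(omega t) over the whole line."""
    omega = 2.0 * np.pi * freq * alignment   # ripple frequency seen along the ray
    return sigma * np.sqrt(2.0 * np.pi) * np.exp(-0.5 * (omega * sigma) ** 2)

def line_integral_numeric(sigma, freq, alignment, half_width=10.0, n=200001):
    """Brute-force Riemann sum of the same integrand, used only as a check."""
    t = np.linspace(-half_width * sigma, half_width * sigma, n)
    values = np.exp(-0.5 * (t / sigma) ** 2) * np.cos(2.0 * np.pi * freq * alignment * t)
    return np.sum(values) * (t[1] - t[0])

# alignment = 0: the ray runs along the stripes; alignment = 1: it crosses them head-on.
along = line_integral_closed_form(sigma=1.0, freq=1.0, alignment=0.0)
across = line_integral_closed_form(sigma=1.0, freq=1.0, alignment=1.0)
```

In this simplified setting, a ray running along the stripes picks up the full Gaussian integral, while a ray crossing them head-on sees the positive and negative ripples cancel almost entirely, which is what makes such blobs safe to skip.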

What they actually do

- Build with two kinds of blobs:

  - Smooth blobs for the lowest, broadest shapes (Gaussians).

  - Rippled blobs for finer detail (Gabor kernels = Gaussian × cosine wave).

- Level of detail (LOD) with no extra memory:

  - Because each rippled blob holds a specific band of detail, you can get a lower-detail version by just ignoring some blobs, with no need to store or recompute multiple copies.

  - This gives clean, smooth transitions as you zoom in and out.

- Fast, exact math for light:

  - The authors derive formulas to compute exactly how much a single blob dims a ray of light. This avoids slow, step-by-step approximations.

- Speed tricks during rendering:

  - Frequency sampling: For each ray, randomly pick which detail bands to consider. This makes each sample cheaper, so you can afford more samples overall.

  - Orientation masking: Group blobs by the direction of their ripples. For each ray, skip groups that barely contribute based on the ray’s direction.

  - Adaptive clamping: Shrink how far blobs “reach” in space when it’s safe, so the system checks fewer blobs per ray.

  - GPU-friendly visibility masks: Use bitmasks to tell the ray tracer which groups to test, before any heavy work is done.

- Turning old volumes into Gabor Fields (regression):

  - First blur the original volume into a pyramid (from very smooth to detailed).

  - Fit smooth blobs to the lowest level (the broad shapes).

  - Fit rippled blobs to the remaining detail.

  - Optimize the blobs by rendering images and adjusting them to match the reference (like teaching by comparison), with a bit of smart randomness to escape bad starting points.
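The pyramid step and the "ignore some blobs" idea can be sketched on a plain 1D signal. This is only an analogy under stated assumptions: it works on a periodic sampled signal rather than a mixture of kernels, and the function names and blur widths are hypothetical. It shows the property the method relies on: when detail is stored as residuals between blur levels, switching off the finest bands leaves exactly a blurred version of the original.

```python
import numpy as np

def gaussian_blur_periodic(signal, sigma):
    """Blur a periodic 1D signal by multiplying its spectrum with a Gaussian."""
    freqs = np.fft.fftfreq(signal.size)                  # cycles per sample
    transfer = np.exp(-2.0 * (np.pi * freqs * sigma) ** 2)
    return np.real(np.fft.ifft(np.fft.fft(signal) * transfer))

def build_bands(signal, sigmas):
    """Split a signal into a smooth base plus band-pass residuals.

    sigmas must be ordered from the widest blur to the narrowest.
    """
    lowpass = [gaussian_blur_periodic(signal, s) for s in sigmas]
    bands = [lowpass[0]]                                 # smooth base level
    for coarse, fine in zip(lowpass[:-1], lowpass[1:]):
        bands.append(fine - coarse)                      # detail between two blur levels
    bands.append(signal - lowpass[-1])                   # finest remaining detail
    return bands, lowpass

rng = np.random.default_rng(0)
signal = rng.random(256)
bands, lowpass = build_bands(signal, sigmas=[16.0, 8.0, 4.0, 2.0])

full_detail = np.sum(bands, axis=0)                      # every band switched on
reduced_detail = np.sum(bands[:3], axis=0)               # two finest bands switched off
```

Here `full_detail` reproduces the input and `reduced_detail` equals the signal blurred with `sigma = 4.0`, without storing that blurred copy separately.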

What did they find, and why does it matter?

Here are the main takeaways, explained simply:

- Continuous level of detail (LOD) without extra storage:

- You can dial detail up or down by turning certain blobs on or off. No need to store multiple versions of the same asset.

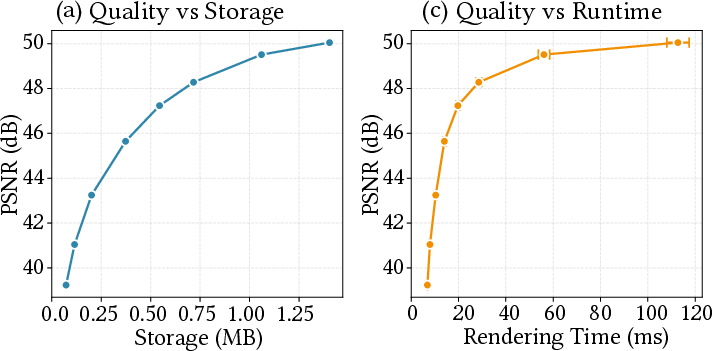

- This makes far-away clouds cheaper to render and prevents visual flickering or aliasing.

- Faster rendering with physically based lighting:

- Because they know exactly how a ray interacts with each blob, fewer guesses are needed.

- By skipping blobs that won’t matter (based on frequency and direction), rays travel faster through the scene.

- Randomly sampling detail bands (with proper re-weighting) trades a little noise or tiny bias for big speed gains—useful when time is tight.

- Smooth transitions and fewer artifacts:

- Turning bands on/off corresponds to true “filtering” of detail (like a proper blur), not just chopping shapes crudely.

- Works for both single and multiple scattering:

- Multiple scattering (light bouncing around inside fog) is the tricky, realistic kind. Their method still helps here.

- Great for procedural clouds:

- Artists can build big fluffy clouds with small memory footprints and get clean detail control, thanks to Gabor-style “noise.”

Note on bias and noise: When you randomly choose which detail bands to include per ray, each ray is faster but a bit noisier. In most parts of the pipeline this averages out. In deep fog with multiple bounces, a small, well-understood bias can appear (a math effect from averaging exponentials), but the authors explain how to keep it small and where to put it (for example, into less noticeable frequencies).
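The "math effect from averaging exponentials" can be seen in a few lines. The sketch below assumes a deliberately simple estimator that is not taken from the paper: pick one detail band with a fixed probability and divide its optical depth by that probability. The band depths and probabilities are made-up numbers. The expectations are computed exactly by enumeration, so no random sampling is involved.

```python
import numpy as np

band_depths = np.array([0.8, 0.3, 0.1])      # hypothetical per-band optical depths
probabilities = np.array([0.5, 0.3, 0.2])    # hypothetical selection probabilities

# One-band estimator: pick band k with probability p_k and return tau_k / p_k.
estimates = band_depths / probabilities

# The re-weighted optical depth is correct on average ...
expected_depth = np.sum(probabilities * estimates)
true_depth = np.sum(band_depths)

# ... but transmittance exp(-tau) is convex in tau, so averaging it after the
# exponential overestimates the true value (Jensen's inequality).
expected_transmittance = np.sum(probabilities * np.exp(-estimates))
true_transmittance = np.exp(-true_depth)
bias = expected_transmittance - true_transmittance
```

With these numbers the optical depth is unbiased while the averaged transmittance comes out slightly too bright; the gap shrinks when the per-band estimates vary less around the true depth.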

Why this is important

- Practical speed-ups: Movies, games, and VR often need huge volumes (cloudscapes, smoke plumes). This method cuts render time without needing more memory for extra LOD assets.

- Cleaner look at any distance: Proper LOD avoids shimmering or harsh patterns when you zoom out.

- Flexible pipeline: You can convert existing grids into this format or design procedural volumes directly in it.

- Bridges two worlds: It combines the exactness of mathematical shapes (for speed and correctness) with the richness of procedural detail (for art and realism).

In short

Gabor Fields let you build 3D fog out of smart, direction-aware pieces. Because each piece knows which kind of detail it represents, you can smoothly control detail, skip unimportant pieces per ray, and render realistic lighting faster—all without storing multiple versions of your assets.

Knowledge Gaps

Below is a single, consolidated list of concrete knowledge gaps, limitations, and open questions left unresolved by the paper. Each point is intended to be actionable for future research.

- Positivity and physical validity: The method uses Gabor kernels with signed residuals; how is non-negativity of extinction ensured everywhere along rays to prevent transmittance T > 1, and what constraints or safeguards (e.g., constrained optimization, clamping) guarantee monotonic optical depth for distance sampling?

- Bias control in multiple scattering: Stochastic masking introduces bias via Jensen’s inequality; what unbiased or provably bounded-bias estimators can be devised for multiple scattering, and how should MIS be formulated to combine Gaussian base and Gabor bands/orientations without bias explosions?

- Quantitative error bounds: There is no theoretical bound on radiance/transmittance error from (i) frequency/orientation pruning, (ii) stochastic band selection, or (iii) adaptive clamping; can tight, computable error bounds (per ray, per pixel) be derived to meet specified error budgets?

- Temporal stability: Stochastic pruning and per-ray masking risk temporal flicker under camera/light motion; what temporally coherent sampling schemes (e.g., blue-noise in ray space, reservoirs, stratification) minimize flicker while preserving acceleration?

- Complex error function evaluation: The truncated series approximation for complex erf lacks a rigorous accuracy/speed analysis across parameter ranges (segment length, frequency, anisotropy); what are robust, GPU-friendly algorithms with guaranteed error and minimal divergence, and how should regimes be switched without branches?

- Extreme parameter robustness: Numerical stability under very high modulation frequencies, long segments, or extreme anisotropy is not characterized; where do integrals and samplers fail or degrade, and what conditioning or reparameterizations mitigate this?

- Optimal binning and masking: Orientation/frequency binning is heuristic; how many bins, which partitioning, and what runtime selection policies minimize variance and intersection work per asset/scene, and can auto-tuning learn bin layouts offline/online?

- Importance sampling theory: The orientation weight w(a) = exp(-f0^2 a^2 / 2) is heuristic; what is the variance-optimal importance distribution for optical depth or radiance, and how should it be combined with frequency-level sampling via MIS?

- Pyramid non-orthogonality: The “relaxed” steerable/Laplacian decomposition is not orthogonal; how much energy overlap exists between bands, how does it impact unbiasedness/variance and sampling weights, and can biorthogonal or near-orthogonal constructions reduce redundancy?

- Envelope–frequency coupling: Tying Gabor frequency to envelope scale simplifies optimization but may limit expressivity; what are the modeling and regression trade-offs of decoupling f0 and scale, or using multi-frequency modulations per primitive?

- Phase and emission integration: The paper omits detailed treatment of anisotropic phase functions and emission; how do the analytic integrals and stochastic strategies extend to spatially varying σs, σa, emission, and complex phase (e.g., HG), and what is the impact on estimator variance?

- Distance sampling efficiency: Inversion is handled via bisection; are there closed-form or faster numerical schemes for common cases, or hybrid approaches with null/ratio tracking using tight Gabor-majorants that reduce solver iterations without bias?

- Adaptive clamping calibration: The heuristic extent Eadaptive depends on ε and frequency; how should ε be set per level/scene, what is the worst-case error across paths, and can adaptive, error-controlled clamping be derived (e.g., via Lipschitz or local energy bounds)?

- Acceleration structures at scale: Multiple GAS per level improves culling but may incur build/memory overhead; what AS designs (e.g., per-instance masks, compact TLAS with per-ray visibility filtering) scale best for many bands/orientations and dynamic updates?

- Comprehensive benchmarking: The work lacks broad quantitative comparisons vs NanoVDB/OpenVDB, wavelet volumes, and Gaussian mixtures across varied scenes (sizes, albedos, phase anisotropy); standardize metrics (speed, memory, PSNR/SSIM/LPIPS, variance, bias) and release datasets/scripts.

- Screen-space LOD control: There is no linkage between ray/image footprint (ray differentials) and spectral pruning; how to choose bands/orientations per ray to guarantee anti-aliasing and minimize variance for a target pixel footprint?

- Dynamic scenes and motion blur: Beyond a brief mention, methods to maintain temporal coherence, fast updates/re-fitting for deforming or time-varying volumes, and streaming LOD under motion are unexplored.

- Procedural authoring workflow: The procedural cloud application is promising but under-specified; what authoring controls, parameter mappings, and validation against production assets are needed to make Gabor-noise-modulated Gaussians practical for artists?

- Regression scalability and convergence: The hierarchical differentiable procedure needs complexity analyses (time/memory vs primitives), convergence diagnostics on very large assets, robust initialization, and strategies for incremental/streaming fitting.

- Differentiable rendering through special functions: Autodiff stability and gradient variance through complex erfs, masking, and pruning are not analyzed; what reparameterizations or surrogate gradients are needed for stable inverse problems?

- Handling heterogeneous optical properties: The current regression targets extinction; how to jointly fit extinction, albedo, and phase parameters (possibly correlated) within the Gabor framework while preserving physical plausibility?

- Ensuring monotonic CDF for sampling: Signed residuals can, in principle, locally reduce optical depth; what constraints or algorithms ensure a monotonic cumulative extinction per segment so that distance sampling remains well-posed?

- MIS with NEE and masked volumes: Formal MIS weights and energy conservation proofs are missing when using different band/orientation strategies for primary vs shadow rays; what MIS designs avoid bias/variance pitfalls in these mixed estimators?

- GPU efficiency and divergence: Series truncation, per-ray mask selection, and per-band GAS traversal may cause SIMT divergence; what kernel designs, batching, or wavefront schedulers reduce divergence and maximize occupancy?

- Reproducibility and parameter sensitivity: Key hyperparameters (ε for clamping, series truncation depth, σ0 for pyramids, mask strategies) lack sensitivity analyses; publish reference settings, ablations, and open-source code for reproducibility.
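Several of the points above (distance sampling by bisection, monotonic optical depth) can be made concrete with a minimal sketch. It assumes each primitive contributes a 1D Gaussian density profile along the ray with a non-negative weight, so the cumulative optical depth is non-decreasing and bisection is well-posed; signed Gabor residuals would break that assumption. The function names and the `(weight, mean, std)` tuple layout are hypothetical.

```python
import math

def optical_depth(t, blobs):
    """Optical depth from 0 to t for Gaussian density profiles along a ray.

    blobs is a list of (weight, mean, std) tuples with non-negative weights.
    """
    total = 0.0
    for weight, mean, std in blobs:
        scale = weight * std * math.sqrt(math.pi / 2.0)
        upper = math.erf((t - mean) / (math.sqrt(2.0) * std))
        lower = math.erf((0.0 - mean) / (math.sqrt(2.0) * std))
        total += scale * (upper - lower)
    return total

def sample_distance(u, blobs, t_max, iterations=60):
    """Find t with transmittance exp(-tau(t)) = 1 - u, or None if the ray escapes."""
    target = -math.log(1.0 - u)              # optical depth to reach, u in [0, 1)
    if optical_depth(t_max, blobs) < target:
        return None                          # medium too thin: no collision
    lo, hi = 0.0, t_max
    for _ in range(iterations):              # bisection on a monotonic function
        mid = 0.5 * (lo + hi)
        if optical_depth(mid, blobs) < target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

blobs = [(1.5, 2.0, 0.5), (0.8, 4.0, 1.0)]   # made-up primitives along one ray
t_hit = sample_distance(0.5, blobs, t_max=8.0)
t_miss = sample_distance(0.5, [(0.1, 2.0, 0.5)], t_max=8.0)
```

Each bisection step costs one full evaluation of the optical depth, which is why the list above asks about closed-form inversions or tighter majorants.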

Practical Applications

Immediate Applications

Below are practical applications that can be deployed today by leveraging Gabor Fields’ orientation- and frequency-selective volumetric representation, analytic line integrals, and continuous level-of-detail (LOD) control.

- VFX/animation volumetric assets with continuous LOD and faster rendering

  - Sector: Media & Entertainment, Software (DCC/renderers)

  - What: Replace OpenVDB volumes for clouds, smoke, fog, explosions with Gabor Field assets to enable continuous LOD (no extra memory), reduced aliasing at distance, and faster path tracing via pruning/masking. Use the provided hierarchical regression to distill existing VDB volumes into Gabor Fields and deploy in renderers (e.g., Mitsuba 3).

  - Tools/workflows: “Gabor Field Volume” plugin or converter for Houdini/Maya; renderer integration using TLAS/GAS visibility masks; lookdev workflows that switch LOD at render time without reauthoring.

  - Assumptions/dependencies: Requires engine support for ray visibility masks and BVH filtering (e.g., OptiX), analytic Gabor line integrals (including complex error function implementation), and a conversion pipeline (regression) from VDB grids. Quality depends on regression tuning and kernel counts.

- Real-time or near-real-time volumetric effects using orientation-aware culling

  - Sector: Games/Interactive Graphics, AR/VR

  - What: Precompute Gabor Field assets for fog/clouds and apply frequency/orientation-based masking to cut intersection tests per ray, boosting frame rates in real-time or fast preview settings.

  - Tools/workflows: UE5/Unity plugin for pre-baked Gabor assets with runtime LOD masks; selective ray traversal based on alignment weights; aggressive low-pass-only sampling for shadow rays/NEE.

  - Assumptions/dependencies: GPU implementation of complex erf, masked BVH traversal, careful control of stochastic strategies to manage variance/bias (Jensen’s inequality). Some effects may run in “preview” or hybrid modes first.

- Procedural cloud authoring with Gabor-noise-modulated Gaussians

  - Sector: Games, VFX, Simulation Tools

  - What: Artist-facing controls for procedural clouds using Gabor noise modulation with analytic transmittance; compact, resolution-independent assets with infinite detail via frequency-aware evaluation.

  - Tools/workflows: Houdini SOP nodes or engine components that generate procedural volumes as Gabor Fields; sliders for frequency bands/orientations; motion-blur-ready volumes.

  - Assumptions/dependencies: Authoring tools must support Gabor parameters; renderer integration for line integrals; artists may need presets and guardrails for physical plausibility.

- Motion-blur-friendly volumetric rendering

  - Sector: Media & Entertainment (offline and high-quality preview)

  - What: Use Gabor Fields’ analytic integrals and compact support to render volumetric motion blur more efficiently than grid-based approaches.

  - Tools/workflows: Render farm pipelines with motion-aware segments; lower sample counts due to reduced traversal.

  - Assumptions/dependencies: Requires renderer support for segment-based radiative transfer and accurate temporal handling of primitives.

- Scientific and industrial volume visualization with continuous LOD

  - Sector: Scientific Computing, Oil & Gas (seismic), CFD, Materials Science

  - What: Distill large volumetric datasets (e.g., seismic cubes, CFD fields) into Gabor Fields to support smooth LOD for interactive exploration with reduced bandwidth and memory.

  - Tools/workflows: Server-side precomputation/regression; thin-client interactive viewers streaming specific bands/orientations on demand.

  - Assumptions/dependencies: Must integrate with existing pipelines (VDB/NetCDF/SEG-Y imports); need fast regression at scale; orientation pruning policies must be validated for task-specific fidelity.

- Medical volume visualization for interactive exploration

  - Sector: Healthcare (imaging visualization), Education

  - What: Use Gabor Fields for smooth LOD in CT/MRI/PET visualization, enabling aliasing-free zooming and faster interaction on large scans.

  - Tools/workflows: PACS viewers or research tools with Gabor-based rendering backends; transfer-function mapping over Gabor mixtures.

  - Assumptions/dependencies: Clinical deployments demand strict validation; transfer-function compatibility must be ensured; many clinical viewers may not yet support analytic path tracing of volumes.

- Synthetic sensor and scene simulation in adverse weather

  - Sector: Autonomous Vehicles, Robotics, Simulation

  - What: Simulate cameras/LiDAR in fog/smoke with analytic transmittance and low-cost traversal using LOD and orientation masking; accelerate large Monte Carlo runs for training/testing.

  - Tools/workflows: Scenario generators that import Gabor Fields for participating media; control-variate sampling that biases toward low-pass for shadow rays.

  - Assumptions/dependencies: Requires calibrated scattering coefficients for realism; stochastic strategies introduce controllable bias/variance; real-time constraints vary by hardware.

- Tomography forward modeling for research and education

  - Sector: Academia, Imaging R&D

  - What: Use analytic line integrals of Gabor kernels for fast forward projectors in CT-like simulations (e.g., testing reconstruction algorithms, teaching line-integral physics).

  - Tools/workflows: Benchmarks and open-source prototypes in Mitsuba 3 for forward projections; synthetic datasets based on Gabor mixtures.

  - Assumptions/dependencies: Practical clinical reconstruction uses measured data and different priors; negative-valued Gabor residuals require careful handling for physical interpretability.

- Volumetric asset compression and streaming

  - Sector: Cloud Rendering, Content Delivery, Digital Twins

  - What: Deliver multi-LOD volumetric assets with a single compact representation; stream only needed bands for the viewer’s footprint and orientation.

  - Tools/workflows: Asset servers that transmit selected pyramid bands; client-side mask control integrated into the renderer.

  - Assumptions/dependencies: Standards for volumetric asset containers (e.g., USD, VDB) need extensions for Gabor parameters; client engines must support mask filtering and dynamic LOD.

- Research and pedagogy in frequency/orientation-aware volume rendering

  - Sector: Academia, Education

  - What: Use Gabor Fields to teach Laplacian/Steerable pyramid concepts in 3D and to benchmark spectral LOD strategies and bias/variance trade-offs.

  - Tools/workflows: Course modules and labs built on Mitsuba 3; comparative studies with voxel grids and Gaussian mixtures.

  - Assumptions/dependencies: Requires accessible, well-documented code and datasets; students need GPU access for performance experiments.

Long-Term Applications

These applications will require further research, scaling, systems integration, or standardization before broad deployment.

- Real-time path-traced volumetric lighting at AAA scale

  - Sector: Games, Film Previz, AR/VR

  - What: Full-scene volumetric lighting with multi-bounce scattering using stochastic Laplacian/orientation sampling tuned for low bias and high performance.

  - Dependencies: Highly optimized GPU kernels (complex erf), engine-level BVH masking APIs across platforms, perceptual error metrics for dynamic adaptation, cross-vendor support beyond OptiX.

- Hybrid neural–primitive volumetric representations

  - Sector: Graphics/ML, Streaming Media

  - What: Combine Gabor Fields with learned components (e.g., neural textures or feature fields) to increase modeling power per primitive, compress time-varying volumes, and enhance LOD.

  - Dependencies: Joint training pipelines, stability and interpretability of negative-valued residuals, efficient inference on consumer GPUs.

- Inverse volumetric rendering and cloud reconstruction from imagery

  - Sector: Remote Sensing, Meteorology, Film Scanning, Research

  - What: Recover cloud/fog volume parameters by optimizing Gabor mixtures via differentiable rendering, exploiting frequency/orientation priors for stable inference.

  - Dependencies: Robust regularizers, multi-view datasets with calibrated lighting, handling of multiple scattering during inversion, scalable optimization.

- Medical CT/MRI/PET reconstruction research using Gabor bases

  - Sector: Healthcare R&D, Imaging

  - What: Explore Gabor mixtures as a compressive prior or basis in iterative reconstruction or denoising, leveraging fast forward projection to speed algorithmic loops.

  - Dependencies: Clinical validation, physical non-negativity constraints, integration with standard reconstruction frameworks, regulatory acceptance.

- Planet-scale weather and atmospheric visualization

  - Sector: Geospatial/Climate, Simulation, Education

  - What: Interactive exploration and instructional visualization of global weather volumes (cloud fields, aerosols) using orientation/frequency-aware streaming and culling.

  - Dependencies: Efficient tiling/chunking for massive datasets, dynamic time-varying updates, multi-user server–client streaming infrastructures.

- Robust sensor simulation for AV/robotics with domain randomization

  - Sector: Autonomous Vehicles, Robotics

  - What: Training pipelines that inject physically plausible, parameterized volumetric weather via Gabor Fields, improving domain robustness.

  - Dependencies: Coupled simulators for camera/LiDAR/Radar, validated scattering models per sensor, automation for randomization across spectral bands/orientations.

- Digital twins with live volumetric effects

  - Sector: Industrial IoT, Smart Cities

  - What: Stream and render transient media (steam, smoke, plumes) in plant/city digital twins with smooth LOD to support monitoring and training.

  - Dependencies: Real-time volumetric data ingestion and regression, sensor fusion, safety protocols for visualization fidelity.

- Volumetric video and effect compression/codec research

  - Sector: Media Technology, Standards

  - What: Investigate Gabor-based bases and LOD masks for compressing volumetric effects and integrating them into emerging volumetric media standards.

  - Dependencies: Standardization efforts, encoding/decoding performance on consumer devices, quality metrics for volumetric perceptual fidelity.

- Enhanced education and outreach tools for environmental hazards

  - Sector: Public Policy, Education

  - What: Interactive, web-based visuals of wildfire smoke/plume dispersion with resolution-independent LOD and device-friendly performance.

  - Dependencies: Browser-capable GPU pipelines (WebGPU), simplified physics for public communications, data pipelines from agencies.

- SLAM and mapping in scattering environments

  - Sector: Robotics

  - What: Use compact volumetric scattering models to correct perception in dust/fog during mapping; integrate with existing 3D Gaussian/feature-based SLAM.

  - Dependencies: Joint geometry–media estimation research, real-time constraints on embedded hardware, coupled sensor modeling and calibration.

Cross-cutting assumptions and dependencies

- Engine support: Efficient BVH filtering/masking (e.g., TLAS/GAS visibility masks), robust GPU implementations of complex error functions, and batched evaluation of analytic integrals are required for performance.

- Regression pipeline: Converting large VDB volumes to Gabor Fields requires compute and tuning (kernel counts, band allocations); convergence and quality may vary by asset.

- Physical plausibility: Gabor residuals can hold negative values; care is needed to maintain physically meaningful extinction when composing bands (e.g., low-pass Gaussian base ensuring positivity).

- Bias/variance management: Stochastic Laplacian and orientation-selective sampling introduce controllable variance and potential bias in multi-scattering contexts; application-specific error budgets and perceptual metrics are advisable.

- Integration with existing standards: Adoption will be faster if Gabor Field parameters can be embedded into common asset formats (USD, VDB) or standardized extensions.
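The mask-based filtering mentioned under engine support boils down to a bitwise test between a per-ray mask and a per-group mask. The sketch below mimics that logic on the CPU; it is not an OptiX call sequence, and the 8-bit width, the group numbering, and all function names are assumptions chosen for illustration. In a real ray tracer the test would happen inside traversal, before any primitive is intersected.

```python
NUM_GROUPS = 8  # assumption: one bit per frequency/orientation group

def group_bit(group_index):
    """Bit that identifies one group of primitives."""
    if not 0 <= group_index < NUM_GROUPS:
        raise ValueError("group index out of range")
    return 1 << group_index

def ray_mask(active_groups):
    """Combine the bits of every group a ray is allowed to test."""
    mask = 0
    for g in active_groups:
        mask |= group_bit(g)
    return mask

def visible_groups(mask):
    """Groups whose bit overlaps the ray mask; all others are skipped outright."""
    return [g for g in range(NUM_GROUPS) if mask & group_bit(g)]

# Hypothetical layout: group 0 is the smooth base, groups 1..7 hold detail bins.
primary = ray_mask(range(NUM_GROUPS))   # primary ray: test everything
shadow = ray_mask([0, 1])               # shadow ray: base plus the coarsest band only
```

A shadow ray built this way touches two groups instead of eight, which is the kind of low-pass-only shortcut described for shadow rays and NEE above.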

Glossary

- Adaptive Clamping: A heuristic technique to reduce the spatial extent of primitives to speed up rendering while keeping error low. "Adaptive Clamping"

- Aliasing: Artifacts caused by insufficient sampling of high-frequency content, often appearing as jagged edges or flickering. "eliminate aliasing when lower levels of detail are required."

- Analytic Transmittance Integrals: Closed-form expressions for integrating attenuation along a ray, avoiding numerical approximation. "and analytic transmittance integrals."

- Anisotropic: Having direction-dependent properties; in kernels, different extents or behavior along different axes. "Our 3D anisotropic Gabor kernel has the form"

- Bisection: A root-finding method that repeatedly halves an interval to locate a solution. "via root-finding (Newton-Raphson, bisection) or closed-form inversion"

- BVH (Bounding Volume Hierarchy): A tree of nested bounding volumes used to accelerate ray-scene intersection tests. "before even traversing the BVH."

- Control Variates: A variance-reduction technique using correlated estimators to reduce Monte Carlo noise. "partial closed-form estimation and sample reweighting (i.e. control variates)"

- Covariance Matrix: A matrix describing the spread and correlation of a multivariate Gaussian's axes. "Together they form a factorization of the covariance matrix "

- Distance Sampling: Selecting interaction distances along a ray from the cumulative distribution to simulate scattering. "Distance sampling (multiple-scattering, VPPT) OR integrate the projected field directly (tomography)."

- Error Function (Complex): The complex-valued extension of the Gaussian error function used in integrals with oscillatory terms. "The complex error function (Faddeeva function) handles the finite integration bounds."

- Expectation-Maximization (EM): An iterative algorithm to estimate latent-variable model parameters, often for Gaussian mixtures. "optionally warm-started on a Gaussian mixture obtained through expectation-maximization."

- Extinction Field: The spatially varying coefficient governing attenuation (absorption + out-scattering) in a medium. "The extinction field at any point is expressed as the sum over contributing primitives:"

- Faddeeva Function: A special function related to the complex error function, used in wave-propagation integrals. "The complex error function (Faddeeva function) handles the finite integration bounds."

- Fourier Transform: A transform mapping a function to its frequency spectrum. "power spectra of the Fourier Transform."

- Frequency-Domain Representation: Describing a signal in terms of its frequency content rather than spatial or temporal domain. "have a frequency-domain representation consisting of symmetric, opposed Gaussians centered around their peak modulation frequency"

- Gabor Kernel: A Gaussian envelope modulated by a sinusoid, selective in both frequency and orientation. "A Gabor kernel combines a Gaussian envelope with a sinusoidal wave."

- Gaussian Pyramid: A multi-scale representation created by successively low-pass filtering and downsampling. "Gaussian pyramids are constructed in the form of Mipmaps"

- GAS (Geometry Acceleration Structure): A lower-level acceleration structure containing geometry for efficient ray tracing. "each level has its own Geometry Acceleration Structure (GAS)"

- Jacobian: The determinant of the transformation’s derivative, accounting for scaling under variable changes. "The factor is the Jacobian that arises as we normalize to unit length for simpler integration."

- Jensen’s Inequality: A convexity inequality relating a function of an expectation to the expectation of the function, often explaining bias. "Jensen's inequality states:"

- Kernel Mixture Model: A representation that sums multiple kernels (e.g., Gaussians, Gabors) to approximate a field. "kernel mixture models bounded by volumetric primitives."

- Laplacian Pyramid: A multi-scale residual decomposition capturing band-pass details between Gaussian pyramid levels. "similar to a continuous Laplacian pyramid but with orientation selectivity."

- Lagrangian-Style Particle Methods: Volumetric representations that track discrete particles rather than sampling on a fixed grid. "In recent years, Lagrangian-style particle methods have become the volumetric representation of choice"

- Level of Detail (LOD): Techniques that adjust representation fidelity based on viewing conditions to optimize performance. "Gaussian volumetric primitives are not directly compatible with level-of-detail (LOD) strategies."

- Line Integral: Integration along a path (ray) through a field to compute quantities like optical depth. "analytically derived line integrals for the Gabor kernels"

- Multiple Scattering: Light undergoing more than one scattering event before reaching the sensor. "accelerating ray traversal time in single- and multiple-scattering settings."

- Next-Event Estimation (NEE): A variance-reduction method that directly samples light sources for more efficient rendering. "secondary rays (i.e. next-event estimation, NEE)"

- Null-Scattering Estimators: Monte Carlo methods that introduce fictitious interactions to simplify sampling in heterogeneous media. "null-scattering estimators"

- Optical Depth: The integral of extinction along a path; determines transmittance via exponential decay. "each primitive’s contribution to the optical depth along a ray segment [a, b] is given by"

- OptiX Ray Visibility Masking: An OptiX feature allowing rays to selectively interact with subsets of geometry via bitmasks. "leverage Optix ray visibility masking at the Top-level Acceleration Structure (TLAS)."

- Phase Function: The angular distribution describing how light is scattered by particles in a medium. "emission, and phase function (the latter two omitted here for conciseness)."

- Power-Series Expansion: Representing functions as infinite series of powers, useful for approximations in special-function evaluation. "leveraging power-series expansions"

- Procedural Noise: Algorithmically generated noise used to create textures or volumetric detail without stored images. "procedural noise and texture synthesis methods"

- Radiance Field: A function that maps 3D position and direction to radiance, used in neural rendering and reconstruction. "radiance field reconstructions"

- Radiative Transfer Equation: The governing equation for light transport in participating media. "requiring solutions to the radiative transfer equation"

- Ray Marching: Incremental sampling along a ray to integrate volumetric effects. "Some popular approaches include ray marching"

- Ratio Tracking: A Monte Carlo technique for unbiased transmittance estimation in heterogeneous media. "render tomographic reference images using ratio tracking"

- SLAM (Simultaneous Localization and Mapping): Concurrent estimation of sensor pose and a map, often from visual or depth data. "simultaneous localization and mapping (SLAM)"

- Steerable Pyramid: A multi-scale, orientation-selective decomposition used in image and volume analysis. "steerable pyramid decompositions"

- Stochastic Gradient Langevin Dynamics (SGLD): An optimization method combining stochastic gradients with noise to approximate Bayesian sampling. "converted to Stochastic Gradient Langevin Dynamics by injecting noise into the gradients."

- Stochastic Texture Filtering: A Monte Carlo approach to texture filtering that mitigates aliasing by randomizing sampling. "stochastic texture filtering"

- TLAS (Top-level Acceleration Structure): The highest-level ray-tracing structure organizing references to lower-level geometry (GAS). "Top-level Acceleration Structure (TLAS)"

- Tomographic Rendering: Rendering that computes integrals through volumes to form images, analogous to tomography. "fast tomographic rendering"

- Transmittance: The fraction of light that is not attenuated along a path through a medium. "The total transmittance through the segment becomes"

- Voxel Grid: A 3D grid of volumetric samples representing density or other fields. "3D voxel grids that can be dense, adaptive or partly implicit"

- ZCA Whitening Matrix: A linear transform that decorrelates and scales variables to unit variance while preserving orientation. "using ZCA whitening matrix as:"
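The ZCA entry quotes the paper without its formula, and that formula is not reconstructed here. As general background only, the standard ZCA whitening matrix for a covariance Σ is the symmetric inverse square root Σ^(-1/2), which the sketch below computes from an eigendecomposition. The example covariance is made up, and how the paper applies the matrix to its kernels is not shown.

```python
import numpy as np

def zca_whitening_matrix(covariance):
    """Return the symmetric matrix W = covariance^(-1/2).

    Assumes covariance is symmetric positive definite.
    """
    eigenvalues, eigenvectors = np.linalg.eigh(covariance)
    return eigenvectors @ np.diag(1.0 / np.sqrt(eigenvalues)) @ eigenvectors.T

# Made-up anisotropic covariance (symmetric positive definite).
covariance = np.array([[4.0, 1.2, 0.0],
                       [1.2, 2.0, 0.3],
                       [0.0, 0.3, 1.0]])
W = zca_whitening_matrix(covariance)

# Whitening maps the anisotropic covariance to the identity.
whitened = W @ covariance @ W.T
```

Because `W` is symmetric, it stretches space along the covariance's own axes without adding an extra rotation, which is the sense in which ZCA whitening "preserves orientation".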
