- The paper introduces a policy-driven memory governance layer for LLM agents, using a tri-path loop (Read, Reflect, Background) for active memory adjudication.

- It combines FSRS v4 decay, Kalman Filter utility tracking, and Hebbian Graph Expansion to optimize memory retrieval and maintain consistency.

- Empirical evaluations report improvements in aggregate accuracy (+7.45%) and, against SimpleMem, in temporal reasoning (+39.2%), which the paper attributes to more robust semantic synthesis than in the compared baselines.

MemArchitect: Advancing Policy-Driven Memory Governance for LLM Agents

Motivation and Architectural Differentiation

MemArchitect addresses a persistent failure mode in agentic LLM architectures, the "Governance Gap": the absence of explicit policy-driven control over memory lifecycle, consistency, and compliance. As conversational LLM agents become persistent, maintaining reliable factuality and coherent state across sessions is paramount. Prior work in memory management, from operating system-inspired paging (MemGPT, MemOS) to streaming and compression pipelines (SimpleMem, LLMLingua), either treats memory as a passive, append-only artifact or focuses solely on resource efficiency, neglecting contradictory facts, privacy enforcement, and context pollution.

MemArchitect introduces a model-agnostic governance middleware decoupled from the model weights. It implements a robust policy suite spanning Lifecycle Hygiene, Consistency Truth, Adaptive Retrieval, and Efficiency Safety, transforming memory into an actively governed, competitive resource. This architecture establishes a "Triage Bid" economy, enforcing that every memory competes for inclusion in the agent's limited context window under explicit governance rules.

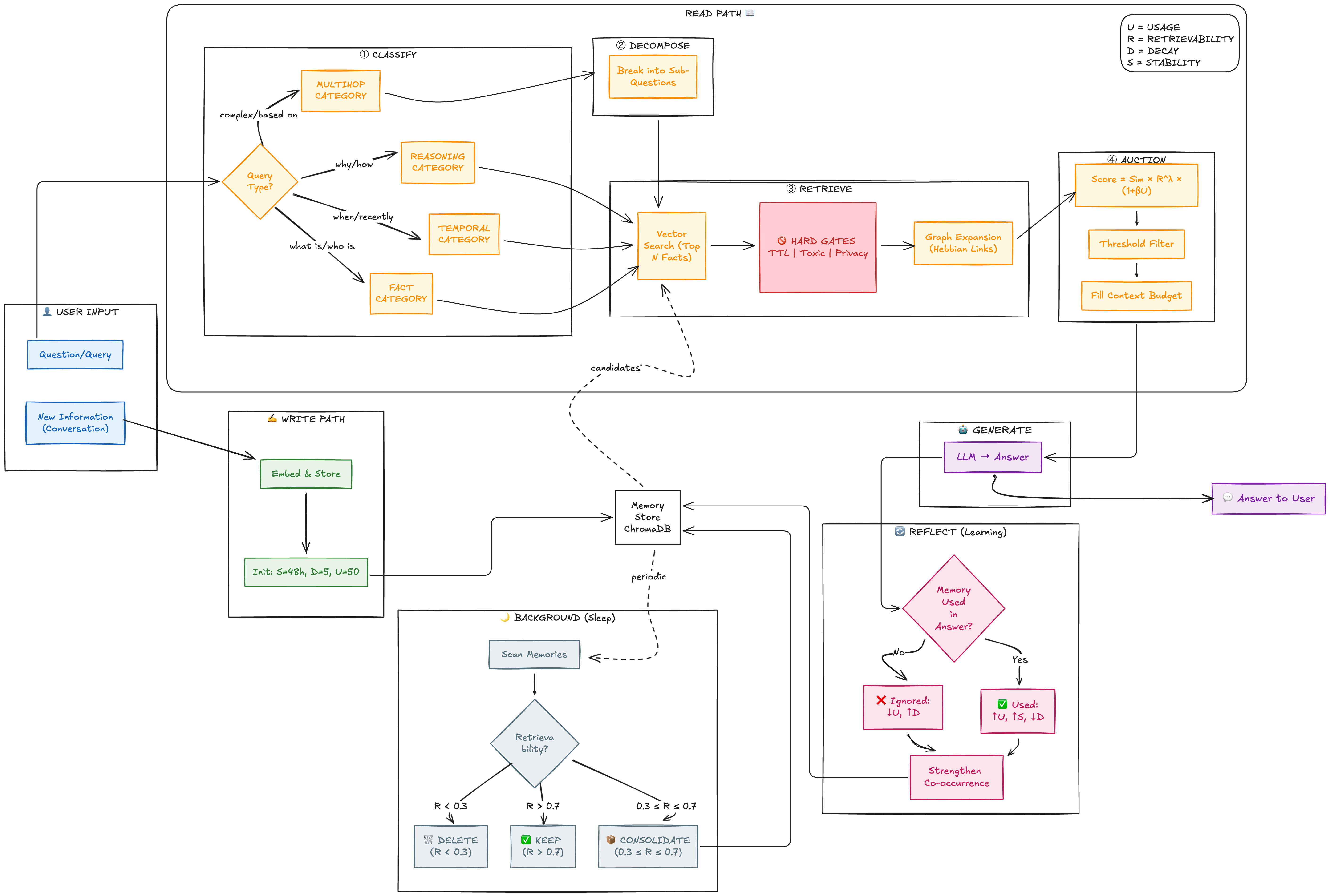

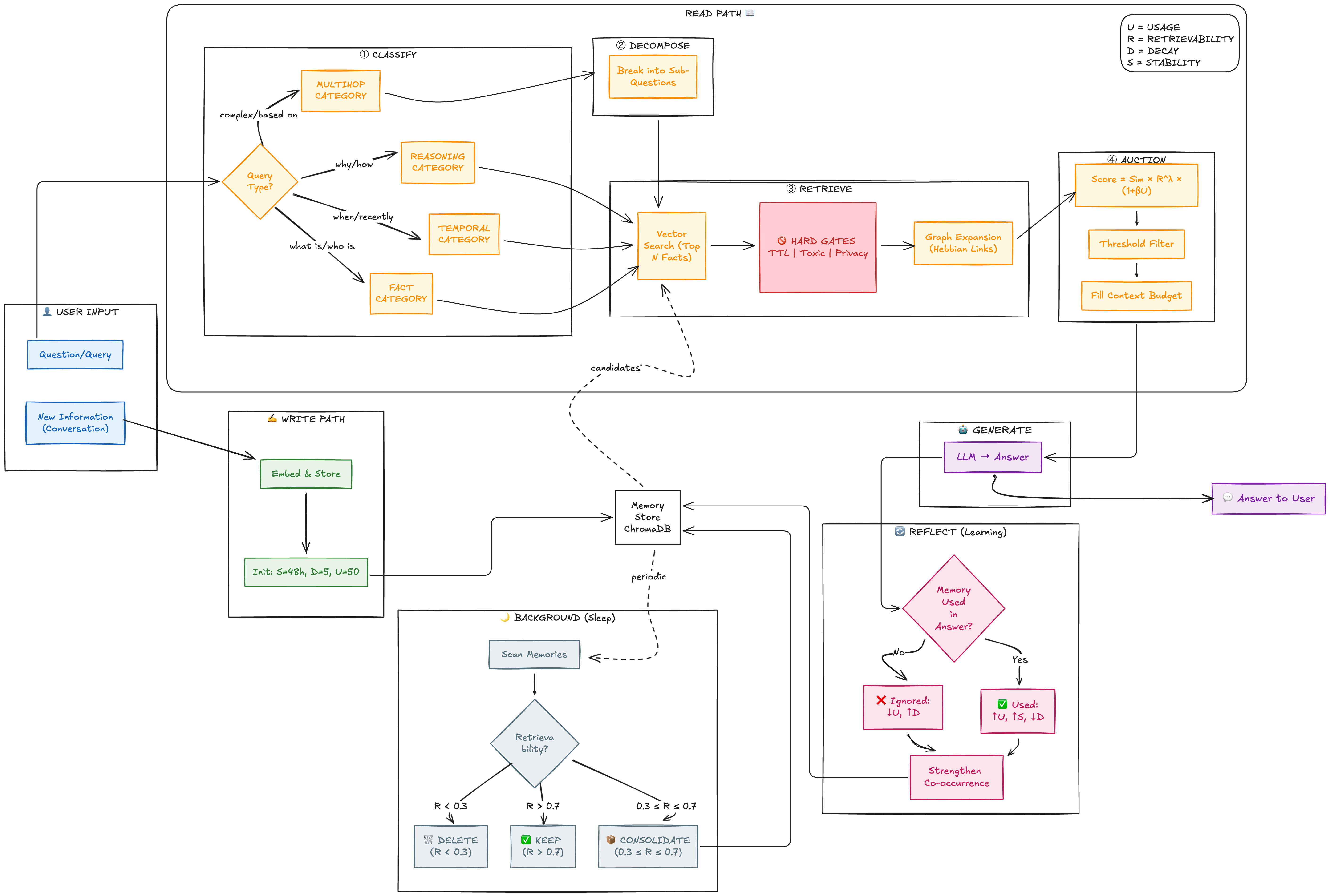

The architecture's main workflow operates as a closed tri-path loop (Read, Reflect, and Background), with each path oriented around active policy enforcement for memory adjudication.

Figure 1: MemArchitect governance workflow illustrating memory triage, policy-driven retrieval, reflexive feedback, and background maintenance.

Lifecycle Hygiene (Forgetting Engine)

MemArchitect replaces exponential decay with FSRS v4, modeling retrievability as $R(t) = \left(1 + \frac{19}{9} \cdot \frac{t}{S}\right)^{-1}$, where $t$ is the time elapsed since the memory was last reinforced and $S$ is its stability. This gives a forgetting curve tuned for agentic recall requirements. Redundant episodic memories are consolidated via entropy monitoring and compression triggers. Low-retrievability items ($R < 0.3$) are aggressively pruned; fading memories ($0.3 \le R \le 0.7$) are synthesized into stable semantic facts for long-term retention.
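The thresholds above can be turned into a small triage routine. The following is a minimal sketch, not the paper's implementation: it assumes the retrievability curve has the form R(t) = 1 / (1 + c·t/S), with c = 19/9 taken as our reading of the formula in the text, and the `Memory` fields and action names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    """Hypothetical record; field names are illustrative, not from the paper."""
    text: str
    stability: float  # S, in the same time unit as `elapsed`
    elapsed: float    # t, time since the memory was last reinforced


def retrievability(elapsed: float, stability: float, factor: float = 19 / 9) -> float:
    """R(t) = 1 / (1 + factor * t / S). `factor` is an assumption read from the text."""
    if stability <= 0:
        raise ValueError("stability must be positive")
    return 1.0 / (1.0 + factor * elapsed / stability)


def triage(memory: Memory, prune_below: float = 0.3, consolidate_up_to: float = 0.7) -> str:
    """Map retrievability to the lifecycle action described in the text."""
    r = retrievability(memory.elapsed, memory.stability)
    if r < prune_below:
        return "prune"        # R < 0.3: drop the item
    if r <= consolidate_up_to:
        return "consolidate"  # 0.3 <= R <= 0.7: synthesize into a semantic fact
    return "keep"             # R > 0.7: still readily retrievable


if __name__ == "__main__":
    for t in (1.0, 5.0, 20.0):
        m = Memory("user prefers dark mode", stability=10.0, elapsed=t)
        print(t, round(retrievability(m.elapsed, m.stability), 3), triage(m))
```

Keeping `factor` as a parameter makes it easy to swap in a different calibration without touching the triage logic.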

Consistency Truth (Utility Engine)

A Kalman Filter tracks each memory's utility (U), updating it from binary feedback produced by post-generation usage detection, so that the "Trust Score" has inertia and does not swing on a single observation. A Cross-Encoder veto gate discards retrieved memories whose semantic entailment score falls below 0.1, minimizing context pollution. Planned conflict resolution policies use NLI-based arbitration and recency-weighted provenance to automatically resolve contradictions.
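To make the utility tracking concrete, here is a hedged sketch of a scalar Kalman filter driven by binary usage feedback, followed by a veto gate at the 0.1 threshold. The noise values, the prior, and the class and function names are illustrative assumptions. Entailment scores are passed in as plain numbers, so no particular cross-encoder library is implied.

```python
from dataclasses import dataclass


@dataclass
class UtilityTracker:
    """Scalar Kalman filter over a memory's utility U (all defaults are assumptions)."""
    utility: float = 0.5            # prior estimate of U
    variance: float = 1.0           # uncertainty of the estimate
    process_noise: float = 0.01     # how fast true utility may drift between updates
    measurement_noise: float = 0.5  # how noisy one used/unused signal is

    def update(self, was_used: bool) -> float:
        # Predict: utility is modeled as constant, so only uncertainty grows.
        self.variance += self.process_noise
        # Correct: blend the binary observation in, weighted by the Kalman gain.
        gain = self.variance / (self.variance + self.measurement_noise)
        observation = 1.0 if was_used else 0.0
        self.utility += gain * (observation - self.utility)
        self.variance *= 1.0 - gain
        return self.utility


def veto_gate(candidates, entailment_scores, threshold=0.1):
    """Keep only candidates whose entailment score is at least `threshold`."""
    return [m for m, s in zip(candidates, entailment_scores) if s >= threshold]


if __name__ == "__main__":
    tracker = UtilityTracker()
    for used in (True, True, False, True):
        print(round(tracker.update(used), 3))
    print(veto_gate(["m1", "m2", "m3"], [0.05, 0.1, 0.9]))
```

Because the gain shrinks as the variance falls, later observations move the estimate less than early ones, which is one way to obtain the inertia described above.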

Adaptive Retrieval (Auction Engine)

MemArchitect implements multi-hop decomposition for complex queries, adapting scoring logic based on query type (Fact, Temporal, Reasoning). Key parameters (λ, β) tune decay penalties and trust boosts contextually. Hebbian Graph Expansion retrieves co-occurring memories (association threshold P(B∣A)>0.7), maximizing relational recall beyond simple semantic retrieval.
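The association threshold can be illustrated with a simple co-occurrence counter. This is a sketch under stated assumptions: P(B∣A) is estimated as the fraction of times A was used in which B was used alongside it, expansion is limited to one hop, and `CoOccurrenceGraph` and its methods are hypothetical names rather than the paper's API.

```python
from collections import defaultdict
from itertools import permutations


class CoOccurrenceGraph:
    """Counts how often memories are used together (illustrative, one-hop only)."""

    def __init__(self):
        self.single = defaultdict(int)                      # times A was used
        self.joint = defaultdict(lambda: defaultdict(int))  # joint[A][B]: A and B used together

    def record(self, used_ids):
        """Register one generation step in which `used_ids` were used together."""
        unique = set(used_ids)
        for a in unique:
            self.single[a] += 1
        for a, b in permutations(unique, 2):
            self.joint[a][b] += 1

    def conditional(self, b, a):
        """Estimate P(B | A) from the counts; 0.0 if A was never used."""
        count_a = self.single.get(a, 0)
        if count_a == 0:
            return 0.0
        return self.joint.get(a, {}).get(b, 0) / count_a

    def expand(self, seeds, threshold=0.7):
        """Return seeds plus neighbours B with P(B | A) > threshold for some seed A."""
        seeds = list(seeds)
        result, seen = list(seeds), set(seeds)
        for a in seeds:
            for b in sorted(self.joint.get(a, {})):
                if b not in seen and self.conditional(b, a) > threshold:
                    seen.add(b)
                    result.append(b)
        return result


if __name__ == "__main__":
    graph = CoOccurrenceGraph()
    for _ in range(4):
        graph.record(["m1", "m2"])
    graph.record(["m1", "m3"])
    print(graph.expand(["m1"]))  # m2 qualifies (4/5), m3 does not (1/5)
```

Note that the estimate is asymmetric: a memory that was used only once, together with `m1`, pulls in `m1` with probability 1.0, so a minimum-count guard may be worth adding in practice.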

Efficiency Safety (Budget Engine)

Adaptive token budgeting reserves context for reasoning or recall based on retrieval confidence. Planned extensions incorporate toxic memory filters (guardrails for prompt injection) and GDPR-compliant "Right-to-be-Forgotten" cascades to purge derivative summaries.
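The text does not specify how confidence maps to a budget, so the sketch below is purely illustrative. It assumes that low retrieval confidence should widen the share of context given to recalled memories, while high confidence leaves more room for reasoning. The share bounds, the linear mapping, and the greedy packing rule are all assumptions.

```python
def split_budget(total_tokens, confidence, min_share=0.2, max_share=0.8):
    """Split a context budget into (recall_tokens, reasoning_tokens).

    Assumption: the recall share shrinks linearly as confidence rises.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    recall_share = min_share + (max_share - min_share) * (1.0 - confidence)
    recall_tokens = int(total_tokens * recall_share)
    return recall_tokens, total_tokens - recall_tokens


def pack(memories, recall_budget):
    """Greedily select memories by descending score, skipping any that do not fit.

    `memories` is a list of (text, score, token_count) tuples.
    """
    chosen, used = [], 0
    for text, _score, tokens in sorted(memories, key=lambda m: m[1], reverse=True):
        if used + tokens <= recall_budget:
            chosen.append(text)
            used += tokens
    return chosen


if __name__ == "__main__":
    recall, reasoning = split_budget(1000, confidence=0.9)
    print(recall, reasoning)
    candidates = [("a", 0.9, 150), ("b", 0.8, 100), ("c", 0.5, 40)]
    print(pack(candidates, recall_budget=200))
```

Greedy packing is simple but not optimal; a knapsack-style selection would use the budget more fully at higher cost.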

Governance Cycle: Integrated Memory Adjudication

MemArchitect's governance cycle consists of three tightly coupled execution paths:

- Read Path: Query classification, decomposition, and candidate auction, followed by discrimination and graph expansion before fitting the adaptive token budget.

- Reflect Path: Usage feedback identifies contributing memories for utility reinforcement and resets FSRS curves.

- Background Path: Idle-time consolidation enforces lifecycle rules—pruning obsolete data and compressing episodic details into facts.

This continuous loop maintains memory health, prevents context collapse, and ensures compliance-driven adjudication.

Empirical Evaluation: Comparative Analysis

Benchmarking against MemOS and SimpleMem using the LoCoMo-10 dataset, MemArchitect demonstrates markedly improved aggregate accuracy (+7.45%), confirming the efficacy of policy-driven governance over raw compression or OS-style infinite retention.

- Against SimpleMem (Qwen-3B backbone), MemArchitect improves on every task, most notably temporal reasoning (+39.2% accuracy), as policy-driven retention preserves critical temporal markers.

- Against MemOS (Llama-3.1-8B backbone), MemArchitect sacrifices some raw recall (notably in temporal reasoning, -44.9% accuracy) due to aggressive decay. However, it surpasses MemOS in open-domain and multi-hop tasks (+11.3% and +4.1% respectively), indicating superior semantic synthesis and resilience against context pollution.

- These results substantiate the claim that adjudication models shift failure modes from uncontrolled hallucination towards tunable pruning, a manageable trade-off for agentic robustness.

Implications and Research Trajectory

MemArchitect’s explicit governance protocols articulate a new paradigm for memory management in agentic LLM architectures. By adopting active adjudication policies, the system enables sustained coherence for multi-session personalization, compliance, and reliable factual recall. Practically, it reduces context pollution and the proliferation of "zombie memories," making LLM agents better suited to critical deployment scenarios that demand longitudinal reliability and privacy.

The architecture also points to several directions for future work: integrating advanced NLI-based conflict resolvers, refining FSRS calibration for low-frequency fact retention, enforcing compliance (e.g., "Right-to-be-Forgotten"), and expanding evaluations to benchmarks such as LongMemEval, PrefEval, and PersonaMem. The approach positions memory governance as a tunable, policy-driven layer whose hand-written rules could eventually be replaced by learned regulatory modules for continual adaptation.

Conclusion

MemArchitect establishes a policy-driven governance layer for memory lifecycle, consistency, retrieval, and compliance in agentic LLM architectures. Empirical benchmarks indicate strong aggregate improvements in accuracy and semantic integrity compared to algorithmic and system-level baselines. The architecture shifts agentic memory management from passive storage and compression toward active, policy-controlled adjudication, substantiating the necessity of explicit governance for long-term dependable AI agents. Future research will target robustness, benchmark generalization, and expanded policy enforcement for compliance and personalized agent memory.