Memento-Skills: Let Agents Design Agents

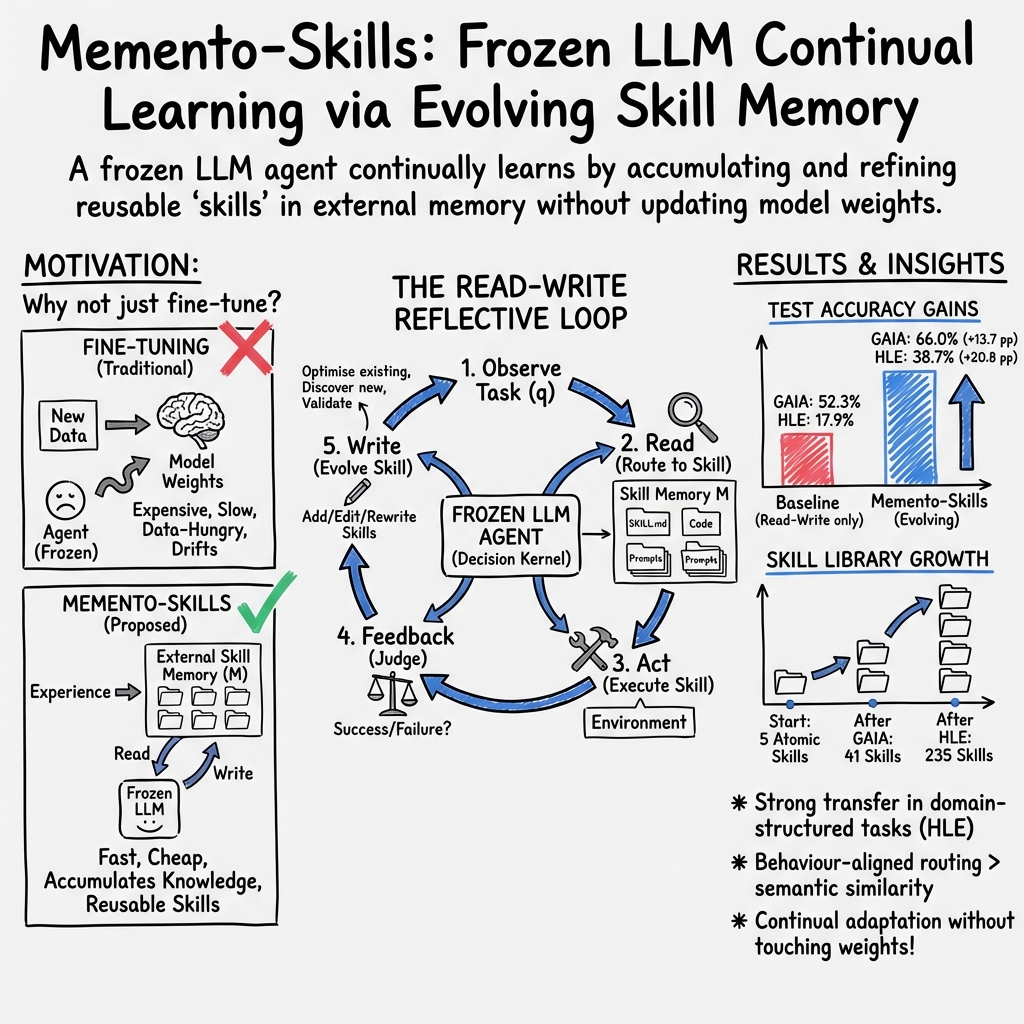

Abstract: We introduce Memento-Skills, a generalist, continually-learnable LLM agent system that functions as an agent-designing agent: it autonomously constructs, adapts, and improves task-specific agents through experience. The system is built on a memory-based reinforcement learning framework with stateful prompts, where reusable skills (stored as structured markdown files) serve as persistent, evolving memory. These skills encode both behaviour and context, enabling the agent to carry forward knowledge across interactions. Starting from simple elementary skills (like Web search and terminal operations), the agent continually improves via the Read–Write Reflective Learning mechanism introduced in Memento 2 [wang2025memento2]. In the read phase, a behaviour-trainable skill router selects the most relevant skill conditioned on the current stateful prompt; in the write phase, the agent updates and expands its skill library based on new experience. This closed-loop design enables continual learning without updating LLM parameters, as all adaptation is realised through the evolution of externalised skills and prompts. Unlike prior approaches that rely on human-designed agents, Memento-Skills enables a generalist agent to design agents end-to-end for new tasks. Through iterative skill generation and refinement, the system progressively improves its own capabilities. Experiments on the General AI Assistants benchmark and Humanity's Last Exam demonstrate sustained gains, achieving 26.2% and 116.2% relative improvements in overall accuracy, respectively. Code is available at https://github.com/Memento-Teams/Memento-Skills.

Explain it Like I'm 14

What is this paper about?

This paper introduces Memento-Skills, a system that helps an AI “teach itself” new things by building and improving a shared toolbox of skills. Instead of changing the AI’s brain (the large language model, or LLM), it keeps the brain the same and gets smarter by writing down and refining useful “recipes” for how to solve tasks. Over time, it learns to design small, task-specific agents from these recipes, so in a sense it’s an agent that can design other agents.

What are the key questions the paper asks?

- Can an AI keep improving at new tasks without retraining its underlying model?

- If the AI stores what it learns as reusable “skills,” can it reuse them to solve future problems better and faster?

- Can a smart “router” pick the right skill based on what actually works (not just what sounds similar)?

- Does this approach beat simpler systems on real benchmarks with tough questions?

How does the system work? (Simple explanation)

Imagine the AI as a student with a fixed brain and a growing notebook:

- The brain: a frozen LLM that doesn’t get retrained.

- The notebook: a library of skills—each skill is a small folder with a short guide (SKILL.md), prompts, and code that shows how to do a task.

The AI follows a simple, repeatable loop to get better:

- Observe → Read → Act → Feedback → Write

Here’s what that means in everyday terms:

- Observe: The AI gets a new task (like a question or problem).

- Read: A “skill router” acts like a librarian and finds the most useful skill from the notebook for this task. If none fits, the AI can draft a new skill.

- Act: The AI uses the chosen skill to try to solve the task.

- Feedback: A judge checks if the answer is correct and explains what went wrong if it isn’t.

- Write: The AI updates the skill—adding guardrails, fixing mistakes, or splitting out a new skill if needed. It also runs a quick “unit test” to make sure the update didn’t break anything.

Why this matters: the AI learns from its own experiences by improving its notebook, not by changing its brain. Think of it as leveling up by collecting better tools, not by changing who you are.
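The Observe → Read → Act → Feedback → Write loop described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the paper's implementation: `SkillLibrary`, `run_episode`, and the `route`, `act`, `judge`, and `rewrite` callables are hypothetical names, the skill library is reduced to an in-memory dictionary, and the unit-test check in the Write step is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Tuple


@dataclass
class SkillLibrary:
    """Hypothetical stand-in for the skill folders: skill name -> guide text."""
    skills: Dict[str, str] = field(default_factory=dict)


def run_episode(
    task: str,
    library: SkillLibrary,
    route: Callable[[str, SkillLibrary], Optional[str]],
    act: Callable[[str, str], str],
    judge: Callable[[str, str], Tuple[bool, str]],
    rewrite: Callable[[str, str], str],
) -> Tuple[str, bool]:
    # Observe: `task` is the new problem handed to the agent.
    # Read: the router returns a skill name, or None if nothing fits.
    name = route(task, library)
    if name is None:
        # No matching skill: draft a new one (placeholder content here).
        name = f"skill_{len(library.skills)}"
        library.skills[name] = "draft: " + task
    # Act: the frozen model solves the task using the chosen skill.
    answer = act(task, library.skills[name])
    # Feedback: the judge returns a verdict and an explanation.
    ok, critique = judge(task, answer)
    # Write: on failure, fold the critique back into the skill text.
    if not ok:
        library.skills[name] = rewrite(library.skills[name], critique)
    return answer, ok


# Toy callables (all assumptions) so the loop can be run end to end.
library = SkillLibrary({"search": "Use web search."})
route = lambda task, lib: "search" if "find" in task else None
act = lambda task, guide: "42" if "guardrail" in guide else "unknown"
judge = lambda task, answer: (answer == "42", "add a guardrail")
rewrite = lambda guide, critique: guide + " Note: " + critique

first = run_episode("find the answer", library, route, act, judge, rewrite)
second = run_episode("find the answer", library, route, act, judge, rewrite)
```

In this toy run the first attempt fails, the critique is written into the skill, and the second attempt succeeds. Nothing about the model itself changes between the two calls; only the stored skill text does.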

How the “skill router” is trained (in simple terms)

Picking the right skill is hard. Many skills might sound similar but behave differently. So the team trained the router to care about what works in practice, not just matching words. They:

- Collected lots of example skills.

- Made realistic practice questions and labeled which skills solve them and which look similar but don’t work.

- Trained the router to score skills higher if they’re likely to succeed on a task, using a method similar to learning from many one-step examples.

In short: the router learns to choose the tool that actually solves the problem, not just the one with similar keywords.
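One way to make "score skills higher if they're likely to succeed" concrete is a contrastive loss over labeled skills. The sketch below uses a common multi-positive InfoNCE formulation; the exact form used in the paper is not given in this summary, so the formula, the function name `multi_positive_info_nce`, and the default temperature are assumptions.

```python
import math
from typing import Sequence


def multi_positive_info_nce(
    scores: Sequence[float],
    is_positive: Sequence[bool],
    temperature: float = 0.05,
) -> float:
    """Loss for one query: -log(sum of exp over positives / sum of exp over all).

    `scores` are query-skill similarities; `is_positive` marks skills that
    actually solve the query. Everything else counts as a negative.
    """
    if len(scores) != len(is_positive):
        raise ValueError("scores and is_positive must have the same length")
    if not any(is_positive):
        raise ValueError("at least one positive skill is required")
    logits = [s / temperature for s in scores]
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - top) for l in logits]
    positive_mass = sum(e for e, p in zip(exps, is_positive) if p)
    return -math.log(positive_mass / sum(exps))


# The correct skill scores highest: the loss is close to zero.
good = multi_positive_info_nce([0.9, 0.2, 0.1], [True, False, False])
# A look-alike skill outranks the correct one: the loss is large.
bad = multi_positive_info_nce([0.9, 0.2, 0.1], [False, True, False])
```

Minimizing this loss pushes the working skills above the similar-sounding ones that fail, which is the behavior the router is meant to learn.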

What did they find?

- On GAIA (a benchmark with real-world, multi-step questions):

  - The self-improving system reached 66% accuracy on the test set, compared to 52.3% for a simpler version without skill rewriting (+13.7 percentage points).

  - Training rounds boosted success on practice questions up to 91.6% by the third round.

  - Transfer to new GAIA questions was limited because the questions are very diverse, so skills learned on one problem often didn’t match new ones.

- On HLE (Humanity’s Last Exam, with structured subjects like Biology, Math, and Physics):

  - The system achieved 38.7% test accuracy vs. 17.9% for the simpler baseline, which is more than double the performance.

  - Because HLE has clear subjects, skills learned on one Biology problem were reused on other Biology problems, showing strong cross-task transfer.

- The skill library grew and organized itself:

  - Starting from 5 basic skills, the library expanded to 41 skills on GAIA and 235 on HLE.

  - These skills formed sensible clusters (like Web/Search, Math/Chemistry, Physics, Code/Text), similar to a well-organized toolbox.

- The trained router improved real outcomes:

  - It was better at picking the right skill than common keyword or embedding search methods.

  - This led to more successful end-to-end task completions.
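The accuracy figures above and the relative improvements quoted in the abstract are two views of the same numbers. The short check below shows the arithmetic; it assumes the GAIA score is 66.0% (the summary rounds it to 66%), and `relative_improvement` is just an illustrative helper name.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Relative gain in percent: (improved - baseline) / baseline * 100."""
    return (improved - baseline) / baseline * 100.0


# Accuracies in percent, taken from the findings above.
gaia = relative_improvement(52.3, 66.0)
hle = relative_improvement(17.9, 38.7)

print(f"GAIA: +{66.0 - 52.3:.1f} points, {gaia:.1f}% relative")
print(f"HLE:  +{38.7 - 17.9:.1f} points, {hle:.1f}% relative")
```

The results round to 26.2% and 116.2%, matching the abstract. A percentage-point gain is an absolute difference, while a relative improvement divides that difference by the baseline, which is why the HLE gain looks so much larger.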

Why is this important?

- Learns without retraining: The AI gets better by improving its skill library, not by updating its core model. That saves time and money and is easier to deploy.

- Builds reusable knowledge: Skills are like recipes you can reuse. Over time, the system collects reliable routines and “muscle memory” for common tasks.

- Adapts to domains: When tasks share patterns (like in school subjects), the AI transfers skills more effectively and improves quickly.

- Modular and controllable: You can boost performance by:

  - Using a stronger LLM,

  - Running more learning rounds (collect more “experience”),

  - Improving the router’s embeddings.

- Practical safety checks: Each skill update is tested to avoid breaking what already works.

Bottom line

Memento-Skills shows that an AI can keep getting better by writing and refining a shared library of skills—like building a personal playbook—without changing the AI’s core brain. This makes continual learning more practical, reusable, and affordable, and it opens the door to AI systems that design and improve their own mini-agents for new tasks.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of open issues the paper leaves unresolved. Each point is framed to be concrete and actionable for future work.

- External validity and scale of evaluation: Results are reported only on small GAIA (100 train/65 test) and a subset of HLE (788/342). Expand to larger, diverse benchmarks (e.g., SWE-bench, HotpotQA, WebArena, ToolBench) and report confidence intervals and multi-seed variance.

- Dependence on a single underlying LLM: All experiments use Gemini-3.1-Flash. Evaluate across multiple LLMs and sizes (e.g., GPT-4/4o, Claude, Llama variants) to quantify how “frozen LLM strength” impacts learning curves, router performance, and convergence.

- Router training with synthetic queries: The behavior-aligned router is trained on LLM-synthesized goals and curated, high-star GitHub skills. Assess bias and overfitting by (i) training on real user routing queries/logs, (ii) ablating synthetic query quality, and (iii) testing under domain shift.

- Limited router evaluation: Offline retrieval is measured on 140 synthetic queries; end-to-end metrics are reported without uncertainty. Increase test size, add real-world routing tasks, and compare alternative routers (cross-encoders, LLM-as-ranker, ColBERT, two-tower with hard-negative mining) and fusion strategies beyond RRF.

- Incorporating newly created skills into routing: The paper does not specify how the router is updated as the skill library evolves. Design and evaluate online or incremental router updates (e.g., periodic fine-tuning, bandit feedback, index-only vs weight updates) and quantify cold-start routing for new skills.

- Scalability and latency at large library sizes: There is no systematic analysis of retrieval latency, memory footprint, and throughput as skills grow from 10^2 to 10^6. Benchmark ANN indices, hybrid retrieval pipelines, and caching strategies; report p50/p95/p99 latencies and cost per query.

- Single-skill routing and composition limits: The router selects a single “most relevant” skill. Many tasks require multi-skill plans. Extend to multi-skill selection, sequencing, and hierarchical composition; evaluate planning quality and joint credit assignment across skills.

- Failure attribution granularity: The optimizer assigns blame to a single target skill. Investigate multi-skill credit assignment and structural causal analyses when failures emerge from skill interactions or shared utilities.

- Reliability of LLM-based judge and attribution: Execution success, failure attribution, and unit-test validation rely on LLM judgments. Quantify judge accuracy against human labels, measure error propagation, and explore programmatic or ensemble critics with calibration.

- Regression control via LLM-generated tests: The unit-test gate uses synthetic tests created and scored by LLMs. Measure test coverage, mutation score, and regression rates; add curated regression suites, property-based tests, and fuzzing to prevent silent degradations.

- Safety and isolation guarantees: Although a security policy and UvSandbox are mentioned, no formal evaluation is provided. Red-team the system, quantify unsafe command rates, enforce resource limits, network egress policies, and secret isolation; report safety metrics and incident outcomes.

- Supply-chain and code provenance risks: Skills originate from public code or are auto-generated. Define and evaluate policies for dependency whitelisting, license compliance, vulnerability scanning, and update policies as skills evolve.

- Skill bloat, pruning, and lifecycle management: The library grows to 235 skills on HLE without a policy for pruning, merging, or versioning. Develop and evaluate criteria for de-duplication, aging/decay, merging related skills, and semantic compression to control retrieval noise.

- Hyperparameter sensitivity and auto-tuning: Key thresholds (utility δ, n_min, retries K, router temperature τ) are not ablated. Provide sensitivity analyses and automatic tuning strategies (e.g., Bayesian optimization, bandits) tied to performance/safety trade-offs.

- Long-horizon and multi-modal tasks: The method is positioned for multi-step, multi-modal problems, but evaluation is limited. Add tasks with images/audio and longer temporal dependencies; measure how skill reuse scales with horizon length and modality.

- Sample efficiency vs fine-tuning: Compare the self-evolving skill approach to parameter-efficient tuning (e.g., LoRA) on equal compute/time budgets to quantify relative sample efficiency, stability, and maintenance costs.

- Baseline breadth: Beyond a read–write ablation, there is no comparison to strong agent frameworks (ReAct variants, MRKL, Toolformer, AutoGen, SWE-bench agents). Include competitive baselines and standardized agent evaluations.

- Theoretical scope and rates: Convergence guarantees are stated for the retrieval policy with KL regularization, but (i) no convergence rate is proven, and (ii) no guarantees address non-stationarity introduced by write operations. Provide rate analyses and sufficient conditions on Write to preserve convergence.

- Domain transfer characterization: GAIA exhibits limited transfer; HLE shows stronger transfer aligned with subject taxonomies. Formalize domain conditions that enable transfer, and explore automatic discovery/induction of domain structures to organize skills.

- Robustness to noisy/ambiguous queries: Real queries are messy. Evaluate robustness to typos, code-switching, and underspecified requests; develop query normalization or clarification strategies and quantify their effect on routing and success.

- Embedding model impact: Only Qwen3-Embedding-0.6B is used. Ablate different embedding backbones, training objectives (e.g., supervised contrastive vs pairwise ranking), and temperatures; examine cross-encoder reranking trade-offs.

- Continual router learning under sparse rewards: One-step offline RL sidesteps exploration challenges. Investigate safe online (off-policy) updates using logged rewards, counterfactual evaluation (IPS/DR estimators), and conservative RL to avoid regressions.

- Observability, provenance, and explainability: The system lacks a clear mechanism for auditing why a skill was selected and what artifact changes were made. Add provenance tracking, per-decision explanations, and reproducible execution traces; assess their utility in debugging and trust.

- Privacy and compliance: Storing and evolving skills from deployment traces risks capturing sensitive information. Define PII filtering, data minimization, retention policies, and differential privacy options; evaluate privacy leakage empirically.

- Handling environmental drift: Web sources, APIs, and formats change. Propose and measure continuous revalidation, health checks, and automated skill deprecation/repair in response to drift.

- Tip memory contribution: “Tip memory” is introduced but its quantitative impact is not reported. Isolate and measure the contribution of tip memory vs skill updates; define retention/decay policies.

- Cost and efficiency reporting: Aside from anecdotal latency, there is no systematic accounting of token usage, compute time, and storage growth. Provide cost–performance curves and guidance for deployment budgets.

- Reproducibility and release details: Router training hyperparameters, negative mining protocols, and weight checkpoints are not fully specified. Release exact scripts, seeds, trained routers, and full data splits for reproducibility.

- SKILL.md format standardization: Interoperability, schema evolution, and backward compatibility of skill artifacts across agents/LLMs are not addressed. Propose a versioned schema and migration tools; evaluate portability.

- Ethical constraints and misuse prevention: The system can autonomously synthesize and execute code (e.g., scraping, bypassing restrictions). Define guardrails, policy constraints, and monitoring for dual-use concerns; report compliance practices.
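To illustrate why the first item above asks for confidence intervals, here is a minimal sketch of a Wilson score interval for an accuracy measured on a small test set. The test-set size of 65 comes from the list above; the count of 43 correct answers is an assumption (43/65 is the count closest to the reported 66%), and `wilson_interval` is an illustrative helper, not something from the paper.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives about 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


# Assumed count: 43 correct out of the 65 GAIA test questions.
low, high = wilson_interval(43, 65)
print(f"95% interval: {low:.1%} to {high:.1%}")
```

Under these assumptions the interval spans roughly 54% to 76%, which shows how much uncertainty a 65-question test set leaves around a single accuracy number.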

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage Memento-Skills’ external skill memory, read–write reflective learning loop, and behavior-aligned routing, along with likely sectors, workflows/products, and key feasibility dependencies.

- Enterprise IT and Customer Support Automation (software, IT operations)

- Use case: Self-improving helpdesk agents that triage, search knowledge bases, run web/CLI tools, and refine runbooks as reusable skills (SKILL.md + scripts).

- Tools/workflows/products: Skill router + skill store; failure attribution and unit-test gate; Grafana-style telemetry for utility scores; GUI/CLI for ops teams.

- Assumptions/dependencies: Safe sandboxing (e.g., uv), strong security policy to block dangerous commands, access to ticket/KB data, LLM/embedding quality, human-in-the-loop for high-risk actions.

- Software Engineering Copilot for Runbooks and Ops Playbooks (software)

- Use case: Agents that convert ad hoc fixes and incident responses into tested, versioned skills; automatically patch brittle scripts after failures.

- Tools/workflows/products: Skill evolution engine (failure attribution → targeted file edits → unit tests → rollback on fail); repo-backed SKILL.md folders; CI hooks.

- Assumptions/dependencies: Reliable LLM-based judge for pass/fail, mature test harnesses, version control and change-management policies.

- Retrieval/Tool Routing Upgrade for Existing Agent and RAG Systems (software/platforms)

- Use case: Drop-in behavior-aligned router (offline InfoNCE fine-tuning) to select the right tool/plugin/skill before execution, improving end-to-end success.

- Tools/workflows/products: Hybrid retrieval pipeline (BM25 + dense + reranking + RRF), Memento-Qwen router as a component; data generation for positives/hard negatives.

- Assumptions/dependencies: Access to or creation of a curated skill/plugin catalog; synthetic query generation quality; embedding model choice; evaluation on real trajectories.

- Low/No-Code Business Process Automation (operations, back office)

- Use case: LLM-designed task agents for reporting, spreadsheet ops, data ingestion/validation; evolves skills from actual run feedback with no weight updates.

- Tools/workflows/products: Desktop GUI/CLI; connectors to SaaS (e.g., spreadsheets, CRM); SKILL.md libraries for repeatable workflows.

- Assumptions/dependencies: API integrations/credentials, permissioning, organizational guardrails, visibility into run logs.

- Knowledge Management with Executable Memory (enterprise)

- Use case: Turn tribal knowledge into executable, auditable skill folders; structured memory that “remembers” and improves across interactions.

- Tools/workflows/products: Skill store with semantic clustering; context manager for stateful prompts; shared, versioned repositories for teams.

- Assumptions/dependencies: Content governance, deduplication and curation policies, taxonomy alignment for better transfer.

- Personal Productivity Agents (daily life, prosumers)

- Use case: Agents that learn recurring tasks (travel booking, expenses, file management), refine steps via read–write feedback and store them as reusable skills.

- Tools/workflows/products: Local desktop agent with web/file tools; SKILL.md-based automations; unit-test gate for benign tasks.

- Assumptions/dependencies: Local sandboxing, API keys for services, user consent and privacy controls.

- Classroom and Study Support (education)

- Use case: Tutor-like assistants that build domain-aligned skill libraries (e.g., math, biology) and reuse them across questions; immediate for ungraded, practice scenarios.

- Tools/workflows/products: Subject-specific skill clusters; practice/test generation via LLM judge; reflective retries for learning.

- Assumptions/dependencies: Alignment with curricula, content quality, oversight to prevent hallucinated or unsafe content; no high-stakes/autograding without review.

- Governance, Audit, and Compliance for Agentic Systems (policy, regulated industries)

- Use case: Prefer agents that adapt via external skills for auditability; SKILL.md and version history provide traceable changes instead of opaque weight updates.

- Tools/workflows/products: Audit dashboards (utility, success rate, change logs), approval gates for skill mutations, policy templates for unit-test thresholds.

- Assumptions/dependencies: Organizational policies that treat skill changes like code changes, reproducible test frameworks, change control boards.

- DevSecOps Guardrails for Agentic Execution (security)

- Use case: Apply the paper’s security policy module and sandbox execution to reduce operational risk of agents running shell/web tools.

- Tools/workflows/products: Command allow/deny lists, environment isolation, automated regression tests after each skill mutation.

- Assumptions/dependencies: OS-level isolation, secret management, robust logging/alerts; tested rollback mechanisms.

- Open Skill Catalogs and Internal Marketplaces (software ecosystems)

- Use case: Seed and share curated libraries of SKILL.md across teams; train routers on the catalog to improve routing and reuse.

- Tools/workflows/products: Skills marketplace (e.g., the provided dataset), internal hubs for discovery, automated deduplication and ranking by utility.

- Assumptions/dependencies: Licensing/IP clarity for shared skills, quality curation processes, reputation/ratings mechanisms.

- Academic Reproducibility and Agent Benchmarking (academia)

- Use case: Researchers reproduce and extend reflective skill-learning studies (GAIA, HLE), swap LLMs/embeddings, and study cross-task transfer.

- Tools/workflows/products: The released codebase/CLI/GUI; experiment configs; unit tests as gates for mutations.

- Assumptions/dependencies: Access to model APIs, consistent evaluation judges, credit assignment reliability.
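Several of the use cases above rely on the same gate: apply a targeted edit to a skill, run its unit tests, and roll back if any test fails. The sketch below shows that pattern in its simplest form. It is a hedged illustration rather than the released code: skills are plain strings in a dictionary, tests are ordinary Python predicates instead of LLM-generated checks, and `apply_with_gate` is a hypothetical name.

```python
from typing import Callable, Dict, Iterable


def apply_with_gate(
    skills: Dict[str, str],
    name: str,
    new_text: str,
    tests: Iterable[Callable[[str], bool]],
) -> bool:
    """Apply an edit to one skill; keep it only if every test passes."""
    previous = skills[name]          # snapshot for rollback
    skills[name] = new_text          # tentative edit
    if all(test(skills[name]) for test in tests):
        return True                  # edit accepted
    skills[name] = previous          # rollback on any failure
    return False


# Toy example: the skill must keep its safety line and stay short.
skills = {"cleanup": "Never run rm -rf. List files first."}
tests = [
    lambda text: "Never run rm -rf" in text,
    lambda text: len(text) < 200,
]

accepted = apply_with_gate(
    skills, "cleanup", "Never run rm -rf. List files, then archive.", tests
)
rejected = apply_with_gate(skills, "cleanup", "Just delete everything.", tests)
```

Treating each skill edit like a code change with a pass/fail gate is what keeps a self-editing library from silently regressing. In a real deployment the snapshot would more likely be a version-control commit than an in-memory string.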

Long-Term Applications

These opportunities require further research, scaling, domain integration, regulatory alignment, or stronger safety guarantees before broad deployment.

- Cross-Department Self-Evolving Enterprise Agents (software, operations)

- Use case: Organization-wide agent that designs and refines agents (skills) for finance, HR, IT, logistics; scales to tens of thousands of skills with dedup, routing, and governance.

- Potential products/workflows: Enterprise skill fabric; cross-domain router with behavior alignment; multi-tenant governance and observability.

- Assumptions/dependencies: Scalable indexing (millions of skills), policy/versioning at scale, robust retrieval under domain drift, cost controls.

- Clinical Decision Support and Care Pathway Automation (healthcare)

- Use case: Domain-aligned skill libraries for triage, guideline application, coding, and eventually CDS; reflective updates from outcomes with rigorous validation.

- Potential products/workflows: Clinically validated skill packs; integration with EHR; unit-test gates replaced/augmented by clinical test suites and audits.

- Assumptions/dependencies: Regulatory clearance (e.g., FDA/CE), clinician oversight, dataset curation, safety/ethics frameworks, bias monitoring.

- Digital Government Services at Scale (public sector, policy)

- Use case: Self-improving agents to process citizen requests, forms, benefits, and information queries; auditable, domain-structured skills.

- Potential products/workflows: Government skill registries; procurement specs that require externalized-memory agents for traceability; automated compliance checks.

- Assumptions/dependencies: Data privacy regimes, procurement standards, red-team and safety audits, citizen-facing reliability criteria.

- Advanced Compliance and Risk Automation (finance, legal)

- Use case: Agents that encode regulatory rules as skills, adapt to new guidance, and provide explainable audit trails for changes; pre-execution risk scoring.

- Potential products/workflows: RegTech skill libraries; compliance sandboxes; approval workflows for skill updates.

- Assumptions/dependencies: Up-to-date regulatory corpora, formalized unit tests for rules, model risk management frameworks, legal sign-off.

- Industrial/SCADA and Energy Operations (energy, manufacturing)

- Use case: Runbook automation for plant operations, alarms, and maintenance; reflective updates to procedures; strict safety gating.

- Potential products/workflows: OT-integrated skill executors with simulation-before-act; staged rollout (simulate → shadow → act).

- Assumptions/dependencies: Air-gapped or tightly controlled environments, digital twins for safe testing, fail-safe controls, certification.

- Robotics and Embodied Agents (robotics)

- Use case: Translate SKILL.md into embodied procedures; learn and refine manipulation/navigation routines through reflective loops with physical/virtual trials.

- Potential products/workflows: Robot skill repos, task planners aligned with skill memory, sim-to-real workflows with test gates.

- Assumptions/dependencies: High-fidelity simulators, safe execution policies, perception–action integration, hardware variability handling.

- Scientific Discovery and Lab Automation (academia, biotech)

- Use case: Encode experimental protocols, data cleaning, and analysis as evolvable skills; improve reproducibility and expedite method refinement.

- Potential products/workflows: Lab skill notebooks; instrument drivers wrapped as skills; automated assay validation as gates.

- Assumptions/dependencies: Instrument integrations, domain ontologies, rigorous validation metrics, IP and data governance.

- Institution-Scale Adaptive Tutoring and Assessment (education)

- Use case: Curriculum-aligned, self-evolving tutors that capture and reuse skills across cohorts and courses; controlled, fair, and explainable evolution.

- Potential products/workflows: Skill packs per subject and level; educator dashboards for reviewing mutations and outcomes; fairness audits.

- Assumptions/dependencies: Bias/fairness controls, privacy protections for student data, accreditation alignment, robust content QA.

- Standards and Certification for Skill-Based Agents (policy, ecosystems)

- Use case: Standardize SKILL.md schemas, mutation gates, and audit logs; certify skill libraries and routers for sectors (e.g., medical, finance, safety-critical).

- Potential products/workflows: Standards bodies’ specs; certification labs; compliance test suites for skill evolution.

- Assumptions/dependencies: Industry/government collaboration, open schemas, conformance tests, incentives for adoption.

- Federated Skill Exchanges and Trusted Marketplaces (ecosystems)

- Use case: Securely share and monetize skills across organizations; reputation and provenance tracking; federated learning for routers without data leakage.

- Potential products/workflows: Federated router training; cross-org dedup; license-aware distribution.

- Assumptions/dependencies: IP/licensing frameworks, provenance infrastructure, privacy-preserving training, marketplace governance.

- Multi-LLM Orchestration and Cost/Latency Optimization (platforms)

- Use case: Route execution to different LLMs/tools per skill based on cost/latency/quality; read–write evolution learns the best pairing over time.

- Potential products/workflows: Policy engines for model selection; telemetry-driven optimization.

- Assumptions/dependencies: Consistent evaluation across models, vendor diversity, cost tracking, fallback strategies.

- Safety Evaluation and Red-Teaming Frameworks for Skill Evolution (cross-sector)

- Use case: Independent judges, adversarial unit tests, and regression suites tailored to evolving skills; audit-ready safety reports.

- Potential products/workflows: Red-team packs per domain; mutation diff analyzers; continuous compliance pipelines.

- Assumptions/dependencies: Strong evaluator reliability, diverse adversarial corpora, alignment with sector risk frameworks.

Notes on feasibility across applications:

- Transfer is strongest when skills align with structured domain taxonomies (as shown with HLE); highly diverse tasks may need larger libraries and better routers.

- The externalized-memory approach depends critically on: (1) underlying LLM competence, (2) high-quality, behavior-aligned routing, (3) safe, reliable execution environments, and (4) robust, domain-appropriate test gates.

- LLM judges and synthetic data generation introduce subjectivity; high-stakes domains will require human oversight and formal verification beyond LLM-based gating.

Glossary

- BM25: A classic sparse lexical retrieval algorithm that scores documents based on term frequency and document length normalization. "We find that purely semantic routers (e.g., BM25 [12] or embedding routers such as Qwen-Embedding [19]) are insufficient for skill selection"

- Boltzmann routing policy: A stochastic selection rule that samples actions with probability proportional to the exponential of their (temperature-scaled) Q-values. "yielding a Boltzmann routing policy"

- case-based reasoning: An approach that solves new problems by retrieving and adapting solutions from similar past cases. "case-based reasoning LLM agents [6, 7]"

- contrastive retrieval: Training a retriever to pull together matching query–document pairs and push apart mismatches using positive and negative examples. "We train a contrastive retrieval model via single-step offline RL"

- cross-encoder reranker: A re-ranking model that jointly encodes a query and candidate to produce precise relevance scores after initial retrieval. "optionally applies a cross-encoder reranker to produce the final top-k skills."

- credit assignment: The process of identifying which component or action contributed to success or failure for targeted updates. "performing credit assignment at the skill level."

- Direct Preference Optimization (DPO): A post-training method that optimizes models directly from pairwise preference data without explicit reward models. "SFT / RLHF / DPO"

- episodic memory: A memory structure that stores past interactions (episodes) to inform future decisions. "augmenting the agent with an episodic memory M_t"

- failure attribution: Diagnosing the specific module or skill responsible for an observed error to guide corrective changes. "an LLM-based failure attribution selector"

- hard negatives: Challenging non-matching examples that are semantically close to positives, used to make contrastive learning more discriminative. "and hard negatives (same domain and terminology, but the target skill is not the right tool)."

- InfoNCE: A contrastive loss function that maximizes similarity of positive pairs while minimizing similarity to negatives under a softmax normalization. "multi-positive InfoNCE loss"

- KL-regularised soft policy iteration: A policy-improvement scheme that balances maximizing expected returns with staying close to a prior via Kullback–Leibler regularization. "the KL-regularised soft policy iteration over the Reflected MDP"

- LLM decision kernel: The conditional distribution over actions produced by the LLM given a state and retrieved context. "P_LLM denotes the LLM decision kernel"

- offline RL: Reinforcement learning from a fixed dataset without further environment interaction, avoiding online exploration. "single-step offline RL"

- One-step offline Q-learning: A horizon-1 Q-learning formulation that learns value estimates from single-step transitions in offline data. "One-step offline Q-learning view."

- Parzen kernel: A kernel function used in nonparametric density estimation (Parzen windows) to approximate probability densities. "Does the Parzen kernel scale?"

- policy iteration: An RL algorithm alternating between evaluating a policy and improving it until convergence. "cast as an implicit form of policy iteration"

- Read-Write Reflective Learning: A closed-loop process that reads from memory to act, then writes back feedback to refine future behavior. "Through the Read-Write Reflective Learning loop, the agent autonomously acquires, refines, and reuses these skills"

- ReAct steps: A prompting/execution pattern that interleaves reasoning (“Thought”) with tool-based actions to solve tasks. "Execution Loop (ReAct steps)"

- reciprocal rank fusion: A rank-aggregation method that combines multiple ranked lists by summing reciprocal ranks, often improving recall. "score-aware reciprocal rank fusion"

- Reflected MDP: A reformulated MDP that augments the state with memory and folds memory updates into the transition dynamics. "The Reflected MDP reformulates this as"

- skill memory: A collection of reusable, executable skill artifacts (code, prompts, specs) serving as persistent external memory. "Definition 1.1 (Skill Memory)."

- skill router: A selector that chooses the most behaviorally relevant skill from the library given the current task. "a behaviour-trainable skill router selects the most relevant skill"

- soft Q-function: A value function whose exponentiated outputs define a soft (Boltzmann) policy, enabling entropy-regularized control. "We interpret the learned score as a soft Q-function"

- SRDP (Stateful Reflective Decision Process): An extension of the MDP that incorporates episodic memory and an LLM decision kernel for stateful agency. "Stateful Reflective Decision Process (SRDP) [17]"

- t-SNE: A non-linear dimensionality reduction technique used to visualize high-dimensional data in 2D or 3D. "t-SNE projection of skill embeddings."

- unit-test gate: An automated safeguard that requires proposed skill changes to pass synthesized tests before being accepted. "an automatic unit-test gate"

- utility score: An empirical measure of a skill’s effectiveness (e.g., success rate) used to govern updates or discovery. "increasing the skill's utility score"
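Two of the glossary entries above, the Boltzmann routing policy and reciprocal rank fusion, are easy to show in code. The sketch below is illustrative only: the function names are made up, the fusion is the standard reciprocal rank fusion with the usual constant k = 60 rather than the paper's "score-aware" variant, and the skill names and Q-values are invented.

```python
import math
from typing import Dict, List, Sequence


def boltzmann_policy(q_values: Dict[str, float], temperature: float = 1.0) -> Dict[str, float]:
    """Selection probabilities proportional to exp(Q / temperature)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    top = max(q_values.values())  # subtract the max for numerical stability
    weights = {k: math.exp((q - top) / temperature) for k, q in q_values.items()}
    total = sum(weights.values())
    return {k: w / total for k, w in weights.items()}


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Standard RRF: each list adds 1 / (k + rank) to an item's fused score."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by name for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Invented Q-values for three skills; a lower temperature sharpens the policy.
probs = boltzmann_policy({"web_search": 2.0, "calculator": 1.0, "pdf_reader": 0.0})
sharp = boltzmann_policy({"web_search": 2.0, "calculator": 1.0, "pdf_reader": 0.0}, 0.1)

# Fuse a lexical ranking and a dense ranking of the same three skills.
fused = reciprocal_rank_fusion([["a", "b", "c"], ["b", "c", "a"]])
```

A low temperature makes the policy nearly greedy, while a higher one leaves room to try other skills. Rank fusion uses only positions, so it can combine retrievers whose raw scores are on different scales.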
