- The paper introduces an adaptive, budgeted forgetting framework that optimizes memory retention by scoring entries using recency, frequency, and semantic similarity.

- It employs a constrained optimization method under fixed storage budgets to reduce false memory rates and mitigate performance decay.

- Empirical results on benchmarks like LOCOMO, LOCCO, and MultiWOZ demonstrate robust efficiency improvements and stable long-horizon reasoning.

Novel Memory Forgetting Techniques for Autonomous AI Agents: Balancing Relevance and Efficiency

Problem Context and Motivation

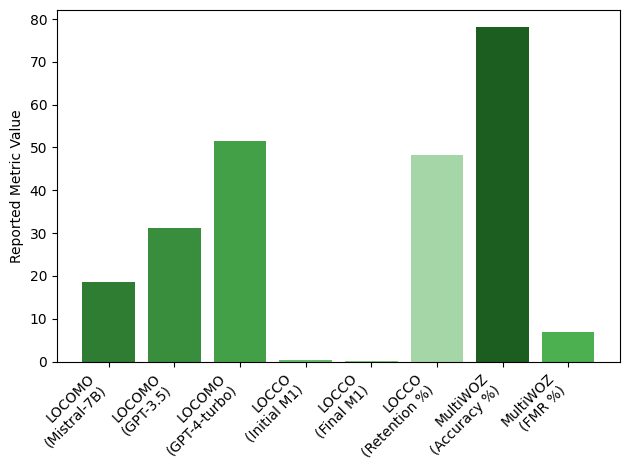

Efficient memory management in autonomous conversational agents is critical for maintaining long-horizon coherence, reasoning accuracy, and computational feasibility. Persistent accumulation of context introduces temporal decay, retrieval noise, and false memory propagation, all of which show up as measurable degradation in benchmark metrics such as LOCOMO F1 and LOCCO memory persistence. Experimental evidence from LOCOMO and LOCCO demonstrates rapid performance decay, e.g., Openchat-3.5’s M1 dropping from $0.455$ to $0.05$, while MultiWOZ shows a non-trivial false memory rate (FMR) of 6.8% at 78.2% accuracy. These results indicate that naively retaining all context is not viable and motivate principled forgetting mechanisms that operate under bounded resources.
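As a quick check on the size of that decay, the relative drop implied by the two reported M1 values can be worked out directly (the formula is the standard relative-change definition, not one stated in the paper):

$$
\frac{0.455 - 0.05}{0.455} = \frac{0.405}{0.455} \approx 0.89
$$

That is, roughly 89% of the initial score is lost, which is in line with the "over 85%" drop cited for static models in the evaluation below.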

Prior Work and Gaps in Existing Approaches

Memory management literature in AI agents encompasses hierarchical architectures, loss-based compression, and context window optimization. Honda et al. present ACT-R-inspired temporal decay and frequency reinforcement, but do not address tradeoffs between deletion granularity and task fidelity. Similar limitations persist across index-modified retrieval (Ming et al.), block-level memory (Xiao et al.), and KV cache compression (Shen et al.), where deletion policies are heuristic or absent, and resource constraints remain implicit rather than formally optimized.

Few works jointly model memory–performance tradeoffs or empirically validate forgetting strategies in multi-domain, long-horizon dialogue. Most either isolate inference efficiency (Mirani et al.), emphasize storage indexation (Saleh et al.), or restrict evaluation to working or in-trial memory (Hu et al.). The surveyed approaches lack an optimization-driven framework for relevance scoring, especially in the presence of adversarial contamination and temporal decay. This gap motivates formally constrained methods that integrate recency, frequency, and semantic alignment for adaptive, relevance-aware memory selection.

Methodological Framework

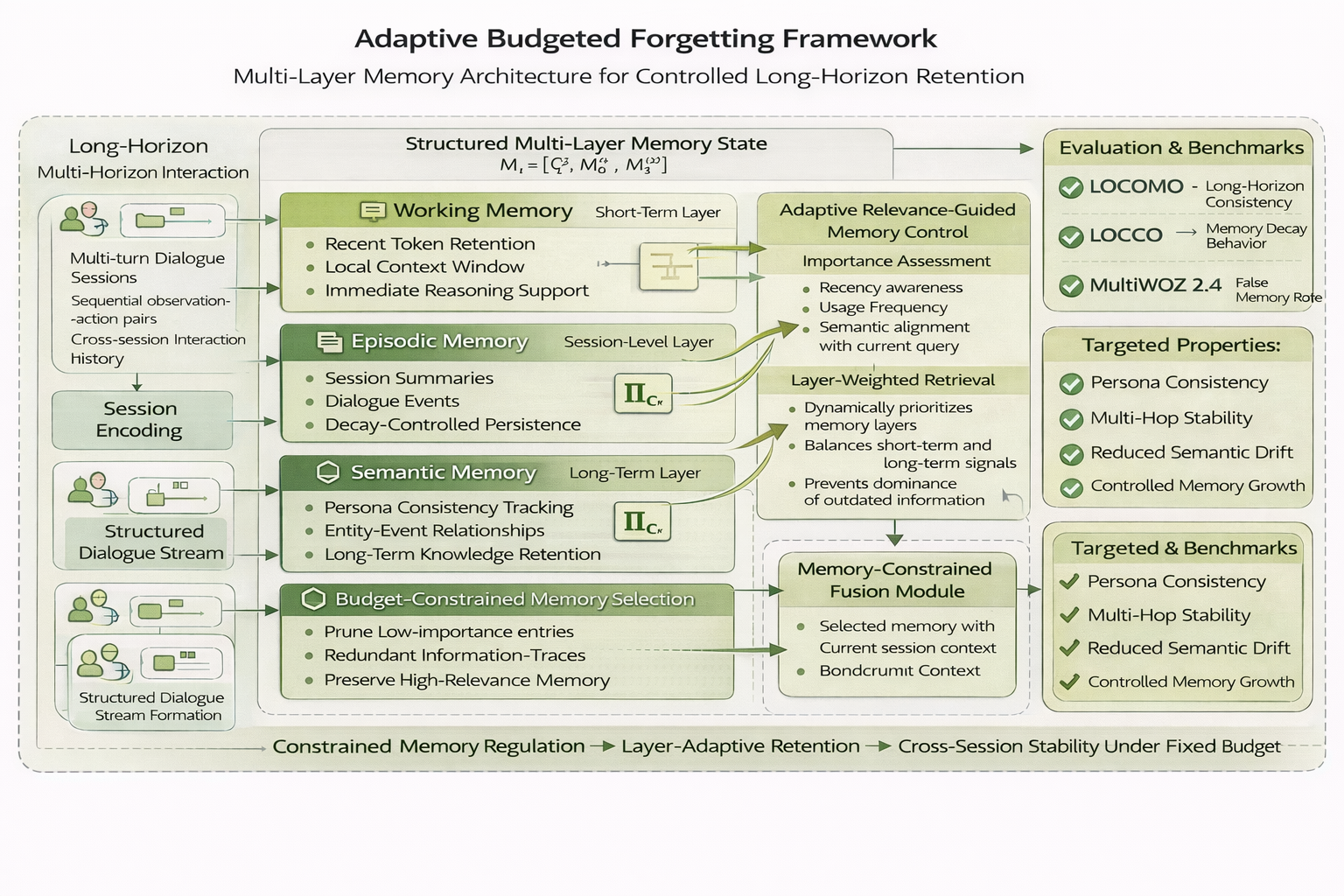

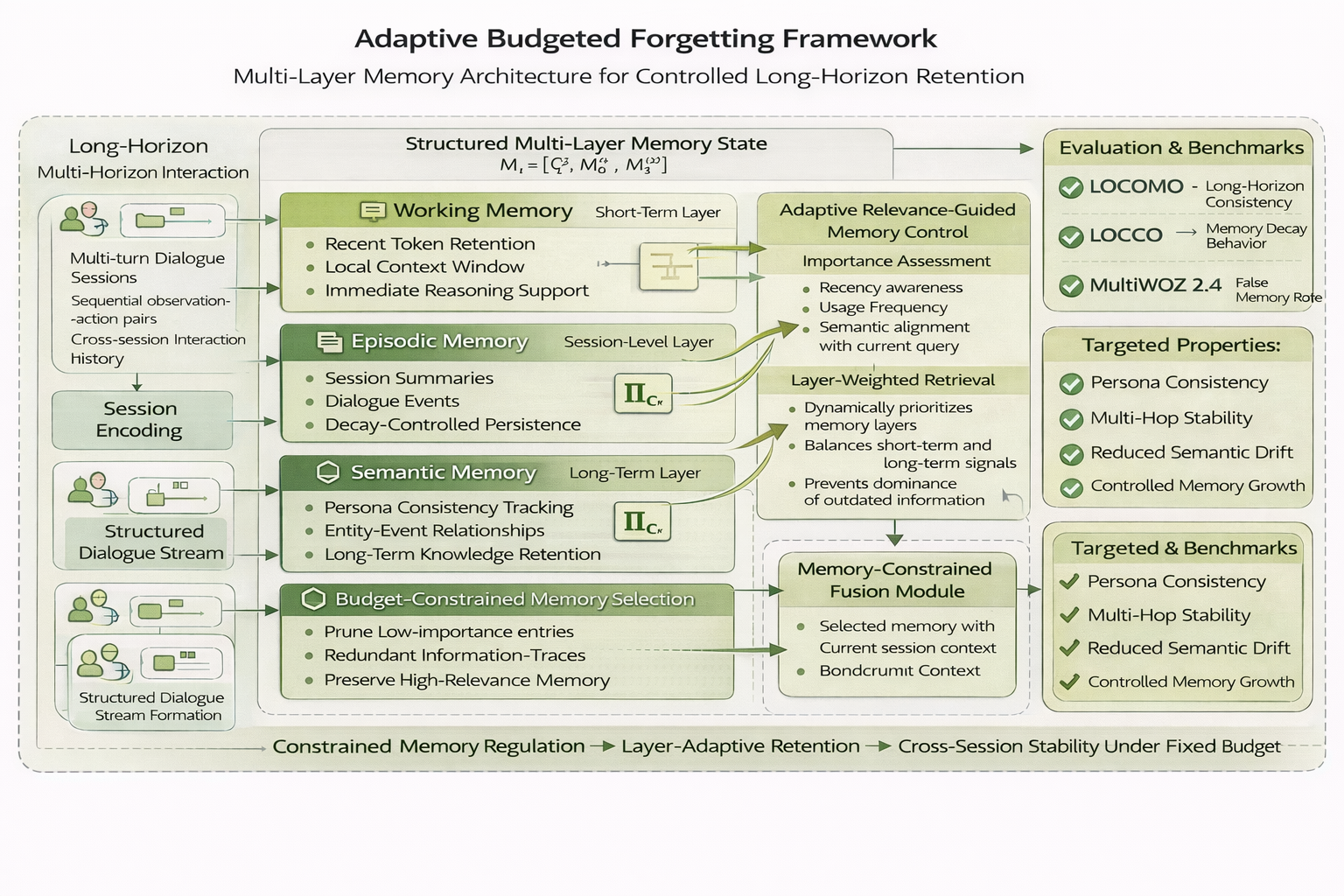

The central proposal is an adaptive budgeted forgetting architecture (Figure 1) integrating multi-layered memory with an explicit, context-aware retention controller. The agent’s state at time $t$ comprises a sequence of observations and actions, summarized in an evolving memory $M_t$. When $M_t$ exceeds the fixed budget $B$, each entry is scored for relevance along three axes: recency (exponential decay), access frequency, and semantic similarity to current queries. The controller then solves a constrained maximization problem, selecting the memory units that maximize cumulative importance under budget $B$.

Figure 1: Architecture of the Adaptive Budgeted Forgetting Framework, which combines multi-layer memory, dynamic relevance scoring, and optimization-based retention under fixed storage budgets.
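The scoring-and-selection step described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the `MemoryEntry` fields, the `decay` rate, the equal `weights`, the normalization of frequency by the maximum access count, and the assumption that every entry costs one unit of budget are all hypothetical choices made for the example. Under unit costs, keeping the top-$B$ entries by score solves the constrained maximization exactly; with variable entry sizes the problem becomes a knapsack and would need a different solver.

```python
import math
from dataclasses import dataclass


@dataclass
class MemoryEntry:
    text: str
    embedding: list[float]  # hypothetical precomputed embedding vector
    last_access: float      # time of last access, same units as `now`
    access_count: int       # how many times the entry has been retrieved


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na > 0 and nb > 0 else 0.0


def importance(entry, query, now, max_count, decay=0.1,
               weights=(1 / 3, 1 / 3, 1 / 3)):
    """Weighted sum of recency, frequency, and semantic similarity.

    The weights and decay rate are illustrative assumptions.
    """
    recency = math.exp(-decay * (now - entry.last_access))
    frequency = entry.access_count / max_count if max_count > 0 else 0.0
    similarity = max(0.0, cosine(entry.embedding, query))
    w_r, w_f, w_s = weights
    return w_r * recency + w_f * frequency + w_s * similarity


def forget(memory, query, now, budget, decay=0.1,
           weights=(1 / 3, 1 / 3, 1 / 3)):
    """Keep at most `budget` entries, chosen by importance, in original order."""
    if len(memory) <= budget:
        return list(memory)
    max_count = max(e.access_count for e in memory)
    ranked = sorted(
        memory,
        key=lambda e: importance(e, query, now, max_count, decay, weights),
        reverse=True,
    )
    keep = {id(e) for e in ranked[:budget]}
    return [e for e in memory if id(e) in keep]


# Toy memory: "A" is old but frequently used and on-topic; "B" is recent but off-topic.
memory = [
    MemoryEntry("A", [1.0, 0.0], last_access=0.0, access_count=5),
    MemoryEntry("B", [0.0, 1.0], last_access=10.0, access_count=1),
    MemoryEntry("C", [0.0, 1.0], last_access=0.0, access_count=0),
    MemoryEntry("D", [1.0, 0.0], last_access=9.0, access_count=2),
]
kept = forget(memory, query=[1.0, 0.0], now=10.0, budget=2)
print([e.text for e in kept])
```

In this toy run the old but relevant entry "A" is retained ahead of the most recent entry "B", which is the non-chronological behavior the framework is described as having.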

Recency scoring is driven by exponential decay, while the aggregate importance score balances recency, frequency, and semantic affinity (Equation 2). The deletion policy is non-chronological and context-dependent, which prevents abrupt performance collapse when the budget is reduced. The global objective penalizes memory overuse, embedding a tunable efficiency–accuracy tradeoff in overall learning.
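One way to write this formulation down is given here as a sketch; it is not a reproduction of the paper's equations. All notation beyond $t$, $M_t$, and $B$ is assumed for illustration: $t_i$ is the last access time of entry $i$, $f_i$ its normalized access frequency, $s_i(q_t)$ its similarity to the current query $q_t$, $c_i$ its storage cost, $x_i \in \{0,1\}$ its keep/delete decision, and $\lambda, \alpha, \beta, \gamma, \mu$ are tunable constants.

$$
\begin{aligned}
r_i(t) &= \exp\bigl(-\lambda\,(t - t_i)\bigr), \\
I_i(t) &= \alpha\, r_i(t) + \beta\, f_i(t) + \gamma\, s_i(q_t), \\
\max_{x \in \{0,1\}^{|M_t|}} \;& \sum_i x_i\, I_i(t) \quad \text{subject to} \quad \sum_i x_i\, c_i \le B .
\end{aligned}
$$

A penalized global objective of the kind described could then take the form $\mathcal{L} = \mathcal{L}_{\text{task}} + \mu \sum_i x_i\, c_i$, where a larger $\mu$ favors efficiency over accuracy.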

Empirical Evaluation

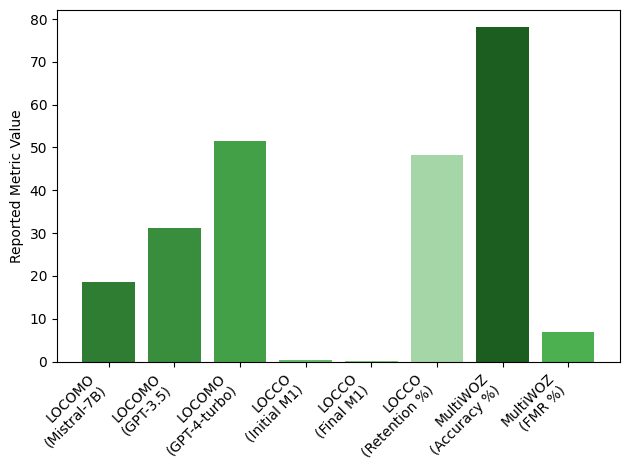

Benchmarks encompass LOCOMO, LOCCO, and MultiWOZ 2.4, chosen for their emphasis on long-horizon reasoning, memory retention, and dialogue quality. Baselines include leading LLMs and agent frameworks evaluated on multi-hop reasoning, entity tracking, and adversarial reasoning. Comparative results indicate:

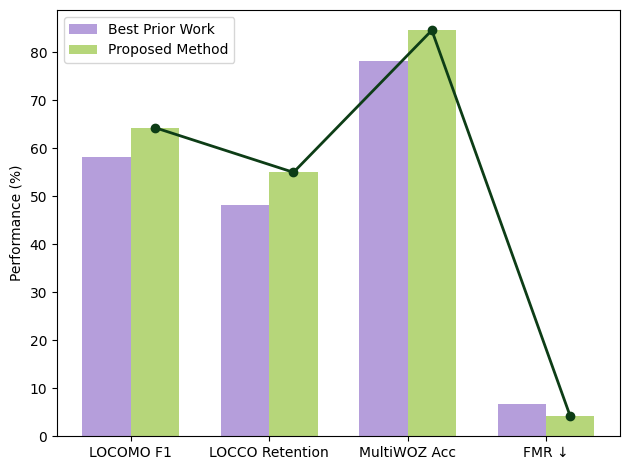

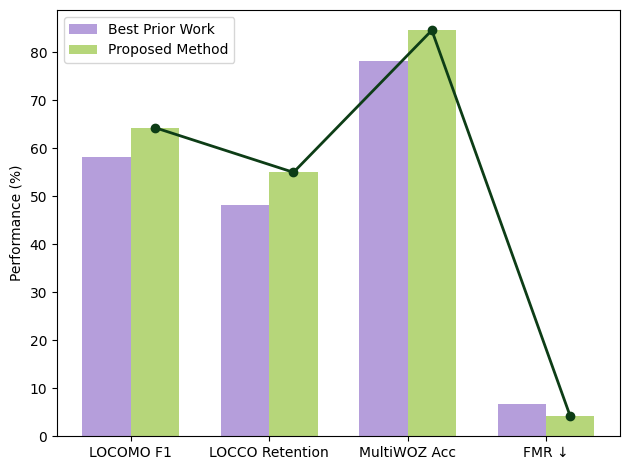

- LOCOMO: The framework outperforms the strongest reported baselines in F1, achieving stability with significantly less context expansion (see Figure 2).

- LOCCO: Memory retention rates remain robust under deletion, unlike static models where performance drops by over 85% across temporal splits.

- MultiWOZ 2.4: The method reduces FMR below strong baselines while preserving task accuracy at or above the 78.2% reference level.

Figure 3: Benchmark results showing long-horizon reasoning, decay, and FMR across LOCOMO, LOCCO, and MultiWOZ 2.4 under the proposed method.

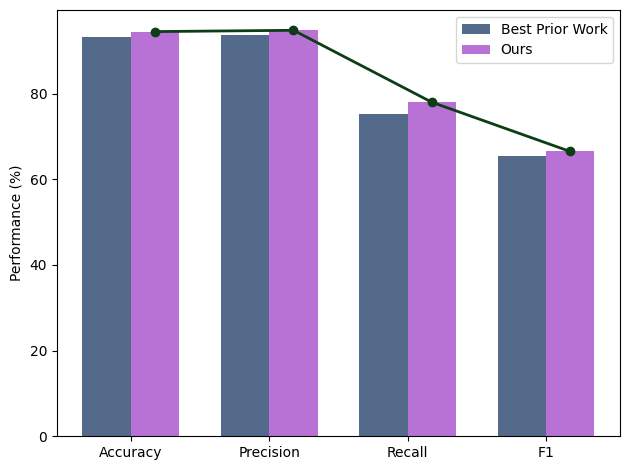

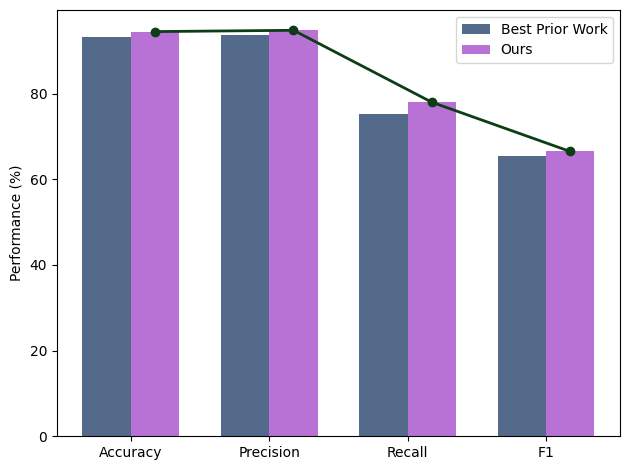

Figure 4: Performance trends on key metrics, with consistent improvement over previous paradigms in efficiency-constrained settings.

Statistical ablation confirms that reducing the budget from high to moderate levels does not cause abrupt losses in F1, accuracy, or retention, in contrast to aggressive, order-based deletion schemes. Relevance-scored forgetting maintains retrieval performance while suppressing false memory and contradiction. Stability analysis further demonstrates convergence: the offset term approaches zero as updates proceed, with importance-weighted retention maintaining operational consistency.

Figure 2: Side-by-side comparison of LOCOMO F1, LOCCO retention, MultiWOZ accuracy, and FMR for the proposed framework versus prior work.
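The contrast between order-based and relevance-scored deletion under a shrinking budget can be illustrated with a toy sweep. The sketch below is not the paper's ablation: the importance scores are made-up illustrative values, every entry is assumed to cost one unit of budget, and "retained importance" is only a stand-in for downstream metrics such as F1 or retention.

```python
def retained_fifo(scores, budget):
    """Order-based deletion: keep only the `budget` most recent entries."""
    return sum(scores[-budget:]) if budget > 0 else 0.0


def retained_scored(scores, budget):
    """Relevance-scored deletion: keep the `budget` highest-scoring entries."""
    return sum(sorted(scores, reverse=True)[:budget])


def sweep(scores, budgets):
    """Fraction of total importance retained by each policy at each budget."""
    total = sum(scores)
    return {
        b: (retained_fifo(scores, b) / total, retained_scored(scores, b) / total)
        for b in budgets
    }


# Hypothetical importance scores, oldest entry first (illustrative only).
scores = [0.9, 0.2, 0.8, 0.1, 0.3, 0.7, 0.2, 0.4]

for budget, (fifo, scored) in sweep(scores, [8, 6, 4, 2]).items():
    print(f"budget={budget}: fifo={fifo:.2f}, scored={scored:.2f}")
```

Because the scored policy always keeps the highest-value entries, its retained importance can never fall below that of the order-based policy at the same budget, and it degrades more gradually when important entries happen to be old.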

Implications and Prospects

The structured, optimization-driven forgetting policy substantiates that memory constraints—when relevance-aware—do not inherently trade off with reasoning quality. In practice, such architectures enable deployment of conversational and reasoning agents in real-world, resource-bounded settings (e.g., edge inference, multi-session interaction) without incurring the risks of context drift or exponential latency.

Theoretically, the framework bridges cognitive models (e.g., temporal decay, reinforcement learning) and systems desiderata (bounded storage, minimal latency), offering a replicable foundation for scalable agentic systems across both task completion and open-ended interaction. Future research directions include end-to-end differentiable controllers for relevance regulation, learning dynamic schedules for the framework's tunable parameters, and extending to cross-agent, multi-session knowledge bases.

Conclusion

This work formalizes and validates an adaptive, relevance-optimized forgetting strategy for autonomous AI agents conducting long-horizon dialogues and reasoning tasks (2604.02280). Empirical and analytical evidence converges on the finding that contextual performance can be decoupled from unbounded memory growth using constrained, multi-factor relevance selection. The proposed methodology reliably attenuates false memory artifacts, dampens performance decay, and maintains efficiency across a diverse set of conversational benchmarks. The findings provide a robust foundation for designing memory-stable, resource-aware agent systems at scale.