- The paper proposes a cognitive alignment framework that matches a user's cognitive demand with the AI's interaction mode to mitigate cognitive passivity.

- It cites evidence that AI assistance can boost short-term task performance yet is associated with a 17% drop in subsequent AI-independent performance.

- The study advocates for adaptive AI designs and metacognitive assessments to foster deep reasoning and transferable data literacy skills.

Disrupting Cognitive Passivity in AI-Assisted Data Literacy via Cognitive Alignment

Introduction

The proliferation of AI chatbots and LLM-based assistants in data analysis, visualization, and educational workflows is transforming how practitioners interact with data and acquire data literacy. The paper "Disrupting Cognitive Passivity: Rethinking AI-Assisted Data Literacy through Cognitive Alignment" (2604.02783) critically examines how the prevailing interaction design of AI assistants, particularly their default transmissive (answer-delivering) mode, affects the development of data literacy. It challenges the assumption that more reflective or scaffolded AI always reduces the risk of cognitive passivity. Instead, it introduces the cognitive alignment framework, which posits that effective human-AI interaction depends on the alignment between the user's cognitive demand and the AI's mode of interaction.

Cognitive Passivity in AI-Supported Data Practice

A growing body of research shows that AI's default mode, a one-off, comprehensive assistant response, can induce cognitive passivity, especially among novices. The phenomenon is characterized by reduced reasoning, lost learning opportunities, and increased dependence on AI suggestions. Empirical findings cited in the paper report that although LLMs like GPT-4 can improve immediate task performance (e.g., a 48% gain in data analysis practice), access to AI tools correlates with diminished long-term understanding, measured as a 17% decrease in subsequent AI-independent performance (2604.02783). Similar effects have been reported in design, visualization, and creative tasks, where AI assistance has also been linked to lower self-efficacy and reduced idea diversity.

Notably, AI advice tends to short-circuit the multi-level reasoning that genuine data literacy development requires. AI chatbots often jump to execution-level solutions, such as recommending a specific chart type, while bypassing earlier stages like domain problem specification and abstraction, which are essential for principled understanding and transferable skills.

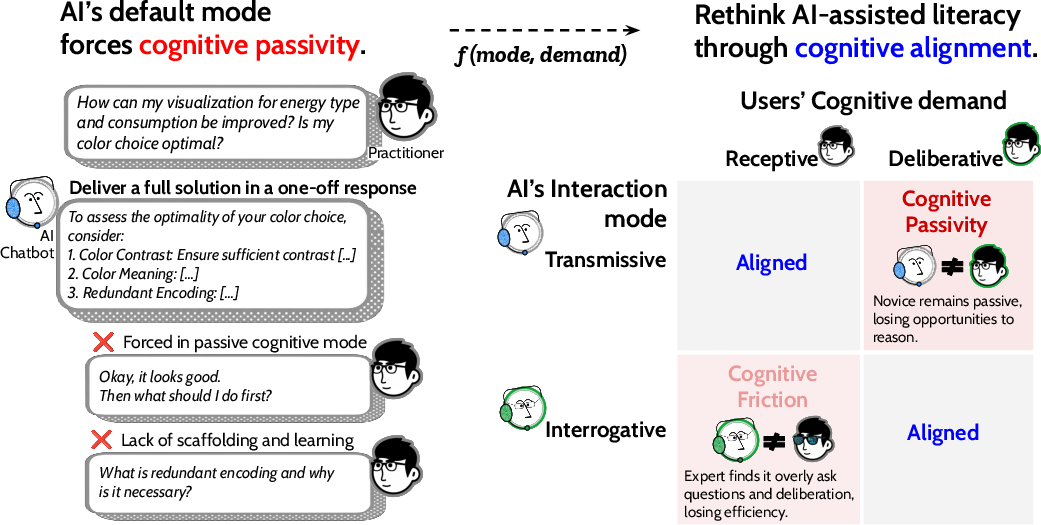

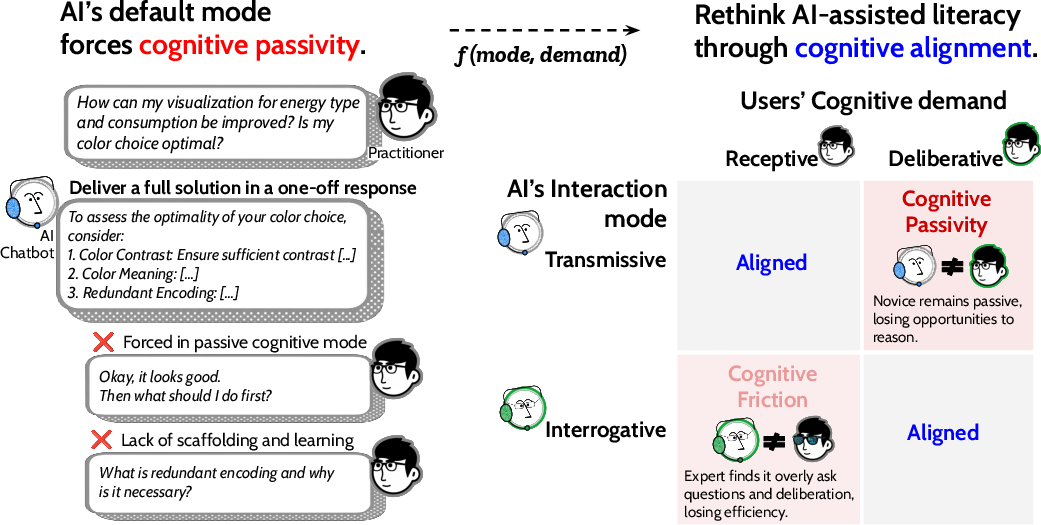

Figure 1: The cognitive alignment framework, mapping user cognitive demand and AI interaction mode, delineates conditions yielding alignment, cognitive passivity, or cognitive friction.

The Cognitive Alignment Framework

The paper proposes that mitigating cognitive passivity requires more than a universal shift toward deliberative or scaffolded AI interactions. Instead, it introduces a dual-axis framework:

- Cognitive Demand: The degree of reasoning the learning moment requires, bifurcated into receptive (requesting straightforward information or confirmation) and deliberative (seeking support for reasoning through trade-offs and higher-order problem-solving).

- Interaction Mode: The AI's mode of engagement, denoted as transmissive (provision of direct answers or explanations) or interrogative (eliciting reflection, scaffolding, or guided reasoning).

The central thesis is that alignment between cognitive demand and AI interaction mode determines the efficacy of AI-assisted data literacy development. Four archetypes of interaction emerge:

- Aligned (Receptive, Transmissive): Efficient information delivery satisfying straightforward needs without unnecessary scaffolding.

- Aligned (Deliberative, Interrogative): Scaffolded interaction fosters deep reasoning and advances literacy.

- Misaligned (Deliberative, Transmissive): Cognitive passivity arises as AI bypasses the reasoning process, undermining learning.

- Misaligned (Receptive, Interrogative): Cognitive friction, characterized by superfluous questioning, reduces efficiency and can cause disengagement.
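Because the framework crosses two binary axes, its four archetypes can be written down as a simple lookup table. The sketch below is illustrative only: the names `Demand`, `Mode`, `Outcome`, `OUTCOMES`, `classify`, and `aligned_mode` are hypothetical and not taken from the paper; they simply encode the 2×2 mapping described above.

```python
from enum import Enum


class Demand(Enum):
    """Cognitive demand of the learning moment (hypothetical encoding)."""
    RECEPTIVE = "receptive"        # straightforward information or confirmation
    DELIBERATIVE = "deliberative"  # reasoning through trade-offs


class Mode(Enum):
    """AI interaction mode (hypothetical encoding)."""
    TRANSMISSIVE = "transmissive"    # direct answers or explanations
    INTERROGATIVE = "interrogative"  # questions, scaffolding, guided reasoning


class Outcome(Enum):
    ALIGNED = "alignment"
    PASSIVITY = "cognitive passivity"
    FRICTION = "cognitive friction"


# The four archetypes as a lookup table over (demand, mode) pairs.
OUTCOMES = {
    (Demand.RECEPTIVE, Mode.TRANSMISSIVE): Outcome.ALIGNED,
    (Demand.DELIBERATIVE, Mode.INTERROGATIVE): Outcome.ALIGNED,
    (Demand.DELIBERATIVE, Mode.TRANSMISSIVE): Outcome.PASSIVITY,
    (Demand.RECEPTIVE, Mode.INTERROGATIVE): Outcome.FRICTION,
}


def classify(demand: Demand, mode: Mode) -> Outcome:
    """Return the framework outcome for one demand/mode pairing."""
    return OUTCOMES[(demand, mode)]


def aligned_mode(demand: Demand) -> Mode:
    """Return the interaction mode that aligns with a given demand."""
    return Mode.TRANSMISSIVE if demand is Demand.RECEPTIVE else Mode.INTERROGATIVE


if __name__ == "__main__":
    for (demand, mode), outcome in OUTCOMES.items():
        print(f"{demand.value:>12} + {mode.value:<13} -> {outcome.value}")
```

The table makes the asymmetry explicit: each demand has exactly one aligned mode, and the two misalignments fail in opposite directions (passivity versus friction).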

Crucially, cognitive demand is often opaque, especially to novices, who may not recognize when a question calls for deliberation. This produces a "self-concealing" form of passivity: the AI appears helpful while in fact narrowing intellectual engagement.

Implications and Design Challenges

The articulation of cognitive alignment reframes AI-assisted data literacy as an interaction design challenge rather than a content delivery problem. Several implications follow:

- Adaptive AI: Designing AI systems that can infer users' cognitive demand (from interaction history, task stage, or question specificity) and adapt their mode accordingly becomes central. This adaptation may require defaulting to interrogative, deliberation-supporting interactions, especially where learners' metacognitive awareness is insufficient to recognize when deeper engagement would be beneficial.

- Measuring Literacy by Reasoning, Not Outputs: The paper advocates for assessing literacy gains based on reasoning processes (e.g., the ability to justify choices and transfer principles), not merely output quality. This shift foregrounds metacognitive skills and the importance of recognizing when AI-generated advice is contextually appropriate.

- Task Phase and Expertise Sensitivity: Not all contexts benefit from deliberative AI. Experts and users in confirmatory phases often require efficiency rather than additional scaffolding, indicating the necessity of phase- and expertise-sensitive AI adaptation.

- Multi-level Reasoning Engagement: There is a design imperative for AI to balance delivering execution-level advice with engaging high-level problem structuring and reasoning, without overwhelming or frustrating the user.
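The adaptive policy described in these implications can be sketched as a small rule-based selector. This is a hypothetical illustration, not the paper's method: the `Context` fields, the keyword cue lists, and the `choose_mode` rules are all assumptions standing in for the demand inference (question specificity, task phase, expertise) that a real system would need to learn and validate. The rules encode three points from the text: experts in confirmatory phases get efficient answers, clearly receptive questions get direct answers to avoid friction, and anything deliberative or ambiguous defaults to an interrogative mode.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    TRANSMISSIVE = "transmissive"
    INTERROGATIVE = "interrogative"


@dataclass
class Context:
    question: str
    task_phase: str  # hypothetical labels: "exploratory" or "confirmatory"
    is_expert: bool  # hypothetical expertise flag, e.g. from interaction history


# Hypothetical lexical cues; a real system would need a validated demand model.
RECEPTIVE_CUES = ("what is", "how do i", "syntax", "confirm", "which function")
DELIBERATIVE_CUES = ("should i", "why", "best way", "trade-off", "compare")


def choose_mode(ctx: Context) -> Mode:
    """Pick an interaction mode from crude, assumed demand cues."""
    q = ctx.question.lower()
    receptive = any(cue in q for cue in RECEPTIVE_CUES)
    deliberative = any(cue in q for cue in DELIBERATIVE_CUES)

    # Experts in a confirmatory phase usually need efficiency, not scaffolding.
    if ctx.is_expert and ctx.task_phase == "confirmatory":
        return Mode.TRANSMISSIVE
    # A clearly receptive question: answer directly to avoid cognitive friction.
    if receptive and not deliberative:
        return Mode.TRANSMISSIVE
    # Deliberative or ambiguous demand: default to interrogative, since novices
    # may not recognize when deeper engagement would help.
    return Mode.INTERROGATIVE


if __name__ == "__main__":
    novice = Context("Which chart should I use to compare sales across regions?",
                     "exploratory", False)
    print(choose_mode(novice).value)  # interrogative
    lookup = Context("What is the syntax for a bar chart in matplotlib?",
                     "exploratory", False)
    print(choose_mode(lookup).value)  # transmissive
```

Defaulting to the interrogative branch when cues are missing or mixed reflects the paper's concern with self-concealing passivity; the cost is occasional friction for users whose needs were actually receptive.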

Open Questions and Future Research Directions

The paper identifies outstanding research questions that highlight the complexity and interdisciplinarity of advancing AI-assisted data literacy:

- Under what conditions does AI assistance cross the threshold from facilitating learning to fostering dependency?

- Can AI reliably infer and adapt to real-time cognitive demand without explicit user input?

- How do manifestations of cognitive passivity and friction vary across domains (e.g., statistics, visualization, AI-powered analysis)?

These lines of inquiry suggest future developments in adaptive, context-aware, and metacognitively informed AI tools. Modeling user cognitive demand, integrating real-time behavioral cues, and developing interaction paradigms that balance efficiency and engagement are key technical and HCI challenges.

Conclusion

This work reframes the challenge of fostering data literacy in AI-augmented environments as one of cognitive alignment: the precise, context-sensitive matching of AI interaction mode to user cognitive demand. By achieving this alignment, AI tools can avoid reinforcing cognitive passivity or causing cognitive friction, and so more effectively support both novice and expert practitioners. The proposed framework invites future AI system designs to be adaptive and metacognitively aware, and prompts the research community to reconceptualize data literacy as an emergent property of dynamic human-AI interaction (2604.02783).