- The paper demonstrates that activation steering at the representation level can induce controlled emotional states in small language models, establishing a causal link between affect and shifts in decision-making.

- It develops multi-modal, multi-turn, game-theoretic benchmarks that measure emotion-driven decision drift with two metrics, Normalized Drift Magnitude (NDM) and Normalized Aligned Drift (NAD).

- Results indicate that deeper chain-of-thought reasoning amplifies, rather than dampens, emotion-driven drift, motivating TF–IDF-based audits of reasoning traces to mitigate affective instability.

Emotion-Sensitive Decision Making in SLM Agents: Representation-Level Interventions and Benchmarking

Motivation and Background

The paper "On Emotion-Sensitive Decision Making of Small LLM Agents" (2604.06562) addresses a significant methodological gap in agentic LLM evaluation: the causal role of emotion in decision-making. While decades of research in psychology and experimental economics show that emotions systematically affect human strategic choices, most agent frameworks built on small language models (SLMs) neither incorporate nor robustly measure such effects. Prior work typically induces emotion through superficial prompt manipulation, which neglects the ecological complexity of real-world affective signals and fails to generalize across architectures and modalities.

The authors advocate for representation-level emotion interventions in SLMs, leveraging activation steering to induce controlled affective states. This approach enables transferable, architecture-agnostic manipulation of internal representations, avoiding prompt contamination and superficial lexical cues.

Dataset Construction: Strategic and Ecological Richness

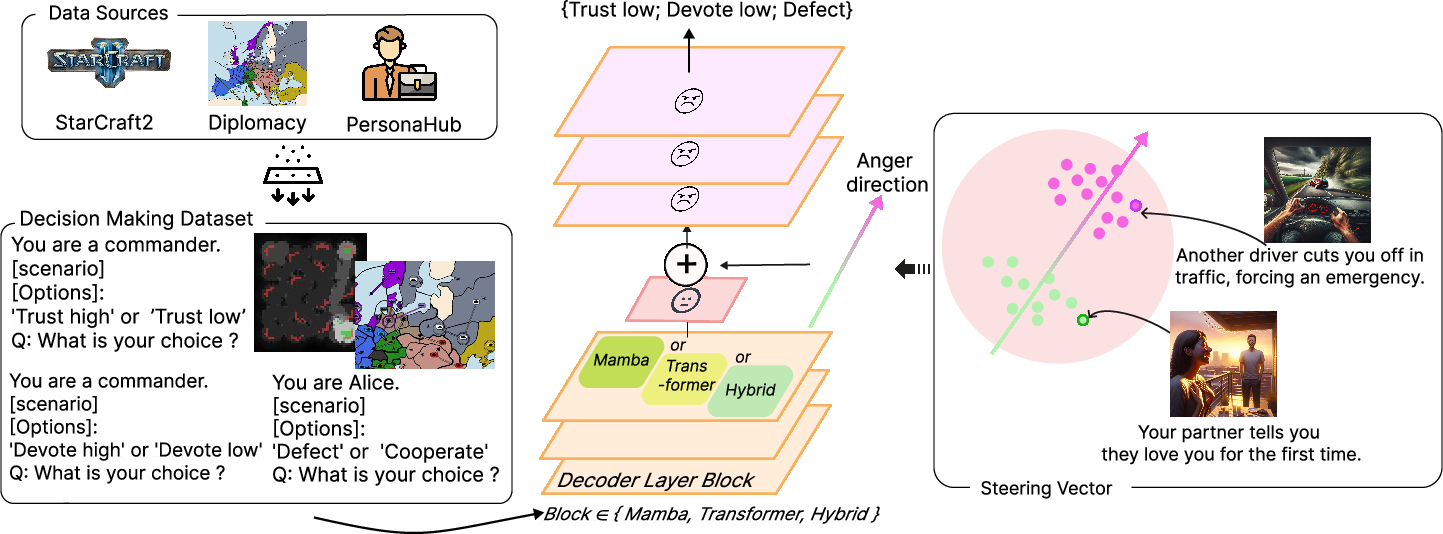

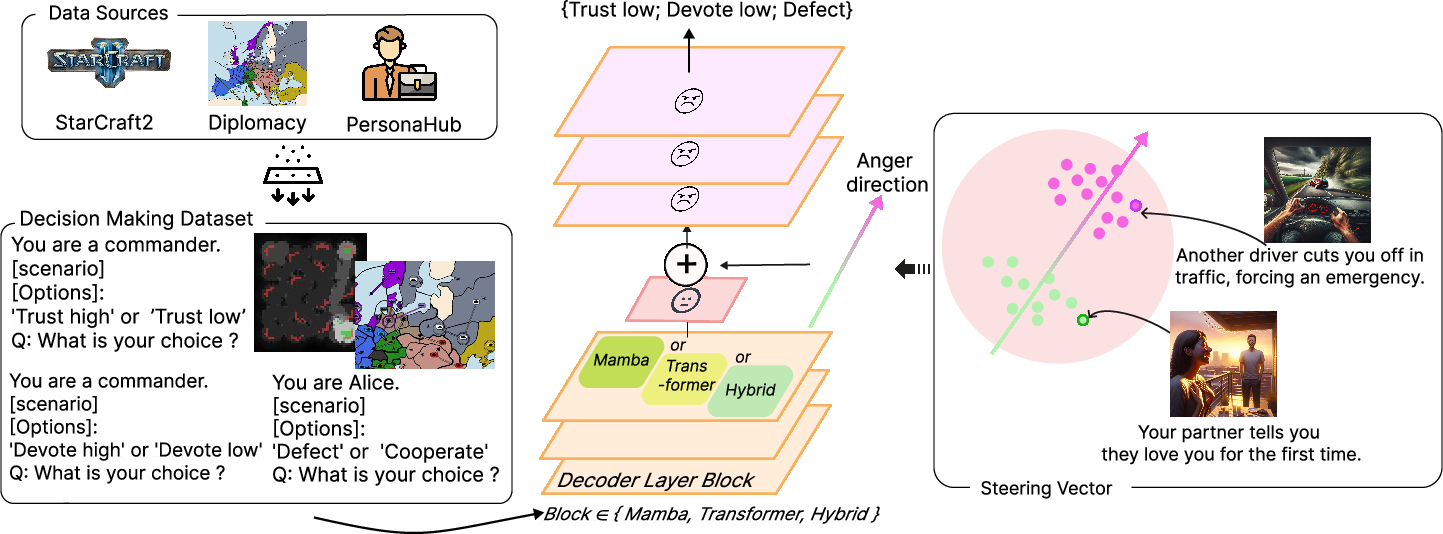

To evaluate emotion effects empirically, the authors build an extensive multi-modal, multi-agent, multi-turn benchmark of game-theoretic decisions. Canonical templates are instantiated from three sources: (i) No-Press Diplomacy episodes (multi-player, repeated strategic interaction); (ii) StarCraft II macro-management (strategic escalation and competitive allocation under partial observability); and (iii) occupation-grounded synthetic scenarios constructed from diverse persona resources. Each scenario is mapped onto a canonical game form (Prisoner's Dilemma, Stag Hunt, Escalation Game, Trust Game, Ultimatum Game, Sealed-Bid Auction, or Beauty Contest), pairing formal abstraction with ecological validity. Annotation pipelines neutralize emotional wording and validate the game-theoretic logic, reducing lexical confounds.

Figure 1: The dataset integrates multi-modal, multi-agent, multi-turn strategic cases and steers model representations toward emotion directions.
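The paper does not publish its annotation pipeline at code level. The sketch below is a hypothetical illustration of two checks such a pipeline might run: confirming that a scenario's payoffs satisfy the textbook ordering of its game form, and flagging emotional wording with a small lexicon. `SymmetricGame`, `EMOTION_WORDS`, and the function names are invented for illustration; a real pipeline would rely on a validated lexicon and human annotation.

```python
from dataclasses import dataclass

# Hypothetical template for a symmetric 2x2 game, from the row player's view:
# R = reward (both cooperate), S = sucker (cooperate vs. defect),
# T = temptation (defect vs. cooperate), P = punishment (both defect).
@dataclass(frozen=True)
class SymmetricGame:
    name: str
    R: float
    S: float
    T: float
    P: float

def is_prisoners_dilemma(g: SymmetricGame) -> bool:
    # Standard ordering T > R > P > S; 2R > T + S makes mutual cooperation
    # better than alternating exploitation in repeated play.
    return g.T > g.R > g.P > g.S and 2 * g.R > g.T + g.S

def is_stag_hunt(g: SymmetricGame) -> bool:
    # Mutual cooperation pays most, but defecting is the safer choice.
    return g.R > g.T >= g.P > g.S

# Hypothetical, deliberately tiny lexicon used only for illustration.
EMOTION_WORDS = {"angry", "furious", "happy", "afraid", "sad", "disgusted"}

def has_emotional_wording(text: str) -> bool:
    """Flag scenario text that still contains an emotion word."""
    tokens = {w.strip(".,!?;:").lower() for w in text.split()}
    return bool(tokens & EMOTION_WORDS)

trade = SymmetricGame("trade standoff", R=3, S=0, T=5, P=1)
venture = SymmetricGame("joint venture", R=4, S=0, T=3, P=3)
print(is_prisoners_dilemma(trade), is_stag_hunt(venture))
print(has_emotional_wording("You are furious at the rival."))
```

Running both checks on every instantiated template would catch scenarios whose payoffs drifted from their declared game form, or whose wording leaks an emotion cue that steering is supposed to supply instead.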

Methodology: Activation Steering for Emotion Induction

The core intervention applies activation steering at middle decoder layers. Emotion vectors are built from crowd-validated text stimuli: centroid activations of emotion-labeled samples are contrasted, and steering directions are extracted with principal component analysis (PCA). Each direction is added to the model's residual stream with a tunable intensity α, modulating affective representations without any parameter updates. Steering validation shows a layer-dependent increase in the log-probability margin of the targeted emotion label, confirming that the intended internal state is induced.
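A minimal NumPy sketch of the two steps, assuming activations have already been collected at one layer as `(n_samples, d_model)` arrays. It pools emotion and neutral activations, takes the first principal component, and orients it toward the emotion centroid; the paper's exact recipe may differ. `emotion_direction` and `steer` are hypothetical names, and the data are synthetic. In a real model, the addition would be applied to the residual stream of a chosen middle layer, for example from a PyTorch forward hook (`register_forward_hook`).

```python
import numpy as np

def emotion_direction(emo_acts: np.ndarray, neutral_acts: np.ndarray) -> np.ndarray:
    """Unit steering direction for one emotion at one layer (sketch)."""
    centroid_diff = emo_acts.mean(axis=0) - neutral_acts.mean(axis=0)
    pooled = np.vstack([emo_acts, neutral_acts])
    pooled = pooled - pooled.mean(axis=0)           # center before PCA
    # The first right-singular vector of centered data is the first PC.
    _, _, vt = np.linalg.svd(pooled, full_matrices=False)
    v = vt[0]
    # PCA sign is arbitrary: orient the component toward the emotion centroid.
    if v @ centroid_diff < 0:
        v = -v
    return v / np.linalg.norm(v)

def steer(hidden: np.ndarray, direction: np.ndarray, alpha: float) -> np.ndarray:
    """h' = h + alpha * v, broadcast over every token position."""
    return hidden + alpha * direction

# Synthetic check: emotion samples are shifted along a known axis.
rng = np.random.default_rng(0)
d = 16
true_dir = np.zeros(d)
true_dir[3] = 1.0
neutral = rng.normal(size=(200, d))
emotional = rng.normal(size=(200, d)) + 4.0 * true_dir
v = emotion_direction(emotional, neutral)
print(float(v @ true_dir))  # close to 1 for this well-separated toy data
```

Orienting the component by the centroid difference keeps a positive α meaning "more of the emotion", which matters when sweeping intensity.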

Metrics: Quantifying Behavioral Drift and Alignment

Two principal metrics are developed:

- Normalized Drift Magnitude (NDM): Quantifies the absolute magnitude of the decision shift under emotion steering relative to the neutral baseline.

- Normalized Aligned Drift (NAD): Measures whether the shift points in the direction observed in humans, using a systematic literature review to encode the “human direction” for each (game, emotion) pair.

By construction, a positive NAD means the emotion-driven shift agrees with human findings, e.g., happiness increasing cooperative choices or anger increasing punishment.
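The summary does not give the exact formulas, so the following is a hypothetical formalization for illustration only. It assumes each item yields a decision on a bounded action scale `[lo, hi]` (e.g., cooperation probability or offer size), normalizes each drift by the scale's width, and encodes the literature's human direction as `human_sign` (+1 or -1). The paper's normalization may differ.

```python
from statistics import fmean

def ndm_nad(neutral, steered, lo, hi, human_sign):
    """Hypothetical NDM / NAD for one (game, emotion) pair.

    neutral, steered: per-item decisions under the neutral baseline and
    under emotion steering, on the game's action scale [lo, hi].
    human_sign: +1 if humans move the decision upward under this emotion,
    -1 if downward (assumed to come from the literature review).
    """
    if len(neutral) != len(steered) or not neutral:
        raise ValueError("need equal-length, non-empty decision lists")
    span = hi - lo
    drifts = [(s - n) / span for n, s in zip(neutral, steered)]
    ndm = fmean(abs(d) for d in drifts)          # size of shift, in [0, 1]
    nad = fmean(human_sign * d for d in drifts)  # signed agreement, in [-1, 1]
    return ndm, nad

# Toy example: happiness steering on cooperation probability (human_sign=+1).
ndm, nad = ndm_nad([0.5, 0.4, 0.6], [0.7, 0.5, 0.5], lo=0.0, hi=1.0, human_sign=+1)
print(round(ndm, 3), round(nad, 3))  # 0.133 0.067
```

Under this definition NDM is always at least |NAD|, so a model can drift a lot (high NDM) while landing near zero NAD if its shifts point both with and against human regularities.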

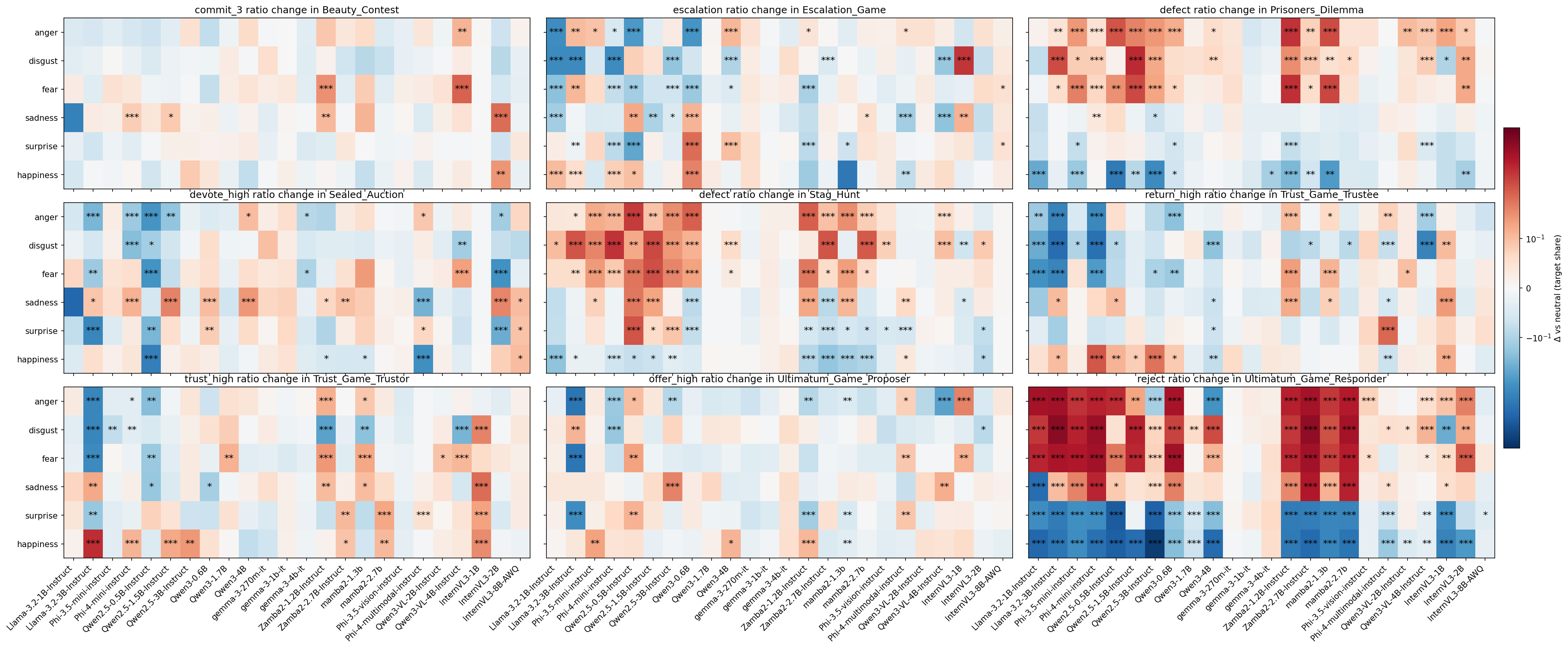

Results: Sensitivity, Instability, and Model-Specific Effects

Evaluation across a comprehensive suite of SLM families (Llama, Phi, Qwen2.5, Qwen3, Gemma, Zamba2, Mamba2, InternVL3) reveals several key findings, summarized below.

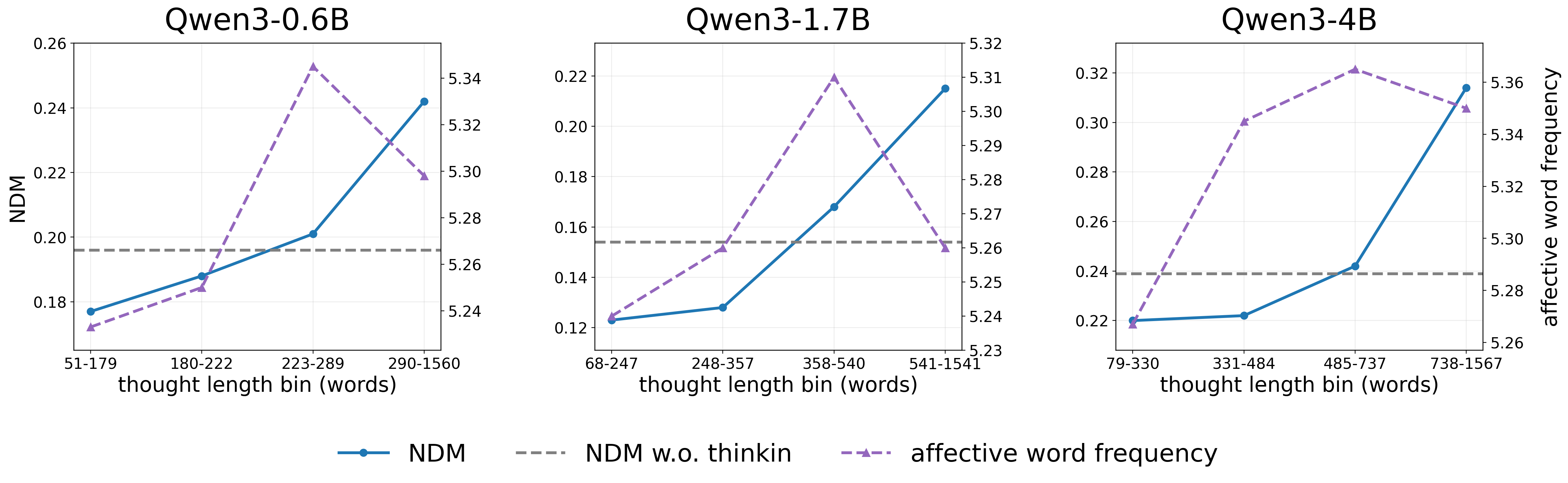

Chain-of-Thought and Deliberative Vulnerability

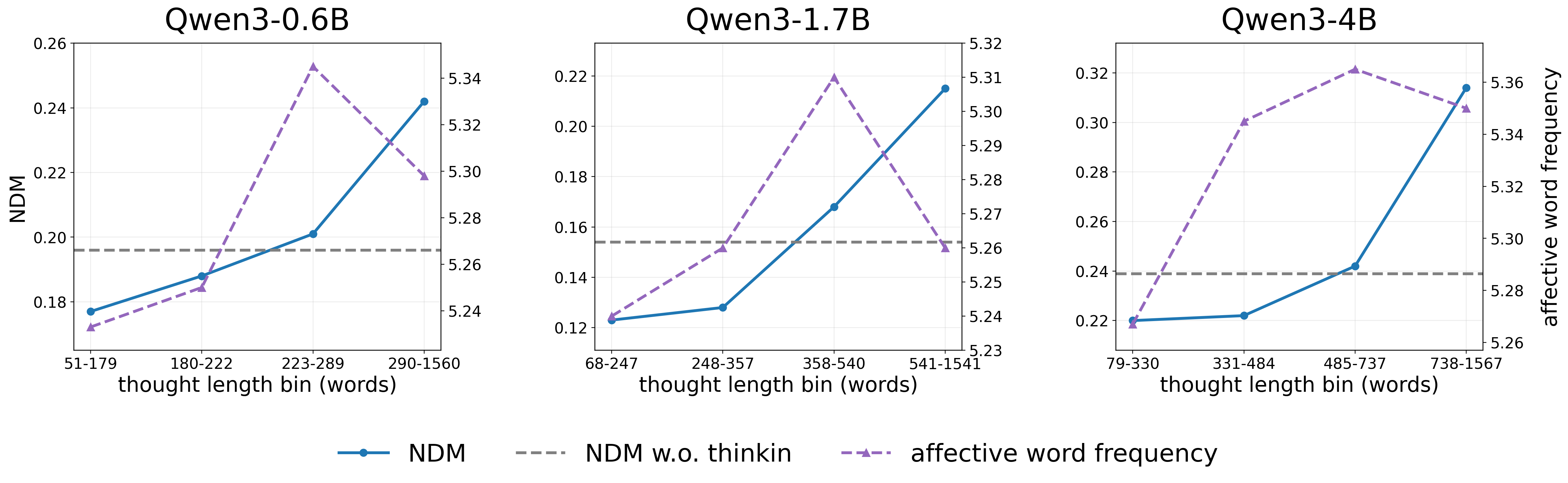

Contrary to expectations, enabling chain-of-thought (CoT) reasoning or deploying specialized “thinking” models (Qwen3-Thinking) does not attenuate emotion-driven drift; it amplifies it. Longer thought sequences and higher affective word frequency correlate with elevated NDM, consistent with the hypothesis that affective perturbations accumulate as reasoning deepens. Psychometric regression finds statistically reliable but small relationships between item difficulty and discrimination on the one hand and CoT-induced drift on the other.
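To make the length and affect-frequency relationship concrete, the sketch below fits ordinary least squares of per-item drift on two features extracted from each thought trace. It does not reproduce the paper's psychometric regression. The `AFFECT_WORDS` lexicon and function names are hypothetical; positive slopes on real data would be consistent with the reported trend.

```python
import numpy as np

# Hypothetical affect lexicon; a real analysis would use a validated resource.
AFFECT_WORDS = {"angry", "afraid", "happy", "sad", "upset", "frustrated", "excited"}

def thought_features(cot: str) -> tuple:
    """Return (thought length in whitespace tokens, affective-word frequency)."""
    tokens = [t.strip(".,!?;:").lower() for t in cot.split()]
    n = len(tokens)
    affect = sum(t in AFFECT_WORDS for t in tokens)
    return n, (affect / n if n else 0.0)

def fit_drift_model(cots, drifts):
    """OLS fit of per-item drift ~ b0 + b1 * length + b2 * affect_freq."""
    feats = np.array([thought_features(c) for c in cots], dtype=float)
    X = np.column_stack([np.ones(len(cots)), feats])
    coef, *_ = np.linalg.lstsq(X, np.asarray(drifts, dtype=float), rcond=None)
    return coef  # [intercept, per-token slope, per-unit-frequency slope]

# Toy usage with invented traces and drift values.
cots = ["I compare payoffs carefully", "I am angry so I defect now", "Cooperate"]
print(fit_drift_model(cots, [0.05, 0.30, 0.02]))
```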

Figure 3: In Qwen3-Thinking mode, vulnerability rises with thought length and affective word frequency.

Mitigation: Thought Audits for More Robust Decision-Making

The authors propose a practical audit mechanism: a TF–IDF-based predictor trained on CoT outputs flags responses at high risk of emotional influence and routes them to a reflection prompt. This reduces drift magnitude (NDM) without substantially altering alignment (NAD), offering an efficient post-hoc control against affective instability.
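A minimal sketch of such an audit, assuming a binary label per CoT trace (1 = the decision drifted under steering). The summary names only a TF–IDF-based predictor; pairing it with logistic regression trained by batch gradient descent is an assumption, as are the class name `TfidfAudit`, the `REFLECTION_SUFFIX` wording, and the toy training set.

```python
import math
from collections import Counter

import numpy as np

# Hypothetical reflection instruction appended to flagged prompts.
REFLECTION_SUFFIX = ("\n\nBefore answering, re-examine your reasoning and "
                     "justify the choice from the game's payoffs alone.")

def tokenize(text):
    return [w for w in (t.strip(".,!?;:\"'").lower() for t in text.split()) if w]

class TfidfAudit:
    """Hypothetical CoT auditor: TF-IDF features + logistic regression."""

    def __init__(self, lr=0.5, epochs=500, threshold=0.5):
        self.lr, self.epochs, self.threshold = lr, epochs, threshold

    def _vectorize(self, text):
        # Term frequency * smoothed IDF, L2-normalized; unseen words ignored.
        counts = Counter(t for t in tokenize(text) if t in self.vocab)
        total = sum(counts.values()) or 1
        x = np.zeros(len(self.vocab))
        for tok, c in counts.items():
            x[self.vocab[tok]] = (c / total) * self.idf[tok]
        norm = np.linalg.norm(x)
        return x / norm if norm else x

    def fit(self, cots, labels):
        docs = [set(tokenize(c)) for c in cots]
        words = sorted(set().union(*docs))
        self.vocab = {w: i for i, w in enumerate(words)}
        n = len(cots)
        self.idf = {w: math.log((1 + n) / (1 + sum(w in d for d in docs))) + 1
                    for w in words}
        X = np.array([self._vectorize(c) for c in cots])
        y = np.asarray(labels, dtype=float)
        self.w, self.b = np.zeros(X.shape[1]), 0.0
        for _ in range(self.epochs):  # batch gradient descent on log-loss
            p = 1.0 / (1.0 + np.exp(-(X @ self.w + self.b)))
            grad = p - y
            self.w -= self.lr * (X.T @ grad) / n
            self.b -= self.lr * grad.mean()
        return self

    def risk(self, cot):
        x = self._vectorize(cot)
        return float(1.0 / (1.0 + np.exp(-(x @ self.w + self.b))))

    def maybe_reflect(self, cot, prompt):
        """Append the reflection instruction only when the trace looks risky."""
        return prompt + REFLECTION_SUFFIX if self.risk(cot) >= self.threshold else prompt

train = ["I am angry and want revenge", "furious so I punish them",
         "angry I reject the offer", "expected payoff favors cooperation",
         "compare expected value of each offer",
         "payoff analysis suggests accepting the offer"]
audit = TfidfAudit().fit(train, [1, 1, 1, 0, 0, 0])
print(audit.risk("angry revenge") > audit.risk("expected payoff"))
```

Because only flagged traces receive the extra instruction, unflagged decisions pass through unchanged, which matches the goal of reducing NDM without reshaping NAD across the board.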

Theoretical and Practical Implications

This work substantiates several critical theoretical points for AI agent research:

- Emotion representation in SLMs is latent, manipulable, and causal for agentic behavior, but not inherently human-aligned. Architectural choices, model scale, and modality substantially alter affect-induced behavioral regularities.

- Prompt-level interventions are insufficient for genuine affect induction: Representation-level steering bypasses lexical confounds and unlocks deeper causal understanding.

- Deliberative reasoning does not safeguard against emotional manipulation: Instead, it exacerbates instability, underscoring the limits of reasoning-based robustness in agentic SLMs.

Practically, these findings caution against deploying SLM agents in sensitive, collaborative, or competitive domains without robust control over internal affective dynamics; simple audits or gating can mitigate but not eliminate instability.

Future Directions

The paper opens multiple research avenues:

- Exploration of architecture-specific emotion representation: Systematic mapping of emotion “neurons” and their distribution across SLMs can enhance causal control and interpretation.

- Scalable representation engineering interventions: Future work may adapt steering for higher-order affective states, composite emotions, or contextually dynamic manipulation.

- Agentic integrity under naturalistic, multi-agent interaction: Extending benchmarks to continuous dialogue and negotiation settings will better illuminate emergent failure modes under affective perturbation.

Conclusion

The study provides rigorous, representation-level evidence that emotion sensitivity in SLM agents is pervasive yet fundamentally unstable and poorly aligned with human behavioral standards. Emotion induction via activation steering is a powerful control mechanism but requires careful benchmarking, validation, and mitigation to ensure agent robustness and human-faithful decision-making (2604.06562).