DualPath: Breaking the Storage Bandwidth Bottleneck in Agentic LLM Inference

This presentation explores how DualPath revolutionizes large language model inference for agentic applications by eliminating the storage bandwidth bottleneck. As AI agents engage in extended multi-turn conversations with massive context reuse, traditional architectures saturate prefill engine storage interfaces while decode engines sit idle. DualPath introduces a dual-path loading mechanism that aggregates bandwidth across all engines, combines workload-aware scheduling with traffic isolation, and delivers up to 1.87x throughput improvement in production deployments. The system demonstrates that the KV-Cache loading bottleneck is not fundamental but an artifact of suboptimal resource utilization.Script

Modern AI agents don't just answer questions anymore. They engage in hundreds of conversation turns, accumulating massive contexts and reusing cached data at rates exceeding 95 percent. This creates a new kind of bottleneck that has nothing to do with compute power and everything to do with how we move data.

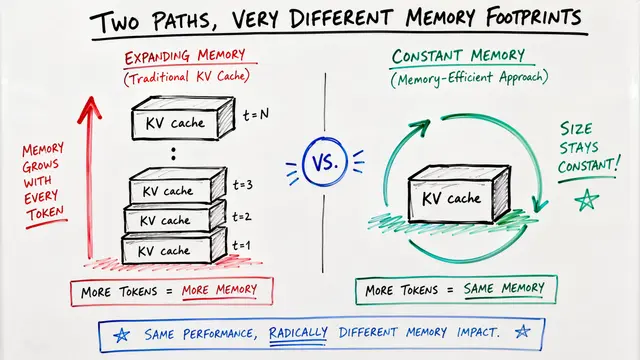

Traditional inference architectures assign all KV-Cache storage reads to prefill engines. When agents run hundreds of turns with extreme cache reuse, those prefill storage interfaces become choke points. Meanwhile, decode engines possess substantial unused I/O capacity. The hardware trend makes this worse: NVIDIA GPUs gained compute power an order of magnitude faster than bandwidth improved, creating a fundamental mismatch.

DualPath rethinks this architecture from the ground up.

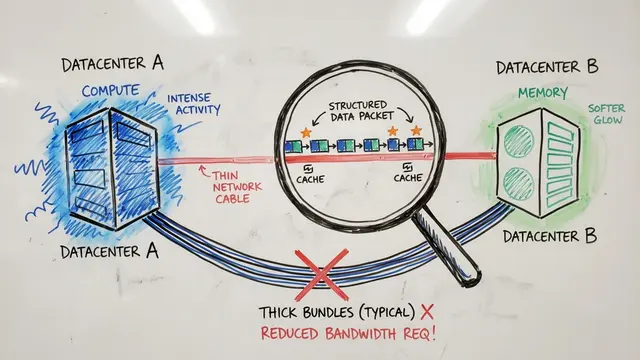

The core insight is elegantly simple. Instead of forcing all cache loads through prefill engines, DualPath creates two paths. The classic path loads from storage into prefill engines directly. The new decode path loads cache into decode engines, then transmits it to prefill engines using RDMA over the high-bandwidth compute network. This aggregates every storage interface in the system, breaking the unilateral bottleneck.

The prefill engine read path orchestrates multiple stages of data movement. Cache flows from storage into DRAM buffers, then incrementally transfers to HBM where computation occurs. The system carefully overlaps these transfers with computation to hide latency. Each stage is designed to exploit available bandwidth without creating contention, using asynchronous layerwise transfers that keep the pipeline full.

Making dual-path work requires two forms of intelligence. The scheduler operates at multiple levels, selecting paths based on current load and managing compute quotas to prevent stragglers. Simultaneously, the traffic manager ensures KV-Cache transfers never interfere with latency-critical model operations like all-to-all collectives. Virtual lanes relegate cache traffic to lower priority channels that only use idle bandwidth.

The numbers validate the approach decisively.

DualPath achieves near-oracle performance across real agentic workloads. On DeepSeek version 3 with 660 billion parameters, throughput nearly doubles compared to production baselines. Online serving sees similar gains, with agent completion rates doubling while tail latencies remain stable even at saturation thresholds where baseline systems collapse from queuing. The system scales linearly to deployments managing 48,000 simultaneous agents.

This scaling behavior reveals something fundamental. The chart shows prompt tokens per second and end-to-end metrics across massive deployments. What's remarkable is the flatness of the curves. As agent concurrency grows from thousands to tens of thousands, per-agent completion times hold steady. The system is limited only by total storage capacity, not by architectural bottlenecks. Load balance metrics confirm the scheduler keeps maximum to average traffic ratios under 1.18 across all network interfaces.

DualPath proves the storage bottleneck is not inherent to agentic inference but a consequence of rigid role assignment and suboptimal bandwidth utilization. Architectures that globally pool I/O resources can approach theoretical throughput limits. This has immediate implications for reinforcement learning rollouts and open-ended agent operations, which demand exactly this kind of sustained multi-turn capacity. The era of agents hitting infrastructure walls may be ending.

When systems finally use all the bandwidth they already have, agents stop waiting and start working. To explore more research like this and create your own video presentations, visit EmergentMind.com.