- The paper introduces a novel latent-space EBM framework that jointly models data and attributes for controllable image generation.

- It samples by solving an ordinary differential equation (ODE), which is efficient and robust to hyperparameters, and it supports zero-shot generation of novel attribute combinations with significant improvements over baselines.

- Experimental results on CIFAR-10 and FFHQ demonstrate high image quality, precise sequential edits, and superior conditional sampling performance.

Controllable and Compositional Generation with Latent-Space Energy-Based Models

Introduction

The paper "Controllable and Compositional Generation with Latent-Space Energy-Based Models" (2110.10873) addresses controllable and compositional image generation by placing Energy-Based Models (EBMs) in the latent space of pre-trained generative models. It introduces a joint EBM over latent codes and attributes and formulates sampling from this joint distribution as solving an ordinary differential equation (ODE). The method achieves significant improvements in both conditional sampling and sequential editing, and it excels in particular at zero-shot generation of novel attribute combinations.

Methodology

The core contribution of this paper is to define EBMs in the latent space of a pre-trained generative model such as StyleGAN. The EBM jointly models the data (through its latent codes) and the attributes, and the joint distribution is sampled by solving an ODE, which the authors show to be efficient and robust to hyperparameters. Because only an attribute classifier has to be trained, the method is simple, fast to train, and efficient to sample from.
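Written out, one way to express such a joint EBM is shown below. This is a sketch assuming a standard Gaussian prior over the generator's latent code z and attribute energies defined by a classifier; the per-attribute weights λ_i and the exact form of E_θ(c_i | z) are assumptions of this sketch rather than details stated above.

```latex
p_\theta(z, c) \propto \exp\!\big(-E_\theta(z, c)\big), \qquad
E_\theta(z, c) = \sum_{i=1}^{n} \lambda_i \, E_\theta(c_i \mid z) + \tfrac{1}{2}\lVert z \rVert_2^2, \qquad
E_\theta(c_i \mid z) = -\log p_\theta(c_i \mid z)
```

With all λ_i = 1 and the attributes assumed conditionally independent given z, exp(-E_θ(z, c)) is proportional to ∏_i p_θ(c_i | z) · N(z; 0, I). Under these assumptions only the classifier p_θ(c_i | z) has to be learned, which matches the claim that training reduces to fitting an attribute classifier.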

Energy-Based Models

EBMs represent data through an unnormalized probability density defined by an energy function. Here the energy function is defined in the latent space of the pre-trained generator rather than in pixel space, so sampling operates on a much lower-dimensional code while the generator takes care of scalable, high-resolution image synthesis. Unlike EBMs that sample directly in pixel space, the proposed method therefore combines efficient sampling with high-quality images.
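To make the latent-space energy concrete, the sketch below evaluates E(z, c) = -log p(c | z) + ½‖z‖² using a hypothetical linear classifier with weights `W` and bias `b` as a stand-in for the paper's attribute classifier; `joint_energy` and `log_density_ratio` are illustrative names. Because the density is unnormalized, only energy differences are meaningful: they give log-probability ratios in which the unknown partition function cancels.

```python
import numpy as np


def log_softmax(logits):
    # Numerically stable log-softmax over the last axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def joint_energy(z, c, W, b):
    """E(z, c) = -log p(c | z) + 0.5 * ||z||^2 for a hypothetical linear classifier W z + b."""
    logits = W @ z + b
    conditional = -log_softmax(logits)[c]  # E(c | z): classifier negative log-likelihood
    prior = 0.5 * float(z @ z)             # energy of the standard Gaussian prior N(0, I)
    return float(conditional + prior)


def log_density_ratio(z1, z2, c, W, b):
    # log p(z1, c) - log p(z2, c) = E(z2, c) - E(z1, c); the partition function cancels.
    return joint_energy(z2, c, W, b) - joint_energy(z1, c, W, b)


rng = np.random.default_rng(0)
W, b = rng.normal(size=(10, 8)), np.zeros(10)  # 10 classes, 8-dimensional latent (toy sizes)
z_a, z_b = rng.normal(size=8), rng.normal(size=8)
print(log_density_ratio(z_a, z_b, 3, W, b))
```

A real latent EBM would replace the linear map with a trained network on the generator's latent space; the energy bookkeeping stays the same.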

Sampling Methodology

The paper introduces a new sampler that solves the probability flow ODE induced by the reverse diffusion process in the latent space. This sampler is efficient and robust, avoiding the high computational cost and hyperparameter sensitivity of the Langevin dynamics traditionally used to sample from EBMs.
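The sampler can be sketched as follows, under assumptions not stated in the text: a variance-preserving diffusion with the linear schedule β(t) = β_min + t(β_max - β_min) common in score-based models, a standard Gaussian prior on z, and a conditional energy E(c | z) treated as independent of t. Under these assumptions the probability flow ODE dz/dt = -½β(t)[z + ∇_z log p_t(z | c)] simplifies to dz/dt = ½β(t)∇_z E(c | z), because the Gaussian prior's score -z cancels the drift term z. The sketch integrates this from t = T to t = 0 with fixed-step Euler; a hypothetical linear classifier stands in for the attribute classifier, and all function names are illustrative.

```python
import numpy as np


def softmax(logits):
    # Numerically stable softmax for a 1-D logit vector.
    e = np.exp(logits - logits.max())
    return e / e.sum()


def grad_cond_energy(z, c, W, b):
    # Gradient of E(c | z) = -log softmax(W z + b)[c] w.r.t. z, i.e. W^T (p - onehot(c)).
    p = softmax(W @ z + b)
    p[c] -= 1.0
    return W.T @ p


def beta(t, beta_min=0.1, beta_max=20.0):
    # Assumed linear noise schedule of a variance-preserving diffusion.
    return beta_min + t * (beta_max - beta_min)


def ode_sample(z_T, c, W, b, n_steps=100, T=1.0):
    """Euler-integrate dz/dt = 0.5 * beta(t) * grad_z E(c | z) backward from t = T to t = 0."""
    z = z_T.copy()
    dt = T / n_steps
    for k in range(n_steps):
        t = T - k * dt
        # One step backward in time: z(t - dt) = z(t) - dt * dz/dt.
        z = z - dt * 0.5 * beta(t) * grad_cond_energy(z, c, W, b)
    return z


rng = np.random.default_rng(0)
W, b = 0.3 * rng.normal(size=(10, 8)), np.zeros(10)  # toy stand-in classifier
z_T = rng.normal(size=8)                             # start from the N(0, I) prior
z_0 = ode_sample(z_T, 3, W, b)
print(softmax(W @ z_T + b)[3], softmax(W @ z_0 + b)[3])
```

Each backward Euler step subtracts ½β(t)∇_z E(c | z) dt, so the latent drifts toward lower conditional energy, and the final image would be the generator applied to z(0). No noise is injected, which is one source of the robustness relative to Langevin dynamics; a higher-order or adaptive ODE solver could replace the Euler loop.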

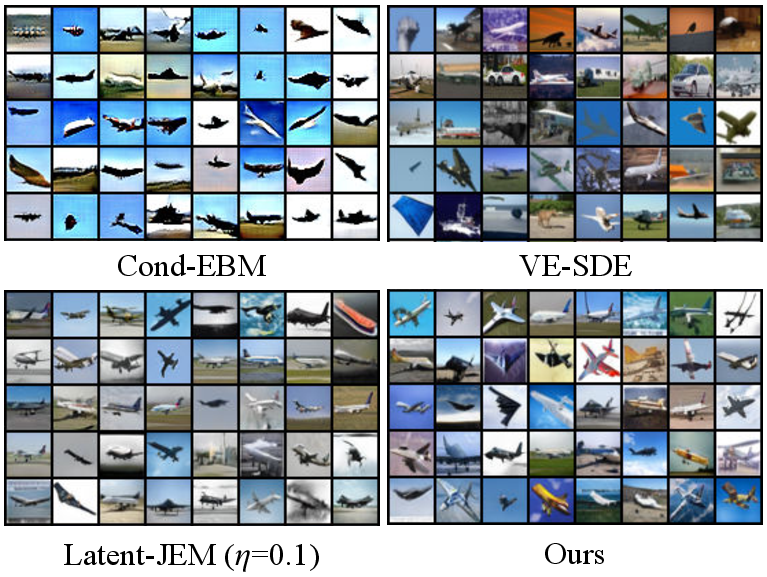

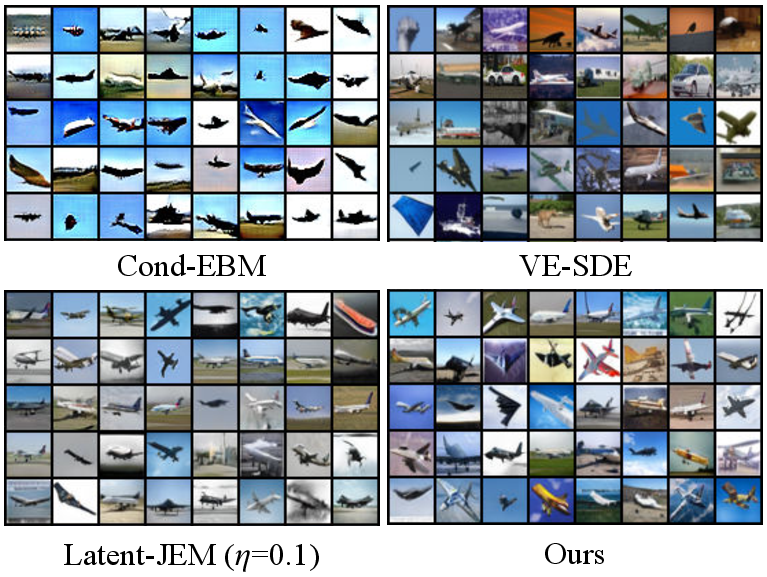

Figure 1: Conditionally generated images of our method (LACE-ODE) and baselines on the plane class of CIFAR-10.

Experimental Results

The experimental evaluation covers conditional sampling, sequential editing, and compositional generation on datasets including CIFAR-10 and FFHQ. Across these tasks, the method outperforms existing baselines such as StyleFlow and JEM.

Conditional Sampling

The proposed method significantly outperforms state-of-the-art baselines in both controllability and image quality. For example, on CIFAR-10, LACE-ODE achieves an FID of 6.63 and an ACC of 0.972, showing that it attains high image quality without sacrificing conditional accuracy.
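The ACC figure can be read as the fraction of generated images that an evaluation classifier assigns to the class they were conditioned on. This definition is an assumption about the evaluation protocol, and `conditional_accuracy` is a hypothetical helper that computes that fraction from classifier logits.

```python
import numpy as np


def conditional_accuracy(pred_logits, target_labels):
    """Fraction of generated samples whose predicted class equals the class they were conditioned on."""
    preds = np.argmax(np.asarray(pred_logits), axis=1)
    return float(np.mean(preds == np.asarray(target_labels)))


# Toy logits from a hypothetical evaluation classifier for three generated images.
logits = np.array([[2.0, 0.1], [0.3, 1.5], [1.0, 0.0]])
print(conditional_accuracy(logits, [0, 1, 1]))  # 2 of 3 match
```

Higher ACC means the sampler respects the conditioning more often, while FID separately measures image quality, which is why the two are reported together.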

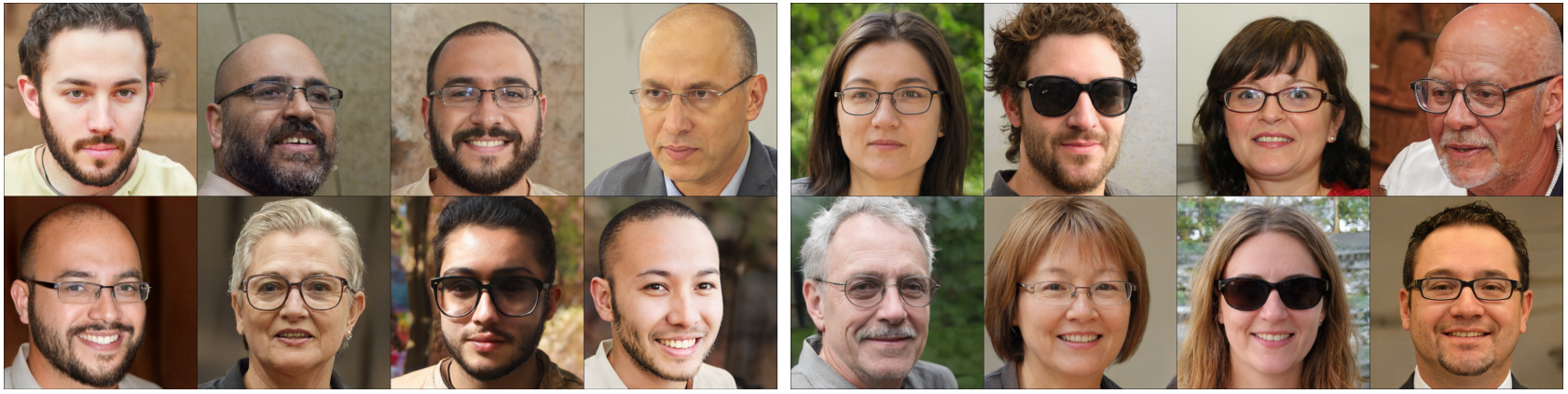

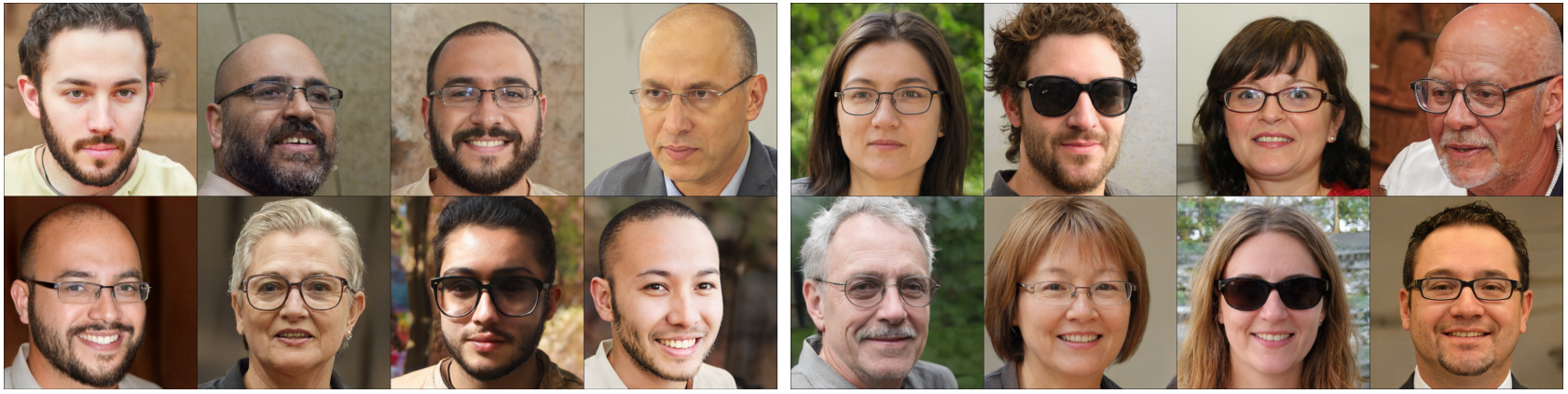

Sequential Editing

In sequential image editing, the approach modifies one attribute at a time without altering previously edited attributes. Each edit can be controlled precisely, and the method achieves better disentanglement and identity preservation than the baselines.
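A minimal sketch of how sequential editing can be organized with latent energies follows. It assumes, as an illustration rather than the paper's confirmed procedure, that each edit reruns the ODE sampler starting from the current latent code and conditions on every attribute specified so far, so earlier edits remain as energy terms. The binary attributes use hypothetical logistic classifiers with weight rows `w[i]` and biases `b[i]`; the schedule values and all function names are also assumptions.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def grad_energy(z, constraints, w, b, lam=1.0):
    # Sum of per-attribute energies E(c_i | z) = -log p(c_i | z) for logistic classifiers;
    # the gradient of each term w.r.t. z is (sigmoid(l_i) - c_i) * w_i.
    g = np.zeros_like(z)
    for i, c_i in constraints.items():
        l = w[i] @ z + b[i]
        g += lam * (sigmoid(l) - c_i) * w[i]
    return g


def ode_edit(z, constraints, w, b, n_steps=100, beta_min=0.1, beta_max=20.0):
    # Backward Euler integration of dz/dt = 0.5 * beta(t) * grad_z E from t = 1 to t = 0.
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = 1.0 - k * dt
        beta = beta_min + t * (beta_max - beta_min)
        z = z - dt * 0.5 * beta * grad_energy(z, constraints, w, b)
    return z


def sequential_edit(z0, edits, w, b):
    """Apply (attribute, target) edits in order, keeping all earlier targets as constraints."""
    constraints = {}
    z = z0.copy()
    history = []
    for attr, value in edits:
        constraints[attr] = value  # earlier edits stay in the energy
        z = ode_edit(z, constraints, w, b)
        history.append(z.copy())
    return history


w, b = 2.0 * np.eye(3, 5), np.zeros(3)  # three toy attributes on a 5-dimensional latent
history = sequential_edit(np.zeros(5), [(0, 1), (1, 0)], w, b)
print([sigmoid(w @ z + b).round(3) for z in history])
```

Because the first target is still in the energy during the second edit, the sampler has no incentive to undo it; attributes that were never constrained are left to the prior.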

Compositional Generation

The method's compositionality is most evident in zero-shot generation: it successfully generates images conditioned on unseen combinations of attributes, a task on which baselines such as StyleFlow struggle.
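Zero-shot composition is possible because attribute energies can be combined at sampling time without retraining. The sketch below uses standard energy-composition rules, assuming conjunction corresponds to summing energies (a product of experts), disjunction to -logsumexp(-E_i) (a mixture of the unnormalized densities), and negation to flipping an energy's sign. The function names are hypothetical, and the exact operators used in the paper may differ.

```python
import numpy as np


def energy_and(*energies):
    # Conjunction: product of experts, i.e. the sum of energies.
    return sum(energies)


def energy_or(*energies):
    # Disjunction: -log(sum_i exp(-E_i)), computed stably by factoring out the max.
    neg = -np.array(energies, dtype=float)
    m = neg.max(axis=0)
    return -(m + np.log(np.exp(neg - m).sum(axis=0)))


def energy_not(energy):
    # Negation: flip the sign so that the attribute becomes unlikely instead of likely.
    return -energy


e_smile, e_glasses = 0.4, 2.5  # toy attribute energies E(c_i | z) at some latent z
print(energy_and(e_smile, e_glasses), energy_or(e_smile, e_glasses), energy_not(e_glasses))
```

A composed energy can take the place of E(c | z) in the ODE sampler from the Sampling Methodology section, which is what allows attribute combinations never seen together during training to be requested directly.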

Figure 2: Sequentially editing images with our method (LACE-ODE) and StyleFlow, showcasing superior edit precision and identity preservation.

Implications and Future Work

This research advances controllable generation, with particular emphasis on scalability and compositionality. Its methodological innovations open a promising direction for latent-space modeling, with potential applications in areas such as synthetic data generation, virtual reality, and AI-assisted design. Future work may explore integrating the approach with other generative models and extending compositional control to even larger attribute sets, broadening the scope and impact of high-resolution image generation.

Conclusion

The paper "Controllable and Compositional Generation with Latent-Space Energy-Based Models" introduces a robust EBM framework in latent space that enables controllable and compositional image generation with high efficiency and quality. By employing a novel ODE-based sampling strategy, the method surpasses existing approaches, excelling particularly in zero-shot generation and sequential editing. This work underscores the potential of latent-space EBMs to push the boundaries of generative modeling and to bring generative AI into practical applications.