- The paper proposes LLANA, an LLM-enhanced Bayesian Optimization framework that streamlines analog layout constraint generation.

- It integrates few-shot LLM surrogate modeling and candidate sampling to boost sample efficiency and reduce normalized regret in analog circuit benchmarks.

- The approach offers improved prediction accuracy and uncertainty quantification, addressing challenges in AMS design automation despite higher computational demands.

"LLM-Enhanced Bayesian Optimization for Efficient Analog Layout Constraint Generation" Summary

Introduction

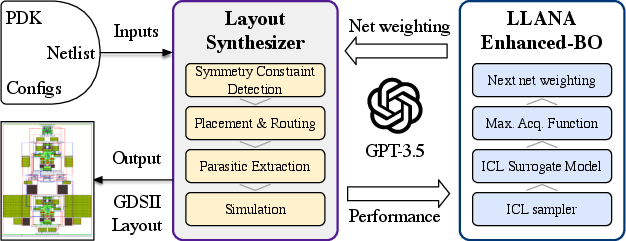

The paper addresses challenges in analog layout synthesis, which traditionally relies on manual processes that prolong design cycles and limit automation. It proposes the LLANA framework, which integrates large language models (LLMs) with Bayesian Optimization (BO) to streamline the generation of design-dependent parameter constraints for analog layout. LLANA draws on the few-shot learning ability and contextual understanding of LLMs, achieving performance comparable to state-of-the-art methods while exploring the design space more effectively.

The motivation stems from the increasing demand for expedited design processes in advanced analog and mixed-signal (AMS) integrated circuits for sectors like automotive and IoT. Existing techniques, such as optimization-based tools, require manual input of layout constraints and fail to adapt across different projects. Performance-driven approaches also struggle with accurate predictions due to increased complexities in scaled-down technologies.

LLANA Framework

LLANA enhances the capabilities of BO through two main integrations: surrogate modeling using LLMs and candidate sampling via few-shot generation. The surrogate model estimation is performed in-context, encoding optimization trajectories as natural language, thereby exploiting the rich priors embedded within LLMs. This approach exhibits robust prediction performance and effective uncertainty quantification, crucial for balancing exploration and exploitation in optimization.
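To make the link between uncertainty quantification and the exploration/exploitation balance concrete, here is a minimal sketch. It rests on assumptions that the summary does not confirm: that predictive uncertainty is estimated by querying the surrogate several times for the same candidate, and that candidates are ranked with expected improvement (a standard BO acquisition function). The function names and the CMRR-like numbers are hypothetical.

```python
import math
import statistics


def summarize_predictions(samples):
    """Collapse repeated surrogate predictions into a mean and a standard deviation."""
    mean = statistics.fmean(samples)
    std = statistics.stdev(samples) if len(samples) > 1 else 0.0
    return mean, std


def expected_improvement(mean, std, best_so_far):
    """Expected improvement for maximization under a Gaussian predictive distribution."""
    if std <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(mean - best_so_far, 0.0)
    z = (mean - best_so_far) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best_so_far) * cdf + std * pdf


# Hypothetical sampled predictions (e.g. CMRR in dB) for two candidates.
best_so_far = 81.0
mean_a, std_a = summarize_predictions([80.0, 80.5, 79.5])  # confident, slightly below best
mean_b, std_b = summarize_predictions([76.0, 82.0, 79.0])  # lower mean, much less certain
ei_a = expected_improvement(mean_a, std_a, best_so_far)
ei_b = expected_improvement(mean_b, std_b, best_so_far)
```

In this example the less certain candidate receives the higher acquisition value even though its mean is lower, which is the exploratory behavior that well-calibrated uncertainty is meant to enable.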

Figure 1: Overview of the LLANA framework.

LLM-Enhanced BO Details: LLANA serializes optimization trajectories into text that an LLM can process, and the LLM then augments the traditional surrogate model. With few-shot examples supplied in the prompt, the LLM is queried to predict scores and associated probabilities for candidate design configurations, and its in-context generation is used to propose the parameter settings evaluated next.
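The serialization step can be illustrated with a short sketch. The prompt template, the parameter names (`width_um`, `fingers`), and the scores below are hypothetical placeholders chosen for illustration; the summary does not give the exact wording LLANA uses.

```python
def serialize_observation(config, score=None):
    """Render one design configuration (and optionally its score) as a line of text."""
    fields = ", ".join(f"{name} is {value}" for name, value in sorted(config.items()))
    if score is None:
        # Leave the score open so the LLM completes it.
        return f"Configuration: {fields}. Performance:"
    return f"Configuration: {fields}. Performance: {score:.2f}"


def build_prompt(task_description, history, candidate):
    """Assemble a few-shot prompt: task context, past observations, then the open query."""
    lines = [task_description, ""]
    lines += [serialize_observation(cfg, score) for cfg, score in history]
    lines.append(serialize_observation(candidate))
    return "\n".join(lines)


# Hypothetical optimization trajectory: (configuration, observed score) pairs.
history = [
    ({"width_um": 2.0, "fingers": 4}, 78.31),
    ({"width_um": 3.5, "fingers": 2}, 81.07),
]
prompt = build_prompt(
    "Predict the performance of an operational amplifier layout configuration.",
    history,
    {"width_um": 3.0, "fingers": 4},
)
print(prompt)
```

Each past evaluation becomes one few-shot example, and the final line leaves the score blank for the model to fill in. Sending the same prompt several times and collecting the completions is one way to obtain a spread of predicted scores.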

Experiments and Results

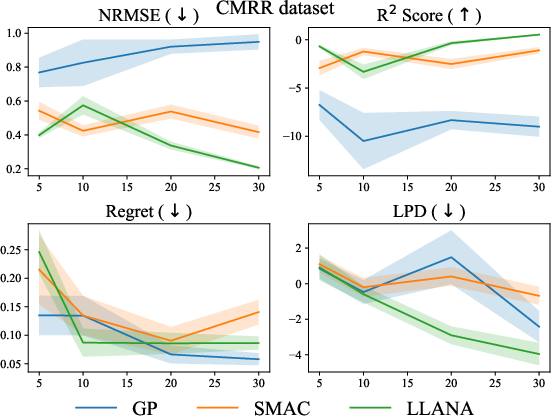

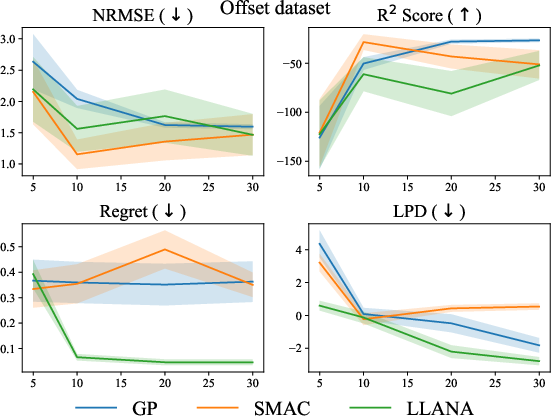

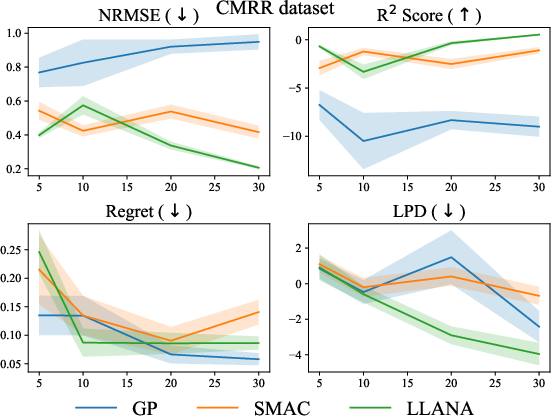

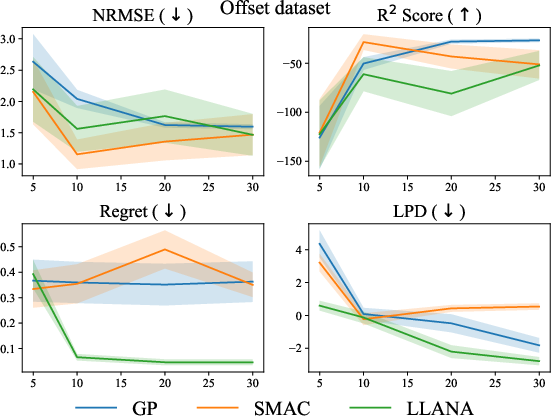

Experiments use operational amplifier designs, with common-mode rejection ratio (CMRR) and input-referred offset voltage as benchmarks, and compare LLANA against Gaussian process (GP) and SMAC baselines. LLANA demonstrates superior sample efficiency, with notable improvements in prediction accuracy and uncertainty quantification metrics. The framework performs especially well when few samples are available, which supports its use as a stand-alone solution for BO tasks.

Figure 2: Comparison of LLANA, GP, and SMAC (Lindauer et al., 2022) on the CMRR dataset.

The experiments highlight LLANA's capacity to reduce normalized regret and improve log predictive density, supporting its efficacy in constraint generation tasks. Despite higher computational demands due to LLM inference, LLANA effectively trades off complexity for improved sample efficiency.
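For readers unfamiliar with the two metrics, the following sketch shows one common way to compute them. The exact definitions used in the paper are not given in this summary, so both formulas are assumptions: normalized regret is taken as the gap to the best achievable score scaled by the score range, and log predictive density is taken as the average Gaussian log-density of the true values under the surrogate's predicted mean and standard deviation.

```python
import math


def normalized_regret(best_found, global_best, global_worst):
    """Gap between the best score found and the best achievable, scaled to [0, 1].

    Works for maximization (global_best > global_worst) and for
    minimization (global_best < global_worst) alike.
    """
    span = global_best - global_worst
    if span == 0:
        raise ValueError("global_best and global_worst must differ")
    return (global_best - best_found) / span


def log_predictive_density(y_true, means, stds):
    """Average Gaussian log-density of the observed values under the predictions."""
    total = 0.0
    for y, mu, sigma in zip(y_true, means, stds):
        total += -0.5 * math.log(2.0 * math.pi * sigma**2) - (y - mu) ** 2 / (2.0 * sigma**2)
    return total / len(y_true)


# Hypothetical numbers: best CMRR found is 80 dB on a benchmark spanning 65 to 85 dB.
regret = normalized_regret(best_found=80.0, global_best=85.0, global_worst=65.0)

# An overconfident wrong prediction is penalized more than a cautious one.
lpd_overconfident = log_predictive_density([1.0], [3.0], [0.1])
lpd_cautious = log_predictive_density([1.0], [3.0], [2.0])
```

Lower normalized regret means the optimizer came closer to the best known design, while higher log predictive density rewards predictions that are both accurate and honest about their uncertainty.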

Figure 3: Comparison of LLANA, GP, and SMAC (Lindauer et al., 2022) on the Offset dataset.

Conclusion

The paper presents LLANA as a promising framework to integrate LLMs with BO for efficient analog layout constraint generation. While showing slight improvements over traditional methods on tested benchmarks, it opens avenues for further research into multi-objective optimization and higher-dimensional BO tasks. Future work should focus on broadening LLANA’s applicability across diverse optimization challenges, potentially combining it with more efficient computational frameworks for enhanced performance.

The LLANA framework represents a significant step in leveraging AI capabilities to address long-standing challenges in the EDA domain, capitalizing on the generalization capabilities and rich contextual understanding of LLMs to enhance automated synthesis processes. The methodologies presented offer practical insights into the integration of LLMs in complex, data-driven tasks outside their usual scope, signaling a transformative potential in optimization-driven EDA implementations.