- The paper introduces SAGE, a framework that augments LLMs with self-evolving, reflective, and memory-optimized capabilities, demonstrating up to 2.26X accuracy gains in complex tasks.

- The methodology employs an iterative feedback loop with distinct roles (User, Assistant, Checker) and leverages the Ebbinghaus forgetting curve for efficient memory management.

- Experimental evaluations on AgentBench reveal significant performance improvements in long-context tasks and enhanced decision-making in dynamic scenarios.

Self-Evolving Agents with Reflective and Memory-Augmented Abilities

Introduction

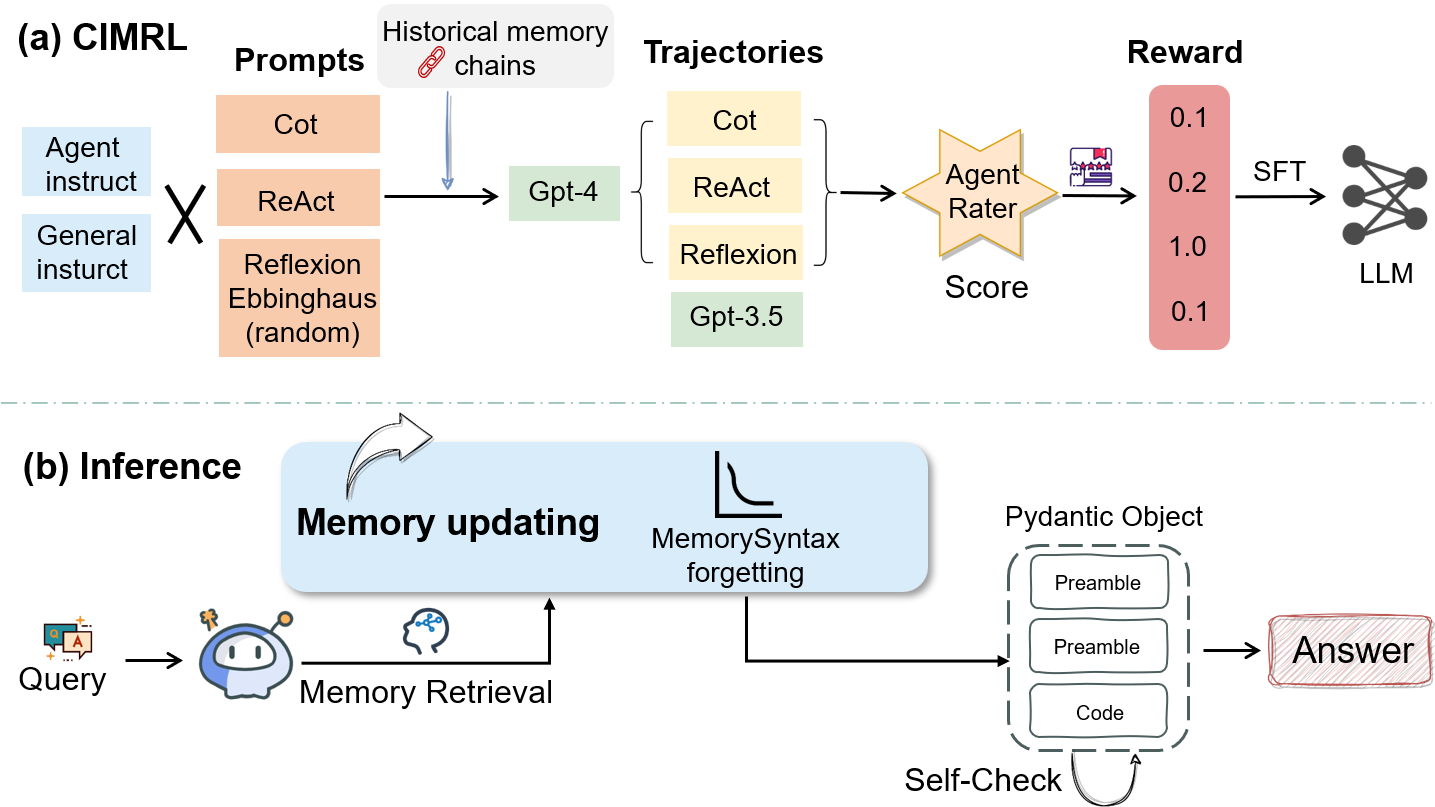

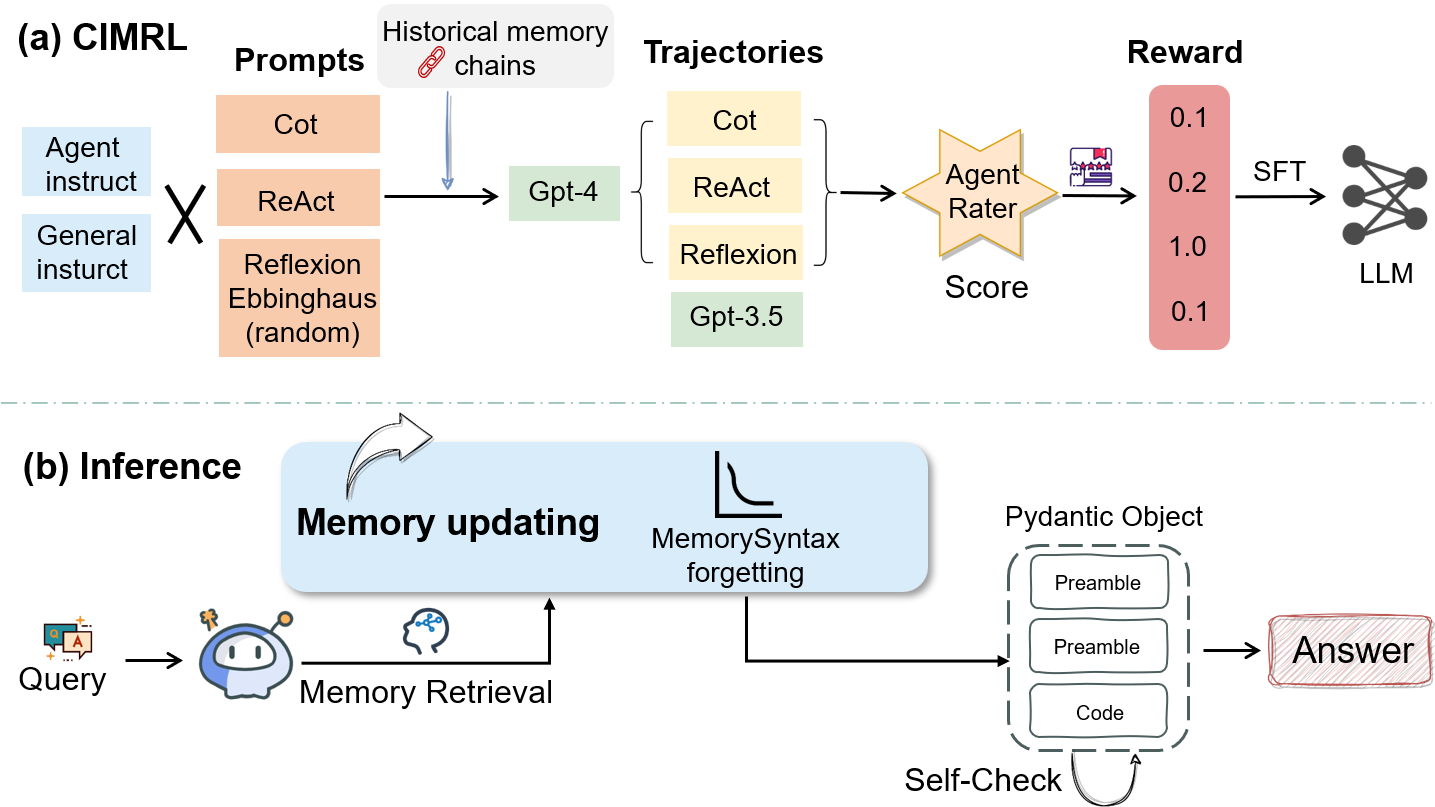

The paper "Self-Evolving Agents with Reflective and Memory-Augmented Abilities" targets LLMs deployed in dynamic environments, where continuous decision-making, long-term memory, and management of long contexts are critical. Its proposed solution, the SAGE framework, combines several mechanisms for handling multi-task scenarios and changing information. The framework consists of three agents, the User, the Assistant, and the Checker, which work together to adapt strategies and manage information through iterative feedback and a memory mechanism based on the Ebbinghaus forgetting curve.

SAGE Framework Overview

The foundation of the SAGE framework lies in its ability to evolve agents through reflection and memory augmentation. The framework introduces a memory optimization mechanism to combat the context limitations inherent in LLMs, enhancing transferability and adaptability to various tasks. By simulating human memory processes, SAGE allows LLM agents to focus strategically on long-span interactions, reducing cognitive load and improving decision-making.

Figure 1: An illustration of the SAGE framework.

Methodology

Iterative Feedback Loop

The core of the SAGE framework is an iterative feedback loop involving multiple agents designed to solve complex problems through a continuous improvement cycle. The initial step involves role assignment among three agents: User (task initiator), Assistant (responsible for task execution and adjustments), and Checker (providing evaluative feedback). The framework leverages feedback-driven optimization to refine outputs through the reflection mechanism, enhancing the model's capabilities without requiring additional training.
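The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the names `Feedback`, `run_feedback_loop`, `toy_assistant`, and `toy_checker` are hypothetical, the round cap of 3 is an assumed value, and the Assistant and Checker are plain functions standing in for LLM calls. The User role is represented only by the task string it supplies.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Feedback:
    """Checker verdict for one attempt (hypothetical structure)."""
    accepted: bool
    comment: str


History = List[Tuple[str, Feedback]]


def run_feedback_loop(
    task: str,
    assistant: Callable[[str, History], str],
    checker: Callable[[str, str], Feedback],
    max_rounds: int = 3,  # assumed cap, not taken from the paper
) -> Tuple[str, History]:
    """Ask the assistant, let the checker review, and retry until accepted."""
    history: History = []
    answer = ""
    for _ in range(max_rounds):
        # The assistant sees all earlier attempts and the feedback on them.
        answer = assistant(task, history)
        feedback = checker(task, answer)
        history.append((answer, feedback))
        if feedback.accepted:
            break
    return answer, history


def toy_assistant(task: str, history: History) -> str:
    # Stand-in for an LLM: wrong on the first try, corrected after feedback.
    return "5" if not history else "4"


def toy_checker(task: str, answer: str) -> Feedback:
    # Stand-in for an LLM-based checker with a known reference answer.
    if answer == "4":
        return Feedback(True, "correct")
    return Feedback(False, "recheck the arithmetic")


final_answer, rounds = run_feedback_loop("What is 2 + 2?", toy_assistant, toy_checker)
print(final_answer, len(rounds))
```

Passing the full history back to the assistant is what lets each round build on earlier feedback without any change to model weights, which matches the "no additional training" property described above.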

Memory Management Mechanisms

Integral to the SAGE framework is a memory management scheme that handles both short-term and long-term information. Short-Term Memory (STM) is highly volatile, while Long-Term Memory (LTM) retains information that is vital to the task. SAGE uses the Ebbinghaus forgetting curve to decide which information to retain and which to let decay, keeping memory compact while preserving what matters for later decisions.
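To make the forgetting-curve idea concrete, here is a small sketch that assumes the commonly cited exponential form of the Ebbinghaus curve, R = exp(-t / S), where t is the time since an item was last accessed and S is its memory strength. The summary does not give the paper's exact formula, thresholds, or update rule, so `MemoryItem`, `retention`, `prune`, `reinforce`, the 0.3 threshold, and the strength boost are all illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class MemoryItem:
    content: str
    last_access: float     # time of last access, arbitrary units
    strength: float = 1.0  # S in R = exp(-t / S); larger means slower decay


def retention(item: MemoryItem, now: float) -> float:
    """Estimated retention under the assumed exponential forgetting curve."""
    elapsed = max(0.0, now - item.last_access)
    return math.exp(-elapsed / item.strength)


def prune(items: List[MemoryItem], now: float, threshold: float = 0.3) -> List[MemoryItem]:
    """Keep only items whose retention is still at or above the threshold."""
    return [m for m in items if retention(m, now) >= threshold]


def reinforce(item: MemoryItem, now: float, boost: float = 1.0) -> None:
    """Recalling an item resets its clock and slows its future decay."""
    item.strength += boost
    item.last_access = now


memory = [
    MemoryItem("one-off tool output", last_access=0.0, strength=1.0),
    MemoryItem("task goal", last_access=0.0, strength=5.0),
]
kept = prune(memory, now=2.0)
print([m.content for m in kept])
```

With these assumed values, the weakly reinforced item decays below the threshold after two time units while the stronger one survives, which is the behavior a short-term versus long-term split is meant to produce.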

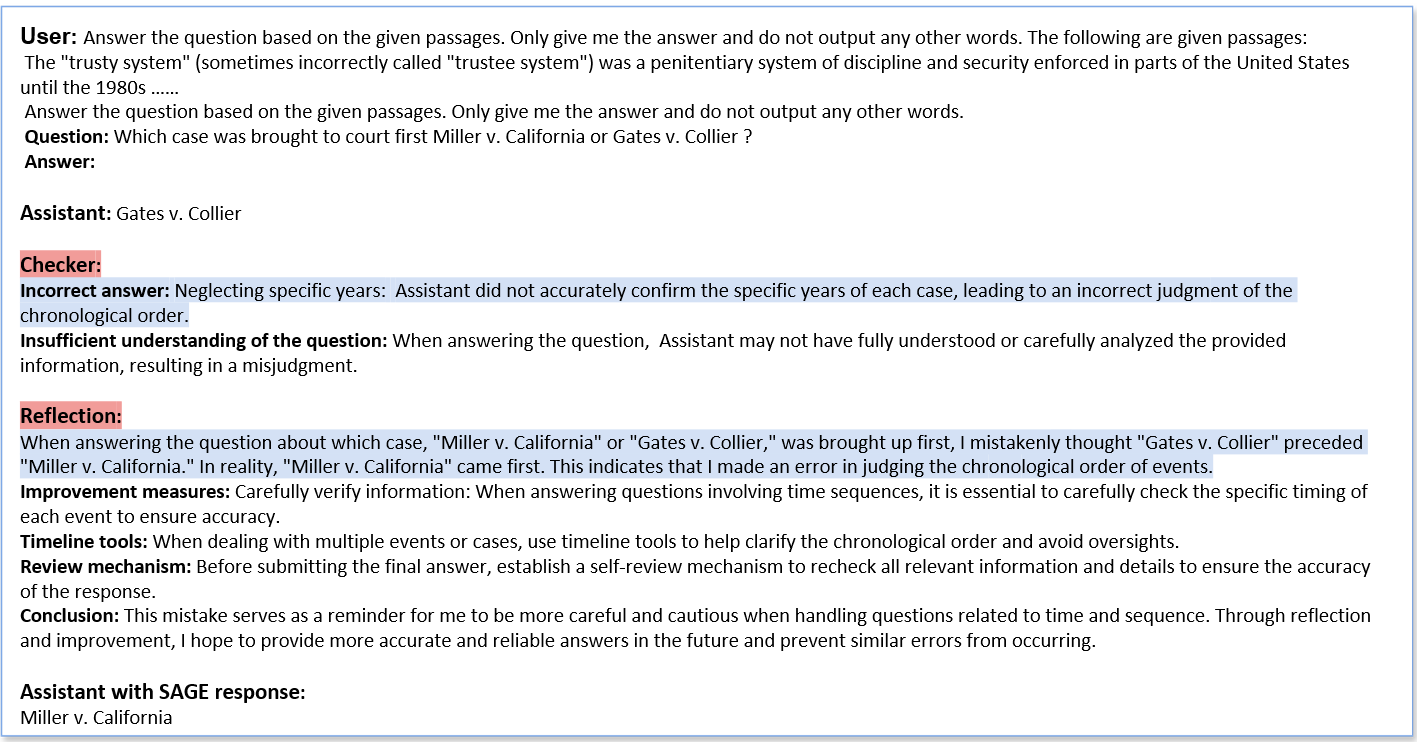

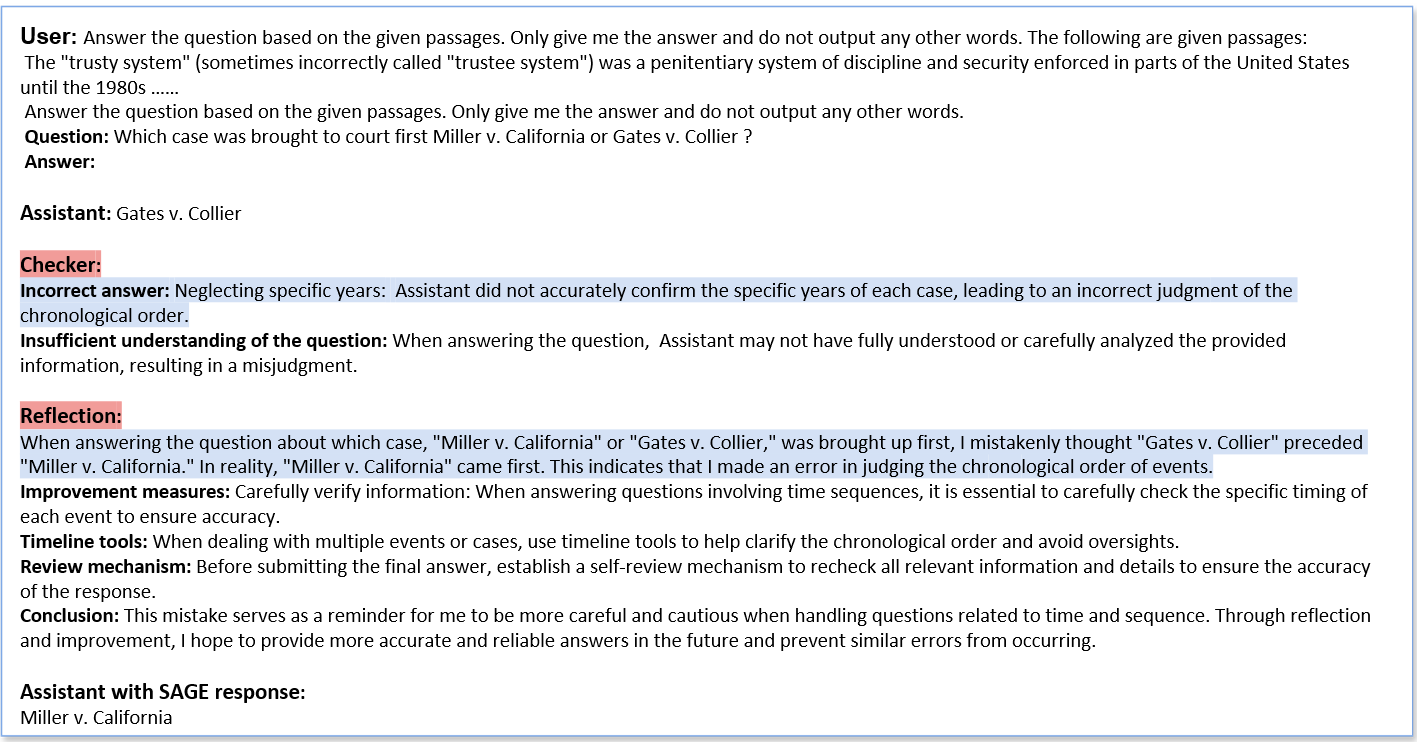

Figure 2: An example of SAGE applied to a HotpotQA question. Please refer to the appendix of the paper.

Experimental Evaluation

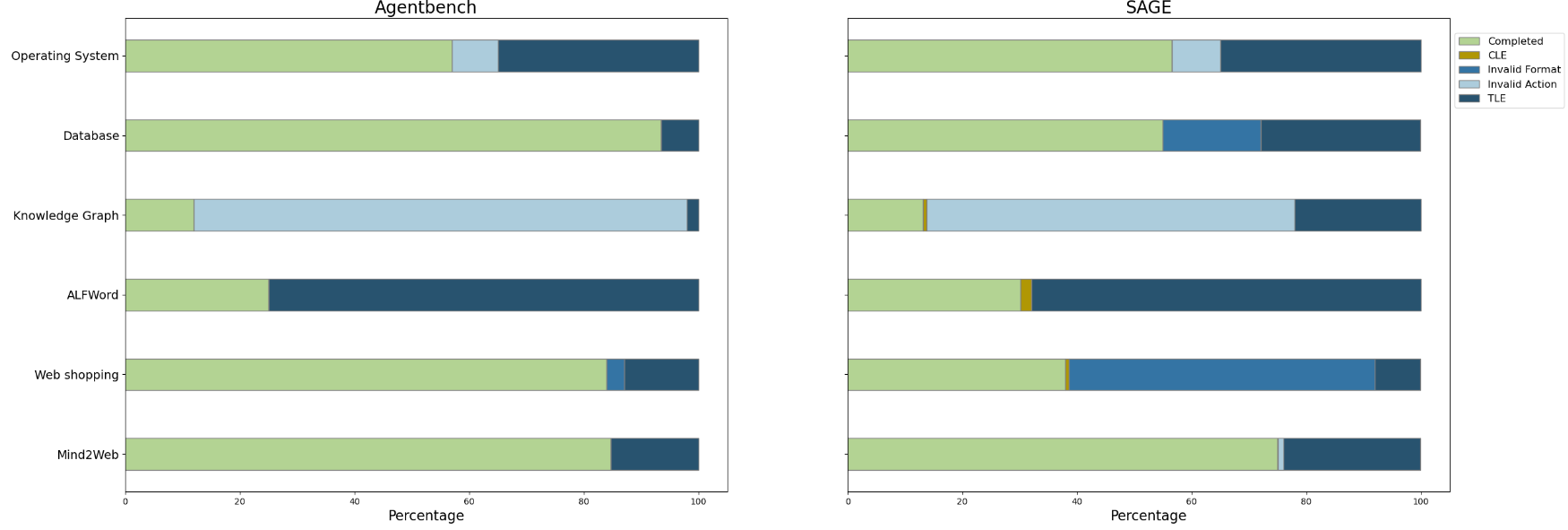

Benchmarking on AgentBench

The SAGE framework was evaluated on AgentBench, a comprehensive benchmarking suite designed to measure agents' reasoning and decision-making abilities across diverse tasks. SAGE delivered substantial performance improvements on both open-source and closed-source models, with accuracy gains of up to 2.26X on complex tasks such as database management and knowledge graph interactions.

Long-Context Tasks

In long-context tasks, SAGE showed strong improvement over traditional LLMs in both code completion and reasoning. Its reflection and memory mechanisms enabled the model to integrate context over extended sessions and refine its response strategies, outperforming competing approaches in scenarios that require complex decision-making.

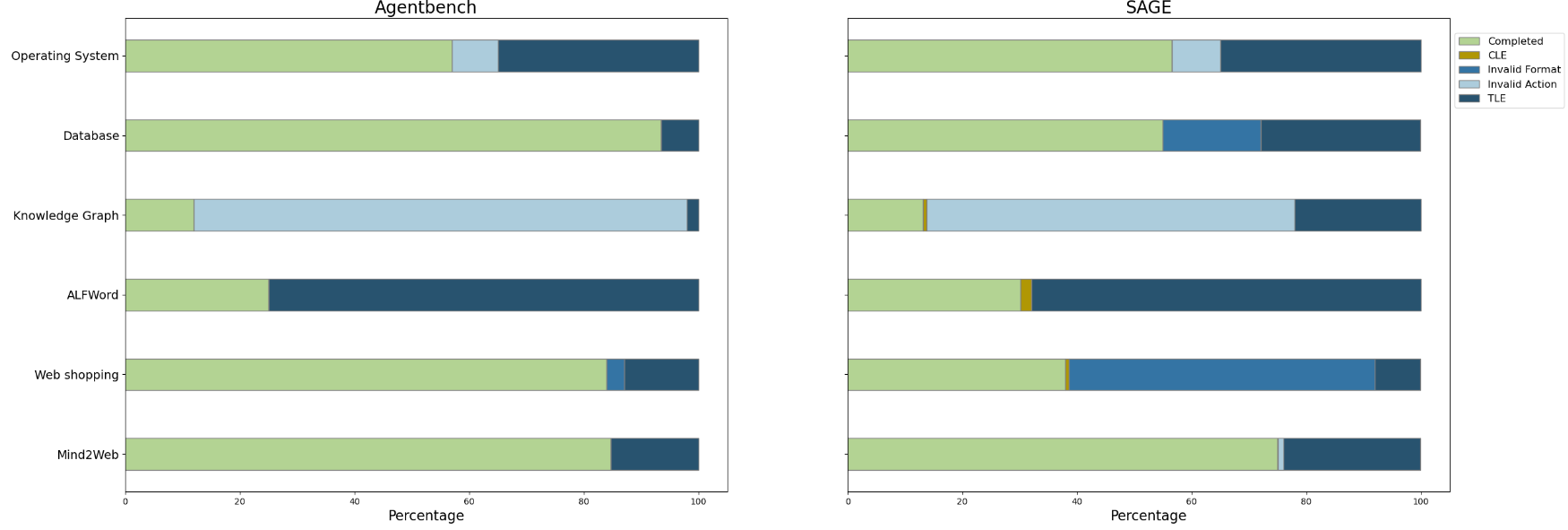

Figure 3: Distribution of execution results across six tasks (CLE: Context Limit Exceeded; TLE: Task Limit Exceeded). Exceeding the task limit is the main cause of incomplete tasks, pointing to limitations in LLM agents' reasoning and decision-making within constrained timeframes.

Conclusion

The SAGE framework improves the adaptability and efficiency of LLM-based systems in dynamic and complex tasks. By combining reflective and memory-augmented abilities, it addresses key bottlenecks in long-span interactions and decision-making accuracy. The reported results, including accuracy gains of up to 2.26X on AgentBench and improvements on long-context tasks, suggest that SAGE can extend the usability and effectiveness of LLM agents and indicate its promise for deploying more capable autonomous systems in real-world environments. Future work could further refine the memory mechanisms and explore applications across a broader range of tasks.