- The paper introduces the Entropy Matching Model that quantifies information dynamics in diffusion-based neural networks.

- It leverages thermodynamic principles and stochastic optimal control to link entropy production with Wasserstein distance and KL divergence.

- Experimental results show that neural entropy correlates with KL divergence, and that approximation quality can degrade when the information a network must store exceeds its capacity, which can guide design choices for diffusion models.

Neural Entropy

Introduction

The paper "Neural Entropy" explores parallels between deep learning models, specifically diffusion models, and concepts from thermodynamics and information theory. The author proposes a framework called the Entropy Matching Model, which offers insight into the information dynamics of neural networks during diffusion processes. The framework characterizes how information is stored and processed in a neural network by relating it to the entropy that the network must counteract to reverse a diffusion process.

Diffusion Models and Thermodynamics

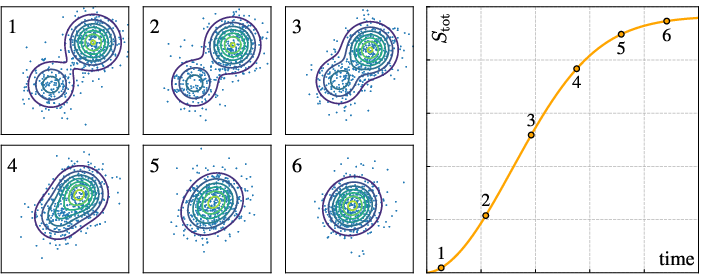

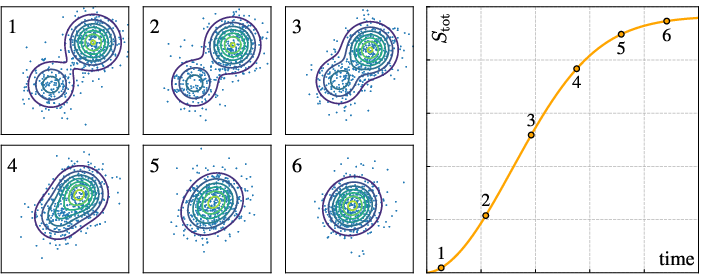

Diffusion models are a widely used class of generative models. Data is incrementally noised until it becomes a simple distribution (often a Gaussian), and learned dynamics then reverse this process to generate structured data. The forward diffusion process increases total entropy, reflecting the erasure of information. Conversely, reversing the process requires reintroducing information, analogous to the operation of Maxwell's demon in thermodynamics.

Figure 1: Diffusion is a non-equilibrium process that generates entropy over time.
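The entropy increase described above can be checked in a toy setting. As a minimal sketch, assuming 1D Gaussian data N(M0, S0²) with S0 < 1 and the Ornstein–Uhlenbeck forward process dx = −x dt + √2 dW (a common choice, not necessarily the one used in the paper), the marginal p_t stays Gaussian and its differential entropy rises toward that of the N(0, 1) prior. The names M0, S0, marginal_variance and gaussian_entropy are hypothetical.

```python
import math

# Hypothetical 1D example: data ~ N(M0, S0**2) noised by the OU process
# dx = -x dt + sqrt(2) dW, whose stationary distribution is N(0, 1).
M0, S0 = 2.0, 0.5


def marginal_variance(t):
    """Variance of p_t; the mean M0 * exp(-t) does not affect the entropy."""
    decay2 = math.exp(-2.0 * t)
    return S0**2 * decay2 + 1.0 - decay2


def gaussian_entropy(var):
    """Differential entropy (in nats) of a 1D Gaussian with the given variance."""
    return 0.5 * math.log(2.0 * math.pi * math.e * var)


times = [0.0, 0.25, 0.5, 1.0, 2.0, 4.0]
entropies = [gaussian_entropy(marginal_variance(t)) for t in times]
for t, h in zip(times, entropies):
    print(f"t={t:4.2f}  H[p_t]={h:+.4f} nats")
```

The printed entropies rise from about 0.73 nats at t = 0 toward 0.5 log(2πe) ≈ 1.42 nats. With S0 > 1 the marginal's own entropy would instead fall; the second law constrains total entropy, which also counts heat released to the environment, so the forward process still produces entropy in that case.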

Entropy Matching Model

The Entropy Matching Model refines existing diffusion modeling techniques with a focus on information dynamics and storage capacity. The information that the forward diffusion process systematically removes from the data is not simply lost: to reverse the process, the neural network must learn and store it during training. The paper quantifies this stored information with a property termed 'neural entropy'. The model emphasizes the relationship between the information introduced during training and the corresponding entropy reduction required during generation.
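The idea that erased information must be stored in the network can be made concrete in a toy case. In the standard score-based formulation (assumed here; the paper's entropy matching parameterization may differ), what a network learns is the score ∇ₓ log p_t(x), which drives the reverse-time SDE. The sketch below assumes 1D Gaussian data and an OU forward process, and uses the closed-form score as a stand-in for a perfectly trained network. The names M0, S0, exact_score and reverse_sample are hypothetical.

```python
import math
import random

# Hypothetical 1D setup: data ~ N(M0, S0**2), forward OU process dx = -x dt + sqrt(2) dW.
M0, S0 = 2.0, 0.5


def marginal(t):
    """Mean and variance of the forward marginal p_t (a Gaussian)."""
    decay = math.exp(-t)
    return M0 * decay, S0**2 * decay**2 + 1.0 - decay**2


def exact_score(x, t):
    """grad_x log p_t(x); stands in for a trained score network."""
    mean, var = marginal(t)
    return -(x - mean) / var


def reverse_sample(n_samples=2000, t_end=3.0, n_steps=300, seed=0):
    """Euler-Maruyama integration of the reverse-time SDE from t_end down to 0."""
    rng = random.Random(seed)
    dt = t_end / n_steps
    samples = []
    for _ in range(n_samples):
        x = rng.gauss(0.0, 1.0)  # start from the N(0, 1) prior, not the exact p_T
        t = t_end
        for _ in range(n_steps):
            # Reverse drift is -f(x) + g^2 * score with f(x) = -x and g^2 = 2.
            drift = x + 2.0 * exact_score(x, t)
            x += drift * dt + math.sqrt(2.0 * dt) * rng.gauss(0.0, 1.0)
            t -= dt
        samples.append(x)
    return samples


samples = reverse_sample()
sample_mean = sum(samples) / len(samples)
sample_std = math.sqrt(sum((x - sample_mean) ** 2 for x in samples) / (len(samples) - 1))
print(f"mean={sample_mean:.3f} (target {M0}), std={sample_std:.3f} (target {S0})")
```

The only data-specific ingredient in reverse_sample is exact_score: everything the sampler knows about M0 and S0 reaches it through that function, which is the role a trained network plays.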

Theoretical Underpinnings

The paper draws on stochastic optimal control and the principles of nonequilibrium thermodynamics to establish the mathematical foundations of the Entropy Matching Model. Central to this foundation is the result that the entropy produced during diffusion is bounded from below by a quantity involving the Wasserstein distance between the distributions at the start and end of the process, which links diffusion models with optimal transport theory. This connection opens new design choices: the forward diffusion parameters can be tuned to balance computational efficiency against model performance.
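One standard form of such a bound, the thermodynamic speed limit for overdamped Langevin dynamics, reads Σ ≥ W₂(p₀, p_τ)² / (D τ), where Σ is the total entropy production over [0, τ] in units of k_B and D is the diffusion coefficient. We assume, without checking the paper's constants, that its bound is of this type. The sketch below checks the inequality for a hypothetical 1D Gaussian example under the OU process with D = 1, where Σ reduces to the drop in KL divergence to the N(0, 1) prior and the squared W₂ distance between 1D Gaussians has the closed form (m₀ − m₁)² + (σ₀ − σ₁)².

```python
import math

# Hypothetical 1D example: data ~ N(M0, S0**2), forward OU process
# dx = -x dt + sqrt(2 D) dW with stationary law pi = N(0, 1).
# D = 1 is assumed throughout; the identity Sigma = KL drop below relies on it.
M0, S0, D = 2.0, 0.5, 1.0


def marginal(t):
    """Mean and variance of p_t under the OU process."""
    decay = math.exp(-t)
    return M0 * decay, S0**2 * decay**2 + 1.0 - decay**2


def kl_to_prior(mean, var):
    """KL(N(mean, var) || N(0, 1)) in nats."""
    return 0.5 * (var + mean**2 - 1.0 - math.log(var))


def entropy_production(tau):
    """Total entropy production (k_B = 1) of the relaxation over [0, tau].

    For relaxation toward equilibrium in a fixed potential with D = 1,
    this equals the drop in KL divergence to the stationary law.
    """
    return kl_to_prior(*marginal(0.0)) - kl_to_prior(*marginal(tau))


def w2_squared(tau):
    """Squared 2-Wasserstein distance between the 1D Gaussians p_0 and p_tau."""
    m0, v0 = marginal(0.0)
    m1, v1 = marginal(tau)
    return (m0 - m1) ** 2 + (math.sqrt(v0) - math.sqrt(v1)) ** 2


for tau in [0.1, 0.5, 1.0, 3.0]:
    sigma, bound = entropy_production(tau), w2_squared(tau) / (D * tau)
    print(f"tau={tau:3.1f}  Sigma={sigma:.4f}  W2^2/(D tau)={bound:.4f}")
```

The bound is nearly tight for short τ and loosens as τ grows, largely because the OU relaxation slows down exponentially instead of moving at the constant speed of an optimal transport path.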

Experimental Results

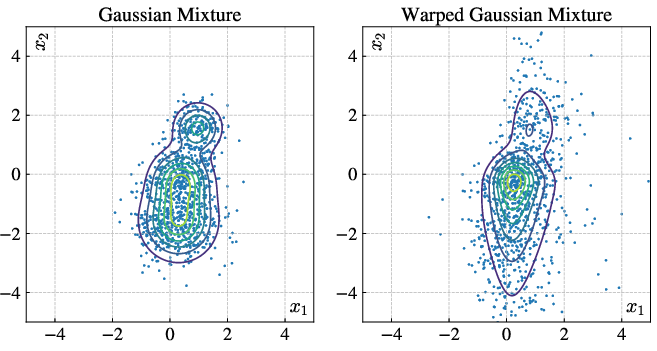

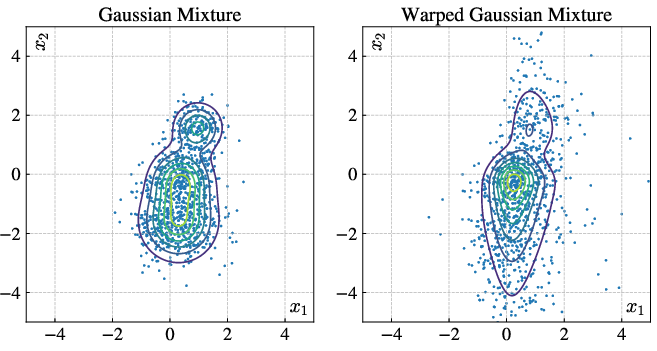

The experiments support the theoretical claims, showing how variations in the data distribution affect the performance of neural networks trained under the Entropy Matching Model framework. Quantitative analysis shows a correlation between neural entropy and the KL divergence, which serves as the performance metric. In particular, as more information must be embedded into a network, the quality of its distribution approximation, measured by KL divergence, can degrade once that information exceeds the network's capacity to encode it.

Figure 2: A representative example of the type of data distributions utilized in experimental setups.
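This summary does not describe how the paper estimates KL divergence. When the densities of both the data distribution p and the model q can be evaluated, one common approach is a Monte Carlo average of log p(x) − log q(x) over samples from p. The sketch below uses hypothetical Gaussians for p and q so that the estimate can be compared with the closed form; the helper names are illustrative only.

```python
import math
import random


def gaussian_logpdf(x, mean, std):
    """Log density of N(mean, std^2) at x."""
    return -0.5 * math.log(2.0 * math.pi * std**2) - (x - mean) ** 2 / (2.0 * std**2)


def kl_gaussians(m1, s1, m2, s2):
    """Closed-form KL(N(m1, s1^2) || N(m2, s2^2)) in nats."""
    return math.log(s2 / s1) + (s1**2 + (m1 - m2) ** 2) / (2.0 * s2**2) - 0.5


def kl_monte_carlo(sample_p, logpdf_p, logpdf_q, n=200_000, seed=0):
    """Estimate KL(p || q) = E_p[log p(x) - log q(x)] by sampling from p."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        x = sample_p(rng)
        total += logpdf_p(x) - logpdf_q(x)
    return total / n


# Hypothetical "data" p = N(0, 1) and "model" q = N(0.5, 1.2^2).
estimate = kl_monte_carlo(
    sample_p=lambda rng: rng.gauss(0.0, 1.0),
    logpdf_p=lambda x: gaussian_logpdf(x, 0.0, 1.0),
    logpdf_q=lambda x: gaussian_logpdf(x, 0.5, 1.2),
)
exact = kl_gaussians(0.0, 1.0, 0.5, 1.2)
print(f"Monte Carlo: {estimate:.4f}  closed form: {exact:.4f}")
```

KL divergence is asymmetric, so the direction (data relative to model, or the reverse) has to be fixed when it is used as a performance metric.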

Implications

This work has implications for understanding and designing neural networks within diffusion frameworks. By tying information dynamics to thermodynamic concepts, it suggests ways to optimize neural architectures according to their capacity to store and process information. It also highlights entropy as a measure of how much information a generative model must capture, which could inform future network designs for more complex data.

Conclusion

The "Neural Entropy" paper proposes a relationship between thermodynamics, information theory, and neural network models through the Entropy Matching Model. It connects theoretical principles with practical implementations in machine learning, offering new insights and tools for improving diffusion models. Future work could extend this framework to more complex neural architectures and real-world datasets, deepening our understanding of the information-theoretic underpinnings of artificial intelligence systems.