- The paper presents a framework that combines retrieval-augmented generation, denoising strategies, and iterative syntax error feedback to autoformalize mathematical content.

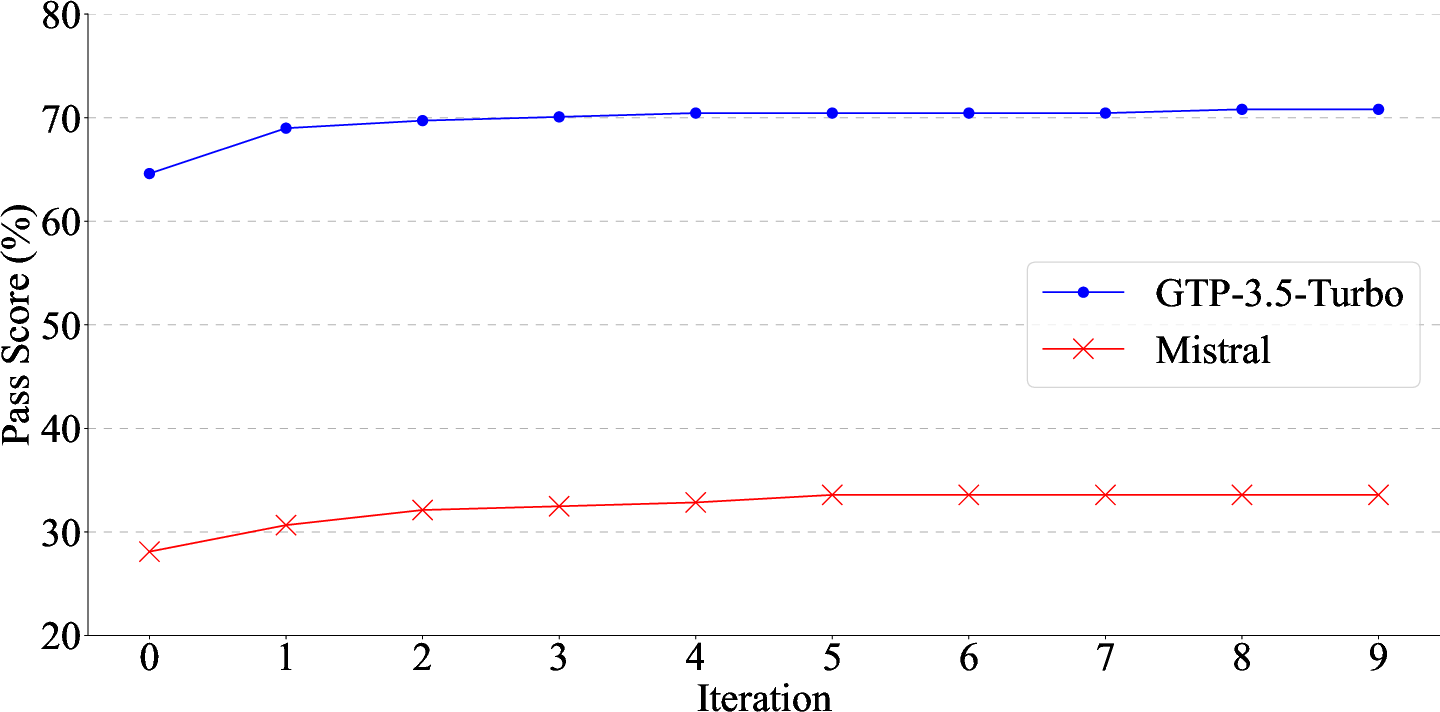

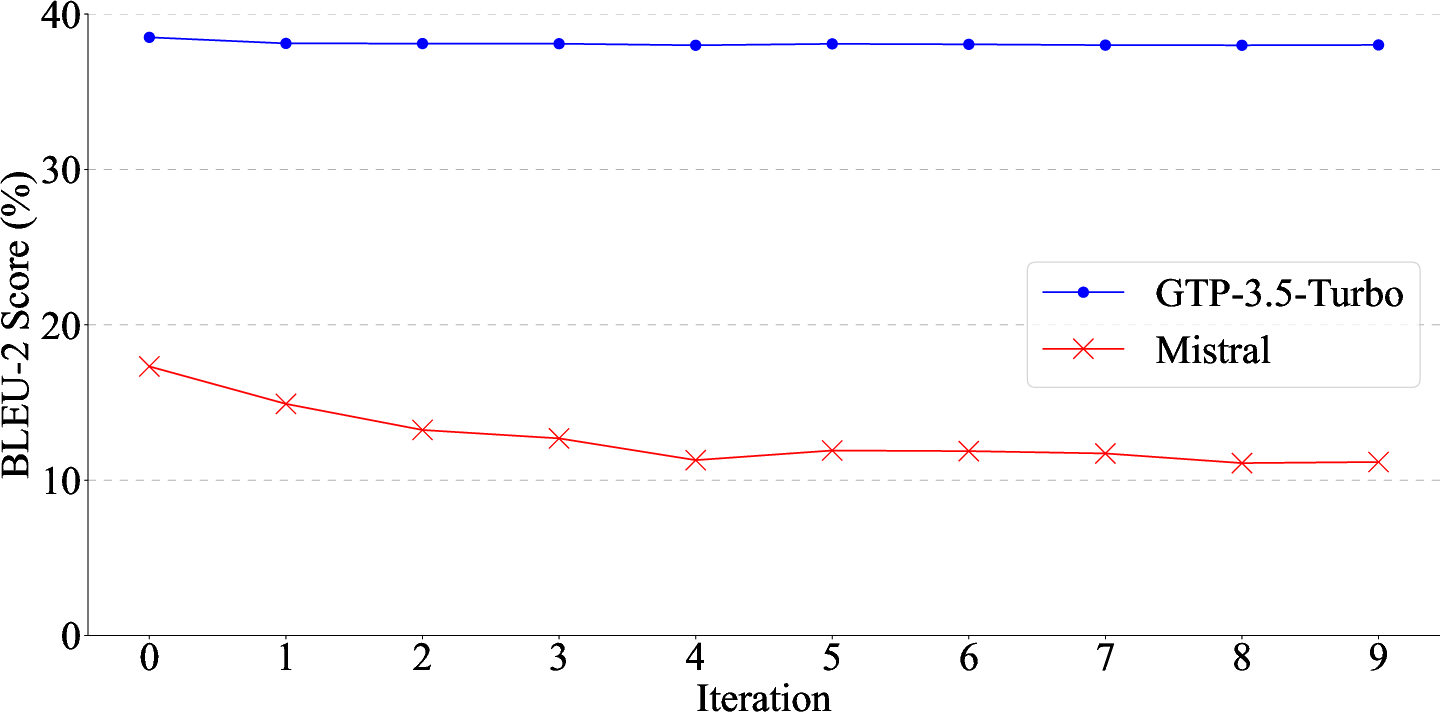

- It demonstrates significant improvements in metrics like BLEU, ChrF, and syntactic correctness, highlighting the effectiveness of its MS-RAG and Auto-SEF approaches.

- The study establishes a robust method for constructing mathematical libraries, reducing manual effort and paving the way for advanced AI-driven formal reasoning.

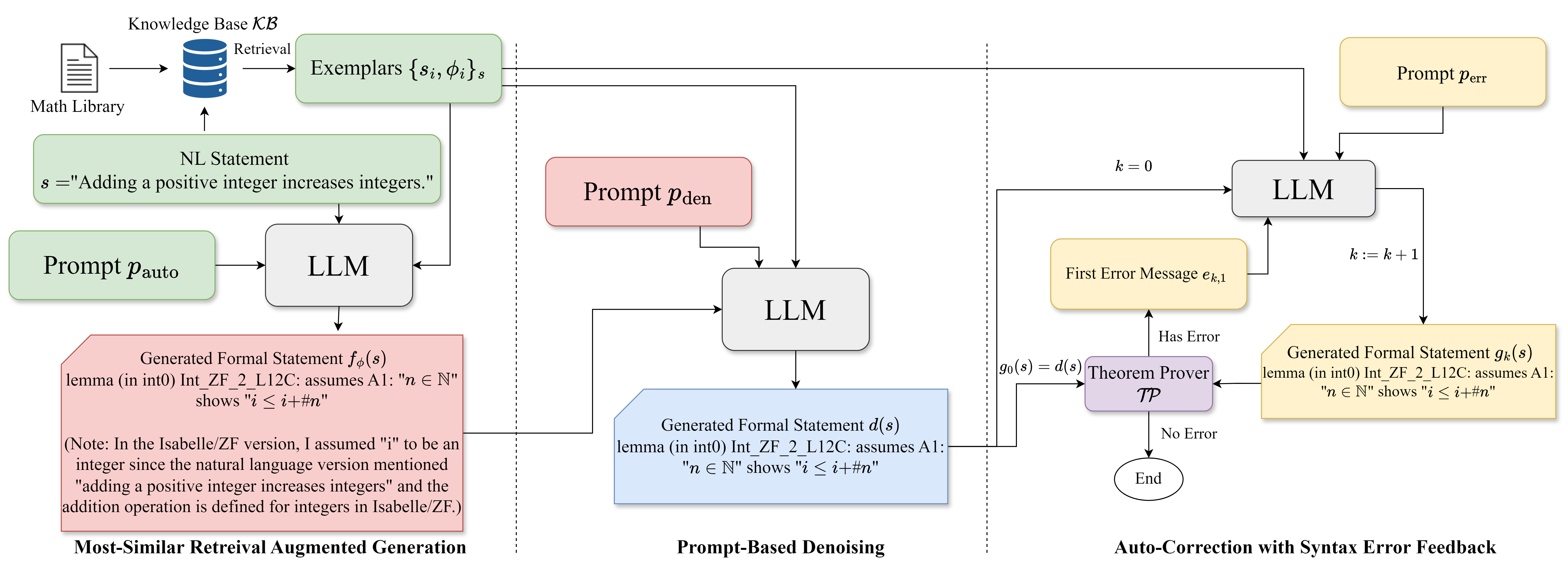

The paper "Consistent Autoformalization for Constructing Mathematical Libraries" (2410.04194) aims to make autoformalization, the translation of mathematical content from natural language into formal language expressions, more reliable and consistent. The study presents a framework that combines retrieval-augmented generation, denoising techniques, and iterative refinement to produce the high-quality formal representations needed to build robust mathematical libraries.

Framework Overview

The proposed approach leverages three core mechanisms built around LLMs:

- Most-Similar Retrieval Augmented Generation (MS-RAG):

- Denoising Techniques:

  - To address the biases and noise present in LLM outputs, the paper proposes both code-based and prompt-based denoising strategies. These strategies remove extraneous explanations and correct stylistic inconsistencies, so that outputs adhere to the desired formal conventions.

  - This two-pronged approach is intended to keep the formal statements generated by LLMs syntactically correct and terminologically coherent with established libraries.

- Auto-correction with Syntax Error Feedback (Auto-SEF):
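The denoising and syntax error feedback mechanisms listed above can be illustrated with a minimal Python sketch. It is not the paper's implementation: the names `denoise` and `auto_sef`, the `generate` and `check_syntax` callables, the filtering heuristics, the prompt wording, and the retry limit are all assumptions made for illustration. `generate` stands in for an LLM call, and `check_syntax` stands in for a proof assistant's parser that returns an error message, or `None` when the statement parses.

```python
import re
from typing import Callable, Optional

# Matches a fenced block such as ```lang\n...\n``` in raw model output.
FENCE = re.compile(r"```[a-zA-Z]*\n(.*?)```", re.DOTALL)

# Hypothetical prefixes that mark a line as explanation rather than formal text.
CHATTER_PREFIXES = ("here", "explanation", "note")


def denoise(raw: str) -> str:
    """Code-based denoising: keep only the formal statement (assumed heuristics)."""
    match = FENCE.search(raw)
    if match:
        # Prefer the contents of the first fenced block if one exists.
        return match.group(1).strip()
    # Otherwise drop blank lines and lines that look like commentary.
    kept = [
        line for line in raw.splitlines()
        if line.strip() and not line.strip().lower().startswith(CHATTER_PREFIXES)
    ]
    return "\n".join(kept).strip()


def auto_sef(
    statement: str,
    generate: Callable[[str], str],
    check_syntax: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> str:
    """Regenerate a formalization, feeding syntax errors back into the prompt."""
    candidate = denoise(generate(statement))
    for _ in range(max_rounds):
        error = check_syntax(candidate)
        if error is None:
            return candidate  # the checker accepted the statement
        # Assumed prompt layout: original text, failed attempt, error message.
        prompt = (
            f"{statement}\n\nPrevious attempt:\n{candidate}\n\n"
            f"Syntax error:\n{error}\n\nReturn a corrected formal statement."
        )
        candidate = denoise(generate(prompt))
    return candidate  # may still be invalid once the retry budget is spent
```

Bounding the loop with `max_rounds` keeps the number of model calls predictable. A caller that needs a guarantee would re-run `check_syntax` on the returned value.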

Evaluation and Results

The evaluation used several semantic similarity metrics, including BLEU and ChrF, together with a measure of syntactic correctness. Notable improvements are observed when employing MS-RAG and denoising techniques.
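To give a sense of what a character-level similarity metric such as ChrF measures, the following is a simplified sketch of a character n-gram F-score. It is an illustration only: the function name `simple_chrf`, the defaults `max_n=6` and `beta=2.0`, and the whitespace handling are assumptions, and the scores will not match a reference implementation exactly.

```python
from collections import Counter


def char_ngrams(text: str, n: int) -> Counter:
    """Count all character n-grams of length n in text."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def simple_chrf(hypothesis: str, reference: str,
                max_n: int = 6, beta: float = 2.0) -> float:
    """Simplified character n-gram F-score in [0, 1] (not a reference ChrF)."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one string is shorter than n characters
        overlap = sum((hyp & ref).values())  # clipped n-gram matches
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    # F-beta: beta > 1 weights recall more heavily than precision.
    return (1 + beta ** 2) * p * r / (beta ** 2 * p + r)
```

A generated formal statement that reproduces the reference character for character scores 1.0, while one that shares no characters with it scores 0.0. Such a score captures surface overlap only, which is why a separate syntactic correctness check is still needed.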

Implications and Future Directions

The framework proposed in the paper offers a robust method for constructing formalized mathematical knowledge bases and libraries, significantly reducing manual effort and errors. By improving the coherence and accuracy of autoformalizations, the study lays the groundwork for integrating computational assistance into formal reasoning tasks.

Future research should explore how these methods can be extended or adapted for other domains requiring formal reasoning. Additionally, further exploration into the interplay between LLM capabilities and formal theorem proving could unveil new prospects for more autonomous formalization systems.

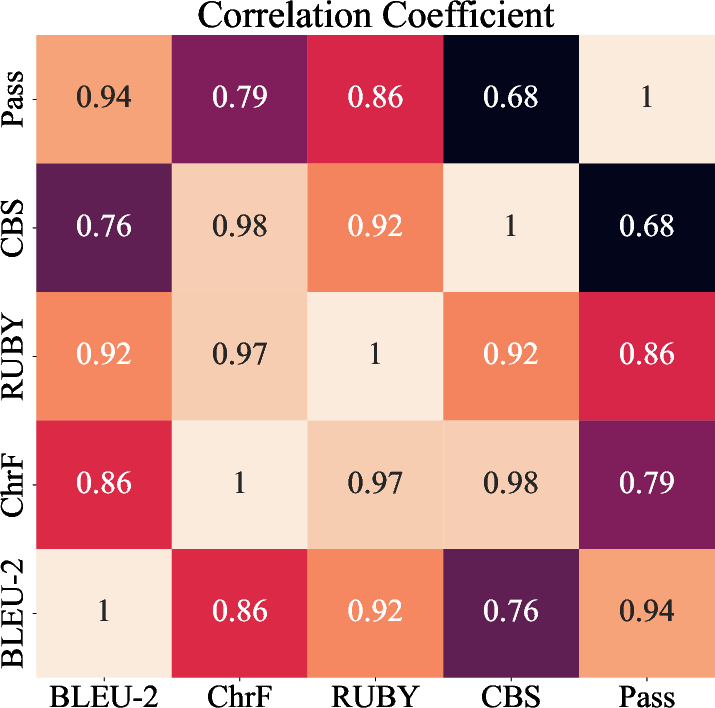

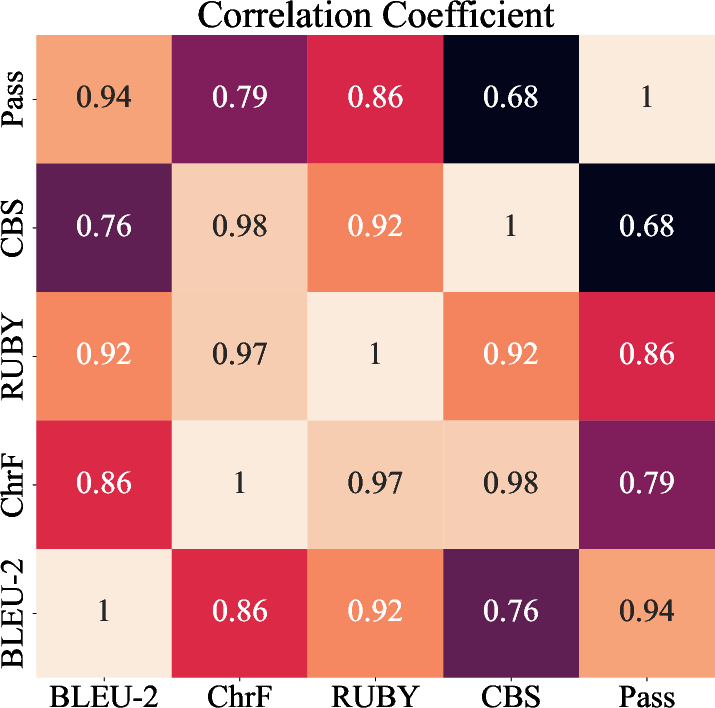

Figure 4: Correlation coefficients between metrics.

Conclusion

"Consistent Autoformalization for Constructing Mathematical Libraries" illustrates a vital step in the evolution of computational theorem proving, enhancing the autoformalization of complex mathematical domains. By systematically combining retrieval strategies, denoising techniques, and feedback-driven refinement, the study advances the development of high-quality mathematical libraries, paving the way for more sophisticated AI-driven insights in formal reasoning domains.