- The paper introduces an end-to-end theorem proving pipeline that autoformalizes natural language into formal Lean code using reinforcement learning.

- Formalizations are validated in two steps: a syntactic check with Lean, followed by a semantic assessment with LeanScorer, which aggregates LLM ratings into a continuous score using a fuzzy integral.

- The autoformalizer improves pass rates on Gaokao-Formal by 22% over previous models, and the end-to-end system reaches 64% accuracy on MiniF2F (pass@32) and 18% on Gaokao-Formal.

The paper "Mathesis: Towards Formal Theorem Proving from Natural Languages" addresses the problem of proving theorems that are stated in natural language. It introduces an end-to-end pipeline that combines autoformalization with formal theorem proving, with the aim of bridging the gap between informal problem statements and formal proof systems.

End-to-End Theorem Proving Pipeline

The proposed pipeline begins with an autoformalization stage. Mathesis-Autoformalizer translates informal natural language problem statements into formal Lean code. Its main novelty is that it is trained with reinforcement learning, using both syntactic correctness and semantic preservation as reward signals.
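To make the input and output of this stage concrete, here is a small illustrative pair. The problem and the theorem name `linear_example` are hypothetical and not taken from the paper; the sketch assumes Lean 4 with Mathlib. The autoformalizer is only expected to produce the statement, so the proof is left as `sorry` for the prover stage to fill in.

```lean
import Mathlib

-- Informal statement (hypothetical example):
--   "Let x be a real number with 2x + 3 = 7. Show that x = 2."
-- A possible formalization; the proof is deliberately left open.
theorem linear_example (x : ℝ) (h : 2 * x + 3 = 7) : x = 2 := by
  sorry
```

A formalization like this can pass the syntactic check and still be semantically wrong, for example if the hypothesis were mistyped as `2 * x + 3 = 2`, which is why a separate semantic assessment is needed.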

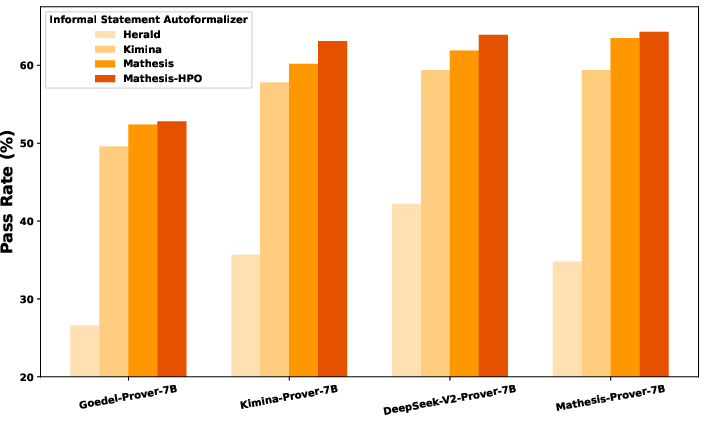

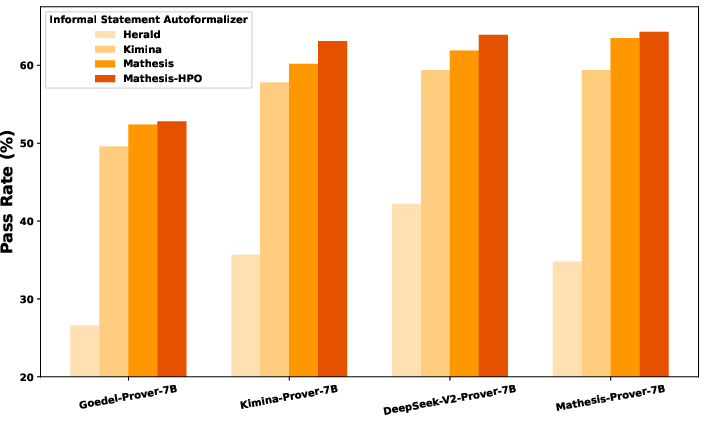

Figure 1: Performance of end-to-end theorem proving on the MiniF2F test set.

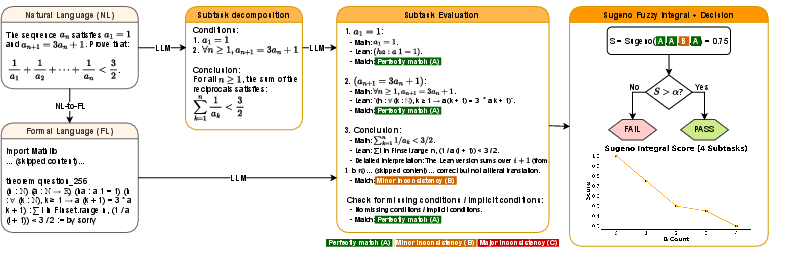

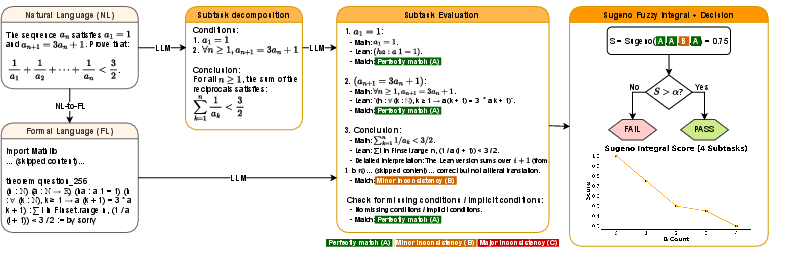

Next comes the validation phase, where each formalized statement is checked syntactically by Lean and assessed semantically by the LeanScorer framework. Together these checks ensure that the statement is mechanically valid and that it matches the intent of the original problem. In the final stage, Mathesis-Prover, a prover trained with expert iteration, generates formal proofs for the validated statements.

Mathesis-Autoformalizer is trained with Group Relative Policy Optimization (GRPO), with rewards derived from syntactic checks and semantic evaluations. Because the reward covers both syntactic verification and semantic alignment, the autoformalizer is pushed toward statements that compile and also preserve the meaning of the original problem.
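The core idea of GRPO, scoring each sampled output relative to the other outputs for the same prompt, can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function names, the way the two signals are combined into one reward, and the use of the population standard deviation are all assumptions.

```python
from statistics import mean, pstdev


def combined_reward(compiles: bool, semantic_score: float) -> float:
    """Hypothetical reward: zero unless Lean accepts the statement,
    otherwise the semantic score in [0, 1]. The paper's exact reward
    shaping may differ."""
    return semantic_score if compiles else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize the rewards of one group of samples (all generated for the
    same problem) to zero mean and unit variance, as in GRPO."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population standard deviation
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four candidate formalizations of the same problem.
rewards = [
    combined_reward(True, 1.0),   # compiles, faithful
    combined_reward(True, 0.5),   # compiles, partly faithful
    combined_reward(False, 0.9),  # does not compile
    combined_reward(True, 0.0),   # compiles, wrong meaning
]
advantages = group_relative_advantages(rewards)
```

Samples with above-average reward receive a positive advantage and are reinforced; the rest are discouraged. No separate value network is needed, since the group mean serves as the baseline.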

The Hierarchical Preference Optimization technique further refines the translation by aligning local and global objectives. It relies on Direct Preference Optimization (DPO) to steer Mathesis-Autoformalizer toward outputs that are preferred for the downstream task, which improves translation fidelity.
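For reference, the standard DPO objective for a single preference pair can be sketched as below. This covers only the generic pairwise loss; how the paper builds its preference pairs and arranges them hierarchically is not shown here, and the argument names and the default `beta` are assumptions.

```python
import math


def dpo_loss(
    logp_chosen: float,
    logp_rejected: float,
    ref_logp_chosen: float,
    ref_logp_rejected: float,
    beta: float = 0.1,
) -> float:
    """Standard DPO loss for one (chosen, rejected) pair.

    Each argument is the summed log-probability of a full output under the
    policy being trained (logp_*) or the frozen reference model (ref_logp_*).
    """
    margin = beta * (
        (logp_chosen - ref_logp_chosen) - (logp_rejected - ref_logp_rejected)
    )
    # -log(sigmoid(margin)) written in a numerically stable form.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
```

The loss falls as the policy assigns relatively more probability to the chosen formalization than the reference model does, and it equals log 2 when policy and reference agree.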

LeanScorer evaluates whether a formalization preserves the meaning of the original problem. It works in two stages: it first decomposes the problem statement into subtasks, and an LLM then assigns each subtask one of three ratings. The Sugeno Fuzzy Integral aggregates these categorical ratings into a continuous correctness score. This makes the decision threshold adjustable and accommodates nuanced LLM judgments while still enforcing rigorous standards.
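The aggregation step can be illustrated with a small Sugeno integral. This is a sketch under stated assumptions, not LeanScorer itself: the rating labels, their numeric values, and the cardinality-based fuzzy measure g(A) = |A| / n are all chosen here for illustration, and the paper's measure may penalize serious mismatches more strictly.

```python
# Assumed mapping from three-tier ratings to scores in [0, 1].
RATING_TO_SCORE = {"match": 1.0, "minor": 0.5, "major": 0.0}


def sugeno_integral(scores: list[float]) -> float:
    """Sugeno integral of per-subtask scores with respect to the symmetric
    fuzzy measure g(A) = |A| / n (an illustrative choice).

    With scores sorted in descending order h_1 >= ... >= h_n, the result is
    max over i of min(h_i, i / n).
    """
    n = len(scores)
    if n == 0:
        raise ValueError("at least one subtask score is required")
    ordered = sorted(scores, reverse=True)
    return max(min(h, (i + 1) / n) for i, h in enumerate(ordered))


def formalization_score(ratings: list[str]) -> float:
    """Turn categorical ratings into a single continuous score."""
    return sugeno_integral([RATING_TO_SCORE[r] for r in ratings])


score = formalization_score(["match", "match", "major"])
accepted = score >= 0.6  # hypothetical acceptance threshold
```

With this measure, a score of at least t requires that at least a fraction t of the subtasks are rated t or higher, so raising the threshold trades recall for precision.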

Figure 2: Overview of our LeanScorer evaluation method.

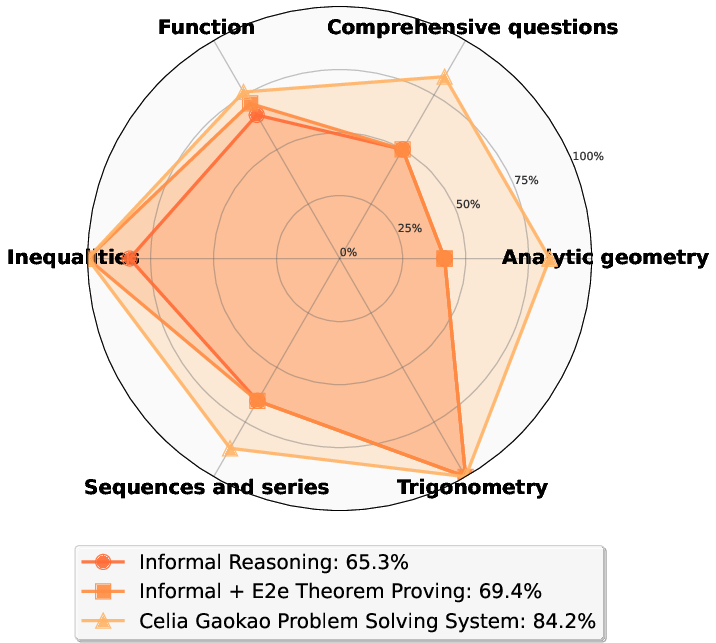

To evaluate autoformalization and end-to-end theorem proving systems, the paper introduces the Gaokao-Formal benchmark. It comprises 488 complex problems from China's national college entrance exam (Gaokao) and spans diverse mathematical domains, providing a rigorous testbed for autoformalization.

Experimental Results

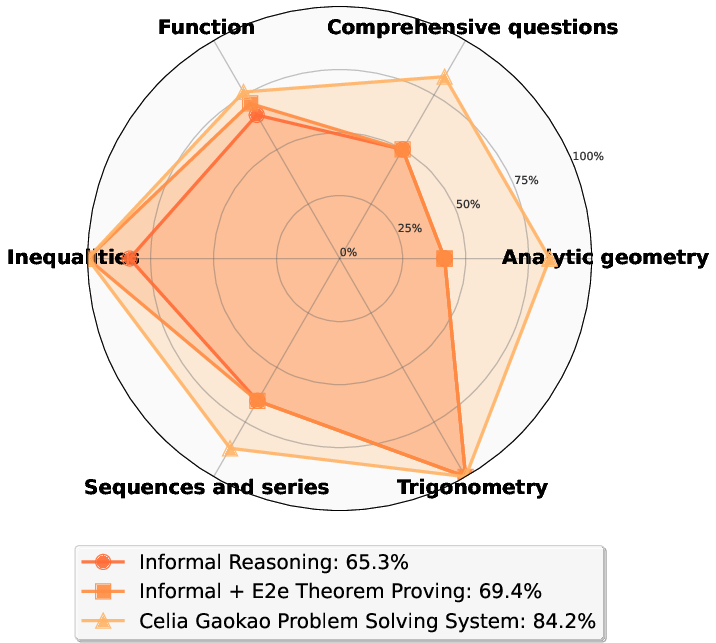

The Mathesis pipeline achieves state-of-the-art performance on Gaokao-Formal, with significant improvements over existing baselines. The autoformalizer outperforms previous models by 22% in pass rate on Gaokao-Formal. The end-to-end system achieves 64% accuracy on MiniF2F with pass@32 and 18% on Gaokao-Formal.

Figure 3: Performance improvement by category.
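For readers unfamiliar with the pass@k metric used above, the following sketch shows the commonly used unbiased estimator. Whether the paper uses this estimator or simply counts a problem as solved when any of the 32 attempts succeeds is an assumption; the two coincide when exactly k samples are drawn.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k samples, drawn without replacement
    from n attempts of which c are correct, is correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems; each entry is (n attempts, c correct)."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For example, with 32 attempts per problem and k = 32, a problem contributes 1 if any attempt yields a verified proof and 0 otherwise.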

Conclusion

The paper advances end-to-end theorem proving from informal natural language inputs. It establishes a new benchmark, Gaokao-Formal, and reports improved results with Mathesis-Autoformalizer and Mathesis-Prover. The work paves the way for future research on solving complex mathematical problems directly from natural language, addressing both theoretical and practical challenges.