- The paper presents a systems-theoretic framework for better understanding and designing agentic AI systems, focusing on emergent behaviors and integrated tool interactions.

- It defines agency from a causal, decision-theoretic perspective and identifies mechanisms of emergence such as environmental cognition, predictive reasoning, and metacognitive interaction.

- Key challenges include balancing experiential learning with pretraining, designing agent interactions, managing subgoals, and establishing robust human-agent governance.

Agentic AI and Systems Theory: A Concise Analysis

Introduction to Agentic Systems

The paper "Agentic AI Needs a Systems Theory" (2503.00237) advocates a systems-theoretic perspective on the development of agentic AI systems. Its central thesis is that current approaches focus too narrowly on the capabilities of individual models, without adequately considering broader system-level interactions and emergent behaviors. The authors argue that a holistic, system-level viewpoint makes it possible to better understand these capabilities and to mitigate the associated risks. They emphasize the need for agents that can carry out sophisticated reasoning and long-horizon tasks with minimal supervision, which underscores the relevance of systems theory to AI.

Definition and Characteristics of Agency

The paper proposes a definition of agency from a causal, decision-theoretic perspective. Agency is defined in terms of functional agency, which comprises three conditions: action generation, outcome modeling, and adaptation. Agency is not binary but graded: different systems occupy different points on a spectrum depending on the mechanisms by which they satisfy each condition. For example, humans exhibit epistemic action generation and counterfactual outcome modeling, both far more complex than the reactive action generation of a thermostat.
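The three conditions, and the gap between reactive and model-based agency, can be illustrated with a toy Python sketch. The `thermostat` function only reacts to its current reading, while the hypothetical `ModelingAgent` also predicts the outcome of each candidate action, chooses the action whose predicted outcome is closest to its goal, and adapts its outcome model when an observation disagrees with its prediction. The linear outcome model, the learning rate, and all names are assumptions made for illustration; this is not the paper's formalism, and it stops well short of the epistemic and counterfactual mechanisms attributed to humans.

```python
from dataclasses import dataclass
from typing import Dict


def thermostat(temperature: float, setpoint: float = 20.0) -> str:
    """Reactive action generation: a fixed rule maps the current reading to an action."""
    return "heat_on" if temperature < setpoint else "heat_off"


@dataclass
class ModelingAgent:
    """Hypothetical agent that also satisfies outcome modeling and adaptation.

    Assumes a linear outcome model: next_state = state + effect[action].
    """

    effect: Dict[str, float]
    learning_rate: float = 0.5

    def predict(self, state: float, action: str) -> float:
        # Outcome modeling: estimate the next state before acting.
        return state + self.effect[action]

    def act(self, state: float, goal: float) -> str:
        # Action generation: pick the action whose predicted outcome is closest to the goal.
        return min(self.effect, key=lambda a: abs(self.predict(state, a) - goal))

    def adapt(self, state: float, action: str, observed: float) -> None:
        # Adaptation: shift the model toward the outcome that was actually observed.
        error = observed - self.predict(state, action)
        self.effect[action] += self.learning_rate * error


agent = ModelingAgent(effect={"heat_on": 2.0, "heat_off": -1.0})
print(thermostat(18.0))                      # heat_on
print(agent.act(18.0, goal=20.0))            # heat_on (predicts 20.0 vs. 17.0)
agent.adapt(18.0, "heat_on", observed=19.0)  # heating only added 1.0 degree
print(agent.effect["heat_on"])               # 1.5
```

The thermostat's behavior can never change, whereas the agent's next choice depends on what it has learned: after the update above, it expects heating to add only 1.5 degrees.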

Conceptualization of Agentic Systems

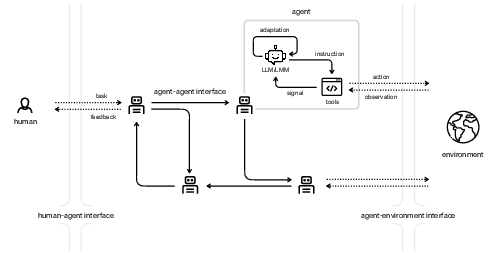

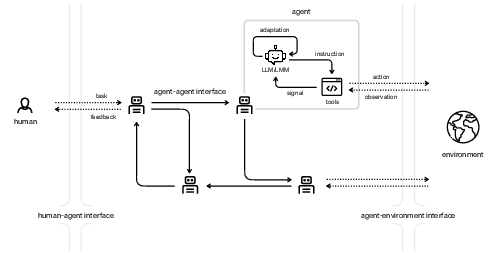

An agentic system consists of LLM- or LMM-based agents equipped with tools, which interact with humans and an environment to accomplish specified tasks. Such systems require agents whose tool interactions serve as simulated sensorimotor experience, enriching their cognitive capabilities. The authors argue that agency at the system level can exceed the individual capabilities of its components, because interaction dynamics and multimodal experience give rise to emergent intelligence.

Figure 1: An agentic system encompassing human-seeded initial tasks, LLM/LMM-driven agents, and tool interactions that adapt based on environmental observations.
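The loop in Figure 1 (a human-seeded task, an agent, tools, and environmental observations) can be sketched as a minimal Python control loop. Here a rule-based `rule_based_policy` stands in for the LLM/LMM, a single hypothetical `swap_adjacent` tool acts on a list that plays the role of the environment, and each tool result is fed back to the agent as its next observation. The task, tool, and function names are assumptions for illustration, not an interface described in the paper.

```python
from typing import Callable, Dict, List, Optional, Tuple

ToolCall = Tuple[str, int]
Tool = Callable[[List[int], int], List[int]]
Policy = Callable[[str, List[int]], Optional[ToolCall]]


def swap_adjacent(env: List[int], i: int) -> List[int]:
    """Hypothetical tool: swap positions i and i + 1 in the environment, return an observation."""
    env[i], env[i + 1] = env[i + 1], env[i]
    return list(env)  # a copy, so the agent observes the state rather than owning it


def rule_based_policy(task: str, observation: List[int]) -> Optional[ToolCall]:
    """Stand-in for an LLM/LMM agent. A real agent would condition on the task text;
    this stub ignores it and reacts only to the latest observation."""
    for i in range(len(observation) - 1):
        if observation[i] > observation[i + 1]:
            return ("swap_adjacent", i)
    return None  # nothing left to fix: the agent treats the task as complete


def run_agentic_loop(
    task: str,
    env: List[int],
    tools: Dict[str, Tool],
    policy: Policy,
    max_steps: int = 100,
) -> Tuple[List[int], List[ToolCall]]:
    """Human-seeded task -> agent chooses a tool call -> tool acts -> observation fed back."""
    observation = list(env)  # initial observation of the environment
    trace: List[ToolCall] = []
    for _ in range(max_steps):  # a step budget bounds the loop
        call = policy(task, observation)
        if call is None:
            break
        name, arg = call
        observation = tools[name](env, arg)
        trace.append(call)
    return observation, trace


final, trace = run_agentic_loop(
    "sort the list in ascending order",
    [3, 1, 2],
    {"swap_adjacent": swap_adjacent},
    rule_based_policy,
)
print(final)  # [1, 2, 3]
print(trace)  # [('swap_adjacent', 0), ('swap_adjacent', 1)]
```

Bounding the loop with `max_steps` is a design choice of this sketch, not something the paper specifies.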

Mechanisms of Emergence

Emergence in agentic systems arises through three mechanisms:

- Environmental Cognition: Drawing on embodied cognition, the paper argues that interacting with the environment through tools lets an agent develop and enhance its cognition, much as biological systems do. Multimodal signals help the agent form generalized, abstract representations that support learning and generalization.

- Predictive Reasoning: Prediction errors and hierarchical predictive processing promote causal reasoning, as agents adjust their behavior in response to sensory feedback and continually updated models of the environment.

- Metacognitive Interaction: Metacognition develops from prediction, error detection, and agent-to-agent communication. Shared representations of uncertainty foster collective metacognitive awareness and adaptive capacity.
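As a rough illustration of the predictive-reasoning and metacognitive-interaction mechanisms, the Python sketch below gives each hypothetical `PredictiveAgent` a single-level prediction-error update (far simpler than the hierarchical predictive processing the paper discusses) plus a running average of its squared errors, which the agent treats as a self-assessment of its uncertainty. Agents then share their estimates together with these uncertainties, and `pooled_estimate` combines them by inverse-uncertainty weighting. That weighting scheme, the parameters, and the names are assumptions chosen for illustration, not a method from the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PredictiveAgent:
    """Hypothetical single-level predictive agent with a self-monitored uncertainty."""

    estimate: float = 0.0     # current prediction of the next observation
    uncertainty: float = 1.0  # running mean of squared prediction errors (metacognitive signal)
    lr: float = 0.3           # how strongly a prediction error updates the estimate
    decay: float = 0.8        # how slowly the uncertainty forgets older errors

    def observe(self, value: float) -> float:
        error = value - self.estimate     # prediction error
        self.estimate += self.lr * error  # update the internal model
        self.uncertainty = self.decay * self.uncertainty + (1 - self.decay) * error**2
        return error


def pooled_estimate(agents: List[PredictiveAgent]) -> float:
    """Combine shared estimates, weighting each agent by 1 / its reported uncertainty."""
    weights = [1.0 / max(a.uncertainty, 1e-9) for a in agents]
    return sum(w * a.estimate for w, a in zip(weights, agents)) / sum(weights)


steady, noisy = PredictiveAgent(), PredictiveAgent()
for t in range(20):
    steady.observe(10.0)                        # consistent signal
    noisy.observe(0.0 if t % 2 == 0 else 20.0)  # same mean, much higher variance
print(steady.uncertainty < noisy.uncertainty)  # True
print(pooled_estimate([steady, noisy]))        # dominated by the steady agent (about 10)
```

Because each agent reports how uncertain it is, the group can lean on whichever agent's predictions have recently been reliable, which is one simple reading of "collective metacognitive awareness".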

Key Challenges

The paper identifies four key challenges in the development of agentic systems:

- Generalist Agent Development: By balancing pretraining with experiential learning, agents could develop generalization abilities through exploratory interaction with their environments, but practical methods and optimal strategies for doing so have yet to be established.

- Agent Interaction Design: Efficiently decomposing subtasks and delegating them within agentic systems requires further research on trust-transfer mechanisms and on assessing an agent's competence for a given task.

- Subgoal Management: Monitoring the emergence of subgoals and limiting autonomously generated goals are critical for mitigating risks that arise from growing system complexity.

- Human-Agent Governance: Because tasks instantiated by humans are inherently incomplete, frameworks for residual control rights and escalation mechanisms remain open research questions, and they are essential to ensuring system safety and reliability.
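Several of these challenges can be made concrete with a small Python sketch of a hypothetical orchestrator. It assesses task competence from each agent's success and failure counts (the posterior mean under a uniform Beta(1, 1) prior), delegates a subtask to the most competent agent, escalates to a human (signaled by returning `None`) when no agent clears a competence threshold, and enforces a fixed budget on autonomously generated subgoals. The threshold, budget, scoring rule, and names are all illustrative assumptions; the sketch does not model trust transfer or residual control rights, which the paper leaves as open problems.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CompetenceRecord:
    """Hypothetical track record of one agent on one task type."""

    successes: int = 0
    failures: int = 0

    def estimate(self) -> float:
        # Posterior mean success rate under a uniform Beta(1, 1) prior.
        return (self.successes + 1) / (self.successes + self.failures + 2)


@dataclass
class Orchestrator:
    records: Dict[str, Dict[str, CompetenceRecord]] = field(default_factory=dict)
    min_competence: float = 0.7  # below this, escalate to a human instead of delegating
    max_subgoals: int = 5        # budget on autonomously generated subgoals
    subgoals: List[str] = field(default_factory=list)

    def record(self, agent: str, task_type: str, success: bool) -> None:
        rec = self.records.setdefault(agent, {}).setdefault(task_type, CompetenceRecord())
        if success:
            rec.successes += 1
        else:
            rec.failures += 1

    def delegate(self, task_type: str) -> Optional[str]:
        """Return the agent with the highest estimated competence, or None to escalate."""
        best_agent, best_score = None, 0.0
        for agent, by_type in self.records.items():
            score = by_type.get(task_type, CompetenceRecord()).estimate()
            if score > best_score:
                best_agent, best_score = agent, score
        return best_agent if best_score >= self.min_competence else None

    def propose_subgoal(self, description: str) -> bool:
        """Accept a new subgoal only while the budget allows; otherwise refuse it."""
        if len(self.subgoals) >= self.max_subgoals:
            return False
        self.subgoals.append(description)
        return True


orch = Orchestrator()
for _ in range(8):
    orch.record("coder", "write_tests", success=True)
orch.record("coder", "write_tests", success=False)
orch.record("searcher", "write_tests", success=False)
print(orch.delegate("write_tests"))  # coder (9/11 vs. 1/3)
print(orch.delegate("deploy"))       # None: no agent has a track record, so escalate
print([orch.propose_subgoal(f"subgoal {i}") for i in range(7)])
# [True, True, True, True, True, False, False]
```

A fixed numeric budget is the bluntest possible limit on subgoal generation; it is used here only because it is easy to inspect.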

Conclusion

The development of agentic AI systems requires a systems-theoretic approach to address emergent capabilities and risks effectively. With a systems-level perspective, AI systems can be designed to safely augment human potential while preserving agency. The paper stresses that although agentic capabilities are attainable, they must be deliberately guided through careful design and regulation of interaction mechanisms. As new modalities are integrated, these considerations will become increasingly critical for navigating the complexities of future AI advances.