- The paper introduces the DMET framework that models LLM generation as trajectories on a semantic manifold with metrics for continuity, attractor compactness, and topological persistence.

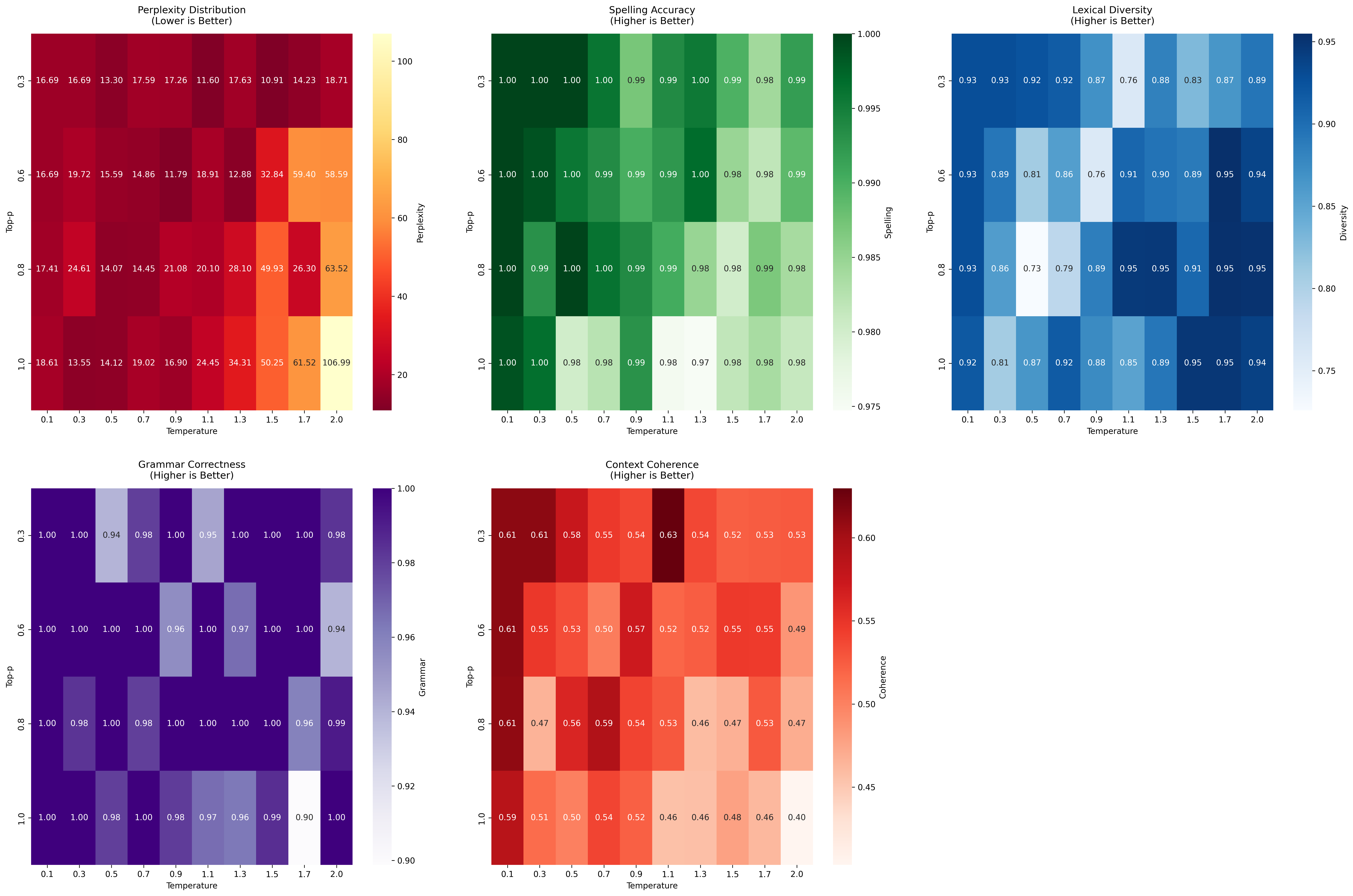

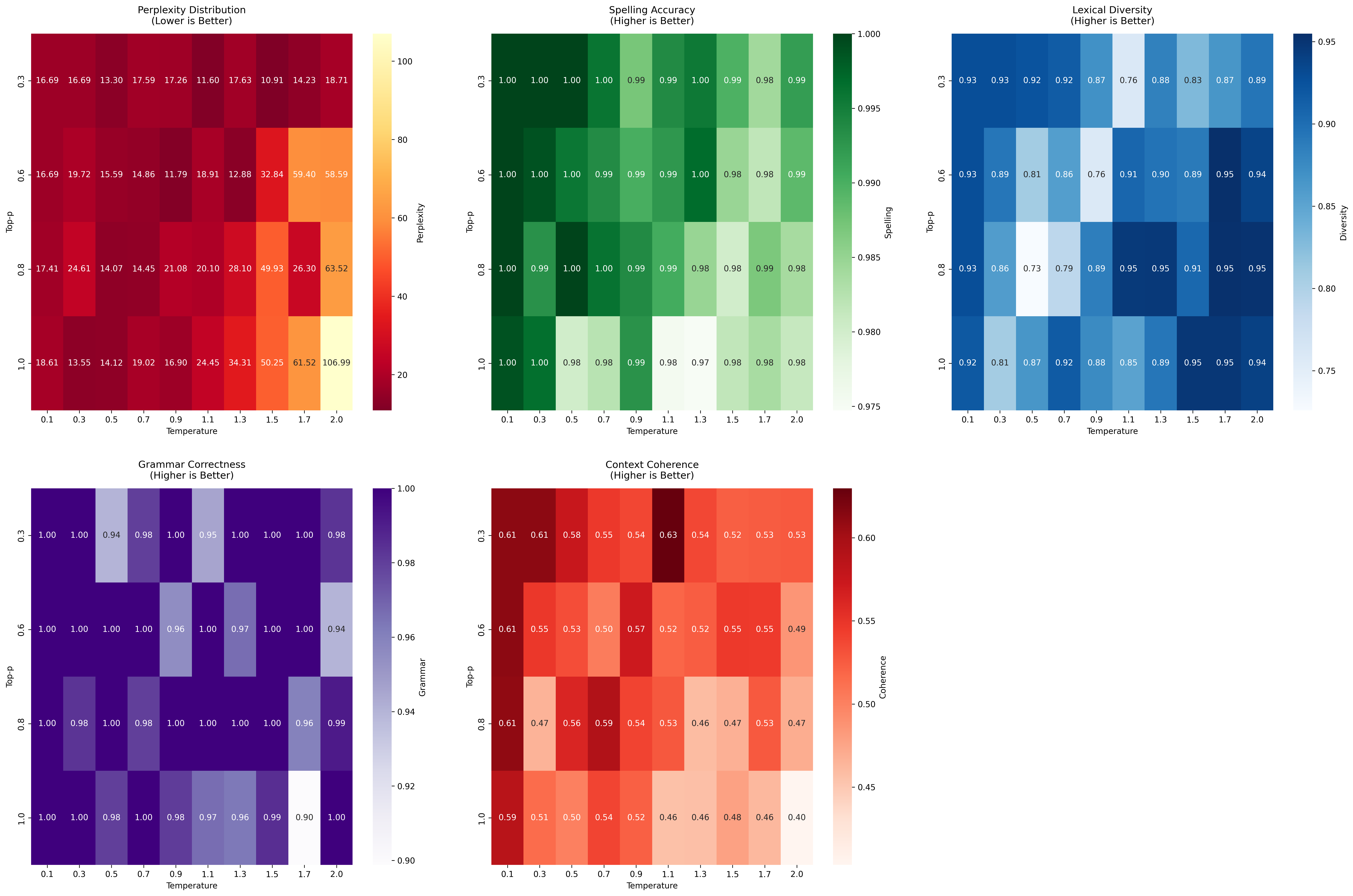

- It empirically links decoding parameters like temperature and top-p to measurable performance metrics such as perplexity, fluency, and grammatical consistency.

- The study offers actionable strategies for balancing fluency and creativity while highlighting challenges and opportunities for real-time latent dynamics analysis.

Empirical Investigation of Latent Representational Dynamics in LLMs: A Manifold Evolution Perspective

Introduction

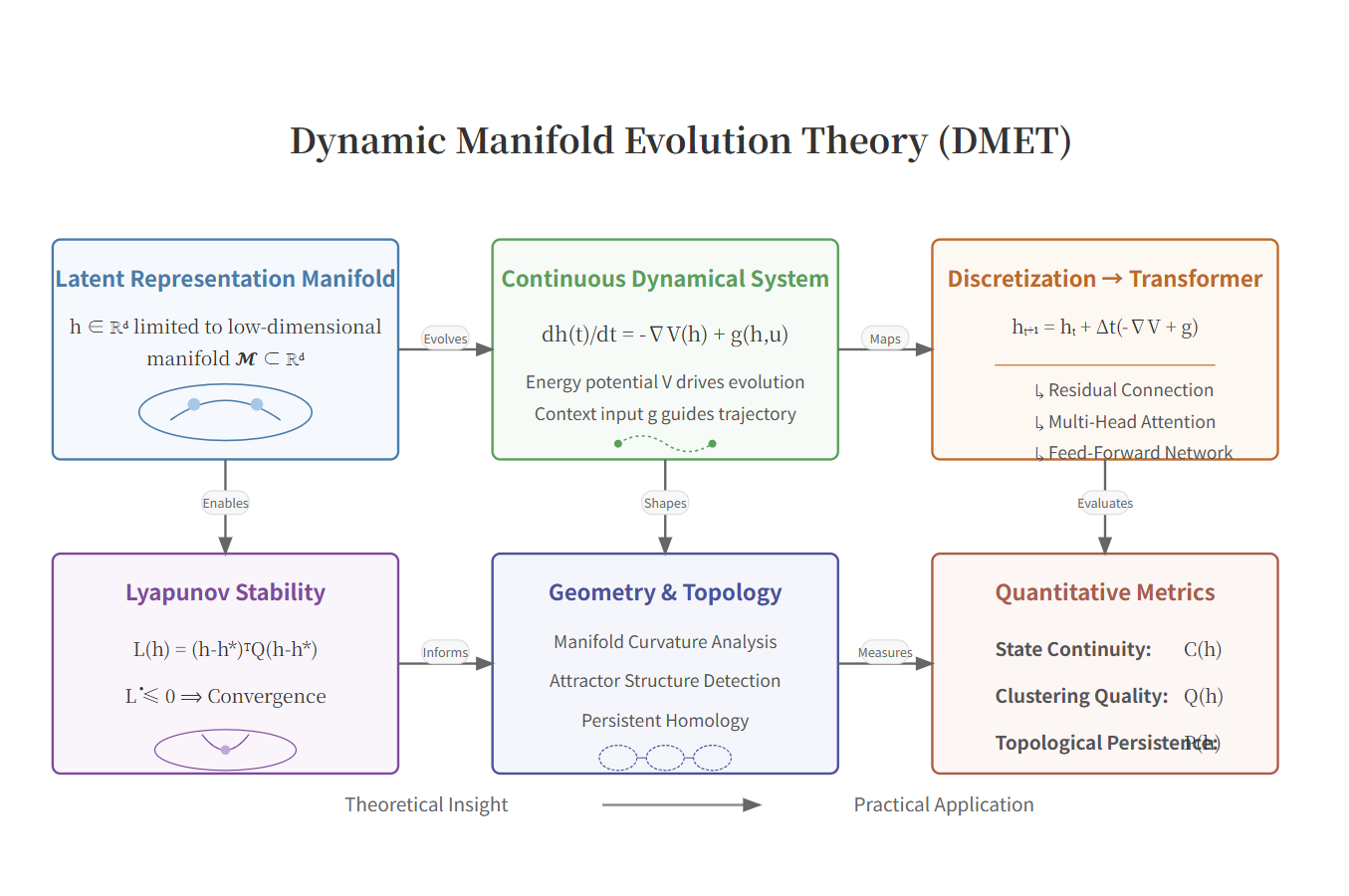

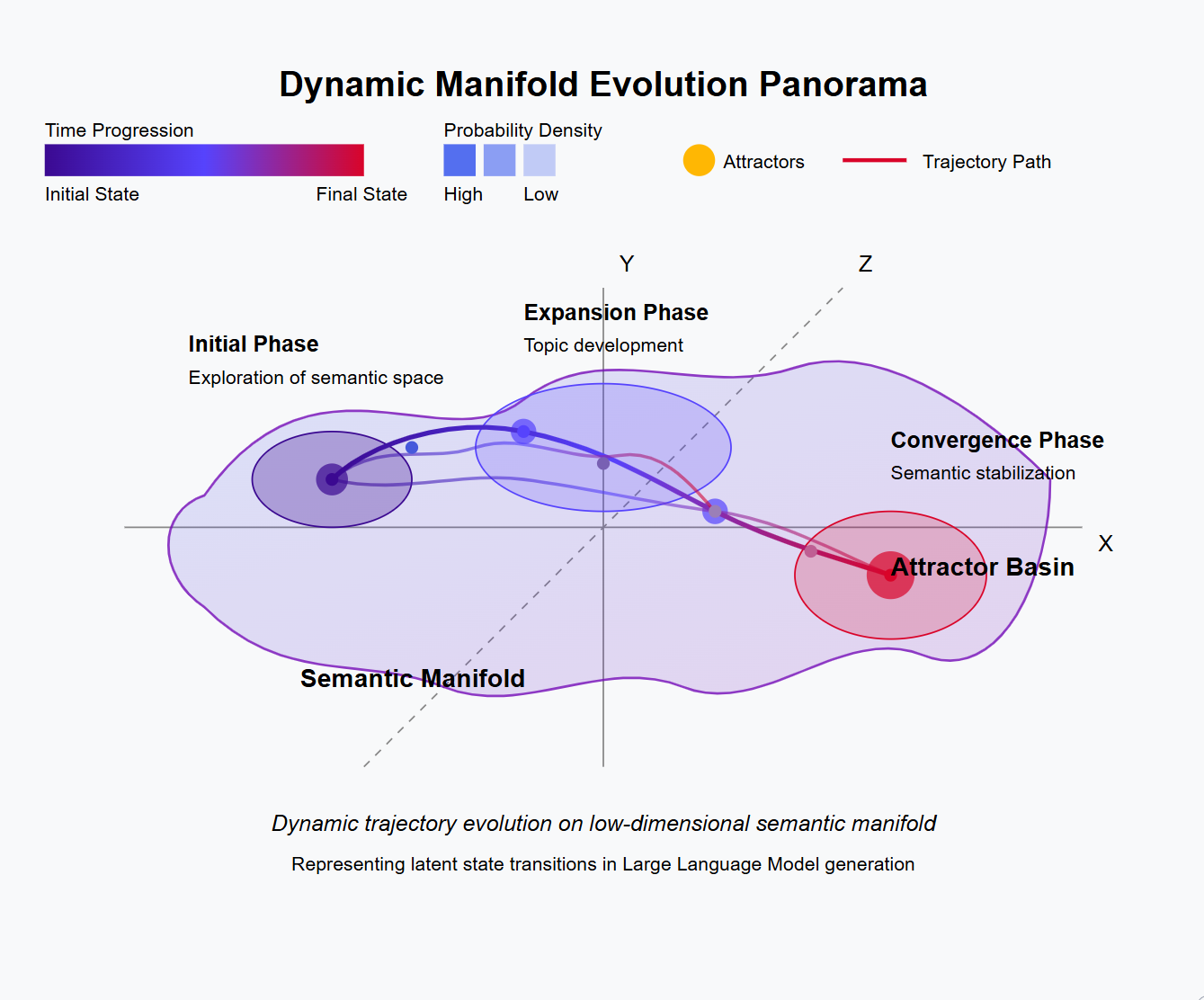

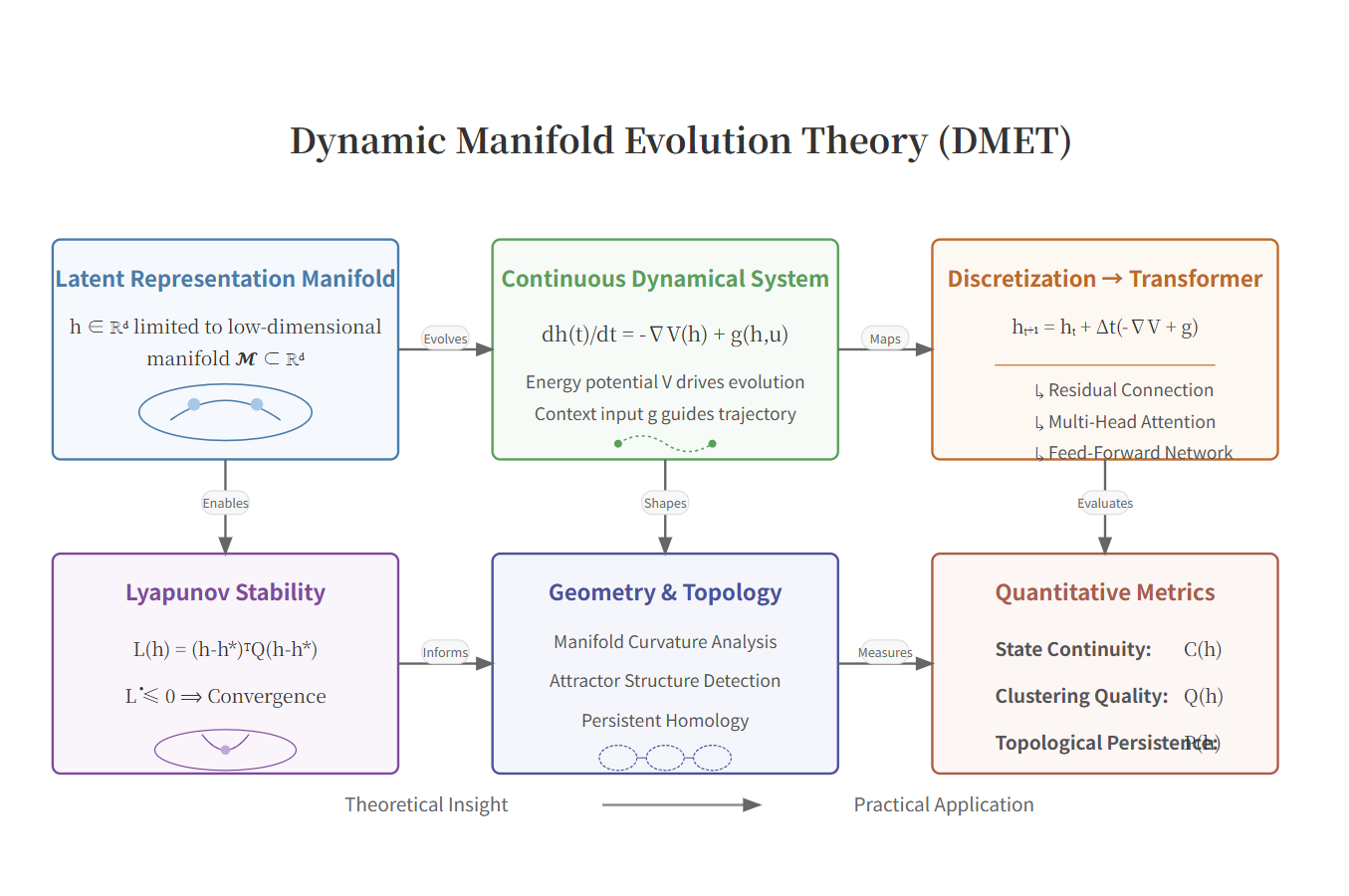

The paper "Empirical Investigation of Latent Representational Dynamics in LLMs: A Manifold Evolution Perspective" introduces Dynamical Manifold Evolution Theory (DMET), a framework for describing the generative process of LLMs. It models generation as a trajectory evolving on a low-dimensional semantic manifold and characterizes that trajectory with three metrics: state continuity (C), attractor compactness (Q), and topological persistence (P). These metrics are used to relate latent dynamics to text quality, including fluency, coherence, and grammaticality. The framework extends previous work by providing a unified, continuous view of LLM dynamics and suggests that decoding parameters such as temperature and top-p influence these trajectories in predictable ways.
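To make the three metrics more concrete, here is a minimal sketch of simple proxies computed on a toy trajectory. The exact definitions of C, Q, and P are not reproduced in this summary, so everything here is an assumption: continuity is taken as the mean step length between consecutive states, compactness as the mean distance of states to their cluster centroid, and persistence as the death scales of connected components (which equal the edge lengths of a minimum spanning tree over the points). The function names and the cluster labels are hypothetical.

```python
import math

def continuity(traj):
    """Proxy for C: mean distance between consecutive states (smaller = smoother)."""
    steps = [math.dist(traj[i], traj[i + 1]) for i in range(len(traj) - 1)]
    return sum(steps) / len(steps)

def compactness(traj, labels):
    """Proxy for Q: mean distance of each state to its cluster centroid (smaller = tighter)."""
    clusters = {}
    for point, label in zip(traj, labels):
        clusters.setdefault(label, []).append(point)
    total = 0.0
    for points in clusters.values():
        centroid = [sum(coords) / len(points) for coords in zip(*points)]
        total += sum(math.dist(p, centroid) for p in points)
    return total / len(traj)

def h0_persistence(traj):
    """Proxy for P: scales at which connected components merge.

    For a point cloud these equal the minimum spanning tree edge lengths,
    computed here with Prim's algorithm.
    """
    n = len(traj)
    best = [math.dist(traj[0], p) for p in traj]  # cheapest link into the tree
    in_tree = [True] + [False] * (n - 1)
    deaths = []
    for _ in range(n - 1):
        u = min((i for i in range(n) if not in_tree[i]), key=best.__getitem__)
        in_tree[u] = True
        deaths.append(best[u])
        for v in range(n):
            if not in_tree[v]:
                best[v] = min(best[v], math.dist(traj[u], traj[v]))
    return sorted(deaths)

# Toy 2-D trajectory with two well-separated groups of states (made-up data).
toy_traj = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (10.0, 0.0), (11.0, 0.0)]
toy_labels = [0, 0, 0, 1, 1]
print(continuity(toy_traj), compactness(toy_traj, toy_labels), h0_persistence(toy_traj))
```

In this toy case the single long merge scale in the persistence output reflects the gap between the two groups, which is the kind of structure an attractor-basin picture would predict. Real hidden states would first need dimensionality reduction and clustering, which this sketch leaves out.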

Dynamical Manifold Evolution Theory (DMET) Framework

DMET models LLM generation as a controlled dynamical system on a semantic manifold. The central assumptions are:

- Manifold Hypothesis: Latent representations exist on a low-dimensional manifold within a high-dimensional space.

- Continuity: Text generation corresponds to a smooth trajectory rather than discrete jumps.

- Attractors: The manifold is organized into attractor basins that correspond to coherent semantic states.

Figure 1: Overview of the DMET framework where trajectories evolve on a low-dimensional semantic manifold under intrinsic energy gradients and context-driven forces.

The mathematical formulation uses a continuous-time model and maps the manifold dynamics onto Transformer components: the Feed-Forward Network is associated with semantic refinement and Multi-Head Attention with context integration.
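One way to read this description is as a gradient flow with an external forcing term. The form below is only an illustrative sketch, not the paper's equations: the symbols $h$ (latent state), $V$ (intrinsic energy), $g$ (context-driven force), $u$ (context), and $\Delta t$ (step size) are all assumed here for illustration.

```latex
\begin{aligned}
\frac{dh(t)}{dt} &= -\nabla_h V\big(h(t)\big) + g\big(h(t), u(t)\big) \\
h_{\ell+1} &= h_\ell + \Delta t \,\Big[ -\nabla_h V(h_\ell) + g(h_\ell, u_\ell) \Big]
\end{aligned}
```

Under this reading, the second line (a forward Euler step) has the same shape as a residual update in a Transformer layer, with the energy-gradient term playing the role attributed to the Feed-Forward Network and the forcing term the role attributed to Multi-Head Attention.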

Empirical Validation

The paper reports empirical analyses across Transformer architectures that support DMET's propositions. The experiments evaluate three LLMs (DeepSeek-R1, Llama2, and Qwen2) on prompts of varying complexity, sweeping a grid of temperature and top-p values to assess how these parameters affect latent trajectories and text quality.
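A parameter sweep of this kind can be sketched as a Cartesian product over models and decoding settings. The grid values below are illustrative placeholders, not the values used in the paper, and `build_runs` is a hypothetical helper name.

```python
from itertools import product

# Model names come from the text; the grid values are assumptions for illustration.
MODELS = ["DeepSeek-R1", "Llama2", "Qwen2"]
TEMPERATURES = [0.2, 0.7, 1.2]
TOP_PS = [0.5, 0.9, 1.0]

def build_runs(models, temperatures, top_ps):
    """Return one run configuration per (model, temperature, top_p) combination."""
    return [
        {"model": m, "temperature": t, "top_p": p}
        for m, t, p in product(models, temperatures, top_ps)
    ]

runs = build_runs(MODELS, TEMPERATURES, TOP_PS)
print(len(runs), runs[0])
```

Each configuration would then be paired with every prompt, and the generated text and hidden states recorded per run so that trajectory metrics and text-quality metrics can be compared at the same grid point.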

Findings:

Trajectory Dynamics and Parameter Sensitivity

The study explores how decoding parameters shape latent trajectories, revealing that:

- Low temperature enhances continuity but reduces diversity.

- High top-p values allow broader exploration, which benefits creative tasks but can reduce fluency.

The findings support DMET's predictions and illustrate a trade-off between fluency and creativity across the parameter space.
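For readers less familiar with the two decoding parameters, the following is a minimal sketch of standard temperature scaling followed by top-p (nucleus) filtering on a vector of logits. It is a generic illustration in plain Python, not the paper's code; the function names and the example logits are assumptions.

```python
import math
import random

def next_token_distribution(logits, temperature=1.0, top_p=1.0):
    """Apply temperature scaling, then keep the smallest set of tokens whose
    cumulative probability reaches top_p, and renormalize over that set."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    norm = sum(exps)
    probs = [e / norm for e in exps]

    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}

def sample(distribution, rng=random):
    """Draw one token index from the filtered distribution."""
    tokens = list(distribution)
    weights = [distribution[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

example_logits = [2.0, 1.0, 0.0]  # made-up values
for temperature in (0.5, 1.0, 2.0):
    print(temperature, next_token_distribution(example_logits, temperature))
```

Lower temperatures concentrate probability on the top token, and smaller top-p values shrink the candidate set. Both narrow the range of next states a trajectory can move to, which is consistent with the continuity and diversity effects described above.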

Figure 3: Fluency–Coherence trade-off under varying temperature and top-p using DeepSeek-R1.

Practical Implications and Limitations

The study identifies actionable strategies for parameter tuning, such as using moderate temperature and top-p values for balanced generation. Its limitations include the high computational cost of manifold analyses and the fact that causal links between latent dynamics and text quality remain to be established.

Conclusions

This work establishes DMET as a robust framework for interpreting LLM behavior by relating latent dynamics to text quality. Future work could focus on developing efficient algorithms for real-time manifold analyses and exploring direct interventions to manipulate latent trajectories for improved control over LLM outputs. The insights provided by DMET offer a principled basis for designing and understanding next-generation LLMs.